Accelerating Discovery: How HPC Parallelization Transforms NGS Immunology Data Analysis for Researchers


Abstract

This article explores the critical role of High-Performance Computing (HPC) parallelization in managing the computational challenges of Next-Generation Sequencing (NGS) for immunology research. We address the foundational concepts of parallel computing in bioinformatics, detail methodological approaches for implementing workflows (e.g., for AIRR-seq data), provide solutions for common bottlenecks and optimization strategies, and examine validation frameworks and comparative performance of popular tools. Aimed at researchers and drug development professionals, this guide synthesizes current best practices to enhance scalability, speed, and reproducibility in immunological data analysis, ultimately accelerating therapeutic discovery.

The HPC Imperative: Why Parallel Computing is Non-Negotiable for Modern NGS Immunology

The application of High-Performance Computing (HPC) parallelization is not merely an enhancement but a fundamental necessity for research in Next-Generation Sequencing (NGS) immunology. The sheer volume and multidimensional complexity of Adaptive Immune Receptor Repertoire sequencing (AIRR-seq) and single-cell immune profiling data create a "data deluge" that overwhelms traditional analytical pipelines. This whitepaper delineates the scale of this challenge, presents current methodologies, and frames them within the imperative for distributed, scalable computing architectures to enable discovery in immunology and therapeutic development.

The Scale of the Data Deluge: Quantitative Benchmarks

The data generated by modern NGS immunology techniques is characterized by high dimensionality, depth, and velocity. The following tables summarize the core quantitative metrics.

Table 1: Data Scale per Sample for Key NGS Immunology Assays

| Assay Type | Estimated Raw Data per Sample (GB) | Typical Cells/Sequences per Sample | Key Measured Features | Approx. Final Matrix Size (Features x Cells) |
|---|---|---|---|---|
| Bulk AIRR-seq (Ig/TCR) | 5 - 20 GB | 10^4 - 10^7 sequences | V/D/J genes, CDR3 seq, SHM, isotype | ~10 columns x 10^6 sequences |
| Single-Cell RNA-seq (scRNA-seq) | 50 - 200 GB | 5,000 - 20,000 cells | 20,000+ transcripts | 20,000 genes x 10^4 cells |
| Single-Cell V(D)J + 5' Gene Expression | 100 - 500 GB | 5,000 - 20,000 cells | Paired Ig/TCR, full-length transcriptome | (20,000 genes + 2 chains) x 10^4 cells |
| CITE-seq / ATAC-seq Multiome | 200 - 1000 GB | 5,000 - 20,000 cells | Transcriptome + Surface Proteins / Chromatin Accessibility | (20k + 200) features x 10^4 cells |

Table 2: Computational Resource Requirements for Primary Analysis

| Analysis Step | Typical Tool Example | Approx. Compute Time (Single Sample) | Recommended RAM | HPC Parallelization Strategy |
|---|---|---|---|---|
| Demultiplexing & FASTQ Generation | bcl2fastq, mkfastq | 1-4 hours | 16 GB | Embarrassingly parallel by lane/sample |
| AIRR-seq: Assembly & Annotation | MiXCR, pRESTO | 2-8 hours | 32-64 GB | Sample-level parallelism; multithreading within sample |
| scRNA-seq: Alignment & Quantification | Cell Ranger, STARsolo | 4-12 hours | 64-128 GB | Sample-level parallelism; GPU acceleration possible |
| Single-Cell V(D)J Assembly | Cell Ranger V(D)J, Scirpy | 3-6 hours | 64 GB | Sample-level parallelism |

Core Experimental Protocols & Methodologies

Protocol for Bulk AIRR-Seq (Lymphocyte Separation & Library Prep)

Objective: To sequence the repertoire of B-cell or T-cell receptors from a peripheral blood or tissue sample.

- Sample Preparation: Isolate PBMCs using Ficoll density gradient centrifugation. Isolate lymphocytes (e.g., CD19+ B cells, CD3+ T cells) via magnetic-activated cell sorting (MACS).

- Nucleic Acid Extraction: Extract total RNA using TRIzol or column-based kits. Convert to cDNA using reverse transcriptase with gene-specific primers for constant regions of Ig or TCR chains.

- Multiplex PCR Amplification: Perform nested or semi-nested PCR using multiple forward primers targeting V gene families and reverse primers for C regions. Incorporate unique molecular identifiers (UMIs) and sequencing adapters.

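- Library Preparation & QC: Purify amplicons, quantify by fluorometry, and assess size distribution via Bioanalyzer. If full-length adapters and sample indexes were not added during PCR, complete the library with an indexing PCR or adapter ligation; amplicons are not fragmented, so that each read pair still spans the rearranged V(D)J junction.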

- Sequencing: Sequence on Illumina platforms using paired-end runs long enough to cover the full CDR3 region, e.g., 2x300 bp on MiSeq or 2x150 bp on NovaSeq.

Protocol for Single-Cell Immune Profiling (10x Genomics Platform)

Objective: To simultaneously capture transcriptome and paired V(D)J sequences from single lymphocytes.

- Single-Cell Suspension: Create a high-viability (>90%) single-cell suspension from tissue or sorted cells. Adjust concentration to 700-1200 cells/µl.

- Gel Bead-in-emulsion (GEM) Generation: Load cells, Gel Beads (containing barcoded oligonucleotides with UMI, cell barcode, and poly-dT), and partitioning oil onto a 10x Chromium chip. Each cell is co-partitioned with a bead in a droplet.

- Reverse Transcription & Barcoding: Within each droplet, cells are lysed, and mRNA is reverse-transcribed. The cDNA molecules are tagged with the cell-specific barcode and UMI.

- cDNA Amplification & Library Construction: Break droplets, pool cDNA, and amplify by PCR. The amplified cDNA is then split for two libraries:

  - 5' Gene Expression Library: Fragmentation and attachment of sample index via PCR.

  - V(D)J Enrichment Library: Target-specific PCR for TCR or Ig constant regions, followed by fragmentation and indexing.

- Sequencing: Pool libraries and sequence on Illumina NovaSeq. Recommended depth: ≥20,000 reads/cell for gene expression; ≥5,000 reads/cell for V(D)J.
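To make these depth targets concrete, the arithmetic below assumes a hypothetical sample with 10,000 recovered cells (inside the 5,000-20,000 range given in Table 1) and applies the recommended minimums:

```latex
\begin{aligned}
N_{\text{GEX}} &= 10^{4}\ \text{cells} \times 2\times10^{4}\ \tfrac{\text{reads}}{\text{cell}} = 2\times10^{8}\ \text{reads} \\
N_{\text{V(D)J}} &= 10^{4}\ \text{cells} \times 5\times10^{3}\ \tfrac{\text{reads}}{\text{cell}} = 5\times10^{7}\ \text{reads} \\
N_{\text{total}} &= N_{\text{GEX}} + N_{\text{V(D)J}} = 2.5\times10^{8}\ \text{reads per sample}
\end{aligned}
```

Both figures scale linearly with the number of cells, so doubling the cell count doubles the required reads.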

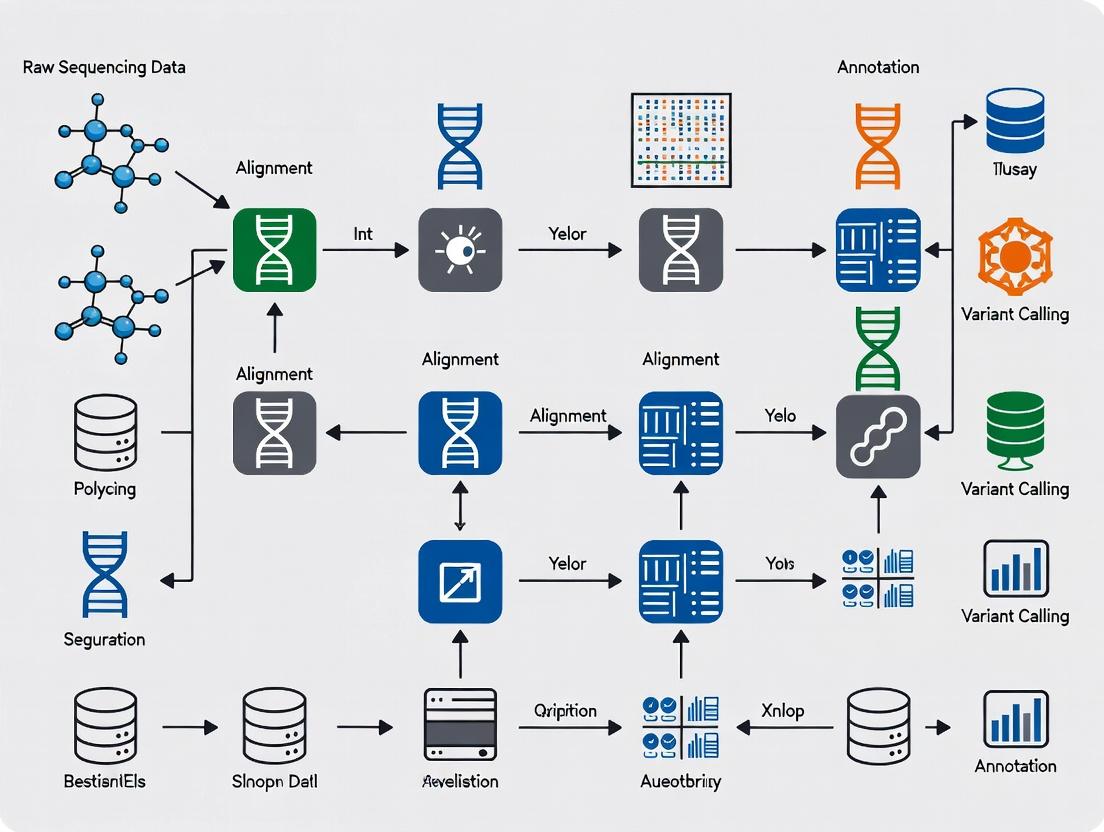

Visualization of Workflows and Relationships

Figure: NGS Immunology Data Generation and HPC Convergence

Figure: HPC-Parallelized Analytical Pipeline for Immunology Data

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents & Kits for NGS Immunology Experiments

| Category | Item / Kit Name (Example) | Primary Function in Protocol |
|---|---|---|
| Cell Isolation | Ficoll-Paque PLUS | Density gradient medium for PBMC isolation from whole blood. |
| Cell Isolation | MACS MicroBeads (e.g., anti-CD19, anti-CD3) | Magnetic beads for positive or negative selection of specific lymphocyte populations. |
| Nucleic Acid Handling | TRIzol LS Reagent | Simultaneous isolation of high-quality RNA, DNA, and proteins from liquid samples (e.g., blood, cell suspensions). |
| Nucleic Acid Handling | SMARTer Human BCR/TCR Kits (Takara Bio) | For bulk AIRR-seq: cDNA synthesis with template switching and PCR amplification of Ig/TCR regions. |
| Single-Cell Platform | Chromium Next GEM Single Cell 5' Kit (10x Genomics) | Core reagent kit for partitioning cells, barcoding cDNA, and generating libraries for 5' gene expression + V(D)J. |
| Single-Cell Platform | Chromium Single Cell Human TCR/BCR Ab Kits | For enriching and constructing V(D)J libraries from the same cells as the gene expression assay. |
| Library Prep & QC | KAPA HyperPrep Kit | For robust, high-yield Illumina library construction from fragmented DNA. |
| Library Prep & QC | Agilent High Sensitivity DNA Kit | For precise quantification and size distribution analysis of NGS libraries on a Bioanalyzer. |
| Sequencing | Illumina NovaSeq 6000 S4 Reagent Kit | High-output flow cell and reagents for deep sequencing of multiplexed libraries. |
| Data Analysis | Cell Ranger Suite (10x Genomics) | Primary analysis pipeline for demultiplexing, barcode processing, alignment, and feature counting of single-cell data. |
| Data Analysis | Immune-specific R/Python Packages (scirpy, Immunarch) | Secondary analysis toolkits for repertoire analysis, clonal tracking, and integration with transcriptome data. |

The analysis of Next-Generation Sequencing (NGS) data in immunology, particularly for repertoire sequencing (AIRR-Seq) of B-cell and T-cell receptors, presents a monumental computational challenge. A single experiment can generate terabytes of data, and the serial processing of these datasets creates a critical bottleneck. High-Performance Computing (HPC) parallelization is no longer optional but essential for advancing research in vaccine development, cancer immunotherapy, and autoimmune disease profiling. This guide details the core parallel computing paradigms—shared and distributed memory models, implemented via OpenMP and MPI—that are fundamental to accelerating the workflows in this field.

Foundational Parallel Computing Models

Shared Memory Architecture

In a shared memory system, multiple processors (or cores) operate independently but share the same, globally accessible memory space. This architecture is typical of modern multi-core servers and workstations. The primary advantage is simplified data management, as any processor can directly access any memory location without explicit data transfers. However, scalability is limited by hardware constraints (memory bandwidth, cache coherence) and the need for careful synchronization to avoid race conditions.

Distributed Memory Architecture

A distributed memory system consists of a network of independent nodes, each with its own local memory. Processors on one node cannot directly access the memory of another node; communication must occur via explicit message passing over the network. This model offers superior scalability, allowing the integration of hundreds or thousands of nodes to tackle massive problems, albeit with increased programming complexity due to the need for data partitioning and communication.

Comparative Analysis: Shared vs. Distributed Memory

Table 1: Comparison of Shared and Distributed Memory Architectures

| Feature | Shared Memory (e.g., Multi-core CPU) | Distributed Memory (e.g., Compute Cluster) |
|---|---|---|
| Memory Access | Single global address space (access times may be non-uniform, i.e., NUMA, on multi-socket nodes). | Each node addresses only its own local memory; remote data arrives via messages. |
| Scalability | Limited to cores/sockets in a single node (dozens). | Highly scalable across many nodes (thousands). |
| Communication | Implicit, via memory reads/writes (fast). | Explicit message passing (network speed). |
| Programming Model | Thread-based (e.g., OpenMP, pthreads). | Process-based (e.g., MPI). |
| Data Consistency | Requires synchronization (locks, barriers). | Each process has independent data. |
| Typical Use Case | Loop parallelization, fine-grained tasks. | Large-scale simulations, embarrassingly parallel data processing. |
| Cost | Lower per-node, higher for large scale-up. | Higher initial setup, better scale-out. |
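The difference between implicit communication through shared memory and explicit message passing can be seen in a small Python sketch. It counts V-gene usage twice: once with threads updating one shared counter under a lock, and once with workers that return private partial counts for a root-side merge, which is the pattern MPI reductions follow. Both variants run on threads only so the example is self-contained; the gene names are illustrative.

```python
"""Two ways to accumulate V-gene usage counts (illustrative sketch).

shared_memory_count: threads update ONE shared Counter, so a lock is required.
message_passing_count: each worker builds a private Counter and returns it;
the root merges them (the pattern behind an MPI reduction), so no lock is needed.
Both use threads only to keep the example self-contained.
"""
import threading
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def chunks(items, n):
    """Split items into n contiguous chunks whose sizes differ by at most one."""
    k, r = divmod(len(items), n)
    out, start = [], 0
    for i in range(n):
        end = start + k + (1 if i < r else 0)
        out.append(items[start:end])
        start = end
    return out


def shared_memory_count(v_calls, n_workers=4):
    counts = Counter()        # a single object visible to every thread
    lock = threading.Lock()   # serializes updates to avoid race conditions

    def work(chunk):
        for v in chunk:
            with lock:
                counts[v] += 1

    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        list(pool.map(work, chunks(v_calls, n_workers)))  # list() surfaces errors
    return counts


def message_passing_count(v_calls, n_workers=4):
    def work(chunk):
        return Counter(chunk)  # private partial result, "sent" back to the root

    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(pool.map(work, chunks(v_calls, n_workers)))
    total = Counter()
    for partial in partials:   # root-side reduction
        total.update(partial)
    return total


if __name__ == "__main__":
    calls = ["TRBV20-1", "TRBV5-1", "TRBV20-1", "TRBV7-9"] * 1000
    assert shared_memory_count(calls) == message_passing_count(calls)
    print(message_passing_count(calls).most_common(1))
```

The message-passing variant needs no lock because no two workers ever touch the same object; the cost moves to the final merge instead.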

Core Technologies: OpenMP and MPI

OpenMP (Shared Memory Parallelism)

OpenMP (Open Multi-Processing) is an API for shared-memory parallel programming in C, C++, and Fortran. It uses compiler directives (pragmas) to create multi-threaded programs, managing a pool of threads that execute work concurrently.

Key Experiment Protocol: Parallelizing AIRR-Seq Sequence Alignment

- Objective: Accelerate the alignment of millions of short NGS reads to V(D)J reference germline sequences using a Smith-Waterman-like algorithm.

- Methodology:

  - Baseline: Profile a serial alignment code to identify the hotspot loop iterating over read sequences.

  - Parallelization: Insert an OpenMP `#pragma omp parallel for` directive before the loop.

  - Schedule: Apply a `schedule(dynamic)` clause, because read lengths and alignment complexity vary from read to read.

  - Reduction: Use a `reduction(+:total_matches)` clause to safely accumulate global statistics.

  - Compilation: Compile with `-fopenmp` (GCC) or `-qopenmp` (Intel).

- Expected Outcome: Near-linear speedup on a multi-core server, reducing alignment time from hours to minutes for a standard dataset.
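The structure of this protocol can be mirrored in Python to show what each OpenMP clause contributes. The sketch below is an analogue, not OpenMP: a thread pool stands in for `parallel for`, one task per read gives dynamic load balancing, and summing the per-read results plays the role of the reduction. The scoring parameters (match 2, mismatch -1, linear gap -2) are arbitrary assumptions, and because of CPython's global interpreter lock this pure-Python kernel will not run faster on more threads; real speedups require compiled OpenMP code or separate processes.

```python
"""Python analogue of the OpenMP alignment loop (structure only).

Mapping to the OpenMP protocol (an analogy, not an equivalence):
  #pragma omp parallel for   -> ThreadPoolExecutor over reads
  schedule(dynamic)          -> one task per read; idle workers take the next
  reduction(+:total_matches) -> per-read 0/1 results summed at the end
"""
from concurrent.futures import ThreadPoolExecutor


def smith_waterman_score(a, b, match=2, mismatch=-1, gap=-2):
    """Best local alignment score with a linear gap penalty, O(len(a)*len(b))."""
    prev = [0] * (len(b) + 1)
    best = 0
    for i in range(1, len(a) + 1):
        curr = [0] * (len(b) + 1)
        for j in range(1, len(b) + 1):
            diag = prev[j - 1] + (match if a[i - 1] == b[j - 1] else mismatch)
            curr[j] = max(0, diag, prev[j] + gap, curr[j - 1] + gap)
            best = max(best, curr[j])
        prev = curr
    return best


def count_matches(reads, germline, min_score, n_threads=8):
    """Count reads whose best local score against `germline` reaches min_score."""
    def is_match(read):
        return 1 if smith_waterman_score(read, germline) >= min_score else 0

    with ThreadPoolExecutor(max_workers=n_threads) as pool:
        # map() submits one task per read, so uneven read lengths balance out
        total_matches = sum(pool.map(is_match, reads))
    return total_matches
```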

Figure: OpenMP Fork-Join Execution Model

MPI (Distributed Memory Parallelism)

The Message Passing Interface (MPI) is a standardized, portable library for writing parallel programs that run on distributed memory systems. It coordinates multiple processes, each with separate address spaces, communicating through send/receive operations.

Key Experiment Protocol: Distributed Clustering of T-Cell Clones

- Objective: Perform hierarchical clustering on a massive distance matrix derived from millions of unique T-cell receptor (TCR) sequences across a cluster.

- Methodology:

  - Partitioning (MPI_Scatter): The root process (Rank 0) reads the full dataset and distributes subsets of TCR sequences to all processes. Because every distance involves two sequences, each process also needs the full sequence set (e.g., via MPI_Bcast).

  - Local Computation: Each process computes the block of pairwise distances between its local sequences and all sequences.

  - Global Reduction (MPI_Reduce/MPI_Gather): Local distance blocks or cluster centroids are collected on the root process for global analysis.

  - Synchronization: Use `MPI_Barrier` to ensure all processes reach the same point.

  - Execution: Launch with `mpirun -np 256 ./clustering_program`.

- Expected Outcome: The task scales across hundreds of processes spread over many nodes, processing datasets intractable for a single machine.
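The scatter, local-compute, and gather steps can be traced in a single-process Python simulation. Each simulated rank receives a contiguous block of rows and computes distances from its local CDR3 sequences to all sequences, so concatenating the blocks in rank order rebuilds the full matrix. Levenshtein distance and a union-find single-linkage cutoff are illustrative choices, not the protocol's prescribed algorithm; with mpi4py, the same steps would correspond to `comm.scatter`, `comm.bcast`, and `comm.gather`.

```python
"""Single-process simulation of scatter -> local compute -> gather (sketch).

Ranks are simulated by looping over row blocks; no MPI is involved.
"""


def levenshtein(a, b):
    """Edit distance between two CDR3 strings (insertions, deletions, substitutions)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        curr = [i]
        for j, cb in enumerate(b, 1):
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + (ca != cb)))
        prev = curr
    return prev[-1]


def scatter_rows(n_rows, n_ranks):
    """Root side: contiguous row ranges per rank, sizes differing by at most one."""
    k, r = divmod(n_rows, n_ranks)
    bounds, start = [], 0
    for rank in range(n_ranks):
        end = start + k + (1 if rank < r else 0)
        bounds.append(range(start, end))
        start = end
    return bounds


def local_block(rows, seqs):
    """Rank side: distances from this rank's sequences to EVERY sequence."""
    return [[levenshtein(seqs[i], s) for s in seqs] for i in rows]


def distributed_distance_matrix(seqs, n_ranks=4):
    blocks = [local_block(rows, seqs) for rows in scatter_rows(len(seqs), n_ranks)]
    return [row for block in blocks for row in block]  # gather in rank order


def single_linkage(dist, cutoff):
    """Union-find: sequences linked by distances <= cutoff share a cluster label."""
    parent = list(range(len(dist)))

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    for i in range(len(dist)):
        for j in range(i + 1, len(dist)):
            if dist[i][j] <= cutoff:
                parent[find(i)] = find(j)
    return [find(i) for i in range(len(dist))]
```

Note that the full matrix grows quadratically with the number of sequences, which is why the protocol keeps each block on a separate process.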

Figure: MPI Distributed Memory Communication

Hybrid MPI+OpenMP for NGS Immunology Pipelines

The most powerful approach for complex NGS immunology workflows is a hybrid model. This leverages MPI for coarse-grained, inter-node parallelism (e.g., processing different samples or genomic regions on different cluster nodes) and OpenMP for fine-grained, intra-node parallelism (e.g., multi-threading the alignment of reads within a single sample on a node's many cores).

Example Workflow: Hybrid Pipeline for Repertoire Analysis

- MPI Level: Different processes handle different patient samples or batches of FASTQ files.

- OpenMP Level: Within each process, threads parallelize quality control, adapter trimming, and sequence alignment steps.
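A minimal planning sketch of the two levels, assuming the 8-node, 4-tasks-per-node, 8-threads-per-task layout used in Table 2: samples are dealt round-robin to MPI ranks, and each rank splits its reads into contiguous ranges for its threads. The helper names are hypothetical, and nothing here launches real MPI or OpenMP work.

```python
"""Plan a two-level (hybrid) decomposition: samples -> ranks, reads -> threads.

Hypothetical helpers for illustration; ranks stand in for MPI processes and
threads for OpenMP threads. Only the work split is computed.
"""


def assign_samples(samples, n_ranks):
    """Coarse level (MPI-like): deal samples round-robin over ranks."""
    plan = {rank: [] for rank in range(n_ranks)}
    for i, sample in enumerate(samples):
        plan[i % n_ranks].append(sample)
    return plan


def thread_ranges(n_reads, n_threads):
    """Fine level (like a static OpenMP split): contiguous read index ranges."""
    k, r = divmod(n_reads, n_threads)
    ranges, start = [], 0
    for t in range(n_threads):
        end = start + k + (1 if t < r else 0)
        ranges.append((start, end))
        start = end
    return ranges


if __name__ == "__main__":
    nodes, tasks_per_node, threads_per_task = 8, 4, 8
    n_ranks = nodes * tasks_per_node  # 32 MPI ranks
    samples = [f"patient_{i:02d}" for i in range(40)]
    plan = assign_samples(samples, n_ranks)
    print(len(plan), "ranks;", n_ranks * threads_per_task, "cores in total")
    print(plan[0], thread_ranges(1_000_000, threads_per_task)[:2])
```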

Table 2: Performance Comparison of Parallel Models on a Simulated AIRR-Seq Dataset (100M Reads)

| Parallel Model | Hardware Configuration | Execution Time | Speedup (vs. Serial) | Parallel Efficiency | Best For Stage |
|---|---|---|---|---|---|
| Serial Baseline | 1 CPU core | 12.5 hours | 1.0x | 100% | N/A |
| Pure OpenMP | 1 node, 32 cores | 0.52 hours | 24.0x | 75% | Read Alignment, Quality Filtering |
| Pure MPI | 32 nodes, 1 core/node | 0.48 hours | 26.0x | 81% | Embarrassingly parallel sample processing |
| Hybrid (MPI+OpenMP) | 8 nodes, 4 MPI tasks/node, 8 threads/task (256 cores) | 0.22 hours | 56.8x | 22% | End-to-End Multi-Sample Pipeline |
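Speedup and parallel efficiency follow directly from the wall-clock times: speedup is the serial time divided by the parallel time, and efficiency is speedup divided by the total number of cores. The short sketch below recomputes both for each configuration in the table, counting the hybrid run as 8 x 4 x 8 = 256 cores.

```python
"""Recompute speedup S = T_serial / T_parallel and efficiency E = S / cores."""


def speedup_and_efficiency(t_serial, t_parallel, cores):
    s = t_serial / t_parallel
    return s, s / cores


T_SERIAL_HOURS = 12.5
runs = {  # (wall-clock hours, total cores) from the table above
    "Pure OpenMP": (0.52, 32),
    "Pure MPI": (0.48, 32),
    "Hybrid (MPI+OpenMP)": (0.22, 8 * 4 * 8),
}

if __name__ == "__main__":
    for name, (hours, cores) in runs.items():
        s, e = speedup_and_efficiency(T_SERIAL_HOURS, hours, cores)
        print(f"{name}: {s:.1f}x speedup, {e:.0%} efficiency on {cores} cores")
```

Efficiency always has to be read together with the core count: a configuration can be the fastest overall while using each core less effectively.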

Figure: Hybrid MPI+OpenMP NGS Workflow

The Scientist's Toolkit: Essential Research Reagents & HPC Solutions

Table 3: Key Computational "Reagents" for Parallel NGS Immunology Research

| Item / Tool | Category | Function in the "Experiment" |
|---|---|---|
| Slurm / PBS Pro | Job Scheduler | Manages resources and queues computational jobs on an HPC cluster, allocating nodes for MPI/OpenMP tasks. |
| Intel MPI / Open MPI | MPI Implementation | Provides the library for distributed memory programming, enabling communication between processes across nodes. |
| GCC / Intel Compiler | Compiler Suite | Compiles source code with OpenMP support (e.g., -fopenmp) and links MPI libraries, usually through the mpicc/mpicxx wrapper compilers. |
| Performance Profiler | Diagnostic Tool | Identifies bottlenecks (e.g., perf, Intel VTune, Scalasca). Critical for optimizing parallel efficiency. |
| AIRR-Compliant Tools | Domain Software | Parallelized immunogenomics software (e.g., MiXCR, pRESTO) that may use OpenMP/MPI internally for acceleration. |
| Container Runtime | Deployment Tool | Ensures reproducible software environments across HPC nodes (e.g., Singularity/Apptainer, Docker). |
| Parallel File System | Data Management | Provides high-speed, concurrent access to large NGS datasets from all compute nodes (e.g., Lustre, GPFS). |
| Version Control (Git) | Code Management | Tracks changes in custom parallel analysis scripts, enabling collaboration and reproducibility. |

Transitioning from serial to parallel processing is the decisive step in breaking the computational bottleneck in NGS immunology research. The strategic application of shared memory (OpenMP) and distributed memory (MPI) models—often in a hybrid combination—enables researchers to scale analyses from single workstations to vast clusters. This directly accelerates the discovery pipeline, from characterizing adaptive immune responses to identifying therapeutic targets, ultimately reducing the time from sequencing data to actionable immunological insight. Mastering these core HPC concepts is fundamental for any research team aiming to leverage the full potential of modern immunogenomics data.

Within the context of a thesis on High-Performance Computing (HPC) parallelization for Next-Generation Sequencing (NGS) immunology data research, selecting the appropriate computational framework is critical. Immunology studies, such as T-cell receptor repertoire analysis, single-cell RNA sequencing of immune cells, and vaccine development pipelines, generate massive, complex datasets. This guide provides an in-depth technical comparison of four dominant parallelized frameworks—Apache Spark, Apache Hadoop, Nextflow, and Snakemake—for orchestrating these workloads on HPC clusters.

Framework Core Architectures & Suitability for Immunology

Apache Hadoop

Hadoop is a distributed storage and batch processing framework based on the MapReduce programming model. Its core components are the Hadoop Distributed File System (HDFS) and Yet Another Resource Negotiator (YARN). It excels at processing extremely large, immutable datasets through a fault-tolerant, disk-oriented parallelization model.

Apache Spark

Spark is an in-memory, distributed data processing engine designed for speed. It extends the MapReduce model with Resilient Distributed Datasets (RDDs) and DataFrames, supporting iterative algorithms, interactive queries, and stream processing, which is valuable for iterative machine learning on immunogenomic data.

Nextflow

Nextflow is a reactive workflow framework and domain-specific language (DSL) designed for scalable and reproducible computational pipelines. It is agnostic to the underlying execution platform (HPC schedulers, cloud) and uses a dataflow model, making it ideal for complex, multi-step NGS immunology pipelines.

Snakemake

Snakemake is a workflow management system based on Python. It uses a rule-based syntax to define workflows, which are then executed as a directed acyclic graph (DAG). It is tightly integrated with HPC schedulers and Conda environments, promoting reproducibility in bioinformatics analysis.

Quantitative Framework Comparison

Table 1: Core Technical Specifications & Suitability

| Feature | Apache Hadoop | Apache Spark | Nextflow | Snakemake |
|---|---|---|---|---|
| Primary Paradigm | Batch Processing (MapReduce) | In-Memory Data Processing | Dataflow / Reactive Workflow | Rule-Based Workflow (DAG) |
| Execution Model | Disk I/O Intensive | In-Memory Iterative | Process-Centric / Dataflow | Rule-Centric / DAG |
| Language | Java (API in Java, Python, etc.) | Scala (API in Java, Python, R, SQL) | DSL (Groovy-based) | Python-based DSL |
| Scheduling | YARN | Standalone, YARN, Mesos, Kubernetes | Built-in (via executors for SLURM, SGE, etc.) | Built-in (for SLURM, SGE, etc.) |
| Best For | Large-scale log processing, historical batch ETL | Iterative ML (e.g., clustering immune cell populations), real-time analytics | Complex, portable NGS pipelines (e.g., full genome immunogenomics) | Modular, reproducible NGS analysis steps (e.g., variant calling) |
| Key Strength | Fault tolerance on commodity hardware, proven at petabyte scale | Speed for iterative algorithms, rich libraries (MLlib, GraphX) | Portability, implicit parallelism, rich tooling (Wave, Tower) | Readability, integration with Python ecosystem, Conda support |
| Immunology Use Case | Archival & batch processing of raw sequencing data from large cohorts | Machine learning on immune repertoire diversity metrics | End-to-end single-cell immune profiling pipeline (Cell Ranger → Seurat) | ChIP-seq or ATAC-seq analysis for immune cell epigenomics |

Table 2: Performance & Usability Metrics (Representative Benchmarks)

| Metric | Apache Hadoop | Apache Spark | Nextflow | Snakemake |
|---|---|---|---|---|
| Learning Curve | Steep | Moderate | Moderate | Gentle (for Python users) |
| Fault Tolerance | High (task re-execution) | High (RDD lineage) | High (process retry, checkpointing) | High (rule retry) |
| Data Handling | HDFS (large files) | HDFS, S3, Cassandra, etc. | Local, S3, Google Storage, iRODS | Local, cloud (via plugins) |
| Community in Bioinfo | Low (general big data) | Growing (ADAM, Glow projects) | Very High (nf-core) | Very High (widely adopted) |
| Typical Latency | Minutes to Hours | Seconds to Minutes (in-memory) | Minutes (process overhead) | Minutes |

Experimental Protocols for Immunology Pipelines

Protocol 1: Scalable TCR Repertoire Analysis with Spark

Objective: To analyze T-cell receptor (TCR) sequencing data from multiple patients in parallel to identify clonal expansions.

- Data Ingestion: Store FASTQ or annotated TSV files in a distributed storage system (e.g., HDFS, S3).

- Spark Session Initialization: Launch a Spark session on a YARN or Kubernetes cluster with allocated executors.

- Data Loading: Use `spark.read.csv()` (with `sep="\t"` and `header=True` for tab-delimited AIRR files) or a specialized genomics library (e.g., Glow) to load data as a DataFrame.

- Parallel Processing: Perform operations such as grouping by CDR3 amino acid sequence, counting reads per clone, and calculating clonality metrics using DataFrame transformations (`groupBy`, `agg`).

- Downstream Analysis: Persist results for further statistical analysis or visualization. Use Spark MLlib to cluster similar repertoires across patients.
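Before scaling out on Spark, the aggregation logic can be prototyped in plain Python. The sketch below sums read counts per (patient, CDR3) pair, roughly what `df.groupBy("patient_id", "junction_aa").agg(F.sum("duplicate_count"))` would do in PySpark, and then scores each repertoire's clonality. The column names follow AIRR Rearrangement conventions and are assumptions about the input files; clonality is defined here as 1 minus Shannon entropy normalized by the log of the number of clones, one common definition among several.

```python
"""Plain-Python prototype of per-patient clone aggregation and clonality."""
import math
from collections import defaultdict


def clone_counts(rows):
    """rows: dicts with patient_id, junction_aa, duplicate_count (assumed columns)."""
    counts = defaultdict(lambda: defaultdict(int))
    for r in rows:
        counts[r["patient_id"]][r["junction_aa"]] += int(r["duplicate_count"])
    return counts


def clonality(clone_sizes):
    """1 - H / ln(n): 0 for a perfectly even repertoire, 1 for a single clone."""
    total = sum(clone_sizes)
    n = len(clone_sizes)
    if n < 2:
        return 1.0
    entropy = -sum((c / total) * math.log(c / total) for c in clone_sizes if c)
    return 1 - entropy / math.log(n)


if __name__ == "__main__":
    rows = [
        {"patient_id": "P1", "junction_aa": "CASSLGF", "duplicate_count": "90"},
        {"patient_id": "P1", "junction_aa": "CAWSVF", "duplicate_count": "10"},
    ]
    for patient, clones in clone_counts(rows).items():
        print(patient, round(clonality(list(clones.values())), 3))
```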

Protocol 2: Reproducible Single-Cell RNA-Seq Pipeline with Nextflow

Objective: To create a portable, scalable workflow for processing 10x Genomics single-cell immune cell data.

- Pipeline Definition: Write a `main.nf` script defining processes (e.g., `CELLRANGER_COUNT`, `SEURAT_ANALYSIS`).

- Configuration: Specify pipeline parameters (inputs, references) in a `nextflow.config` file, and define a `slurm` profile there that sets `process.executor = 'slurm'` together with memory and CPU directives.

- Execution: Launch with `nextflow run main.nf -profile slurm`. Nextflow manages job submission, monitoring, and consolidation of outputs.

- Resumption: Use the `-resume` flag to continue from cached results after interruptions.
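The idea behind `-resume` is that each task is identified by a hash of what went into it, so unchanged tasks can reuse earlier outputs. The toy cache below illustrates that idea only: it hashes the script text and input values with SHA-256, whereas Nextflow's actual cache keys include more (for example, input file metadata) and differ in detail. The class and its methods are hypothetical.

```python
"""Toy model of hash-keyed task caching, the idea behind -resume (hypothetical)."""
import hashlib
import json


class TaskCache:
    def __init__(self):
        self.store = {}  # task key -> cached output
        self.runs = 0    # number of tasks actually executed

    @staticmethod
    def key(script, inputs):
        """Stable key: SHA-256 over the script text and the sorted input values."""
        payload = json.dumps({"script": script, "inputs": inputs}, sort_keys=True)
        return hashlib.sha256(payload.encode()).hexdigest()

    def run(self, script, inputs, fn):
        """Execute fn(**inputs) only if this (script, inputs) pair is not cached."""
        k = self.key(script, inputs)
        if k not in self.store:
            self.runs += 1
            self.store[k] = fn(**inputs)
        return self.store[k]
```

Changing either the script or any input produces a new key, so only the affected task (and, in a real pipeline, its downstream tasks) is re-run.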

Protocol 3: Epigenomic Analysis Workflow with Snakemake on HPC

Objective: To design a modular ATAC-seq workflow for identifying open chromatin regions in dendritic cells.

- Rule Creation: Write a `Snakefile` with rules for each step: `trim_reads`, `align_bwa`, `call_peaks_macs2`, `annotate_peaks`.

- Input/Output Declaration: Define rule inputs and outputs to establish dependencies. Use wildcards (e.g., `{sample}`) for sample-level parallelization.

- Cluster Configuration: Create a `cluster.json` configuration (or, in newer releases, a profile) to submit each rule's jobs to a SLURM scheduler with resource requests.

- Execution & Scaling: Run with `snakemake --cluster "sbatch" --jobs 12`. Snakemake will submit up to 12 jobs concurrently, respecting dependencies. This is Snakemake 7 syntax; Snakemake 8 and later replace `--cluster` with executor plugins such as `--executor slurm`.
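How wildcards and `--jobs` interact can be sketched with Python's standard `graphlib` module (Python 3.9+). Each rule is instantiated once per sample, every job depends on the previous rule for the same sample, and jobs are released in waves of at most `max_jobs` whose prerequisites are finished. This is a simplified model of the behavior described above, not Snakemake's actual scheduler.

```python
"""Wildcard expansion plus dependency-respecting, job-limited scheduling (sketch)."""
from graphlib import TopologicalSorter

RULES = ["trim_reads", "align_bwa", "call_peaks_macs2", "annotate_peaks"]


def build_dag(samples):
    """Map each (rule, sample) job to its prerequisite jobs: one chain per sample."""
    dag = {}
    for s in samples:
        for prev, rule in zip([None] + RULES[:-1], RULES):
            dag[(rule, s)] = set() if prev is None else {(prev, s)}
    return dag


def schedule(dag, max_jobs):
    """Release ready jobs in waves of at most max_jobs, as with --jobs."""
    ts = TopologicalSorter(dag)
    ts.prepare()
    waves, ready = [], []
    while ts.is_active():
        ready.extend(ts.get_ready())
        wave, ready = ready[:max_jobs], ready[max_jobs:]
        waves.append(wave)
        for job in wave:  # pretend every job in the wave finished
            ts.done(job)
    return waves


if __name__ == "__main__":
    for i, wave in enumerate(schedule(build_dag(["S1", "S2", "S3"]), max_jobs=2)):
        print(i, wave)
```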

Visualizing Framework Workflows

Figure: Parallel Data Processing and Workflow DAG Models

Figure: Framework Interaction with HPC Schedulers

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational & Data Resources for NGS Immunology

| Item | Function in Immunology Research | Example/Format |
|---|---|---|
| Reference Genome | Baseline for alignment of sequencing reads; defines gene models and coordinates. | GRCh38 (human), GRCm39 (mouse); FASTA file + GTF annotation. |
| Immune-Specific Databases | Curated sets of immune gene sequences, receptors, and epitopes for annotation. | IMGT/GENE-DB (antibodies/TCRs), Immune Epitope Database (IEDB). |
| Cell Ranger Reference | Pre-processed genome reference package for 10x Genomics immune profiling pipelines. | refdata-gex-GRCh38-2020-A.tar.gz (includes pre-mRNA sequences). |
| Conda/Bioconda Environment | Reproducible, version-controlled installation of bioinformatics software stacks. | environment.yml file specifying versions of Cell Ranger, Seurat, etc. |
| Container Images (Docker/Singularity) | Encapsulated, portable software environments ensuring identical analysis runs. | Singularity .sif images for Nextflow/nf-core pipelines on HPC. |
| Sample Manifest File | Metadata linking biological samples to data files and experimental conditions. | CSV file with columns: sample_id, patient_id, fastq_path, phenotype. |

High-throughput sequencing of the adaptive immune repertoire (AIR) generates immense, complex datasets. Core analytical challenges—clonal expansion analysis, V(D)J recombination profiling, and high-resolution HLA typing—are computationally intensive and inherently parallelizable. This whitepaper details these challenges and their methodologies within the thesis that High-Performance Computing (HPC) parallelization is critical for scaling NGS-based immunology research, enabling real-time analytics for vaccine development, cancer immunotherapy, and autoimmune disease monitoring.

Core Challenges & Quantitative Landscape

The scale of data generation and analysis for immune repertoire sequencing (Rep-Seq) and HLA typing presents specific computational bottlenecks suitable for HPC decomposition.

Table 1: Quantitative Demands of Immunology NGS Analysis

| Analysis Task | Typical Data Volume Per Sample | Key Computational Steps | Primary HPC Parallelization Target |
|---|---|---|---|
| V(D)J Recombination & Clonotype Assembly | 1-10 GB (RNA-seq) | Read alignment, CDR3 extraction, clonotype clustering | Embarrassingly parallel per sample; multi-threaded alignment (e.g., IgBLAST). |
| Clonal Expansion Dynamics | 10,000 - 1,000,000+ unique clonotypes | Diversity indices, lineage tracking, statistical comparison | Batch processing of multiple timepoints/samples; Monte Carlo simulations. |
| High-Resolution HLA Typing | 5-15 GB (WES/RNA-seq) | Read mapping to polymorphic loci, allele calling, phasing | Concurrent analysis of multiple HLA loci; genotype imputation pipelines. |

Table 2: Current Tool Performance Benchmarks (2024)

| Tool/Algorithm | Primary Use | Runtime (Single Sample, Typical) | Memory Footprint |
|---|---|---|---|
| MiXCR | V(D)J alignment & clonotyping | 15-30 minutes | 8-16 GB |
| IMGT/HighV-QUEST | Germline alignment & annotation | 1-2 hours (via web) | N/A (Web Service) |
| OptiType | HLA class I typing from RNA-seq or DNA-seq (WES/WGS) | 30-60 minutes | 4-8 GB |
| arcasHLA | HLA typing from RNA-seq | 1-2 hours | 8-12 GB |
| ImmunoSEQ Analyzer | Commercial clonal analysis | Varies | Cloud-based |

Detailed Experimental Protocols

Protocol: Single-Cell V(D)J Recombination Analysis (10x Genomics Chromium)

Objective: To profile paired T-cell receptor (TCR) or B-cell receptor (BCR) sequences from single cells.

- Cell Preparation: Isolate viable lymphocytes (viability >90%) at a concentration of 700-1200 cells/µL.

- Gel Bead-in-Emulsion (GEM) Generation: Use the Chromium Controller to partition single cells with gel beads containing Unique Molecular Identifiers (UMIs) and cell barcodes.

- Reverse Transcription: Within each GEM, poly-adenylated RNA (including V(D)J transcript) is reverse-transcribed into cDNA, incorporating the cell barcode and UMI.

- Library Construction: Perform two separate PCR amplifications: one for the 5’ gene expression library and one for the V(D)J-enriched library using locus-specific primers.

- Sequencing: Run on Illumina NovaSeq (Recommended: 150 bp Paired-End). Target: 5,000 read pairs per cell for V(D)J library.

- HPC-Amenable Analysis: Use the multi-threaded Cell Ranger pipeline (`cellranger vdj`) for alignment (to GRCh38 + IMGT reference), contig assembly, and clonotype calling. Downstream, cluster clonotypes by CDR3 amino acid sequence.
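Downstream grouping by CDR3 can be sketched as follows, assuming per-contig rows with `barcode`, `chain`, `cdr3`, and an optional `productive` field (names modeled loosely on contig annotation tables; check them against the actual output). Cells are grouped by their exact (TRA, TRB) CDR3 pair. This is a simplified exact-match rule, not Cell Ranger's own clonotype algorithm.

```python
"""Group cells into clonotypes by paired CDR3 amino-acid sequences (sketch)."""
from collections import defaultdict


def paired_clonotypes(contigs):
    """Return [((TRA CDR3, TRB CDR3), [barcodes])], largest clonotype first."""
    cells = defaultdict(dict)
    for c in contigs:
        # CSV values are strings, so compare text instead of relying on truthiness
        if str(c.get("productive", True)).lower() == "true":
            # if a cell has several contigs for one chain, this keeps the last one
            cells[c["barcode"]][c["chain"]] = c["cdr3"]
    clonotypes = defaultdict(list)
    for barcode, chains in cells.items():
        # a missing chain is recorded as None rather than discarding the cell
        clonotypes[(chains.get("TRA"), chains.get("TRB"))].append(barcode)
    return sorted(clonotypes.items(), key=lambda kv: len(kv[1]), reverse=True)
```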

Protocol: Bulk Sequencing for Clonal Expansion Tracking

Objective: To quantify T-cell/B-cell clonal dynamics over time or between conditions.

- Sample & Primer Strategy: Isolate genomic DNA or RNA from PBMCs/tissue. Use multiplex PCR primers targeting all known V and J gene segments (e.g., BIOMED-2 protocol).

- Amplification & Sequencing: Amplify rearranged CDR3 regions. Use a two-step PCR to add Illumina adapters and sample indices. Sequence on MiSeq or HiSeq; 2x300 bp reads (available on MiSeq) are recommended for full CDR3 coverage.

- Data Processing (Parallelizable Steps):

  - Preprocessing: Demultiplex samples (parallel by lane).

  - Alignment & Assembly: Use MiXCR in batch mode (`mixcr analyze amplicon`, MiXCR 3.x syntax; MiXCR 4.x uses named presets instead), deploying one job per sample on an HPC cluster.

  - Clonotype Table Generation: Export clonotype tables (reads, UMIs, frequency) for each sample.

- Clonal Expansion Analysis: Merge clonotype tables. Calculate metrics (Shannon entropy, Gini index, clonality). Identify expanded clones (e.g., >5% of repertoire) and track them across longitudinal samples using custom R/Python scripts across multiple compute nodes.
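The Gini index and the 5% expansion rule from the last step can be sketched in Python. The input is assumed to be a mapping from timepoint to clonotype counts (a hypothetical structure); any clone that exceeds 5% of the repertoire at any timepoint is tracked across all timepoints.

```python
"""Gini index and longitudinal tracking of expanded clones (sketch)."""


def gini(counts):
    """0 = perfectly even repertoire; approaches 1 when a few clones dominate."""
    xs = sorted(counts)
    n, total = len(xs), sum(xs)
    if n == 0 or total == 0:
        return 0.0
    weighted = sum((i + 1) * x for i, x in enumerate(xs))
    return (2 * weighted) / (n * total) - (n + 1) / n


def expanded_clones(counts, threshold=0.05):
    """Clonotypes whose frequency exceeds the threshold in one sample."""
    total = sum(counts.values())
    return {c for c, n in counts.items() if n / total > threshold}


def track_expanded(series, threshold=0.05):
    """series: {timepoint: {clonotype: count}} -> {clone: {timepoint: frequency}}."""
    expanded = set()
    for counts in series.values():
        expanded |= expanded_clones(counts, threshold)
    return {
        clone: {tp: counts.get(clone, 0) / sum(counts.values())
                for tp, counts in series.items()}
        for clone in sorted(expanded)
    }
```

Because each timepoint's counts are processed independently until the final union, the per-sample work can be spread over compute nodes before the small tracking table is assembled.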

Protocol: High-Resolution HLA Typing via Whole Exome Sequencing (WES)

Objective: To determine an individual's HLA alleles at nucleotide resolution.

- Library Prep & Sequencing: Perform standard WES (e.g., Illumina Nextera Flex) with a minimum mean coverage of 100x.

- Data Extraction: Extract all reads mapped to the HLA genomic region (chr6:28,510,120-33,480,577, GRCh38), plus unmapped reads, using `samtools`.

- Parallelized Typing:

  - Step A: Run multiple typing algorithms (e.g., `OptiType`, `HLA-HD`, `arcasHLA`) concurrently as separate HPC jobs. OptiType covers HLA class I loci only.

  - Step B: For each tool, the process involves:

    - Alignment: Competitive mapping to a database of all known HLA alleles (e.g., IMGT/HLA).

    - Genotyping: Bayesian or maximum likelihood estimation of the most probable pair of alleles at each locus (A, B, C, DRB1, DQB1, etc.).

- Consensus Calling: Compare results from multiple tools to generate a high-confidence consensus genotype, resolving ambiguities.
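Consensus calling can be as simple as a per-locus majority vote. The sketch below assumes each tool's output has already been parsed into allele pairs per locus, truncates alleles to two-field resolution so that calls at different resolutions compare equal, and reports a locus only when at least two tools agree, otherwise leaving it as `None` (ambiguous). The input structure and function names are hypothetical.

```python
"""Majority-vote consensus of HLA genotypes from several typing tools (sketch)."""
from collections import Counter


def truncate(allele, fields=2):
    """'A*02:01:01:01' -> 'A*02:01': keep the gene and the first `fields` fields."""
    gene, _, rest = allele.partition("*")
    return gene + "*" + ":".join(rest.split(":")[:fields])


def consensus(calls_by_tool, min_agree=2, fields=2):
    """calls_by_tool: {tool: {locus: [allele1, allele2]}} -> {locus: pair or None}."""
    loci = {locus for calls in calls_by_tool.values() for locus in calls}
    result = {}
    for locus in sorted(loci):
        votes = Counter(
            tuple(sorted(truncate(a, fields) for a in calls[locus]))
            for calls in calls_by_tool.values()
            if locus in calls  # tools may type different sets of loci
        )
        pair, n = votes.most_common(1)[0]
        result[locus] = pair if n >= min_agree else None  # None = ambiguous
    return result
```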

Visualizations

Figure: HPC Parallelization Workflow for Immunology NGS Data

Figure: V(D)J Recombination Mechanism & Junctional Diversity

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Kits for Featured Protocols

| Item | Supplier/Example | Primary Function |
|---|---|---|
| Chromium Next GEM Single Cell 5’ Kit v2 | 10x Genomics | Partitions single cells for linked 5’ gene expression and V(D)J sequencing. |
| BIOMED-2 Multiplex PCR Primers | Invitrogen, In-house | Amplifies all possible V-J rearrangements from gDNA for bulk clonality studies. |
| TCR/BCR Immune Panel | Illumina (TruSight) | Hybrid capture-based enrichment of TCR/BCR loci for high-sensitivity detection. |
| HLA Typing Kits (PCR-SSO/SSP) | One Lambda (Thermo Fisher), SeCore | Traditional, non-NGS based typing for validation of NGS results. |
| IMGT/HighV-QUEST Database | IMGT | The international reference for immunoglobulin and TCR allele sequences. |
| Illumina DNA Prep | Illumina | Library preparation for WES, providing input for HLA typing pipelines. |
| PhiX Control v3 | Illumina | Sequencing run spike-in control for low-diversity libraries (e.g., amplicon). |

Building Your Pipeline: A Practical Guide to Parallelizing Immunology NGS Workflows

High-throughput sequencing of immune repertoires (Rep-Seq) and single-cell immune profiling generate complex datasets requiring computationally intensive analysis. Framed within a thesis on High-Performance Computing (HPC) parallelization for NGS immunology data research, this guide dissects the standard immunology NGS workflow into its constituent, parallelizable tasks. The core challenge lies in efficiently mapping the sequential steps of Quality Control (QC), Alignment, Assembly, and Clonotyping to parallel architectures to accelerate insights into adaptive immune responses for vaccine and therapeutic development.

Core Workflow Stages and Parallel Task Mapping

The standard bulk B-cell or T-cell receptor sequencing workflow proceeds through defined stages, each containing tasks with inherent parallelism.

Quality Control (QC) & Preprocessing

Primary Parallel Task: Per-File/Per-Read Processing. QC operates in an "embarrassingly parallel" mode: each input FASTQ file, or even each batch of reads within a file, can be processed independently.

- Key Sub-tasks: Read trimming (adapters, low-quality bases), quality scoring, filtering, and format conversion.

- Parallel Model: Data-level parallelism. Multiple worker nodes/cores process different input files simultaneously with no inter-process communication.
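The independence that makes QC embarrassingly parallel can be sketched in a few lines. The sketch below is a minimal illustration under stated assumptions: it uses Phred+33 qualities, a simple 3' trim below Q20, and a 30 nt length filter. These rules are illustrative and do not reproduce fastp or Trimmomatic behavior. Reads are cut into batches, and each batch is processed with no shared state.

```python
# Minimal sketch of embarrassingly parallel QC: read batches share no state, so each can
# run on any core. The trimming rule (3' trim below Phred 20, then a 30 nt length filter,
# Phred+33 encoding) is an illustrative assumption, not fastp or Trimmomatic behavior.
from concurrent.futures import ProcessPoolExecutor, ThreadPoolExecutor  # threads: handy for quick tests

Read = tuple[str, str, str]  # (header, sequence, quality string)


def trim_3prime(read: Read, min_q: int = 20) -> Read:
    """Drop bases from the 3' end until a base with quality >= min_q is reached."""
    header, seq, qual = read
    end = len(seq)
    while end > 0 and ord(qual[end - 1]) - 33 < min_q:
        end -= 1
    return header, seq[:end], qual[:end]


def qc_batch(batch: list[Read], min_len: int = 30) -> list[Read]:
    """Trim and length-filter one batch; the result depends only on this batch."""
    trimmed = (trim_3prime(r) for r in batch)
    return [r for r in trimmed if len(r[1]) >= min_len]


def parallel_qc(reads: list[Read], n_workers: int = 4, batch_size: int = 10_000,
                executor_cls=ProcessPoolExecutor) -> list[Read]:
    """Split reads into batches, process them concurrently, concatenate in input order."""
    batches = [reads[i:i + batch_size] for i in range(0, len(reads), batch_size)]
    with executor_cls(max_workers=n_workers) as pool:
        results = list(pool.map(qc_batch, batches))  # map() keeps batch order
    return [r for batch in results for r in batch]
```

On a single node, call `parallel_qc` from under an `if __name__ == "__main__":` guard so worker processes can start cleanly. Across nodes, the same independence is what lets a job array give each FASTQ file its own task.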

Alignment

Primary Parallel Task: Partitioned Reference Genome/Transcriptome Mapping. Alignment maps preprocessed reads to reference V(D)J gene segments. Parallelization strategies include:

- Reference Partitioning: Dividing the reference database (e.g., IMGT) across cores, with each core aligning all reads to its subset, followed by result aggregation.

- Read Partitioning (More Common): Distributing reads across cores, with each core aligning its read subset against the entire reference. This is highly efficient for large read sets.

- Seed-and-Extend Parallelization: Within each alignment operation (e.g., using BWA or IgBLAST), the seed-finding and extension steps can be vectorized or multithreaded.
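The read-partitioning strategy can be illustrated with a toy "aligner". Every worker receives the whole reference but only a slice of the reads, and the per-slice results are concatenated in the original order. The k-mer scoring and the `TOY_REFERENCE` sequences are hypothetical stand-ins for IgBLAST/BWA and IMGT. Only the partition, align, and merge structure is meant to carry over.

```python
# Read-partitioning sketch: every worker sees the whole reference but only a slice of reads.
# The k-mer "aligner" and TOY_REFERENCE are hypothetical stand-ins for IgBLAST/BWA and IMGT;
# only the partition -> align -> merge structure is the point.
from concurrent.futures import ProcessPoolExecutor, ThreadPoolExecutor  # threads: handy for quick tests
from functools import partial
from typing import Optional

TOY_REFERENCE = {  # made-up 23 nt fragments, not real IMGT alleles
    "IGHV_toy1": "CAGGTGCAGCTGGTGCAGTCTGG",
    "IGHV_toy2": "GAGGTGCAGCTGTTGGAGTCTGG",
}


def kmers(seq: str, k: int) -> set[str]:
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}


def assign_chunk(reads: list[str], reference: dict[str, str], k: int = 5) -> list[Optional[str]]:
    """Assign each read to the segment sharing the most k-mers (None when nothing is shared)."""
    ref_kmers = {name: kmers(seq, k) for name, seq in sorted(reference.items())}
    hits = []
    for read in reads:
        read_kmers = kmers(read, k)
        best, best_score = None, 0
        for name, ref_set in ref_kmers.items():  # sorted names -> deterministic ties
            score = len(read_kmers & ref_set)
            if score > best_score:
                best, best_score = name, score
        hits.append(best)
    return hits


def partitioned_assign(reads: list[str], reference: dict[str, str], n_chunks: int = 4,
                       executor_cls=ProcessPoolExecutor) -> list[Optional[str]]:
    size = max(1, -(-len(reads) // n_chunks))  # ceiling division, at least 1
    chunks = [reads[i:i + size] for i in range(0, len(reads), size)]
    with executor_cls(max_workers=n_chunks) as pool:
        parts = list(pool.map(partial(assign_chunk, reference=reference), chunks))
    return [hit for part in parts for hit in part]  # merge preserves original read order
```

Because each read is scored independently, the partitioned result is identical to a serial run. That property is what makes read partitioning the common choice.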

Assembly

Primary Parallel Task: Contig Building from Read Overlaps. This stage applies to workflows requiring de novo assembly of complete V(D)J sequences from short reads (e.g., from RNA-Seq data).

- Graph-Based Parallelism: The assembly process builds a de Bruijn graph where nodes represent k-mers. Graph construction and traversal can be parallelized by partitioning the k-mer space or using parallel graph algorithms.

- Sample-Level Parallelism: Each sample's reads are assembled independently, allowing for parallel execution per sample across a cluster.
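Partitioning the k-mer space can be sketched as follows. Each (k-1)-mer node is "owned" by one partition, chosen by a stable hash, so each partition's edges can be built separately and the disjoint pieces unioned. This is a toy under stated assumptions, not the Trinity or SPAdes implementation. For simplicity every partition scans all reads here; a distributed assembler would instead route each k-mer to its owner.

```python
# Sketch of k-mer-space partitioning for de Bruijn graph construction (toy, not the
# Trinity or SPAdes implementation). Each (k-1)-mer node is "owned" by partition
# crc32(node) % P, so each partition's edges can be built on a different core or node and
# the disjoint pieces unioned. zlib.crc32 is used because str hash() is salted per process.
import zlib
from collections import defaultdict


def owner(node: str, n_parts: int) -> int:
    return zlib.crc32(node.encode()) % n_parts


def build_partition(reads: list[str], k: int, part: int, n_parts: int) -> dict[str, set[str]]:
    """Edges prefix -> suffix for every k-mer whose prefix node this partition owns."""
    graph: dict[str, set[str]] = defaultdict(set)
    for read in reads:
        for i in range(len(read) - k + 1):
            kmer = read[i:i + k]
            prefix, suffix = kmer[:-1], kmer[1:]
            if owner(prefix, n_parts) == part:
                graph[prefix].add(suffix)
    return dict(graph)


def build_graph(reads: list[str], k: int, n_parts: int) -> dict[str, set[str]]:
    merged: dict[str, set[str]] = {}
    for part in range(n_parts):  # independent: each call could be a separate task
        merged.update(build_partition(reads, k, part, n_parts))  # node sets are disjoint
    return merged
```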

Clonotyping & Quantification

Primary Parallel Task: Independent Clonotype Inference per Sample. This stage groups identical immune receptor sequences into clonotypes and calculates their abundance.

- Parallel Model: Task-level parallelism. Each processed sample's aligned/assembled sequences are subjected to clonotyping (clustering by sequence identity/similarity) independently. This is a major bottleneck that benefits significantly from HPC distribution.

Quantitative Workflow Benchmarks

The following table summarizes typical computational demands for a standard bulk TCR-seq analysis of 10^8 reads, highlighting stages with high parallelization potential.

Table 1: Computational Profile of Core Immunology NGS Steps

| Workflow Stage | Primary Tool Examples | Approx. CPU Hours (Serial) | Memory Peak (GB) | I/O Intensity | Parallelization Efficiency (Strong Scaling) | Key Parallel Task |

|---|---|---|---|---|---|---|

| QC & Preprocessing | Fastp, Trimmomatic, Cutadapt | 2-4 | 2-4 | High | Very High (0.9+) | Per-file read processing |

| Alignment | IgBLAST, MiXCR, BWA | 20-40 | 8-16 | Medium | High (0.7-0.8) | Partitioned read alignment |

| Assembly | Trinity, SPAdes (Ig-specific) | 60-120 | 32-64+ | High | Medium (0.5-0.7) | Parallel graph traversal |

| Clonotyping | VDJPuzzle, Change-O, scirpy | 10-20 | 4-8 | Low | Very High (0.9+) | Per-sample clustering |
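Table 1's serial CPU hours and strong-scaling efficiency can be turned into a rough wall-clock estimate with T_N = T_1 / (N × E). The sketch below uses the midpoints of the table's ranges (and 0.9 for "0.9+"), and it assumes 32 cores per job. It also assumes the quoted efficiency still holds at 32 cores, which real runs should confirm.

```python
# Worked example: turning Table 1's serial CPU hours and strong-scaling efficiency into a
# wall-clock estimate, T_N = T_1 / (N * E). Inputs are midpoints of the table's ranges;
# 32 cores per job is an assumed allocation.
STAGES = {  # stage: (serial CPU hours, parallel efficiency)
    "QC & Preprocessing": (3.0, 0.9),
    "Alignment": (30.0, 0.75),
    "Assembly": (90.0, 0.6),
    "Clonotyping": (15.0, 0.9),
}


def estimated_wall_hours(serial_cpu_hours: float, cores: int, efficiency: float) -> float:
    if cores < 1 or not 0 < efficiency <= 1:
        raise ValueError("need cores >= 1 and 0 < efficiency <= 1")
    return serial_cpu_hours / (cores * efficiency)


if __name__ == "__main__":
    for stage, (t1, eff) in STAGES.items():
        print(f"{stage:20s} ~{estimated_wall_hours(t1, 32, eff):.2f} h on 32 cores")
```

For alignment, for instance, 30 / (32 × 0.75) = 1.25 h per sample.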

Experimental Protocol: A Parallelized Bulk BCR-Seq Analysis

This protocol outlines a parallelized workflow for bulk B-cell receptor repertoire sequencing analysis on an HPC cluster using a job scheduler (e.g., Slurm).

Objective: To identify and quantify clonal B-cell populations from total RNA of human PBMCs. Sample: 10 samples, paired-end 150bp sequencing on Illumina NovaSeq. HPC Setup: Cluster with 10+ nodes, each with 32 cores and 128GB RAM.

Methodology:

Project Setup & Data Organization:

- Create a structured project directory with subdirectories: /raw_data, /scripts, /results/{qc, aligned, clonotypes}.

- Use a sample sheet (samples.csv) to manage metadata.


Parallelized QC (Array Job):

- Write a single SLURM submission script that deploys an array job (--array=1-10). Each job in the array calls fastp independently on one sample pair.

- Aggregate QC reports using multiqc.

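The per-task logic of such an array job can be sketched in Python. This is a hypothetical helper, and it makes several assumptions: samples.csv has the columns `sample_id,r1,r2` (illustrative names), array indices start at 1 as with --array=1-10, and reports go to results/qc, the directory MultiQC would then be pointed at. The fastp options used (-i/-I, -o/-O, -j, -h, -w) are fastp's standard paired input, paired output, JSON report, HTML report, and thread flags.

```python
# Hypothetical helper for the QC array job: map SLURM_ARRAY_TASK_ID to one row of
# samples.csv and run fastp on that sample pair. Column names sample_id,r1,r2 and the
# results/qc output layout are assumptions for illustration.
import csv
import os
import shlex
import subprocess
from pathlib import Path


def sample_for_task(sheet: Path, task_id: int) -> dict[str, str]:
    with sheet.open(newline="") as fh:
        rows = list(csv.DictReader(fh))
    return rows[task_id - 1]  # --array=1-N is 1-based


def fastp_command(row: dict[str, str], outdir: Path, threads: int) -> list[str]:
    sid = row["sample_id"]
    return ["fastp",
            "-i", row["r1"], "-I", row["r2"],
            "-o", str(outdir / f"{sid}_R1.trimmed.fastq.gz"),
            "-O", str(outdir / f"{sid}_R2.trimmed.fastq.gz"),
            "-j", str(outdir / f"{sid}.fastp.json"),
            "-h", str(outdir / f"{sid}.fastp.html"),
            "-w", str(threads)]


if __name__ == "__main__" and "SLURM_ARRAY_TASK_ID" in os.environ:
    task = int(os.environ["SLURM_ARRAY_TASK_ID"])
    threads = int(os.environ.get("SLURM_CPUS_PER_TASK", "4"))
    outdir = Path("results/qc")
    outdir.mkdir(parents=True, exist_ok=True)
    cmd = fastp_command(sample_for_task(Path("samples.csv"), task), outdir, threads)
    print(shlex.join(cmd))  # log the exact command for reproducibility
    subprocess.run(cmd, check=True)
```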

Parallelized Alignment with IgBLAST:

- Prepare the IgBLAST database (IMGT reference) once in a shared location.

- Launch another array job (1-10). Each job:

a. Formats the IgBLAST command with sample-specific input/output.

b. Runs IgBLAST with multithreading (-num_threads 32).
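A per-sample IgBLAST call might be assembled as below. The database paths are hypothetical and assume the IMGT-derived germline BLAST databases were built once in a shared directory. igblastn expects FASTA input, so QC'd (and, if applicable, merged) reads are assumed to have been converted to FASTA first. `-outfmt 19` requests AIRR rearrangement TSV output in recent IgBLAST releases, and the thread count should match the cores requested from the scheduler.

```python
# Sketch of the per-sample igblastn command. Database file names under db_dir are
# hypothetical; the flags are standard igblastn options.
from pathlib import Path


def igblast_command(query_fasta: Path, out_tsv: Path, db_dir: Path, threads: int = 32) -> list[str]:
    return ["igblastn",
            "-germline_db_V", str(db_dir / "human_V"),
            "-germline_db_D", str(db_dir / "human_D"),
            "-germline_db_J", str(db_dir / "human_J"),
            "-auxiliary_data", str(db_dir / "human_gl.aux"),  # J-gene frame annotation
            "-organism", "human",
            "-ig_seqtype", "Ig",            # use "TCR" for T-cell receptor data
            "-query", str(query_fasta),
            "-outfmt", "19",                # AIRR rearrangement TSV
            "-num_threads", str(threads),
            "-out", str(out_tsv)]
```

In the array job, each task would pass its own sample's FASTA and output path and run the list with `subprocess.run(cmd, check=True)`.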

Parallelized Clonotype Definition:

- Write a Python/R script that loads the IgBLAST output for one sample, performs CDR3 amino acid clustering, and applies a nucleotide similarity threshold (e.g., 95%) to define clonotypes.

- Deploy this script using an array job, with each task processing one sample's alignment file.

- The script outputs a clonotype table per sample (columns: cloneid, CDR3aa, Vgene, Jgene, count).
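The per-sample clonotyping script described above could look roughly like this. The grouping rule is an assumption for illustration and is not Change-O's DefineClones. Records are grouped by V call, J call, and CDR3 length. Within a group, CDR3 nucleotide sequences are joined by single linkage at ≥95% Hamming identity. Input dictionaries use AIRR Rearrangement field names (`v_call`, `j_call`, `cdr3`, `cdr3_aa`), as produced by IgBLAST's AIRR output.

```python
# Sketch of per-sample clonotype definition on AIRR-format IgBLAST output. The rule
# (group by V, J, CDR3 length; single linkage at >= threshold nucleotide identity) is an
# illustrative assumption, not Change-O's DefineClones.
from collections import Counter, defaultdict


def identity(a: str, b: str) -> float:
    """Fraction of matching positions; a and b have equal length by construction."""
    return sum(x == y for x, y in zip(a, b)) / len(a)


def define_clonotypes(records: list[dict[str, str]], threshold: float = 0.95) -> list[dict]:
    groups: dict[tuple, Counter] = defaultdict(Counter)  # (V, J, CDR3 length) -> CDR3 nt counts
    aa_of: dict[str, str] = {}
    for rec in records:
        if not rec.get("cdr3"):
            continue  # no CDR3 call: cannot be clonotyped
        groups[(rec["v_call"], rec["j_call"], len(rec["cdr3"]))][rec["cdr3"]] += 1
        aa_of[rec["cdr3"]] = rec["cdr3_aa"]

    table, clone_id = [], 0
    for (v, j, _), counts in sorted(groups.items()):
        seqs = sorted(counts)
        parent = list(range(len(seqs)))  # union-find forest for single linkage

        def find(i: int) -> int:
            while parent[i] != i:
                parent[i] = parent[parent[i]]  # path halving
                i = parent[i]
            return i

        for a in range(len(seqs)):
            for b in range(a + 1, len(seqs)):  # O(n^2) per group; fine for a sketch
                if identity(seqs[a], seqs[b]) >= threshold:
                    parent[find(a)] = find(b)
        clusters = defaultdict(list)
        for i, seq in enumerate(seqs):
            clusters[find(i)].append(seq)
        for members in sorted(clusters.values()):
            clone_id += 1
            top = max(members, key=lambda s: (counts[s], s))  # most abundant member
            table.append({"cloneid": clone_id, "CDR3aa": aa_of[top], "Vgene": v,
                          "Jgene": j, "count": sum(counts[s] for s in members)})
    return table
```

Each array task would load one sample's IgBLAST TSV (e.g., with `csv.DictReader(..., delimiter="\t")`), call `define_clonotypes`, and write the resulting rows.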

Aggregation and Analysis:

- A final, single-threaded job collates all per-sample clonotype tables, normalizes by total reads, and merges them for cross-sample comparison (e.g., using the alakazam or immunarch R packages).

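The aggregation step can be sketched as a merge of per-sample frequency tables keyed by (CDR3aa, Vgene, Jgene). Passing `total_reads` normalizes by total reads, as the text describes. Without it, the sketch falls back to the sum of clonotype counts, which is an assumption that differs when reads were filtered before clonotyping.

```python
# Sketch of the aggregation job: convert each sample's clonotype counts to frequencies and
# merge on (CDR3aa, Vgene, Jgene). Normalization falls back to the sum of clonotype counts
# when total_reads is not supplied (an assumption).
from collections import defaultdict
from typing import Optional


def merge_samples(per_sample: dict[str, list[dict]],
                  total_reads: Optional[dict[str, int]] = None) -> dict[tuple, dict[str, float]]:
    merged: dict[tuple, dict[str, float]] = defaultdict(dict)
    for sample, table in per_sample.items():
        total = total_reads[sample] if total_reads else sum(row["count"] for row in table)
        if total <= 0:
            continue  # empty sample: nothing to normalize
        for row in table:
            key = (row["CDR3aa"], row["Vgene"], row["Jgene"])
            # several nucleotide-level clones can share one amino-acid key: sum them
            merged[key][sample] = merged[key].get(sample, 0.0) + row["count"] / total
    return dict(merged)
```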

Workflow Visualization

Title: Parallel Task Mapping in Immunology NGS Workflow

The Scientist's Toolkit: Research Reagent & Software Solutions

Table 2: Essential Tools for Immunology NGS Analysis

| Item / Solution | Provider / Project | Primary Function in Workflow |

|---|---|---|

| IMGT/GENE-DB | IMGT | The international standard reference database for immunoglobulin and T-cell receptor gene sequences. Critical for alignment and gene assignment. |

| IgBLAST | NCBI | Specialized alignment tool for V(D)J sequences against IMGT references. Outputs detailed gene annotations. |

| MiXCR | MiLaboratories | Integrated, high-performance software suite that performs all analysis steps (alignment, assembly, clonotyping) with robust parallelization support. |

| Change-O & Alakazam | Immcantation Portal | A Python (Change-O) and R (Alakazam) toolset for advanced post-processing of IgBLAST/MiXCR outputs: clonal clustering, lineage analysis, repertoire statistics. |

| Trimmomatic / fastp | Open Source | Fast, multithreaded pre-processing tools for read trimming and quality control. Enable parallel per-file processing. |

| AIRR Community Standards | AIRR Community | Defines standard file formats for AIRR-seq data (e.g., the AIRR Rearrangement schema) and data representations, enabling interoperability between tools and reproducibility. |

| 10x Genomics Cell Ranger | 10x Genomics | End-to-end analysis pipeline for single-cell immune profiling data (scRNA-seq + V(D)J), optimized for parallelism. |

| immunarch | ImmunoMind | An R package focused on reproducible repertoire analysis and visualization, supporting data from multiple alignment tools. |

The analysis of Next-Generation Sequencing (NGS) data in immunology, particularly for T-cell and B-cell receptor repertoire profiling, presents a computationally intensive challenge. The core thesis of modern immunogenomics research hinges on the effective parallelization of these workloads on High-Performance Computing (HPC) clusters. This guide provides an in-depth technical examination of three pivotal parallel-aware software tools (MiXCR, VDJer, and Cell Ranger), focusing on their algorithms, HPC deployment strategies, and their role in accelerating therapeutic discovery.

Core Tool Architectures & Parallelization Strategies

MiXCR

MiXCR is a comprehensive, Java-based framework for adaptive immune repertoire analysis. Its parallelization is engineered for multi-core and distributed systems.

- Core Algorithm: Employs a multi-stage alignment and assembly pipeline: align, assemble, export.

- Parallelization Model: Uses intrinsic multi-threading (via Java's concurrency libraries) to process individual sequencing reads independently during the alignment stage. On HPC, it can be deployed in an array job paradigm, where different samples or chunks of a single large sample are processed in parallel across cluster nodes.

VDJer

VDJer is a specialized, highly parallel tool for V(D)J recombination analysis from RNA-Seq data.

- Core Algorithm: Leverages de Bruijn graph-based assembly to reconstruct full-length V(D)J sequences from short reads.

- Parallelization Model: Implements fine-grained, multi-threaded processing for graph construction and traversal. It is designed to exploit the many-core architecture of modern CPUs, making it suitable for single-node, high-memory HPC servers. Its efficiency scales with core count for a given sample.

Cell Ranger (10x Genomics)

Cell Ranger is 10x Genomics' integrated suite for analyzing single-cell immune profiling data from the 10x Genomics Chromium platform.

- Core Algorithm: A complex pipeline including barcode processing, read alignment (using a modified STAR aligner), UMI counting, and V(D)J assembly.

- Parallelization Model: Uses a coarse-grained, multi-stage pipeline where computationally heavy stages (like alignment) are internally multi-threaded. For HPC, it is deployed via the mkfastq, count, and vdj subcommands, each capable of leveraging multiple cores on a single node. Large-scale studies are parallelized at the sample level using job arrays or workflow managers.

Quantitative Performance Comparison on HPC

The following table summarizes key performance and deployment characteristics based on recent benchmarks and documentation.

Table 1: Parallel-Aware Immunology Tool Comparison for HPC Deployment

| Feature | MiXCR | VDJer | Cell Ranger |

|---|---|---|---|

| Primary Language | Java | C++ | Rust / Python |

| Parallel Paradigm | Multi-threaded, Sample-level array jobs | Fine-grained multi-threading | Stage-internal multi-threading, Sample-level array jobs |

| Optimal HPC Deployment | 8-16 cores/node, High Memory | 16-32 cores/node, Very High Memory | 16-32 cores/node, Very High Memory (>64GB RAM) |

| Typical Runtime (Human PBMC, 10^5 cells) | ~2-4 hours (multi-threaded) | ~1-3 hours (multi-threaded) | ~6-10 hours (count + vdj) |

| Key Scaling Factor | Number of reads/sample | Core count per sample | Core count per sample, RAM |

| License | Free for academic/non-commercial use; commercial license required | Open Source (GPLv3) | Proprietary; free to download from 10x Genomics |

| Primary Output | Clonotype tables, alignments | Assembled V(D)J sequences | Filtered contigs, clonotype tables, Seurat-compatible matrices |

Experimental Protocol: A Standardized HPC Repertoire Analysis Workflow

This protocol outlines a typical parallelized workflow for TCR repertoire analysis from bulk RNA-Seq data.

Title: High-Throughput TCR Repertoire Profiling on an HPC Cluster

1. Experimental Design & Data Acquisition:

- Obtain bulk RNA-Seq FASTQ files (paired-end) from T-cell populations of interest.

- Ensure sequencing includes reads spanning the CDR3 region.

2. HPC Environment Setup:

- Load required modules: Java JDK >=11 (for MiXCR), GCC (for VDJer), Python.

- Install or load the chosen software (MiXCR/VDJer).

- Download necessary reference databases (IMGT, etc.).

3. Parallelized Data Processing with MiXCR (Example):

4. Post-Processing & Clonotype Merging:

- Use mixcr exportClones to generate clonotype frequency tables for each sample.

- Aggregate results from all array jobs for downstream comparative analysis (diversity, visualization).

5. Downstream Analysis:

- Use R packages (immunarch, tcR) for repertoire diversity analysis, tracking, and visualization.
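Several common diversity summaries are simple functions of clone counts. The sketch below uses these definitions:

- Shannon entropy: H = -Σ p_i ln p_i.
- Simpson index: D = Σ p_i², the probability that two reads drawn with replacement come from the same clone.
- Clonality: 1 - H / ln(n) for n observed clones.

R packages such as immunarch implement these and other estimators, possibly with different conventions (log base, bias corrections). Treat this as a sketch of the formulas, not a reimplementation of any package.

```python
# Minimal sketch of common repertoire diversity summaries from clone counts.
import math


def _freqs(counts: list[int]) -> list[float]:
    total = sum(counts)
    if total <= 0:
        raise ValueError("empty repertoire")
    return [c / total for c in counts if c > 0]


def shannon(counts: list[int]) -> float:
    """Shannon entropy (natural log)."""
    return -sum(p * math.log(p) for p in _freqs(counts))


def simpson(counts: list[int]) -> float:
    """Probability that two reads drawn with replacement belong to the same clone."""
    return sum(p * p for p in _freqs(counts))


def clonality(counts: list[int]) -> float:
    """0 for a perfectly even repertoire; 1 for a single (monoclonal) clone."""
    n = len(_freqs(counts))
    return 1.0 if n == 1 else 1 - shannon(counts) / math.log(n)
```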

Workflow & System Architecture Visualizations

Diagram Title: HPC Parallelization Strategy for Repertoire Analysis

Diagram Title: Core Computational Pipeline for V(D)J Analysis

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents and Materials for NGS-Based Immunology Studies

| Item | Function in Experimental Protocol | Example/Note |

|---|---|---|

| 10x Genomics Chromium Controller & Kits | Enables high-throughput single-cell partitioning, barcoding, and library prep for immune profiling. | Chromium Next GEM Single Cell 5' Kit v2, or the newer GEM-X Single Cell 5' Kit v3 |

| IMGT/GENE-DB Reference Database | Gold-standard curated database of immunoglobulin and T-cell receptor gene alleles. Critical for accurate V(D)J gene assignment. | Freely available for academic research. |

| Spike-in RNA Controls | Used to monitor technical variability and sensitivity during sequencing library preparation. | External RNA Controls Consortium (ERCC) spikes. |

| UMI (Unique Molecular Identifier) Oligos | Short random nucleotide sequences added to each transcript during library prep to enable accurate digital counting and PCR duplicate removal. | Integral part of modern single-cell and bulk immune repertoire kits. |

| Cell Hashing Antibodies (TotalSeq) | Antibody-oligo conjugates that allow sample multiplexing by tagging cells from different samples with unique barcodes prior to pooling. | Enables cost reduction and batch effect minimization. |

| PhiX Control Library | Sequenced alongside immune libraries to provide an internal control for cluster density, sequencing quality, and phasing/prephasing calculations on Illumina platforms. | Standard for Illumina run quality monitoring. |

The analysis of Adaptive Immune Receptor Repertoire Sequencing (AIRR-Seq) data is computationally intensive, requiring the processing of millions of nucleotide sequences to characterize B-cell and T-cell receptor diversity. This process aligns with the broader thesis that High-Performance Computing (HPC) parallelization is not merely beneficial but essential for advancing Next-Generation Sequencing (NGS) immunology research and accelerating therapeutic discovery. HPC clusters, managed by workload managers like SLURM and PBS, enable the scalable execution of pipelines, transforming raw sequencing data into biologically interpretable repertoires.

Core AIRR-Seq Analysis Workflow and Parallelization Strategy

A standard AIRR-Seq pipeline involves discrete, computationally heavy steps that are inherently parallelizable at the sample level and, within certain steps, at the data level.

Diagram Title: Core AIRR-Seq Computational Pipeline

The table below outlines the parallelization potential and typical resource requirements for each stage, based on current tool benchmarks.

Table 1: Computational Profile of Key AIRR-Seq Pipeline Steps

| Pipeline Step | Example Tool(s) | Parallelization Level | Key Resource Demand | Estimated Runtime per 10^7 Reads* |

|---|---|---|---|---|

| QC & Trimming | fastp, Trimmomatic | Per-sample, multi-threaded | CPU, I/O | 15-30 minutes |

| VDJ Assembly | mixcr, igblast, pRESTO | Per-sample, multi-threaded | High CPU, Memory | 1-3 hours |

| Gene Annotation | mixcr, Change-O | Per-sample, single/multi-threaded | CPU, Memory | 30-60 minutes |

| Clonotyping & Quantification | mixcr, Immunarch | Per-sample, single-threaded | Memory, I/O | 15-30 minutes |

| Repertoire Analysis & Visualization | Immunarch, scRepertoire | Per-project, single-threaded (R/Python) | Memory, Graphics | Variable |

*Runtime estimates are for a single sample on a node with 8-16 CPU cores and 32-64 GB RAM. Actual time varies with data quality and tool parameters.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents and Software for AIRR-Seq Experiments

| Item | Function in AIRR-Seq Research | Example Product/Kit |

|---|---|---|

| 5' RACE Primer | Amplifies the highly variable V region from mRNA templates for library prep. | SMARTer Human TCR a/b Profiling Kit; SMARTer Human BCR IgG IgM H/K/L Profiling Kit (Takara Bio) |

| Unique Molecular Identifiers (UMIs) | Short random nucleotide sequences attached to each cDNA molecule to correct for PCR amplification bias and errors. | NEBNext Immune Sequencing Kit |

| PhiX Control | Spiked into sequencing runs for error rate calibration and cluster density estimation on Illumina platforms. | Illumina PhiX Control v3 |

| pRESTO Toolkit | A suite of Python utilities for processing raw paired-end sequencing reads, handling UMIs, and error correction. | pRESTO (github.com/kleinstein/presto) |

| MiXCR | A comprehensive, aligner-based software for one-stop VDJ analysis from raw reads to clonotype tables. | MiXCR (https://mixcr.readthedocs.io) |

| Immcantation Portal | A containerized framework (Docker/Singularity) providing a standardized pipeline from raw reads to population-level analysis. | Immcantation (immcantation.org) |

Experimental Protocol: A Typical AIRR-Seq Data Generation Workflow

Methodology: Library Preparation and Sequencing for B-Cell Receptor Repertoire

- Sample Input: Isolate total RNA or mRNA from human PBMCs or sorted B-cell populations.

- cDNA Synthesis & 5' RACE: Use a template-switching reverse transcriptase with a 5' RACE (Rapid Amplification of cDNA Ends) approach. This ensures capture of the complete V(D)J region from the mRNA's 5' end.

- UMI Integration: Incorporate Unique Molecular Identifiers (UMIs) during the initial cDNA synthesis or first-round PCR. This is critical for accurate quantification and error correction.

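- Primary PCR: Amplify the V(D)J region using a forward primer complementary to the template-switching adapter added during 5' RACE and a reverse primer specific to the constant region (e.g., IgH Cγ, Cμ). Protocols without 5' RACE instead use a multiplex of forward primers specific to the leader/framework 1 region of each V gene family.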

- Secondary PCR (Indexing): Add Illumina sequencing adapters and sample-specific dual indices via a second, short PCR of only a few cycles.

- QC & Pooling: Quantify libraries via qPCR or Bioanalyzer, then pool equimolar amounts.

- Sequencing: Run on an Illumina MiSeq, HiSeq, or NovaSeq platform using paired-end 2x300 bp or 2x150 bp chemistry to ensure full coverage of CDR3 regions.

HPC Job Submission: SLURM and PBS Script Examples

The following scripts demonstrate how to deploy the MiXCR analysis pipeline on an HPC cluster, parallelizing at the sample level.

SLURM Job Script Example (Single Sample)

This script is designed to be submitted once per sample (e.g., via a job array).

PBS Pro/Torque Job Script Example (Multi-Sample Parallel Wrapper)

This script uses a PBS job array to process multiple samples in parallel.
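The main difference between the SLURM and PBS variants is how the same request is spelled. The hypothetical generator below writes only the scheduler header of a per-sample array job. Resource values come from the caller (e.g., a row of Table 3), and the pipeline body, such as the MiXCR call, is deliberately left out. SLURM exposes the task index as $SLURM_ARRAY_TASK_ID and PBS Pro uses $PBS_ARRAY_INDEX. Torque differs: it uses -t and $PBS_ARRAYID.

```python
# Hypothetical generator for the scheduler header of a per-sample array job in SLURM or
# PBS Pro syntax. samples.csv is assumed to have a header row.
def array_header(scheduler: str, n_samples: int, cores: int, mem_gb: int,
                 walltime: str, name: str = "airr") -> str:
    if scheduler == "slurm":
        lines = ["#!/bin/bash",
                 f"#SBATCH --job-name={name}",
                 f"#SBATCH --array=1-{n_samples}",
                 f"#SBATCH --cpus-per-task={cores}",
                 f"#SBATCH --mem={mem_gb}G",
                 f"#SBATCH --time={walltime}",
                 'IDX="$SLURM_ARRAY_TASK_ID"']
    elif scheduler == "pbspro":
        lines = ["#!/bin/bash",
                 f"#PBS -N {name}",
                 f"#PBS -J 1-{n_samples}",
                 f"#PBS -l select=1:ncpus={cores}:mem={mem_gb}gb",
                 f"#PBS -l walltime={walltime}",
                 'cd "$PBS_O_WORKDIR"',  # PBS starts jobs in $HOME by default
                 'IDX="$PBS_ARRAY_INDEX"']
    else:
        raise ValueError(f"unsupported scheduler: {scheduler!r}")
    # header row in samples.csv, so array index i maps to file line i + 1
    lines.append('SAMPLE=$(sed -n "$((IDX + 1))p" samples.csv | cut -d, -f1)')
    return "\n".join(lines) + "\n"
```

For example, `array_header("slurm", 10, 8, 32, "05:00:00")` produces a header matching the pilot-study row of Table 3. The per-sample commands are then appended below it.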

Master Submission Script for a Full Project

A bash script to coordinate submission of multiple jobs or a job array.
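The coordination logic can be sketched in Python for SLURM. One array job covers all samples, and an aggregation job starts only after every array task exits successfully (`--dependency=afterok` on the array's job ID). The names `run_sample.sbatch` and `aggregate.sbatch` are hypothetical. The `%20` suffix caps how many array tasks run at once, and `--parsable` makes sbatch print only the job ID (optionally followed by `;cluster`).

```python
# Sketch of a master submission step for SLURM: array job for all samples, then a
# dependent aggregation job. Script names are hypothetical.
import shutil
import subprocess


def array_cmd(n_samples: int, max_concurrent: int = 20) -> list[str]:
    return ["sbatch", "--parsable", f"--array=1-{n_samples}%{max_concurrent}", "run_sample.sbatch"]


def aggregate_cmd(array_job_id: str) -> list[str]:
    return ["sbatch", "--parsable", f"--dependency=afterok:{array_job_id}", "aggregate.sbatch"]


def submit(cmd: list[str]) -> str:
    """Run sbatch and return the job ID (--parsable prints 'jobid' or 'jobid;cluster')."""
    out = subprocess.run(cmd, check=True, capture_output=True, text=True).stdout
    return out.strip().split(";")[0]


if __name__ == "__main__":
    if shutil.which("sbatch") is None:
        print("dry run:", " ".join(array_cmd(10)))  # no scheduler on this machine
    else:
        array_id = submit(array_cmd(10))
        print("array:", array_id, "aggregate:", submit(aggregate_cmd(array_id)))
```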

Resource Management and Optimization Strategies

Effective HPC usage requires careful resource estimation. The table below provides a guideline for requesting cluster resources based on common AIRR-Seq project scales.

Table 3: HPC Resource Allocation Guidelines for AIRR-Seq Projects

| Project Scale | Sample Count | Approx. Total Reads | Recommended Partition/Queue | Memory per Job | Cores per Job | Walltime (per sample) | Storage Estimate (Raw+Processed) |

|---|---|---|---|---|---|---|---|

| Pilot Study | 5-10 | 50-100 million | standard, short | 32 GB | 8 | 3-5 hours | 50-100 GB |

| Mid-Size Study | 50-100 | 0.5-1 billion | standard, highmem | 64 GB | 12-16 | 4-6 hours | 0.5-1 TB |

| Large Cohort | 500+ | 5+ billion | bigmem, long | 128 GB+ | 16-24 | 6-8 hours | 5-10 TB+ |

Diagram Title: HPC Resource Request Decision Flow

The integration of robust, parallelized AIRR-Seq analysis pipelines within SLURM and PBS HPC environments is a cornerstone of modern computational immunology. The step-by-step job submission frameworks presented here directly support the thesis that systematic HPC utilization is fundamental to extracting reproducible, high-fidelity insights from complex NGS immune repertoire data. This approach enables researchers and drug development professionals to scale analyses from pilot studies to large clinical cohorts, ultimately accelerating the discovery of biomarkers, therapeutic antibodies, and vaccine candidates.

High-Performance Computing (HPC) is revolutionizing Next-Generation Sequencing (NGS) immunology research, enabling the analysis of complex repertoires in autoimmune diseases, cancer immunotherapy, and vaccine development. The core challenge lies in the efficient management of massive NGS datasets—primarily FASTQ (raw reads) and BAM (aligned reads) files—which can scale to petabytes in population-scale studies. This whitepaper, framed within a broader thesis on HPC parallelization for NGS immunology, details strategies for leveraging parallel file systems like Lustre and IBM Spectrum Scale (GPFS) to overcome I/O bottlenecks, accelerate preprocessing, and facilitate scalable genomic analysis.

Parallel file systems distribute data across multiple storage nodes and network paths, providing the high aggregate bandwidth necessary for concurrent access by thousands of compute cores.

Key Characteristics for NGS Data:

- Lustre: A widely adopted, open-source file system in HPC. It separates metadata (managed by Metadata Servers - MDS) from object data (stored on Object Storage Servers - OSS). Lustre excels with large, sequential reads/writes typical of genomic files.

- IBM Spectrum Scale (GPFS): A high-performance, clustered file system known for strong data consistency and advanced policy-based storage management. It is robust for mixed workloads involving both large files and frequent metadata operations.

Quantitative Comparison of Parallel File Systems:

Table 1: Comparison of Lustre and GPFS for Genomic Workloads

| Feature | Lustre | IBM Spectrum Scale (GPFS) |

|---|---|---|

| Architecture | Object-based, decoupled metadata & data | Block-based, shared-disk cluster |

| Strength for FASTQ/BAM | Excellent for large, sequential I/O patterns | Strong for mixed workloads & complex metadata |

| Typical Max Bandwidth | 100s of GB/s to >1 TB/s | 100s of GB/s, exceeding 1 TB/s in the largest deployments |

| Metadata Performance | Can become a bottleneck with many small files | Generally higher metadata performance |

| Data Striping | Configurable stripe count & size across OSS | Block-level allocation across servers |

| Best Use Case | Large-scale, monolithic file processing | Environments requiring strong consistency & tiering |

Optimized Strategies for FASTQ and BAM File Handling

File System Configuration and Layout

Lustre Striping for Large Files: For individual large FASTQ or BAM files, aggressive striping distributes chunks across many OSTs (Object Storage Targets), served by multiple OSSes.

- Protocol: lfs setstripe -c -1 -S 64m /path/to/directory sets the default layout for new files created in that directory: each file is striped across all available OSTs with a 64 MB stripe size, maximizing read/write parallelism. Existing files keep their original layout.

- Consideration: Avoid excessive striping for files < 1 GB due to overhead.
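How striping spreads a read can be reasoned about with Lustre's default RAID-0 layout. With stripe size S and stripe count C, byte offset x falls in stripe x // S, which is stored on entry (x // S) mod C of the file's OST list. The sketch below is for back-of-the-envelope reasoning only. Use `lfs getstripe` to see a file's actual layout.

```python
# Sketch of Lustre's default RAID-0 layout: which entries of a file's OST list hold a byte
# range, given stripe size and stripe count.
MiB = 1 << 20


def ost_slot(offset: int, stripe_size: int, stripe_count: int) -> int:
    return (offset // stripe_size) % stripe_count


def osts_touched(start: int, length: int, stripe_size: int, stripe_count: int) -> set[int]:
    """Slots in the file's OST list that hold bytes [start, start + length)."""
    if length <= 0:
        return set()
    first, last = start // stripe_size, (start + length - 1) // stripe_size
    return {s % stripe_count for s in range(first, last + 1)}
```

For example, a 256 MiB read with 64 MiB stripes over 8 OSTs touches only 4 of them. A 1 GiB read reaches all 8, which is where wide striping pays off.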

GPFS Block Allocation & Policy Management: Use GPFS storage policies to place active project data on high-performance tiers (SSD) and archive data on cheaper tiers.

Directory Structure: Organize projects to avoid placing millions of files in a single directory. Use a hashed or project/sample-based directory tree to distribute metadata load.
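A hashed directory tree can be as simple as the hypothetical helper below. A short, stable hash of the sample ID picks two levels of subdirectories, spreading files over up to 256 × 256 directories instead of one. `hashlib` is used instead of Python's built-in `hash()` because the latter is salted per process and would not give stable paths.

```python
# Hypothetical helper for a hashed, sample-based directory tree.
import hashlib
from pathlib import Path


def hashed_path(root: Path, sample_id: str, filename: str) -> Path:
    digest = hashlib.sha256(sample_id.encode()).hexdigest()
    return root / digest[:2] / digest[2:4] / sample_id / filename
```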

Parallel I/O Patterns and Tools

Embarrassingly Parallel Preprocessing: Tools like fastp, BBDuk, or Trimmomatic can be run in parallel on many samples using job arrays (SLURM, PBS). Each task must read/write to independent files to avoid contention.

Parallelized Alignment & Processing: Use tools designed for parallel I/O:

- BWA-MEM: Run bwa mem with one thread per process but launch many concurrent processes on different sample chunks.

- SAMtools/htslib: htslib supports multithreaded BGZF compression and decompression when reading and writing BAMs (the samtools -@ flag sets the number of additional threads).

- sambamba: A faster, parallel alternative to SAMtools for multi-threaded BAM operations.

- GATK4: Provides Spark-enabled versions of selected tools (e.g., MarkDuplicatesSpark) for distributed processing across clusters, which can exploit the aggregate bandwidth of parallel file systems.

Experimental Protocol for Benchmarking Parallel I/O:

- Objective: Measure read/write throughput for a BAM sorting workflow under different stripe counts.

- Setup: Create a 100GB BAM file on a Lustre file system. Configure test directories with stripe counts of 1, 4, 16, and -1 (all OSTs), and copy the BAM into each directory so that it inherits that directory's layout.

- Method: Use sambamba sort -t <threads> with 32 threads. Clear the page cache between runs. Measure wall-clock time using /usr/bin/time -v.

- Metrics: Record elapsed time, CPU time, and I/O throughput (from Lustre client stats or iostat).

- Analysis: Plot time vs. stripe count to identify the optimum for the given file size and cluster configuration.
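A small harness keeps the benchmark runs consistent. It runs the same command several times, reports the median wall time, and converts it to apparent throughput (input bytes / seconds). Dropping the page cache usually needs root and is site-specific, so it is left as an optional `before_each` hook. This complements rather than replaces `/usr/bin/time -v`, which also reports CPU time and peak memory.

```python
# Sketch of a timing harness for the stripe-count benchmark.
import statistics
import subprocess
import sys
import time
from typing import Callable, Optional, Sequence


def time_command(cmd: Sequence[str], repeats: int = 3,
                 before_each: Optional[Callable[[], None]] = None) -> float:
    """Median wall-clock seconds over `repeats` runs of cmd."""
    runs = []
    for _ in range(repeats):
        if before_each is not None:
            before_each()  # e.g., a site-approved cache-drop step
        t0 = time.perf_counter()
        subprocess.run(cmd, check=True)
        runs.append(time.perf_counter() - t0)
    return statistics.median(runs)


def throughput_mb_s(n_bytes: int, seconds: float) -> float:
    return n_bytes / seconds / 1e6


if __name__ == "__main__":
    # Harmless self-test; on the cluster, cmd would be the sort call for one stripe directory.
    print(f"{time_command([sys.executable, '-c', 'pass'], repeats=2):.3f} s")
```

On the cluster, each stripe directory would get a call such as `time_command(["sambamba", "sort", "-t", "32", "-o", "stripe_16/sorted.bam", "stripe_16/input.bam"])`. This assumes sambamba's `-t` (threads) and `-o` (output file) options.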

Managing Metadata Performance

High metadata operations (e.g., ls, find, opening/closing millions of small files) can cripple performance.

Solutions:

- Archive Small Files: Use tar or HDF5 containers to bundle small FASTQ or intermediate files.

- Use a Database: Store file metadata and paths in a SQL/NoSQL database instead of relying on filesystem stat operations.

- Lustre Specific: Use lfs find instead of GNU find. Consider a dedicated Metadata Target (MDT) for project directories with huge file counts.
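The "use a database" idea can be sketched with Python's built-in sqlite3. The project tree is walked once with `os.scandir` in a single metadata pass, and path, size, and mtime are recorded. Later questions are then answered with SQL instead of repeated ls/find/stat calls against the parallel file system. The table and column names are illustrative, and the index must be refreshed when files change.

```python
# Sketch: one metadata pass over a project tree into SQLite, then query with SQL.
import os
import sqlite3


def index_tree(root: str, db_path: str = ":memory:") -> sqlite3.Connection:
    con = sqlite3.connect(db_path)
    con.execute("CREATE TABLE IF NOT EXISTS files (path TEXT PRIMARY KEY, size INTEGER, mtime REAL)")
    stack = [root]
    while stack:  # iterative walk; scandir reuses directory-entry data where possible
        with os.scandir(stack.pop()) as entries:
            for entry in entries:
                if entry.is_dir(follow_symlinks=False):
                    stack.append(entry.path)
                elif entry.is_file(follow_symlinks=False):
                    st = entry.stat(follow_symlinks=False)
                    con.execute("INSERT OR REPLACE INTO files VALUES (?, ?, ?)",
                                (entry.path, st.st_size, st.st_mtime))
    con.commit()
    return con
```

A query such as `SELECT COUNT(*), SUM(size) FROM files WHERE path LIKE '%.fastq.gz'` then costs nothing on the metadata servers.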

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Software and Library Tools for Parallel NGS Data Management

| Tool/Reagent | Category | Primary Function in Parallel Workflow |

|---|---|---|

| htslib (SAMtools) | Core Library | Provides parallelized read/write routines for BAM/CRAM formats; foundational for most tools. |

| sambamba | Processing Tool | Drop-in parallel replacement for SAMtools sort, markdup, and filter; optimized for multi-core. |

| GNU Parallel | Workflow Manager | Simplifies running thousands of jobs concurrently across samples or file chunks. |

| SLURM/PBS Pro | Job Scheduler | Manages resource allocation and job arrays for massive embarrassingly parallel tasks. |

| MPI-IO (via h5py/mpi4py) | I/O Library | Enables single shared-file parallel I/O patterns for advanced custom analysis. |

| IOzone/FIO | Benchmarking Tool | Measures filesystem performance under different access patterns to guide optimization. |

| Spectrum Scale ILM policy engine (mmapplypolicy) | GPFS Utility | Applies information lifecycle management rules for data tiering and placement, keeping hot genomic data on fast storage. |

| Lustre Monitoring Tool (LMT) | Monitoring | Tracks Lustre filesystem health and performance metrics to identify bottlenecks. |

Visualizing the Parallel NGS Data Workflow

Diagram 1: Parallel NGS Data Management on Lustre/GPFS

Diagram 2: Lustre Parallel I/O for Multi-threaded BAM Sorting

Solving Scalability Issues: Expert Strategies for HPC Performance Tuning in NGS Immunology

In the pursuit of accelerating Next-Generation Sequencing (NGS) immunology data research, High-Performance Computing (HPC) parallelization is paramount. A key challenge in this domain is the efficient analysis of vast datasets from technologies like single-cell RNA sequencing and T-cell receptor repertoire profiling. The core thesis posits that systematic identification and resolution of performance bottlenecks—Input/Output (I/O), Memory, and Central Processing Unit (CPU)—through targeted profiling is critical for scaling complex immunogenomic workflows. This guide provides an in-depth methodology for leveraging modern HPC monitoring tools to diagnose these bottlenecks, thereby optimizing pipeline throughput and enabling faster insights into immune responses and therapeutic targets.

The Bottleneck Triad in NGS Immunology HPC Workflows

NGS immunology pipelines (e.g., for AIRR-seq or bulk/single-cell immune profiling) impose unique demands on HPC clusters.

| Bottleneck Type | Typical Manifestation in NGS Immunology | Impact on Research Pace |

|---|---|---|

| I/O | Concurrent reading/writing of millions of short reads (FASTQ), intermediate alignment files (SAM/BAM), and large annotation databases (e.g., IMGT). Network filesystem latency. | Idle CPUs waiting for data, drastically slowing alignment (Cell Ranger, STAR) and assembly steps. |

| Memory | In-memory processing of large reference genomes, holding hash tables for aligners, and loading massive cell-by-gene matrices for clustering. | Job failures (OOM - Out Of Memory), excessive swapping, limiting concurrent job execution per node. |

| CPU | Multi-threaded computations in read alignment, variant calling, and clonal abundance estimation. Load imbalance between threads. | Sublinear scaling with added cores, prolonged time-to-result for urgent translational research questions. |

Essential HPC Monitoring Tools & Metrics

A curated toolkit is required for comprehensive profiling.

| Tool Category | Specific Tools (2024-2025) | Primary Metric Focus | Best For NGS Step |

|---|---|---|---|

| Cluster-Wide Resource Managers | Slurm, PBS Pro, Kubernetes with HPC extensions | Job queue wait times, aggregate cluster utilization | Workflow submission & scheduling |

| Node-Level Performance Profilers | Intel VTune Profiler, AMD uProf, perf (Linux) | CPU instruction retirement, cache misses, core utilization | Alignment, variant calling (CPU-intensive) |

| Memory Usage Trackers | valgrind/massif, jemalloc heap profiler, sar -R | Heap allocation, memory bandwidth, swap usage | De novo assembly, large matrix operations |

| I/O Performance Analysers | Darshan, iostat, Lustre monitoring (lfs) | Read/write throughput, metadata operations, IOPS | Reading FASTQ, writing BAM, database queries |

| Parallel Performance Analysers | Scalasca, TAU, HPCToolkit | MPI/OpenMP communication overhead, load imbalance | Parallelized genome assembly, population-scale analysis |

| Integrated Dashboards | Grafana + Prometheus (with HPC exporters), NetData | Real-time visual correlation of I/O, Mem, CPU | Holistic pipeline monitoring and alerting |

Experimental Protocols for Bottleneck Diagnosis

Follow these structured protocols to isolate bottlenecks.

Protocol 4.1: I/O Bottleneck Characterization for Alignment Workflows

Objective: Quantify filesystem latency's impact on a STAR or Cell Ranger alignment job.

- Instrumentation: Preload the Darshan runtime library (LD_PRELOAD). Because STAR and Cell Ranger are not MPI programs, non-MPI instrumentation must also be enabled (DARSHAN_ENABLE_NONMPI=1 in recent Darshan releases).

- Job Execution: Run a representative alignment job on a dedicated node, targeting a Lustre/GPFS filesystem.

- Data Collection:

  - Use darshan-parser to generate a summary of I/O operations, bytes transferred, and access patterns.

  - Concurrently, use iostat -x 5 on the compute and storage nodes to monitor await time and utilization.

- Analysis: Identify whether the job is read-bound (reference genome, FASTQ) or write-bound (BAM output). High await times indicate storage contention.
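Darshan's counters can be reduced to the read-bound versus write-bound question with a few lines of Python. The sketch assumes the tab-separated record layout that darshan-parser prints (module, rank, record id, counter, value, file name, mount point, fs type) and skips `#` header lines. Check it against your Darshan version's output before relying on it. The POSIX time counters show where I/O time went, which is closer to "bound" than bytes alone.

```python
# Sketch: total POSIX bytes and I/O time from darshan-parser text output.
from collections import defaultdict
from typing import Iterable

COUNTERS = ("POSIX_BYTES_READ", "POSIX_BYTES_WRITTEN",
            "POSIX_F_READ_TIME", "POSIX_F_WRITE_TIME", "POSIX_F_META_TIME")


def summarize(lines: Iterable[str]) -> dict[str, float]:
    totals: dict[str, float] = defaultdict(float)
    for line in lines:
        if not line.strip() or line.startswith("#"):
            continue
        fields = line.rstrip("\n").split("\t")
        if len(fields) >= 5 and fields[0] == "POSIX" and fields[3] in COUNTERS:
            totals[fields[3]] += float(fields[4])
    return {name: totals[name] for name in COUNTERS}


def time_fractions(summary: dict[str, float]) -> dict[str, float]:
    """Share of POSIX I/O time spent reading, writing, and on metadata."""
    parts = {kind: summary[f"POSIX_F_{kind.upper()}_TIME"] for kind in ("read", "write", "meta")}
    total = sum(parts.values()) or 1.0  # avoid division by zero when no I/O was recorded
    return {kind: t / total for kind, t in parts.items()}
```

Typical use: `darshan-parser job.darshan > job.txt`, then `time_fractions(summarize(open("job.txt")))`. A read fraction near 1 points to the read-bound case.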

Protocol 4.2: Memory Footprint Analysis for Clustering Algorithms

Objective: Profile memory consumption of a single-cell clustering tool (e.g., Scanpy, Seurat).

- Setup: Configure a job using cgroups to limit memory and trigger graceful OOM logging.

- Profiling: Launch the analysis with valgrind --tool=massif.

- Execution: Process a dataset with 100,000+ cells and 20,000+ genes.

- Post-processing: Use ms_print on the generated massif.out.<pid> file to visualize heap usage over time. Identify peak allocation points and the functions responsible.

Protocol 4.3: CPU Parallel Scaling Efficiency Test

Objective: Measure the strong scaling efficiency of an immune repertoire diversity calculation (e.g., on repertoires simulated with immuneSIM).

- Baseline: Run the computation on a single core and record the wall-clock time (T1).

- Scaled Runs: Repeat with N = 2, 4, 8, 16, and 32 cores on the same node, keeping the total problem size constant, and record each wall-clock time (Tn).

- Data Collection: Use perf stat -e cycles,instructions,cache-misses for each run.

- Calculation: Compute parallel efficiency as E = (T1 / (N × Tn)) × 100%. Use HPCToolkit to identify code regions that scale poorly.
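The measurements from Protocol 4.3 can be summarized with three standard quantities:

- Speedup: S = T1 / Tn.
- Efficiency: E = T1 / (N × Tn).
- Karp-Flatt experimentally determined serial fraction: e = (1/S - 1/N) / (1 - 1/N).

If e grows with N, overheads such as synchronization or load imbalance are limiting scaling, rather than a fixed serial section.

```python
# Worked example of strong-scaling metrics: speedup, efficiency, Karp-Flatt serial fraction.
def scaling_report(t1: float, timings: dict[int, float]) -> dict[int, dict[str, float]]:
    report = {}
    for n, tn in sorted(timings.items()):
        s = t1 / tn
        report[n] = {
            "speedup": s,
            "efficiency_pct": 100 * s / n,
            "serial_fraction": (1 / s - 1 / n) / (1 - 1 / n) if n > 1 else 0.0,
        }
    return report
```

For example, T1 = 100 s and T4 = 40 s give S = 2.5, E = 62.5%, and a serial fraction of 0.2.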

Visualizing Data Flow and Bottlenecks

Diagram 1: Data Flow & Bottleneck Points in NGS Alignment

Diagram 2: HPC Bottleneck Diagnosis & Optimization Workflow

The Scientist's Toolkit: Research Reagent Solutions

Essential software and hardware "reagents" for performance experiments.

| Item | Category | Function in Bottleneck Diagnosis |

|---|---|---|

| Darshan 3.4.0+ | Software Profiler | Lightweight, low-overhead I/O characterization tool for understanding data access patterns. |

| Intel VTune Profiler 2024 | Software Profiler | Deep CPU and memory hierarchy analysis, including GPU offload analysis for accelerated pipelines. |

| Slurm with sacct & seff | Resource Manager | Provides job efficiency reports (CPU and memory use vs. requested) after execution. |

| Grafana + Prometheus Stack | Dashboard | Enables real-time visualization of cluster-wide metrics (node heatmaps, Lustre throughput). |

| Lustre Filesystem | Hardware/Storage | Parallel distributed filesystem; its health and striping configuration are critical for I/O performance. |

| Compute Node with NVMe SSD | Hardware/Compute | Provides local high-speed "burst buffer" storage to alleviate shared filesystem I/O pressure. |

| High Memory Node (e.g., 1TB+ RAM) | Hardware/Compute | Allows memory-intensive tasks (large reference assembly) to proceed without swapping. |

| InfiniBand HDR Interconnect | Hardware/Network | Low-latency, high-bandwidth network crucial for MPI-based parallel genomics tools and storage access. |

For researchers parallelizing NGS immunology workflows, systematic bottleneck diagnosis is not an optional systems task but a core research accelerator. By methodically applying the protocols and tools outlined above (profiling I/O with iostat and Darshan, memory with massif, and CPU with VTune and perf), teams can turn opaque performance limitations into actionable engineering insights. This approach helps ensure that HPC resources are fully used, shortening the cycle from raw sequencing data to immunological discovery and therapeutic candidate identification. Combining structured profiling dashboards with a well-chosen toolkit provides a practical path to robust, scalable computational immunogenomics.

The analysis of immune repertoire sequencing (Rep-Seq) data presents a quintessential High-Performance Computing (HPC) challenge characterized by highly irregular and data-dependent workloads. This technical guide details parallelization strategies within the context of NGS immunology research, focusing on dynamic load balancing to optimize pipeline efficiency for diversity metrics, clonal tracking, and lineage reconstruction.

Immune repertoire data, generated via bulk or single-cell sequencing of B-cell or T-cell receptors, exhibits extreme heterogeneity. Key computational steps—sequence annotation, clonotype clustering, and phylogenetic tree construction—have execution times that vary dramatically per input sequence, leading to severe load imbalance in naive parallel implementations. Efficient parallelization is critical for scaling to cohorts of thousands of samples, a necessity in vaccine and therapeutic antibody development.

Workload Characterization and Bottleneck Analysis

The irregularity stems from the data itself. For example, clonotype clustering with tools such as Change-O or SCOPer involves pairwise (up to all-vs-all) sequence comparisons within samples, and cluster sizes follow a heavy-tailed distribution.

Table 1: Workload Variability in Key Rep-Seq Pipeline Stages

| Pipeline Stage | Primary Tool(s) | Key Load Determinant | Typical Time Range per 10^5 Reads | Parallelization Granularity |

|---|---|---|---|---|

| Raw Read Quality Control | FastQC, Trimmomatic | Read Length | 2-5 minutes | Embarrassingly parallel (by file) |

| V(D)J Alignment & Assembly | MiXCR, IMGT/HighV-QUEST | Sequence diversity, error rate | 10-60 minutes | Fine-grained (by read batch) |

| Clonotype Clustering | CD-HIT, VSEARCH | Cluster density & size distribution | 5-120 minutes | Highly Irregular (per cluster group) |

| Diversity Metric Calculation (Shannon, Chao1) | scRepertoire, vegan | Number of unique clonotypes | 1-30 minutes | Moderate (by sample) |

| Lineage Tree Construction | IgPhyML, dnaml | Clonal family size, tree depth | 30 minutes - 10+ hours | Highly Irregular (per clonal family) |

Load Balancing Strategies: A Technical Deep Dive

Static Pre-Partitioning with Cost Heuristics

For predictable irregularity, pre-partitioning based on cost estimators can be effective.

- Methodology: Prior to the main computation, perform a lightweight profiling run (e.g., using a subset of data or k-mer based complexity score) to estimate workload per unit. Use this to bin tasks for each processor.

- Example Protocol: Before full clonotype clustering, perform dereplication and length-based grouping. Assign groups of unique sequences to MPI ranks so that the total estimated cost per rank is balanced, using the square of each group's size as its cost (approximating the O(n²) cost of pairwise comparisons).
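A minimal sketch of this cost-heuristic partitioning, assuming unique sequences are grouped by exact length and each group's cost is the square of its size. It uses the greedy longest-processing-time (LPT) rule (assign the most expensive remaining group to the currently least-loaded rank), a standard choice that the text does not prescribe; the function names are hypothetical. The resulting assignment could then be distributed to MPI ranks, for example with a scatter.

```python
"""Sketch: static pre-partitioning of sequence groups by estimated O(n^2) cost."""
import heapq


def group_by_length(seqs: list[str]) -> dict[int, list[str]]:
    """Dereplicate (keeping first-seen order), then group unique sequences by length."""
    groups: dict[int, list[str]] = {}
    for s in dict.fromkeys(seqs):
        groups.setdefault(len(s), []).append(s)
    return groups


def partition_by_cost(groups: dict[int, list[str]], n_ranks: int):
    """Greedy LPT: the costliest remaining group goes to the least-loaded rank.

    Returns (assignment: rank -> list of group keys, loads: rank -> total cost).
    """
    heap = [(0, rank) for rank in range(n_ranks)]  # (current load, rank)
    heapq.heapify(heap)
    assignment: dict[int, list[int]] = {rank: [] for rank in range(n_ranks)}
    loads: dict[int, int] = {rank: 0 for rank in range(n_ranks)}
    # Most expensive first; ties broken by key so the result is deterministic.
    order = sorted(groups, key=lambda key: (-(len(groups[key]) ** 2), key))
    for key in order:
        load, rank = heapq.heappop(heap)
        cost = len(groups[key]) ** 2  # pairwise comparisons scale as n^2
        assignment[rank].append(key)
        loads[rank] = load + cost
        heapq.heappush(heap, (load + cost, rank))
    return assignment, loads


groups = group_by_length(["CASSLG", "CASSLG", "CASSPG", "CAST", "CASRQ"])
assignment, loads = partition_by_cost(groups, n_ranks=2)
print(assignment, loads)
```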

Dynamic Work Stealing & Queue-Based Pools

This is the most robust strategy for deeply irregular tasks like lineage tree building.

- Implementation: Use a master-worker model. The master maintains a queue of coarse-grained tasks (e.g., clonal families to process). Idle workers pull the next task from the shared queue; in work-stealing runtimes, idle workers instead take ("steal") queued tasks from busy workers' local queues. Both patterns can be implemented with OpenMP tasks, Intel TBB, or the Ray framework.

- Experimental Workflow:

  - Input: A list of clonal families (FASTA files of aligned sequences).

  - Master Process: Parses the list and populates a thread-safe priority queue (prioritized by family size, largest first).

  - Worker Processes (N instances): Each worker loops over these steps: a. Fetch a family from the queue. b. Execute multiple sequence alignment (MAFFT). c. Perform model selection (ModelTest-NG). d. Construct a maximum likelihood tree (IQ-TREE). e. Return results to the master.

  - Termination: When the queue is empty and all workers are idle.
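The master-worker loop above can be sketched with Python's standard library. In this sketch, a `queue.PriorityQueue` plays the thread-safe priority queue (largest family first), and each worker thread pulls tasks until the queue is empty. `build_tree` is a hypothetical placeholder for the MAFFT, ModelTest-NG, and IQ-TREE steps; because those steps would run as external programs (for example via `subprocess.run`), threads are sufficient here, whereas CPU-bound Python work would need processes or a framework such as Ray.

```python
"""Sketch: master-worker dynamic load balancing over clonal families."""
import queue
import threading
from typing import Callable


def run_master_worker(families: dict[str, list[str]], n_workers: int,
                      process_family: Callable[[str, list[str]], object]) -> dict[str, object]:
    """Process every family with n_workers threads; largest families start first."""
    tasks: queue.PriorityQueue = queue.PriorityQueue()
    for name, seqs in families.items():
        tasks.put((-len(seqs), name))  # negative size => largest dequeued first
    results: dict[str, object] = {}
    lock = threading.Lock()

    def worker() -> None:
        while True:
            try:
                _, name = tasks.get_nowait()
            except queue.Empty:
                return  # nothing left to fetch: this worker goes idle and exits
            output = process_family(name, families[name])
            with lock:
                results[name] = output  # "return results to master"

    threads = [threading.Thread(target=worker) for _ in range(n_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()  # termination: queue empty and every worker has finished
    return results


def build_tree(name: str, seqs: list[str]) -> str:
    """Hypothetical placeholder for MSA -> model selection -> ML tree."""
    return f"tree({name}, n={len(seqs)})"


families = {"fam_a": ["ACGT"] * 3, "fam_b": ["ACGA"] * 40, "fam_c": ["TTGA"]}
print(run_master_worker(families, n_workers=2, process_family=build_tree))
```

Ordering the queue largest-first is the design choice that matters most: the longest trees start early, so they are less likely to leave one worker running alone at the end.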

Adaptive Mesh Refinement (AMR) Analogy

Inspired by adaptive mesh refinement in computational fluid dynamics (CFD), this approach treats clonal families as "cells": large families are recursively split into sub-families for parallel tree inference, and the results are later merged.

Diagram 1: Adaptive workload splitting for large clonal families.
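A minimal sketch of the splitting step, under stated assumptions: a family is bisected recursively until every piece holds at most `max_size` sequences, and lexicographic sorting stands in as a crude proxy for sequence similarity (a real pipeline would more likely cut a quick guide tree). The merge of the resulting subtrees is not shown, and `max_size` is a hypothetical tuning parameter.

```python
"""Sketch: recursive splitting of oversized clonal families into parallel tasks."""


def split_family(seqs: list[str], max_size: int) -> list[list[str]]:
    """Bisect until each piece has <= max_size sequences."""
    if max_size < 1:
        raise ValueError("max_size must be >= 1")
    if len(seqs) <= max_size:
        return [seqs]
    ordered = sorted(seqs)  # crude similarity proxy: shared prefixes end up adjacent
    mid = len(ordered) // 2
    return split_family(ordered[:mid], max_size) + split_family(ordered[mid:], max_size)


def expand_tasks(families: dict[str, list[str]], max_size: int):
    """Yield (family, piece_index, sequences) tasks for a work queue."""
    for name, seqs in families.items():
        for i, piece in enumerate(split_family(seqs, max_size)):
            yield name, i, piece


tasks = list(expand_tasks({"big": [f"S{i:02d}" for i in range(10)], "small": ["S99"]}, max_size=3))
for name, i, piece in tasks:
    print(name, i, len(piece))
```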

Case Study: Parallelizing the AIRR Diversity Metrics Workflow

A common task is computing alpha/beta diversity across a patient cohort.
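For reference, here is a minimal sketch of the two alpha-diversity metrics named in Table 1, computed per sample from clonotype counts. It uses the natural-log Shannon index and the bias-corrected Chao1 estimator, S_obs + F1(F1 - 1) / (2(F2 + 1)), where F1 and F2 are the numbers of singleton and doubleton clonotypes; packages such as vegan or scRepertoire may default to other variants, so values are not guaranteed to match them. Each sample is an independent task, which is what makes per-sample parallelization possible.

```python
"""Sketch: per-sample Shannon and bias-corrected Chao1 from clonotype calls."""
import math
from collections import Counter


def shannon(counts: list[int]) -> float:
    """Shannon index H = -sum(p_i * ln p_i) over clonotypes with count > 0."""
    total = sum(counts)
    return -sum((c / total) * math.log(c / total) for c in counts if c > 0)


def chao1(counts: list[int]) -> float:
    """Bias-corrected Chao1: S_obs + F1 * (F1 - 1) / (2 * (F2 + 1))."""
    s_obs = sum(1 for c in counts if c > 0)
    f1 = sum(1 for c in counts if c == 1)  # singletons
    f2 = sum(1 for c in counts if c == 2)  # doubletons
    return s_obs + f1 * (f1 - 1) / (2 * (f2 + 1))


def diversity_by_sample(clonotypes: dict[str, list[str]]) -> dict[str, dict[str, float]]:
    """clonotypes maps sample ID -> one clonotype label (e.g. CDR3) per cell or UMI."""
    out = {}
    for sample, calls in clonotypes.items():  # each sample is an independent task
        counts = list(Counter(calls).values())
        out[sample] = {"shannon": shannon(counts), "chao1": chao1(counts)}
    return out


print(diversity_by_sample({"patient_01": ["CASSLG", "CASSLG", "CASRQ", "CAST"]}))
```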

Table 2: Load Balancing Performance Comparison

| Strategy | Implementation | Avg. Load Imbalance* (%) | Speedup (16 cores vs 1) | Best For |

|---|---|---|---|---|

| Static (Equal Sample Split) | MPI Scatter | 45.2 | 6.1 | Homogeneous sample sizes |

| Dynamic (Central Queue) | Python Multiprocessing Pool | 12.8 | 13.8 | Variable clonotype counts |

| Dynamic (Work Stealing) | Intel TBB Flow Graph | 8.5 | 14.7 | Highly irregular metric costs (e.g., Chao1 vs Shannon) |

*Load Imbalance = (1 - avg_worktime / max_worktime) * 100, where avg_worktime and max_worktime are the mean and maximum per-worker busy times.
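The load-imbalance metric can be computed directly from per-worker busy times. The sketch below also simulates, under simplified assumptions (known task costs, no scheduling overhead, hypothetical cost values), why a static equal-count split suffers when task costs vary while a central queue does not: in the dynamic case each task goes to whichever worker becomes free first. It illustrates the mechanism only and does not reproduce the measurements in Table 2.

```python
"""Sketch: load imbalance for static vs. dynamic (central queue) scheduling."""
import heapq
import math


def load_imbalance_pct(busy: list[float]) -> float:
    """(1 - avg_worktime / max_worktime) * 100."""
    avg = sum(busy) / len(busy)
    return (1.0 - avg / max(busy)) * 100.0


def static_split(costs: list[float], n_workers: int) -> list[float]:
    """Equal-count contiguous blocks, like a scatter of samples to ranks."""
    size = math.ceil(len(costs) / n_workers)
    return [sum(costs[i * size:(i + 1) * size]) for i in range(n_workers)]


def dynamic_queue(costs: list[float], n_workers: int) -> list[float]:
    """Each task, in queue order, goes to the worker that becomes free first."""
    free_at = [(0.0, w) for w in range(n_workers)]
    heapq.heapify(free_at)
    busy = [0.0] * n_workers
    for cost in costs:
        t, w = heapq.heappop(free_at)
        busy[w] += cost
        heapq.heappush(free_at, (t + cost, w))
    return busy


costs = [8, 1, 1, 1, 1, 1, 1, 1]  # hypothetical per-sample costs: one very large sample
print("static :", round(load_imbalance_pct(static_split(costs, 2)), 1), "%")
print("dynamic:", round(load_imbalance_pct(dynamic_queue(costs, 2)), 1), "%")
```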

Diagram 2: Master-worker dynamic load balancing for diversity analysis.

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Research Reagent Solutions for Rep-Seq Analysis

| Item / Reagent | Function / Purpose | Example Product / Tool |

|---|---|---|

| UMI-Linked Library Prep Kit | Enables accurate PCR error correction and precise molecule counting, critical for diversity quantification. | NEBNext Immune Sequencing Kit, SMARTer TCR a/b Profiling Kit |

| Multiplex PCR Primers (V/J genes) | Amplifies the highly variable V(D)J region for repertoire coverage. | IMGT approved primers, Archer Immunoverse |

| Spike-in Control Sequences | Quantifies sequencing depth and detects amplification bias. | ERCC (External RNA Controls Consortium) RNA Spike-In Mix |

| Barcoded Beads for Single-Cell | Enables partitioning and barcoding of single cells for paired-chain analysis. | 10x Genomics Chromium Next GEM, BD Rhapsody Cartridge |

| High-Fidelity Polymerase | Minimizes PCR errors during library amplification. | KAPA HiFi HotStart ReadyMix, Q5 High-Fidelity DNA Polymerase |

| Alignment & Annotation Engine | Core software for assigning V, D, J genes and CDR3 regions. | IMGT/HighV-QUEST, MiXCR |

| Clonotype Clustering Tool | Groups sequences originating from the same progenitor cell. | CD-HIT, Change-O, SCOPer |

| Parallel Computing Framework | Implements dynamic load balancing for irregular workloads. | Ray, Apache Spark, MPI (OpenMPI), OpenMP |

High-throughput sequencing of adaptive immune repertoires (AIRR-Seq) generates vast datasets, but the computational bottleneck lies not in the raw reads themselves, but in aligning them against massive, complex reference resources. A typical human genome reference (GRCh38) is ~3.2 GB, but immunogenomics analyses require additional references: the full human immunoglobulin (Ig) and T-cell receptor (TCR) loci, germline gene databases from IMGT (over 2,000 sequences), and personalized reference genomes incorporating somatic hypermutation landscapes. When indexed for tools like BWA, Bowtie2, or HISAT2, these references can expand to 20-50 GB in memory. Parallel processing across High-Performance Computing (HPC) clusters is essential, but inefficient memory management leads to redundant loading, I/O contention, and crippling overhead. This guide details strategies for mastering memory management when working with these colossal datasets in parallel environments, framed within a broader thesis on HPC parallelization for accelerating NGS-based immunology research and therapeutic discovery.

Core Memory Challenges and Parallelization Models

The primary challenge is the trade-off between memory footprint and I/O speed. Loading a complete reference index into memory in every process (shared-nothing architecture) maximizes speed but wastes memory and strains shared storage. A shared-memory model (using, e.g., OpenMP) on a large-memory node can be efficient but limits scalability to that single node. The optimal solution often involves a hybrid approach that keeps one shared copy of the index per node.

Table 1: Parallelization Models for Reference Genome Alignment

| Model | Description | Pros | Cons | Best For |

|---|---|---|---|---|

| Shared-Nothing (MPI) | Each MPI process loads its own copy of the reference/index. | Simple, highly scalable, minimal inter-node communication. | Massive memory duplication, high I/O load on storage, slow startup. | Cloud environments with ephemeral storage, jobs with long runtime. |

| Shared-Memory (OpenMP) | Multiple threads on a single node share one copy in RAM. | Zero data duplication, fast inter-thread access. | Limited to single node's memory and CPU cores. | Single, large-memory server; alignment of many reads against a single reference. |

| Hybrid (MPI+OpenMP) | MPI processes across nodes, each with OpenMP threads sharing a local copy. | Balances scalability and memory efficiency. | More complex programming. | Large HPC clusters with multi-core nodes. |

| Memory-Mapped Files | Index files are memory-mapped (mmap); pages are loaded on demand from storage. | Drastically reduces initial load time; efficient RAM use, since read-only pages are shared across processes on the same node through the OS page cache. | Performance depends on storage speed (NVMe/SSD strongly recommended). | All models, as a foundational technique. |
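A minimal Python sketch of the memory-mapped approach, using the standard-library `mmap` module on a Unix-like node: the index file is mapped read-only, so pages are faulted in only when a region is accessed, and concurrent processes mapping the same file share those pages through the page cache. The `madvise(MADV_RANDOM)` hint (Python 3.8+, where the platform supports it) assumes that index lookups are scattered, and `read_slice` is a hypothetical helper. Some aligners expose this directly; Bowtie2, for instance, offers an `--mm` option to load its index with memory-mapped I/O.

```python
"""Sketch: read-only memory mapping of an index file with on-demand paging."""
import mmap
import os
import tempfile


def open_index_readonly(path: str) -> mmap.mmap:
    """Map the whole file read-only; nothing is read from disk until accessed.

    Note: mmap cannot map an empty file (raises ValueError).
    """
    with open(path, "rb") as fh:
        mm = mmap.mmap(fh.fileno(), 0, access=mmap.ACCESS_READ)
    # The mapping stays valid after the file object is closed.
    if hasattr(mmap, "MADV_RANDOM"):
        mm.madvise(mmap.MADV_RANDOM)  # hint: scattered lookups, skip read-ahead
    return mm


def read_slice(mm: mmap.mmap, offset: int, length: int) -> bytes:
    """Touch only the pages covering [offset, offset + length)."""
    return mm[offset:offset + length]


# Usage with a 16 KiB temporary file standing in for an index file.
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"ACGT" * 4096)
index = open_index_readonly(tmp.name)
print(read_slice(index, 4, 8))
index.close()
os.unlink(tmp.name)
```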

Strategic Methodologies for Efficient Management

In-Memory Caching and Shared Filesystems

Utilize a high-speed, parallel filesystem (e.g., Lustre, GPFS, BeeGFS). Implement a node-local caching layer. On job start, the first process on a node copies the index from parallel storage to node-local SSD or RAM disk. Subsequent processes on the same node access this local copy.
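A minimal sketch of the "first process on the node copies, the rest reuse" pattern, assuming a POSIX node where `fcntl.flock` works on the local cache directory (as it does on tmpfs such as `/dev/shm`). An exclusive lock serializes the copy: the first holder copies each index file to a temporary name and renames it into place, and later processes find the files already present. The function name and paths are hypothetical; a production version would also validate sizes or checksums and clean up stale caches.

```python
"""Sketch: node-local caching of a reference index, copied once per node."""
import fcntl
import os
import shutil
import tempfile
from pathlib import Path


def ensure_local_copy(index_files: list[Path], cache_dir: Path) -> list[Path]:
    """Copy index files from shared storage into cache_dir once per node."""
    cache_dir.mkdir(parents=True, exist_ok=True)
    local = [cache_dir / f.name for f in index_files]
    with open(cache_dir / ".copy.lock", "w") as lock_fh:
        fcntl.flock(lock_fh, fcntl.LOCK_EX)  # other local processes block here
        try:
            for src, dst in zip(index_files, local):
                if not dst.exists():
                    partial = dst.with_name(dst.name + ".partial")
                    shutil.copyfile(src, partial)
                    os.replace(partial, dst)  # atomic rename: never half-visible
        finally:
            fcntl.flock(lock_fh, fcntl.LOCK_UN)
    return local


# Usage with throwaway directories standing in for shared storage and /dev/shm.
shared = Path(tempfile.mkdtemp())
for ext in ("amb", "ann", "bwt", "pac", "sa"):  # BWA index file extensions
    (shared / f"ref.fa.{ext}").write_bytes(b"index-bytes")
cache = Path(tempfile.mkdtemp()) / "ref_cache"
paths = ensure_local_copy(sorted(shared.glob("ref.fa.*")), cache)
print([p.name for p in paths])
```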

Experimental Protocol: Node-Local Cache Performance Test

- Setup: A cluster with 10 nodes, each with 32 cores and 1TB local NVMe storage. Reference: GRCh38 + IMGT Ig loci indexed for BWA-MEM (~35 GB).

- Workflow:

- Method A (Baseline): All 320 MPI processes read index directly from parallel NFS.

- Method B (Cached): One process per node copies the index to `/dev/shm`; the other processes on that node use this copy.

- Metrics: Measure total job start-to-alignment time and network/storage I/O load.

- Result: Method B reduced start-up latency by 85% and cut network I/O from 10.2 TB to 350 GB.

Index Partitioning (Chunking)

For extremely large references (e.g., metagenomic or pan-genome graphs), partition the index. With aligners such as BWA-MEM, this is typically done by building separate indexes for subsets of the reference (e.g., per chromosome) and loading only the subset relevant to a given batch of reads.

Experimental Protocol: Chromosome-Specific Index Alignment

- Index Preparation: Build separate BWA indexes for the major chromosomes (1-22, X, Y, MT) and for the Ig loci.

- Read Binning: Assign reads to their likely chromosome with a lightweight pre-alignment or k-mer screen of the FASTQ files, writing one read bin per index partition.

- Parallelized Alignment: Launch separate job arrays, each loading only a 2-3 GB chromosome-specific index.

- Result: Peak memory per node reduced from 35 GB to <5 GB, enabling more concurrent jobs per node.
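One lightweight way to perform the read-assignment step above is a k-mer vote, sketched here as a swapped-in technique rather than the protocol's prescribed method: each partition (chromosome or Ig locus) is summarized by its set of canonical k-mers, and each read goes to the partition sharing the most k-mers with it. Holding every k-mer of a human chromosome in a Python set is impractical, so a real implementation would subsample k-mers or use a purpose-built screening tool; all names and the toy sequences here are hypothetical.

```python
"""Sketch: bin reads to index partitions by canonical k-mer voting."""
from collections import defaultdict

_COMPLEMENT = str.maketrans("ACGTN", "TGCAN")


def canonical(kmer: str) -> str:
    """Lexicographically smaller of a k-mer and its reverse complement."""
    return min(kmer, kmer.translate(_COMPLEMENT)[::-1])


def kmer_set(seq: str, k: int) -> set[str]:
    return {canonical(seq[i:i + k]) for i in range(len(seq) - k + 1)}


def build_partition_kmers(partitions: dict[str, str], k: int) -> dict[str, set[str]]:
    """partitions maps partition name (e.g. 'chr1', 'IGH') -> sequence."""
    return {name: kmer_set(seq, k) for name, seq in partitions.items()}


def assign_read(read: str, partition_kmers: dict[str, set[str]], k: int,
                min_hits: int = 1) -> str:
    """Return the partition sharing the most k-mers with the read."""
    read_kmers = kmer_set(read, k)
    best, best_hits = "unassigned", 0
    for name in sorted(partition_kmers):  # sorted => deterministic tie-breaking
        hits = len(read_kmers & partition_kmers[name])
        if hits > best_hits:
            best, best_hits = name, hits
    return best if best_hits >= min_hits else "unassigned"


def bin_reads(reads: list[str], partition_kmers: dict[str, set[str]],
              k: int) -> dict[str, list[str]]:
    """Group reads by assigned partition; each bin feeds one alignment job."""
    bins: dict[str, list[str]] = defaultdict(list)
    for read in reads:
        bins[assign_read(read, partition_kmers, k)].append(read)
    return dict(bins)


toy = build_partition_kmers({"chr1": "ACGTACGTTTGACCA", "IGH": "GGGCCCAAATTTGGG"}, k=5)
print(bin_reads(["ACGTTTGAC", "AAATTTGGG", "NNNNNNNNN"], toy, k=5))
```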

Use of Memory-Efficient Data Structures

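Adopt tools designed for a low memory footprint. For example, minimap2 uses a minimizer-based index, which stores only a sampled subset of the reference k-mers. For AIRR-Seq, consider IgBLAST or MiXCR, which align reads against compact V, D, and J germline databases rather than a whole-genome index.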
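To see why a minimizer index is smaller than a full k-mer index, consider this sketch of (w, k)-minimizer sampling: from every window of w consecutive k-mers, only the smallest k-mer is kept. Lexicographic order is used for simplicity; minimap2 itself ranks canonical k-mers by a hash function, so this illustrates the idea rather than minimap2's exact scheme. The helper names are hypothetical.

```python
"""Sketch: (w, k)-minimizer sampling and a toy minimizer -> positions index."""
from collections import defaultdict


def minimizers(seq: str, k: int, w: int) -> list[tuple[int, str]]:
    """Return sorted (position, k-mer) pairs picked as window minima."""
    kmers = [seq[i:i + k] for i in range(len(seq) - k + 1)]
    picked: set[tuple[int, str]] = set()
    for start in range(max(len(kmers) - w + 1, 0)):
        window = kmers[start:start + w]
        # Smallest k-mer in the window; the leftmost one wins ties.
        j = min(range(len(window)), key=lambda i: (window[i], i))
        picked.add((start + j, window[j]))
    return sorted(picked)


def build_index(seq: str, k: int, w: int) -> dict[str, list[int]]:
    """Map each sampled k-mer to the reference positions where it was picked."""
    index: dict[str, list[int]] = defaultdict(list)
    for pos, kmer in minimizers(seq, k, w):
        index[kmer].append(pos)
    return dict(index)


ref = "ACGTACGTTTGACCAGGGCCCAAATTTGGG"
n_kmers = len(ref) - 15 + 1
print(len(minimizers(ref, k=15, w=10)), "minimizers vs", n_kmers, "k-mers")
```

Because adjacent windows usually share the same minimum, the index keeps only a fraction of all k-mer positions, which is where the memory saving comes from.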

Table 2: Tool-Specific Index Memory Footprint (Human Genome + Ig/TCR)

| Tool | Index Type | Typical Size (Disk) | Peak RAM Load | Parallelization Native Support |

|---|---|---|---|---|

| BWA-MEM | FM-index | 5-7 GB | ~35 GB | pthreads (`-t`); MPI only via third-party wrappers |

| Bowtie2 | FM-index | ~4 GB | ~4.5 GB | pthreads (`-p`) |

| HISAT2 | Graph FM-index | Varies (~10 GB) | ~12 GB | pthreads (`-p`) |