Bayesian Optimization for Antibody Developability: A Complete Guide for Researchers and Drug Developers

Abstract

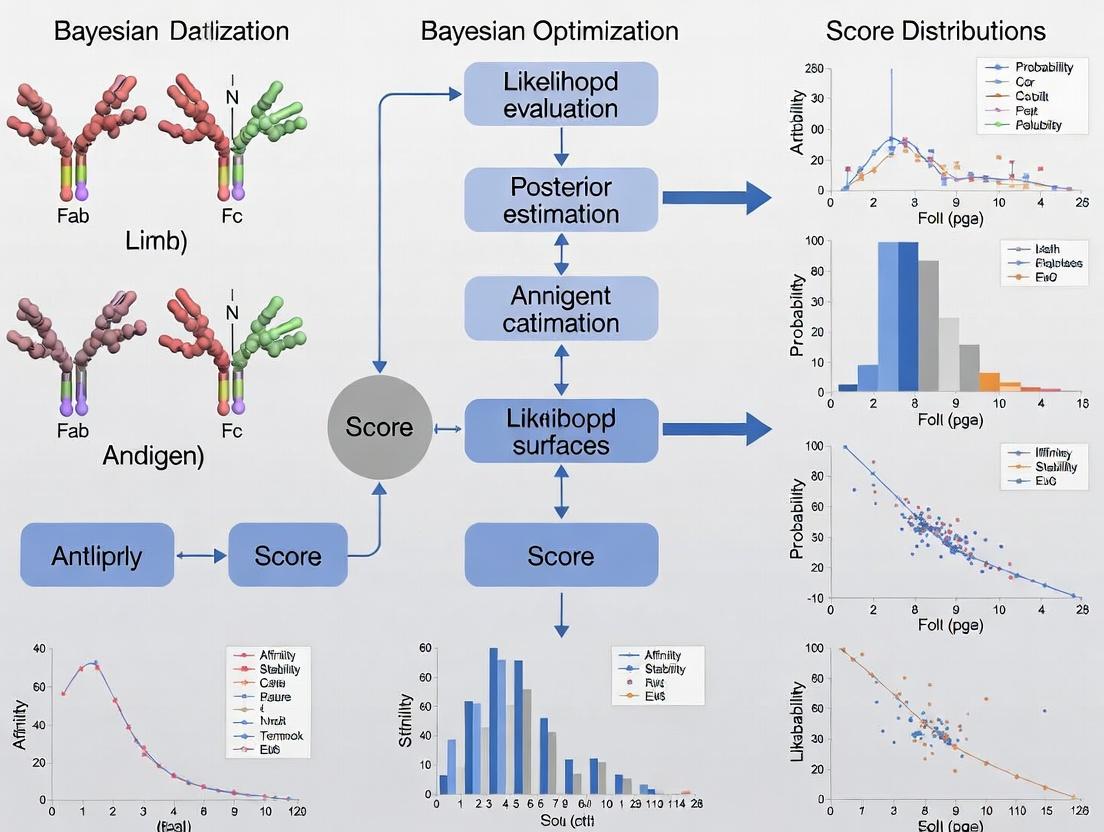

This article provides a comprehensive overview of Bayesian optimization (BO) for predicting and optimizing antibody developability scores, a critical bottleneck in biotherapeutic discovery. We explore the foundational principles linking BO to computational antibody engineering, detailing core methodological frameworks and acquisition functions tailored for high-dimensional biological data. Practical application guides demonstrate integration with machine learning models and sequence-structure-function pipelines. We address key challenges in navigating complex, noisy biological landscapes, including handling multi-objective optimization and constrained design spaces. Finally, we compare BO against alternative optimization strategies, validate its performance with recent case studies, and discuss its translational potential for accelerating the development of safer, more manufacturable antibody therapeutics.

What is Bayesian Optimization and Why is it Transformative for Antibody Developability?

Within the broader thesis on Bayesian optimization for antibody developability scores, this guide provides a technical deep-dive into the core physical stability challenges of aggregation and viscosity. These are two of the most critical parameters predictive of successful antibody therapeutic development, directly impacting manufacturability, formulation, dosage, and patient compliance.

Core Developability Challenges: Mechanisms and Measurement

Protein Aggregation

Aggregation is the self-association of protein molecules into higher-order species. It is often irreversible and is a major cause of immunogenicity and loss of efficacy.

Primary Mechanisms:

- Colloidal Instability: Weak, reversible self-association driven by attractive electrostatic and hydrophobic interactions.

- Conformational Instability: Partial unfolding exposes hydrophobic patches, leading to strong, often irreversible aggregation.

- Surface-Induced Aggregation: Adsorption and denaturation at air-liquid or solid-liquid interfaces (e.g., during manufacturing).

Key Experimental Protocols:

1. Static Light Scattering (SLS) for Second Virial Coefficient (B22):

- Purpose: Quantifies net protein-protein interactions (attractive or repulsive) in dilute solution.

- Protocol:

- Prepare a series of purified antibody solutions (e.g., 5-10 concentrations from 0.5 to 10 mg/mL) in a suitable buffer.

- Filter all samples (0.1 µm) to remove particulates.

- Measure the scattering intensity (Rayleigh ratio) of each concentration using an instrument like a Wyatt Dawn Heleos II.

- Perform Debye plot analysis: (Kc)/R(θ=0) vs. concentration (c), where K is an optical constant and R is the Rayleigh ratio.

- The slope of the linear fit is 2B22. A positive B22 indicates net repulsion (good), a negative B22 indicates net attraction (poor developability).

2. Accelerated Stability Studies for Aggregation Propensity:

- Purpose: Assess aggregation kinetics under stress.

- Protocol:

- Formulate antibody at target concentration (e.g., 1 mg/mL) in histidine buffer, pH 6.0.

- Aliquot samples into sterile vials.

- Subject samples to thermal stress (e.g., 40°C) and/or mechanical stress (e.g., orbital shaking at 200 rpm) for 2-4 weeks.

- At defined timepoints (T0, 1wk, 2wk, 4wk), analyze samples by:

- Size-Exclusion Chromatography (SEC): Quantifies soluble aggregate percent.

- Micro-Flow Imaging (MFI) or Light Obscuration: Quantifies sub-visible and visible particles.
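The Debye plot analysis in the SLS protocol above reduces to a straight-line fit. The sketch below is a minimal illustration on synthetic, noise-free data; the function name `fit_debye`, the 150 kDa molecular weight, and the concentration series are assumptions chosen for the example, not values from a real measurement.

```python
import numpy as np

def fit_debye(conc_g_per_ml, kc_over_r):
    """Fit Kc/R(0) = 1/Mw + 2*B22*c and return (Mw, B22)."""
    slope, intercept = np.polyfit(conc_g_per_ml, kc_over_r, 1)
    mw = 1.0 / intercept   # g/mol, from the intercept
    b22 = slope / 2.0      # mL·mol/g², from the slope (slope = 2*B22)
    return mw, b22

# Synthetic series: 0.5 to 10 mg/mL, converted to g/mL (assumed values)
conc = np.array([0.5, 1.0, 2.0, 4.0, 6.0, 8.0, 10.0]) * 1e-3
true_mw, true_b22 = 150_000.0, 1.0e-4
kc_r = 1.0 / true_mw + 2.0 * true_b22 * conc

mw, b22 = fit_debye(conc, kc_r)
verdict = "net repulsion" if b22 > 0 else "net attraction"
print(f"Mw = {mw:.0f} g/mol, B22 = {b22:.2e} mL·mol/g² ({verdict})")
```

With real data the fit should be restricted to the dilute, linear region of the plot, and the uncertainty of the slope should be reported alongside B22.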

High Concentration Viscosity

High viscosity (>15 cP at 150 mg/mL) impedes manufacturing, complicates subcutaneous injection, and impacts patient experience.

Primary Drivers:

- Electrostatic Attraction: Charge-charge interactions leading to reversible self-association and network formation.

- Hydrophobic Interactions: Especially at the Fab region, leading to clustering.

- Shape and Flexibility: Extended or flexible molecular structures increase hydrodynamic volume and drag.

Key Experimental Protocol:

1. Measurement of Dynamic Viscosity:

- Purpose: Characterize concentration-dependent viscosity and shear-thinning behavior.

- Protocol (using a cone-and-plate rheometer):

- Concentrate purified antibody to >100 mg/mL using centrifugal concentrators (e.g., Amicon Ultra).

- Load sample onto the temperature-controlled plate (e.g., 25°C) of the rheometer (e.g., TA Instruments DHR).

- Lower the measuring cone to the defined gap (typically ~50 µm).

- Perform a flow sweep: Apply a logarithmic shear rate ramp (e.g., from 1 to 1000 s⁻¹).

- Record the shear stress. Viscosity (η) is calculated as shear stress / shear rate.

- Plot viscosity vs. shear rate. Report the low-shear (Newtonian plateau) viscosity at target concentrations (e.g., 50, 100, 150 mg/mL).
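As a rough illustration of the last two steps, the sketch below converts a flow sweep into apparent viscosity and averages the low-shear region. The 10 s⁻¹ cutoff for the Newtonian plateau, the function name, and the synthetic shear-thinning curve are assumptions; in practice the plateau should be identified from the measured curve.

```python
import numpy as np

def low_shear_viscosity(shear_rate, shear_stress, max_rate=10.0):
    """Mean apparent viscosity (Pa·s) over shear rates <= max_rate (1/s)."""
    shear_rate = np.asarray(shear_rate, dtype=float)
    eta = np.asarray(shear_stress, dtype=float) / shear_rate  # eta = stress / rate
    return eta[shear_rate <= max_rate].mean()

# Synthetic flow sweep: logarithmic ramp from 1 to 1000 1/s (assumed data)
rates = np.logspace(0, 3, 13)
eta_true = 0.012 / (1.0 + (rates / 500.0) ** 2) ** 0.1  # mild shear thinning, Pa·s
stress = eta_true * rates                               # Pa

eta0_cp = low_shear_viscosity(rates, stress) * 1000.0   # 1 Pa·s = 1000 cP
print(f"Low-shear viscosity: {eta0_cp:.1f} cP")
```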

Data Presentation: Key Developability Parameters

Table 1: Quantitative Developability Benchmarks for Monoclonal Antibodies

| Parameter | Method | Ideal Range | Developability Risk Threshold |

|---|---|---|---|

| B22 (mL·mol/g²) | Static Light Scattering | ≥ 1.0 × 10⁻⁴ | ≤ 0 |

| % Soluble Aggregate (Initial) | Size-Exclusion Chromatography | ≤ 2.0% | > 5.0% |

| % HIC-HPLC (Hydrophobicity) | Hydrophobic Interaction Chromatography | ≤ 40% (early elution) | > 60% (late elution) |

| Low-Shear Viscosity @ 150 mg/mL | Cone-and-Plate Rheometry | ≤ 15 cP | > 20 cP |

| Tm1 (Fab) (°C) | Differential Scanning Calorimetry | ≥ 65°C | < 60°C |

| Non-specific Binding (SPR Response, RU) | Surface Plasmon Resonance (on polyclonal IgG surface) | ≤ 50 RU | > 150 RU |

Signaling and Experimental Workflow Visualizations

Diagram 1: Viscosity Driver Pathways

Diagram 2: Core Developability Assessment Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Developability Assessment Experiments

| Item (Example Vendor/Product) | Function in Developability Assessment |

|---|---|

| HisTrap Excel Column (Cytiva) | Standardized, high-capacity affinity chromatography for consistent, high-yield purification of His-tagged mAbs/Fabs for screening campaigns. |

| Tycho NT.6 (NanoTemper) | Rapid, low-volume (< 4 µL) assessment of thermal unfolding (Tm, onset) to triage unstable clones early. |

| UNcle (Unchained Labs) | Multi-attribute stability platform: measures aggregation, melting, and colloidal stability (via dye-binding) in a single, micro-volume instrument. |

| Amicon Ultra Centrifugal Filter (Merck Millipore) | For concentrating mAb samples to high concentration (>100 mg/mL) required for viscosity and aggregation stress studies. |

| Siliconized Low-Retention Microtubes (Eppendorf Protein LoBind) | Minimizes surface adsorption and protein loss during handling of low-concentration or high-value samples. |

| Stability Storage Buffers (e.g., Histidine, Succinate, PBS) | For formulating clones under standard conditions to compare inherent stability, free from formulation-specific effects. |

| Aggregation-Stress Reagents (e.g., GdnHCl, Na2SO4) | Used in controlled stress experiments to probe conformational vs. colloidal aggregation pathways. |

This whitepaper provides a foundational guide to Bayesian Optimization (BO), a powerful strategy for optimizing expensive-to-evaluate functions. The context is its transformative application in the development of therapeutic antibodies, specifically for optimizing complex, multi-parameter "developability scores" that predict an antibody's likelihood of successful progression through drug development pipelines.

What is Bayesian Optimization?

At its core, Bayesian Optimization is a smart, iterative strategy for finding the best possible input for a "black-box" function—a system where you can see inputs and outputs but don't know the internal formula. It is particularly valuable when each evaluation (like a lab experiment) is costly, time-consuming, or resource-intensive. BO intelligently selects the most promising experiment to run next, balancing exploration of unknown regions with exploitation of known promising areas.

The Core Principles: A Two-Step Cycle

The BO process operates on a simple but powerful two-step cycle, built upon a probabilistic model.

Principle 1: Build a Probabilistic Model (The Surrogate)

Instead of testing the real, expensive function randomly, BO builds a cheap, probabilistic approximation called a surrogate model. The most common surrogate is the Gaussian Process (GP). Think of a GP as a "fuzzy line" that represents all possible shapes the true function could have, given the data observed so far. It provides not just a prediction but also an estimate of uncertainty (the "fuzziness") at every point.

Principle 2: Guide Experimentation with an Acquisition Function

The acquisition function uses the surrogate model's predictions and uncertainties to decide where to sample next. It quantifies the "promise" of testing a new point. A popular function is Expected Improvement (EI), which calculates how much better a new point is expected to be than our current best observation. The next experiment is chosen at the point that maximizes this function.

Title: The Bayesian Optimization Iterative Cycle
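To make the two principles concrete, here is a minimal NumPy-only sketch of a GP surrogate and the Expected Improvement calculation on a one-dimensional toy problem. It assumes a zero-mean prior, an RBF kernel with a fixed length scale, and made-up observations. A real campaign would normally use one of the libraries named later in this guide and fit the kernel hyperparameters to the data.

```python
import math
import numpy as np

def rbf(a, b, length=0.2):
    """Squared-exponential kernel between two 1-D arrays of inputs."""
    d2 = (a[:, None] - b[None, :]) ** 2
    return np.exp(-0.5 * d2 / length**2)

def gp_posterior(x_obs, y_obs, x_new, noise=1e-4):
    """Posterior mean and standard deviation of a zero-mean GP."""
    K = rbf(x_obs, x_obs) + noise * np.eye(len(x_obs))
    Ks = rbf(x_obs, x_new)
    mean = Ks.T @ np.linalg.solve(K, y_obs)
    var = 1.0 - np.sum(Ks * np.linalg.solve(K, Ks), axis=0)
    return mean, np.sqrt(np.clip(var, 1e-12, None))

def expected_improvement(mean, std, best):
    """Closed-form EI for maximization."""
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf

# Three hypothetical observations of a normalized score
x_obs = np.array([0.1, 0.4, 0.9])
y_obs = np.array([0.2, 0.8, 0.3])

grid = np.linspace(0.0, 1.0, 101)
mean, std = gp_posterior(x_obs, y_obs, grid)      # prediction and "fuzziness"
ei = expected_improvement(mean, std, y_obs.max())
x_next = grid[np.argmax(ei)]                      # next point to test
print(f"Next point to evaluate: x = {x_next:.2f}")
```

The uncertainty `std` shrinks close to the observed points and grows between them, which is what lets EI trade off exploitation against exploration.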

Application in Antibody Developability Optimization

Antibody developability is a multi-faceted challenge. A high developability score indicates favorable properties like stability, solubility, low viscosity, and low immunogenicity. These scores often come from complex in silico models or resource-intensive in vitro assays. Because each evaluation is expensive, BO is well suited to searching this high-dimensional "sequence space" for antibody variants with optimal scores.

Key Experimental Parameters for BO in Antibody Engineering

| Parameter Category | Example Variables | Why it's Important for BO |

|---|---|---|

| Sequence Features | CDR loop sequences, Framework mutations, Glycosylation sites | The primary design space. BO searches combinations to optimize the score. |

| Expression Conditions | Temperature, Cell line, Media formulation | Affects yield and quality, which are part of the developability score. |

| Biophysical Assay Outputs | Thermal Stability (Tm), Aggregation Propensity, Polydispersity | Direct inputs into the composite developability score function being optimized. |

Example Protocol: BO for Optimizing a Stability Score

- Define the Search Space: Specify the mutable amino acid positions in the antibody variable region (e.g., 5 positions with 20 possible amino acids each).

- Choose an Initial Design: Select 10-20 initial antibody sequences using a space-filling design (e.g., Latin Hypercube) to get baseline data.

- Build the Initial Model: Express and purify these variants. Measure Thermal Melting Temperature (Tm) via Differential Scanning Fluorimetry (DSF). Use these (sequence, Tm) pairs to train the initial GP surrogate model.

- Iterative Optimization Loop:

  a. Calculate the Expected Improvement (EI) acquisition function across the entire sequence space using the GP.

  b. Select the sequence with the highest EI.

  c. Synthesize, express, and test this new candidate to obtain its experimental Tm.

  d. Update the GP model with this new data point.

- Termination: Stop after a fixed number of iterations (e.g., 50 cycles) or when improvement plateaus.
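The protocol above can be sketched end to end on a toy problem. Everything specific in this example is an assumption made for illustration: the four-letter alphabet and three positions (64 sequences instead of 20⁵), the `simulated_tm` function standing in for the DSF measurement, a random initial design in place of a Latin Hypercube, and a fixed-hyperparameter GP written in NumPy.

```python
import itertools
import math
import numpy as np

ALPHABET = "ADKY"  # toy alphabet (assumption); a real run would use all 20 residues
N_POS = 3          # number of mutable positions in this toy example

def one_hot(seq):
    v = np.zeros((N_POS, len(ALPHABET)))
    for i, aa in enumerate(seq):
        v[i, ALPHABET.index(aa)] = 1.0
    return v.ravel()

def simulated_tm(seq):
    """Hypothetical stand-in for expressing a variant and measuring Tm (°C)."""
    bonus = {"A": 0.0, "D": -1.0, "K": 1.5, "Y": 3.0}
    return 65.0 + sum(bonus[aa] * (i + 1) for i, aa in enumerate(seq))

def kernel(A, B, length=1.5):
    d2 = ((A[:, None, :] - B[None, :, :]) ** 2).sum(-1)
    return np.exp(-0.5 * d2 / length**2)

def gp_predict(X, y, X_new, noise=1e-3):
    mu, sd = y.mean(), y.std() + 1e-9          # standardize the targets
    K = kernel(X, X) + noise * np.eye(len(X))
    Ks = kernel(X, X_new)
    mean = Ks.T @ np.linalg.solve(K, (y - mu) / sd)
    var = 1.0 - np.sum(Ks * np.linalg.solve(K, Ks), axis=0)
    return mu + sd * mean, sd * np.sqrt(np.clip(var, 1e-12, None))

def expected_improvement(mean, std, best):
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf

# Define the search space and an initial design of 6 random sequences
candidates = ["".join(p) for p in itertools.product(ALPHABET, repeat=N_POS)]
X_all = np.array([one_hot(s) for s in candidates])
rng = np.random.default_rng(0)
tested = [int(i) for i in rng.choice(len(candidates), size=6, replace=False)]
tms = [simulated_tm(candidates[i]) for i in tested]

# Iterative loop: fit surrogate, maximize EI, "measure", update
for _ in range(15):
    mean, std = gp_predict(X_all[tested], np.array(tms), X_all)
    ei = expected_improvement(mean, std, max(tms))
    ei[tested] = -np.inf                       # never re-test a sequence
    nxt = int(np.argmax(ei))
    tested.append(nxt)
    tms.append(simulated_tm(candidates[nxt]))

best_seq = candidates[tested[int(np.argmax(tms))]]
print(f"Best sequence after {len(tested)} measurements: {best_seq} ({max(tms):.1f} °C)")
```

Scoring every candidate with EI is only feasible here because the toy space is tiny. For realistic sequence spaces the acquisition function is usually maximized over a sampled or locally mutated candidate pool.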

| Item | Function in BO for Antibody Development |

|---|---|

| Gene Fragment Libraries | Provides the diverse set of DNA sequences encoding the initial antibody variants for testing. |

| High-Throughput Expression System (e.g., transient HEK293 cells) | Enables rapid production of hundreds of antibody variants for screening. |

| Differential Scanning Fluorimetry (DSF) Plate Reader | Measures thermal stability (Tm) in a high-throughput, quantitative manner for model training. |

| Surface Plasmon Resonance (SPR) or Bio-Layer Interferometry (BLI) Instrument | Quantifies binding affinity (KD), a critical component of many developability scores. |

| Bayesian Optimization Software (e.g., BoTorch, GPyOpt, scikit-optimize) | Open-source Python libraries that implement the GP models and acquisition functions. |

| Cloud/High-Performance Computing (HPC) Resources | Provides the computational power to run the surrogate model and acquisition function calculations. |

Title: Integrated Lab & In-Silico BO Workflow for Antibodies

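The strategic value of BO in antibody development lies in its efficiency gains, summarized in the table below. The percentages are indicative; actual savings depend on the size of the search space, the quality of the surrogate model, and the assay noise.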

| Performance Metric | Traditional Grid/ Random Search | Bayesian Optimization | Implication for Antibody Projects |

|---|---|---|---|

| Experiments to Find Optimum | Often requires 80-90% of search space to be tested. | Typically finds optimum after testing 20-30% of the space. | Reduces costly lab experiments (expression, purification, assays) by 60-70%. |

| Resource Efficiency | Low. High fraction of experiments provide little improvement. | Very High. Each experiment is chosen for maximum learning. | Maximizes the value of limited protein material and scientist time. |

| Handling Noise | Poor. Requires replicates to average out experimental noise. | Good. Probabilistic models can inherently account for measurement uncertainty. | Robust to inherent variability in biological expression and assays. |

Bayesian Optimization is not just a mathematical curiosity; it is a pragmatic framework for accelerating scientific discovery. By framing the search for better antibodies as an optimization of a complex, expensive-to-evaluate developability score, researchers can leverage BO to make every experiment count. Its core principles—using a surrogate model to represent uncertainty and an acquisition function to guide decisions—provide a systematic, efficient, and powerful strategy for navigating the vast design space of biologics, ultimately speeding the delivery of novel therapeutics to patients.

Antibody drug discovery is a high-stakes, resource-intensive endeavor. A single candidate requires rigorous experimental validation across multiple developability parameters—stability, solubility, immunogenicity, and affinity. Traditional high-throughput screening (HTS) approaches, while comprehensive, are prohibitively expensive and time-consuming. Bayesian optimization (BO) emerges as a powerful machine learning framework for the sequential, intelligent design of experiments. By leveraging probabilistic models to predict promising antibody sequences and strategically select the next round of experiments, BO aims to maximize the probability of success while minimizing the number of costly wet-lab assays. This whitepaper details the integration of Bayesian optimization for optimizing antibody developability scores, providing a technical roadmap for implementation.

The Bayesian Optimization Framework for Antibody Development

Bayesian optimization is an iterative process designed to find the global optimum of a black-box, expensive-to-evaluate function. In antibody development, the "function" is the experimental assay output (e.g., aggregation score, thermal stability). The core components are:

- Probabilistic Surrogate Model: Typically a Gaussian Process (GP) that models the unknown relationship between antibody sequence/feature space and the developability score, providing both a prediction and uncertainty estimate.

- Acquisition Function: A utility function that uses the surrogate model's predictions to decide the next most informative sequence to test. Common functions include Expected Improvement (EI) and Upper Confidence Bound (UCB).

Diagram: Bayesian Optimization Cycle for Antibody Development
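The Upper Confidence Bound mentioned above is simple enough to show directly. In this sketch the candidate means and standard deviations are invented numbers standing in for surrogate-model output, and `kappa` is the usual name for the exploration weight rather than a parameter defined in this guide.

```python
import numpy as np

def upper_confidence_bound(mean, std, kappa=2.0):
    """UCB for maximization: predicted mean plus kappa times the uncertainty."""
    return np.asarray(mean) + kappa * np.asarray(std)

# Hypothetical surrogate predictions for three candidate sequences
mean = np.array([0.70, 0.75, 0.60])
std = np.array([0.02, 0.05, 0.30])

exploit = int(np.argmax(upper_confidence_bound(mean, std, kappa=0.0)))
explore = int(np.argmax(upper_confidence_bound(mean, std, kappa=2.0)))
print(exploit, explore)  # prints "1 2"
```

With `kappa=0` the rule picks the candidate with the highest predicted score. A larger `kappa` shifts the choice toward the poorly characterized third candidate.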

Experimental Protocols for Key Developability Assays

Integrating BO requires standardized, quantitative assays. Below are detailed protocols for critical developability metrics.

High-Throughput Thermal Shift (HT-TS) for Stability

Objective: Determine the melting temperature (Tm) as a proxy for conformational stability.

Protocol:

- Sample Prep: Dispense antibody candidates (0.2 mg/mL in PBS) into a 96- or 384-well plate.

- Dye Addition: Add a fluorescent dye (e.g., SYPRO Orange) that binds to hydrophobic regions exposed upon unfolding.

- Thermal Ramp: Perform a controlled temperature ramp (e.g., 25°C to 95°C at 1°C/min) in a real-time PCR instrument.

- Data Analysis: Monitor fluorescence. Fit the sigmoidal melt curve to determine Tm. A higher Tm indicates greater stability.
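The data analysis step can be sketched as a four-parameter Boltzmann fit. This is a simplified model on synthetic data: the curve parameters, noise level, and function names are assumptions, and real SYPRO Orange traces often show a post-transition decay that should be trimmed before fitting.

```python
import numpy as np
from scipy.optimize import curve_fit

def boltzmann(temp, f_min, f_max, tm, slope):
    """Two-state sigmoid: fluorescence rises from f_min to f_max around tm."""
    return f_min + (f_max - f_min) / (1.0 + np.exp((tm - temp) / slope))

def fit_tm(temp, fluorescence):
    """Return the fitted melting temperature in °C."""
    # Initial guess for Tm: temperature of the steepest fluorescence increase
    tm0 = temp[np.argmax(np.gradient(fluorescence, temp))]
    p0 = [fluorescence.min(), fluorescence.max(), tm0, 2.0]
    popt, _ = curve_fit(boltzmann, temp, fluorescence, p0=p0)
    return popt[2]

# Synthetic melt curve: 25-95 °C ramp, true Tm of 68 °C, Gaussian noise
temp = np.arange(25.0, 95.5, 0.5)
rng = np.random.default_rng(1)
signal = boltzmann(temp, 100.0, 1000.0, 68.0, 1.8) + rng.normal(0.0, 5.0, temp.size)

tm = fit_tm(temp, signal)
print(f"Fitted Tm = {tm:.1f} °C")
```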

Self-Interaction Chromatography (SIC) for Solubility

Objective: Measure propensity for self-interaction, correlating with solution viscosity and aggregation.

Protocol:

- Column Preparation: Immobilize the antibody of interest onto a chromatography resin (e.g., NHS-activated Sepharose) to create a "homologous" stationary phase.

- Equilibration: Pack the column and equilibrate with a suitable buffer.

- Analytical Run: Inject a monomeric sample of the same antibody. The retention time (or retention factor, k') is measured.

- Interpretation: A higher k' indicates stronger self-interaction, a negative developability signal.

Affinity Capture LC-MS for Poly-Specificity

Objective: Assess non-specific binding to a diverse bead-based library.

Protocol:

- Bead Incubation: Incubate antibody samples with a mixture of magnetic beads coated with human cell membrane proteins, lysate, or other diverse ligands.

- Washing: Magnetically separate beads and wash to remove unbound antibody, so that only antibody retained on the beads is carried forward.

- Elution & Analysis: Elute bound antibodies and quantify the percentage recovered via LC-MS.

- Output: Lower recovery percentages indicate lower polyspecificity (more desirable).

Data Presentation: Comparative Analysis of Screening Approaches

Table 1: Cost & Resource Comparison of Antibody Screening Strategies

| Screening Aspect | Traditional HTS (Brute-Force) | Bayesian-Optimized Sequential Screening |

|---|---|---|

| Typical Initial Library Size | 10^3 - 10^6 variants | 10^2 - 10^3 variants (initial DOE) |

| Estimated Cost per Assay Cycle | $50,000 - $500,000+ | $5,000 - $50,000 (per iteration) |

| Average Cycles to Hit ID | 1-2 (exhaustive) | 5-10 (iterative) |

| Total Projected Cost | Very High ($200K-$1M+) | Optimized (40-70% reduction reported) |

| Primary Resource Drain | Materials, Reagents, Labor | Computational Power, Strategic Design |

| Key Advantage | Comprehensive data | Efficient learning; targets promising space |

Table 2: Example Developability Score Outcomes from a BO Study*

| Iteration | Candidates Tested | Avg. Tm (°C) | Avg. SIC k' | Top Candidate Score (Composite) |

|---|---|---|---|---|

| 1 (Initial DOE) | 24 | 64.2 ± 3.1 | 0.42 ± 0.15 | 0.65 |

| 3 | 72 | 67.5 ± 2.4 | 0.31 ± 0.11 | 0.78 |

| 6 | 144 | 69.8 ± 1.7 | 0.22 ± 0.08 | 0.91 |

| Change (Iteration 1 → 6) | +500% (cumulative candidates tested) | +8.7% | -47.6% | +40% |

*Data synthesized from recent literature on ML-guided protein engineering. Composite score is a normalized weighted sum of Tm, SIC, and polyspecificity.

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Reagent | Function in Developability Screening | Key Consideration |

|---|---|---|

| SYPRO Orange Dye | Fluorescent probe for thermal shift assays; binds hydrophobic patches. | Concentration must be optimized to avoid signal quenching. |

| Protein A/G/L Beads | For initial purification and titer check before developability assays. | Ensure high binding capacity for diverse mAb subclasses. |

| Self-Interaction Sepharose | Activated resin (e.g., NHS) for creating custom SIC columns. | Coupling efficiency of the mAb must be validated. |

| Magnetic Polyspecificity Beads | Beads coated with diverse ligands (e.g., Membrane Proteome) for affinity-capture LC-MS. | Batch-to-batch consistency is critical for comparability. |

| Size-Exclusion Columns (UPLC) | For analyzing aggregation propensity (monomer vs. aggregate percent). | High-resolution columns (e.g., BEH200) are needed for subtle variants. |

| Stabilization Buffers | Formulation screens to assess excipient effects on stability. | Use DOE, or BO across buffer conditions, to minimize the number of formulations tested. |

Implementation Workflow & Pathway Diagram

Diagram: Integrated BO-Antibody Development Workflow

The traditional high-cost paradigm of antibody screening is unsustainable. Bayesian optimization provides a mathematically rigorous framework for smart, sequential experimentation. By building a probabilistic model of the sequence-developability landscape, BO guides researchers toward optimal candidates with far fewer experimental cycles. Successful implementation requires integration of robust quantitative assays, careful definition of the optimization objective, and computational infrastructure. The result is a significant reduction in resource expenditure and accelerated timelines, enabling a more efficient path to viable therapeutic candidates.

The successful translation of a therapeutic antibody candidate from discovery to clinical use hinges on its "developability"—a composite profile of biophysical and biochemical properties that dictate manufacturability, stability, and safety. Poor developability is a primary cause of late-stage failure. Bayesian Optimization (BO) emerges as a powerful machine learning framework to navigate this high-dimensional, resource-intensive landscape. By modeling the complex relationship between antibody sequence/structure and developability scores, BO can guide the efficient exploration of the design space towards candidates with optimal manufacturability profiles.

Core Developability Attributes and Quantitative Scoring Systems

Developability is assessed through a panel of in silico and in vitro assays. Key attributes and their target thresholds are summarized below.

Table 1: Core Developability Attributes, Assays, and Ideal Targets

| Developability Attribute | Primary Assay/Score | Typical Target/Threshold | Rationale |

|---|---|---|---|

| Solubility & Viscosity | Diffusion Interaction Parameter (kD) | kD > -8 mL/g | Predicts low viscosity at high concentrations. |

| Solubility & Viscosity | Self-Interaction Chromatography (SIC) | Normalized Retention Volume < 1.5 | Measures colloidal self-interaction; low value indicates favorable behavior. |

| Thermal Stability | Melting Temperature (Tm) | Tm1 > 65°C | Indicates resistance to thermal unfolding. |

| Thermal Stability | Aggregation Temperature (Tagg) | Tagg > 60°C | Temperature at which aggregation initiates. |

| Colloidal Stability | Diffusion Interaction Parameter (kD) | (See above) | Also a proxy for colloidal stability. |

| Colloidal Stability | PEG-Induced Precipitation | High m-value (precipitation midpoint); shallow slope | Low propensity to aggregate under molecular crowding. |

| Chemical Stability | Oxidation Rate (Met/Trp) | Low rate by LC-MS | Resistance to chemical degradation. |

| Polyreactivity & Non-specific Binding | Heparin Chromatography Retention Time | Low retention vs. standard | Indicates weak binding to the negatively charged heparin ligand (few positive-charge patches). |

| Polyreactivity & Non-specific Binding | Polyspecificity-Reactivity Assay (PSR) | Signal < 2x negative control | Measures non-specific binding to a diverse antigen panel. |

| Charge Heterogeneity | Isoelectric Point (pI) & Cation Exchange Chromatography (CEX) | Main peak > 90%; minimal acidic/basic variants | Predicts homogeneity and behavior in formulation. |

| Fab Fragmentation & Hinge Stability | IdeS/Lys-C Digestion Rate | Low fragmentation rate by SEC | Indicates structural integrity. |

| Expression Titer | Transient Expression in HEK293/CHO | > 1 g/L | Early indicator of manufacturability. |

Detailed Experimental Protocols for Key Assays

Self-Interaction Chromatography (SIC)

Objective: Quantify antibody self-interaction through affinity to immobilized self.

Protocol:

- Column Preparation: Covalently immobilize the purified mAb of interest onto NHS-activated Sepharose resin per manufacturer's protocol to create a stationary phase.

- System Equilibration: Pack the column and equilibrate with PBS (pH 7.4) on an HPLC system.

- Sample Analysis: Inject the same mAb as analyte at 1-2 mg/mL in PBS. Use a flow rate of 0.5 mL/min.

- Data Analysis: Determine the normalized retention volume (k'). Calculate k' = (VR - V0)/V0, where VR is the analyte peak retention volume and V0 is the column void volume. Lower k' indicates weaker self-interaction.

Polyspecificity-Reactivity Assay (PSR)

Objective: Evaluate non-specific binding to a membrane-based array of diverse cellular antigens.

Protocol:

- Array Blocking: Incubate the commercial polyspecificity reagent (e.g., Lipid-Array, Mosaic-Array) in blocking buffer (PBS with 1% BSA, 0.1% Tween-20) for 1 hour.

- Primary Antibody Incubation: Apply the purified mAb (typically at 10 µg/mL in blocking buffer) to the array and incubate for 2 hours at RT.

- Washing: Wash array 3x with PBS + 0.1% Tween-20.

- Detection: Incubate with fluorophore-conjugated anti-human Fc secondary antibody for 1 hour. Wash again.

- Imaging & Quantification: Scan the array using a fluorescence scanner. Quantify signal intensity for each spot. Normalize signals to internal controls. A mAb is considered polyspecific if reactivity exceeds 2x the negative control across multiple unrelated antigens.

PEG-Induced Precipitation Assay

Objective: Assess colloidal stability under molecular crowding conditions.

Protocol:

- Sample Preparation: Prepare a series of 96-well plates with a gradient of PEG 6000 (e.g., 0% to 25% w/v) in PBS, pH 7.4.

- Antibody Addition: Add an equal volume of mAb solution (final concentration 0.5 mg/mL) to each PEG solution. Mix thoroughly.

- Incubation: Incubate plate at 4°C for 18-24 hours to reach equilibrium.

- Measurement: Centrifuge plates briefly. Measure the absorbance at 350 nm (A350) of the supernatant in each well to determine turbidity.

- Data Analysis: Plot A350 vs. %PEG. Fit a sigmoidal curve. The m-value (midpoint of precipitation) and the slope at the midpoint are critical parameters. A higher m-value and shallower slope indicate superior colloidal stability.

Bayesian Optimization for Developability Navigation

BO is a sequential design strategy for optimizing black-box functions that are expensive to evaluate (like developability assays). It uses a probabilistic surrogate model (e.g., Gaussian Process) to approximate the landscape and an acquisition function (e.g., Expected Improvement) to decide which candidate to test next.

Diagram Title: Bayesian Optimization Workflow for Antibody Developability

Integrating Assays into a BO-Driven Pipeline

The iterative BO cycle requires a quantitative, multi-parametric scoring function. A common approach is to create a Developability Index (D.I.) that aggregates key assay results into a single, maximizable score.

Diagram Title: Integrated BO and Assay Pipeline

Table 2: Example Developability Index (D.I.) Calculation

| Parameter | Assay | Weight (w) | Normalized Score (S) | Contribution (w * S) |

|---|---|---|---|---|

| Self-Interaction | SIC (k') | 0.25 | 1.0 (if k'<1.0) to 0.0 (if k'>2.0) | 0.25 |

| Thermal Stability | Tm1 (°C) | 0.20 | Linear: 0.0 at 55°C, 1.0 at 75°C | 0.18 |

| Colloidal Stability | PEG m-value | 0.20 | Linear scaling based on benchmark | 0.16 |

| Non-specific Binding | PSR Signal | 0.20 | 1.0 (if <2x control), 0.0 (if >5x) | 0.20 |

| Expression | Titer (g/L) | 0.15 | Linear: 0.0 at 0.1 g/L, 1.0 at 2 g/L | 0.12 |

| Total | Developability Index (D.I.) | 1.00 | | 0.91 |
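The weighted sum in Table 2 can be written as a small function. In this sketch the weights and scoring limits come from the table, while the linear interpolation between the SIC and PSR limits, the function names, and the example candidate values are assumptions chosen so that the result reproduces the table's total.

```python
import numpy as np

def linear_score(value, worst, best):
    """Map a raw value linearly onto [0, 1]: 'worst' scores 0, 'best' scores 1."""
    return float(np.clip((value - worst) / (best - worst), 0.0, 1.0))

WEIGHTS = {"sic": 0.25, "tm": 0.20, "peg": 0.20, "psr": 0.20, "titer": 0.15}

def developability_index(k_prime, tm1_c, peg_score, psr_fold, titer_g_l):
    scores = {
        "sic": linear_score(k_prime, worst=2.0, best=1.0),     # lower k' is better
        "tm": linear_score(tm1_c, worst=55.0, best=75.0),
        "peg": peg_score,                                      # already scaled to a benchmark
        "psr": linear_score(psr_fold, worst=5.0, best=2.0),    # fold over negative control
        "titer": linear_score(titer_g_l, worst=0.1, best=2.0),
    }
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)

# Hypothetical candidate chosen to match the contributions in Table 2
di = developability_index(k_prime=0.8, tm1_c=73.0, peg_score=0.8,
                          psr_fold=1.5, titer_g_l=1.62)
print(f"D.I. = {di:.2f}")  # prints "D.I. = 0.91"
```

Because the index collapses several assays into one number, the choice of weights determines which trade-offs BO will favor; it is worth checking how sensitive the ranking is to them.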

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Reagents for Developability Assays

| Reagent/Solution | Vendor Examples | Function in Developability Assessment |

|---|---|---|

| HEPES or PBS Buffers | Thermo Fisher, Sigma-Aldrich | Standard formulation buffers for assessing physical stability and interactions under physiological conditions. |

| PEG 6000/8000 | Sigma-Aldrich, Hampton Research | Used in precipitation assays to induce molecular crowding and probe colloidal stability. |

| Heparin Sepharose 6 Fast Flow | Cytiva | Stationary phase for heparin chromatography to assess charge-based non-specific binding. |

| NHS-activated Sepharose 4 Fast Flow | Cytiva | Used to immobilize mAbs for constructing custom Self-Interaction Chromatography (SIC) columns. |

| Polyspecificity Reagent (PSR) Array | BioRad, Retrogenix | Membrane spotted with diverse human membrane proteins for assessing non-specific binding. |

| IdeS/FabRICATOR Enzyme | Genovis | Specific protease cleaving IgG below the hinge, used to assess hinge stability and fragmentation propensity. |

| CHO or HEK293 Transient Expression System | Thermo Fisher, ATCC | Cell lines and transfection reagents for small-scale expression to determine titer and protein quality early. |

| Capillary Electrophoresis (CE)-SDS Reagents | ProteinSimple, SCIEX | Cartridges and reagents for analyzing size heterogeneity, fragmentation, and purity with high sensitivity. |

| Differential Scanning Calorimetry (DSC) Capsules | Malvern Panalytical | High-quality capsules and buffers for determining melting temperatures (Tm) with precise thermal control. |

This technical guide examines the role of Bayesian Optimization (BO) as a critical framework for closing the iterative loop between computational antibody design and experimental validation. Framed within a thesis on BO for antibody developability scores, we detail how BO efficiently navigates high-dimensional sequence spaces to propose candidates with optimized predicted developability, which are then validated in the lab, creating a data-driven feedback cycle.

The design of therapeutic antibodies requires balancing multiple, often competing, developability criteria (e.g., solubility, specificity, low viscosity, low immunogenicity). In silico models predict these scores, but the sequence space is vast and non-linear. Exhaustive screening is impossible, and naive selection from prediction models can be suboptimal. Bayesian Optimization provides a principled, sample-efficient strategy to sequentially select the most informative candidates for lab testing, thereby bridging the design-make-test-analyze (DMTA) cycle.

Core Bayesian Optimization Framework

Mathematical Formulation

BO aims to find the global optimum of an expensive black-box function \( f(x) \), where \( x \) represents an antibody sequence or descriptor. It combines:

- A probabilistic surrogate model (typically Gaussian Process, GP) to approximate \( f \).

- An acquisition function \( \alpha(x) \) to decide the next candidate to evaluate.

The algorithm iterates:

- Build/Update the surrogate model using all observed data \( D_{1:t} = \{(x_i, y_i)\}_{i=1}^{t} \).

- Find \( x_{t+1} = \arg\max_x \alpha(x) \).

- Evaluate \( f(x_{t+1}) \) experimentally (lab validation) to obtain \( y_{t+1} \).

- Augment data \( D_{1:t+1} = D_{1:t} \cup \{(x_{t+1}, y_{t+1})\} \).

- Repeat until convergence or budget exhaustion.

Key Components for Antibody Developability

Surrogate Models:

- Gaussian Process (GP): Models uncertainty explicitly. Kernel choice (e.g., Matern, RBF) encodes assumptions about sequence similarity.

- Sparse GP / Deep GP: Scalable variants for high-dimensional data.

- Bayesian Neural Networks: Flexible for complex, high-dimensional landscapes.
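One way to see how a kernel encodes sequence similarity is to build one from the Hamming distance between aligned sequences. The sketch below is illustrative only: the kernel form, the `length_scale` value, and the example sequences are assumptions. This kernel is equivalent to an RBF kernel on one-hot encodings, since the squared Euclidean distance between one-hot vectors is twice the Hamming distance.

```python
import numpy as np

def hamming_rbf_kernel(seqs_a, seqs_b, length_scale=2.0):
    """k(s, s') = exp(-hamming(s, s') / length_scale) for equal-length sequences."""
    A = np.array([list(s) for s in seqs_a])
    B = np.array([list(s) for s in seqs_b])
    hamming = (A[:, None, :] != B[None, :, :]).sum(axis=-1)
    return np.exp(-hamming / length_scale)

# Hypothetical 4-residue CDR fragments
seqs = ["ARDY", "ARDF", "GKEW"]
K = hamming_rbf_kernel(seqs, seqs)
print(np.round(K, 3))
```

Sequences that differ by one residue receive a high covariance, so the GP assumes their developability scores are similar. Sequences that differ at every position are treated as nearly independent.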

Acquisition Functions (Balancing Exploration/Exploitation):

- Expected Improvement (EI): Most common, favors points likely to improve over current best.

- Upper Confidence Bound (UCB): Weighs mean prediction and uncertainty.

- Predictive Entropy Search: Information-theoretic, seeks to reduce uncertainty about the optimum.
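For reference, the first two acquisition functions have simple closed forms under a Gaussian posterior. The notation here is an assumption layered on the formulation above: \( \mu(x) \) and \( \sigma(x) \) denote the surrogate's posterior mean and standard deviation, \( y^{+} \) the best observation so far, \( \Phi \) and \( \phi \) the standard normal CDF and PDF, and \( \kappa \ge 0 \) an exploration weight. Both are written for maximization.

\[
\alpha_{\mathrm{EI}}(x) = \bigl(\mu(x) - y^{+}\bigr)\,\Phi(z) + \sigma(x)\,\phi(z),
\qquad z = \frac{\mu(x) - y^{+}}{\sigma(x)}
\]

\[
\alpha_{\mathrm{UCB}}(x) = \mu(x) + \kappa\,\sigma(x)
\]

The first term of EI rewards candidates whose predicted score exceeds the current best (exploitation), while the second rewards candidates with large uncertainty (exploration).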

Integrated Workflow: From In Silico to In Vitro

The following diagram illustrates the closed-loop BO workflow connecting computational design and lab validation.

Title: BO closed-loop workflow for antibody optimization

Experimental Protocols for Lab Validation

Validation of BO-proposed sequences requires robust, medium-throughput developability assays.

Protocol 4.1: High-Throughput Solubility and Aggregation Propensity

- Objective: Measure non-specific interaction and aggregation risk.

- Method: Use a Cellular Thermal Shift Assay (CETSA)-like principle in a plate format.

- Express and purify candidate Fabs/scFvs/antibodies (96-well format).

- Dilute to a standard concentration (e.g., 1 mg/mL) in PBS.

- Aliquot into PCR plates and subject to a thermal gradient (e.g., 40°C to 70°C, 10 steps).

- Incubate for 15 min, then cool to 4°C.

- Centrifuge to pellet aggregates.

- Transfer supernatant to a new plate and quantify soluble protein via a fluorescent dye (e.g., SYPRO Orange).

- Data: Melting temperature (Tm) and % soluble protein at a stressed temperature (e.g., 55°C).

Protocol 4.2: Affinity Capture Self-Interaction Chromatography (AC-SIC)

- Objective: Quantify self-interaction propensity, a key predictor of viscosity.

- Method:

- Immobilize the candidate antibody onto a NHS-activated sensor chip or resin (low density).

- Use the immobilized antibody as the stationary phase in a chromatography column or SPR flow cell.

- Inject a solution of the same antibody at varying concentrations (0.1-5 mg/mL) over the surface.

- Measure the retention time (column) or binding response (SPR). A longer retention/higher response indicates stronger self-interaction.

- Output: The negative logarithm of the self-interaction dissociation constant, pK_D,SI = -log10(K_D,SI). Higher pK_D,SI is favorable.

Protocol 4.3: Polyspecificity and Off-Target Binding (Antigen-Independent)

- Objective: Assess risk of non-specific binding.

- Method: Cascade Bioscience's PScreen or similar.

- Express antibody with a C-terminal Fc tag.

- Incubate clarified supernatant with a microarray of ~10,000 human membrane proteins.

- Detect binding via fluorescent anti-Fc antibody.

- Data: Polyspecificity Score (PSR): Ratio of signals from specific binding (e.g., to a known target) vs. the median signal from all non-target membrane proteins.

Data Presentation: Benchmarking BO Performance

Table 1: Comparison of Optimization Algorithms on a Simulated Antibody Developability Benchmark

| Algorithm | Iterations to Hit | Best Composite Score Achieved | Total Lab Experiments Required | Sample Efficiency Gain* |

|---|---|---|---|---|

| Random Search | 42 ± 8 | 0.72 ± 0.05 | 100 | 1.0x (Baseline) |

| Grid Search | 65 ± N/A | 0.68 ± 0.03 | 100 | 0.6x |

| Genetic Algorithm | 28 ± 5 | 0.79 ± 0.04 | 100 | 1.5x |

| Bayesian Optimization (GP-UCB) | 15 ± 3 | 0.88 ± 0.02 | 50 | ~2.8x |

| Bayesian Optimization (EI) | 18 ± 4 | 0.86 ± 0.03 | 50 | ~2.3x |

*Sample Efficiency Gain: Relative reduction in experiments needed to achieve the same score target vs. Random Search.

Table 2: Example Lab Validation Results for BO-Optimized vs. Parent Antibody

| Developability Assay | Parent Antibody | BO Candidate #7 | BO Candidate #12 | Ideal Range |

|---|---|---|---|---|

| Thermal Stability (Tm, °C) | 62.1 | 68.4 | 65.9 | >65 °C |

| % Soluble at 55°C | 45% | 92% | 85% | >80% |

| AC-SIC pK_D,SI | 3.2 | 4.8 | 4.1 | >4.0 |

| Polyspecificity Score (PSR) | 12.5 | 3.1 | 5.4 | <8 |

| HEK293 Transient Titer (mg/L) | 450 | 380 | 510 | >500 |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for BO-Driven Antibody Developability Workflows

| Item | Function in Workflow | Example Product/Catalog |

|---|---|---|

| High-Throughput Cloning System | Enables rapid assembly of 10s-100s of BO-designed variant sequences. | NEBuilder HiFi DNA Assembly Kit (NEB) |

| Mammalian Transient Expression Kit | Medium-scale, parallel expression of antibody variants in 96-deep well plates. | Expi293 Expression System (Thermo Fisher) |

| Automated Protein A Purification Resin/Plates | Parallel capture and purification of antibodies from crude supernatant. | MabSelect PrismA Pre-packed 96-well plates (Cytiva) |

| Differential Scanning Fluorimetry (DSF) Dye | For high-throughput thermal stability screening (Protocol 4.1). | PROTEOSTAT HS-Thermal Shift Dye (Promega) |

| Biolayer Interferometry (BLI) System & Tips | For label-free, semi-parallel measurement of self-interaction (AC-SIC) and affinity. | Octet RED96e with Anti-Human Fc Capture (AHC) tips (Sartorius) |

| Membrane Proteome Array | For comprehensive polyspecificity screening (Protocol 4.3). | PScreen Array (Cascade Biosciences) |

| Laboratory Automation Workstation | For reliable liquid handling in all multi-well plate-based steps. | ASSIST PLUS Pipetting Robot (Integra) |

Advanced Considerations and Future Directions

- Multi-Objective BO (MOBO): Directly optimizes multiple, competing developability scores (e.g., stability vs. expression titer) using a Pareto front.

- Contextual BO: Incorporates experimental batch conditions or cell line data as additional context to the model.

- Transfer Learning & Warm Starts: Initializing the BO surrogate model with historical data from similar campaigns to accelerate convergence.
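To make the Pareto-front idea behind multi-objective BO concrete, the sketch below marks which candidates are non-dominated when every objective is to be maximized. The function name `pareto_mask` is illustrative. The first three rows reuse the Tm and titer values from Table 2 (BO Candidate #7, BO Candidate #12, parent); the fourth row is made up for the example.

```python
import numpy as np


def pareto_mask(scores):
    """Boolean mask of non-dominated rows, assuming every column is maximized."""
    scores = np.asarray(scores, dtype=float)
    mask = np.ones(len(scores), dtype=bool)
    for i, s in enumerate(scores):
        # A row dominates s if it is >= in every objective and > in at least one
        dominates_s = np.all(scores >= s, axis=1) & np.any(scores > s, axis=1)
        mask[i] = not dominates_s.any()
    return mask


# Columns: thermal stability Tm (°C), transient titer (mg/L)
candidates = np.array([
    [68.4, 380.0],   # BO Candidate #7
    [65.9, 510.0],   # BO Candidate #12
    [62.1, 450.0],   # parent antibody
    [66.0, 400.0],   # hypothetical extra variant
])
front = pareto_mask(candidates)
```

Here the parent is dominated by Candidate #12 (better on both objectives), while the other three represent different stability/titer trade-offs and remain on the front.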

The pathway diagram below illustrates the logical flow of a multi-objective BO approach for balancing key developability properties.

Title: Multi-objective BO for balancing antibody properties

Bayesian Optimization is a powerful, adaptive framework that systematically connects predictive in silico models of antibody developability to focused, informative laboratory experiments. By quantifying uncertainty and balancing exploration with exploitation, BO drastically reduces the experimental burden required to identify developable leads, directly addressing a core challenge in modern biologic drug development. Its integration into the DMTA cycle represents a paradigm shift towards more efficient and data-driven antibody engineering.

How to Implement Bayesian Optimization for Antibody Sequence and Property Design

Within a broader thesis on Bayesian Optimization (BO) for antibody developability scores, surrogate models form the critical prediction engine. BO iteratively proposes candidate antibody sequences by leveraging a surrogate model to approximate the expensive, noisy, or low-throughput experimental assays (e.g., solubility, viscosity, aggregation propensity). Gaussian Processes (GPs) and Random Forests (RFs) are two dominant surrogate modeling frameworks, each with distinct strengths for biological data characterized by high dimensionality, nonlinearity, and often limited sample sizes.

Core Surrogate Model Architectures

Gaussian Processes (GPs)

A GP defines a distribution over functions, fully specified by a mean function m(x) and a covariance (kernel) function k(x, x'). For a dataset D = {(x_i, y_i)}, i = 1, ..., n, with antibody representations x_i and scalar developability scores y_i, the GP prior is: f(x) ~ GP(m(x), k(x, x')).

The kernel function encodes assumptions about function smoothness and periodicity. The predictive distribution for a new point x is Gaussian with closed-form mean and variance:

μ(x) = k^T (K + σ_n²I)⁻¹ y

σ²(x) = k(x, x) - k^T (K + σ_n²I)⁻¹ k

where K is the kernel matrix over the training points, k is the vector of covariances between x and the training points, and σ_n² is the noise variance.

Key Protocols for GP Implementation:

- Feature Representation: Convert antibody sequences (e.g., CDR regions) into numerical feature vectors. Common methods include:

- One-hot encoding of amino acids.

- Learned embeddings from protein language models (e.g., ESM-2).

- Physicochemical property vectors (e.g., z-scales).

- Kernel Selection & Training: Choose a kernel (e.g., Matérn 5/2 for moderate smoothness, Radial Basis Function for high smoothness). Hyperparameters (length scales, variance) are optimized by maximizing the log marginal likelihood: log p(y|X) = -½ y^T (K + σ_n²I)⁻¹ y - ½ log|K + σ_n²I| - (n/2) log(2π).

- Prediction & Uncertainty Quantification: Use the predictive equations above to estimate the developability score (mean) and the model's uncertainty (variance) for any new sequence.
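The predictive equations and the log marginal likelihood can be evaluated with a single Cholesky factorization. The following NumPy sketch assumes a zero-mean GP with a Matérn 5/2 kernel and fixed hyperparameters; the function names and the random placeholder data are illustrative, and no hyperparameter optimization is performed.

```python
import numpy as np


def matern52(A, B, length_scale=1.0, variance=1.0):
    """Matérn 5/2 kernel between the rows of A and the rows of B."""
    d = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)) / length_scale
    return variance * (1.0 + np.sqrt(5.0) * d + 5.0 * d**2 / 3.0) * np.exp(-np.sqrt(5.0) * d)


def gp_fit_predict(X, y, X_new, noise_var=1e-2):
    """Posterior mean, posterior variance and log marginal likelihood (zero-mean GP)."""
    n = len(X)
    K = matern52(X, X) + noise_var * np.eye(n)              # K + σ_n² I
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))     # (K + σ_n² I)⁻¹ y
    k_star = matern52(X, X_new)                             # shape (n, n_new)
    mean = k_star.T @ alpha
    v = np.linalg.solve(L, k_star)
    var = np.diag(matern52(X_new, X_new)) - np.sum(v**2, axis=0)
    # ½ log|K + σ_n² I| equals the sum of the log-diagonal of the Cholesky factor
    log_ml = -0.5 * y @ alpha - np.log(np.diag(L)).sum() - 0.5 * n * np.log(2.0 * np.pi)
    return mean, var, log_ml


rng = np.random.default_rng(1)
X = rng.normal(size=(12, 4))      # e.g. 12 variants with 4 descriptors each
y = np.sin(X[:, 0])               # placeholder developability scores
X_new = rng.normal(size=(3, 4))
mean, var, log_ml = gp_fit_predict(X, y, X_new)
```

In practice, libraries such as GPyTorch or scikit-learn wrap this computation and maximize `log_ml` over the kernel hyperparameters.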

Random Forests (RFs)

An RF is an ensemble of B decision trees, where each tree is trained on a bootstrap sample of the data and a random subset of features at each split. For regression, the final prediction is the average of individual tree predictions.

Key Protocols for RF Implementation:

- Tree Construction: For each tree, recursively partition the feature space to minimize the Mean Squared Error (MSE) at each node.

- Ensemble Aggregation: Predictions from all trees T_b(x) are aggregated: ŷ = (1/B) Σ_{b=1}^{B} T_b(x).

- Uncertainty Estimation: While not probabilistic by design, uncertainty can be estimated as the variance of predictions across the individual trees in the forest.
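The across-tree variance described above can be read directly from a fitted scikit-learn forest through its `estimators_` attribute. The sketch below uses random placeholder features and a synthetic target; the hyperparameter values are arbitrary choices for illustration.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 10))                                   # placeholder feature vectors
y = X[:, 0] - 0.5 * X[:, 1] ** 2 + 0.1 * rng.normal(size=200)    # synthetic scores

forest = RandomForestRegressor(n_estimators=100, max_features=0.5, random_state=0)
forest.fit(X, y)

X_new = rng.normal(size=(5, 10))
# One row of predictions per tree: shape (B, n_new)
per_tree = np.stack([tree.predict(X_new) for tree in forest.estimators_])
mean = per_tree.mean(axis=0)    # equals the forest's own prediction
std = per_tree.std(axis=0)      # empirical spread used as an uncertainty proxy
```

This spread is a heuristic rather than a calibrated posterior, so it is worth checking its calibration on held-out data before feeding it to an acquisition function.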

Comparative Analysis for Biological Data

Table 1: Comparison of GP and RF Surrogate Models for Antibody Data

| Feature | Gaussian Process (GP) | Random Forest (RF) |

|---|---|---|

| Prediction Output | Full posterior distribution (mean & variance). | Point estimate; variance estimated from tree ensemble. |

| Uncertainty Quantification | Inherent, principled, and calibrated. | Empirical, can be less reliable in extrapolation. |

| Handling High-Dim Features | Can struggle; requires careful kernel choice/dimensionality reduction. | Generally robust; feature sampling is intrinsic. |

| Data Efficiency | Excellent with small datasets (<~1000 samples). | Requires more data to perform well. |

| Interpretability | Low; kernel mechanics are opaque. | Moderate; feature importance metrics available. |

| Computational Cost | O(n³) for training; O(n) per predictive mean and O(n²) per predictive variance; scales poorly beyond ~10k samples. | O(B * n * p log n); scales efficiently to large n and p. |

| Nonlinearity Capture | Flexible, governed by kernel. | Highly flexible, may overfit on small noisy data. |

Experimental & Computational Workflows

Title: Surrogate Model-Driven Antibody Optimization

The Scientist's Toolkit: Research Reagent & Software Solutions

Table 2: Essential Tools for Surrogate Modeling in Antibody Development

| Item / Solution | Function in Workflow |

|---|---|

| Python Data Stack (NumPy, pandas) | Core numerical and data manipulation for feature preparation and analysis. |

| scikit-learn | Provides robust, standard implementations of Random Forests and foundational utilities for model evaluation and preprocessing. |

| GPy / GPflow / GPyTorch | Specialized libraries for flexible Gaussian Process modeling, with varied backends (NumPy, TensorFlow, PyTorch). |

| BoTorch / Dragonfly | Advanced Bayesian optimization platforms that integrate GP/RF surrogates with acquisition functions for experimental design. |

| ESM-2 Protein Language Model | Generates state-of-the-art contextual embeddings for antibody sequences as informative feature vectors. |

| High-Throughput Solubility/Viscosity Assays | Generates the essential experimental developability data (y-values) for training and validating surrogate models. |

| Laboratory Automation & LIMS | Tracks and manages the physical samples and experimental data, linking sequence identifiers to assay results. |

Data Integration & Model Decision Logic

Title: Model Selection Decision Tree

GPs and RFs provide complementary frameworks for constructing surrogates in antibody developability optimization. GPs offer principled uncertainty—a cornerstone for efficient Bayesian optimization—making them ideal for data-scarce early-stage projects. RFs deliver robust performance on larger, higher-dimensional datasets with greater computational efficiency. The integration of these models into a closed-loop BO pipeline, powered by modern biological feature extraction, is transforming the rational design of developable therapeutic antibodies.

Within the paradigm of Bayesian optimization (BO) for antibody developability, the acquisition function serves as the core decision-making engine. This guide provides an in-depth technical analysis of three principal acquisition functions—Expected Improvement (EI), Upper Confidence Bound (UCB), and Probability of Improvement (PI)—framing their mechanics, comparative performance, and practical selection criteria within the context of optimizing complex biological properties like stability, solubility, and low immunogenicity.

Antibody developability encompasses a suite of biophysical properties critical for successful therapeutic progression. High-throughput screening is often infeasible due to cost and time constraints. Bayesian optimization is a powerful strategy for navigating this high-dimensional, experimentally expensive landscape. Once the relationship between antibody sequence or structure and a developability score has been modeled with a surrogate (e.g., a Gaussian Process), the acquisition function selects the next most informative sequence to characterize experimentally.

Mathematical Foundations of Acquisition Functions

All acquisition functions, denoted by α(x), balance exploration (probing uncertain regions) and exploitation (refining known promising regions). They operate on the posterior distribution provided by the Gaussian Process.

Key Terms:

- f(x): True, unknown objective function (e.g., developability score).

- μ(x): Posterior mean prediction of the surrogate model.

- σ(x): Posterior standard deviation (uncertainty) of the surrogate model.

- f(x⁺): Best observed function value (incumbent) so far.

- ξ: User-defined parameter controlling exploration-exploitation trade-off.

Probability of Improvement (PI)

PI measures the likelihood that a candidate point x will yield an improvement over the incumbent.

α_PI(x) = P(f(x) ≥ f(x⁺) + ξ) = Φ( (μ(x) - f(x⁺) - ξ) / σ(x) )

where Φ(·) is the cumulative distribution function of the standard normal.

Expected Improvement (EI)

EI computes the magnitude of improvement expected from a candidate point, not just its probability.

α_EI(x) = E[max(f(x) - f(x⁺) - ξ, 0)]

With an analytic form:

α_EI(x) = (μ(x) - f(x⁺) - ξ) Φ(Z) + σ(x) φ(Z), if σ(x) > 0

α_EI(x) = 0, if σ(x) = 0

where Z = (μ(x) - f(x⁺) - ξ) / σ(x), and φ(·) is the standard normal density function.

Upper Confidence Bound (UCB)

UCB uses an optimistic estimate of the possible function value, defined by a confidence interval.

α_UCB(x) = μ(x) + κ * σ(x)

where κ is a parameter controlling the weight of exploration.
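The three acquisition functions translate almost line for line into NumPy. This sketch follows the formulas as given in this section (including EI = 0 when σ(x) = 0); the function names and the example posterior values are illustrative only.

```python
import math
import numpy as np


def _norm_cdf(z):
    """Standard normal CDF Φ(z), evaluated elementwise."""
    return 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))


def _norm_pdf(z):
    """Standard normal density φ(z)."""
    return np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)


def probability_of_improvement(mu, sigma, best, xi=0.01):
    z = (mu - best - xi) / np.maximum(sigma, 1e-12)
    return _norm_cdf(z)


def expected_improvement(mu, sigma, best, xi=0.01):
    z = (mu - best - xi) / np.maximum(sigma, 1e-12)
    ei = (mu - best - xi) * _norm_cdf(z) + sigma * _norm_pdf(z)
    return np.where(sigma > 0, ei, 0.0)      # EI is defined as 0 where σ(x) = 0


def upper_confidence_bound(mu, sigma, kappa=2.0):
    return mu + kappa * sigma


# Hypothetical posterior for three candidates; incumbent f(x⁺) = 0.50
mu = np.array([0.50, 0.80, 0.60])
sigma = np.array([0.10, 0.05, 0.00])
best = 0.50
pi_values = probability_of_improvement(mu, sigma, best, xi=0.0)
ei_values = expected_improvement(mu, sigma, best, xi=0.0)
ucb_values = upper_confidence_bound(mu, sigma, kappa=2.0)
```

For the first candidate (μ equal to the incumbent, ξ = 0), PI is exactly 0.5 and EI reduces to σ·φ(0) ≈ 0.0399, which shows how EI rewards uncertainty even when the mean offers no gain.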

Comparative Analysis & Quantitative Performance

The performance of these functions varies based on problem dimensionality, noise, and the optimization landscape's smoothness. Recent benchmarking studies in computational biology provide the following insights:

Table 1: Comparative Summary of Acquisition Functions

| Feature / Criterion | Probability of Improvement (PI) | Expected Improvement (EI) | Upper Confidence Bound (UCB) |

|---|---|---|---|

| Core Principle | Chance of any improvement | Average magnitude of improvement | Optimistic value bound |

| Exploration Parameter | ξ (moderate influence) | ξ (subtle influence) | κ (direct, linear control) |

| Exploitation Tendency | Very high (can get stuck) | Balanced (default choice) | Tunable via κ |

| Sensitivity to Noise | High (sensitive to best f(x⁺)) | Moderate | Moderate |

| Common Use Case in Biology | Low-dimensional, noise-free screens | General-purpose, most prevalent | Rapid early exploration |

| Typical κ/ξ Values | ξ = 0.01 - 0.1 | ξ = 0.01 | κ = 2.0 - 3.0 |

Table 2: Performance on Benchmark Biological Problems (Hypothetical Data)

| Benchmark (Goal) | Best-Performing AF | Convergence Speed (Iterations) | Final Developability Score (A.U.) |

|---|---|---|---|

| Antibody Affinity Maturation (in silico) | EI (ξ=0.01) | ~45 | 92.4 |

| Protein Solubility Engineering | UCB (κ=2.5) | ~30 (fast initial gain) | 88.1 |

| Viscosity Reduction (High-dim Library) | EI (ξ=0.05) | ~60 | 85.6 |

| Stability Thermal Shift (Low-noise) | PI (ξ=0.0) | ~35 | 94.2 |

Experimental Protocols for Benchmarking

To empirically compare acquisition functions in an antibody developability context, the following in silico protocol is standard:

Protocol 1: Computational Benchmarking Workflow

- Dataset Curation: Assemble a labeled dataset pairing antibody variant sequences (e.g., CDR region libraries) with a developability score (e.g., predicted stability ΔΔG, aggregation propensity score).

- Surrogate Model Training: Initialize a Gaussian Process (GP) model with a chosen kernel (e.g., Matérn 5/2) on a random subset (typically 5-10%) of the data.

- Acquisition Loop: For each iteration i until the budget is exhausted: a. Compute the posterior GP mean μ(x) and uncertainty σ(x) for all candidate sequences in the hold-out pool. b. Compute the acquisition function value α(x) for all candidates using the incumbent best score f(x⁺). c. Select the candidate x* with maximum α(x). d. "Query" the oracle (i.e., retrieve the true score f(x*) from the hold-out dataset). e. Augment the training data with (x*, f(x*)) and update the GP posterior.

- Metrics Tracking: Record the best score found (f(x⁺)) vs. iteration number. Repeat the entire process with multiple random seeds to compute average performance curves.

- Analysis: Compare the convergence rate and final best score across different acquisition functions (EI, PI, UCB) and parameter settings.
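The metrics-tracking step can be sketched independently of the surrogate. The code below computes best-so-far curves over several random seeds for a random-search baseline on a hypothetical pool of scores; the same `best_so_far_curve` helper would be applied to the query order produced by each acquisition function being compared.

```python
import numpy as np


def best_so_far_curve(scores_in_query_order):
    """Running maximum of the scores, i.e. f(x⁺) after each query."""
    return np.maximum.accumulate(scores_in_query_order)


def random_search_curves(pool_scores, budget, seeds):
    """One best-so-far curve per seed for a random-selection baseline."""
    curves = []
    for seed in seeds:
        order = np.random.default_rng(seed).permutation(len(pool_scores))[:budget]
        curves.append(best_so_far_curve(pool_scores[order]))
    return np.array(curves)


rng = np.random.default_rng(0)
pool_scores = rng.normal(size=500)     # placeholder "true" scores of the hold-out pool
curves = random_search_curves(pool_scores, budget=30, seeds=range(5))
mean_curve = curves.mean(axis=0)       # average performance curve across seeds
```

Plotting `mean_curve` for each method against the iteration number gives the convergence comparison described in the Analysis step.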

Visualization of the Bayesian Optimization Cycle

Title: Bayesian Optimization Loop for Antibody Design

The Scientist's Toolkit: Research Reagent & Software Solutions

Table 3: Essential Resources for Implementing BO in Antibody Development

| Item / Resource Name | Category | Function / Application |

|---|---|---|

| Gaussian Process Library (GPyTorch, scikit-learn) | Software | Provides the core surrogate modeling capability for regression and uncertainty estimation. |

| BoTorch or Ax Framework | Software | Specialized libraries for Bayesian optimization, offering implementations of EI, UCB, PI, and more advanced functions. |

| PyMOL / Rosetta | Software | For generating or analyzing antibody structural features that can be used as input descriptors for the GP model. |

| Developability Prediction Webserver (e.g., Absolut!) | Software/Service | Provides in silico developability scores (solubility, stability) to act as the objective function or initial data source. |

| Phage Display or Yeast Library | Wet-lab Reagent | Physical variant library for experimental validation of the top sequences proposed by the BO algorithm. |

| Surface Plasmon Resonance (SPR) Chip | Laboratory Equipment | Used to measure binding affinity (KD) of selected antibody variants, a key developability and efficacy metric. |

| Differential Scanning Calorimetry (DSC) | Laboratory Equipment | Measures thermal stability (Tm) of antibody candidates, a critical developability objective. |

| CHO Cell Line Transfection Kit | Wet-lab Reagent | For expressing recombinant antibody variants for downstream in vitro characterization. |

The choice of acquisition function is context-dependent. For most antibody developability tasks, Expected Improvement (EI) serves as a robust default due to its balance between exploration and exploitation. Upper Confidence Bound (UCB) is preferable when early, rapid progress is critical and the parameter κ can be scheduled to reduce exploration over time. Probability of Improvement (PI) is best reserved for low-noise, low-dimensional landscapes where convergence to a very local optimum is acceptable. Ultimately, integrating domain knowledge—such as expected noise levels and the cost of experimentation—into the selection and parameterization of the acquisition function is paramount for accelerating the discovery of developable therapeutic antibodies.

Within the framework of Bayesian Optimization (BO) for antibody developability scoring, the representation of antibody variants is the critical first step. Effective encoding transforms complex biological molecules into numerical feature vectors that a BO algorithm can process. This guide details current methodologies for encoding antibody sequence and structural information, serving as the foundational input for predictive models in developability optimization pipelines.

Sequence-Based Encodings

Sequence encodings are derived from the amino acid sequence of the antibody's variable regions (VH and VL). They are computationally efficient and do not require resolved 3D structures.

One-Hot Encoding (OHE)

The most basic encoding, representing each amino acid in a sequence as a binary vector of length 20.

Methodology:

- Define a canonical ordering of the 20 standard amino acids.

- For each position in the padded/fixed-length sequence, create a 20-dimensional vector where the index corresponding to the amino acid is set to 1, and all others are 0.

- Concatenate vectors for all positions to form a final feature vector of length (sequence_length * 20).
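A minimal sketch of this encoding is shown below. The alphabetical amino acid ordering, the `-` padding character, and the choice to encode padding or non-standard residues as all-zero rows are assumptions made for the example.

```python
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"   # assumed canonical ordering


def one_hot_encode(seq, length, pad="-"):
    """Flattened one-hot vector of size length * 20 for a padded/truncated sequence."""
    padded = seq[:length].ljust(length, pad)
    encoded = np.zeros((length, len(AMINO_ACIDS)))
    for pos, aa in enumerate(padded):
        idx = AMINO_ACIDS.find(aa)
        if idx >= 0:                   # padding and unknown residues stay all-zero
            encoded[pos, idx] = 1.0
    return encoded.reshape(-1)


features = one_hot_encode("ACD", length=5)
```

For the toy input `"ACD"` padded to length 5, the vector has 100 entries with exactly three ones, at indices 0, 21 and 42.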

Learned Embeddings (e.g., from Protein Language Models)

Deep learning models like ESM-2 and AntiBERTy are pre-trained on millions of protein sequences and learn context-aware, continuous vector representations.

Methodology for ESM-2:

- Input the full VH and VL sequence (e.g., combined as a single string with a separator token) to the pre-trained model.

- Extract the hidden state representations from the final layer for each token.

- Apply a pooling operation (e.g., mean pooling) across the sequence dimension to obtain a fixed-size embedding per chain or for the paired variable region. The ESM-2 650M parameter model produces an embedding of dimension 1280.

Physicochemical Property Encodings

Amino acids are represented by quantitative descriptors of their intrinsic properties.

Methodology:

- Select a set of relevant physicochemical scales (e.g., hydrophobicity, volume, charge, isoelectric point).

- For each amino acid in the sequence, replace it with its normalized value on each scale.

- The feature vector is the concatenation of these per-position property values. Common descriptors include AAIndex and Z-scales.
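As an illustration of the steps above, the sketch below encodes each residue with two scales: the Kyte-Doolittle hydropathy index, min-max normalized to [0, 1], and a simplified side-chain charge at neutral pH. The choice of these two scales and the treatment of histidine as neutral are assumptions for the example, not a recommended descriptor set.

```python
import numpy as np

# Kyte-Doolittle hydropathy index (range -4.5 to 4.5)
KD_HYDROPATHY = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
    "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
    "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}
# Simplified side-chain charge near pH 7 (histidine treated as neutral)
SIDE_CHAIN_CHARGE = {"K": 1.0, "R": 1.0, "D": -1.0, "E": -1.0}


def encode_physchem(seq):
    """Concatenate [normalized hydropathy, charge] for every position."""
    features = []
    for aa in seq:
        hydropathy = (KD_HYDROPATHY[aa] + 4.5) / 9.0   # min-max scale to [0, 1]
        features.extend([hydropathy, SIDE_CHAIN_CHARGE.get(aa, 0.0)])
    return np.array(features)


vector = encode_physchem("EVQLVESGG")   # 9 residues x 2 scales = 18 features
```

Adding further scales (for example, Z-scales) only lengthens the per-position list; the concatenation logic stays the same.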

k-mer Composition & BLOSUM Matrices

- k-mer: Count the frequency of short, overlapping sequence fragments.

- BLOSUM Substitution: Encode each amino acid by its row from a BLOSUM substitution matrix (e.g., BLOSUM62), which captures evolutionary similarity.
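The k-mer representation can be sketched with the standard library and NumPy. The function name and the decision to ignore fragments containing non-standard residues are assumptions for illustration; the BLOSUM encoding is not shown because it requires loading a substitution matrix.

```python
from itertools import product

import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def kmer_counts(seq, k=3):
    """Count vector over all 20**k possible k-mers (overlapping windows)."""
    index = {"".join(p): i for i, p in enumerate(product(AMINO_ACIDS, repeat=k))}
    counts = np.zeros(len(index))
    for start in range(len(seq) - k + 1):
        j = index.get(seq[start:start + k])
        if j is not None:              # skip fragments with non-standard residues
            counts[j] += 1
    return counts


dimer_counts = kmer_counts("ACDAC", k=2)   # fragments: AC, CD, DA, AC
```

Dividing the counts by their sum turns them into frequencies, which makes sequences of different lengths comparable.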

Table 1: Comparison of Sequence Encodings for BO Input

| Encoding Type | Dimensionality (Example) | Pros | Cons | Suitability for BO |

|---|---|---|---|---|

| One-Hot | High (e.g., 500*20=10,000) | Simple, interpretable, no data loss. | Very high-dim, sparse, ignores similarity. | Poor; high dimensionality challenges GP models. |

| ESM-2 Embedding | Fixed (e.g., 1280 or 2560) | Context-aware, information-dense, state-of-the-art performance. | Requires inference pass; black-box nature. | Excellent; dense, lower-dim, captures complex patterns. |

| Physicochemical | Moderate (e.g., 500*5=2500) | Biologically interpretable, continuous. | Requires manual scale selection; incomplete. | Moderate; may require dimensionality reduction. |

| k-mer Frequency | Fixed (e.g., 8000 for 3-mer) | Captures local motifs, fixed size. | Loses sequential order for long-range interactions. | Good; fixed size, but can be high-dim. |

Structure-Based Encodings

These encodings require a 3D atomic model of the antibody Fv region, typically obtained from homology modeling or AlphaFold2.

Internal Coordinates (Dihedrals)

Encode the protein backbone conformation using the dihedral angles Phi (φ) and Psi (ψ) for each residue.

Methodology:

- From the PDB file or structural model, calculate φ and ψ angles for all residues in the Fv region using tools like MDTraj or Biopython.

- Represent each angle as its sine and cosine value to avoid discontinuity at ±180°.

- The feature vector is the concatenation of [sin(φ), cos(φ), sin(ψ), cos(ψ)] for each residue.
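The sine/cosine step is the part that is easy to get wrong, so here is a small sketch of it. It assumes the φ and ψ angles have already been computed (for example with MDTraj or Biopython) and are supplied in radians; the angle values below are made up.

```python
import numpy as np


def encode_dihedrals(phi, psi):
    """Flattened [sin φ, cos φ, sin ψ, cos ψ] per residue; angles in radians."""
    phi = np.asarray(phi, dtype=float)
    psi = np.asarray(psi, dtype=float)
    per_residue = np.stack([np.sin(phi), np.cos(phi), np.sin(psi), np.cos(psi)], axis=1)
    return per_residue.reshape(-1)


# Two conformations on either side of the ±180° boundary
just_below = encode_dihedrals(np.deg2rad([179.0]), np.deg2rad([179.0]))
just_above = encode_dihedrals(np.deg2rad([-179.0]), np.deg2rad([-179.0]))
gap = np.linalg.norm(just_below - just_above)
```

The raw angles differ by 358°, yet `gap` is small, because 179° and -179° are only 2° apart on the circle. That is the discontinuity the sine/cosine representation removes.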

3D Zernike Descriptors (3DZD)

A rotation-invariant mathematical descriptor for 3D shape, often applied to molecular surfaces or electrostatic fields.

Methodology:

- Calculate the molecular surface or electrostatic potential grid for the antibody Fv region.

- Project the 3D function onto a basis of Zernike polynomials up to a specific order (e.g., n=20).

- Use the calculated Zernike moments as the feature vector. For an even maximum order n, the number of descriptors is (n/2 + 1)² (e.g., 121 for n=20).

Graph-Based Encodings (Protein Graph Networks)

Represent the antibody as a graph where nodes are residues (or atoms) and edges represent spatial or topological connections.

Methodology:

- Node Features: Encode residue type (e.g., one-hot), physicochemical properties, or solvent accessibility.

- Edge Construction: Connect nodes based on spatial proximity (e.g., Cα atoms within 10Å) or peptide bonds.

- Edge Features: Include distance, dihedral angles, or vector direction. This structured data is fed directly into Graph Neural Networks (GNNs) prior to or as part of a BO loop.
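The edge-construction step based on spatial proximity can be sketched in NumPy. The `(2, n_edges)` edge-index layout matches what graph libraries such as PyTorch Geometric commonly expect, but that layout, the function name, and the toy coordinates are assumptions for this example.

```python
import numpy as np


def contact_edges(ca_coords, cutoff=10.0):
    """Directed edge index (2, n_edges) and distances for Cα pairs within the cutoff."""
    ca_coords = np.asarray(ca_coords, dtype=float)
    dists = np.linalg.norm(ca_coords[:, None, :] - ca_coords[None, :, :], axis=-1)
    no_self_loops = ~np.eye(len(ca_coords), dtype=bool)
    src, dst = np.where((dists < cutoff) & no_self_loops)
    return np.stack([src, dst]), dists[src, dst]


# Toy Cα trace: three residues spaced 3.8 Å apart plus one distant residue
coords = np.array([[0.0, 0.0, 0.0], [3.8, 0.0, 0.0], [7.6, 0.0, 0.0], [30.0, 0.0, 0.0]])
edge_index, edge_dist = contact_edges(coords)
```

Each contact appears twice (once per direction), and the distant fourth residue has no edges. The returned distances can be used directly as edge features.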

Spatial Atom & Residue Counts (Voxelization)

Discretize the 3D space around the antibody into voxels and count atom/residue occurrences or properties.

Methodology:

- Define a 3D bounding box encompassing the Fv region.

- Divide the box into a 3D grid (e.g., 20x20x20 voxels).

- For each voxel, compute a feature such as atomic density, partial charge, or hydrophobic atom count.

- Flatten the 3D grid to create a feature vector.
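The four steps above map onto a single call to `numpy.histogramdd`. In this sketch the atom coordinates and per-atom weights are random placeholders, and the small padding added to the bounding box is an implementation choice to keep boundary atoms inside the grid.

```python
import numpy as np


def voxelize(coords, grid=20, weights=None):
    """Flattened grid x grid x grid histogram of atoms (optionally weighted)."""
    coords = np.asarray(coords, dtype=float)
    lower = coords.min(axis=0) - 1e-6          # bounding box around the Fv region
    upper = coords.max(axis=0) + 1e-6
    hist, _ = np.histogramdd(coords, bins=grid, range=list(zip(lower, upper)), weights=weights)
    return hist.reshape(-1)


rng = np.random.default_rng(0)
atom_coords = rng.uniform(0.0, 40.0, size=(1500, 3))   # placeholder atom positions (Å)
partial_charges = rng.normal(scale=0.3, size=1500)     # placeholder per-atom property

density = voxelize(atom_coords)                              # atom count per voxel
charge_grid = voxelize(atom_coords, weights=partial_charges) # summed charge per voxel
```

Because the grid is tied to the coordinate frame, structures should be aligned to a common reference before voxelization, in line with the rotation sensitivity noted in Table 2.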

Table 2: Comparison of Structure-Based Encodings for BO Input

| Encoding Type | Dimensionality (Example) | Pros | Cons | Requirement |

|---|---|---|---|---|

| Dihedral Angles | Moderate (e.g., 264*4=1056) | Direct conformational description, continuous. | Requires accurate backbone modeling; sensitive to missing residues. | 3D Model |

| 3D Zernike | Low (e.g., 121 for n=20) | Rotation-invariant, compact, describes global shape. | Loses local, high-resolution details. | 3D Model + Surface/Field Calc. |

| Graph Network | Variable (Node/Edge Features) | Captures relational structure, powerful for GNNs. | Not a fixed vector (requires GNN); complex pipeline. | 3D Model + Graph Construction |

| Voxel Grid | High (e.g., 20^3=8000) | Captures 3D spatial distribution, CNN-compatible. | High-dim, rotation-sensitive, grid artifacts. | 3D Model + Voxelization |

Integrated and Developability-Specific Features

Beyond raw sequence/structure, features directly correlated with developability profiles are crucial for BO objectives.

Common Feature Set Includes:

- Net Charge & Dipole Moment: Calculated from sequence or structure at a given pH.

- Hydrophobic Surface Area (HSA): Calculated using tools like PyMOL or Rosetta.

- Patch Analysis: Identification of hydrophobic or charged patches on the molecular surface.

- Instability Index & B-Factor Profiles: Sequence-based estimates of in vitro stability and structure-based estimates of local flexibility.

- Structural Deviations: Root-mean-square deviation (RMSD) from a germline or canonical structure.

Experimental Protocol for Feature Extraction (Example - Hydrophobic Patch Analysis):

- Input: PDB file of antibody Fv model.

- Surface Calculation: Generate the solvent-accessible surface (SAS) using the MSMS algorithm (probe radius 1.4Å).

- Residue Labeling: Classify surface residues as hydrophobic (A, V, I, L, M, F, Y, W) based on sidechain atoms exposed to solvent.

- Clustering: Cluster hydrophobic residues whose SAS atoms are within 5Å of each other.

- Quantification: For each cluster, sum the SAS area of constituent atoms. The largest patch area and total patch area are common metrics.
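The clustering and quantification steps can be sketched as single-linkage clustering over a distance cutoff. As a simplification, each hydrophobic surface residue is represented here by one hypothetical coordinate and one precomputed SAS area (the protocol above works at the atom level and obtains areas from MSMS); the function name and the toy values are illustrative.

```python
import numpy as np


def hydrophobic_patches(coords, areas, cutoff=5.0):
    """Single-linkage clusters of residues within the cutoff, with summed SAS area."""
    coords = np.asarray(coords, dtype=float)
    n = len(coords)
    dists = np.linalg.norm(coords[:, None, :] - coords[None, :, :], axis=-1)
    adjacent = dists <= cutoff
    labels = -np.ones(n, dtype=int)
    current = 0
    for start in range(n):
        if labels[start] >= 0:
            continue
        labels[start] = current
        stack = [start]
        while stack:                               # depth-first flood fill of one patch
            node = stack.pop()
            for neighbor in np.flatnonzero(adjacent[node] & (labels < 0)):
                labels[neighbor] = current
                stack.append(neighbor)
        current += 1
    patch_areas = np.bincount(labels, weights=areas)
    return labels, patch_areas


# Toy input: three residues chained 4 Å apart and one isolated residue
coords = [[0.0, 0.0, 0.0], [4.0, 0.0, 0.0], [8.0, 0.0, 0.0], [20.0, 0.0, 0.0]]
areas = np.array([30.0, 40.0, 50.0, 25.0])         # hypothetical SAS areas (Å²)
labels, patch_areas = hydrophobic_patches(coords, areas)
largest_patch, total_patch_area = patch_areas.max(), patch_areas.sum()
```

The first and third residues are 8 Å apart but end up in the same patch because both are within 5 Å of the second, which is the expected behavior of single-linkage clustering.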

Visualization of Encoding Workflows for BO Pipeline

Title: Antibody Encoding Pathways for Bayesian Optimization

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Tools for Antibody Representation and Encoding

| Item / Solution | Function / Description | Example Tools / Sources |

|---|---|---|

| Antibody Sequence Database | Source of antibody variable region sequences for training or context. | OAS, SAbDab, NCBI IgBlast |

| Structure Modeling Suite | Generate 3D models from sequence when experimental structures are unavailable. | AlphaFold2, IgFold, RosettaAntibody, MODELLER |

| Molecular Visualization & Analysis | Visualize structures, calculate surfaces, and measure distances/angles. | PyMOL, ChimeraX, VMD |

| Protein Language Model | Generate state-of-the-art contextual sequence embeddings. | ESM-2 (Hugging Face), AntiBERTy, ProtT5 |

| Bioinformatics Toolkit | Programmatic sequence manipulation, alignment, and basic feature calculation. | Biopython, ANARCI (for CDR numbering) |

| Geometric Descriptor Library | Compute rotation-invariant 3D shape descriptors from structures. | PyZernike, MDTraj (for dihedrals) |

| Graph Representation Library | Construct protein graphs from PDB files for GNN input. | Pytorch Geometric (PyG), DGL, BioPandas |

| Feature Integration Platform | Environment to concatenate, normalize, and manage diverse feature sets for BO. | Scikit-learn, Pandas, Jupyter Notebooks |

This whitepaper explores the integration of Bayesian Optimization (BO) with Deep Learning (DL) to construct hybrid models for the enhanced prediction of antibody developability scores. Framed within a broader thesis on BO for antibody optimization, this guide provides a technical framework for researchers aiming to accelerate therapeutic antibody design by leveraging synergies between probabilistic inference and deep neural networks.

Antibody developability encompasses a set of biophysical and biochemical properties that determine the likelihood of a candidate therapeutic antibody succeeding through development and manufacturing. Key metrics include solubility, viscosity, aggregation propensity, and stability. Predicting these scores early in discovery is critical for de-risking pipelines.

Traditional methods rely on high-throughput experimental screening, which is resource-intensive. In silico predictions offer a solution, but model accuracy, data efficiency, and uncertainty quantification remain significant hurdles. This is where hybrid models combining the adaptive sampling of BO with the representational power of DL present a transformative opportunity.

Theoretical Foundations: BO and DL Synergy

Bayesian Optimization (BO) is a sequential design strategy for global optimization of black-box functions that are expensive to evaluate. It consists of two core components:

- A probabilistic surrogate model (typically a Gaussian Process, GP) that approximates the unknown objective function.

- An acquisition function that guides the next query point by balancing exploration and exploitation.

Deep Learning (DL), particularly deep neural networks (DNNs), excels at learning complex, high-dimensional patterns from large datasets but often lacks inherent uncertainty estimates and can be data-hungry.

The Hybrid Approach uses a DNN as the surrogate model within the BO loop, often enhanced with Bayesian neural networks (BNNs) or Monte Carlo dropout to provide uncertainty estimates. Alternatively, BO can be used to optimize the hyperparameters of a DL model tasked with predicting developability scores, creating a powerful bidirectional relationship.

Core Architectures of Hybrid BO-DL Models

Deep Networks as Surrogates in BO

Replacing the GP with a deep network (e.g., a BNN) allows the surrogate to model more complex relationships in high-dimensional antibody sequence space (e.g., from next-generation sequencing data).

BO for DL Hyperparameter Optimization

DL model performance is highly sensitive to hyperparameters (learning rate, network depth, etc.). BO provides an efficient framework for tuning these, ensuring the predictor itself is optimally configured.

Joint Latent Space Optimization

A shared latent representation of antibody sequences is learned via a variational autoencoder (VAE). BO is then performed directly in this lower-dimensional, informative latent space to propose sequences with optimal predicted developability.

Experimental Protocol for Hybrid Model Development

The following is a detailed methodology for building a BO-DL hybrid for antibody aggregation score prediction.

Step 1: Data Curation & Featurization

- Source: Public datasets (e.g., CoV-AbDab, Thera-SAbDab) and proprietary biophysical screens.

- Input Representation: Antibody variable region sequences are encoded using one of the following:

  - One-hot encoding

  - Physicochemical property embeddings (e.g., AAindex)

  - Learned embeddings from protein language models (e.g., ESM-2)

- Output/Target: Experimental aggregation scores (e.g., measured by SEC-HPLC or dye-binding assays) normalized to a 0-1 scale.
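
The simplest of the encodings listed above, together with the 0-1 target scaling, can be sketched as follows. The sequence fragment and raw aggregation values are hypothetical, and min-max scaling is assumed as the normalization method since the text does not specify one.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
AA_INDEX = {aa: i for i, aa in enumerate(AMINO_ACIDS)}

def one_hot_encode(sequence):
    """Return one 20-element row per residue, with a single 1 per row."""
    encoded = []
    for residue in sequence:
        row = [0] * len(AMINO_ACIDS)
        row[AA_INDEX[residue]] = 1  # raises KeyError for non-standard residues
        encoded.append(row)
    return encoded

def min_max_normalize(scores):
    """Rescale scores linearly so the minimum maps to 0 and the maximum to 1."""
    lo, hi = min(scores), max(scores)
    if hi == lo:
        return [0.0 for _ in scores]
    return [(s - lo) / (hi - lo) for s in scores]

cdr_fragment = "ARDYW"             # hypothetical sequence fragment
features = one_hot_encode(cdr_fragment)

raw_aggregation = [1.2, 4.8, 3.0]  # hypothetical % aggregate values
targets = min_max_normalize(raw_aggregation)
print(len(features), len(features[0]), targets)
```

In practice the scaling constants should be computed on the training split only and reused for validation and test data.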

Step 2: Model Architecture Design

- Primary Predictor: A convolutional neural network (CNN) or transformer operating on embedded sequences.

- Bayesian Layer: Apply Monte Carlo dropout during both training and inference to approximate Bayesian inference, yielding a predictive mean (μ) and variance (σ²) for each prediction.

- BO Loop: The BNN serves as the surrogate. An acquisition function (Expected Improvement) is maximized to propose the next sequence for in silico evaluation.
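
Given a predictive mean and standard deviation from the surrogate, Expected Improvement has a closed form under a Gaussian predictive distribution. The sketch below assumes the aggregation score is being minimized (lower is better); the `xi` margin and the example numbers are hypothetical.

```python
import math

def normal_pdf(z):
    """Standard normal density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def normal_cdf(z):
    """Standard normal cumulative distribution, via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mu, sigma, best_observed, xi=0.0):
    """Closed-form EI for minimization under a Gaussian prediction."""
    improvement = best_observed - mu - xi
    if sigma <= 0.0:
        return max(improvement, 0.0)  # no uncertainty: improvement is deterministic
    z = improvement / sigma
    return improvement * normal_cdf(z) + sigma * normal_pdf(z)

best_so_far = 0.30  # hypothetical lowest aggregation score observed so far
ei_confident = expected_improvement(mu=0.28, sigma=0.01, best_observed=best_so_far)
ei_uncertain = expected_improvement(mu=0.35, sigma=0.10, best_observed=best_so_far)
print(ei_confident, ei_uncertain)
```

Note that the second candidate has a worse predicted mean than the incumbent yet still receives a positive EI, because its larger σ leaves a real chance of improvement.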

Step 3: Training & Active Learning Cycle

- Train the initial BNN on a seed dataset (n~1000-5000).

- Use the trained BNN surrogate and acquisition function to select a batch of candidate sequences (n=50-100) with high potential or high uncertainty.

- [In a real workflow: Send candidates for experimental testing.]

- [In a simulation: Use a held-out high-fidelity dataset or a high-accuracy simulator (e.g., Aggrescan3D) to get "ground truth" scores for the proposed candidates.]

- Augment the training data with these new (sequence, score) pairs.

- Retrain/update the BNN surrogate.

- Repeat from the candidate-selection step (the second bullet above) until convergence or resource exhaustion.
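
The cycle above can be sketched end to end in a simulation setting. To stay self-contained, this example swaps the BNN for a toy nearest-neighbour surrogate (distance to the nearest labeled point stands in for uncertainty) and uses a lower confidence bound rather than Expected Improvement. The oracle function, the one-dimensional candidate pool, and all sizes are hypothetical.

```python
def oracle(x):
    """Hypothetical 'ground truth' aggregation score (lower is better)."""
    return (x - 0.7) ** 2

def surrogate(x, labeled):
    """Toy stand-in for the BNN: nearest labeled value, distance as uncertainty."""
    nearest = min(labeled, key=lambda seen: abs(seen - x))
    return labeled[nearest], abs(nearest - x)

def select_batch(pool, labeled, batch_size, kappa=1.0):
    """Pick the unlabeled candidates with the lowest lower confidence bound."""
    def lcb(x):
        mean, uncertainty = surrogate(x, labeled)
        return mean - kappa * uncertainty
    unlabeled = [x for x in pool if x not in labeled]
    return sorted(unlabeled, key=lcb)[:batch_size]

pool = [i / 100 for i in range(101)]               # candidate "sequences"
labeled = {x: oracle(x) for x in (0.0, 0.5, 1.0)}  # seed dataset

for round_index in range(5):
    batch = select_batch(pool, labeled, batch_size=3)
    for x in batch:
        labeled[x] = oracle(x)  # simulated experimental measurement
    # A real surrogate would be retrained here; this toy one needs no fitting.

best_x = min(labeled, key=labeled.get)
best_score = labeled[best_x]
print(len(labeled), best_x, best_score)
```

The structure (select, measure, augment, refit) carries over directly when the surrogate and acquisition function are replaced with the MC-dropout network and Expected Improvement.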

Step 4: Validation

- Validate final model predictions on a completely held-out test set.

- Compare against baseline models (e.g., Random Forest, standard GP-BO) using metrics: Mean Absolute Error (MAE), Root Mean Square Error (RMSE), and area under the curve (AUC) for classifying high-risk candidates.
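
For reference, the three metrics named above can be computed without external libraries as sketched below. The true and predicted scores are hypothetical, as is the 0.35 cutoff used to label a candidate "high-risk" for the AUC calculation.

```python
import math

def mean_absolute_error(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def root_mean_square_error(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def roc_auc(labels, scores):
    """AUC as the probability that a random positive outranks a random negative."""
    positives = [s for label, s in zip(labels, scores) if label == 1]
    negatives = [s for label, s in zip(labels, scores) if label == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0  # ties count as half a win
        for p in positives
        for n in negatives
    )
    return wins / (len(positives) * len(negatives))

y_true = [0.10, 0.40, 0.80, 0.30]  # hypothetical measured scores
y_pred = [0.20, 0.35, 0.70, 0.50]  # hypothetical model predictions
high_risk = [1 if score >= 0.35 else 0 for score in y_true]

mae = mean_absolute_error(y_true, y_pred)
rmse = root_mean_square_error(y_true, y_pred)
auc = roc_auc(high_risk, y_pred)
print(mae, rmse, auc)
```

The pairwise AUC is quadratic in the number of examples, which is acceptable for small held-out sets; a rank-based implementation is preferable at scale.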

Table 1: Performance Comparison of Prediction Models on Antibody Aggregation Propensity

| Model Architecture | MAE (↓) | RMSE (↓) | AUC (↑) | Data Efficiency (Samples to 0.8 AUC) |

|---|---|---|---|---|

| Linear Regression | 0.152 | 0.198 | 0.72 | >10,000 |

| Random Forest | 0.098 | 0.132 | 0.81 | ~5,000 |

| Standard CNN | 0.085 | 0.121 | 0.85 | ~8,000 |

| GP-BO (Baseline) | 0.070 | 0.105 | 0.88 | ~3,000 |

| Hybrid BNN-BO (Proposed) | 0.062 | 0.091 | 0.93 | ~1,500 |

Table 2: Key Developability Parameters Predictable by Hybrid Models

| Developability Attribute | Common Experimental Assay | Typical Prediction Target | Hybrid Model Impact |

|---|---|---|---|

| Aggregation | SEC-HPLC, DLS | % aggregation, kD | High - Primary focus of early modeling |

| Viscosity | Micro-viscometer | Concentration at 20 cP | Medium - Requires complex features |

| Thermal Stability | DSF, DSC | Tm1, Tm2 | High - Well-predicted from sequence |

| Polyreactivity | HEp-2 ELISA, PSR | Signal/Background Ratio | Medium-High |

Visualization of Workflows and Architectures

Title: Active Learning Loop for Hybrid BNN-BO Model

Title: Bayesian Neural Network Surrogate Architecture

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Developability Prediction & Validation Workflows

| Item / Reagent | Function in Workflow | Key Considerations |

|---|---|---|

| HEK293 or CHO Expression System | Production of antibody variants for experimental validation. | Transient vs. stable yield; glycosylation patterns. |

| Protein A/G Chromatography Resin | Purification of expressed antibodies from cell culture supernatant. | Binding capacity and elution pH affect stability. |

| Size-Exclusion Chromatography (SEC) Column (e.g., S200, TSKgel) | Gold-standard for quantifying soluble aggregate levels (% aggregation). | Resolution for monomer/aggregate separation is critical. |

| Differential Scanning Calorimetry (DSC) Instrument | Measures thermal unfolding temperatures (Tm), indicating structural stability. | Requires high protein concentration and purity. |

| Microfluidic Viscometer | Measures viscosity at high concentration, a key developability liability. | Sample consumption is minimal compared to traditional methods. |

| PEG Precipitation Assay Kit | High-throughput surrogate for viscosity measurement. | Correlates with, but does not replace, direct viscometry. |

| Aggrescan3D or Spatial Aggregation Propensity (SAP) Software | In silico identification of aggregation "hot spots". | Used for in silico validation of model predictions. |

This technical guide details the multi-stage pipeline for therapeutic antibody discovery, framed within the context of applying Bayesian optimization to navigate and predict antibody developability scores—a core component of a broader research thesis on computational optimization in biologics development.

Library Design

The process begins with constructing a diverse and high-quality antibody library to maximize the probability of identifying candidates with desired affinity, specificity, and developability.

Key Considerations:

- Source: Libraries can be naïve, synthetic, or derived from immunized animals/humans.

- Diversity: Focus on both sequence diversity (CDR regions) and structural diversity.

- Pre-filtering: In silico assessment of sequences for potential red flags (e.g., aggregation-prone motifs, immunogenic sequences).

Experimental Protocol: Phage Display Library Construction

- Gene Synthesis & Cloning: Oligonucleotides encoding diversified CDRs are synthesized and assembled into scFv or Fab gene fragments. These are cloned into a phage display vector (e.g., pIII or pVIII fusion).

- Electroporation: The ligated vector is transformed into E. coli (e.g., TG1 or SS320 cells) via electroporation to create the primary library.

- Library Amplification: Transformed bacteria are grown and then rescued with helper phage (e.g., M13KO7) to produce phage particles displaying the antibody fragments on their surface.

- Titration: Colony-forming units (cfu) and phage titer are measured to determine library size and diversity.

Library Design Quantitative Metrics

| Parameter | Typical Target | Measurement Method |

|---|---|---|

| Theoretical Diversity | >10^9 unique clones | Calculation from transformation efficiency |

| Actual Diversity | >10^9 cfu | Colony count on selective plates |

| Phage Titer | 10^12 - 10^13 cfu/mL | Plaque assay or serial dilution infection |

| Insert Rate | >90% | PCR screening of random colonies |
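
As a worked example of the titration step, the arithmetic for estimating library size from a plate count can be sketched as follows. All numbers (colony count, dilution, volumes, and PCR screening results) are hypothetical.

```python
def total_cfu(colonies, dilution_factor, plated_volume_ml, culture_volume_ml):
    """Scale a plate count back to the whole transformation culture."""
    cfu_per_ml = colonies * dilution_factor / plated_volume_ml
    return cfu_per_ml * culture_volume_ml

def functional_library_size(total, colonies_with_insert, colonies_screened):
    """Discount the total by the insert rate observed in PCR screening."""
    return total * colonies_with_insert / colonies_screened

# Hypothetical titration: 150 colonies from 0.1 mL of a 10^-6 dilution,
# taken from a 10 mL recovery culture.
library_cfu = total_cfu(
    colonies=150, dilution_factor=1e6, plated_volume_ml=0.1, culture_volume_ml=10.0
)

# Hypothetical PCR screen: 46 of 50 random colonies carry the insert (92%).
functional_cfu = functional_library_size(library_cfu, 46, 50)
print(f"{library_cfu:.2e} total cfu, {functional_cfu:.2e} with insert")
```

Here `dilution_factor` is the reciprocal of the dilution plated, so a 10^-6 dilution corresponds to a factor of 10^6.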

Diagram Title: Antibody Phage Display Library Construction Workflow

Selection (Panning)

The library undergoes iterative selection against the target antigen to enrich for specific binders.

Experimental Protocol: Solid-Phase Panning

- Coating: Immobilize purified antigen (1-10 µg/mL) in a well or on a column. Include a negative selection (e.g., BSA, off-target protein) step to deplete non-specific binders.

- Binding: Incubate the phage library with the immobilized antigen for 1-2 hours.

- Washing: Remove unbound/weakly bound phage with increasingly stringent washes (e.g., PBS with 0.1% Tween-20, then PBS).

- Elution: Recover bound phage using either acidic elution (0.1 M glycine-HCl, pH 2.2, followed by neutralization) or competitive elution with soluble antigen.

- Amplification: Infect log-phase E. coli with eluted phage, rescue with helper phage, and precipitate amplified phage for the next round. Typically, 3-4 rounds are performed.

Panning Enrichment Metrics

| Round | Input Phage (cfu) | Output/Eluted Phage (cfu) | Enrichment Ratio |

|---|---|---|---|

| 1 | 10^12 | 10^3 - 10^5 | Baseline |

| 2 | 10^12 | 10^5 - 10^7 | 10 - 1000x |

| 3 | 10^12 | 10^7 - 10^9 | 100 - 10^5x |
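
The enrichment ratio can be computed from input and output titers as sketched below. This assumes enrichment is expressed as the recovery (output/input) of each round relative to round 1, which is one common convention; the titers are hypothetical values within the ranges tabulated above.

```python
# (round number, input phage in cfu, eluted phage in cfu) - hypothetical titers
rounds = [
    (1, 1e12, 1e4),
    (2, 1e12, 1e6),
    (3, 1e12, 1e8),
]

def recovery(input_cfu, output_cfu):
    """Fraction of input phage recovered after washing and elution."""
    return output_cfu / input_cfu

baseline = recovery(rounds[0][1], rounds[0][2])
enrichment = {
    round_number: recovery(input_cfu, output_cfu) / baseline
    for round_number, input_cfu, output_cfu in rounds
}

for round_number, fold in enrichment.items():
    print(f"Round {round_number}: {fold:.0f}x relative to round 1")
```

Normalizing by input titer matters when inputs differ between rounds; with identical inputs the ratio reduces to the ratio of output titers.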

Screening & Characterization

Individual clones from enriched pools are screened for binding and functionality.

Experimental Protocol: High-Throughput Screening

- Clone Picking: Pick 96-384 single colonies from the final panning output into microtiter plates.

- Expression: Induce soluble antibody fragment (scFv/Fab) expression in E. coli, or express full-length IgG in HEK293 cells.

- Primary Screen: Use ELISA or surface plasmon resonance (SPR) to confirm antigen binding and assess crude specificity.

- Secondary Screen: Positive hits are sequenced, grouped by CDR homology, and characterized for affinity (K_D by SPR/BLI), kinetics (k_on, k_off), and epitope binning.
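
The affinity and kinetic terms in the secondary screen are related by K_D = k_off / k_on for a simple 1:1 binding model. A minimal sketch with hypothetical rate constants:

```python
def dissociation_constant(k_on, k_off):
    """K_D in M, from k_off in 1/s and k_on in 1/(M*s), assuming 1:1 binding."""
    if k_on <= 0:
        raise ValueError("k_on must be positive")
    return k_off / k_on

# Hypothetical rate constants from an SPR or BLI fit.
kd_molar = dissociation_constant(k_on=1e5, k_off=1e-4)
kd_nanomolar = kd_molar * 1e9
print(f"K_D = {kd_nanomolar:.2f} nM")
```

Two clones with the same K_D can differ substantially in k_off, which is why kinetics are reported alongside affinity.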

Lead Candidate Selection via Developability Assessment

This critical stage integrates high-throughput in vitro assays with in silico predictive models, forming the core application area for Bayesian optimization.

Thesis Context: Bayesian optimization can be employed to model the complex, multi-parameter space of developability scores. It iteratively selects candidates for experimental testing to efficiently find global optima (e.g., high stability, low viscosity) while minimizing costly experimental runs.

Key Developability Assays & Protocols:

1. Stability Assessment (Thermal Shift Assay)

- Protocol: Mix purified antibody with a fluorescent dye (e.g., SYPRO Orange). Heat sample from 25°C to 95°C at 1°C/min in a real-time PCR machine. Monitor fluorescence increase as protein unfolds.

- Data: Determine melting temperature (Tm). Higher Tm suggests greater thermal stability.

2. Self-Interaction & Viscosity (Affinity-Capture Self-Interaction Nanoparticle Spectroscopy, AC-SINS)

- Protocol: Coat gold nanoparticles with anti-human Fc antibody. Incubate with IgG samples. IgG-coated particles will self-interact, causing a spectral shift.

- Data: Larger wavelength shift (Δλ max) indicates stronger self-interaction, correlating with high viscosity risk.

3. Polydispersity & Aggregation (Size-Exclusion Chromatography, SEC)

- Protocol: Inject purified antibody onto an HPLC equipped with a size-exclusion column (e.g., TSKgel UP-SW3000). Run in an isocratic mobile phase (e.g., PBS).

- Data: Percentage of monomer (target >98%), high molecular weight (HMW) aggregates, and low molecular weight (LMW) fragments.

4. Chemical Stability (Forced Degradation)

- Protocol: Incubate antibody at stressed conditions (e.g., pH 3-10, 40°C for 2 weeks; freeze-thaw cycles; mechanical agitation). Analyze post-stress by SEC, CE-SDS, and binding ELISA.

- Data: % recovery of monomer and binding activity after stress.
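
For the thermal shift assay in item 1, Tm is commonly estimated as the temperature at which the fluorescence curve rises most steeply. The sketch below applies that first-derivative approach to a simulated melt curve; the sigmoid shape, its parameters, and the 0.5°C sampling interval are assumptions for illustration, and real curves usually need smoothing first.

```python
import math

def simulate_melt_curve(tm_true=68.0, slope=1.5, start=25.0, stop=95.0, step=0.5):
    """Generate a noise-free sigmoidal unfolding curve (hypothetical data)."""
    n_points = int(round((stop - start) / step)) + 1
    temps = [start + i * step for i in range(n_points)]
    fluorescence = [1.0 / (1.0 + math.exp(-(t - tm_true) / slope)) for t in temps]
    return temps, fluorescence

def estimate_tm(temps, fluorescence):
    """Return the temperature where the central-difference dF/dT is largest."""
    best_index, best_slope = None, float("-inf")
    for i in range(1, len(temps) - 1):
        slope = (fluorescence[i + 1] - fluorescence[i - 1]) / (temps[i + 1] - temps[i - 1])
        if slope > best_slope:
            best_index, best_slope = i, slope
    return temps[best_index]

temps, fluorescence = simulate_melt_curve()
tm_estimate = estimate_tm(temps, fluorescence)
print(f"Estimated Tm: {tm_estimate:.1f} C")
```

The resolution of this estimate is limited by the temperature step, so finer sampling or curve fitting is needed to resolve differences smaller than the step.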

Developability Scoring Matrix (Example)

| Assay | Property Measured | Ideal Profile | Risk Threshold |

|---|---|---|---|

| Thermal Shift | Conformational Stability | T_m > 65°C | T_m < 60°C |

| AC-SINS | Self-Interaction Propensity | Δλ max < 5 nm | Δλ max > 10 nm |

| SEC-HPLC | Aggregation & Fragmentation | Monomer > 98% | Monomer < 95% |

| CE-SDS | Purity & Integrity | Main peak > 90% | Fragments > 5% |

| SPR/BLI | Affinity & Kinetics | K_D = nM-pM range | k_off > 10^-3 s^-1 |
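
One way to apply such a matrix programmatically is sketched below. It covers only the three assays whose ideal and risk thresholds refer to the same quantity (thermal shift, AC-SINS, and SEC monomer), and treats values between the two thresholds as "intermediate", a label assumed here. The candidate measurements are hypothetical.

```python
# name -> (ideal threshold, risk threshold, higher_is_better), from the matrix above
CRITERIA = {
    "tm_celsius": (65.0, 60.0, True),
    "ac_sins_shift_nm": (5.0, 10.0, False),
    "sec_monomer_pct": (98.0, 95.0, True),
}

def classify(value, ideal, risk, higher_is_better):
    """Bin one measurement as 'ideal', 'risk', or 'intermediate'."""
    if not higher_is_better:
        # Flip signs so the same comparisons work for lower-is-better assays.
        value, ideal, risk = -value, -ideal, -risk
    if value > ideal:
        return "ideal"
    if value < risk:
        return "risk"
    return "intermediate"

def assess(measurements):
    """Classify every assay in CRITERIA for one candidate."""
    return {name: classify(measurements[name], *CRITERIA[name]) for name in CRITERIA}

candidate = {"tm_celsius": 67.2, "ac_sins_shift_nm": 7.5, "sec_monomer_pct": 94.1}
report = assess(candidate)
print(report)
```

Categorical flags like these are convenient for triage, while the underlying continuous measurements remain the more informative targets for a BO surrogate.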

Diagram Title: Bayesian Optimization Loop for Developability Screening

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent/Material | Function in Pipeline | Example Product/Catalog |

|---|---|---|

| Phage Display Vector | Cloning and display of antibody fragments on phage surface. | pComb3X (scFv/Fab), pHEN1 |

| Helper Phage | Provides viral proteins for phage particle assembly during rescue. | M13KO7, VCSM13 |

| Anti-M13 Antibody | Detection and quantification of phage in ELISA. | Anti-M13 HRP-conjugated |

| Protein A/G/L Beads | For purification or capture of IgG/Fab for screening/characterization. | MabSelect SuRe, CaptureSelect resins |

| SPR/BLI Biosensor Chips | Label-free kinetic analysis of antigen-antibody binding. | Series S CM5 chip (SPR), Protein A biosensor (BLI) |

| Fluorescent Dye (SYPRO Orange) | Detection of protein unfolding in thermal stability assays. | SYPRO Orange protein gel stain |

| SEC-HPLC Columns | High-resolution separation of monomer from aggregates and fragments. | TSKgel UP-SW3000, AdvanceBio SEC columns |

| Gold Nanoparticles | Core component for AC-SINS self-interaction assays. | 20-40 nm citrate-stabilized gold colloid |

| CHO or HEK293 Cells | Recombinant expression of full-length IgG for developability studies. | ExpiCHO-S, Expi293F systems |

Overcoming Challenges: Optimizing BO for Noisy, Multi-Objective Developability Landscapes

Within the critical pathway of antibody therapeutic development, assessing developability—a molecule's suitability for manufacturing, stability, and delivery—is a pivotal, multi-parametric challenge. High-throughput screening generates vast datasets, but their utility is often undermined by significant assay variability and noise. This guide, framed within a broader thesis on Bayesian optimization for antibody developability scoring, presents a systematic, technical approach to quantifying, modeling, and mitigating this noise to enable robust, data-driven candidate selection.