Bayesian Optimization for Antibody Stability-Viscosity Tradeoffs: A Next-Gen Strategy for Biotherapeutics Development

This article provides a comprehensive guide to implementing Bayesian optimization (BO) for navigating the critical stability-viscosity tradeoff in monoclonal antibody (mAb) therapeutic development.


Abstract

This article provides a comprehensive guide to implementing Bayesian optimization (BO) for navigating the critical stability-viscosity tradeoff in monoclonal antibody (mAb) therapeutic development. We begin by exploring the foundational biophysical principles and business-critical challenges of high-concentration formulation. We then detail the methodological framework of BO, from constructing sequence-function landscapes to designing adaptive experimental campaigns. Practical guidance is provided for troubleshooting common pitfalls and optimizing model performance. Finally, we validate the approach through comparative analysis with traditional methods like Design of Experiments (DoE) and High-Throughput Screening (HTS), showcasing real-world case studies and accelerated timelines. This guide is essential for researchers and drug development professionals seeking to rationally engineer antibodies with optimal developability profiles.

Understanding the Antibody Stability-Viscosity Dilemma: The Foundational Challenge in Biologics Development

Why the Stability-Viscosity Tradeoff is a Critical Bottleneck in mAb Development

Technical Support Center: Troubleshooting mAb Formulation & Developability

FAQs & Troubleshooting Guides

Q1: During high-concentration formulation, our lead mAb candidate shows a sudden, nonlinear increase in viscosity (>50 cP at 150 mg/mL). What are the primary causal factors and immediate investigative steps?

A: This is a classic manifestation of the stability-viscosity tradeoff. Primary factors include:

- Net attractive protein-protein interactions (PPIs): Driven by patchy hydrophobic or charged surfaces.

- Electrostatic self-association: Especially at low ionic strength.

- Flexible CDR loops or domains: Leading to transient, viscosity-enhancing interactions.

Immediate Protocol: Dynamic Viscosity & Interaction Parameter Analysis

- Prepare samples: Dialyze mAb into target formulation buffer (e.g., 20 mM Histidine-HCl, pH 6.0). Concentrate to 50, 100, and 150 mg/mL using a 30 kDa MWCO centrifugal concentrator.

- Measure viscosity: Use a micro-viscometer (e.g., ViscoStar) with a 100 µL sample at 25°C. Perform in triplicate.

- Determine the interaction parameter (kD): Using Dynamic Light Scattering (DLS). Run samples at 1, 10, and 50 mg/mL on a Zetasizer. Analyze the diffusion interaction parameter from the concentration dependence of the mutual diffusion coefficient (Dt).

- Interpret data: A strongly negative kD (< -8 mL/g) and a sharp, exponential rise in viscosity confirm net attractive PPIs as the root cause.

Q2: Our stability-optimized variant (from charge engineering) now shows unacceptable viscosity. How do we diagnose if the issue is charge-mediated versus hydrophobic clustering?

A: Perform a controlled salt perturbation assay.

Experimental Protocol: Salt Perturbation Assay for PPI Typing

- Prepare buffer matrix: Create a series of 20 mM Histidine buffers, pH 6.0, with NaCl concentrations of 0, 50, 150, and 300 mM.

- Formulate mAb: Dialyze both the original and charge-engineered variant into each buffer. Concentrate to 100 mg/mL.

- Measure: Record viscosity (as above) and kD via DLS for each condition.

- Analyze:

- If added salt reduces viscosity and makes kD less negative, interactions are primarily electrostatic.

- If salt has minimal effect or increases viscosity, the dominant driver is likely hydrophobic or short-range attraction.
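The decision rule in the analysis step can be sketched in a few lines of Python. The function name `classify_ppi`, the 10% relative-change cutoff, and the example values are illustrative assumptions, not part of the protocol.

```python
def classify_ppi(viscosity_by_salt, kd_by_salt, rel_change=0.10):
    """Classify the dominant PPI type from a salt perturbation series.

    viscosity_by_salt, kd_by_salt: dicts mapping NaCl (mM) -> measured value.
    rel_change: hypothetical cutoff for a meaningful viscosity reduction.
    """
    low, high = min(viscosity_by_salt), max(viscosity_by_salt)
    visc_low, visc_high = viscosity_by_salt[low], viscosity_by_salt[high]
    kd_low, kd_high = kd_by_salt[low], kd_by_salt[high]

    visc_drop = (visc_low - visc_high) / visc_low  # > 0: salt lowered viscosity
    kd_less_negative = kd_high > kd_low            # salt weakened the attraction

    if visc_drop > rel_change and kd_less_negative:
        return "electrostatic"
    return "hydrophobic or short-range"


# Made-up example values (cP and mL/g), for illustration only.
viscosity = {0: 42.0, 50: 30.0, 150: 18.0, 300: 15.0}
kd = {0: -14.0, 50: -10.0, 150: -6.0, 300: -5.0}
result = classify_ppi(viscosity, kd)
```

Comparing only the lowest and highest salt conditions is a simplification; in practice the full series should be inspected for non-monotonic behavior.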

Q3: What are the critical in-silico and in-vitro assays to screen for viscosity issues early in candidate selection?

A: Implement a multi-parameter developability screen.

Table 1: Key Developability Assays for Stability-Viscosity Assessment

| Assay | Parameter Measured | Predictive Value for Viscosity | Target Range (Ideal) |

|---|---|---|---|

| Static Light Scattering (SLS) | Second Virial Coefficient (B22) | High: Measures overall PPI. | B22 > 0 (positive) |

| Dynamic Light Scattering (DLS) | Diffusion Interaction Parameter (kD) | High: Measures hydrodynamic interactions. | kD > -8 mL/g |

| Affinity-Capture Self-Interaction Nanoparticle Spectroscopy (AC-SINS) | Δλ max (plasmon wavelength shift) | Medium-High: Measures self-association at low conc. | Δλ max < 5 nm |

| Size-Exclusion Chromatography (SEC) | % High Molecular Weight (HMW) species | Medium: Measures irreversible aggregates. | HMW < 2% |

| Differential Scanning Calorimetry (DSC) | Tm of Fab and Fc domains | Medium-Low: Reflects conformational stability, not PPIs directly. | Tm1 > 65°C |
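As a rough illustration, the target ranges in Table 1 can be applied as a simple pass/fail screen. The dictionary keys and the function name `screen_candidate` are hypothetical; only the thresholds come from the table.

```python
# Target ranges copied from Table 1; the key names are illustrative.
TARGETS = {
    "b22": lambda v: v > 0,              # second virial coefficient is positive
    "kd_ml_per_g": lambda v: v > -8,     # diffusion interaction parameter
    "ac_sins_shift_nm": lambda v: v < 5,
    "hmw_percent": lambda v: v < 2,
    "tm1_celsius": lambda v: v > 65,
}


def screen_candidate(measurements):
    """Return the assays whose measured value falls outside the Table 1 target."""
    return [name for name, in_range in TARGETS.items()
            if name in measurements and not in_range(measurements[name])]


# Made-up measurements for a single candidate.
candidate = {"b22": 1.2e-5, "kd_ml_per_g": -12.0, "ac_sins_shift_nm": 3.1,
             "hmw_percent": 0.8, "tm1_celsius": 68.5}
flags = screen_candidate(candidate)
```

A candidate with an empty flag list passes every assay it was measured in; missing assays are skipped rather than counted as failures.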

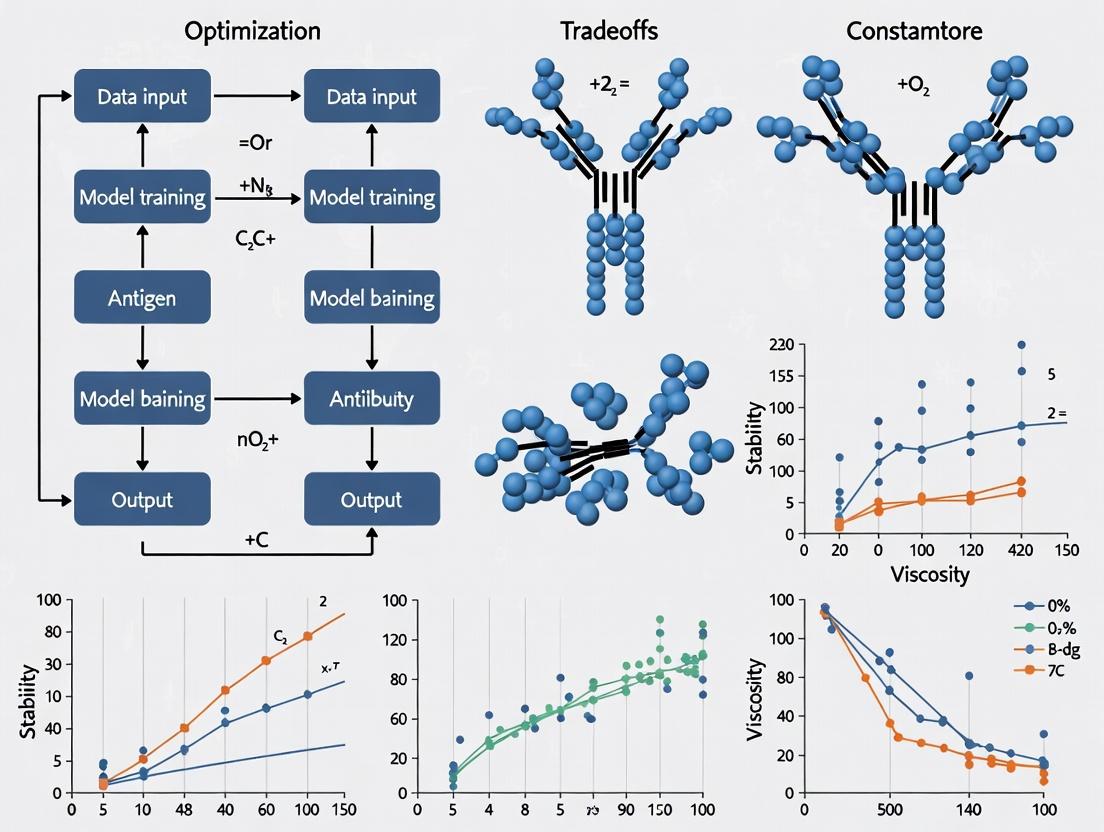

Bayesian Optimization in mAb Developability

The stability-viscosity tradeoff presents a high-dimensional optimization problem well suited to a Bayesian optimization (BO) framework. BO can efficiently navigate the sequence and formulation space by building a probabilistic model that predicts viscosity and stability from features such as net charge, hydrophobicity index, and patchiness.

Experimental Protocol: Setting Up a BO Loop for mAb Engineering

- Define Design Space: Identify mutable residues in CDR and framework regions.

- Define Objective Functions: Maximize Tm (from DSC) and minimize viscosity at 150 mg/mL.

- Initial Data Collection: Characterize 10-20 initial variants (wild-type and mutants) for both objectives.

- Train Surrogate Model: Use a Gaussian Process to model the relationship between sequence features and objectives.

- Acquisition & Iteration: Use an acquisition function (e.g., Expected Improvement) to select the next most informative variant to test experimentally.

- Iterate: Update the model with new data for 5-10 rounds to converge on an optimized variant.
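The loop above can be sketched with a small NumPy-only Gaussian Process and Expected Improvement. This is a minimal sketch under stated assumptions: `mock_assay` is a hypothetical stand-in for the wet-lab measurement, each variant is described by two scaled features, the kernel hyperparameters are fixed rather than fitted, and a single score is maximized instead of two objectives.

```python
import math
import numpy as np


def rbf_kernel(a, b, length_scale=0.3):
    """Squared-exponential kernel between the rows of a and b."""
    sq = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-0.5 * sq / length_scale**2)


def gp_posterior(x_train, y_train, x_query, noise=1e-3):
    """Posterior mean and std of a zero-mean GP with unit prior variance."""
    k_tt = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    k_tq = rbf_kernel(x_train, x_query)
    chol = np.linalg.cholesky(k_tt)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))
    v = np.linalg.solve(chol, k_tq)
    var = np.clip(1.0 - (v**2).sum(axis=0), 1e-12, None)
    return k_tq.T @ alpha, np.sqrt(var)


def expected_improvement(mean, std, best, xi=0.01):
    """Expected Improvement for a maximization problem."""
    gain = mean - best - xi
    z = gain / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return gain * cdf + std * pdf


def mock_assay(x):
    """Hypothetical stand-in for an experimental score (higher is better)."""
    return -((x[:, 0] - 0.7) ** 2 + (x[:, 1] - 0.3) ** 2)


rng = np.random.default_rng(0)
pool = rng.random((200, 2))           # candidate variants as two scaled features
tested = list(range(10))              # initial characterized variants
scores = [float(s) for s in mock_assay(pool[tested])]

for _ in range(8):                    # BO rounds
    y = np.array(scores)
    y_scaled = (y - y.mean()) / (y.std() + 1e-9)
    untested = [i for i in range(len(pool)) if i not in tested]
    mean, std = gp_posterior(pool[tested], y_scaled, pool[untested])
    ei = expected_improvement(mean, std, y_scaled.max())
    pick = untested[int(np.argmax(ei))]
    tested.append(pick)
    scores.append(float(mock_assay(pool[[pick]])[0]))

best_index = tested[int(np.argmax(scores))]
```

In a real campaign the call to `mock_assay` is replaced by expression, purification, and measurement of the selected variant, and the two objectives need either a scalarized score or a multi-objective acquisition function.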

Bayesian Optimization for mAb Design

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for mAb Stability-Viscosity Research

| Item | Function & Application |

|---|---|

| Histidine-HCl Buffer (20 mM, pH 6.0) | Standard low-ionic-strength formulation buffer for assessing electrostatic PPIs. |

| Sucrose or Trehalose | Common stabilizers used to enhance conformational stability (raise Tm) and modulate viscosity. |

| Arginine Hydrochloride | A versatile excipient that can suppress aggregation but may increase or decrease viscosity based on concentration. |

| NaCl Solution (1-5 M stock) | For performing salt perturbation studies to diagnose interaction types. |

| 30 kDa Molecular Weight Cut-Off (MWCO) Centrifugal Concentrators | For buffer exchange and concentrating mAbs to high concentration (>100 mg/mL). |

| Micro-viscometer (e.g., ViscoStar) | Essential for accurately measuring low-volume, high-value mAb samples at high concentration. |

| Zetasizer or Similar DLS Instrument | For measuring kD, hydrodynamic radius (Rh), and particle size distribution. |

| Differential Scanning Calorimetry (DSC) Microcalorimeter | For determining the thermal melting temperature (Tm) of Fab and Fc domains. |

Root Cause of Stability-Viscosity Tradeoff

Troubleshooting Guides & FAQs

Q1: During formulation screening, my antibody shows unexpectedly high viscosity at low ionic strength, contrary to charge repulsion theory. What could be the cause?

A: This often indicates that hydrophobic interactions are dominating over electrostatic repulsion. High-concentration self-association can be driven by surface hydrophobicity patches, even when the net charge is high and repulsive. Troubleshooting steps:

- Measure hydrophobic interaction chromatography (HIC) retention time: A higher retention time confirms increased surface hydrophobicity.

- Perform cross-interaction chromatography (CIC): This assesses self-association propensity directly in conditions mimicking low ionic strength.

- Check for charge heterogeneity: Use imaged capillary isoelectric focusing (icIEF). A broad or asymmetric charge variant distribution can lead to localized attractive patches.

Q2: My Bayesian optimization model for viscosity prediction is not converging on an optimal formulation. The suggested experiments seem contradictory. How should I proceed?

A: This typically occurs when the model's acquisition function is exploring uncertain regions of the parameter space. Follow this protocol:

- Validate Input Data: Ensure all historical data on net charge (from capillary zone electrophoresis), hydrophobicity (from HIC), and viscosity (from microcapillary viscometry) are accurately measured and formatted.

- Inspect Parameter Ranges: The model may be suggesting experiments at the edges of your defined design space (e.g., very low pH and very high conductivity). Systematically constrain one parameter (e.g., fix pH at 6.0) for the next iteration to reduce complexity.

- Incorporate a Direct Self-Association Metric: Add Cross-Interaction Chromatography (CIC) retention time as a fourth input parameter. This provides a more direct correlate to viscosity than net charge or hydrophobicity alone.

Q3: How can I quickly differentiate whether viscosity is driven primarily by net charge or self-association propensity?

A: Perform a simple salt titration experiment and analyze the data in this table:

| Condition (NaCl Concentration) | Viscosity (cP) at 150 mg/mL | Interpretation |

|---|---|---|

| 0 mM | High (> 25 cP) | If viscosity is high at low salt, electrostatic attractions (from charge patches) or hydrophobic effects may dominate. |

| 50-100 mM | Decreasing | Screening of electrostatic interactions supports charge-driven self-association. |

| >150 mM | Plateau or Increases | Hydrophobic-driven self-association is likely, as salt enhances hydrophobic interactions. |

Protocol: Prepare the same antibody sample at 150 mg/mL in a histidine buffer at pH 6.0. Dialyze into identical buffers containing 0, 50, 100, and 150 mM NaCl. Measure viscosity using a microfluidic viscometer at 25°C.

Q4: My antibody has a favorable (negative) net charge at formulation pH and low hydrophobicity, yet shows high aggregation propensity in stability studies. What factor am I missing?

A: You are likely missing dynamic self-association propensity. Net charge and average hydrophobicity are static measures. Some antibodies undergo concentration-dependent reversible self-association that is not captured by standard assays.

- Solution: Use Static and Dynamic Light Scattering (SLS/DLS) to measure the interaction parameter (kD) and the second virial coefficient (B22). A negative kD or B22 indicates attractive interactions leading to self-association and aggregation risk.

- Experimental Protocol:

- Perform buffer exchange into the desired formulation using size-exclusion chromatography.

- Conduct DLS measurements across a concentration series (e.g., 1, 5, 10, 20 mg/mL) at 25°C.

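- Plot the diffusion coefficient (Dm) vs. concentration and fit a line. kD is the slope divided by the intercept D0 (the diffusion coefficient at infinite dilution); a negative slope indicates net attraction.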

- Use SLS data to calculate B22 via the Zimm plot method.
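The kD calculation can be sketched as a linear fit, assuming the standard dilute-regime relation Dm = D0 (1 + kD c). The function name `fit_kd` and the synthetic data are illustrative.

```python
def fit_kd(conc_mg_per_ml, diff_coeffs):
    """Fit D = D0 + slope * c by least squares; return (D0, kD in mL/g)."""
    n = len(conc_mg_per_ml)
    mean_c = sum(conc_mg_per_ml) / n
    mean_d = sum(diff_coeffs) / n
    sxx = sum((c - mean_c) ** 2 for c in conc_mg_per_ml)
    sxy = sum((c - mean_c) * (d - mean_d)
              for c, d in zip(conc_mg_per_ml, diff_coeffs))
    slope = sxy / sxx
    d0 = mean_d - slope * mean_c          # intercept: D at infinite dilution
    kd_ml_per_mg = slope / d0             # normalize the slope by D0
    return d0, kd_ml_per_mg * 1000.0      # convert mL/mg to mL/g


conc = [1.0, 5.0, 10.0, 20.0]             # mg/mL
# Synthetic data generated with D0 = 4.0e-7 cm^2/s and kD = -10 mL/g.
diff = [4.0e-7 * (1 + (-10.0 / 1000.0) * c) for c in conc]
d0, kd = fit_kd(conc, diff)
```

Dividing the slope by D0 matters: the raw slope carries the units of the diffusion coefficient and is not comparable across instruments or molecules.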

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Analysis |

|---|---|

| Cation Exchange Chromatography (CEX) Resin (e.g., Capto SP ImpRes) | Measures net charge distribution and identifies basic/acidic charge variants. |

| Hydrophobic Interaction Chromatography (HIC) Resin (e.g., Capto Phenyl) | Quantifies surface hydrophobicity; higher retention time correlates with hydrophobicity. |

| Cross-Interaction Chromatography (CIC) Column | A column coupled with human IgG or Fc receptor to directly assess self-association propensity. |

| Imaged Capillary Isoelectric Focusing (icIEF) Assay Kit | Provides high-resolution analysis of net charge (pI) and charge heterogeneity. |

| Microfluidic Viscometer Chip (e.g., on a Viscosizer platform) | Enables viscosity measurement of precious, low-volume (µL) antibody samples at high concentration. |

| Dynamic Light Scattering (DLS) Plate Reader | Measures the interaction parameter (kD) to quantify colloidal stability and self-association. |

| Bayesian Optimization Software Package (e.g., in Python: Scikit-Optimize, BoTorch) | Algorithmically designs the next best experiment to optimize stability and minimize viscosity. |

Experimental Workflow & Logical Diagrams

Bayesian Optimization Workflow for Viscosity

Biophysical Drivers Impact on Viscosity

Technical Support Center: Troubleshooting & FAQs

This technical support center provides guidance for researchers conducting experiments related to antibody formulation and stability, specifically within the framework of Bayesian optimization studies for managing the stability-viscosity trade-off.

FAQ 1: During my high-throughput screening for viscosity, my readings are inconsistent across replicate samples. What could be the cause?

- Answer: Inconsistent viscosity measurements in replicates often stem from poor temperature control or sample equilibration. High-concentration antibody solutions are highly sensitive to temperature fluctuations.

- Troubleshooting Protocol:

- Verify Instrument Calibration: Use standard viscosity oils at the expected measurement temperature.

- Ensure Thermal Equilibration: Place all samples and instrument plates in a thermally controlled environment (e.g., 25°C) for at least 30 minutes prior to measurement.

- Check for Sample Evaporation: Use sealing films for all plates during the equilibration step. For long analysis runs, consider humidity-controlled chambers.

- Inspect for Air Bubbles: Centrifuge plates at low speed (e.g., 500 x g for 2 minutes) before loading into the viscometer.


FAQ 2: My Bayesian optimization algorithm is converging on formulations with high viscosity despite setting a viscosity penalty. Why?

- Answer: This indicates a potential imbalance in your objective function or insufficient exploration of the formulation space. The algorithm may be over-prioritizing stability metrics (like aggregation percentage).

- Troubleshooting Protocol:

- Audit Objective Function: Recalculate the weighting of your composite objective. For example: `Objective = (w1 * Aggregation%) + (w2 * Viscosity) + (w3 * Opalescence)`. Ensure `w2` (viscosity weight) is sufficiently large.

- Review Parameter Bounds: Check if your design space (e.g., pH range, excipient concentration limits) is too narrow, preventing discovery of low-viscosity regions.

- Introduce a Viscosity Constraint: Modify the algorithm to discard any candidate formulation with viscosity > a specified threshold (e.g., 20 cP) before assessing stability.

- Inspect Data Quality: Verify that the viscosity data fed into the model is accurate and has low noise (see FAQ 1).
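A minimal sketch of the weighted objective combined with a hard viscosity constraint is shown below. The function names, default weights, and candidate values are hypothetical; lower objective values are treated as better because all three terms are quantities to minimize.

```python
def composite_objective(agg_percent, viscosity_cp, opalescence,
                        w1=1.0, w2=1.0, w3=1.0):
    """Weighted sum from the audit step; lower is better."""
    return w1 * agg_percent + w2 * viscosity_cp + w3 * opalescence


def select_best(candidates, max_viscosity_cp=20.0, **weights):
    """Discard candidates above the viscosity threshold, then minimize."""
    feasible = [c for c in candidates if c["viscosity_cp"] <= max_viscosity_cp]
    if not feasible:
        return None
    return min(feasible, key=lambda c: composite_objective(
        c["agg_percent"], c["viscosity_cp"], c["opalescence"], **weights))


# Made-up formulation results for illustration.
candidates = [
    {"name": "A", "agg_percent": 0.5, "viscosity_cp": 35.0, "opalescence": 3.0},
    {"name": "B", "agg_percent": 1.2, "viscosity_cp": 14.0, "opalescence": 4.0},
    {"name": "C", "agg_percent": 0.9, "viscosity_cp": 18.0, "opalescence": 5.0},
]
best = select_best(candidates, max_viscosity_cp=20.0)
```

Candidate A has the lowest aggregation but is removed by the constraint before scoring, which is the behavior the constraint step is meant to enforce. Because the terms carry different units, the weights also absorb the scale differences between them.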


FAQ 3: Scale-up from a 5 mL Bayesian optimization batch to a 50 mL stability batch resulted in a significant viscosity increase. What happened?

- Answer: This is a common scale-up issue related to mixing heterogeneity and shear history. Small-volume magnetic stirring does not replicate the shear forces of large-scale impeller mixing.

- Troubleshooting Protocol:

- Standardize Mixing: Implement a defined mixing protocol (RPM, time, impeller type) for all batches above a critical volume (e.g., >10 mL).

- Characterize Shear Rate: Estimate the shear rate during mixing for both small and large scales. Aim to keep it consistent.

- Reformulate with Scale in Mind: If certain excipients (e.g., some surfactants) are shear-sensitive, the Bayesian model may need to be retrained with data generated under scaled-down, but representative, mixing conditions.


FAQ 4: How do I effectively incorporate "dosage" as a constraint in my Bayesian optimization for formulation?

- Answer: Dosage (mg/mL) is a direct input variable, not just a constraint. Its interaction with excipients is non-linear and critical for viscosity.

- Troubleshooting Protocol:

- Model Dosage Explicitly: Include antibody concentration (mg/mL) as a primary, continuous variable in your experimental design space (e.g., from 50 to 150 mg/mL).

- Define a Viscosity-Dosage Response Surface: Run a preliminary DOE (Design of Experiments) to map viscosity as a function of concentration and key excipients (e.g., Histidine, NaCl). Use these data as the initial training set for the Bayesian optimizer.

- Set Business Logic Constraints: Program the algorithm to target the minimum dosage required for therapeutic efficacy (a fixed value) OR treat it as an optimizable variable with a cost function (higher concentration may reduce fill volume but increase viscosity risk).


Table 1: Impact of Formulation Parameters on Key Metrics

| Parameter | Typical Range | Effect on Viscosity (cP) | Effect on Stability (Aggregation %/month) | Estimated Cost Impact (Relative to Baseline) |

|---|---|---|---|---|

| Antibody Concentration | 50 - 150 mg/mL | Increase of 2-10x across range | May increase by 0.1-0.5% at high conc. | High (increases CoGs proportionally) |

| pH | 5.5 - 6.5 | U-shaped curve, min ~pH 6.0 | Can increase sharply at extremes | Low |

| Histidine (Buffer) | 10 - 50 mM | Mild decrease with increase | Minimal effect | Very Low |

| Sodium Chloride | 0 - 150 mM | Can sharply increase above 50 mM | May reduce colloidal stability | Low |

| Sucrose (Stabilizer) | 5 - 10% w/v | Slight increase | Can reduce aggregation by ~0.2% | Low |

| Surfactant (PS80) | 0.01 - 0.1% w/v | Negligible effect | Critical for surface protection | Medium |

Table 2: Timeline Delays Due to Formulation Challenges

| Challenge | Typical Delay | Root Cause | Mitigation Strategy |

|---|---|---|---|

| High Viscosity (>20 cP) at target dose | 3-6 months | Requires reformulation and new stability studies | Implement Bayesian optimization early in development. |

| Unstable lead formulation (aggregation) | 6-12 months | Requires identification of new stabilizers and long-term stability studies | Use accelerated stability screening (e.g., CE-SDS, SEC-HPLC after stress). |

| Failed tech transfer to CMO | 1-3 months | Non-robust formulation, mixing sensitivity | Include scale-down shear models in initial screening. |

Experimental Protocols

Protocol 1: High-Throughput Viscosity Screening for Bayesian Optimization Input

- Objective: Generate reliable viscosity data for Bayesian model training.

- Materials: See "Scientist's Toolkit" below.

- Method:

- Prepare formulation candidates in a 96-deep well plate using a liquid handler.

- Seal plate and equilibrate at 25.0 ± 0.1°C for 30 minutes in a thermal chamber.

- Centrifuge plate at 500 x g for 2 minutes to remove bubbles.

- Using a micro-viscometer (e.g., with capillary or rotational probe), measure kinematic viscosity. Convert to dynamic viscosity using measured density.

- Perform each measurement in triplicate, reporting the mean and standard deviation.
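The conversion and replicate summary in the last two steps can be sketched as follows. Dynamic viscosity in cP equals kinematic viscosity in cSt multiplied by density in g/mL; the function names and example readings are illustrative.

```python
import statistics


def dynamic_viscosity_cp(kinematic_cst, density_g_per_ml):
    """Dynamic viscosity (cP) = kinematic viscosity (cSt) x density (g/mL)."""
    return kinematic_cst * density_g_per_ml


def summarize_replicates(kinematic_cst_values, density_g_per_ml):
    """Return the mean and sample standard deviation in cP."""
    dynamic = [dynamic_viscosity_cp(v, density_g_per_ml)
               for v in kinematic_cst_values]
    return statistics.mean(dynamic), statistics.stdev(dynamic)


# Made-up triplicate readings for one formulation.
mean_cp, sd_cp = summarize_replicates([10.0, 10.4, 9.6], density_g_per_ml=1.05)
```

Reporting the standard deviation alongside the mean gives the Bayesian model an estimate of measurement noise for each formulation.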

Protocol 2: Accelerated Stability Assessment for Objective Function Calculation

- Objective: Quantify aggregation after thermal stress to predict long-term stability.

- Method:

- Aliquot 100 µL of each formulated candidate into a PCR tube.

- Subject samples to controlled thermal stress (e.g., 40°C for 4 weeks). Include a control stored at 2-8°C.

- At weekly intervals, analyze samples by Size-Exclusion High-Performance Liquid Chromatography (SEC-HPLC).

- Calculate percent aggregation as: `(Area of aggregate peaks / Total peak area) * 100`.

- Use the aggregation rate (change % per week) as a key input for the stability score in the Bayesian objective function.
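A short sketch of the two calculations follows. The aggregation rate is taken here as the least-squares slope of percent aggregate versus time, which is one reasonable reading of "change % per week"; the peak areas are made up.

```python
def percent_aggregation(aggregate_area, total_area):
    """Percent aggregate from SEC-HPLC peak areas."""
    return aggregate_area / total_area * 100.0


def aggregation_rate(weeks, percents):
    """Least-squares slope of % aggregate versus time, in % per week."""
    n = len(weeks)
    mean_w = sum(weeks) / n
    mean_p = sum(percents) / n
    sxx = sum((w - mean_w) ** 2 for w in weeks)
    sxy = sum((w - mean_w) * (p - mean_p) for w, p in zip(weeks, percents))
    return sxy / sxx


weeks = [0, 1, 2, 3, 4]
aggregate_areas = [10.0, 14.0, 18.0, 22.0, 26.0]   # made-up peak areas
total_area = 1000.0
percents = [percent_aggregation(a, total_area) for a in aggregate_areas]
rate = aggregation_rate(weeks, percents)
```

A fitted slope uses all weekly time points and is less sensitive to a single noisy chromatogram than an endpoint difference.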

Visualizations

Title: Bayesian Optimization Workflow for Formulation

Title: Formulation Challenges Drive Business Outcomes

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Formulation Research |

|---|---|

| Histidine-HCl Buffer | A common buffering system (pH 5.5-6.5) that provides minimal ion-specific viscosity effects. |

| Trehalose / Sucrose | Stabilizing excipients that protect the antibody from aggregation via preferential exclusion. |

| Polysorbate 80 (PS80) | Surfactant that minimizes surface-induced aggregation at interfaces (e.g., air-liquid). |

| Arginine Hydrochloride | A versatile excipient that can suppress aggregation but may increase viscosity at high concentrations. |

| Sodium Chloride | Ionic strength modifier; can be used to screen for electrostatic viscosity drivers but often increases viscosity. |

| Micro Viscometer | Instrument for measuring viscosity of small-volume (μL) samples in high-throughput formats. |

| SEC-HPLC Columns | For quantifying soluble aggregates (dimers, HMWs) as a primary stability metric. |

| Dynamic Light Scattering (DLS) | Provides hydrodynamic radius and polydispersity, early indicators of instability. |

| 96-Well Deep Well Plates | Enable parallel formulation preparation for screening design spaces. |

| Automated Liquid Handler | Critical for accuracy and reproducibility when preparing multicomponent formulation matrices. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My Bayesian optimization model is failing to converge or is stuck in a local minimum for the antibody viscosity-stability Pareto front. What are the primary checks?

A1: Perform this diagnostic sequence:

- Check Acquisition Function: Are you using Expected Improvement (EI) or Upper Confidence Bound (UCB)? For highly noisy viscosity measurements, switch to a noise-aware acquisition function like Predictive Entropy Search.

- Validate Kernel Choice: The Matérn 5/2 kernel is standard, but for a tradeoff problem, consider a multi-task kernel if you have correlated stability (e.g., Tm) and viscosity data.

- Scale Your Inputs: Ensure all antibody sequence descriptors (e.g., hydrophobicity index, charge) are normalized (e.g., z-score). Unscaled inputs can cripple kernel performance.

- Initial Design: You need a sufficient space-filling initial design (e.g., Latin Hypercube) of at least 5-10 points per dimension before the Bayesian loop begins.
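Input scaling and a space-filling initial design can both be sketched with NumPy. The bounds, the feature choice, and the function names are assumptions for illustration; the design size follows the lower end of the 5-10 points per dimension guideline.

```python
import numpy as np


def latin_hypercube(n_points, bounds, seed=0):
    """One sample per equal-width stratum in each dimension, shuffled per column."""
    rng = np.random.default_rng(seed)
    bounds = np.asarray(bounds, dtype=float)      # shape (d, 2): [low, high]
    n_dims = len(bounds)
    unit = np.empty((n_points, n_dims))
    for j in range(n_dims):
        strata = (np.arange(n_points) + rng.random(n_points)) / n_points
        unit[:, j] = rng.permutation(strata)
    return bounds[:, 0] + unit * (bounds[:, 1] - bounds[:, 0])


def zscore(x):
    """Scale each column to zero mean and unit standard deviation."""
    return (x - x.mean(axis=0)) / x.std(axis=0)


# Hypothetical descriptor ranges: net charge and a hydrophobicity index.
bounds = [(-5.0, 15.0), (0.0, 1.0)]
design = latin_hypercube(n_points=5 * len(bounds), bounds=bounds)
scaled = zscore(design)
```

The scaling statistics should be computed once on the training data and reused for every later candidate, so that the kernel sees all points on the same scale.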

Q2: High-throughput viscosity measurements are noisy and sometimes outlier-prone. How do I robustly integrate this data into the Bayesian optimization loop?

A2: Implement a pre-processing pipeline:

- Statistical Filtering: Define a moving median absolute deviation (MAD) threshold for replicate measurements. Discard points that lie more than 3×MAD from the median.

- Model Noise Explicitly: Use a Gaussian Process model that includes a heteroscedastic noise term (`gpytorch` or `GPflow` allow this). This informs the model which data points are less reliable.

- Windowing: Focus the optimization on a recent window of iterations if experimental conditions drift over time.
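The statistical filtering step can be sketched as below, applied to one set of repeated measurements. The function name `mad_filter` and the readings are illustrative; the 3×MAD cutoff is the one named above.

```python
import statistics


def mad_filter(values, n_mads=3.0):
    """Keep values within n_mads median absolute deviations of the median."""
    median = statistics.median(values)
    mad = statistics.median([abs(v - median) for v in values])
    if mad == 0:
        return list(values)          # no spread to judge outliers against
    return [v for v in values if abs(v - median) <= n_mads * mad]


# Made-up viscosity readings (cP) with one obvious outlier.
readings = [12.1, 12.4, 11.9, 12.2, 19.8]
kept = mad_filter(readings)
```

With only three replicates the MAD is a weak estimate, so the filter is more useful when readings from a moving window of recent runs are pooled.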

Q3: When optimizing for both stability (high Tm) and low viscosity, how do I properly define the composite objective function for a single-target BO?

A3: Avoid ad-hoc weighted sums. Use a two-stage approach:

- Constraint Method: Set low viscosity as the primary objective to minimize. Define a constraint on stability (e.g., Tm > 70°C). Use a constrained BO package like `BoTorch`.

- Scalarization with Care: If you must scalarize, use a known transformation like the Logarithmic Desirability Function. It is less sensitive to scale differences than a linear sum.

Q4: The computational cost of updating the Gaussian Process model with every new batch of experimental data is becoming prohibitive. How can I speed this up?

A4: Employ approximate methods:

- Sparse Gaussian Processes: Use inducing points to approximate the full dataset. This reduces complexity from O(n³) to O(m²n), where m << n.

- Update the Posterior, Not the Full Model: For sequential batches, use Bayesian updating rules to refine the posterior distribution without re-computing from scratch, if the kernel hyperparameters are stable.

Key Experimental Protocols & Data

Protocol 1: High-Throughput Viscosity Measurement (Microfluidic Rheology)

Principle: Measure dynamic viscosity from the flow rate and pressure drop in a micro-capillary.

- Sample Prep: Dialyze antibody variants into a standard formulation buffer (e.g., Histidine-Sucrose). Concentrate to 50 mg/mL using a 30 kDa MWCO centrifugal filter.

- Load Chip: Use a commercial microfluidic viscometer chip (e.g., VROC initium). Load 100 µL of sample into the injection port.

- Run: Apply a controlled pressure gradient (5-20 psi). The software detects meniscus movement via embedded video and calculates viscosity from the Poiseuille flow equation.

- Replicates: Perform three independent loads per variant. Include a buffer control and a standard protein (e.g., BSA) for calibration.
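For reference, the Poiseuille relation mentioned in the run step can be written out for the simplest case. This sketch assumes a circular capillary, where viscosity = π r⁴ ΔP / (8 Q L); chips with rectangular channels need a different geometric factor, and the dimensions below are made up.

```python
import math


def poiseuille_viscosity_cp(pressure_drop_pa, radius_m, length_m, flow_m3_per_s):
    """Viscosity (cP) from Hagen-Poiseuille flow in a circular capillary."""
    eta_pa_s = (math.pi * radius_m**4 * pressure_drop_pa
                / (8.0 * flow_m3_per_s * length_m))
    return eta_pa_s * 1000.0          # 1 Pa.s = 1000 cP


# Round-trip check with made-up geometry: 10 psi across a 50 um x 10 mm capillary.
pressure = 10 * 6894.76               # psi -> Pa
radius, length = 50e-6, 0.01
true_eta = 0.010                      # 10 cP expressed in Pa.s
flow = math.pi * radius**4 * pressure / (8.0 * true_eta * length)
viscosity_cp = poiseuille_viscosity_cp(pressure, radius, length, flow)
```

The fourth-power dependence on radius is why channel dimensions must be known precisely and why calibration against a standard is part of the protocol.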

Protocol 2: Stability Assessment via Differential Scanning Fluorimetry (DSF)

Principle: Monitor protein unfolding as a function of temperature using a fluorescent dye.

- Plate Setup: Dilute antibody samples to 0.2 mg/mL in formulation buffer. Mix with SYPRO Orange dye (final concentration 5X).

- Run: Use a real-time PCR instrument. Ramp temperature from 25°C to 95°C at 1°C/min, with fluorescence readings (ROX channel) at each step.

- Analysis: Compute the first derivative of fluorescence with respect to temperature. The derivative peak (the inflection point of the melt curve) is the apparent melting temperature (Tm). Report the mean of 4 replicates.
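The analysis step can be sketched with NumPy by locating the maximum of the first derivative of the melt curve. The sigmoid below is synthetic data with a known midpoint; real curves often show several transitions and a post-peak signal decay that this simple approach does not handle.

```python
import numpy as np


def apparent_tm(temps_c, fluorescence):
    """Temperature at which dF/dT is largest (inflection of the melt curve)."""
    derivative = np.gradient(fluorescence, temps_c)
    return float(temps_c[int(np.argmax(derivative))])


# Synthetic two-state unfolding curve with a midpoint at 70 C.
temps = np.arange(25.0, 95.5, 0.5)
signal = 1.0 / (1.0 + np.exp(-(temps - 70.0) / 1.5))
tm = apparent_tm(temps, signal)
```

The resolution of this estimate is limited by the temperature step, so smoothing or fitting around the peak may be worthwhile when variants differ by less than a degree.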

Table 1: Optimization Efficiency for a 20-Variant Design Space

| Metric | Random Search (50 iterations) | Bayesian Optimization (50 iterations) |

|---|---|---|

| Best Viscosity (cP) @ 50 mg/mL | 12.5 ± 1.8 | 8.2 ± 0.5 |

| Tm of Best Candidate (°C) | 68.5 | 72.3 |

| Iterations to Reach <10 cP | 38 | 12 |

| Pareto Front Quality (Hypervolume) | 0.65 | 0.89 |
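For readers unfamiliar with the hypervolume metric in the last row, a two-objective version can be computed directly. In this sketch both objectives are minimized, so Tm is entered as its negative; the points and reference point are made up, and no normalization is applied (the table values appear to be normalized to a 0-1 scale, which is an assumption on our part).

```python
def pareto_front(points):
    """Non-dominated points when both coordinates are minimized."""
    front = []
    for point in sorted(set(points)):         # ascending in the first objective
        if not front or point[1] < front[-1][1]:
            front.append(point)
    return front


def hypervolume_2d(points, reference):
    """Area dominated by the front and bounded by the reference point."""
    front = [p for p in pareto_front(points)
             if p[0] < reference[0] and p[1] < reference[1]]
    area, previous_y = 0.0, reference[1]
    for x, y in front:                         # x ascending, y descending
        area += (reference[0] - x) * (previous_y - y)
        previous_y = y
    return area


# (viscosity in cP, negative Tm in C) for four hypothetical variants.
points = [(8.0, -72.0), (12.0, -68.0), (9.0, -74.0), (15.0, -75.0)]
reference = (20.0, -60.0)                      # worst acceptable values
front = pareto_front(points)
hv = hypervolume_2d(points, reference)
```

A larger hypervolume means the front pushes further toward low viscosity and high Tm at the same time, which is why it is used to compare optimizers.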

Table 2: Essential Research Reagent Solutions

| Reagent/Kit | Function | Key Consideration |

|---|---|---|

| His-Tag Purification Resin | High-throughput purification of expressed antibody fragments. | Use pre-packed 96-well plates for parallel processing. |

| SYPRO Orange Dye | Fluorescent dye for DSF stability screening. | Light-sensitive; aliquot to avoid freeze-thaw cycles. |

| VROC Microfluidic Chip | Enables viscosity measurement with <50 µL sample volume. | Calibrate with viscosity standards at the start of each run. |

| Stability Buffer Screen Kit | Pre-formulated buffer plates to assess excipient impact. | Contains 24 distinct buffers for initial formulation space mapping. |

| Charge Variant Analysis Column | Cation-exchange HPLC column to profile charge variants. | Net charge is a critical feature for viscosity prediction models. |

Visualizations

Title: Bayesian Optimization Workflow for Antibody Engineering

Title: Molecular Drivers of Viscosity-Stability Tradeoff

Bayesian Optimization Technical Support Center

Welcome to the Technical Support Center for Bayesian Optimization (BO) in antibody stability-viscosity trade-off research. This guide provides targeted troubleshooting and FAQs to assist researchers in implementing BO for efficient biologic drug development.

Frequently Asked Questions (FAQs)

Q1: In our study of antibody viscosity, the BO algorithm seems to get "stuck" exploring a narrow region of the sequence space too early. How can we encourage more global exploration?

- Answer: This is a common issue of over-exploitation. Adjust your acquisition function.

- For Expected Improvement (EI): Increase the parameter `xi` (e.g., from 0.01 to 0.1 or 0.2). This adds more weight to exploring uncertain regions.

- Switch to Upper Confidence Bound (UCB): Use a higher `kappa` parameter (e.g., 3-5) for earlier iterations to prioritize exploration, then gradually reduce it.

- Protocol: Run a short initial BO loop (10-20 iterations) with a high exploration parameter. Analyze the surrogate model's uncertainty. If uncertainty remains high in large, unexplored areas, manually add 1-2 design points in those regions before resuming the main BO loop.
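The effect of `xi` and `kappa` can be seen on two hypothetical candidates, one with a high predicted mean and low uncertainty and one with a lower mean and high uncertainty. The formulas are the standard EI and UCB for maximization; the linear `kappa_schedule` is only one possible way to reduce exploration over time.

```python
import math


def normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mean, std, best, xi=0.01):
    """EI for maximization; a larger xi demands a bigger predicted gain."""
    gain = mean - best - xi
    if std <= 0:
        return max(gain, 0.0)
    z = gain / std
    return gain * normal_cdf(z) + std * normal_pdf(z)


def upper_confidence_bound(mean, std, kappa=2.0):
    """UCB for maximization; a larger kappa rewards uncertainty more."""
    return mean + kappa * std


def kappa_schedule(iteration, n_iterations, start=4.0, end=1.0):
    """Linearly decay kappa from start to end across the campaign."""
    fraction = iteration / max(n_iterations - 1, 1)
    return start + (end - start) * fraction


best_so_far = 0.95
exploit = {"mean": 1.0, "std": 0.05}   # near the current optimum
explore = {"mean": 0.6, "std": 0.3}    # poorly characterized region

ei_low_xi = {name: expected_improvement(c["mean"], c["std"], best_so_far, xi=0.01)
             for name, c in (("exploit", exploit), ("explore", explore))}
ei_high_xi = {name: expected_improvement(c["mean"], c["std"], best_so_far, xi=0.2)
              for name, c in (("exploit", exploit), ("explore", explore))}
```

With `xi=0.01` the exploit candidate scores higher; with `xi=0.2` the ranking flips toward the uncertain candidate, which is the behavior the adjustment above relies on.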


Q2: Our experimental measurements for antibody stability (e.g., Tm, ΔG) have significant inherent noise or variability. How do we configure BO to handle this?

- Answer: You must explicitly model the noise in your Gaussian Process (GP) surrogate.

- Methodology: When defining your GP prior, set a non-zero `alpha` or `noise` parameter. This tells the model to expect variance in the observations themselves.

- Action: Use a heteroscedastic GP if noise level varies across the parameter space. Alternatively, use a robust acquisition function like Noisy Expected Improvement (NEI). Always run experimental replicates (n≥3) for initial design points to quantify baseline noise level, which informs the `alpha` setting.
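One way to turn replicate data into a noise setting is a pooled variance across the initial design points, sketched below. In libraries such as scikit-learn, `alpha` is added to the diagonal of the kernel matrix and therefore acts as a noise variance; the function name and the Tm values here are illustrative, and the result assumes the targets are modeled on their original scale.

```python
import statistics


def pooled_noise_variance(replicate_sets):
    """Pooled sample variance across several sets of replicate measurements."""
    numerator = sum((len(r) - 1) * statistics.variance(r) for r in replicate_sets)
    denominator = sum(len(r) - 1 for r in replicate_sets)
    return numerator / denominator


# Made-up triplicate Tm values (C) for three initial variants.
tm_replicates = [
    [70.0, 70.4, 69.6],
    [68.0, 68.2, 67.8],
    [72.0, 72.6, 71.4],
]
alpha = pooled_noise_variance(tm_replicates)
```

If the targets are standardized before fitting, the variance must be divided by the squared scaling factor so that it stays consistent with the transformed data.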


Q3: When optimizing for both high stability (Target: Max Tm) and low viscosity (Target: Min viscosity at the target concentration), how do we structure the single objective function for a standard BO implementation?

- Answer: Construct a weighted, normalized composite objective.

- Formula: `Objective = w1 * ((Tm - Tm_min) / (Tm_max - Tm_min)) - w2 * ((log(Viscosity) - log(Visc_min)) / (log(Visc_max) - log(Visc_min)))`

- Procedure:

- Define plausible min/max ranges for Tm and Viscosity from literature or prior data.

- Normalize each property to a [0,1] scale.

- Assign weights `w1` and `w2` (e.g., 0.7 and 0.3) reflecting the project's priority.

- The negative sign for viscosity ensures minimizing viscosity increases the score.
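The formula translates directly into code. The ranges used in the example (Tm 60-80 °C, viscosity 5-50 cP) are placeholders, not recommendations; the default weights are the example values given above.

```python
import math


def composite_score(tm, viscosity, tm_range, visc_range, w1=0.7, w2=0.3):
    """Weighted, normalized objective: higher Tm and lower viscosity score higher."""
    tm_min, tm_max = tm_range
    visc_min, visc_max = visc_range
    tm_norm = (tm - tm_min) / (tm_max - tm_min)
    visc_norm = ((math.log(viscosity) - math.log(visc_min))
                 / (math.log(visc_max) - math.log(visc_min)))
    return w1 * tm_norm - w2 * visc_norm


tm_range = (60.0, 80.0)      # placeholder bounds in C
visc_range = (5.0, 50.0)     # placeholder bounds in cP
best_case = composite_score(80.0, 5.0, tm_range, visc_range)
worst_case = composite_score(60.0, 50.0, tm_range, visc_range)
```

With these weights the score runs from -0.3 (worst case on both properties) to 0.7 (best case on both), which makes it easy to sanity-check values produced during a campaign.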


Q4: We have prior knowledge about which antibody framework regions most influence viscosity. How can we incorporate this into the BO model?

- Answer: Use informative priors in the GP kernel.

- Method: Apply Automatic Relevance Determination (ARD) with a Matern or RBF kernel. Start the length-scale parameters for known critical regions (e.g., CDR loops) with smaller initial values, making the model initially more sensitive to changes in those dimensions. For truly categorical variables (e.g., specific amino acid types at a site), use a Hamming kernel or one-hot encoding.

Troubleshooting Guides

Issue: Poor Performance Despite Many Iterations

- Check 1: Initial Design. Your initial Design of Experiments (DoE) may be insufficient. For a sequence space with `d` dimensions, start with at least `5*d` to `10*d` points using Latin Hypercube Sampling (LHS).

- Check 2: Kernel Choice. For antibody parameters (continuous, discrete, categorical), a composite kernel is often needed. Example: `Matern (for continuous) + Hamming (for categorical)`.

- Action Plan: Pause optimization. Visualize the surrogate model's mean and variance predictions across 2D slices of your parameter space. If the model appears random, restart with a better DoE and a simpler, more exploratory configuration.
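A composite kernel of this kind can be sketched in NumPy. The Matérn 5/2 form is standard; the Hamming kernel shown here, an exponential of the fraction of mismatched positions, is one common choice and is an assumption rather than a prescribed definition. Sequences and continuous inputs are made up.

```python
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def encode(sequences):
    """Map each residue letter to an integer code."""
    return np.array([[AMINO_ACIDS.index(aa) for aa in seq] for seq in sequences])


def matern52(x1, x2, length_scale=1.0):
    """Matern 5/2 kernel on continuous inputs."""
    dist = np.sqrt(((x1[:, None, :] - x2[None, :, :]) ** 2).sum(-1)) / length_scale
    s = np.sqrt(5.0) * dist
    return (1.0 + s + s**2 / 3.0) * np.exp(-s)


def hamming_kernel(s1, s2, length_scale=1.0):
    """exp(-fraction of mismatched positions / length_scale)."""
    mismatch = (s1[:, None, :] != s2[None, :, :]).mean(-1)
    return np.exp(-mismatch / length_scale)


def composite_kernel(cont1, seq1, cont2, seq2):
    """Sum of a continuous and a categorical kernel."""
    return matern52(cont1, cont2) + hamming_kernel(seq1, seq2)


# Three hypothetical variants: four mutable residues plus (pH, scaled salt).
seqs = encode(["ARND", "ARNE", "GRND"])
cont = np.array([[6.0, 0.0], [6.0, 0.5], [5.5, 1.0]])
gram = composite_kernel(cont, seqs, cont, seqs)
```

Adding the two kernels lets either kind of similarity contribute on its own; multiplying them instead would require variants to be similar in both the continuous and the sequence inputs.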

Issue: Objective Function Evaluation is Extremely Expensive (e.g., In Silico FEP calculations)

- Solution: Implement a Multi-Fidelity Approach.

- Protocol: Use a lower-fidelity, cheaper method (e.g., coarse-grained simulation, heuristic scoring function) to approximate the objective for many candidate points. The BO algorithm uses this to decide which few points warrant evaluation with the high-fidelity method (e.g., experimental viscosity measurement).

- Visual Workflow: See Diagram 1 below.

Issue: Constraints are Violated by Suggested Experiments (e.g., suggested mutant is insoluble)

- Solution: Use Constrained Bayesian Optimization.

- Methodology: Model the constraint (e.g., solubility > threshold) with a separate GP classifier. Multiply your primary acquisition function by the probability of satisfying the constraint. Only suggest points with a high probability of being feasible.

- Experimental Integration: Build a quick, cheap solubility assay (e.g., thermal challenge followed by SEC-HPLC) to run in parallel with your main stability/viscosity assays to gather constraint data.
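The weighting step can be sketched as follows. Here the feasibility probability is computed from a Gaussian predictive mean and standard deviation of the constraint value, a regression-style stand-in for the GP classifier described above; the function names, the 0.5 probability floor, and the numbers are hypothetical.

```python
import math


def probability_feasible(mean, std, threshold):
    """P(constraint value > threshold) under a Gaussian prediction."""
    if std <= 0:
        return 1.0 if mean > threshold else 0.0
    z = (mean - threshold) / std
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def constrained_acquisition(acq_value, p_feasible, min_probability=0.5):
    """Weight the acquisition by feasibility; reject unlikely candidates."""
    if p_feasible < min_probability:
        return 0.0
    return acq_value * p_feasible


solubility_threshold = 50.0   # made-up units
# (acquisition value, predicted solubility mean, predicted solubility std)
risky = (0.30, 40.0, 10.0)
safe = (0.20, 70.0, 10.0)

scores = {}
for name, (acq, mean, std) in (("risky", risky), ("safe", safe)):
    p = probability_feasible(mean, std, solubility_threshold)
    scores[name] = constrained_acquisition(acq, p)
```

The risky candidate has the higher raw acquisition value but is rejected because its predicted solubility is probably below the threshold, so the safe candidate is proposed instead.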

Table 1: Common GP Kernels for Antibody Optimization

| Kernel Name | Best For | Key Parameter | Consideration for Antibodies |

|---|---|---|---|

| Matern 5/2 | Continuous parameters (pH, Temp) | Length-scale | Default choice for smooth but not infinitely differentiable functions. |

| Radial Basis (RBF) | Very smooth, continuous trends | Length-scale | Can oversmooth if the response is complex. |

| Hamming | Categorical/sequence data (Amino Acid type) | Length-scale | Essential for encoding discrete mutations. |

| Dot Product | Linear trends | Variance offset | Useful as a component in composite kernels. |
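As an illustration of how the kernels in Table 1 combine, here is a minimal NumPy sketch of a Matern 5/2 + Hamming composite. The exponential form of the Hamming kernel, the length-scale defaults, and the example data are assumptions for this sketch; libraries such as BoTorch ship their own mixed-variable kernels.

```python
import numpy as np

def matern52(X1, X2, length_scale=1.0):
    """Matern 5/2 kernel on continuous inputs (rows are points)."""
    d = np.sqrt(((X1[:, None, :] - X2[None, :, :]) ** 2).sum(-1)) / length_scale
    s = np.sqrt(5.0) * d
    return (1.0 + s + s**2 / 3.0) * np.exp(-s)

def hamming_kernel(S1, S2, length_scale=1.0):
    """exp(-fraction of mismatched positions / length_scale) on categorical inputs."""
    mismatch = (S1[:, None, :] != S2[None, :, :]).mean(-1)
    return np.exp(-mismatch / length_scale)

def composite_kernel(X1, S1, X2, S2):
    """Sum of a continuous kernel and a categorical kernel."""
    return matern52(X1, X2) + hamming_kernel(S1, S2)

# Hypothetical data: standardized (pH, temperature) plus three mutable residues.
X = np.array([[0.0, 0.0], [0.5, -1.0], [-1.0, 1.0]])
S = np.array([list("SYG"), list("SYA"), list("RYG")])
K = composite_kernel(X, S, X, S)
```

A sum lets either component explain similarity on its own; a product would instead require points to be close in both the continuous and the categorical part.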

Table 2: Comparison of Acquisition Functions

| Function | Goal | Parameter to Tune | Use-Case Phase |

|---|---|---|---|

| Expected Improvement (EI) | Balance explore/exploit | xi (exploration weight) | General purpose, most common. |

| Upper Confidence Bound (UCB) | Explicit exploration | kappa (confidence level) | Early-stage, highly uncertain space. |

| Probability of Improvement (PI) | Pure exploitation | xi | Final tuning of a promising region. |

| Noisy EI | Noisy observations | xi, noise_level | When experimental replicates vary. |
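The first three acquisition functions in Table 2 have simple closed forms for a Gaussian posterior. The sketch below writes them for a maximization problem; the posterior means and standard deviations are hypothetical, and Noisy EI is omitted because it needs posterior sampling rather than a closed form.

```python
import math
import numpy as np

def _cdf(z):
    """Standard normal CDF, element-wise."""
    return np.array([0.5 * (1.0 + math.erf(v / math.sqrt(2.0))) for v in z])

def _pdf(z):
    """Standard normal PDF, element-wise."""
    return np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)

def expected_improvement(mu, sigma, best, xi=0.01):
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * _cdf(z) + sigma * _pdf(z)

def upper_confidence_bound(mu, sigma, kappa=2.0):
    return mu + kappa * sigma

def probability_of_improvement(mu, sigma, best, xi=0.0):
    return _cdf((mu - best - xi) / sigma)

# Hypothetical posterior for three candidates: a safe bet, a middle case, a long shot.
mu = np.array([1.00, 0.90, 0.60])
sigma = np.array([0.05, 0.20, 0.60])
best = 0.95   # best objective value observed so far

picks = {
    "EI": int(np.argmax(expected_improvement(mu, sigma, best))),
    "UCB": int(np.argmax(upper_confidence_bound(mu, sigma))),
    "PI": int(np.argmax(probability_of_improvement(mu, sigma, best))),
}
```

With these numbers PI selects the low-uncertainty candidate (index 0), while EI and UCB both select the highly uncertain one (index 2), which matches the exploit versus explore roles listed in the table.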

Experimental Protocol: Standard BO Loop for Viscosity-Stability Screening

1. Define Parameter Space & Objective:

- Parameters: List mutable residues (e.g., CDR-H3 positions 99-102), each with possible amino acids [A, R, N, D...].

- Objective: As defined in FAQ A3. Establish assay protocols for Tm (Differential Scanning Fluorimetry) and Viscosity (Dynamic Light Scattering or micro-viscometer).

2. Initial Experimental Design:

- Use LHS to select n_init = 50-100 unique antibody variants.

- Express, purify, and characterize (Tm, Viscosity) all n_init variants. Run in triplicate.

3. BO Loop Execution (Iterative Phase):

- Surrogate Model Training: Fit a GP with a composite kernel to all accumulated data.

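- Acquisition Maximization: Use an optimizer to find the next candidate variant(s) that maximize the acquisition function. Gradient-based or global optimizers (e.g., L-BFGS-B, DIRECT) apply to continuous parameters; for discrete sequence variables, score an enumerated candidate library or use a discrete search such as an evolutionary algorithm.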

- Experimental Evaluation: Characterize the suggested variant(s).

- Iterate: Repeat until resource budget exhausted or convergence (e.g., <1% improvement in objective over 10 iterations).
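The loop above can be sketched end to end on a toy problem. Everything here is illustrative: the four-letter alphabet, the additive `toy_objective` standing in for the wet-lab assay, the Hamming-kernel GP, and the fixed budget of 15 iterations are all assumptions, and the initial design is random rather than LHS to keep the example short.

```python
import itertools
import numpy as np

rng = np.random.default_rng(0)
ALPHABET = "ARND"   # reduced alphabet, for illustration only
library = np.array([list(v) for v in itertools.product(ALPHABET, repeat=3)])

def toy_objective(seq):
    """Stand-in for the assay: hypothetical additive residue scores plus noise."""
    score = {"A": 0.2, "R": -0.5, "N": 0.6, "D": 1.0}
    return sum(score[a] for a in seq) + rng.normal(0.0, 0.05)

def hamming_kernel(S1, S2, length_scale=0.5):
    mismatch = (S1[:, None, :] != S2[None, :, :]).mean(-1)
    return np.exp(-mismatch / length_scale)

def gp_posterior(S_train, y_train, S_test, noise=1e-2):
    """Zero-mean GP posterior mean and standard deviation at S_test."""
    K = hamming_kernel(S_train, S_train) + noise * np.eye(len(S_train))
    Ks = hamming_kernel(S_test, S_train)
    mean = Ks @ np.linalg.solve(K, y_train)
    var = 1.0 - np.einsum("ij,ji->i", Ks, np.linalg.solve(K, Ks.T))
    return mean, np.sqrt(np.clip(var, 1e-12, None))

# Step 2: initial design (random here for brevity).
tested = list(rng.choice(len(library), size=8, replace=False))
y = [toy_objective(library[i]) for i in tested]

# Step 3: fit surrogate -> maximize acquisition -> evaluate -> repeat.
for _ in range(15):
    y_arr = np.array(y)
    y_std = (y_arr - y_arr.mean()) / y_arr.std()      # standardize targets
    mean, std = gp_posterior(library[tested], y_std, library)
    ucb = mean + 2.0 * std
    ucb[tested] = -np.inf                             # skip characterized variants
    nxt = int(np.argmax(ucb))
    tested.append(nxt)
    y.append(toy_objective(library[nxt]))

best_variant = "".join(library[tested[int(np.argmax(y))]])
```

Because the candidate library is fully enumerated, the acquisition function is maximized by scoring every untested variant, which avoids the need for a continuous optimizer.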

Visualizations

Diagram 1: Multi-Fidelity BO for Costly Experiments

Diagram 2: Core Bayesian Optimization Cycle

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Antibody Stability-Viscosity BO Experiments

| Item | Function / Role in BO Workflow |

|---|---|

| High-Throughput Expression System (e.g., Expi293F) | Rapid production of 100s of antibody variant supernatants for initial design and iterative testing. |

| Automated Liquid Handler | Enables precise, reproducible plate-based assays for DSF and sample prep for viscosity. |

| Differential Scanning Fluorimeter (DSF, e.g., Prometheus) | Measures thermal stability (Tm, ΔG) in a high-throughput, low-volume format. |

| Dynamic Light Scattering (DLS) Plate Reader | Measures hydrodynamic radius and assesses aggregation propensity, correlated with viscosity. |

| Micro-Viscometer (e.g., ViscoStar) | Directly measures viscosity of low-volume (≤50 µL) protein samples. |

| BO Software Library (e.g., BoTorch, GPyOpt, scikit-optimize) | Provides algorithms for Gaussian Process modeling, acquisition function optimization, and loop management. |

| Laboratory Information Management System (LIMS) | Tracks the genotype (sequence), experimental parameters, and phenotype (Tm, Viscosity) data for each variant, essential for data integrity in the BO loop. |

Implementing Bayesian Optimization: A Step-by-Step Framework for Antibody Engineering

FAQs & Troubleshooting Guides

Q1: How do I properly define my initial sequence variant library for a Bayesian optimization study of antibody viscosity? A: Ensure your variant library covers a diverse, yet physically plausible, sequence space. Common issues include:

- Problem: Poor optimization convergence due to a sparse initial dataset.

- Solution: Use a combination of structure-based computational design (e.g., targeting net charge, hydrophobic patches) and historical variant data. Aim for 20-50 well-characterized variants to seed the model. Avoid clustering variants with only single-point mutations.

Q2: What are the critical formulation parameters to include when expanding the search space beyond sequence? A: The key parameters are pH, ionic strength, and excipient concentration. A frequent error is using ranges that are too narrow or physiologically irrelevant.

- Problem: Missing the optimal formulation sweet spot.

- Solution: Define ranges based on stability and feasibility: pH (5.0-7.0), NaCl (0-150 mM), Sucrose (0-10% w/v), or Histidine (5-50 mM). Use a Design of Experiments (DoE) approach to sample this space efficiently when combined with sequence variables.

Q3: My high-concentration viscosity measurements are highly variable. How can I improve reproducibility? A: This is often related to sample handling and instrument calibration.

- Problem: Inconsistent shear history or sample equilibration leads to noisy viscosity data, confusing the Bayesian model.

- Solution:

- Pre-shear all samples at a fixed, moderate shear rate for 60 seconds.

- Equilibrate at the measurement temperature (e.g., 25°C) for 10 minutes.

- Use a controlled-stress rheometer with a cone-plate geometry for small sample volumes.

- Implement a triplicate measurement protocol, discarding the first reading as a conditioning step.

Q4: How do I balance the number of sequence vs. formulation parameters to avoid an intractably large search space? A: Use a tiered approach.

- Problem: The "curse of dimensionality" makes optimization inefficient.

- Solution: Start with a sequence-only search (5-10 mutable positions) to identify promising variant families. Then, for the top 2-3 variants, launch a combined sequence-formulation optimization where formulation is the primary variable, using the earlier data as prior knowledge.

Key Experimental Protocols

Protocol 1: High-Throughput Viscosity Screening at Low Volume

- Objective: Measure relative viscosity of antibody variants at high concentration using minimal material.

- Methodology:

- Concentrate purified antibody variants to 100-150 mg/mL using 10 kDa MWCO centrifugal filters.

- Load 20 µL of sample into a capillary-based viscosity instrument (e.g., Unchained Labs Little Mr. Viscosity or similar).

- Measure the flow time through a micro-capillary at a controlled pressure and temperature (25°C).

- Calculate kinematic viscosity relative to a buffer standard. Normalize all values to a common reference antibody included in each plate.

- Key Controls: Include a buffer blank and a standard antibody control on every measurement plate.
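The normalization step in Protocol 1 can be written as a small helper. This sketch assumes flow time scales linearly with viscosity under the fixed measurement conditions; the plate layout and the key names `buffer` and `reference_mAb` are hypothetical.

```python
def normalize_plate(flow_times_s, buffer_key="buffer", reference_key="reference_mAb"):
    """Convert flow times to viscosity relative to buffer, then to the reference mAb."""
    buffer_t = flow_times_s[buffer_key]
    relative = {k: t / buffer_t for k, t in flow_times_s.items() if k != buffer_key}
    ref = relative[reference_key]
    return {k: v / ref for k, v in relative.items()}

# Hypothetical flow times (seconds) from one measurement plate.
plate = {"buffer": 10.0, "reference_mAb": 80.0, "variant_1": 120.0, "variant_2": 60.0}
normalized = normalize_plate(plate)
```

Values above 1.0 indicate a variant more viscous than the reference antibody on the same plate, which makes results comparable across plates and days.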

Protocol 2: Formulation Buffer Preparation for DoE Studies

- Objective: Generate precise, high-throughput formulation buffers for stability-viscosity profiling.

- Methodology:

- Prepare stock solutions of all excipients (e.g., 1M Histidine, 2M NaCl, 40% Sucrose).

- Use a liquid handling robot to mix stocks according to a DoE matrix in 96-well deep-well blocks.

- Adjust pH of each buffer using micro-titrations of 0.5M HCl or NaOH. Verify final pH in a representative subset.

- Perform buffer exchange for selected antibody variants into each formulation using 96-well plate desalting columns or dialysis.

- Concentrate to target concentration (e.g., 50 mg/mL) for screening.
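The stock-mixing step reduces to C1·V1 = C2·V2 for each excipient. The sketch below computes per-well transfer volumes for one row of a DoE matrix using the stock concentrations listed above; the target formulation and the 1000 µL final volume are assumed for illustration, and pH adjustment volumes are ignored.

```python
def stock_volumes_ul(targets, stocks, final_volume_ul):
    """Volume of each stock (µL) needed to reach the target concentration."""
    volumes = {name: targets[name] / stocks[name] * final_volume_ul for name in targets}
    diluent = final_volume_ul - sum(volumes.values())
    if diluent < 0:
        raise ValueError("Targets exceed what the stock concentrations allow.")
    volumes["water"] = diluent
    return volumes

# Stocks from the protocol: 1 M histidine, 2 M NaCl, 40 % sucrose.
stocks = {"histidine_mM": 1000.0, "NaCl_mM": 2000.0, "sucrose_pct": 40.0}
# One assumed DoE row: 20 mM histidine, 150 mM NaCl, 10 % sucrose.
targets = {"histidine_mM": 20.0, "NaCl_mM": 150.0, "sucrose_pct": 10.0}

volumes = stock_volumes_ul(targets, stocks, final_volume_ul=1000.0)
```

For this row the helper returns 20 µL histidine, 75 µL NaCl, 250 µL sucrose, and 655 µL water; the same function can be mapped over every row of the DoE matrix to build a liquid-handler worklist.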

Data Tables

Table 1: Typical Search Space Parameters for Antibody Optimization

| Parameter Category | Specific Variables | Typical Range | Key Consideration |

|---|---|---|---|

| Sequence | CDR Residue Identity | 3-5 positions, 2-4 aa each | Prioritize by in silico SCM or hydrophobicity |

| Sequence | Framework Patch Mutation | e.g., "TM2" (S28T, S30T, S65T) | Known to modulate self-interaction |

| Formulation | pH | 5.0 - 7.0 (0.5 increments) | Impacts charge distribution & stability |

| Formulation | Ionic Strength (NaCl) | 0 - 150 mM | Screens electrostatic interactions |

| Formulation | Stabilizer (Sucrose) | 0 - 10% (w/v) | Alters solution viscosity & stability |

Table 2: Common Viscosity Measurement Methods

| Method | Sample Volume | Concentration Range | Throughput | Key Limitation |

|---|---|---|---|---|

| Capillary Viscometer | 10-30 µL | 50-200 mg/mL | High | Measures kinematic viscosity only |

| Micro-Rheology | 5-10 µL | 1-150 mg/mL | Medium | Requires tracer particles |

| Cone-Plate Rheometer | 50-100 µL | 10-200 mg/mL | Low | Gold standard; requires more sample |

Visualizations

Diagram 1: Search Space Definition Workflow

Diagram 2: BO for Antibody Tradeoffs Logic

The Scientist's Toolkit

| Research Reagent / Material | Function in Experiment |

|---|---|

| Histidine-HCl Buffer Stock (1M, pH 6.0) | Primary buffer system for formulation screens; provides pH control and chemical stability. |

| Sodium Chloride (NaCl) | Modifies ionic strength to screen for electrostatic-driven self-interactions affecting viscosity. |

| Trehalose or Sucrose | Stabilizing excipient; used to probe colloidal stability and its effect on solution viscosity. |

| 96-Well Plate Desalting Columns | Enables high-throughput buffer exchange of multiple antibody variants into numerous formulation conditions. |

| 10 kDa MWCO Centrifugal Filters | For concentrating antibody samples to high concentration (≥100 mg/mL) for viscosity measurements. |

| Reference mAb Control | A well-characterized antibody with known viscosity profile; essential for data normalization and instrument QC. |

| Capillary Viscometer Plates/Chips | Enables low-volume, high-throughput relative viscosity measurements for initial screening. |

Troubleshooting Guides & FAQs

Q1: My Gaussian Process (GP) surrogate model training is failing due to high-dimensional antibody sequence data (one-hot encoded). What are my options? A: High-dimensional one-hot encoded sequences often violate GP assumptions of smoothness and lead to poor kernel matrix conditioning. Solutions include:

- Dimensionality Reduction: Apply Principal Component Analysis (PCA) or use learned embeddings from a pre-trained protein language model (e.g., ESM-2) before model training.

- Model Switching: Use a surrogate model better suited for high-dimensional, discrete data, such as a Random Forest or a Bayesian Neural Network (BNN).

- Kernel Selection: If using a GP, switch to a specialized kernel like the Fisher kernel or a deep kernel that incorporates sequence information.
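To make the dimensionality-reduction option concrete, the following sketch one-hot encodes a few sequences and projects them with PCA computed via SVD. The five sequences are invented fragments, and two components are used only because the toy dataset is tiny; language-model embeddings such as ESM-2 would replace the `one_hot` step in practice.

```python
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def one_hot(seq):
    """Flattened (length x 20) one-hot encoding of an amino acid sequence."""
    vec = np.zeros((len(seq), len(AMINO_ACIDS)))
    for pos, aa in enumerate(seq):
        vec[pos, AMINO_ACIDS.index(aa)] = 1.0
    return vec.ravel()

def pca_reduce(X, n_components):
    """Project centered data onto its top principal components."""
    Xc = X - X.mean(axis=0)
    _, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    explained = S**2 / np.sum(S**2)
    return Xc @ Vt[:n_components].T, explained[:n_components]

# Hypothetical CDR fragments (equal length).
sequences = ["ARDYYGSS", "ARDYWGSS", "ARGYYGSS", "AKDYYGST", "ARDFYGSS"]
X = np.stack([one_hot(s) for s in sequences])      # shape (5, 160)
Z, explained = pca_reduce(X, n_components=2)       # shape (5, 2)
```

The reduced matrix `Z` is dense and low-dimensional, which is better conditioned for a GP kernel than the sparse 160-column one-hot matrix.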

Q2: The predictions from my ensemble of surrogates (GP and Random Forest) disagree significantly for promising candidate sequences. Which prediction should I trust for the next Bayesian optimization iteration? A: Significant disagreement indicates high model uncertainty in that region of the sequence-stability-viscosity landscape. This is an opportunity for active learning.

- Strategy: Use an acquisition function that explicitly balances exploration and exploitation, like Expected Improvement (EI) or Upper Confidence Bound (UCB). The candidate with the highest acquisition function value, not necessarily the best mean prediction, should be selected for the next wet-lab experiment.

- Protocol: Calculate the mean and variance (uncertainty) for each candidate from the ensemble. Feed these into the acquisition function. The candidate maximizing the acquisition function is the optimal next experiment.
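The ensemble protocol can be sketched as follows, using made-up predictions from two surrogates. Treating the spread between only two models as the uncertainty is a crude assumption; a larger ensemble or each model's own predictive variance would normally be folded in.

```python
import numpy as np

# Hypothetical predictions of a scalar objective for three candidates.
gp_pred = np.array([1.2, 0.6, 0.9])
rf_pred = np.array([1.2, 1.4, 0.9])

ensemble = np.stack([gp_pred, rf_pred])
mean = ensemble.mean(axis=0)
std = ensemble.std(axis=0)          # model disagreement as an uncertainty proxy

kappa = 2.0                         # assumed exploration weight
ucb = mean + kappa * std

best_by_mean = int(np.argmax(mean))
best_by_ucb = int(np.argmax(ucb))
```

Here the models agree on candidate 0 and disagree strongly on candidate 1, so the acquisition function picks candidate 1 even though candidate 0 has the higher mean prediction.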

Q3: How do I integrate experimental viscosity measurements (a notoriously noisy assay) into my surrogate model reliably? A: Explicitly modeling measurement noise is crucial.

- Methodology: When configuring your surrogate model (e.g., a GP), set or estimate a noise parameter (alpha in scikit-learn's GaussianProcessRegressor). Use replicate experimental data to estimate the noise level empirically.

- Protocol: For each candidate antibody, perform at least n=3 technical replicates of the viscosity measurement (e.g., using a micro-viscometer). Calculate the variance. Use the average variance across recent batches as a prior for the GP's noise level parameter to stabilize training.
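A minimal sketch of the replicate-based noise estimate, using scikit-learn's `GaussianProcessRegressor`. The feature matrix and replicate values are invented, and the choice to fit on replicate means (so the noise passed as `alpha` is the pooled variance divided by the number of replicates) is one reasonable convention rather than the only one.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import ConstantKernel, Matern

# Hypothetical data: 5 variants, 2 features each, 3 viscosity replicates (cP).
X = np.array([[0.1, 0.9], [0.4, 0.5], [0.5, 0.1], [0.8, 0.7], [0.9, 0.2]])
replicates = np.array([
    [12.1, 12.9, 12.4],
    [9.8, 10.5, 10.1],
    [15.2, 14.1, 14.9],
    [8.0, 8.6, 8.3],
    [11.0, 11.9, 11.3],
])

y_mean = replicates.mean(axis=1)
# Pooled within-variant variance in cP^2 (sample variance, ddof=1).
pooled_var = replicates.var(axis=1, ddof=1).mean()
# The model is fit to replicate means, whose noise variance is pooled_var / n.
noise_on_mean = pooled_var / replicates.shape[1]

# normalize_y is left off so alpha stays in the same units as the targets.
y_centered = y_mean - y_mean.mean()
kernel = ConstantKernel(1.0) * Matern(length_scale=1.0, nu=2.5)
gp = GaussianProcessRegressor(kernel=kernel, alpha=noise_on_mean, random_state=0)
gp.fit(X, y_centered)

mu, sd = gp.predict(X, return_std=True)
```

If `normalize_y=True` is used instead, the noise variance should be divided by the variance of the targets so that it is expressed on the normalized scale.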

Q4: My multi-output surrogate model, predicting both stability (Tm) and viscosity (cP), performs poorly on viscosity. Should I build separate models? A: Not necessarily. A poorly performing multi-output model often indicates mismatched scaling or inappropriate coregionalization.

- Troubleshooting Steps:

- Scale Outputs: Independently standardize the Tm and cP values to have zero mean and unit variance.

- Kernel Review: For a Multi-output GP, ensure you are using an appropriate coregionalization kernel (e.g., Coregionalization) that can learn correlations between the two outputs. If no correlation exists, separate models may be simpler.

- Validate Correlation: Check the Pearson correlation between experimental Tm and cP in your existing data. If |r| < 0.2, separate models are recommended.
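The correlation check takes only a few lines. The Tm and viscosity values below are invented for illustration; the 0.2 cutoff is the one stated above.

```python
import numpy as np

# Hypothetical paired measurements for five variants.
tm = np.array([68.0, 70.0, 72.0, 74.0, 76.0])    # °C
cp = np.array([9.0, 11.0, 12.0, 15.0, 16.0])     # cP

r = float(np.corrcoef(tm, cp)[0, 1])             # Pearson correlation
recommendation = "separate models" if abs(r) < 0.2 else "multi-output model"
```

With so few points the estimate of r is itself uncertain, so the threshold is best treated as a guide rather than a hard rule.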

Research Reagent Solutions

| Item | Function in Surrogate Modeling for Antibody Optimization |

|---|---|

| scikit-learn | Python library providing robust implementations of Random Forest regressors and foundational tools for data scaling/preprocessing for model training. |

| GPyTorch / BoTorch | PyTorch-based libraries for flexible Gaussian Process and Bayesian optimization model building, ideal for custom kernel design and multi-output tasks. |

| ESM-2 (Meta) | Pre-trained protein language model used to generate informative, continuous vector embeddings of antibody variable region sequences, reducing dimensionality. |

| UniRep (JAX) | Alternative protein sequence representation model for generating rich features from amino acid sequences as input for machine learning models. |

| PyMC3 / NumPyro | Probabilistic programming frameworks for building complex, hierarchical Bayesian models (e.g., Bayesian Neural Networks) as surrogates. |

| Pandas / NumPy | Essential for data wrangling, organizing experimental data (sequences, Tm, cP), and preparing it for model ingestion. |

Key Experimental Data

Table 1: Comparison of Surrogate Model Performance on Antibody Stability-Viscosity Dataset (Hypothetical Data)

| Model Type | Kernel/Architecture | Stability (Tm) RMSE (°C) ↓ | Viscosity (cP) RMSE ↓ | Avg. Training Time (min) | Handles High-Dim Seq? |

|---|---|---|---|---|---|

| Gaussian Process | RBF Kernel | 1.05 | 0.82 | 45 | No |

| Gaussian Process | Deep Kernel + ESM-2 | 0.78 | 0.65 | 62 | Yes |

| Random Forest | 100 Trees | 0.95 | 0.71 | 5 | Yes |

| Bayesian Neural Net | 3 Hidden Layers | 0.82 | 0.68 | 110 | Yes |

| Multi-output GP | ICM Kernel | 0.88 | 0.75 | 58 | No |

Table 2: Impact of Noise Modeling on Surrogate Prediction for Viscosity

| Noise Handling Method | Estimated Noise Level (cP²) | Model Log-Likelihood on Test Set ↑ |

|---|---|---|

| None (alpha=1e-6) | Fixed, Low | -125.4 |

| Empirical (from replicates) | 0.11 | -48.7 |

| Marginal Likelihood Maximization | 0.09 | -50.1 |

Experimental Protocol: Training a Robust Surrogate Model

Title: Integrated Workflow for Surrogate Model Training on Antibody Data

Objective: To train a surrogate model that accurately maps antibody sequence features to experimentally measured stability (Tm) and viscosity.

Materials:

- Dataset of antibody variable region sequences and corresponding experimental Tm & viscosity values.

- Python environment with scikit-learn, GPyTorch, pandas, numpy.

- ESM-2 model weights (local or via API).

Procedure:

- Feature Generation: Input antibody sequences into the ESM-2 model. Extract the per-residue or pooled embeddings from the final layer. Use PCA to reduce dimensions to ~50.

- Data Partitioning: Split the dataset into training (70%), validation (15%), and hold-out test (15%) sets, stratifying on binned Tm/viscosity values so that each set spans the full range of both outputs.

- Output Scaling: Independently standardize the Tm and viscosity vectors using StandardScaler. Fit the scaler on the training set only, then apply it unchanged to the validation and test sets.

- Model Configuration: Initialize your chosen surrogate model (e.g., a GP with a Matern 5/2 kernel). For the GP, set the noise constraint based on prior replicate variance (see Table 2).

- Training: Fit the model to the scaled training data (ESM-2 features -> scaled outputs). For GPs, optimize the marginal log-likelihood. For Random Forests, use out-of-bag error.

- Validation: Predict on the validation set. Inverse-transform the predictions to original units. Calculate RMSE and Mean Absolute Error (MAE).

- Hyperparameter Tuning: Adjust model complexity (kernel lengthscales, number of trees, network layers) based on validation performance to avoid overfitting.

- Final Evaluation: Retrain on combined training+validation data. Report final performance metrics on the untouched test set.
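The partitioning, scaling, and validation steps can be sketched with NumPy alone. The data are synthetic, the split is plain random rather than stratified, and an ordinary least-squares fit is used purely as a placeholder for the surrogate model so the example stays short; the point is the leakage-free scaling and the inverse transform before computing errors.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 100
X = rng.normal(size=(n, 5))     # stands in for PCA-reduced sequence embeddings
tm = 70.0 + 2.0 * X[:, 0] - X[:, 1] + rng.normal(0.0, 0.5, n)   # synthetic Tm (°C)

# 70 / 15 / 15 split on shuffled indices.
idx = rng.permutation(n)
n_train, n_val = n * 70 // 100, n * 15 // 100
train, val, test = idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]

# Scaling statistics come from the training set only.
y_mu, y_sd = tm[train].mean(), tm[train].std()

def scale(y):
    return (y - y_mu) / y_sd

def unscale(z):
    return z * y_sd + y_mu

def add_intercept(A):
    return np.c_[A, np.ones(len(A))]

# Placeholder surrogate: least squares on the scaled training targets.
w, *_ = np.linalg.lstsq(add_intercept(X[train]), scale(tm[train]), rcond=None)

# Validate in original units.
pred_val = unscale(add_intercept(X[val]) @ w)
rmse = float(np.sqrt(np.mean((pred_val - tm[val]) ** 2)))
mae = float(np.mean(np.abs(pred_val - tm[val])))
```

The test indices are left untouched here, mirroring the protocol's rule that the hold-out set is used only once for the final report.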

Model Integration & Selection Workflow Diagram

Surrogate Model Decision Logic Diagram

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During a Bayesian optimization (BO) run for mAb formulation, my acquisition function gets "stuck," repeatedly selecting similar points without exploring new regions of the viscosity-stability space. How can I address this?

A: This indicates a potential over-exploitation issue. Recommended actions:

- Check Kernel Hyperparameters: An excessively large length-scale in the Matern or RBF kernel can over-smooth the surrogate model, causing it to miss local optima. Re-optimize hyperparameters (e.g., via marginal log-likelihood maximization) or consider using an automatic relevance determination (ARD) kernel.

- Adjust Acquisition Function Parameters: If using Expected Improvement (EI), increase the exploration parameter (ξ). A typical range is 0.01 to 0.1. Systematically increase ξ and monitor the diversity of selected points.

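- Switch Acquisition Functions: Temporarily switch to an Upper Confidence Bound (UCB) with a high β (e.g., β=4-6) for a few iterations to force exploration, or use a randomized strategy like Thompson Sampling, which selects each point by optimizing a random draw from the posterior and therefore diversifies selections naturally.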

- Add Manual Exploration Points: Inject a random or space-filling design point into the next batch to perturb the optimization loop.

Q2: The predicted mean from my Gaussian Process (GP) model for viscosity appears accurate, but the uncertainty (variance) is unrealistically low, causing poor exploration. What could be wrong?

A: Unrealistically low uncertainty often stems from inappropriate noise assumptions.

- Noise Model Mis-specification: Your experimental noise may be higher than assumed. Explicitly model heteroscedastic (input-dependent) noise if your measurement error varies across the formulation space (e.g., higher error at high viscosity).

- Kernel Choice: The default kernel might be too rigid. Implement a composite kernel (e.g., RBF + WhiteKernel) to capture both the smooth function and independent noise. Ensure the WhiteKernel's noise level parameter is being optimized.

- Data Pre-processing: Verify that the viscosity and stability data are scaled appropriately (e.g., standardized). Features on vastly different scales can distort distance calculations in the kernel.

Q3: When optimizing for both low viscosity and high stability, how do I handle conflicting objectives within the acquisition function?

A: For multi-objective BO, you must use a specialized acquisition function.

- Methodology: Employ the Expected Hypervolume Improvement (EHVI). This is the gold standard for Pareto front discovery. It measures the expected increase in the hypervolume dominated by the Pareto front after adding a new point.

- Protocol: After each experiment, update the GP models for both viscosity and stability. Calculate the current Pareto front from all observed data. The EHVI acquisition function then evaluates candidate formulations by how much they are expected to improve this front. Select the point maximizing EHVI for the next experiment.

- Alternative: For a simpler scalarized approach, use the Weighted Sum method with a standard EI. Define a scalar objective: Objective = w * (Stability Score) - (1-w) * log(Viscosity). Optimize this single objective with BO. Vary the weight w across multiple BO runs to map the trade-off.
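The quantities behind these two approaches, the Pareto front, its hypervolume, and the weighted-sum objective, can be illustrated in plain Python. The observations and the reference point are invented; computing EHVI itself requires posterior integration and is best left to a library such as BoTorch.

```python
import math

def pareto_front(points):
    """Non-dominated (stability, viscosity) pairs: higher stability, lower viscosity."""
    front = []
    for i, (s_i, v_i) in enumerate(points):
        dominated = any(
            s_j >= s_i and v_j <= v_i and (s_j > s_i or v_j < v_i)
            for j, (s_j, v_j) in enumerate(points) if j != i
        )
        if not dominated:
            front.append((s_i, v_i))
    return front

def hypervolume_2d(front, ref_stability, ref_viscosity):
    """Area dominated by the front relative to a reference (worst-case) point."""
    hv, prev_gain = 0.0, 0.0
    for s, v in sorted(front, key=lambda p: p[0], reverse=True):
        gain = ref_viscosity - v
        hv += (s - ref_stability) * (gain - prev_gain)
        prev_gain = gain
    return hv

def scalarized(stability_score, viscosity_cp, w):
    """Weighted-sum objective from the text; stability_score should be pre-scaled."""
    return w * stability_score - (1.0 - w) * math.log(viscosity_cp)

# Hypothetical observations: (Tm in °C, viscosity in cP).
observed = [(70, 8), (72, 12), (75, 20), (69, 15), (71, 9)]
front = pareto_front(observed)
hv = hypervolume_2d(front, ref_stability=65, ref_viscosity=25)
```

A candidate is valuable under EHVI to the extent that adding it would be expected to increase `hv`; the weighted sum instead collapses the two objectives into one number for a fixed `w`.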

Key Experimental Protocols

Protocol 1: Benchmarking Acquisition Functions for mAb Formulation

- Design: Create a historical dataset of 50-100 formulations with measured viscosity (cP, at 10 mg/mL, 20°C) and stability (% monomer after 4 weeks at 40°C).

- Surrogate Model: Fit independent GP models with Matern 5/2 kernels to viscosity and stability data. Use 5-fold cross-validation to validate model predictions.

- BO Loop Simulation: Start each BO run from a randomly selected subset of 10 initial points. Iteratively "select" the next point using different acquisition functions (EI, UCB, Probability of Improvement, Thompson Sampling). Use the full historical dataset to simulate the "experimental result" for the selected point.

- Metric: Track the log hypervolume improvement over iterations. The acquisition function leading to the fastest hypervolume growth is optimal for your problem.

Protocol 2: Calibrating the Exploration-Exploitation Trade-off Parameter (ξ for EI)

- Initial Run: Conduct a BO run with a default ξ=0.01.

- Analysis: Plot the distance of each newly selected point to its nearest neighbor in the observed dataset. Calculate the moving average of this distance.

- Adjustment: If the moving average distance drops below a threshold (e.g., 10% of the feature space diameter) for 3 consecutive iterations, increase ξ by a factor of 1.5 for the next iteration.

- Validation: Run two parallel BO experiments on the same mAb: one with static ξ=0.01 and one with the adaptive ξ protocol from steps 1-3. Compare the Pareto fronts obtained after 30 iterations.
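The adaptive rule in Protocol 2 can be written as a small update function. The moving-average window of 3 is an assumption (the protocol does not state one), as are the example distances and the four-dimensional unit-cube search space used to derive the threshold.

```python
import math

def moving_average(values, window):
    """Trailing moving average; one value per full window."""
    return [sum(values[i - window + 1:i + 1]) / window
            for i in range(window - 1, len(values))]

def update_xi(xi, nn_distances, threshold, ma_window=3, patience=3, factor=1.5):
    """Increase xi if the moving average stayed below threshold for `patience` iterations."""
    ma = moving_average(nn_distances, ma_window)
    if len(ma) >= patience and all(m < threshold for m in ma[-patience:]):
        return xi * factor
    return xi

# Hypothetical nearest-neighbor distances of successive selections (normalized space).
distances = [0.30, 0.25, 0.12, 0.08, 0.06, 0.05, 0.04]

# Threshold: 10 % of the diameter of a 4-dimensional unit cube.
threshold = 0.10 * math.sqrt(4)

xi_next = update_xi(0.01, distances, threshold)
```

In this example the selections have collapsed into a small neighborhood, so ξ is raised from 0.01 to 0.015 for the next iteration.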

Data Presentation

Table 1: Performance Comparison of Acquisition Functions in a Simulated mAb Optimization. Scenario: Maximizing Stability & Minimizing Viscosity over 40 iterative experiments.

| Acquisition Function | Final Hypervolume (a.u.) | Iterations to Reach 90% Max HV | % of Selected Points in Unexplored Regions* |

|---|---|---|---|

| Expected Improvement (EI) | 12.7 | 28 | 35% |

| Upper Confidence Bound (UCB, β=2) | 11.9 | 33 | 52% |

| Probability of Improvement (PI) | 10.5 | 37 | 22% |

| Thompson Sampling (TS) | 12.4 | 26 | 48% |

| q-EHVI (Multi-Objective) | 14.2 | 24 | 41% |

*Unexplored Region: Distance > 0.2 (normalized space) from all previous points.

Table 2: Impact of EI Exploration Parameter (ξ) on Optimization Outcome. Data from a single mAb formulation screen targeting viscosity < 5 cP.

| ξ Value | Final Best Viscosity (cP) | Stability at that Point (% monomer) | Total Distinct Formulation Clusters Explored |

|---|---|---|---|

| 0.001 | 4.8 | 94.2 | 3 |

| 0.01 | 4.5 | 93.8 | 7 |

| 0.1 | 5.1 | 95.1 | 11 |

| Adaptive (0.01-0.3) | 4.4 | 94.5 | 9 |

Visualizations

Title: Bayesian Optimization Cycle for mAb Development

Title: Acquisition Function Balancing Exploration vs Exploitation

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Primary Function in BO for mAb Formulation |

|---|---|

| Histidine Buffer System (e.g., L-Histidine/Histidine-HCl) | A common pH buffer (range 5.5-6.5) providing a controlled ionic environment for screening excipient effects on viscosity and stability. |

| Excipient Library (Sucrose, Trehalose, Arginine-HCl, Proline, NaCl, PS20/PS80) | Key formulation components whose concentrations become the input variables (dimensions) for the Bayesian optimization search space. |

| High-Throughput Viscosity Analyzer (e.g., μVISC, DLS-based) | Enables rapid, low-volume viscosity measurement of hundreds of formulation candidates, generating the critical quantitative data for the GP model. |

| Stability-Indicating Assays (SEC-HPLC, DSC, DLS for subvisible particles) | Provide the stability/output metrics (e.g., % monomer, Tm, kD) for the multi-objective optimization, often after stressed storage. |

| Automated Liquid Handler | Essential for precise, high-throughput preparation of the diverse formulation combinations suggested by the BO algorithm. |

| BO Software Platform (e.g., BoTorch, GPyOpt, custom Python with scikit-learn & GPflow) | Provides the computational framework for building GP models, calculating acquisition functions (EI, UCB, EHVI), and managing the iterative optimization loop. |

Technical Support Center: Troubleshooting & FAQs

Q1: Our Bayesian optimization (BO) loop is suggesting antibody variants with very high predicted stability but also a high predicted viscosity risk. Should we proceed with synthesis? A1: Yes, but with caution. The BO algorithm is exploring the trade-off frontier. Validate these "high-risk, high-reward" candidates with in silico viscosity predictors (e.g., spatial charge map, CoVariance Identification [CVI] score) before moving to wet-lab. If predictors concur, synthesize a small batch for initial viscosity measurement (e.g., micro-scale viscosity assessment) before full expression.

Q2: During wet-lab validation, the measured viscosity of a variant is significantly higher than the BO model predicted. What could be the cause? A2: Common causes and solutions:

- Feature Miscalibration: The isoelectric point (pI) or charge features used in the model may not fully capture the specific self-interaction. Re-check the calculated molecular features for errors.

- Concentration Discrepancy: Ensure the measured protein concentration is exact. Use orthogonal methods (A280, SEC-MALS) for confirmation.

- Buffer Conditions: Verify that the formulation buffer (pH, ionic strength, excipients) matches the in silico simulation conditions exactly.

- Assay Variability: Perform the viscosity measurement (e.g., on a viscometer) in triplicate. High variance may indicate instrument or sample handling issues.

Q3: The stability (e.g., Tm from DSF) of a synthesized variant is much lower than predicted, breaking the expected trade-off. How should we update the BO model? A3: This is critical feedback for the BO loop.

- Confirm Data Fidelity: Repeat the stability assay to rule out experimental error.

- Enter Data Point with Confidence Metric: Input the new (sequence, measured stability, measured viscosity) data pair into the BO database. Tag it with a "high confidence" flag if the assay was robust.

- Adjust Model Hyperparameters: Retrain the Gaussian Process model. The unexpected result may indicate a need to adjust the length-scale hyperparameters, suggesting the model was over-confident in a region of the sequence space.

- Re-run Optimization: The updated model will down-weight this region of the design space, so subsequent suggestions should be better calibrated.

Q4: We are experiencing slow progress in the BO loop. The algorithm seems to be "exploiting" rather than "exploring" the design space. A4: Tune the acquisition function.

- Problem: Over-use of Expected Improvement (EI) can lead to exploitation.

- Solution: Shift to Upper Confidence Bound (UCB) with a higher kappa parameter (e.g., increase from 2 to 4) to weight exploration more heavily for the next 1-2 design rounds. Alternatively, use a mixed strategy (e.g., 70% EI, 30% random query) for the next iteration.

Q5: How do we handle failed protein expression or purification for a suggested variant? A5: This is a common bottleneck.

- Immediate Action: Assign a "failed expression" flag and input a penalty value for stability and viscosity (e.g., a very low stability and very high viscosity) into the BO dataset. This actively teaches the model to avoid sequences with poor developability.

- Root Cause: Run quick in silico checks on the failed sequence: check for aggregation-prone regions (APR) or unusual codon usage. This can inform a filter for future suggestions.

- Protocol: Implement a high-throughput micro-expression screen (e.g., 1 mL deep-well plate) for all suggested variants before moving to large-scale purification to catch expression issues early.

Key Experimental Protocols

Protocol 1: High-Throughput Stability Assessment (Differential Scanning Fluorimetry - DSF)

- Prepare Samples: Dilute purified antibody variant to 0.2 mg/mL in formulation buffer. Mix 25 µL of protein with 25 µL of 10X SYPRO Orange dye.

- Plate Setup: Load into a 96-well PCR plate in triplicate. Include a buffer-only + dye control.

- Run Assay: Using a real-time PCR instrument, ramp temperature from 25°C to 95°C at a rate of 1°C/min, with fluorescence measurements (excitation/emission ~470/570 nm) at each step.

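- Analyze Data: Plot the first derivative of fluorescence (dF/dT) vs. temperature. The melting temperature (Tm) is the temperature at the derivative peak: a maximum of dF/dT, or equivalently a minimum when -dF/dT is plotted. Report the mean ± SD of triplicates.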
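The derivative analysis can be sketched on a synthetic melt curve, taking Tm as the temperature where dF/dT is largest (the steepest point of the unfolding transition; on a -dF/dT plot the same point appears as a minimum). The sigmoid curve, its 70 °C midpoint, and the 0.5 °C step are assumptions for illustration; real traces usually need smoothing first.

```python
import numpy as np

# Synthetic melt curve sampled every 0.5 °C from 25 to 95 °C.
temps = np.arange(25.0, 95.0 + 0.5, 0.5)
assumed_tm = 70.0
fluorescence = 1.0 / (1.0 + np.exp(-(temps - assumed_tm) / 1.5))

# First derivative by finite differences.
dF_dT = np.gradient(fluorescence, temps)

# Tm estimate: temperature at the maximum slope of the unfolding transition.
tm_estimate = float(temps[np.argmax(dF_dT)])
```

Running the same calculation on each of the three replicate wells gives the values from which the mean ± SD is reported.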

Protocol 2: Micro-Scale Viscosity Measurement (Dynamic Light Scattering - DLS)

- Sample Preparation: Buffer-exchange antibody variants into the target formulation buffer and concentrate to a target high concentration (e.g., 150 mg/mL) using centrifugal concentrators. Confirm concentration via A280.

- Measurement: Load 15 µL of sample into a glass capillary or low-volume cuvette. Place in a DLS instrument equipped with a viscosity measurement module.

- Data Collection: Measure the diffusion coefficient at 25°C. The apparent viscosity is derived from the Stokes-Einstein equation, relative to a buffer standard.

- Quality Control: Ensure the intensity autocorrelation function fits a single major species model. Polydispersity >25% may indicate aggregation invalidating the viscosity readout.
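The Stokes-Einstein step can be made explicit with a short calculation. This sketch assumes the diffusion coefficient is measured for a probe of known hydrodynamic radius (for example, tracer beads), which the protocol above does not specify; the radius and diffusion coefficients are illustrative numbers.

```python
import math

K_B = 1.380649e-23   # Boltzmann constant, J/K

def viscosity_from_diffusion(diff_coeff_m2_s, radius_m, temp_k=298.15):
    """Stokes-Einstein: eta = k_B * T / (6 * pi * R_h * D), in Pa·s."""
    return K_B * temp_k / (6.0 * math.pi * radius_m * diff_coeff_m2_s)

# Hypothetical probe of 50 nm radius, measured in buffer and in the mAb sample at 25 °C.
radius = 50e-9
d_buffer = 4.9e-12    # m^2/s
d_sample = 4.9e-13    # m^2/s

eta_buffer = viscosity_from_diffusion(d_buffer, radius)
eta_sample = viscosity_from_diffusion(d_sample, radius)
relative_viscosity = eta_sample / eta_buffer   # equals d_buffer / d_sample
```

Because radius and temperature cancel in the ratio, the relative viscosity depends only on the two diffusion coefficients, which is why the buffer standard is measured alongside every sample.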

Protocol 3: Bayesian Optimization Iteration Update

- Data Compilation: Assemble a table with columns: [VariantID, SequenceFeatures (pI, hydrophobicity index, etc.), MeasuredTm, MeasuredViscosity].

- Data Normalization: Scale all input features and target outputs (Tm, viscosity) to zero mean and unit variance.

- Model Retraining: Using a Gaussian Process library (e.g., GPyTorch, scikit-learn), train a model with a Matern kernel on all data.

- Acquisition Calculation: Compute the Upper Confidence Bound (UCB) for all candidate sequences in the pre-enumerated library.

- Selection: Choose the top 3-5 sequences with the highest UCB scores for the next round of synthesis and validation.

Table 1: Example Closed-Loop Experiment Results (Cycle 3)

| Variant ID | Predicted Tm (°C) | Measured Tm (°C) | Predicted Viscosity (cP) | Measured Viscosity (cP) | Expression Yield (mg/L) |

|---|---|---|---|---|---|

| BO-3-01 | 72.5 | 71.8 ± 0.4 | 12.1 | 14.5 ± 0.8 | 45 |

| BO-3-02 | 69.1 | 68.3 ± 0.6 | 8.2 | 8.0 ± 0.3 | 52 |

| BO-3-03 | 75.2 | 70.1 ± 1.1 | 15.5 | 22.7 ± 1.5 | 28 |

| Parent | 68.0 | 68.0 | 15.0 | 15.0 | 60 |

Table 2: Key Bayesian Optimization Hyperparameters

| Parameter | Symbol | Value Used | Function |

|---|---|---|---|

| Acquisition Function | α(x) | UCB (κ=2.5) | Balances exploration/exploitation |

| Kernel | k(x,x') | Matern (ν=2.5) | Models smoothness of the objective function |

| Noise Prior | σ² | 0.01 | Accounts for experimental measurement noise |

| Training Iterations | - | 1000 | For Gaussian Process model convergence |

Diagrams

Closed-Loop Bayesian Optimization Workflow

Wet-Lab Validation Protocol Steps

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Closed-Loop Experiment |

|---|---|

| HEK293 Expi or CHO-S Cells | Mammalian expression systems for transient or stable production of human antibody variants, ensuring proper folding and post-translational modifications. |

| Protein A Affinity Resin | For high-purity, high-yield capture of IgG antibodies from cell culture supernatant in a single step. |

| Size-Exclusion Chromatography (SEC) Column | Critical for polishing purification, removing aggregates, and exchanging buffer into the desired formulation for stability/viscosity testing. |

| SYPRO Orange Dye | Fluorescent dye used in Differential Scanning Fluorimetry (DSF) to monitor protein unfolding as a function of temperature, yielding Tm. |

| Standardized Formulation Buffer Kits | Pre-mixed buffers (e.g., Histidine-Sucrose at various pHs) to ensure consistency in viscosity measurements across all variants. |

| Dynamic Light Scattering (DLS) Plate Reader | Enables low-volume, high-throughput measurement of diffusion coefficients and derived viscosity for concentrated antibody solutions. |

| Codon-Optimized Gene Fragments | For rapid synthesis of variant antibody sequences identified by the BO algorithm, accelerating the build phase of the cycle. |

| Bayesian Optimization Software (e.g., BoTorch, GPyOpt) | Python libraries to build, train, and query the Gaussian Process models that drive the iterative design process. |

Troubleshooting Guides & FAQs

Q1: Our lead antibody candidate shows acceptable potency but exhibits unacceptably high viscosity (>50 cP at 150 mg/mL) for subcutaneous delivery. What are the primary sequence or structural attributes we should investigate first?

A: High viscosity in mAb solutions is often linked to self-association driven by specific molecular interactions. Primary investigation targets should include:

- Net Surface Charge and Charge Distribution: A low net positive charge or asymmetric charge patches can increase viscosity. Calculate the isoelectric point (pI) and analyze 3D electrostatic surface maps.

- Hydrophobic Patches: Surface-exposed hydrophobic residues, particularly in the Complementarity-Determining Regions (CDRs), can drive aggregation and increase viscosity. Use tools like CamSol or Hydrophobic Interaction Chromatography (HIC) retention time to assess.

- Flexible Regions: High conformational entropy in the Fab or hinge region can contribute. Analyze molecular dynamics simulations or hydrogen-deuterium exchange mass spectrometry (HDX-MS) data for flexible segments.

Experimental Protocol: Cross-Interaction Chromatography (CIC) for Assessing Self-Association Potential

- Column: Use a commercially available or in-house prepared human IgG column (e.g., an NHS-activated HiTrap column coupled with pooled human polyclonal IgG).

- Sample Prep: Dialyze your antibody candidate into a standard buffer (e.g., 20 mM Histidine, pH 6.0).

- Run Conditions: Load 50 µg of antibody at a low flow rate (0.2 mL/min). Elute with a linear pH gradient from pH 6.0 to 2.5 over 40 column volumes.

- Analysis: Monitor UV absorbance at 280 nm. A later elution peak (lower pH) indicates stronger self-interaction. Compare the retention time to a non-viscous control antibody.

Q2: We have generated a library of variants. How should we set up a Bayesian optimization loop to efficiently screen for the optimal stability-viscosity trade-off?

A: Bayesian optimization (BO) is ideal for navigating high-dimensional biologic design spaces with expensive measurements (like viscosity). The loop is structured as follows:

Experimental Protocol: Bayesian Optimization Workflow for mAb Engineering

- Initial Dataset: Characterize a small, diverse set of variants (20-50) for key attributes: Viscosity (at target concentration, e.g., 150 mg/mL), Thermal Stability (Tm1, Tm2 by DSC), Binding Affinity (KD by SPR or BLI), and Expression Titer.

- Model Training: Use a Gaussian Process (GP) model to learn the complex relationships between your input features (e.g., sequence descriptors, physicochemical properties) and the multi-dimensional output space (viscosity, stability, etc.).

- Acquisition Function: Apply an acquisition function (e.g., Expected Improvement) to the GP model. This function identifies the next most informative variant to test by balancing exploration (testing in uncertain regions of the space) and exploitation (testing near predicted optima).

- Iteration: The selected variant is experimentally characterized, and its data is added to the training set. The GP model is then retrained, and the cycle of model training, acquisition, and characterization repeats until a candidate meeting all target profiles is identified.
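The loop above can be sketched in a few dozen lines. This is a minimal illustration, not a production implementation: it assumes a single scalar score to maximize, a hypothetical two-dimensional descriptor per variant, an RBF-kernel GP with fixed hyperparameters, and a synthetic `measure` function standing in for the wet-lab assays.

```python
import math
import numpy as np

def rbf(a, b, lengthscale=0.3):
    sq = ((a[:, None, :] - b[None, :, :]) ** 2).sum(-1)
    return np.exp(-0.5 * sq / lengthscale**2)

def gp_predict(x_tr, y_tr, x_q, noise_var=1e-3):
    """Posterior mean and std of a zero-mean GP with fixed hyperparameters."""
    k = rbf(x_tr, x_tr) + noise_var * np.eye(len(x_tr))
    k_q = rbf(x_tr, x_q)
    mean = k_q.T @ np.linalg.solve(k, y_tr)
    var = 1.0 - np.einsum("ij,ij->j", k_q, np.linalg.solve(k, k_q))
    return mean, np.sqrt(np.clip(var, 1e-12, None))

def expected_improvement(mean, std, best):
    """Expected Improvement for maximization."""
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf

def measure(x):
    """Hypothetical stand-in for the wet-lab measurement of one variant."""
    return float(np.exp(-8.0 * ((x[0] - 0.7) ** 2 + (x[1] - 0.3) ** 2)))

rng = np.random.default_rng(0)
library = rng.random((200, 2))                    # candidate variant descriptors
tested = list(rng.choice(len(library), size=5, replace=False))  # initial dataset
scores = [measure(library[i]) for i in tested]

for _ in range(15):                               # experimental budget
    y = np.array(scores)
    mean, std = gp_predict(library[tested], y - y.mean(), library)  # model training
    ei = expected_improvement(mean + y.mean(), std, y.max())        # acquisition
    ei[tested] = -np.inf                          # never re-test a variant
    nxt = int(np.argmax(ei))
    tested.append(nxt)                            # "characterize" and add to data
    scores.append(measure(library[nxt]))

best_variant = tested[int(np.argmax(scores))]
```

A real campaign would model viscosity, Tm, KD, and titer jointly or as constraints; collapsing them into one score is a simplification made here to keep the loop readable.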

Q3: During formulation development, viscosity of our optimized candidate spikes unexpectedly in a specific buffer condition (e.g., phosphate vs. histidine). What is the likely mechanism and how can we diagnose it?

A: This is typically indicative of a charge-mediated reversible self-association. Phosphate ions can specifically interact with positively charged residues (Arg, Lys, His), potentially bridging antibody molecules.

Diagnostic Protocol: Ion-Specific Viscosity Profiling

- Buffer Matrix: Prepare the antibody at 150 mg/mL in a series of buffers: 20 mM Histidine-HCl (pH 6.0), 20 mM Sodium Phosphate (pH 6.0), and 20 mM Citrate (pH 6.0). Keep ionic strength constant by adding NaCl.

- Measurement: Measure viscosity in triplicate using a micro-viscometer (e.g., capillary-based or rheometer with cone-plate geometry) at 25°C.

- Dynamic Light Scattering (DLS): Run DLS on the same samples to measure the hydrodynamic radius (Rh). A significant increase in Rh in phosphate buffer relative to histidine is consistent with reversible oligomerization.

- Mitigation: If phosphate is necessary, consider fine-tuning pH or adding excipients like arginine-HCl (100-200 mM), which can disrupt electrostatic interactions.

Data Presentation

Table 1: Bayesian Optimization Iteration Results for Lead Candidate ABC123

| Variant ID | CDR Mutations | Viscosity @ 150 mg/mL (cP) | Tm1 (°C) | KD (nM) | Expression (g/L) | Iteration |

|---|---|---|---|---|---|---|

| WT | -- | 58.2 | 67.5 | 5.1 | 2.1 | Initial |

| V-12 | H100aG, S100bR | 35.6 | 66.1 | 5.5 | 2.0 | 1 |

| V-45 | S31T, H102eY | 25.4 | 68.3 | 4.8 | 1.8 | 2 |

| V-78 | S31T, H100aG, H102eY | 19.1 | 69.0 | 5.0 | 2.3 | 3 (Optimal) |

| V-79 | S31T, H100aR | 42.1 | 65.5 | 120.0 | 2.1 | 3 |
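The selection logic behind Table 1 can be expressed as a constrained minimization: filter out variants that lose potency or stability, then pick the lowest viscosity. The sketch below uses the table's values; the two thresholds (KD within 2-fold of WT, Tm1 no more than 1°C below WT) are illustrative assumptions, not criteria stated in the study.

```python
# Values copied from Table 1; units: cP, °C, nM, g/L.
variants = [
    {"id": "WT",   "visc": 58.2, "tm1": 67.5, "kd": 5.1,   "expr": 2.1},
    {"id": "V-12", "visc": 35.6, "tm1": 66.1, "kd": 5.5,   "expr": 2.0},
    {"id": "V-45", "visc": 25.4, "tm1": 68.3, "kd": 4.8,   "expr": 1.8},
    {"id": "V-78", "visc": 19.1, "tm1": 69.0, "kd": 5.0,   "expr": 2.3},
    {"id": "V-79", "visc": 42.1, "tm1": 65.5, "kd": 120.0, "expr": 2.1},
]

wt = variants[0]
MAX_KD_FOLD = 2.0   # assumed potency constraint: KD <= 2x WT
MAX_TM_DROP = 1.0   # assumed stability constraint: Tm1 >= WT - 1.0 °C

def is_feasible(v):
    """True if the variant keeps potency and thermal stability near WT."""
    return v["kd"] <= MAX_KD_FOLD * wt["kd"] and v["tm1"] >= wt["tm1"] - MAX_TM_DROP

feasible = [v for v in variants if is_feasible(v)]
best = min(feasible, key=lambda v: v["visc"])
print(best["id"])  # V-78
```

Under these assumed thresholds, V-79 is rejected for its loss of binding (120 nM) and V-12 for its Tm1 drop, leaving V-78 as the lowest-viscosity feasible variant.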

Table 2: Formulation Screen Impact on Optimal Variant (V-78)

| Formulation Buffer (pH 6.0) | Ionic Strength (mM) | Viscosity (cP) | Aggregation (%) SEC-HPLC | Observation |

|---|---|---|---|---|

| 20 mM Histidine-HCl | 50 (w/ NaCl) | 19.1 | 0.8 | Clear, low viscosity |

| 20 mM Sodium Phosphate | 50 | 32.7 | 0.9 | Clear, elevated viscosity |

| 20 mM Citrate | 50 | 21.5 | 0.8 | Clear, low viscosity |

| 20 mM Histidine-HCl + 150mM Arg-HCl | 200 | 15.2 | 0.7 | Clear, lowest viscosity |

Diagrams

Bayesian Optimization Workflow for mAb Screening

Mechanism of Ion-Mediated Antibody Self-Association

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Optimization | Example/Notes |

|---|---|---|

| Microcapillary Viscometer | Measures viscosity of small-volume (µL), high-concentration protein samples. Essential for high-throughput screening. | ViscoJet 2 (RheoSense). Requires < 50 µL sample. |

| Differential Scanning Calorimetry (DSC) | Quantifies thermal stability (Tm) of Fab and Fc domains. A key constraint in optimization. | MicroCal PEAQ-DSC. Used for measuring Tm1 & Tm2. |

| Surface Plasmon Resonance (SPR) / BLI | Measures binding kinetics (KD, kon, koff) to ensure potency is maintained during engineering. | Biacore 8K (SPR) or Octet RED384 (BLI). |

| Cross-Interaction Chromatography (CIC) Column | Pre-packed column for assessing self-association propensity via HPLC. Predictive of viscosity. | YMC BioPro CIC Column or in-house prepared human IgG column. |

| High-Throughput Protein Expression System | Rapid production of variant libraries for initial screening (e.g., in 96-well format). | Expi293F or CHO transient systems; Ambr 250 bioreactors. |

| Bayesian Optimization Software | Implements Gaussian Process modeling and acquisition functions to guide iterative design. | Custom Python (GPyTorch, BoTorch) or a commercial experiment-design platform. |

| Arginine-HCl | Common formulation excipient that suppresses viscosity via competitive charge shielding and hydrophobic interaction disruption. | Use at 100-250 mM in histidine buffer. |

Overcoming Pitfalls in Bayesian Optimization: Advanced Troubleshooting and Model Refinement

Troubleshooting Guides & FAQs

Noisy Data

Q1: Our high-throughput stability (Tm) measurements show high replicate variance, corrupting the BO surrogate model. How can we diagnose and mitigate this?

A1: Noisy label data, common in biophysical assays, misleads the Gaussian Process (GP). Implement the following protocol:

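- Diagnosis: For a control sample, run ≥10 replicates in the same assay plate and calculate the standard deviation (SD) and Coefficient of Variation (CV). For viscosity, a CV > 10% indicates problematic noise levels. For Tm, judge the SD in °C instead, because CV understates noise on a temperature scale: an SD of 2°C at a Tm of 70°C is a CV below 3%.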

- Mitigation - Replicate Strategy: Actively allocate a portion of your experimental budget for replicates. A suggested rule is to perform 3 replicates for points the GP model is most uncertain about (high prediction variance).

- Mitigation - Noise-Aware Modeling: Explicitly model the noise by giving the GP a noise term, for example a `WhiteKernel` in scikit-learn, or a learned or fixed-noise (heteroscedastic) Gaussian likelihood in `GPyTorch` or `BoTorch`. This prevents the model from overfitting to spurious measurements.
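The diagnosis step reduces to a few summary statistics per sample. The sketch below computes them with the standard library; the replicate values are hypothetical, and the idea of passing the variance of the mean to the GP as a per-point noise level is one common way to use these numbers, stated here as an assumption rather than a prescribed method.

```python
from statistics import mean, stdev

def replicate_noise(values):
    """Summarize replicate measurements of one sample."""
    m = mean(values)
    sd = stdev(values)  # sample standard deviation (n - 1 denominator)
    return {
        "mean": m,
        "sd": sd,
        "cv_percent": 100.0 * sd / m,
        # Variance of the replicate mean: a candidate per-point noise
        # level for a fixed-noise (heteroscedastic) GP likelihood.
        "var_of_mean": sd**2 / len(values),
    }

# Hypothetical control: 10 viscosity replicates (cP) from one plate.
control = [10.0, 11.0, 9.0, 10.5, 9.5, 10.2, 9.8, 10.4, 9.6, 10.0]
stats = replicate_noise(control)
noisy = stats["cv_percent"] > 10.0  # threshold from the diagnosis step
```

Samples with few replicates give unstable SD estimates, so pooling the SD across similar samples may be preferable when only two or three replicates are available.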

Q2: What experimental protocols minimize noise in antibody viscosity measurements?

A2: Key methodologies for consistent capillary viscosity assessment:

- Instrument: Use a temperature-controlled (e.g., 25°C ± 0.1°C) micro-viscometer (e.g., Viscologic).

- Sample Prep: Dialyze all samples into an identical formulation buffer (e.g., Histidine-Sucrose) to eliminate ionic strength artifacts.

- Control: Include a monoclonal antibody with known low viscosity (e.g., 5 cP at 50 mg/mL) as an inter-plate calibrator.

- Replicates: Perform four consecutive measurements per sample, discarding the first as a conditioning run, and report the mean of the remaining three.
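The replicate rule is simple enough to encode directly, which helps keep data handling consistent across plates. A minimal sketch, with a hypothetical function name and example readings:

```python
from statistics import mean

def viscosity_from_runs(readings_cp):
    """Mean of runs 2-4; the first run is treated as a conditioning run."""
    if len(readings_cp) != 4:
        raise ValueError("expected exactly four consecutive readings")
    return mean(readings_cp[1:])

# Hypothetical readings in cP; the first value is discarded.
reported = viscosity_from_runs([16.0, 14.0, 15.0, 16.0])
print(reported)  # 15.0
```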

Q3: How do we quantify noise to adjust our BO acquisition function?

A3: Integrate estimated noise levels directly into the Expected Improvement (EI) or Upper Confidence Bound (UCB). First, characterize noise per experimental region:

| Experimental Condition | Suggested Replicates (n) | Estimated SD (σ) | Impact on Acquisition Function |

|---|---|---|---|

| Initial Random Screen | 2 | High (~2°C for Tm) | Use Noisy EI, increase exploration parameter (ξ). |

| High-Promise Region (Exploitation) | 4 | Medium (~1°C for Tm) | Standard EI. |

| High-Uncertainty Region (Exploration) | 3 | Propagated from model | UCB with β tuned for noise. |

Table 1: Replication strategy and noise integration for BO.

Model Mismatch

Q4: The GP model with a standard RBF kernel fails to capture sharp "cliffs" in the viscosity landscape when a single residue is mutated. How can we fix this?

A4: This is a classic kernel mismatch. The smooth RBF kernel cannot model discontinuous relationships. Implement a composite kernel:

- `RBFKernel`: Models the smooth, global effects across most dimensions.

- `Matern12Kernel`: Applied to the specific dimension (e.g., charge at position 103H) known to cause sharp changes. This kernel allows for less smooth, more abrupt functions.

- `*` (Multiplication): Creates an interaction between the smooth and non-smooth kernels.

- Protocol: Perform kernel selection via cross-validation on historical data before starting the BO loop.
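As an illustration of the composite kernel, the NumPy sketch below multiplies an RBF kernel over the smooth dimensions by a Matern (ν=1/2) kernel on one designated "cliff" dimension. The function names, lengthscales, and the three-feature example are assumptions made for the demonstration; in GPyTorch the same structure would be built from its kernel classes with `active_dims`.

```python
import numpy as np

def rbf(a, b, lengthscale=1.0):
    """Smooth squared-exponential kernel over all columns of a and b."""
    sq = ((a[:, None, :] - b[None, :, :]) ** 2).sum(-1)
    return np.exp(-0.5 * sq / lengthscale**2)

def matern12(a, b, lengthscale=1.0):
    """Matern nu = 1/2 (exponential) kernel on single-column inputs."""
    dist = np.abs(a[:, None, 0] - b[None, :, 0])
    return np.exp(-dist / lengthscale)

def composite_kernel(a, b, cliff_dim, smooth_ls=1.0, cliff_ls=0.2):
    """RBF on the smooth dimensions multiplied by Matern-1/2 on cliff_dim."""
    smooth_dims = [j for j in range(a.shape[1]) if j != cliff_dim]
    k_smooth = rbf(a[:, smooth_dims], b[:, smooth_dims], smooth_ls)
    k_cliff = matern12(a[:, [cliff_dim]], b[:, [cliff_dim]], cliff_ls)
    return k_smooth * k_cliff

# Same-size step in a smooth dimension versus the cliff dimension (index 2).
base = np.zeros((1, 3))
step_smooth = np.array([[0.1, 0.0, 0.0]])
step_cliff = np.array([[0.0, 0.0, 0.1]])
k_s = composite_kernel(base, step_smooth, cliff_dim=2)[0, 0]
k_c = composite_kernel(base, step_cliff, cliff_dim=2)[0, 0]
```

The covariance drops much faster along the cliff dimension (`k_c` is well below `k_s`), which is what lets the GP represent an abrupt change caused by a single-residue property.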

Q5: Our antibody sequence space is combinatorial. How do we choose a model for such a structured, high-dimensional input?

A5: Move beyond a standard GP with one-hot encoding. Use a latent embedding GP.

- Protocol: Pre-train a variational autoencoder (VAE) on a large corpus of antibody sequences (e.g., from OAS database).

- Model: Use the low-dimensional latent vector from the VAE as the input `x` to your GP model.

- Advantage: The VAE learns a continuous, semantically meaningful space where similar sequences are clustered, making the landscape much smoother and easier for the GP to model.

Diagram 1: Latent space modeling for antibody sequences.

Search Space Limitations

Q6: Our BO search is confined to 3 mutations, but we suspect global optima require 5-6 mutations. How can we expand the search space efficiently?

A6: Use a trust region or adaptive expansion strategy.

- Define Initial Region: Start BO in a small, promising region (e.g., 3 mutations around CDR loops).

- Convergence Check: When EI falls below threshold `τ` (e.g., 0.01 * max observed improvement), trigger expansion.

- Expansion Protocol: Use the GP model to identify the most impactful unexplored mutation direction (highest predicted improvement at boundary) and add it to the search space. This prevents combinatorial explosion.
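The convergence check and expansion step can be sketched as two small functions. The position labels and predicted gains below are hypothetical; in a real loop the gains would come from GP predictions evaluated at the boundary of the current search region.

```python
def should_expand(max_ei, best_improvement, tau_fraction=0.01):
    """True when the best remaining EI falls below tau = fraction * improvement."""
    return max_ei < tau_fraction * best_improvement

def expand_search_space(active_positions, boundary_gain):
    """Add the unexplored position with the highest predicted improvement."""
    candidates = {p: g for p, g in boundary_gain.items()
                  if p not in active_positions}
    if not candidates:
        return list(active_positions)
    best = max(candidates, key=candidates.get)
    return list(active_positions) + [best]

# Hypothetical state: three mutable positions, gains predicted at the boundary.
active = ["H31", "H100a", "H102e"]
gains = {"H52": 0.4, "L50": 1.2, "H100a": 9.0}  # H100a is already active

if should_expand(max_ei=0.005, best_improvement=1.0):
    active = expand_search_space(active, gains)
print(active)  # ['H31', 'H100a', 'H102e', 'L50']
```

Adding one position per expansion keeps the candidate set growing linearly in the number of expansions rather than enumerating all 5-6 mutation combinations up front.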

Q7: How do we balance exploring a vast sequence space with limited wet-lab experiments (≤100)?

A7: Implement a multi-fidelity BO approach.

- Low-Fidelity (Cheap): Use in silico stability predictors (e.g., `Rosetta ΔΔG`, `ABACUS`) or rapid expression titer.

- High-Fidelity (Expensive): Capillary viscosity and thermal shift (Tm) assays.

- Protocol: The GP model integrates data from both fidelities, using the cheap data to explore broadly and guiding expensive experiments to the most promising regions identified.
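A full multi-fidelity GP is beyond a short example, so the sketch below substitutes a much simpler stand-in: a linear link between the cheap and expensive measurements, fitted on variants measured at both fidelities and then used to rank the rest. The scores, the assumption that higher is better, and the linear form are all illustrative; a multi-fidelity GP would additionally model the residual and its uncertainty.

```python
import numpy as np

def fit_fidelity_link(low, high):
    """Least-squares fit of high ≈ rho * low + offset on paired measurements."""
    design = np.column_stack([low, np.ones_like(low)])
    (rho, offset), *_ = np.linalg.lstsq(design, high, rcond=None)
    return float(rho), float(offset)

def rank_for_high_fidelity(low_all, measured_idx, high_measured, top_k=2):
    """Pick the unmeasured variants with the best predicted high-fidelity value."""
    rho, offset = fit_fidelity_link(low_all[measured_idx], high_measured)
    predicted = rho * low_all + offset
    predicted[measured_idx] = -np.inf  # skip variants already measured
    order = np.argsort(-predicted)
    return [int(i) for i in order[:top_k]]

# Hypothetical data: in silico stability scores for six variants, and
# measured Tm (°C) for three of them.
low_scores = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
measured = np.array([0, 2, 4])
tm_measured = np.array([62.0, 66.0, 70.0])

next_variants = rank_for_high_fidelity(low_scores, measured, tm_measured)
print(next_variants)  # [5, 3]
```

The design choice mirrors the protocol: cheap scores cover the whole library, and the limited wet-lab budget goes only to the candidates the combined model ranks highest.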

Diagram 2: Multi-fidelity BO workflow for efficient search.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Antibody Stability/Viscosity BO |

|---|---|