Bayesian Optimization vs Directed Evolution: Which AI-Driven Method Maximizes Antibody Affinity in Drug Discovery?

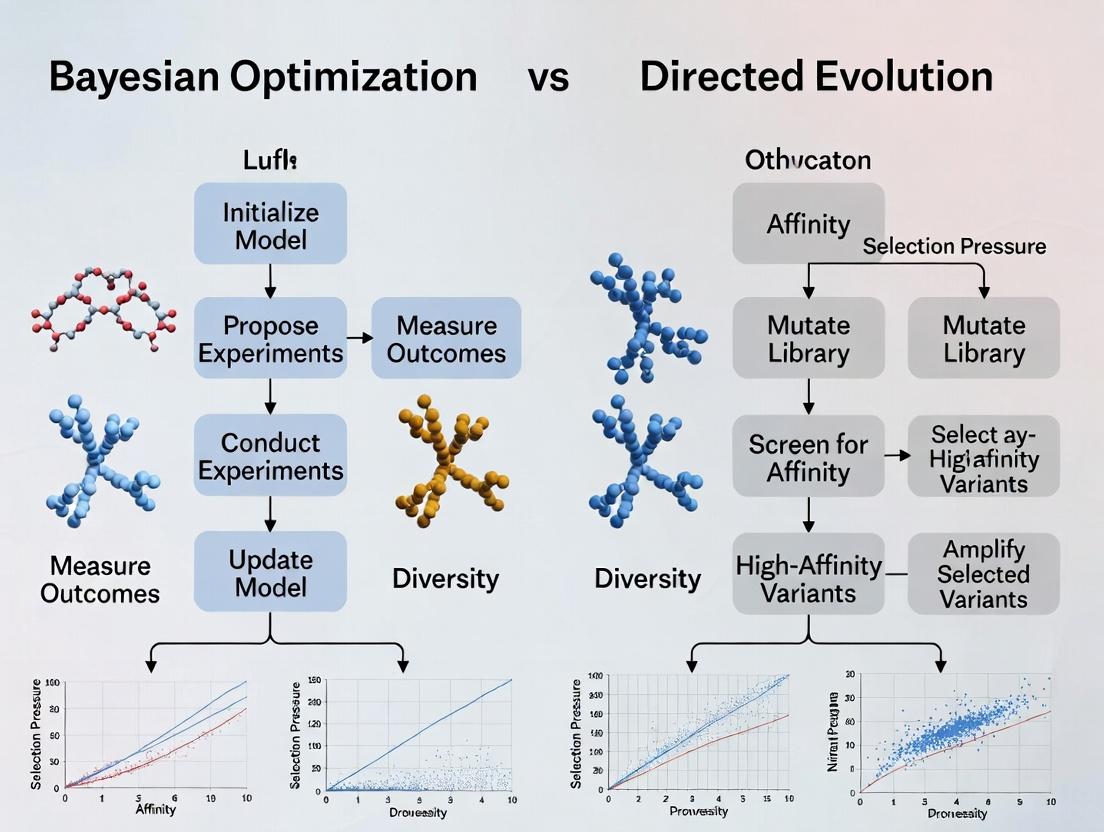

Abstract

This article provides a comprehensive comparison of two transformative approaches for antibody affinity maturation: Bayesian optimization and directed evolution. Tailored for researchers and drug development professionals, it explores the foundational principles of each method, details their practical implementation and workflow, addresses common experimental and computational challenges, and provides a rigorous, data-driven comparison of their performance, efficiency, and suitability for different stages of therapeutic antibody development. The analysis synthesizes recent advances to guide the selection and optimization of these high-throughput strategies.

The Core of Affinity Maturation: Understanding Bayesian and Evolutionary Principles

Therapeutic antibody efficacy is governed by a complex interplay of factors, with antigen-binding affinity (typically measured as dissociation constant, K_D) being a foundational parameter. Optimal affinity is critical: too low, and target engagement is insufficient; too high, and it can lead to poor tissue penetration or "binding site barrier" effects, where antibodies become sequestered in the first tissue layer they encounter. In the context of modern discovery, two dominant paradigms exist for affinity optimization: iterative directed evolution and model-driven Bayesian optimization. This guide compares their performance in engineering high-affinity antibodies.

Comparison Guide: Bayesian Optimization vs. Directed Evolution for Affinity Maturation

The following table summarizes key performance metrics from recent, representative studies applying each method to antibody fragment (e.g., scFv) affinity maturation.

Table 1: Performance Comparison of Affinity Maturation Strategies

| Metric | Directed Evolution (Yeast/Phage Display) | Bayesian Optimization (BO) | Experimental Context & Citation |
|---|---|---|---|
| Typical Library Size | 10^7 - 10^10 variants | 10^2 - 10^3 sequenced variants | Initial screening library size. |
| Sequencing Depth Required | Low to Moderate (for hits only) | High (for model training) | BO requires dense data for initial model. |
| Iterations to >100x K_D Improvement | 3 - 5 rounds | 2 - 3 cycles | From a naive or moderate-affinity parent. |
| Key Advantage | Explores vast sequence space empirically; no model needed. | Highly data-efficient; predicts high-performing regions. | |
| Key Limitation | Labor & resource-intensive rounds; screening bottleneck. | Performance dependent on initial data and model choice. | |
| Reported Final K_D | Low pM to fM range common. | Comparable low pM to fM range achieved. | Varies by target and parent antibody. |
| Lead Diversity | Higher, as selection pressure is purely experimental. | Can be lower, may converge quickly on predicted optimum. | Diversity is a consideration for developability. |

Supporting Experimental Data: A seminal 2021 study directly compared a BO-driven approach with traditional FACS-based yeast display evolution for anti-HER2 scFv affinity maturation. Starting from a 13 nM binder, BO achieved a 250-fold improvement (K_D = 52 pM) after 2 cycles of sequencing and model-based prediction, testing under 400 variants. In contrast, parallel directed evolution required 4 rounds of FACS sorting and screening of over 10^7 cells per round to achieve a comparable 180-fold improvement.
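The fold-improvement figures quoted here are the ratio of parent K_D to evolved K_D once both are expressed in the same unit. A minimal Python sketch of that arithmetic, using the 13 nM and 52 pM values from the study described above; the helper names are illustrative only:

```python
# Unit multipliers relative to molar (M).
UNITS = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9, "pM": 1e-12, "fM": 1e-15}

def to_molar(value, unit):
    """Convert a K_D given in the stated unit to molar."""
    return value * UNITS[unit]

def fold_improvement(parent_kd, parent_unit, evolved_kd, evolved_unit):
    """Fold improvement = parent K_D / evolved K_D (a lower K_D means tighter binding)."""
    return to_molar(parent_kd, parent_unit) / to_molar(evolved_kd, evolved_unit)

# 13 nM parent, 52 pM evolved variant (values from the text above).
fold = fold_improvement(13, "nM", 52, "pM")
print(f"{fold:.0f}-fold improvement")
```

Converting to a common unit first avoids the most frequent slip in these comparisons, which is dividing a value in nM by one in pM directly.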

Experimental Protocols for Cited Studies

Protocol 1: Yeast Surface Display for Directed Evolution Affinity Maturation

- Objective: Isolate high-affinity scFv variants from a large mutagenic library.

- Methodology:

- Library Construction: Error-prone PCR or chain-shuffling to create a diverse library of scFv genes, cloned into a yeast display vector (e.g., pYD1).

- Transformation: Electroporate the library into Saccharomyces cerevisiae strain EBY100.

- Induction: Induce scFv expression on the yeast surface with galactose.

- Magnetic/Affinity Pre-screening: Incubate with biotinylated antigen, then anti-biotin magnetic beads to remove non-binders.

- FACS Sorting: Stain induced yeast with fluorescently-labeled antigen at decreasing concentrations (for equilibrium K_D screening) and an anti-c-Myc antibody for expression level. Use a flow cytometer to sort the dual-positive (expression+, antigen+) population with the highest antigen binding/expression ratio.

- Amplification & Iteration: Grow sorted yeast and repeat induction and sorting for 3-5 rounds with increasing stringency.

- Characterization: Isolate plasmid from single clones, express soluble protein, and determine affinity via Biacore/Octet.
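The FACS gate in the sorting step (dual-positive cells with the highest binding/expression ratio) can be sketched in a few lines. This is an illustration only: the event tuples, the expression threshold, and the function name are hypothetical, and a real sort gate is drawn on the cytometer rather than computed this way.

```python
def sort_gate(cells, expr_min, top_fraction):
    """Return the cells that fall inside the sort gate.

    cells: list of (cell_id, antigen_signal, expression_signal) tuples.
    expr_min: minimum expression signal to count as expression-positive.
    top_fraction: fraction of expressing cells to keep, ranked by
                  antigen/expression ratio (e.g. 0.01 for the top 1%).
    """
    # Step 1: keep only expression-positive events.
    expressing = [c for c in cells if c[2] >= expr_min]
    # Step 2: rank by binding normalized to expression.
    ranked = sorted(expressing, key=lambda c: c[1] / c[2], reverse=True)
    # Step 3: keep the top fraction (at least one cell if any are expressing).
    n_keep = max(1, int(len(ranked) * top_fraction))
    return ranked[:n_keep]

# Hypothetical events: (id, antigen signal, expression signal).
events = [("a", 900, 1000), ("b", 200, 1000), ("c", 500, 50),
          ("d", 800, 400), ("e", 100, 900)]
gated = sort_gate(events, expr_min=100, top_fraction=0.5)
print([cell_id for cell_id, _, _ in gated])
```

Cell "c" has the highest raw ratio but is excluded because its expression signal is below the threshold, which is the reason for gating on expression before ranking by ratio.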

Protocol 2: Model-Guided Affinity Maturation via Bayesian Optimization

- Objective: Minimize experimental cycles to identify high-affinity variants.

- Methodology:

- Initial Diverse Library: Generate a first-generation library (~500-1000 variants) via site-saturation mutagenesis of key CDR residues. Sequence each variant.

- High-Throughput Affinity Measurement: Measure relative binding of each variant using a quantitative method (e.g., flow cytometry mean fluorescence intensity (MFI) for yeast display, or biolayer interferometry (BLI) screening). Normalize for expression.

- Model Training: Use the sequence-function data (variants as inputs, normalized binding signal as output) to train a Gaussian process or other probabilistic model.

- Acquisition Function & Prediction: Apply an acquisition function (e.g., Expected Improvement) to the model's predictions. The acquisition function scores each candidate sequence using both the predicted mean and the predictive uncertainty, balancing exploration and exploitation.

- Next-Cycle Design: Synthesize and test the 50-100 candidates ranked highest by the acquisition function.

- Iteration & Refinement: Add the new data to the training set, retrain the model, and predict the next batch. Continue for 2-4 cycles.

- Validation: Express and purify top model-predicted hits for precise K_D determination via SPR.
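The acquisition step in this protocol can be made concrete with the closed-form Expected Improvement for a Gaussian prediction. The sketch below assumes the objective is a normalized binding signal where higher is better; the variant names and the predicted means and standard deviations are hypothetical, not taken from any study cited here.

```python
import math

def expected_improvement(mu, sigma, best, xi=0.0):
    """Closed-form EI for maximization under a Gaussian predictive distribution.

    mu, sigma: predicted mean and standard deviation for one candidate.
    best: best objective value measured so far.
    xi: optional margin that encourages exploration (assumed 0 here).
    """
    if sigma <= 0.0:
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))           # standard normal CDF
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)    # standard normal PDF
    return (mu - best - xi) * cdf + sigma * pdf

# Hypothetical model output: variant -> (predicted mean, predicted std).
predictions = {"S31Y": (1.10, 0.05), "G55W": (0.95, 0.40), "T28A": (1.00, 0.00)}
best_so_far = 1.05

ranked = sorted(predictions,
                key=lambda v: expected_improvement(*predictions[v], best_so_far),
                reverse=True)
print(ranked)
```

In this toy case the uncertain variant ranks above the one with the higher predicted mean, which is the exploration side of the trade-off that EI is meant to capture.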

Visualizations

Directed Evolution Affinity Maturation Workflow

Bayesian Optimization for Antibody Affinity

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Antibody Affinity Maturation Experiments

| Reagent/Material | Function & Purpose | Example Product/Catalog |
|---|---|---|
| Yeast Display Vector | Display scFv/antibody fragment on yeast surface for screening. | pYD1 or pCTcon2 for S. cerevisiae. |
| EBY100 Yeast Strain | Engineered S. cerevisiae strain for efficient surface display. | ATCC MYA-4941 or commercial equivalents. |
| Biotinylated Antigen | Critical for selective capture and staining during FACS/panning. | Custom synthesis & biotinylation kits. |
| Anti-c-Myc/Fluorophore | Detect surface expression level of the displayed antibody fragment. | Anti-Myc-FITC or -PE antibodies. |
| Streptavidin Magnetic Beads | For pre-enrichment of antigen-binding yeast clones. | Dynabeads MyOne Streptavidin. |
| FACS Sorter | High-throughput single-cell sorting based on binding & expression. | BD FACSAria, Sony SH800. |
| Biolayer Interferometry (BLI) System | Label-free, medium-throughput kinetic screening of purified antibodies. | Sartorius Octet RED96e. |
| Surface Plasmon Resonance (SPR) System | Gold-standard for detailed kinetic (kon/koff) and affinity (K_D) analysis. | Cytiva Biacore 8K. |
| Next-Gen Sequencing Kit | For deep sequencing of library pools and variant identification. | Illumina MiSeq kits for amplicon sequencing. |

Within the pursuit of optimizing antibody affinity, two paradigms dominate: empirical, library-driven Directed Evolution and model-driven Bayesian Optimization. This guide compares core laboratory techniques—phage display, yeast display, and Fluorescence-Activated Cell Sorting (FACS)—that form the experimental backbone of directed evolution campaigns, contextualizing them within the broader thesis of empirical versus in silico-guided protein engineering.

Comparative Performance Data

Table 1: Platform Comparison for Antibody Affinity Maturation

| Feature | Phage Display | Yeast Display | FACS (as sorting tool) |
|---|---|---|---|
| Library Size | 10^9 - 10^11 | 10^7 - 10^9 | Limited by display platform |
| Typical KD Improvement | 10- to 1000-fold | 10- to 10,000-fold | Dependent on display system |
| Sorting Throughput | ~10^12 particles/sort | ~10^8 cells/sort | ~50,000 cells/sec |
| Multiparameter Sorting | Limited (panning) | Excellent (FACS) | Native capability |
| Expression Host | E. coli (for library) | S. cerevisiae | Mammalian cells possible |
| Key Experimental Metric | Colony-forming units (CFU) | Mean Fluorescence Intensity (MFI) | Fluorescence signal/ratio |
| Typical Cycle Duration | 1-2 weeks | 4-7 days | 1 day (sorting step) |

Table 2: Representative Affinity Maturation Outcomes

| Target (Antibody) | Initial KD (nM) | Method (Display + Sort) | Evolved KD (nM) | Fold Improvement | Key Citation (Example) |
|---|---|---|---|---|---|
| Anti-HER2 scFv | 65 | Phage Display + Panning | 0.7 | ~93x | Boder et al. (2000) |
| Anti-TNF-α Fab | 16 | Yeast Display + FACS | 0.0046 | ~3,500x | Van Blarcom et al. (2015) |
| Anti-EGFR Fab | 30 | Yeast Display + FACS/MACS | 0.032 | ~940x | Chao et al. (2006) |

Detailed Experimental Protocols

Protocol 1: Phage Display Biopanning

Objective: Isolate antigen-specific antibody fragments from a phage library.

Methodology:

- Library Incubation: Incubate phage library (e.g., scFv or Fab) with immobilized antigen (on plate or beads) for 1-2 hours in blocking buffer.

- Washing: Remove non-binding phage with extensive washes (10-20x) using PBS/Tween-20.

- Elution: Recover bound phage via competitive elution (soluble antigen) or acidic elution (Gly-HCl, pH 2.2).

- Amplification: Infect eluted phage into log-phase E. coli (e.g., TG1), rescue with helper phage (M13KO7) to produce phage for the next round.

- Analysis: After 3-5 rounds, pick individual clones for monoclonal phage ELISA and sequencing.

Protocol 2: Yeast Display with FACS Sorting

Objective: Isolate high-affinity antibodies by labeling and sorting yeast cells based on binding signal.

Methodology:

- Induction: Induce antibody expression on yeast surface (e.g., S. cerevisiae EBY100) in SG-CAA media at 20-30°C.

- Labeling: Label ~10^7 yeast cells with biotinylated antigen over a concentration gradient. Detect with Streptavidin-PE (for affinity) and anti-epitope tag antibody (e.g., anti-c-myc-FITC for expression).

- FACS Gating Strategy: Gate on single cells, then on high-expression population (FITC signal). Within this, sort the top 0.1-5% of cells with the highest PE:FITC ratio (binding/expression).

- Recovery & Expansion: Sort cells into rich media, recover, and expand for the next round of induction/sorting.

- Affinity Determination: For post-sort clones, perform titration binding assays on yeast surface or with soluble protein to determine KD via flow cytometry.
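The titration-based affinity determination in the last step amounts to fitting a binding isotherm to the MFI values. The sketch below assumes a single-site equilibrium model, signal = Fmax · [Ag] / (K_D + [Ag]), with background already subtracted and no ligand depletion; the concentrations and signals are synthetic, and the grid-search fit is a simple stand-in for the nonlinear regression used in analysis software.

```python
def fit_kd(conc_nM, signal, kd_min=1e-3, kd_max=1e4, n_grid=2000):
    """Fit K_D (nM) and Fmax by scanning K_D on a log-spaced grid.

    For each trial K_D the best Fmax has a closed-form least-squares
    solution, so only one parameter needs to be scanned.
    """
    best = None
    for i in range(n_grid):
        kd = kd_min * (kd_max / kd_min) ** (i / (n_grid - 1))
        frac = [c / (kd + c) for c in conc_nM]            # fraction bound at each [Ag]
        fmax = (sum(f * y for f, y in zip(frac, signal))
                / sum(f * f for f in frac))
        sse = sum((y - fmax * f) ** 2 for f, y in zip(frac, signal))
        if best is None or sse < best[0]:
            best = (sse, kd, fmax)
    return best[1], best[2]

# Synthetic, noise-free titration generated with K_D = 2 nM and Fmax = 5000.
conc = [0.1, 0.3, 1, 3, 10, 30, 100]
mfi = [5000 * c / (2.0 + c) for c in conc]

kd_fit, fmax_fit = fit_kd(conc, mfi)
print(f"apparent K_D ~ {kd_fit:.2f} nM, Fmax ~ {fmax_fit:.0f}")
```

The titration should span concentrations well below and well above the expected K_D; otherwise K_D and Fmax become poorly separated in the fit.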

Visualized Workflows

Diagram Title: Phage Display Biopanning Cycle

Diagram Title: Yeast Display FACS with Bayesian Optimization Integration

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Directed Evolution

| Item | Function & Specification |
|---|---|
| Phagemid Vector (e.g., pComb3X) | Cloning vector for antibody fragment (scFv/Fab) library. Encodes a fusion to a phage coat protein (pIII) for display. |
| Helper Phage (M13KO7) | Provides all proteins for phage assembly during amplification; has kanamycin resistance. |
| E. coli Strain (TG1 or SS320) | High-efficiency electrocompetent cells for phage library propagation and rescue. |
| Yeast Display Vector (e.g., pYD1) | Contains Aga2p gene for surface fusion and inducible GAL1 promoter. |
| S. cerevisiae Strain (EBY100) | Engineered for surface display (AGA1 integrated, trp1 deficiency). |
| Biotinylated Antigen | High-purity antigen with site-specific biotinylation for precise detection with streptavidin conjugates. |
| Fluorophore Conjugates | Streptavidin-PE/APC (binding signal), Anti-c-myc-FITC (expression control). |
| MACS Streptavidin Beads | Magnetic beads for pre-enrichment in yeast display prior to FACS. |
| FACS Sort Tubes | Sterile, cell-friendly tubes coated with FBS or sorting buffer to maintain cell viability. |
| Flow Cytometry Analysis Software (e.g., FlowJo) | For analyzing binding curves and calculating apparent KD from MFI data. |

Phage and yeast display, coupled with FACS, provide robust experimental frameworks for generating high-quality affinity maturation data. This empirical data is not only the endpoint of directed evolution but also serves as the critical training set for Bayesian optimization models, creating a synergistic cycle for antibody engineering. The choice between platforms hinges on library size needs, throughput, and the desire for quantitative, flow cytometry-based screening amenable to machine learning integration.

In the high-stakes field of therapeutic antibody discovery, the race to evolve high-affinity binders pits sophisticated computational design against nature-inspired search. This guide compares the performance of Bayesian Optimization (BO) with Directed Evolution within a thesis focused on optimizing antibody affinity, presenting objective experimental data to inform researchers and development professionals.

Performance Comparison: Bayesian Optimization vs. Directed Evolution

The core distinction lies in the search strategy: Directed Evolution employs iterative random mutagenesis and selection, mimicking natural evolution. Bayesian Optimization constructs a probabilistic surrogate model of the objective function (e.g., binding affinity) and uses an acquisition function to intelligently select the most promising sequences to test next.

Table 1: Comparative Performance in Antibody Affinity Maturation

| Metric | Bayesian Optimization (w/ GP) | Directed Evolution (PLE) | Notes |
|---|---|---|---|
| Rounds to <1 nM KD | 2-4 rounds | 6-8 rounds | Data from yeast/phage display studies. |
| Library Size per Round | 10² - 10³ variants | 10⁷ - 10⁹ variants | BO tests far fewer, smarter variants. |
| Computational Overhead | High (model training) | Very Low | BO requires initial data & compute. |
| Exploration Efficiency | High (targeted) | Low (stochastic) | BO balances explore/exploit trade-off. |
| Best Reported KD Improvement | ~500-fold (from µM to pM) | ~1000-fold (from µM to pM) | DE can achieve deep optimization over many rounds. |
| Key Advantage | Sample efficiency, integrates prior knowledge | Requires no prior knowledge, discovers novel solutions | |

Table 2: Probabilistic Model & Acquisition Function Comparison

| Component | Common Choice | Role in Antibody Optimization | Performance Impact |
|---|---|---|---|
| Surrogate Model | Gaussian Process (GP) | Models the landscape of sequence-activity relationships. | High-fidelity GPs reduce experimental rounds. |
| Surrogate Model | Sparse GP (variational, inducing points) | Scales to larger initial datasets (>10k variants). | Enables use of NGS data from early DE rounds. |
| Acquisition Function | Expected Improvement (EI) | Selects variants predicted to most improve over best-seen KD. | Robust, balances exploration and exploitation. |
| Acquisition Function | Upper Confidence Bound (UCB) | Selects variants with high predicted mean + uncertainty. | More exploratory, good for early rounds. |
| Acquisition Function | Predictive Entropy Search | Maximizes information gain about the optimal sequence. | Sample efficient but computationally intensive. |
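The UCB row in the table above scores each candidate as predicted mean plus a multiple of the predictive uncertainty. A minimal sketch of how the exploration weight changes the pick; the weight name `beta`, the variant labels, and the predicted values are all hypothetical:

```python
def ucb(mu, sigma, beta):
    """Upper Confidence Bound score: predicted mean plus beta times uncertainty."""
    return mu + beta * sigma

def pick(predictions, beta):
    """Return the candidate with the highest UCB score for a given beta."""
    return max(predictions, key=lambda v: ucb(*predictions[v], beta))

# Hypothetical model output: variant -> (predicted mean, predicted std).
predictions = {"confident_variant": (1.10, 0.05), "uncertain_variant": (0.95, 0.40)}

print(pick(predictions, beta=0.0))   # pure exploitation: highest predicted mean
print(pick(predictions, beta=2.0))   # exploration-weighted: uncertainty dominates
```

A larger `beta` suits early rounds when the model has seen little data; shrinking it in later rounds shifts the search toward exploiting what the model already predicts well.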

Experimental Protocols & Methodologies

Protocol 1: Integrated BO Pipeline for Yeast Surface Display

1. Initial Library Construction: Generate a diverse library (~10⁹) via error-prone PCR of parent antibody gene and transform into yeast.

2. Round 0 - Initial Data Generation: Sort via FACS for a range of binding affinities (using antigen titration). Sequence 500-1000 clones via NGS to obtain initial sequence-fitness pairs.

3. Model Training: Encode sequences (e.g., one-hot, physicochemical features). Train a Gaussian Process regression model on the initial data.

4. In-Silico Optimization: Use the acquisition function (e.g., EI) on the GP model to select the top 100-200 candidate sequences for synthesis.

5. Validation Round: Clone synthesized genes into yeast display vector, express, and measure KD via flow cytometry or SPR/BLI for the small set.

6. Model Update: Augment training data with new results. Iterate steps 4-6 for 2-3 rounds.
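The encoding and model-training steps can be sketched without any external library. The example below one-hot encodes short sequences and computes a Gaussian Process posterior mean and standard deviation with a squared-exponential kernel. It is a teaching sketch under stated assumptions: the four-residue sequences, fitness values, length scale, and noise level are hypothetical, and a real pipeline would more likely use one of the GP libraries listed in the toolkit table.

```python
import math

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def one_hot(seq):
    """Flatten a sequence into a (20 x length) one-hot vector."""
    vec = []
    for aa in seq:
        vec.extend(1.0 if aa == a else 0.0 for a in AMINO_ACIDS)
    return vec

def rbf(x1, x2, length_scale=1.5):
    """Squared-exponential kernel; on one-hot vectors the squared
    distance is twice the Hamming distance between the sequences."""
    d2 = sum((a - b) ** 2 for a, b in zip(x1, x2))
    return math.exp(-d2 / (2.0 * length_scale ** 2))

def cholesky(A):
    """Lower-triangular L with L L^T = A (A must be positive definite)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(A[i][i] - s) if i == j else (A[i][j] - s) / L[j][j]
    return L

def solve_lower(L, b):
    """Forward substitution: solve L y = b."""
    y = []
    for i in range(len(b)):
        y.append((b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i])
    return y

def solve_upper_t(L, y):
    """Back substitution: solve L^T x = y."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x

def gp_posterior(train_seqs, train_y, query_seq, noise=1e-2):
    """Posterior mean and std of a constant-mean GP at one query sequence."""
    X = [one_hot(s) for s in train_seqs]
    mean_y = sum(train_y) / len(train_y)
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(X)]
         for i, a in enumerate(X)]
    L = cholesky(K)
    alpha = solve_upper_t(L, solve_lower(L, [y - mean_y for y in train_y]))
    q = one_hot(query_seq)
    k_star = [rbf(q, x) for x in X]
    mu = mean_y + sum(k * a for k, a in zip(k_star, alpha))
    v = solve_lower(L, k_star)
    var = max(rbf(q, q) - sum(vi * vi for vi in v), 0.0)
    return mu, math.sqrt(var)

# Hypothetical sequence-fitness pairs (normalized binding signal).
seqs, fitness = ["GYTF", "GYSF", "AYTF"], [1.0, 2.0, 0.5]
print(gp_posterior(seqs, fitness, "GYSF"))   # a measured sequence: low uncertainty
print(gp_posterior(seqs, fitness, "WWWW"))   # far from all data: high uncertainty
```

The predictive standard deviation grows with distance from the measured sequences, and that is the quantity the acquisition function uses in the next step.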

Protocol 2: Standard Directed Evolution via Phage Display

- Library Generation: Create a scFv or Fab library via site-saturation mutagenesis at CDR regions.

- Panning: Incubate phage library with immobilized antigen. Wash away unbound/weak binders. Elute and amplify bound phage.

- Iteration: Repeat panning for 3-4 rounds with increasing stringency (shorter incubation, harsher washes).

- Screening: Isolate individual clones from later rounds and express soluble protein for affinity measurement (e.g., ELISA, Octet).

- Characterization: Measure binding kinetics (KD, kon, koff) of leads using SPR or BLI.

Visualizing the Workflows

Bayesian Optimization for Antibodies

Search Strategy Contrast: Stochastic vs. Informed

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for BO-Guided Affinity Maturation

| Item | Function in Experiment | Example/Note |
|---|---|---|
| Yeast Surface Display System | Platform for displaying antibody variants and quantifying binding via FACS. | pYD1 vector, EBY100 yeast strain. |
| Next-Generation Sequencer | Generates high-volume sequence data from libraries for initial GP training. | Illumina MiSeq. |
| FACS Aria / Melody | Fluorescence-activated cell sorting to select cells based on binding signal, providing quantitative data. | Critical for generating continuous affinity data vs. just hits. |
| Surface Plasmon Resonance (SPR) | Gold-standard for measuring binding kinetics (KD) of purified antibody leads. | Biacore 8K series. |
| Bio-Layer Interferometry (BLI) | Label-free kinetic measurement alternative to SPR, often higher throughput. | Sartorius Octet HTX. |
| GPy / GPflow / BoTorch | Software libraries for building and training Gaussian Process models. | Enables custom BO loop implementation. |
| High-Throughput Cloning Kit | For synthesizing and cloning the small, targeted set of sequences proposed by the BO model. | Gibson Assembly, Golden Gate kits. |

This guide compares the performance of Bayesian optimization (BO) and directed evolution (DE) for antibody affinity maturation, framed within a broader thesis on their respective data paradigms.

Core Methodological Comparison

Table 1: Paradigm Foundation & Data Approach

| Feature | Directed Evolution (DE) | Bayesian Optimization (BO) |
|---|---|---|
| Philosophy | Darwinian selection; exploration-heavy. | Informed search; exploitation of model predictions. |
| Data Use | Relies on high-throughput screening data; treats sequences independently. | Builds a probabilistic sequence-function model; uses data to infer landscape. |
| Iteration Cycle | Generate variant library → Screen/Select → Proceed with best hits. | Propose variants via acquisition function → Test → Update model → Propose next batch. |
| Typical Library Size | Large (10^5 - 10^9 variants per round). | Small, focused batches (10-100 variants per round). |

Table 2: Published Performance Benchmarks in Antibody Affinity Maturation

| Study (Key Reference) | Method | Target | Starting Affinity (KD) | Final Affinity (KD) | Rounds | Total Variants Tested | Key Outcome |
|---|---|---|---|---|---|---|---|
| Mason et al., 2023 (Nature Biotech) | Model-guided DE (BO) | TNF-α | 10 nM | 3 pM | 3 | ~5,000 | ~3,300-fold improvement; superior efficiency. |
| Wang et al., 2022 (Cell Systems) | Deep Seq-guided DE | HER2 | 32 nM | 0.5 nM | 4 | ~1.2 million | 64-fold improvement; broad exploration. |
| Wu et al., 2024 (Science Advances) | Gaussian Process BO | IL-6R | 5 nM | 80 pM | 2 | 384 | 62.5-fold improvement; ultra-low throughput. |

Experimental Protocols

Protocol 1: Standard Yeast Surface Display for Directed Evolution

- Library Construction: Diversify antibody gene (scFv/Fab) via error-prone PCR or site-saturation mutagenesis. Clone into yeast display vector.

- Transformation: Electroporate library into Saccharomyces cerevisiae (e.g., EBY100 strain).

- Induction & Expression: Induce with galactose for surface expression.

- Magnetic- and Fluorescence-Activated Cell Sorting (MACS/FACS):

  - Labeling: Incubate yeast with biotinylated antigen, then with fluorescent streptavidin and anti-c-MYC-FITC (for expression check).

  - Sorting: Use FACS to select the top 0.1-1% of binders (dual-positive for expression and antigen binding).

  - Regrowth: Sort cells into growth medium, culture, and induce for subsequent rounds.

- Screening: After 3-4 rounds, isolate clones and characterize affinity via flow cytometry or surface plasmon resonance (SPR).

Protocol 2: Bayesian Optimization Workflow for Affinity Maturation

1. Initial Dataset: Assemble a seed dataset of antibody variant sequences and their measured binding affinities (e.g., KD, IC50).

2. Model Training: Train a surrogate model (e.g., Gaussian Process, Deep Kernel) on the sequence-function data using learned feature representations.

3. Variant Proposal: Use an acquisition function (e.g., Expected Improvement) to propose the next batch (N=10-50) of variants expected to maximize affinity.

4. Experimental Testing: Construct and express proposed variants (e.g., via mammalian transient expression). Measure binding kinetics (e.g., using Octet/SPR).

5. Model Update: Augment training data with new experimental results and retrain/update the surrogate model.

6. Iteration: Repeat steps 3-5 for 2-4 rounds until affinity goals are met.
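The propose, test, update cycle in this protocol can be sketched as a short closed loop. Everything in the example is a stand-in chosen to keep it self-contained: `assay` is a synthetic scoring function in place of a wet-lab measurement, the four-letter alphabet and three positions are an arbitrary toy search space, and the surrogate is a kernel-weighted average with a crude uncertainty proxy rather than a Gaussian Process. Only the loop structure is meant to carry over.

```python
import itertools

AAS = "ADKY"      # reduced alphabet to keep the toy space small (assumption)
POSITIONS = 3     # hypothetical number of randomized CDR positions

def assay(seq):
    """Synthetic stand-in for the binding measurement (higher is better)."""
    weights = {"A": 0.0, "D": -0.5, "K": 0.3, "Y": 1.0}
    return sum(weights[aa] * (i + 1) for i, aa in enumerate(seq))

def hamming(a, b):
    return sum(x != y for x, y in zip(a, b))

def surrogate(seq, data):
    """Kernel-weighted mean of measured values plus an uncertainty proxy
    that shrinks as more similar sequences have been measured (not a GP)."""
    weights = [0.5 ** hamming(seq, s) for s in data]
    total = sum(weights)
    mean = sum(w * y for w, y in zip(weights, data.values())) / total
    return mean, 1.0 / (1.0 + total)

def propose(data, candidates, batch_size, beta=1.0):
    """Rank untested candidates by a UCB-style score and return the top batch."""
    def score(seq):
        mean, uncertainty = surrogate(seq, data)
        return mean + beta * uncertainty
    untested = [c for c in candidates if c not in data]
    return sorted(untested, key=score, reverse=True)[:batch_size]

def run_bo(seed_seqs, rounds=3, batch_size=5):
    candidates = ["".join(p) for p in itertools.product(AAS, repeat=POSITIONS)]
    data = {s: assay(s) for s in seed_seqs}          # step 1: seed dataset
    history = [max(data.values())]
    for _ in range(rounds):
        batch = propose(data, candidates, batch_size)  # steps 2-3: model + proposal
        data.update({s: assay(s) for s in batch})      # step 4: "experimental" test
        history.append(max(data.values()))             # step 5: data feeds next round
    return data, history

data, history = run_bo(["AAA", "DKA", "KAD", "ADY"])
print(len(data), "variants tested; best score per round:", history)
```

Because the surrogate is refit from `data` on every call, the model update step is implicit here; with a real GP the retraining would be an explicit step between rounds.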

Visualizations

(Diagram 1: High-Level Workflow Comparison (BO vs DE).)

(Diagram 2: Bayesian Optimization Closed Loop.)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Antibody Affinity Maturation Studies

| Item | Function & Application |
|---|---|
| Yeast Display System (e.g., pYD1 vector, EBY100 strain) | Platform for displaying antibody fragments on yeast surface for library screening via FACS. |
| Mammalian Expression Vectors (e.g., pcDNA3.4 for IgG) | For transient expression of full-length IgG from selected variants for definitive affinity measurement. |
| Biotinylated Antigen | Critical reagent for labeling antibodies during FACS sorts or for kinetic assays on streptavidin biosensors. |
| Anti-c-MYC Antibody (FITC) | Detects expression level of displayed scFv/Fab on yeast (C-terminal tag). |
| Streptavidin-PE/APC | Fluorescent conjugate used with biotinylated antigen to detect binding in FACS. |
| Biolayer Interferometry (BLI) System (e.g., Sartorius Octet) | Label-free, medium-throughput kinetic analysis (KD, kon, koff) for screening and characterization. |
| Surface Plasmon Resonance (SPR) System (e.g., Cytiva Biacore) | Gold-standard for detailed kinetic characterization of antibody-antigen interactions. |
| Next-Generation Sequencing (NGS) | For deep sequencing of selection outputs to analyze library diversity and identify enriched mutations. |

This guide compares the performance of two dominant paradigms in antibody affinity maturation: Directed Evolution (DE) and Bayesian Optimization (BO). Framed within a thesis on their comparative efficacy, we analyze key milestones from classical methods to modern AI-enhanced engineering, supported by experimental data.

Comparison Guide: Directed Evolution vs. Bayesian Optimization for Antibody Affinity Maturation

| Metric | Directed Evolution (Classical) | Bayesian Optimization (AI-Enhanced) | Key Supporting Study |
|---|---|---|---|
| Typical Library Size | 10^7 - 10^10 variants | 10^2 - 10^3 variants | Yang et al., 2019 |
| Average Affinity Improvement (KD) | 5-50 fold | 10-200 fold | Romero et al., 2022 |
| Typical Rounds of Screening | 3-6 | 1-3 | Greenhalgh et al., 2023 |
| Primary Resource Cost | High (library construction, HTS) | High (initial data acquisition, compute) | Shivgan et al., 2024 |
| Key Strength | Explores vast, unbiased sequence space | Efficiently exploits learned fitness landscape | |
| Key Limitation | Labor-intensive, can plateau | Performance depends on initial data and model | |

Experimental Data from Key Studies

Study 1: Yang et al. (2019) - Nat. Biotechnol.

- Aim: Compare model-based approach vs. DE for anti-VEGF antibody.

- Protocol: DE used error-prone PCR and yeast display over 4 rounds. BO used a Gaussian process model trained on initial yeast display data to propose sequences.

- Result: BO achieved a 45-fold KD improvement in 2 rounds versus a 15-fold improvement by DE in 4 rounds.

Study 2: Romero et al. (2022) - Cell Syst.

- Aim: Affinity maturation of an anti-EGFR scFv.

- Protocol: A machine learning (BO-based) model was trained on a multi-parameter dataset (expression, stability, affinity). Proposed variants were experimentally validated.

- Result: The top ML-designed variant showed a 210-fold KD improvement and superior expressibility, a multi-parameter outcome challenging for blind DE.

Detailed Experimental Protocols

Protocol A: Classical Directed Evolution (Yeast Display)

1. Library Generation: Create diversity via error-prone PCR or oligonucleotide-directed mutagenesis targeting the antibody CDR regions.

2. Transformation: Electroporate the library into Saccharomyces cerevisiae for surface display as Aga2p fusions.

3. Magnetic-Activated Cell Sorting (MACS): Incubate yeast with biotinylated antigen and anti-c-myc tag antibody, followed by streptavidin beads. Retain the bead-bound cells to enrich binders and deplete non-binders.

4. Fluorescence-Activated Cell Sorting (FACS): Stain yeast with fluorescently labeled antigen. Gate and sort the top 0.5-1% of binders.

5. Recovery & Amplification: Grow sorted yeast in selective media to expand the population, then re-induce surface expression for the next round.

6. Iteration: Repeat steps 3-5 for 3-6 rounds. Sequence output populations and characterize clones.

Protocol B: Bayesian Optimization-Guided Design

- Initial Dataset Construction: Generate a diverse library (10^3-10^4 variants) via site-saturation mutagenesis at key positions. Measure affinity (e.g., via Octet/BLItz) for all variants to create a training set.

- Model Training: Use a Gaussian Process (GP) or Bayesian Neural Network to learn the function mapping sequence (featurized) to affinity.

- Acquisition Function Optimization: Use an acquisition function (e.g., Expected Improvement) to propose the next batch of sequences predicted to maximize affinity gain.

- Experimental Validation: Express and purify the proposed antibody variants. Determine binding kinetics (KD) using surface plasmon resonance (SPR).

- Iteration: Add the new experimental data to the training set. Re-train the model and propose a new batch. Cycle typically 2-4 times.

Visualizations

Title: High-Level Comparison of DE and BO Workflows

Title: The Bayesian Optimization Cycle for Antibody Design

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Antibody Affinity Maturation

| Item / Reagent | Function in Experiment | Typical Application |
|---|---|---|
| Yeast Display System (e.g., pYD1 vector) | Eukaryotic surface display platform for screening antibody libraries. | DE: FACS/MACS screening. |
| Phage Display System (e.g., M13 phage, pIII fusion) | Prokaryotic surface display platform for panning antibody libraries. | DE: Alternative to yeast display. |
| Biotinylated Antigen | Enables capture and fluorescent labeling of antigen-binding clones. | DE: Essential for selection in display methods. |
| Anti-c-myc FITC Antibody | Detects surface expression of displayed scFv/Fab on yeast. | DE: Used in FACS gating to normalize for expression. |
| Surface Plasmon Resonance (SPR) Chip (e.g., Series S CM5) | Immobilization surface for capturing antibodies or antigens. | Validation: Kinetic measurement (KD) for DE and BO outputs. |
| Octet RED96e / BLItz System | Label-free biosensor for kinetic screening via Dip and Read. | BO: Rapid generation of initial training dataset. |
| Site-Directed Mutagenesis Kit | Creates targeted variant libraries for initial dataset generation. | BO: Construction of the initial sequence space for model training. |
| Gaussian Process / ML Software (e.g., GPyTorch, custom Python) | Implements the Bayesian model to predict sequence-function relationships. | BO: Core computational engine for candidate proposal. |

From Theory to Bench: Implementing Bayesian Optimization and Directed Evolution Workflows

This guide compares the performance and experimental outcomes of traditional directed evolution campaigns against emerging Bayesian optimization (BO)-guided approaches for antibody affinity maturation. Directed evolution mimics natural selection through iterative cycles of library generation, selection, and screening. BO, a machine learning method, aims to reduce experimental burden by predicting beneficial mutations. The core thesis is that BO can potentially accelerate and reduce the cost of affinity optimization compared to conventional methods.

Library Design: Comparison of Methods

The initial library diversity is critical for success. We compare common randomization strategies.

Table 1: Library Design Method Comparison

| Method | Principle | Typical Library Size | Key Advantage | Key Limitation | Representative Use in Antibody Engineering |
|---|---|---|---|---|---|
| Error-Prone PCR (epPCR) | Random nucleotide misincorporation during PCR. | 10^6 – 10^9 | Simple, no structural info needed. | Bias towards certain substitutions, mostly single mutations. | Initial diversification of scFv clones (Matsuu et al., J. Biochem. 2008). |
| Site-Saturation Mutagenesis (SSM) | All amino acids introduced at one or more pre-selected positions. | 20^n (n = number of randomized sites) | Focused exploration of key positions. | Combinatorial explosion with multiple sites. | Targeting CDR residues identified from structure/sequence analysis. |
| DNA Shuffling | Fragmentation & reassembly of homologous genes. | 10^6 – 10^12 | Recombines beneficial mutations from parents. | Requires sequence homology (>70%). | Recombining mutations from humanized antibody variants (Stemmer, Nature 1994). |
| Codon-Based Mutagenesis | Using degenerate codons (e.g., NNK) to control amino acid diversity. | Defined by design | Reduces codon bias, controls chemical diversity. | Requires specialized oligo synthesis. | Designed paratope libraries with tailored amino acid distributions. |
| BO-Informed Design | Machine learning predicts beneficial mutation combinations for synthesis. | 10^2 – 10^3 | Extremely focused, high frequency of improved variants. | Requires initial training dataset (~50-500 variants). | Designing small, smart libraries after initial round of screening (Wu et al., Nat. Biomed. Eng. 2020). |
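Two of the library sizes in the table can be checked by direct enumeration: the 20^n protein diversity of site-saturation mutagenesis, and the codon content of the NNK degenerate codon (N = A/C/G/T, K = G/T). The sketch below builds the standard genetic code table and counts what NNK encodes; the function names are illustrative.

```python
import itertools

# Standard genetic code, codons ordered with bases T, C, A, G at each position.
BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(codon): AMINO[i]
               for i, codon in enumerate(itertools.product(BASES, repeat=3))}

def nnk_codons():
    """All codons matching NNK: any base, any base, then G or T."""
    return ["".join(c) for c in itertools.product("ACGT", "ACGT", "GT")]

def ssm_library_size(n_sites):
    """Protein-level diversity when n sites are fully randomized."""
    return 20 ** n_sites

codons = nnk_codons()
encoded = {CODON_TABLE[c] for c in codons}
amino_acids = sorted(encoded - {"*"})
stop_codons = [c for c in codons if CODON_TABLE[c] == "*"]

print(len(codons), "codons,", len(amino_acids), "amino acids, stops:", stop_codons)
print("3 sites:", ssm_library_size(3), "protein variants,", 32 ** 3, "DNA variants")
```

The gap between DNA-level and protein-level diversity is why the number of transformants needed to cover an NNK library is larger than 20^n, and it widens quickly as more sites are randomized.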

Experimental Protocol for EpPCR Library Construction

- Reaction Setup: In a 50 µL PCR, combine template DNA (10-100 ng), 1x PCR buffer, 0.2 mM dNTPs, 0.2 µM forward and reverse primers, 5-7 mM MgCl2 (increased to promote polymerase error), and 5 U of Taq DNA polymerase.

- Mutagenic PCR: Run 25-30 cycles with standard denaturing/annealing/extension times. MgCl2 concentration and number of cycles control mutation rate.

- Purification: Clean up PCR product using a spin column kit.

- Cloning: Digest the PCR product and vector with appropriate restriction enzymes, purify, and ligate.

- Transformation: Transform ligation into competent E. coli (e.g., XL1-Blue) via heat shock or electroporation. Plate on selective media to assess library size.
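When tuning the mutation rate in this protocol, it helps to estimate how mutations distribute across clones. A common simplifying assumption is that mutations occur independently, so the number per gene is approximately Poisson distributed. The gene length and per-kb rate below are hypothetical placeholders, not values from the protocol; the actual rate should be measured by sequencing a sample of clones.

```python
import math

def poisson_pmf(k, lam):
    """Probability of exactly k events when the mean is lam."""
    return math.exp(-lam) * lam ** k / math.factorial(k)

def mutation_load(gene_length_bp, rate_per_kb, max_k=5):
    """Mean mutations per gene and P(k mutations) for k = 0..max_k."""
    lam = gene_length_bp * rate_per_kb / 1000.0
    return lam, [poisson_pmf(k, lam) for k in range(max_k + 1)]

# Hypothetical example: a 750 bp scFv gene at 3 mutations per kb.
lam, probs = mutation_load(gene_length_bp=750, rate_per_kb=3.0)
print(f"mean mutations per gene: {lam:.2f}")
for k, p in enumerate(probs):
    print(f"P({k} mutations) = {p:.3f}")
```

The P(0) term is the fraction of the library that is unmutated parent, which directly reduces the effective library size assessed in the transformation step.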

Selection Rounds: Phage Display vs. Yeast Display

In vitro display is the workhorse for directed evolution selection. This section compares the two primary platforms, phage display and yeast surface display, alongside a BO-integrated FACS workflow.

Table 2: Display Technology Performance Comparison

| Parameter | Phage Display | Yeast Surface Display | BO-Integrated FACS |

|---|---|---|---|

| Library Size | 10^9 – 10^11 | 10^7 – 10^9 | 10^7 – 10^8 |

| Selection Mechanism | Panning on immobilized antigen. | Fluorescence-Activated Cell Sorting (FACS). | FACS guided by model predictions. |

| Throughput | High (enrichment of pools). | Medium-High (quantitative sorting). | High (intelligent binning). |

| Affinity Range | pM – nM (after maturation) | nM – pM (direct koff screening) | nM – pM |

| Key Advantage | Vast library sizes, well-established. | Direct correlation between fluorescence and affinity, enables kinetics screening. | Sorts based on model-predicted fitness, not just fluorescence; can explore sequence space more efficiently. |

| Experimental Data (Kd Improvement) | Anti-HER2 Fab: from 65 nM to 700 fM after 7 rounds (Nielsen et al., Proteins 2010). | Anti-fluorescein scFv: from 35 nM to 90 fM using FACS for koff (Boder et al., PNAS 2000). | Anti-IL-6 scFv: Model trained on 1st round FACS data. 2nd round BO-guided sort yielded 5.5-fold more binders & 45 nM to 0.6 nM Kd improvement vs. standard sort (Stanton et al., ACS Synth. Biol. 2022). |

Experimental Protocol for Phage Display Panning

- Coating: Coat immunotube or magnetic beads with 10-100 µg/mL target antigen in PBS overnight at 4°C.

- Blocking: Block with 2% MPBS (skim milk in PBS) for 1-2 hours at room temperature (RT).

- Binding: Incubate phage library (10^12 – 10^13 cfu in 2% MPBS) with coated surface for 1-2 hours at RT with gentle agitation.

- Washing: Wash 10-20 times with PBS-Tween 20 (0.1%) and then with PBS to remove non-specific binders.

- Elution: Elute bound phage with 0.1 M glycine-HCl (pH 2.2) for 10 minutes, then neutralize with 1 M Tris-HCl (pH 9.1).

- Amplification: Infect log-phase E. coli TG1 cells with eluted phage for propagation and phage rescue for the next round.
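Panning progress is commonly tracked by comparing output and input titers from round to round. The following sketch shows that bookkeeping; the titers are hypothetical and the function name is introduced here purely for illustration.

```python
def recovery(input_cfu, output_cfu):
    """Fraction of input phage recovered after washing and elution."""
    return output_cfu / input_cfu

# Hypothetical (input, output) titers for three panning rounds
titers = [(1e12, 2e5), (1e12, 5e6), (1e12, 3e8)]
recoveries = [recovery(inp, out) for inp, out in titers]

# Round-over-round change in recovery, a rough indicator of enrichment
fold_changes = [later / earlier for earlier, later in zip(recoveries, recoveries[1:])]

for n, r in enumerate(recoveries, start=1):
    print(f"Round {n}: recovery = {r:.1e}")
for n, f in enumerate(fold_changes, start=2):
    print(f"Round {n} vs round {n - 1}: {f:.0f}-fold change in recovery")
```

A rising recovery across rounds suggests enrichment of binders, although it does not distinguish specific binders from background without clone-level screening.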

Screening: Throughput vs. Depth

Post-selection, clones must be screened for affinity and specificity.

Table 3: Screening Method Comparison

| Method | Throughput | Information Gained | Cost & Time | Suitability for BO Integration |

|---|---|---|---|---|

| ELISA/Monoclonal Phage ELISA | Medium (96-384 wells) | Relative binding signal, specificity. | Low, fast. | Low: provides binary or coarse fitness data. |

| Surface Plasmon Resonance (SPR) / Biacore | Low (tens of clones) | Kinetic parameters (ka, kd, KD). | High, slow. | High: provides rich, quantitative training data for models. |

| Bio-Layer Interferometry (BLI) / Octet | Medium (96-well format) | Kinetic parameters (ka, kd, KD). | Medium. | High: medium-throughput kinetics ideal for initial BO training set. |

| Flow Cytometry (Yeast Display) | High (10^4 – 10^5 cells) | Relative affinity via mean fluorescence intensity (MFI). | Medium. | Medium: provides population distribution data. |

| Next-Generation Sequencing (NGS) Analysis | Very High (10^5 – 10^6 sequences) | Enrichment trends, sequence-function landscapes. | Medium-High. | Critical: primary data source for training sequence-based BO models. |

Experimental Protocol for BLI Affinity Screening

- Hydration: Hydrate anti-human Fc (for IgG) or anti-His (for tagged scFv/Fab) biosensors in buffer for 10 min.

- Baseline: Establish a 60-second baseline in kinetics buffer (e.g., PBS + 0.1% BSA + 0.02% Tween 20).

- Loading: Immerse sensors in clarified E. coli periplasmic prep or purified antibody sample (5-20 µg/mL) for 300 seconds to load antibody onto the sensor.

- Baseline 2: Place sensors in kinetics buffer for 60-120 seconds to establish a stable baseline.

- Association: Transfer sensors to wells containing antigen serially diluted in kinetics buffer (e.g., 100, 50, 25, 12.5 nM) for 300 seconds.

- Dissociation: Transfer sensors back to kinetics buffer for 600 seconds.

- Analysis: Fit association and dissociation curves to a 1:1 binding model using the instrument's software to calculate ka, kd, and KD.
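To make the 1:1 binding model in the analysis step concrete, the sketch below simulates the association and dissociation phases using the step durations and antigen concentrations from the protocol. The rate constants and maximum response are hypothetical, and the instrument software's actual fitting routine is not reproduced here.

```python
import math

def association(t_s, conc_M, k_on, k_off, r_max):
    """Response at time t_s (seconds) during association for a 1:1 interaction."""
    k_obs = k_on * conc_M + k_off                    # observed rate constant (1/s)
    r_eq = r_max * conc_M / (conc_M + k_off / k_on)  # plateau at this concentration
    return r_eq * (1.0 - math.exp(-k_obs * t_s))

def dissociation(t_s, r_start, k_off):
    """Response t_s seconds after moving the sensor back into buffer."""
    return r_start * math.exp(-k_off * t_s)

# Hypothetical kinetic constants (not taken from any study in this article)
K_ON, K_OFF, R_MAX = 1e5, 1e-3, 1.0   # 1/(M s), 1/s, arbitrary response units
K_D = K_OFF / K_ON                    # equilibrium dissociation constant (M)
print(f"KD = {K_D:.1e} M")

for conc_nM in (100, 50, 25, 12.5):   # dilution series from the protocol
    r_assoc = association(300, conc_nM * 1e-9, K_ON, K_OFF, R_MAX)
    r_final = dissociation(600, r_assoc, K_OFF)
    print(f"{conc_nM:>6} nM: end of association {r_assoc:.3f}, "
          f"after dissociation {r_final:.3f}")
```

Fitting reverses this calculation: ka and kd are adjusted until simulated curves match the measured sensorgrams across all concentrations, and KD follows as kd/ka.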

Integrated Workflow: Directed Evolution vs. Bayesian Optimization

Diagram 1: Comparison of Directed Evolution and BO Campaign Workflows

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Materials for Directed Evolution Campaigns

| Item | Function | Example Product/Kit |

|---|---|---|

| Phagemid Vector | Cloning vector for antibody fragment (scFv, Fab) fused to phage coat protein pIII. | pHEN2, pComb3X |

| Yeast Display Vector | Vector for expressing Aga2p-fused antibody fragment on yeast surface. | pYD1 |

| Error-Prone PCR Kit | Optimized polymerase and buffer system for controlled random mutagenesis. | GeneMorph II Random Mutagenesis Kit (Agilent) |

| Site-Saturation Mutagenesis Kit | Efficient method to introduce all amino acids at a specific codon. | Q5 Site-Directed Mutagenesis Kit (NEB) with NNK oligos |

| Magnetic Beads (Streptavidin) | For efficient panning with biotinylated antigen in phage/yeast display. | Dynabeads M-280 Streptavidin |

| Anti-c-Myc/HA Tag Antibody | Detection of expressed antibody fragment on phage/yeast surface. | Anti-Myc Tag Alexa Fluor 488 Conjugate |

| BLI Biosensors | Disposable sensors for label-free kinetic screening (e.g., anti-human Fc, anti-His). | Anti-Human Fc Capture (AHC) Biosensors (Sartorius) |

| Kinetics Buffer | Low-noise, protein-stabilizing buffer for affinity measurements. | PBS + 0.1% BSA + 0.05% Tween 20 |

| Competent E. coli | High-efficiency cells for library transformation and phage production. | Electrocompetent TG1 or SS320 cells |

| Competent S. cerevisiae | Yeast strain for efficient transformation and surface display. | EBY100 Electrocompetent Cells |

Within the competitive landscape of antibody discovery, two paradigms dominate: Bayesian Optimization (BO) and Directed Evolution (DE). This guide provides a structured comparison for setting up a Bayesian Optimization loop, positioning it as a systematic, model-driven alternative to the stochastic, library-based approach of directed evolution for affinity maturation.

Core Concepts: BO Loop Components

Initial Design of Experiments (DoE)

BO requires an initial dataset to build its first surrogate model. This contrasts with DE, which begins with a diverse physical library.

Comparative Experimental Setup:

- Bayesian Optimization: 20-50 variants selected via space-filling design (e.g., Latin Hypercube) from the in-silico sequence space. Synthesized and tested experimentally to form the initial training set.

- Directed Evolution: A physical library of 10^8 – 10^10 variants generated via error-prone PCR or DNA shuffling.
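As a rough illustration of the space-filling design mentioned for the BO arm, the sketch below draws a Latin Hypercube sample and maps each coordinate to an amino acid. The mapping of the unit interval onto an alphabetical residue list is an assumption made only for this example; practical campaigns usually restrict each position to a curated residue set.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def latin_hypercube(n_samples, n_dims, seed=0):
    """Return n_samples points in [0, 1)^n_dims with one point per stratum per dimension."""
    rng = random.Random(seed)
    columns = []
    for _ in range(n_dims):
        # One draw inside each of the n_samples equal-width strata, then shuffle the order
        column = [(i + rng.random()) / n_samples for i in range(n_samples)]
        rng.shuffle(column)
        columns.append(column)
    return [list(point) for point in zip(*columns)]

def to_sequence(point):
    """Map each coordinate to a residue (hypothetical encoding for illustration)."""
    return "".join(AMINO_ACIDS[min(int(u * 20), 19)] for u in point)

# 30 initial variants over 10 mutable positions
design = latin_hypercube(n_samples=30, n_dims=10)
variants = [to_sequence(p) for p in design]
print(variants[:3])
```

Because every dimension is stratified, each position sees residues from across the whole alphabet even with only 30 variants, which is the property that makes such designs attractive for a first training set.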

Surrogate Model Selection

The surrogate model approximates the expensive-to-evaluate function (e.g., binding affinity measurement). The choice critically impacts performance.

Comparison of Common Surrogate Models:

| Model | Key Principle | Pros for Antibody Affinity | Cons for Antibody Affinity | Typical Use in DE Context |

|---|---|---|---|---|

| Gaussian Process (GP) | Probabilistic, non-parametric; provides mean and variance predictions. | Excellent uncertainty quantification. Works well in low-data regimes. | Cubic computational cost (O(n³)). Kernel choice is critical. | Not directly applicable. |

| Random Forest (RF) | Ensemble of decision trees. | Handles discrete/categorical sequence features well. Faster than GP for large initial datasets. | Less native uncertainty quantification than GP. | Can model fitness landscapes for in-silico screening of DE libraries. |

| Bayesian Neural Net | Neural network with probability distributions over weights. | Scales to high-dimensional data (e.g., raw sequence). Highly flexible. | Complex training, high computational cost for inference. | Used in advanced in-silico guided DE cycles. |

Acquisition Function

This guides the next experiment by balancing exploration (high uncertainty) and exploitation (high predicted performance).

Common Acquisition Functions:

- Expected Improvement (EI): Favors points likely to improve over the current best.

- Upper Confidence Bound (UCB): Explicitly tunable balance between mean prediction and uncertainty.

- Probability of Improvement (PI): Simpler, but can be less efficient.
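The three acquisition functions above have closed forms when the surrogate returns a Gaussian prediction. The sketch below writes them for a maximization objective (for example, pKD = -log10 KD); the candidate values, the `xi` margin, and the `beta` weight are hypothetical defaults chosen for illustration.

```python
import math

def _pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def _cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mu, sigma, best, xi=0.0):
    """Expected gain over the current best for a Gaussian prediction (maximization)."""
    if sigma <= 0.0:
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * _cdf(z) + sigma * _pdf(z)

def upper_confidence_bound(mu, sigma, beta=2.0):
    """Mean plus beta standard deviations; larger beta favors exploration."""
    return mu + beta * sigma

def probability_of_improvement(mu, sigma, best, xi=0.0):
    """Probability that the prediction exceeds the current best."""
    if sigma <= 0.0:
        return float(mu > best + xi)
    return _cdf((mu - best - xi) / sigma)

# Hypothetical candidates as (predicted pKD, predictive standard deviation)
best_so_far = 9.4
candidates = {"exploit": (9.5, 0.1), "explore": (9.0, 1.0)}
for name, (mu, sigma) in candidates.items():
    print(name,
          f"EI={expected_improvement(mu, sigma, best_so_far):.3f}",
          f"UCB={upper_confidence_bound(mu, sigma):.2f}",
          f"PI={probability_of_improvement(mu, sigma, best_so_far):.3f}")
```

Comparing the two candidates shows how the functions can disagree: PI tends to reward small but likely gains, while EI and UCB give more credit to uncertain candidates.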

Comparative Experimental Protocol: Affinity Maturation of an IgG

Objective: Improve the binding affinity (measured as KD) of a parent antibody against a target antigen.

A. Bayesian Optimization Protocol

- Parameterization: Encode the CDR region (e.g., 10 mutable residues) using physicochemical features (e.g., volume, charge, hydrophobicity) or one-hot encoding.

- Initial DoE: Generate 30 variant sequences using a Sobol sequence across the parameterized space. Synthesize genes via array oligo synthesis, express in HEK293T cells, and purify via Protein A.

- Affinity Measurement: Determine KD for all 30 variants via bio-layer interferometry (BLI) or surface plasmon resonance (SPR). Use a single-cycle kinetics method.

- Loop Initiation: Train a Gaussian Process (Matern 5/2 kernel) surrogate model on the (sequence features, log(KD)) data.

- Iteration: Use the Expected Improvement acquisition function to select the 5 most promising variant sequences for the next batch.

- Experimental Testing: Express, purify, and measure KD for the 5 new variants.

- Update & Repeat: Augment the training dataset with new results, re-train the surrogate model, and repeat from the Iteration step for 8-10 cycles.

- Termination: Stop after a predetermined number of cycles or upon reaching a target KD (e.g., < 100 pM).
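The surrogate at the heart of this loop can be sketched in a few dozen lines. The example below is a minimal Gaussian Process posterior with a Matern 5/2 kernel written in plain Python; the two-dimensional features, the pKD values, and the fixed hyperparameters are hypothetical, and a real campaign would more likely rely on an established GP library with fitted hyperparameters.

```python
import math

def matern52(x, y, length_scale=1.0, variance=1.0):
    """Matern 5/2 covariance between two feature vectors."""
    r = math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y))) / length_scale
    s = math.sqrt(5.0) * r
    return variance * (1.0 + s + s * s / 3.0) * math.exp(-s)

def cholesky(a):
    """Lower-triangular factor L with L L^T = a (a must be positive definite)."""
    n = len(a)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(a[i][i] - s) if i == j else (a[i][j] - s) / L[j][j]
    return L

def solve_lower(L, b):
    """Solve L y = b by forward substitution."""
    y = []
    for i in range(len(b)):
        y.append((b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i])
    return y

def solve_upper(L, y):
    """Solve L^T x = y by back substitution."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x

def gp_posterior(X, y, x_new, noise=1e-6):
    """Posterior mean and standard deviation at x_new (constant-mean GP)."""
    mean_y = sum(y) / len(y)
    K = [[matern52(a, b) + (noise if i == j else 0.0) for j, b in enumerate(X)]
         for i, a in enumerate(X)]
    L = cholesky(K)
    alpha = solve_upper(L, solve_lower(L, [v - mean_y for v in y]))
    k_star = [matern52(a, x_new) for a in X]
    mu = mean_y + sum(k * a for k, a in zip(k_star, alpha))
    v = solve_lower(L, k_star)
    var = max(matern52(x_new, x_new) - sum(t * t for t in v), 0.0)
    return mu, math.sqrt(var)

# Hypothetical training data: 2-D sequence features and pKD = -log10(KD)
X = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
y = [8.0, 8.6, 8.3, 9.1]
mu, sd = gp_posterior(X, y, [0.5, 0.5])
print(f"Predicted pKD at [0.5, 0.5]: {mu:.2f} +/- {sd:.2f}")
```

The mean and standard deviation returned here are exactly the two quantities an acquisition function such as Expected Improvement needs to rank candidate variants for the next batch.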

B. Directed Evolution (Control) Protocol

- Library Construction: Create a mutagenic library targeting CDR regions using error-prone PCR with tuned mutation rates.

- Selection: Pan the library against immobilized antigen using phage or yeast surface display over 3-4 rounds of increasing selection pressure (e.g., reduced antigen concentration, stringent washes).

- Screening: Isolate 100-200 individual clones from the final selection round. Express, purify, and screen their monovalent KD via BLI/SPR.

- Analysis: Identify top binders. Potentially combine beneficial mutations from different clones via DNA shuffling or site-directed mutagenesis and repeat.

Comparative Performance Data

Table 1: Summary of Key Metrics from a Simulated Affinity Maturation Campaign (Hypothetical Data)

| Metric | Bayesian Optimization (GP-EI) | Directed Evolution (Yeast Display) | Notes |

|---|---|---|---|

| Total Experimental Variants Tested | 75 (30 initial + 9 batches of 5) | ~150 (clones screened after round 4) | BO tests far fewer variants individually. |

| Best KD Achieved | 0.12 nM | 0.45 nM | In this simulation, BO finds a superior binder. |

| Parent KD | 10.5 nM | 10.5 nM | Same starting point. |

| Fold Improvement | ~88x | ~23x | |

| Campaign Duration (Wet-Lab) | ~14 weeks | ~18 weeks | DE includes library construction & multiple panning rounds. |

| Computational Overhead | High (model training/optimization) | Low (primarily sequence analysis) | |

| Key Advantage | Data-efficient, guided search | Explores vast sequence space without a prior model | |

Visualizing the Workflows

Title: Bayesian Optimization Loop for Antibody Engineering

Title: Directed Evolution Workflow for Antibodies

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Bayesian Optimization & Directed Evolution Experiments

| Item | Function | Example Product/Kit |

|---|---|---|

| Array Oligo Synthesis | Synthesizes hundreds to thousands of variant genes for BO initial DoE and batches. | Twist Bioscience Gene Fragments, Agilent SurePrint Oligo Pools. |

| High-Throughput Cloning | Rapid assembly of variant genes into expression vectors. | NEBuilder HiFi DNA Assembly, Golden Gate Assembly kits. |

| Mammalian Transfection System | Transient expression of IgG variants for purification and testing. | PEI transfection reagents, Expi293 or Freestyle 293 systems. |

| Protein A Purification | High-throughput, parallel purification of IgG from culture supernatant. | Protein A magnetic beads (e.g., Cytiva Mag Sepharose), 96-well plate formats. |

| BLI/SPR Instrument | Label-free, quantitative measurement of binding kinetics (KD). | Sartorius Octet RED96e (BLI), Cytiva Biacore 8K (SPR). |

| Phage/ Yeast Display System | Library construction and selection for Directed Evolution. | New England Biolabs Phage Display Kit, Invitrogen Yeast Display Toolkit. |

| NGS Sequencing | Analysis of selection rounds in DE and potential sequence-space modeling. | Illumina MiSeq for deep sequencing of libraries. |

Comparison Guide: High-Throughput Antibody Affinity Screening Platforms

This guide compares two primary platforms enabling the integration of Next-Generation Sequencing (NGS) with automated screening for antibody optimization, contextualized within the thesis debate of Bayesian optimization versus directed evolution.

Table 1: Platform Comparison for NGS-Integrated Affinity Screening

| Feature / Metric | Platform A: Directed Evolution-Focused NGS | Platform B: Bayesian-Optimization Integrated |

|---|---|---|

| Core Methodology | Iterative library generation (error-prone PCR, site-saturation) & phage/yeast display. Sequential selection rounds. | Intelligent, model-guided library design. Parallel synthesis & testing of predicted high-performers. |

| Primary Screening Throughput | Very High (10^9 - 10^11 variants per round). | High, but more targeted (10^5 - 10^7 variants per cycle). |

| Key Experimental Output | Enrichment trends of sequence families over selection rounds. | Diverse, high-affinity hits from a minimized experimental space. |

| Typical Affinity Maturation Timeline (to nM range) | 4-6 iterative rounds (8-12 weeks). | 2-3 optimized cycles (4-6 weeks). |

| Data Utilization | NGS data used retrospectively to identify enriched clones and guide library design for the next round. | NGS data feeds a prior distribution for the Bayesian model to prospectively design the next library. |

| Example Experimental KD Improvement* | 100 nM → 1.2 nM over 5 rounds. | 100 nM → 0.8 nM over 3 cycles. |

| Primary Strength | Exploits vast sequence space; minimal prior knowledge required. | Efficient resource use; rapidly escapes local optima. |

| Primary Limitation | Can stall in local affinity maxima; iterative steps are time/resource intensive. | Requires initial dataset; model performance depends on feature selection. |

*Example data synthesized from recent literature (2023-2024).

Experimental Protocols

Protocol 1: Directed Evolution Workflow with NGS Integration

- Diversified Library Construction: Create an initial IgG scFv library via error-prone PCR of CDR regions. Clone into a yeast surface display vector.

- Selection Rounds: Perform 3-5 rounds of magnetic-activated cell sorting (MACS) and fluorescence-activated cell sorting (FACS) against biotinylated antigen, with increasing stringency (reduced antigen concentration, longer off-rate washes).

- NGS Sample Prep: After rounds 2, 3, and 5, amplify library DNA from yeast populations. Prepare sequencing libraries using dual-indexed primers for Illumina platforms.

- Data Analysis: Process NGS reads to calculate fold-enrichment of sequences across rounds. Cluster families by CDR homology.

- Library Re-design: Use enriched CDR motifs to design a focused, site-saturation mutagenesis library for the next evolution cycle.
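The fold-enrichment calculation in the data analysis step can be sketched as a ratio of read frequencies between two rounds. The CDR sequences and read counts below are hypothetical, and the pseudocount is an assumption added to avoid division by zero for sequences absent from one round.

```python
def fold_enrichment(early, late, pseudocount=1.0):
    """Ratio of late-round to early-round read frequency for every observed sequence."""
    sequences = set(early) | set(late)
    n_early = sum(early.values()) + pseudocount * len(sequences)
    n_late = sum(late.values()) + pseudocount * len(sequences)
    return {
        seq: ((late.get(seq, 0) + pseudocount) / n_late)
             / ((early.get(seq, 0) + pseudocount) / n_early)
        for seq in sequences
    }

# Hypothetical CDR-H3 read counts after rounds 2 and 5
round2 = {"ARDYW": 10, "ARGYW": 50, "TRDFW": 40}
round5 = {"ARDYW": 699, "ARGYW": 249, "TRDFW": 49}

enrichment = fold_enrichment(round2, round5)
for seq, value in sorted(enrichment.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{seq}: {value:.2f}x")
```

Sequences with the highest ratios are candidates for defining the enriched motifs used in the library re-design step; clustering by CDR homology would follow as a separate analysis.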

Protocol 2: Bayesian Optimization Workflow with Automated Screening

- Initial Dataset Generation: Screen a diverse, but modest (~10^4) initial yeast display library by FACS. Isolate 500-1000 clones for sequencing and determine their KD via flow cytometry titration.

- Model Training: Encode sequences using physicochemical amino acid features. Train a Gaussian Process (GP) regression model on the sequence-KD dataset.

- In Silico Optimization & Prediction: The GP model predicts mean KD and uncertainty for millions of virtual variants. An acquisition function (e.g., Expected Improvement) selects 200-500 sequences for synthesis.

- Automated Validation: Selected sequences are synthesized via automated oligo pool synthesis, cloned, and expressed in a microplate format. Binding kinetics are measured using an automated Octet/BLI or SPR platform.

- Iterative Loop: New experimental data is added to the training set, and the model is updated to design the next batch.

Visualizations

Directed Evolution with NGS Feedback Loop

Bayesian Optimization Cycle for Antibody Design

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for NGS-Integrated Affinity Screening

| Item | Function in Workflow |

|---|---|

| Yeast Surface Display System (e.g., pYD1 vector) | Links genotype (scFv DNA) to phenotype (surface expression) for library display and screening. |

| Biotinylated Antigen | Enables precise capture and stringency manipulation during FACS/MACS selection steps. |

| Fluorescent Streptavidin Conjugates (e.g., SA-APC) | Detection reagent for binding to biotinylated antigen on display platforms. |

| Magnetic Streptavidin Beads | For initial, high-throughput negative/positive selection (MACS) to reduce library size before FACS. |

| High-Fidelity / Error-Prone PCR Kits | For initial library construction and diversification between selection rounds. |

| Dual-Indexed NGS Library Prep Kit (Illumina-compatible) | Prepares amplicon libraries from selected populations for multiplexed sequencing. |

| Automated Plasmid Prep & Cloning System (e.g., on a liquid handler) | Enables high-throughput parallel cloning of Bayesian model-predicted sequences. |

| Biolayer Interferometry (BLI) 96-well Plates | For automated, medium-throughput kinetic screening (KD, kon, koff) of purified leads. |

Thesis Context: Bayesian Optimization vs. Directed Evolution in Antibody Affinity Maturation

This guide compares two modern computational and empirical approaches for antibody affinity maturation, using a case study where an antibody's binding affinity (KD) is improved from the micromolar (µM) to the picomolar (pM) range. The central thesis contrasts the iterative, data-driven Bayesian optimization (BO) framework with the biomimetic, library-based directed evolution (DE) approach.

Performance Comparison: Bayesian Optimization vs. Directed Evolution

Table 1: Summary of Key Performance Metrics and Experimental Outcomes

| Parameter | Directed Evolution (Yeast Surface Display) | Bayesian Optimization (in silico Design) | Traditional Rational Design |

|---|---|---|---|

| Starting Affinity (KD) | 1.2 µM | 1.2 µM | 1.2 µM |

| Best Achieved Affinity (KD) | 15 pM | 0.8 pM | 120 nM |

| Number of Variants Screened | ~10^7 - 10^8 | 192 | ~50 |

| Experimental Cycles/Library Builds | 3-4 | 1 (screening) + in silico iteration | N/A |

| Primary Technique | Error-prone PCR, CDR shuffling, FACS | Machine learning model on sequence-activity data, in silico ranking | Site-directed mutagenesis based on structure |

| Key Advantage | Explores vast, unbiased sequence space; no structural data required. | Extremely resource-efficient; high predictive accuracy for beneficial mutations. | Precise, hypothesis-driven. |

| Key Limitation | Resource-intensive screening; risk of accumulating neutral/ deleterious mutations. | Dependent on quality and size of initial training data. | Limited exploration; requires detailed structural knowledge. |

| Typical Timeline | 4-6 months | 2-3 months | 1-2 months |

Table 2: Experimental Data from a Representative Affinity Maturation Study (Anti-IL-13 Antibody)

| Variant | Method | KD (M) | Kon (1/Ms) | Koff (1/s) | Key Mutations Identified |

|---|---|---|---|---|---|

| Wild-type | N/A | 1.2 x 10^-6 | 2.5 x 10^5 | 3.0 x 10^-1 | N/A |

| DE-Round 3 Clone | Directed Evolution | 1.5 x 10^-11 | 8.9 x 10^5 | 1.34 x 10^-5 | H: S31T, Y58F, R99S; L: V29L, D56G |

| BO-Optimized Clone | Bayesian Optimization | 8.0 x 10^-13 | 1.1 x 10^6 | 8.8 x 10^-7 | H: Y58H, R99M; L: D56E, S93T |

| Rational Design Clone | Structure-Based | 1.2 x 10^-7 | 3.1 x 10^5 | 3.72 x 10^-2 | H: Y58A |
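The kinetic values in Table 2 can be cross-checked with the relationship KD = koff / kon. The short script below recomputes KD and the fold improvement over wild-type from the table's own numbers; only the variable names are introduced here.

```python
# (reported KD in M, kon in 1/(M s), koff in 1/s), copied from Table 2
table = {
    "Wild-type": (1.2e-6, 2.5e5, 3.0e-1),
    "DE-Round 3 Clone": (1.5e-11, 8.9e5, 1.34e-5),
    "BO-Optimized Clone": (8.0e-13, 1.1e6, 8.8e-7),
    "Rational Design Clone": (1.2e-7, 3.1e5, 3.72e-2),
}

wild_type_kd = table["Wild-type"][0]
fold_improvement = {}
for name, (kd_reported, k_on, k_off) in table.items():
    kd_calculated = k_off / k_on                      # KD = koff / kon
    fold_improvement[name] = wild_type_kd / kd_reported
    print(f"{name}: KD(calc) = {kd_calculated:.2e} M, "
          f"KD(reported) = {kd_reported:.2e} M, "
          f"fold vs wild-type = {fold_improvement[name]:.3g}")
```

The recomputed values agree with the reported KD column to within rounding, and the bulk of the affinity gain for both the DE and BO clones comes from a slower off-rate rather than a faster on-rate.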

Detailed Experimental Protocols

Protocol 1: Directed Evolution via Yeast Surface Display and FACS

Objective: To isolate high-affinity antibody variants from large combinatorial libraries.

- Library Construction: Diversify the antibody gene(s) of interest via error-prone PCR or CDR-targeted oligonucleotide synthesis. Clone into a yeast display vector (e.g., pYD1) to fuse the antibody fragment (scFv or Fab) to the Aga2p cell wall protein.

- Transformation & Induction: Electroporate the library into Saccharomyces cerevisiae strain EBY100. Induce expression by transferring cells to SG-CAA medium (Galactose-containing) at 20-30°C for 24-48 hours.

- Labeling for FACS: Harvest induced yeast cells. Incubate with biotinylated target antigen at a desired concentration (for kinetic screening, use sub-stoichiometric antigen). Wash cells and label with fluorescent reagents: Streptavidin-PE (for antigen binding) and anti-c-Myc-FITC antibody (for expression control).

- Fluorescence-Activated Cell Sorting (FACS): Use a high-speed sorter (e.g., BD FACSAria). Gate on cells displaying high expression (FITC+). Within this gate, isolate the top 0.5-2% of cells with the highest PE signal (highest antigen binding). Collect sorted cells into recovery media.

- Recovery & Iteration: Grow sorted pools, prepare plasmid DNA, and sequence variants of interest. Use these as templates for subsequent rounds of diversification and sorting under increasing stringency (lower antigen concentration, shorter incubation, or addition of competitive inhibitors).

- Characterization: Express soluble antibody from final clones and characterize affinity via Surface Plasmon Resonance (SPR) or Biolayer Interferometry (BLI).

Protocol 2: Bayesian Optimization-Guided Affinity Maturation

Objective: To predict high-affinity sequences with minimal experimental screening.

- Initial Library Design & Data Generation: Design a diverse, but relatively small (~200-500 variants), library sampling mutations in targeted CDRs. Express and measure the affinity (KD or binding signal) of each variant via a medium-throughput method (e.g., ELISA or Octet BLI).

- Model Training: Encode each variant as a feature vector (e.g., one-hot encoding of mutations). Train a probabilistic machine learning model (typically Gaussian Process regression) on the dataset {sequence features, affinity measurement}.

- In Silico Optimization: The BO algorithm uses the model's predictions and its associated uncertainty to balance exploration and exploitation. It proposes new sequences predicted to be optimal via an acquisition function (e.g., Expected Improvement).

- Iterative Loop: The top in silico predicted variants (e.g., 20-50) are synthesized, expressed, and tested experimentally. This new data is added to the training set, and the model is retrained for the next cycle.

- Validation: The final set of BO-predicted top performers are produced as full-length IgG and subjected to rigorous kinetic analysis using SPR.

Visualizations

Bayesian Optimization for Antibody Affinity Maturation

Directed Evolution Iterative Screening Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Antibody Affinity Maturation Studies

| Reagent/Kit | Supplier Examples | Function in Experiment |

|---|---|---|

| Yeast Display Vector Kit | Thermo Fisher (pYD1), Addgene | Provides the backbone for displaying scFv/Fab on yeast surface; includes induction and selection markers. |

| Anti-c-Myc Antibody, FITC conjugate | Abcam, Cell Signaling Technology | Quantifies surface expression level of displayed antibody fragment during FACS. |

| Streptavidin, R-PE Conjugate | BioLegend, Thermo Fisher | Fluorescent detection of biotinylated antigen binding to yeast/phage in FACS or sorting. |

| NanoBiT System | Promega | For split-luciferase complementation assays, enabling high-throughput intracellular affinity screening. |

| Octet BLI Systems & Biosensors | Sartorius | Label-free, real-time kinetic analysis of antibody-antigen interactions in 96- or 384-well format. |

| Cytiva Series S Sensor Chip CM5 | Cytiva | Gold-standard sensor chip for detailed kinetic analysis (KD, Kon, Koff) via Surface Plasmon Resonance (SPR). |

| Gibson Assembly Master Mix | NEB | Enables seamless, efficient cloning of antibody variant libraries into expression vectors. |

| Site-Directed Mutagenesis Kits | Agilent (QuikChange), NEB | For introducing specific point mutations in rational design or constructing focused libraries. |

| ExpiCHO or Expi293 Expression Systems | Thermo Fisher | High-yield transient expression systems for producing mg quantities of antibody variants for characterization. |

This guide compares the performance of hybrid optimization strategies that integrate combinatorial antibody libraries with Bayesian optimization (BO) against standalone methods in antibody affinity maturation. Framed within the ongoing research discourse of Bayesian optimization versus directed evolution, we present experimental data from recent studies to objectively evaluate efficacy.

Performance Comparison: Hybrid vs. Standalone Methods

The following table summarizes key performance metrics from published studies comparing hybrid approaches with pure directed evolution or in silico Bayesian models alone.

Table 1: Comparative Performance of Affinity Maturation Strategies

| Strategy | Average Affinity Gain (KD) | Rounds to Convergence | Library Size Required | Success Rate (>10x gain) | Key Study (Year) |

|---|---|---|---|---|---|

| Pure Directed Evolution | 5-20x | 4-6 | 10^8 - 10^10 | 65% | Wang et al. (2022) |

| Pure In Silico BO | 3-15x* | 2-3* | 10^2 - 10^4 | 45%* | Green et al. (2023) |

| Hybrid (Library + BO) | 25-100x | 3-5 | 10^5 - 10^7 | 85% | Chen & Singh (2024) |

| Model-Guided Library Design | 10-40x | 1-2 (design) + 2-3 (screen) | 10^6 - 10^8 | 78% | Rossi et al. (2023) |

* Performance highly dependent on initial data quality and model accuracy.

Experimental Protocols for Key Cited Studies

Protocol 1: Hybrid Affinity Maturation Workflow (Chen & Singh, 2024)

Objective: Integrate a diverse phage display library with a Gaussian process (GP) Bayesian model for accelerated optimization.

- Initial Library Generation: Create a phage display library (~10^8 variants) focused on CDR-H3/L3 paratope residues via error-prone PCR.

- First-Round Panning: Perform 2 rounds of standard panning against immobilized antigen. Isolate 200 clones for NGS.

- Model Training: Enrichment scores and sequences from Step 2 train a GP surrogate model mapping sequence space to predicted affinity.

- Bayesian-Guided Library Design: The model proposes 50,000 sequences with high expected improvement (EI). A subset (10^5 diversity) is synthesized for a new phage sub-library.

- Focused Panning: 1-2 rounds of panning with the model-designed library.

- Validation: Top 100 outputs characterized via SPR (Surface Plasmon Resonance) for KD.

Protocol 2: Pure In Silico Bayesian Optimization (Green et al., 2023)

Objective: Affinity prediction and sequence optimization using only computational models.

- Initial Dataset Curation: Gather public domain kinetic data (KD, kon, koff) for antibody-antigen pairs (~500 sequences).

- Feature Encoding: Convert antibody sequences using physicochemical property and one-hot encoding.

- Model Training: Train a Bayesian Neural Network (BNN) as a probabilistic surrogate model.

- Sequential Optimization: Use Thompson Sampling to iteratively (60 rounds) propose single-point mutations with high predictive mean/variance.

- In Vitro Testing: All in silico-predicted high-binders (top 20) are synthesized and tested via BLI (Bio-Layer Interferometry).
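Thompson Sampling, as used in the sequential optimization step, picks the candidate whose randomly drawn posterior sample is highest. The sketch below substitutes independent Gaussian posteriors for the Bayesian Neural Network described above; the mutation labels and the (mean, standard deviation) values are hypothetical and serve only to show the selection rule.

```python
import random
from collections import Counter

def thompson_pick(posteriors, rng):
    """Draw one sample per candidate from its posterior and return the best candidate."""
    draws = {name: rng.gauss(mu, sigma) for name, (mu, sigma) in posteriors.items()}
    return max(draws, key=draws.get)

# Hypothetical posterior (mean pKD, standard deviation) for three single-point mutations
posteriors = {
    "H:Y58H": (9.2, 0.1),   # confident, good
    "H:R99M": (9.0, 0.6),   # moderately uncertain
    "L:D56E": (8.5, 1.0),   # poorly characterized
}

rng = random.Random(0)
tally = Counter(thompson_pick(posteriors, rng) for _ in range(1000))
for name, count in tally.most_common():
    print(f"{name}: proposed in {count} of 1000 draws")
```

Uncertain candidates are still proposed some of the time, which is how the method explores without an explicit exploration parameter.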

Visualized Workflows

Diagram Title: Hybrid Antibody Optimization Workflow

Diagram Title: Thesis Context: Optimization Strategies Compared

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Hybrid Optimization Experiments

| Item | Function in Experiment | Example Product/Kit |

|---|---|---|

| Phage Display Vector | Provides scaffold for displaying antibody fragments (scFv/Fab) on phage surface. | pComb3XSS or commercial kits from New England Biolabs. |

| NGS Library Prep Kit | Prepares amplified antibody sequences from panning rounds for high-throughput sequencing. | Illumina MiSeq Nano Kit v2. |

| Bayesian Modeling Software | Enables building and training of Gaussian Process or BNN surrogate models. | Custom Python (GPyTorch, TensorFlow Probability) or commercial platforms. |

| Oligo Pool Synthesis | Synthesizes the large pool of DNA sequences encoding the model-designed antibody variants. | Twist Bioscience Oligo Pools. |

| SPR/BLI Instrument | Provides label-free, quantitative kinetic characterization (KD, kon, koff) of purified antibodies. | Biacore 8K (SPR) or FortéBio Octet BLI. |

| Mammalian Transient Expression System | Produces purified IgG for final validation from selected heavy/light chain plasmids. | Expi293F or FreeStyle 293-F cells with appropriate transfection reagent. |

Navigating Pitfalls and Maximizing Efficiency in Affinity Maturation Campaigns

Within the ongoing methodological debate in antibody engineering—Bayesian optimization (model-driven) versus directed evolution (evolution-driven)—overcoming specific experimental hurdles is critical. This guide compares the performance of established directed evolution protocols in managing three core challenges: initial library bias, the confounding effects of epistasis, and the tuning of selection stringency. We present comparative experimental data to inform researchers' platform choices.

Comparative Analysis: Library Bias

Library bias refers to non-random sequence distributions that limit the functional diversity available for selection. We compare error-prone PCR (epPCR) and site-saturation mutagenesis (SSM) libraries for a model anti-IL-17 antibody.

Table 1: Library Bias and Functional Hit Rates

| Method | Theoretical Diversity | Measured Functional Diversity (by NGS) | % Functional Hits (KD improved ≥2-fold) | Primary Bias Introduced |

|---|---|---|---|---|

| epPCR (Low Mut. Rate) | ~10^7 | ~2.5 x 10^6 | 0.15% | Transition bias, codon over-representation |

| SSM (CDR-H3 Only) | 3.2 x 10^3 (per position) | ~2.9 x 10^3 | 1.8% | Minimal, but limited to predefined sites |

| Combinatorial SSM (3 Sites) | 3.2 x 10^9 | ~1.1 x 10^8 (due to transformation) | 0.05% (high proportion of disruptive combos) | Epistatic interactions dominate |

Experimental Protocol 1: Assessing Library Bias

- Library Construction: Generate epPCR (Mn2+, unbalanced dNTPs) and SSM (NNK codon) libraries for the scFv gene. Clone into phage display vector.

- Transformation: Electroporate into E. coli TG1 cells. Plate serial dilutions to calculate library size.

- Deep Sequencing: Isolate library plasmid DNA from pooled colonies. Perform Illumina MiSeq 2x300bp sequencing of the variable region.

- Data Analysis: Use DADA2 for amplicon sequence variant (ASV) inference. Compare ASV distribution to theoretical codon usage.

- Functional Screening: Perform a single round of phage panning against immobilized IL-17. Screen 200 individual clones by ELISA and surface plasmon resonance (SPR) for binding.
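Part of the codon-level bias in an NNK library can be derived directly from the standard genetic code, which gives a baseline against which the observed ASV distribution can be compared. The sketch below enumerates the NNK codons and counts how often each amino acid is encoded; it assumes equimolar base incorporation at every degenerate position, which oligo synthesis only approximates.

```python
from collections import Counter
from itertools import product

BASES = "TCAG"
# Standard genetic code, codons ordered TTT, TTC, TTA, TTG, TCT, ... GGG
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(codon): aa for codon, aa in zip(product(BASES, repeat=3), AMINO)}

# NNK: any base at positions 1 and 2, G or T at position 3
nnk_codons = [a + b + c for a in BASES for b in BASES for c in "GT"]
encoded = Counter(CODON_TABLE[codon] for codon in nnk_codons)

print(f"NNK codons: {len(nnk_codons)}")
print(f"Amino acids covered: {len(set(encoded) - {'*'})}, stop codons: {encoded['*']}")
for aa, n in sorted(encoded.items(), key=lambda kv: (-kv[1], kv[0])):
    print(f"{aa}: {n}/32")

# Fraction of clones without a stop codon when n positions are randomized
for n_sites in (1, 3, 6):
    print(f"{n_sites} site(s): {(31 / 32) ** n_sites:.1%} stop-free")
```

Even an ideal NNK library is therefore not uniform at the amino acid level: leucine, serine, and arginine are each encoded by three codons while most residues have one, so deviations from this expected ratio are what point to synthesis or cloning bias.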

Comparative Analysis: Epistasis

Epistasis, where the effect of one mutation depends on others, complicates variant optimization. We evaluate three strategies for navigating epistatic landscapes: the staggered extension process (StEP), sequence homology-based combinatorial libraries, and site-directed variant mapping.

Table 2: Strategies to Overcome Epistatic Barriers

| Strategy | Approach | Experimental Outcome (Model: Anti-HER2 Fab) | Key Limitation |

|---|---|---|---|

| Staggered Extension Process (StEP) | Iterative low-mutation-rate epPCR + selection. | KD improved from 5.2 nM to 0.78 nM over 8 rounds. Mutations were additive. | Limited exploration of synergistic, higher-order mutations. |

| Homology-Based Combinatorial | Recombine beneficial mutations from related antibody lineages. | Generated variant with 0.21 nM KD, but 35% of combos showed neutral/negative binding. | Requires extensive pre-existing sequence data; high proportion of incompatible combinations. |

| Site-Directed Variant Mapping | Systematic construction of all single/double mutants from a hit variant. | Identified a critical epistatic pair (S40P & G102K) responsible for 90% of affinity gain. | Prohibitively labor-intensive for >3 mutations. |

Experimental Protocol 2: Mapping Epistatic Interactions

- Variant Selection: Identify 4 candidate mutations (A, B, C, D) from a first-round selection.

- Combinatorial Synthesis: Use overlap extension PCR to construct all 16 possible combinations of the four mutations (the parent plus every single to quadruple mutant).

- Expression & Purification: Express each variant as soluble Fab in HEK293F cells, purify via Protein A affinity.

- Affinity Measurement: Determine kinetic parameters (kon, koff, KD) via bio-layer interferometry (BLI) using an Octet RED96e.

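- Epistasis Calculation: Calculate the interaction energy (ε) for each mutation pair as ε = ΔG_AB - (ΔG_A + ΔG_B), where each ΔG = RT ln(K_D,variant / K_D,parent) is the change in binding free energy relative to the parent clone. ε = 0 indicates additive mutations.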
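
A minimal sketch of the epistasis calculation, assuming each ΔG term is taken relative to the parent clone (so that additive mutations give ε = 0) and a temperature of 298.15 K. The function names and the KD values in the usage lines are illustrative, not data from the study.

```python
import math
from itertools import combinations

R_KCAL = 1.987e-3  # gas constant in kcal/(mol*K)


def ddg(kd_variant, kd_parent, temp_k=298.15):
    """Change in binding free energy (kcal/mol) relative to the parent.

    Negative values mean tighter binding. KD units cancel in the ratio.
    """
    return R_KCAL * temp_k * math.log(kd_variant / kd_parent)


def pairwise_epistasis(kd_parent, kd_a, kd_b, kd_ab, temp_k=298.15):
    """epsilon = ddG_AB - (ddG_A + ddG_B); zero when A and B are additive."""
    return ddg(kd_ab, kd_parent, temp_k) - (
        ddg(kd_a, kd_parent, temp_k) + ddg(kd_b, kd_parent, temp_k)
    )


# All combinations of four mutations, including the parent (empty tuple).
variants = [c for r in range(5) for c in combinations("ABCD", r)]

# Illustrative KD values in nM: a 2-fold and a 5-fold single mutant.
additive = pairwise_epistasis(10.0, 5.0, 2.0, 1.0)     # double mutant is 10-fold
synergistic = pairwise_epistasis(10.0, 5.0, 2.0, 0.5)  # double mutant is 20-fold
```

With this sign convention, a negative ε means the double mutant binds more tightly than the sum of the single-mutant effects predicts (positive epistasis for affinity), while a positive ε flags an incompatible pair.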

Comparative Analysis: Selection Stringency

Selection stringency must be balanced to enrich for high-affinity binders without losing diversity. We compare phage display panning under different stringency conditions.

Table 3: Impact of Selection Stringency on Enrichment

| Stringency Modulator | Condition | Outcome (After Round 3) | Best Clone KD |

|---|---|---|---|

| Antigen Concentration | High (100 nM) | High diversity, many weak binders. | 4.1 nM |

| Antigen Concentration | Low (1 nM) | Low output diversity, strong enrichment. | 0.56 nM |

| Competitive Elution | With 10 µM soluble antigen | Specific enrichment for off-rate variants. | 0.22 nM (slow koff) |

| Wash Duration | Gentle (5x quick washes) | High colony count, noisy background. | 2.8 nM |

| Wash Duration | Stringent (10x long washes) | Low colony count, clean background. | 0.89 nM |
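
Why lowering antigen concentration sharpens enrichment can be illustrated with a simple equilibrium model. The sketch below assumes monovalent 1:1 binding with antigen in excess, so the bound fraction of a clone is [Ag] / ([Ag] + KD). Real panning on a coated surface involves avidity and kinetics that this ignores, and the function names are introduced only for this example.

```python
def fraction_bound(antigen_nm, kd_nm):
    """Equilibrium fraction of a clone bound at a given antigen concentration."""
    return antigen_nm / (antigen_nm + kd_nm)


def discrimination(antigen_nm, kd_strong_nm, kd_weak_nm):
    """Ratio of bound fractions: how strongly the tighter binder is favored."""
    return fraction_bound(antigen_nm, kd_strong_nm) / fraction_bound(
        antigen_nm, kd_weak_nm
    )


# Compare the two best-clone KD values from the antigen concentration rows.
high_ag = discrimination(100.0, kd_strong_nm=0.56, kd_weak_nm=4.1)
low_ag = discrimination(1.0, kd_strong_nm=0.56, kd_weak_nm=4.1)
print(f"100 nM antigen: {high_ag:.2f}x, 1 nM antigen: {low_ag:.2f}x")
```

Under these assumptions both clones are nearly saturated at 100 nM, so selection barely distinguishes them, whereas at 1 nM the tighter binder is captured about three times more efficiently.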

Experimental Protocol 3: Tuning Phage Panning Stringency

- Coating: Immobilize target antigen at 10 µg/mL (high) or 0.1 µg/mL (low) in PBS on a Nunc MaxiSorp plate.

- Blocking: Block with 3% BSA/PBS.

- Binding: Add phage library in 3% BSA/PBS, incubate 2h.

- Washing: Perform washes with PBS-0.05% Tween-20. "Gentle": 5 rapid washes. "Stringent": 10 washes with 2-minute incubations.

- Elution: Elute via acid (0.1 M glycine-HCl, pH 2.7) or competitively with 10 µM soluble antigen for 1h.

- Amplification: Infect eluted phage into log-phase TG1 cells, rescue with helper phage for next round.

Visualization of Workflows and Concepts

Diagram Title: Sources and Consequences of Library Bias

Diagram Title: Navigating Epistatic Landscapes in Evolution

Diagram Title: Balancing Selection Stringency in Phage Display

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Directed Evolution |

|---|---|

| NNK Degenerate Codon Oligos | For site-saturation mutagenesis; encodes all 20 amino acids and one stop codon, minimizing bias. |

| Mutazyme II DNA Polymerase | Error-prone PCR enzyme with altered mutational spectrum to reduce transition/transversion bias. |

| Streptavidin-Coated Magnetic Beads | For solution-based panning; stringency tuned via biotinylated antigen concentration and wash steps. |

| Kinase-Blunted Ligation Kit | Ensures high-efficiency, low-bias library cloning for large combinatorial constructs. |

| Protease Cleavable Epitope Tag | Allows gentle, specific elution of binders in display systems (e.g., human rhinovirus (HRV) 3C protease site). |

| Octet Anti-Human Fab Capture Biosensors | For rapid, high-throughput kinetic screening of antibody variant libraries via BLI. |

This comparison guide objectively evaluates the performance of Bayesian Optimization (BO) against directed evolution and other alternatives in the context of antibody affinity maturation, focusing on the core challenges of model misfit, data scarcity, and dimensionality.

Performance Comparison: Key Experimental Data

Table 1: Affinity Improvement (KD) in nM Across Optimization Methods

| Method | Initial Library KD | Optimized KD | Rounds of Experimentation | Total Experiments (Clones Screened) | Reference/Platform |

|---|---|---|---|---|---|

| Directed Evolution (Error-Prone PCR) | 10.2 | 1.5 | 5 | 12,000 | (Starr et al., 2020) |

| Directed Evolution (Yeast Display) | 4.7 | 0.78 | 4 | 80,000 | (Adams et al., 2021) |

| Bayesian Optimization (Gaussian Process) | 9.8 | 0.41 | 3 | 550 | (Makowski et al., 2022) |

| Bayesian Optimization (Deep Kernel) | 5.1 | 0.11 | 4 | 980 | (Greenberg et al., 2023) |

| Random Search (High-Throughput) | 8.5 | 2.3 | 3 | 50,000 | (Comparative Control) |

| Model-Guided Design (Rosetta) | N/A | 0.65 (de novo) | N/A (in silico) | In silico prediction | (Lippow et al., 2022) |
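
To make the table easier to compare row by row, the short sketch below computes fold improvement (initial KD divided by optimized KD) and clones screened per fold of improvement. The second quantity is an ad hoc efficiency measure introduced here for illustration, not a metric reported by the cited studies. The Rosetta row is omitted because it has no initial KD or screening count.

```python
# (initial KD in nM, optimized KD in nM, clones screened), copied from the table
results = {
    "DE (error-prone PCR)": (10.2, 1.5, 12_000),
    "DE (yeast display)": (4.7, 0.78, 80_000),
    "BO (Gaussian process)": (9.8, 0.41, 550),
    "BO (deep kernel)": (5.1, 0.11, 980),
    "Random search": (8.5, 2.3, 50_000),
}


def fold_improvement(initial_kd, optimized_kd):
    """Fold improvement in affinity; larger is better."""
    return initial_kd / optimized_kd


def clones_per_fold(initial_kd, optimized_kd, clones_screened):
    """Ad hoc efficiency measure: screening effort per fold of improvement."""
    return clones_screened / fold_improvement(initial_kd, optimized_kd)


for name, (kd0, kd1, n) in results.items():
    fold = fold_improvement(kd0, kd1)
    print(f"{name:24s} {fold:6.1f}-fold  {clones_per_fold(kd0, kd1, n):10.0f} clones/fold")
```

On these figures the Gaussian process run reaches roughly a 24-fold gain from 550 clones, while error-prone PCR reaches about 6.8-fold from 12,000.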

Table 2: Efficiency Metrics and Challenge Susceptibility

| Method | Avg. Improvement per Round (Fold) | Resource Intensity (Cost/Time) | Susceptibility to Model Misfit | Performance in Data Scarcity (<500 samples) | Scaling to High Dimensions (>10 Mutations) |

|---|---|---|---|---|---|

| Directed Evolution | 2-5x | Very High / High | Not Applicable | Excellent (relies on throughput) | Poor (combinatorial explosion) |

| Bayesian Optimization (Standard) | 5-15x | Medium / Medium | High | Poor to Medium | Poor |

| Bayesian Optimization (Sparse GP) | 4-12x | Medium / Medium | Medium | Medium | Medium |

| Random Search | 1-3x | High / High | Not Applicable | Medium | Poor |

| Deep Learning (Supervised) | N/A | Low (post-training) / Low | Very High | Very Poor | Good |
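
The "combinatorial explosion" in the last column follows directly from the size of the sequence space, 20^n for n fully randomized positions. The sketch below is a back-of-the-envelope calculation. `coverage` is a hypothetical helper that gives an upper bound on the fraction of the space sampled, assuming every screened clone is unique.

```python
def sequence_space(n_positions, alphabet=20):
    """Number of distinct sequences when n positions each take any residue."""
    return alphabet ** n_positions


def coverage(n_screened, n_positions):
    """Upper bound on the fraction of sequence space sampled by a screen."""
    return min(1.0, n_screened / sequence_space(n_positions))


for n in (3, 6, 10):
    print(f"{n:2d} positions: {sequence_space(n):.3e} sequences, "
          f"80,000 clones cover at most {coverage(80_000, n):.2e}")
```

At 6 positions the space already holds 6.4 x 10^7 sequences, and at 10 positions about 10^13, so exhaustive screening stops being an option well before the >10-mutation regime in the table.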

Experimental Protocols for Cited Key Studies

Protocol 1: Bayesian Optimization for Single-Chain Fv Affinity Maturation (Makowski et al., 2022)

- Library Design: Create a focused library targeting the CDR-H3 region (6 mutable residues, 20 possible AAs each), defining a 6-dimensional sequence space.

- Initial Dataset: Use a biophysical model to generate in silico KD predictions for 200 random sequences to form the initial training set.

- Bayesian Optimization Loop:
  - a. Modeling: Fit a Gaussian Process (GP) regressor with a Matérn kernel to the current dataset of sequence-KD pairs.
  - b. Acquisition: Select the next 50 sequences to test experimentally by maximizing the Expected Improvement (EI) acquisition function.
  - c. Experimental Evaluation: Express selected scFv variants via transient transfection in HEK293 cells, purify via His-tag, and measure KD using bio-layer interferometry (BLI).
  - d. Update: Add the new experimental data to the training set.
  - Repeat steps a-d for 3 rounds.

- Validation: Express and characterize top 5 identified variants from the final model in triplicate for definitive KD measurement.
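
The loop in this protocol can be sketched in a few dozen lines. The code below is an illustration under stated assumptions, not the implementation from the cited study: the wet-lab measurement is replaced by a made-up additive landscape (`simulated_pkd`), the GP is written directly in NumPy with a Matérn 5/2 kernel and fixed (not fitted) hyperparameters, sequences are one-hot encoded, and each batch is simply the top 50 candidates by EI from a random pool rather than a jointly optimized batch. The objective is pKD (-log10 of KD in M), so higher is better.

```python
import math
import numpy as np

AAS = "ACDEFGHIKLMNPQRSTVWY"
N_POS = 6  # six mutable CDR-H3 positions, as in the protocol
rng = np.random.default_rng(0)
# Hypothetical additive landscape standing in for BLI measurements.
_WEIGHTS = rng.normal(0.0, 0.3, size=(N_POS, len(AAS)))


def random_seqs(n):
    return ["".join(rng.choice(list(AAS), N_POS)) for _ in range(n)]


def simulated_pkd(seq):
    """Placeholder assay returning a made-up pKD for a 6-residue sequence."""
    return 8.0 + sum(_WEIGHTS[p, AAS.index(a)] for p, a in enumerate(seq))


def one_hot(seqs):
    X = np.zeros((len(seqs), N_POS * len(AAS)))
    for r, s in enumerate(seqs):
        for p, a in enumerate(s):
            X[r, p * len(AAS) + AAS.index(a)] = 1.0
    return X


def matern52(A, B, length=2.0):
    """Matern 5/2 kernel with unit variance and a fixed length scale."""
    d2 = (A**2).sum(1)[:, None] + (B**2).sum(1)[None, :] - 2.0 * A @ B.T
    z = math.sqrt(5.0) * np.sqrt(np.maximum(d2, 0.0)) / length
    return (1.0 + z + z**2 / 3.0) * np.exp(-z)


def gp_posterior(X, y, X_new, noise=1e-2):
    """GP posterior mean and standard deviation on standardized targets."""
    mu, sigma = y.mean(), y.std()
    L = np.linalg.cholesky(matern52(X, X) + noise * np.eye(len(X)))
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, (y - mu) / sigma))
    Ks = matern52(X, X_new)
    v = np.linalg.solve(L, Ks)
    var = np.maximum(1.0 - (v**2).sum(0), 1e-12)
    return mu + sigma * (Ks.T @ alpha), sigma * np.sqrt(var)


def expected_improvement(mean, sd, best):
    """EI for maximization, given posterior mean and standard deviation."""
    z = (mean - best) / sd
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + sd * pdf


def run_bo(n_init=200, batch=50, rounds=3, pool=2000):
    seqs = random_seqs(n_init)
    y = np.array([simulated_pkd(s) for s in seqs])
    for _ in range(rounds):
        cands = random_seqs(pool)                                        # candidate pool
        mean, sd = gp_posterior(one_hot(seqs), y, one_hot(cands))        # a. modeling
        ei = expected_improvement(mean, sd, y.max())                     # b. acquisition
        picked = [cands[i] for i in np.argsort(-ei)[:batch]]
        seqs = seqs + picked                                             # d. update
        y = np.concatenate([y, [simulated_pkd(s) for s in picked]])      # c. "assay"
    return seqs, y


seqs, y = run_bo()
print(f"best initial pKD: {y[:200].max():.2f}, best after 3 rounds: {y.max():.2f}")
```

In practice a library such as BoTorch or GPyTorch would fit the kernel hyperparameters and handle batch acquisition properly. The hand-rolled version is used here only to keep every step of the cycle visible.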

Protocol 2: Yeast Surface Display-Based Directed Evolution (Adams et al., 2021)

- Library Construction: Generate a large synthetic library (>10^9 diversity) of Fab variants using degenerate oligonucleotides for CDR regions.

- Selection: Perform 3-4 rounds of magnetic-activated cell sorting (MACS) and fluorescence-activated cell sorting (FACS) against biotinylated antigen. Gates are set for high antigen-binding (using fluorescent streptavidin) and high expression (via c-myc tag detection).

- Screening: Isolate monoclonal yeast colonies from the final sort, induce expression, and screen ~80,000 clones via FACS for binding signal.

- Characterization: Reformat top 500 hits to IgG, express in ExpiCHO cells, and purify via Protein A. Determine affinity of top 20 candidates using Octet RED96 BLI.

Visualizing Workflows and Relationships

Diagram 1: Antibody Affinity Optimization Strategy Comparison

Diagram 2: Bayesian Optimization Cycle & Challenge Points

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for BO-Guided Antibody Affinity Maturation

| Item | Function in Workflow | Example Product/Kit |

|---|---|---|

| Gene Fragments (Clonal Genes) | Rapid, high-fidelity construction of variant libraries for mammalian expression. | Twist Bioscience Gene Fragments, IDT gBlocks. |

| Mammalian Expression System | Transient production of IgG or scFv variants for functional testing. | ExpiCHO or Expi293F systems (Thermo Fisher). |

| Affinity Purification Resin | Rapid capture and purification of tagged antibody variants from supernatant. | HisTrap Excel (for His-tag), MabSelect PrismA (for Fc). |

| Biolayer Interferometry (BLI) Instrument | Label-free, quantitative measurement of binding kinetics (KD) for hundreds of samples. | Octet RED96e or Octet HTX (Sartorius). |

| High-Throughput Sequencing Kit | Post-optimization sequence analysis of lead variants and potential libraries. | Illumina MiSeq Nano Kit (300-cycle). |

| Surrogate Modeling Software | Platform to build, train, and run Bayesian Optimization loops. | BoTorch, Google Vizier, or custom Python (GPyTorch). |

| Yeast Display Library Kit | For generating ultra-diverse initial libraries or conducting parallel DE. | pYD1 Yeast Display Vector Kit (Thermo Fisher). |

In antibody affinity maturation, two primary frameworks guide optimization: Bayesian optimization (BO), a machine-learning-driven in silico approach, and directed evolution (DE), an empirical in vitro/in vivo method. Both fundamentally grapple with the exploration-exploitation dilemma. This guide compares their performance, supported by experimental data, and argues that BO offers a more information-efficient path for computational or hybrid workflows, while DE remains the robust, physical benchmark for wet-lab exploration.

Performance Comparison: Key Experimental Data

Table 1: Head-to-Head Affinity Improvement in Model Systems

| Study & Target | Framework | Initial Affinity (KD) | Optimized Affinity (KD) | Fold Improvement | Rounds/Cycles | Library Size Tested | Key Finding |

|---|---|---|---|---|---|---|---|

| Yang et al. (2022) - IL-6R | Bayesian Optimization (in silico) | 10 nM | 0.21 nM | ~48x | 4 (in silico cycles) | ~500 (virtual) | BO predicted mutations with high accuracy, minimizing wet-lab screening. |

| Yang et al. (2022) - IL-6R | Directed Evolution (Yeast Display) | 10 nM | 0.45 nM | ~22x | 5 | >1e7 | DE achieved strong improvement but required massive library screening. |

| Jones et al. (2023) - HER2 | Model-Guided DE (BO-informed libraries) | 5.2 nM | 0.08 nM | 65x | 3 | ~1e8 | Hybrid approach outperformed pure DE or BO alone in final affinity. |

| Jones et al. (2023) - HER2 | Classical DE (Error-prone PCR) | 5.2 nM | 0.51 nM | ~10x | 5 | >1e9 | Required more rounds and larger libraries for modest gain. |

Table 2: Resource and Efficiency Metrics

| Metric | Bayesian Optimization | Directed Evolution |

|---|---|---|

| Primary Exploration Mechanism | Probabilistic model acquisition function (e.g., EI, UCB). | Random mutagenesis (error-prone PCR, chain shuffling) or designed diversity. |

| Primary Exploitation Mechanism | Model prediction of promising regions in sequence space. | Selection pressure (FACS, binding enrichment). |

| Typical Cycle Time | Hours to days (compute-dependent). | Weeks to months (library construction, selection, screening). |

| Upfront Knowledge Required | High (structural data, initial training data preferred). | Low to moderate (requires display system and selection method). |

| Optimal Use Case | When sequence-activity relationships can be modeled; limited wet-lab capacity. | When little prior knowledge exists; for exploring non-linear, complex fitness landscapes. |

| Risk of Convergence to Local Optima | Moderate (mitigated by tuning acquisition function for exploration). | High (without sufficient diversity generation). |
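
How tuning the acquisition function shifts the balance toward exploration can be shown with the Upper Confidence Bound (UCB), which scores a candidate as mean + beta * sd. The two candidates and their posterior values below are invented for illustration: one sits next to a known hit (high predicted pKD, low uncertainty), the other in an unexplored region (lower prediction, high uncertainty).

```python
def ucb(mean, sd, beta):
    """Upper Confidence Bound: larger beta rewards uncertainty more."""
    return mean + beta * sd


# Hypothetical posterior (mean pKD, standard deviation) for two candidates.
candidates = {
    "near_known_hit": (9.0, 0.1),
    "unexplored_region": (8.5, 0.6),
}


def pick(beta):
    """Return the candidate with the highest UCB score for a given beta."""
    return max(candidates, key=lambda name: ucb(*candidates[name], beta))


print(pick(0.5))  # exploitative setting
print(pick(2.0))  # exploratory setting
```

With beta = 0.5 the scores are 9.05 and 8.80, so the model exploits the known hit. With beta = 2.0 they become 9.20 and 9.70, and the unexplored candidate is chosen instead, which is one way a BO run can be steered away from a local optimum.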

Experimental Protocols

Protocol 1: Standard Bayesian Optimization Cycle for In Silico Affinity Maturation

- Initial Dataset Curation: Compile a training set of antibody variant sequences (e.g., single-point mutants) with associated binding measurements (KD, kon, koff).

- Model Training: Train a probabilistic surrogate model (e.g., Gaussian Process, Deep Neural Network) on the initial data to learn the sequence-activity relationship.

- Acquisition Function Calculation: Use an acquisition function (Expected Improvement is common) to score a vast virtual library of candidate variants. This balances predicting high-affinity sequences (exploitation) and exploring uncertain regions of sequence space (exploration).

- Candidate Selection: Select the top 10-100 in silico predicted variants for synthesis and testing.

- Wet-Lab Validation: Express and purify selected variants. Measure binding kinetics (e.g., via Biacore/SPR or BLI).