Beyond Random Screening: A Practical Guide to Bayesian Optimization for Next-Generation Antibody Design

Abstract

This article provides researchers and drug development professionals with a comprehensive introduction to Bayesian optimization (BO) for antibody design. We first explore the foundational limitations of traditional high-throughput screening and the core components of a BO framework. We then detail a methodological workflow for implementation, covering sequence space definition, acquisition functions, and successful case studies. Practical sections address common experimental and computational challenges in model construction and hyperparameter tuning. Finally, we compare BO against alternative machine learning approaches and discuss validation strategies for in silico predictions. The conclusion synthesizes key takeaways and outlines future directions for integrating BO with structural modeling and clinical translation.

Why Bayesian Optimization? From High-Throughput Screening to Intelligent Antibody Discovery

The advent of machine learning-driven Bayesian optimization represents a paradigm shift in antibody design, promising to navigate the vast protein sequence space with unprecedented efficiency. To fully appreciate this shift, one must first understand the fundamental limitations of the traditional discovery pillars upon which it improves: random discovery (e.g., animal immunization, phage/yeast display) and directed evolution (e.g., error-prone PCR, site-saturation mutagenesis). This document details the technical bottlenecks of these classical approaches, providing the essential rationale for the integration of probabilistic models and active learning in next-generation antibody engineering.

Table 1: Throughput vs. Coverage Limits of Traditional Methods

| Method | Theoretical Library Size | Practical Screening Throughput | Effective Sequence Space Coverage | Primary Bottleneck |

|---|---|---|---|---|

| Animal Immunization | ~10⁸ B cells (mouse) | 10² - 10³ clones (hybridoma screening) | Extremely Low (<10⁻¹⁰) | Immune tolerance, low throughput screening, species bias. |

| Phage Display (Naïve) | 10⁹ - 10¹¹ | 10⁷ - 10¹¹ (panning selection) | Moderate (10⁻⁹ - 10⁻⁷) | Translational bias, folding issues in E. coli, limited diversity source. |

| Yeast Surface Display | 10⁷ - 10⁹ | 10⁷ - 10⁸ (FACS) | Moderate to High (10⁻⁸ - 10⁻⁶) | Eukaryotic expression burden, lower transformation efficiency. |

| Error-Prone PCR (1st Gen) | 10¹⁰ - 10¹³ | <10⁸ | Local (focused on parent) | Random, non-targeted mutations; high proportion of deleterious variants. |

| Site-Saturation Mutagenesis | 20ⁿ (n=residues) | <10⁸ | Local & Combinatorial | Combinatorial explosion; screening cannot cover full combinatorial library. |

Table 2: Key Experimental Metrics and Limitations

| Parameter | Random Discovery (Immunization/Display) | Directed Evolution | Implication for Design |

|---|---|---|---|

| Affinity Maturation (Kd Gain) | 10-1000 nM → ~1 nM (3-5 rounds) | 1 nM → 10-100 pM (multiple cycles) | Labor-intensive, diminishing returns per round. |

| Development Timeline (to candidate) | 6-12 months | Adds 3-6 months per evolution cycle | Slow iteration loops hinder rapid response. |

| Multispecificity Engineering | Poor (relies on chance pairing) | Challenging (requires parallel evolution) | Lacks a systematic framework for co-optimization. |

| Humanization Requirement | High (for animal sources) | Medium (can start from human scaffold) | Adds steps, can introduce immunogenicity risk. |

Detailed Experimental Protocols Highlighting Bottlenecks

Protocol 1: Phage Display Panning with a Naïve Library

Objective: Isolate antigen-specific antibody fragments (scFv/Fab).

Bottleneck Focus: The stochastic nature of panning and amplification biases.

- Library Preparation: Use a naïve human scFv phage library (e.g., Yale CAT-derived, ~10¹¹ diversity).

- Panning: Immobilize 10 µg of target antigen on an immuno-tube/plate. Block with 2% BSA/PBS. Incubate with 10¹² phage particles in blocking buffer for 1-2 hours at RT.

- Washing: Perform 10 washes with PBST (0.1% Tween-20) in Round 1, escalating to 20 washes in subsequent rounds to increase stringency.

- Elution: Elute bound phage using 1 mL of 100 mM triethylamine (or glycine-HCl, pH 2.2) for 10 minutes, then neutralize with 0.5 mL of 1 M Tris-HCl, pH 7.4.

- Amplification: Infect mid-log phase TG1 E. coli with eluted phage, culture, and rescue with helper phage (e.g., M13KO7) to produce phage for the next round. CRITICAL BOTTLENECK: This amplification step introduces propagation bias, where fast-growing clones outcompete others, irrespective of affinity.

- Screening: After 3-4 rounds, pick 96-384 individual colonies for monoclonal phage ELISA to identify binders.

Protocol 2: Affinity Maturation via Error-Prone PCR & Yeast Display

Objective: Improve antibody affinity through random mutagenesis and FACS.

Bottleneck Focus: The "search blindness" of random mutagenesis.

- Gene Diversification: Subject the parent antibody VH/VL genes to error-prone PCR with Mutazyme II polymerase (GeneMorph II kit), aiming for 1-3 amino acid substitutions per gene.

- Library Construction: Clone mutated fragments into a yeast display vector (e.g., pYD1) via homologous recombination in Saccharomyces cerevisiae strain EBY100. Aim for a library of >10⁷ independent transformants.

- Expression & Labeling: Induce expression in SG-CAA medium at 20°C. Label cells with: a) Anti-c-Myc-FITC (for expression check), b) Biotinylated antigen at varying concentrations (e.g., 100 nM, 10 nM, 1 nM), c) Streptavidin-PE (for detection).

- FACS Sorting: Use a high-speed sorter. Gate on double-positive (FITC⁺/PE⁺) cells. For the first sort, use a high antigen concentration to collect binders. CRITICAL BOTTLENECK: Subsequent sorts use decreasing antigen concentrations to select for higher affinity, but the process is blind to stability or developability. The final "winners" are often those that express well and bind, not necessarily the best binders in the theoretical mutant space.

- Characterization: Sequence top clones and characterize soluble fragments via SPR/BLI.

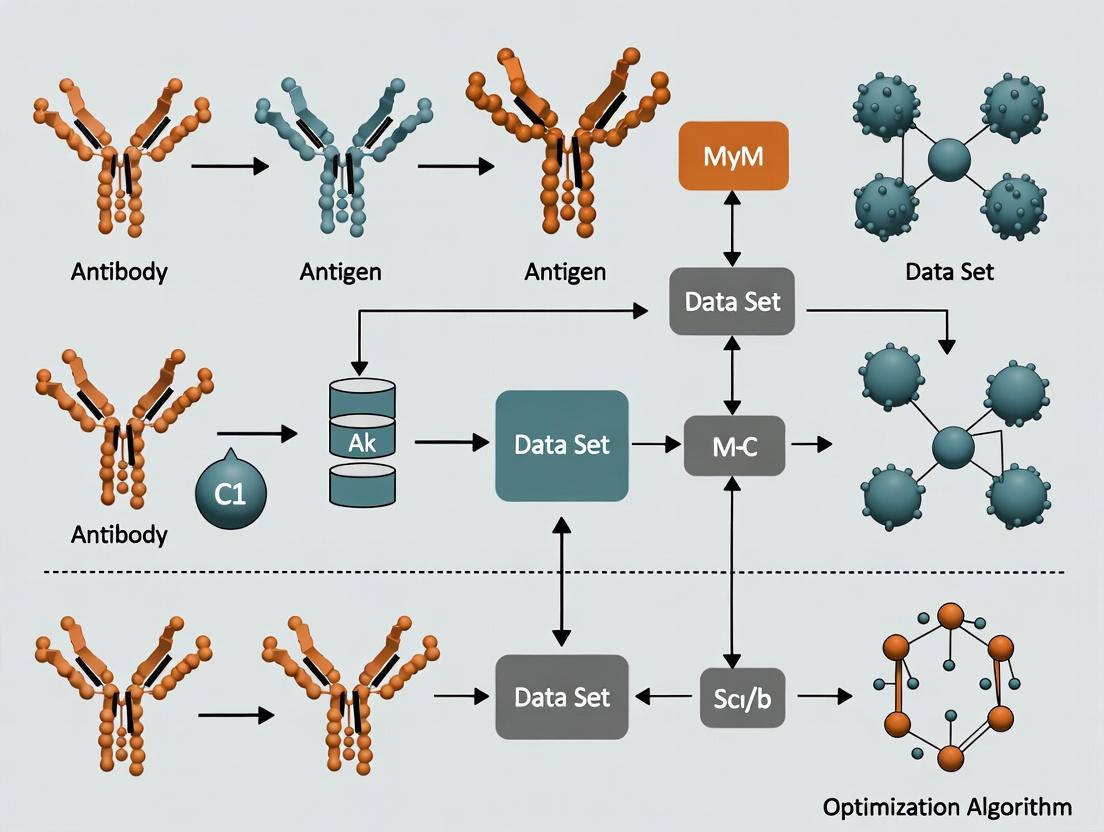

Visualizing Workflows and Limitations

Diagram Title: Directed Evolution Cycle Bottlenecks

Diagram Title: The Combinatorial Explosion Problem

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Reagents for Traditional Antibody Discovery

| Reagent/Material | Function & Relevance to Bottlenecks | Example/Supplier |

|---|---|---|

| Naïve Human scFv Phage Library | Source of initial diversity. Bottleneck: Limited by donor sampling and cloning biases. | Synthetic Human Combinatorial Antibody Library (HuCAL), Yale CAT library. |

| Helper Phage (M13KO7) | Essential for packaging and amplifying phage during panning. Bottleneck: Causes propagation bias. | NEB (M13KO7 Helper Phage). |

| Yeast Display Vector (pYD1) | Surface expression system for eukaryotic folding and FACS. Bottleneck: Lower transformation efficiency vs. phage. | Invitrogen pYD1. |

| Error-Prone PCR Kit (Mutazyme II) | Introduces random mutations. Bottleneck: Mutational bias, non-targeted. | Agilent (GeneMorph II). |

| Biotinylated Antigen | Critical for labeling during FACS/panning. Bottleneck: Random amine-directed biotinylation can mask epitopes, so site-specific labeling is preferred where available. | Prepared via NHS-PEG4-Biotin conjugation kits (Thermo Fisher). |

| Anti-c-Myc-FITC Antibody | Detection tag for expression normalization in yeast display. Enables gating on well-expressed clones. | Commercial clones (e.g., 9E10). |

| Fluorescence-Activated Cell Sorter (FACS) | High-throughput screening instrument. Ultimate bottleneck: Maximum ~10⁸ cells sorted per experiment. | BD FACSAria, Beckman Coulter MoFlo. |

| Surface Plasmon Resonance (SPR) Chip (CM5) | For kinetics characterization (KD). Bottleneck: Low-throughput, expensive, follows screening. | Cytiva Series S CM5. |

Within the domain of computational antibody design, the search for high-affinity, developable candidates is a high-dimensional, expensive, and noisy optimization problem. Each experimental evaluation of a candidate sequence—via surface plasmon resonance (SPR) or next-generation sequencing (NGS)-based assays—is costly and time-consuming. Bayesian Optimization (BO) provides a principled mathematical framework for navigating such complex design spaces with maximal efficiency, transforming the search from random screening to intelligent, probabilistic guidance. This whitepaper details the core philosophy and technical methodology of BO, contextualized for its transformative application in therapeutic antibody discovery.

The Probabilistic Framework: From Prior Belief to Posterior Knowledge

The essence of BO is a recursive Bayesian inference loop. It formalizes the designer's prior assumptions about the unknown objective function (e.g., binding affinity as a function of sequence) and sequentially updates these beliefs with observed data to guide the search toward promising regions.

Core Algorithmic Loop:

- Build a Probabilistic Surrogate Model: A prior distribution is placed over the objective function, typically using a Gaussian Process (GP).

- Compute an Acquisition Function: This utility function balances exploration (probing uncertain regions) and exploitation (refining known good regions) using the surrogate's posterior.

- Select and Evaluate the Next Point: The candidate maximizing the acquisition function is selected for expensive experimental evaluation.

- Update the Surrogate Model: The new observation is incorporated, updating the posterior belief. The loop repeats until a resource budget is exhausted.

Diagram Title: Bayesian Optimization Closed Loop

Gaussian Process as a Surrogate Model

A Gaussian Process defines a distribution over functions, fully specified by a mean function m(x) and a covariance (kernel) function k(x, x').

Posterior Inference: Given observed data D = (X, y) and a zero prior mean, the posterior predictive distribution for a new point \( x_* \) is Gaussian with closed-form mean and variance:

\[ \mu(x_*) = k_*^\top K^{-1} y \]

\[ \sigma^2(x_*) = k(x_*, x_*) - k_*^\top K^{-1} k_* \]

where \( K \) is the covariance matrix of the observed points and \( k_* \) is the vector of covariances between \( x_* \) and the observed points.
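The posterior formulas above can be sketched in a few lines of NumPy. This is a minimal illustration, not a production GP: the function names `rbf_kernel` and `gp_posterior`, the unit-variance RBF kernel, and the small `noise_var` term added to the diagonal of K for numerical stability are all assumptions made for this example.

```python
import numpy as np

def rbf_kernel(A, B, length_scale=1.0):
    """RBF kernel k(x, x') = exp(-||x - x'||^2 / (2 * length_scale^2))."""
    sq_dists = (A**2).sum(axis=1)[:, None] + (B**2).sum(axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-0.5 * np.maximum(sq_dists, 0.0) / length_scale**2)

def gp_posterior(X, y, X_star, length_scale=1.0, noise_var=1e-6):
    """Posterior mean and variance of a zero-mean GP at the query points X_star."""
    # K: covariance of observed points (noise_var on the diagonal is an assumption)
    K = rbf_kernel(X, X, length_scale) + noise_var * np.eye(len(X))
    # k_star: covariances between observed points and query points, shape (n, m)
    k_star = rbf_kernel(X, X_star, length_scale)
    K_inv_y = np.linalg.solve(K, y)
    K_inv_k = np.linalg.solve(K, k_star)
    mean = k_star.T @ K_inv_y                       # k_*^T K^{-1} y
    var = 1.0 - np.sum(k_star * K_inv_k, axis=0)    # k(x_*, x_*) = 1 for this kernel
    return mean, np.maximum(var, 0.0)

# Toy data: three observed points, queried at one observed and one distant location
X_train = np.array([[0.0], [1.0], [2.0]])
y_train = np.array([0.0, 1.0, 0.5])
mu, var = gp_posterior(X_train, y_train, np.array([[1.0], [5.0]]))
```

At an already observed point the posterior variance collapses toward zero, while far from the data it returns to the prior variance. This is the behaviour the acquisition function later exploits.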

Table 1: Common Kernel Functions in Bayesian Optimization for Antibody Design

| Kernel Name | Mathematical Form (Simplified) | Key Property | Applicability in Antibody Design |

|---|---|---|---|

| Matérn 5/2 | k(d) = (1 + √5d + 5d²/3)exp(-√5d) | Less smooth than RBF, accommodates moderate variations. | Default choice for physical landscapes; handles noisy affinity measurements well. |

| Radial Basis Function (RBF) | k(d) = exp(-d²/2) | Infinitely differentiable, assumes very smooth functions. | Useful for modeling stable, continuous properties like solubility or thermal stability. |

| Dot Product | k(x, x') = σ₀² + x · x' | Captures linear relationships. | Can model linear dependencies on specific sequence features (e.g., charge). |
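The simplified kernel forms in the table can be written directly as functions. In this sketch, `d` is assumed to be a distance already divided by the kernel length scale, and the function names are illustrative rather than taken from any library.

```python
import math

def matern52(d):
    """Matern 5/2 kernel: (1 + sqrt(5) d + 5 d^2 / 3) * exp(-sqrt(5) d)."""
    s = math.sqrt(5.0) * d
    return (1.0 + s + s * s / 3.0) * math.exp(-s)

def rbf(d):
    """RBF kernel: exp(-d^2 / 2)."""
    return math.exp(-0.5 * d * d)

def dot_product(x, x_prime, sigma0=1.0):
    """Dot-product kernel: sigma0^2 + x . x'."""
    return sigma0**2 + sum(a * b for a, b in zip(x, x_prime))

# Covariance at increasing scaled distances; both kernels equal 1 at d = 0
for d in (0.0, 0.5, 1.0, 2.0):
    print(f"d={d:.1f}  matern52={matern52(d):.4f}  rbf={rbf(d):.4f}")
```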

Acquisition Functions for Guided Search

The acquisition function α(x) quantifies the utility of evaluating a candidate. Key strategies include:

- Expected Improvement (EI): EI(x) = E[max(0, f(x) - f(x⁺))]

- f(x⁺) is the current best observation.

- Upper Confidence Bound (UCB): UCB(x) = μ(x) + κσ(x)

- κ controls the exploration-exploitation trade-off.

- Probability of Improvement (PI): PI(x) = P(f(x) > f(x⁺))
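When the surrogate posterior at a candidate is Gaussian with mean μ and standard deviation σ, all three acquisition functions have closed forms. The sketch below uses only the standard library. The function names and the optional offset `xi` (the ξ jitter that appears in the comparison table) are assumptions of this example, and the objective is assumed to be maximized.

```python
import math

def _norm_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def _norm_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mu, sigma, f_best, xi=0.01):
    """EI = (mu - f_best - xi) * Phi(z) + sigma * phi(z), z = (mu - f_best - xi) / sigma."""
    delta = mu - f_best - xi
    if sigma <= 0.0:
        return max(0.0, delta)   # no uncertainty: improvement is deterministic
    z = delta / sigma
    return delta * _norm_cdf(z) + sigma * _norm_pdf(z)

def upper_confidence_bound(mu, sigma, kappa=2.0):
    """UCB = mu + kappa * sigma; larger kappa favours exploration."""
    return mu + kappa * sigma

def probability_of_improvement(mu, sigma, f_best, xi=0.01):
    """PI = Phi((mu - f_best - xi) / sigma)."""
    if sigma <= 0.0:
        return 1.0 if mu > f_best + xi else 0.0
    return _norm_cdf((mu - f_best - xi) / sigma)
```

For example, a candidate whose predicted mean equals the current best still has positive EI whenever σ > 0, which is how EI rewards uncertainty.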

Table 2: Quantitative Comparison of Acquisition Functions (Typical Behavior)

| Function | Exploitation Bias | Exploration Bias | Sensitivity to Noise | Typical κ or ξ Value |

|---|---|---|---|---|

| Expected Improvement (EI) | Moderate-High | Moderate | Moderate | ξ=0.01 (jitter) |

| Upper Confidence Bound (UCB) | Tunable (κ) | Tunable (κ) | Low | κ=2.0 - 3.0 |

| Probability of Improvement (PI) | Very High | Low | High | ξ=0.01 |

Experimental Protocol: Bayesian Optimization in Antibody Affinity Maturation

This protocol outlines a standard computational-experimental cycle for affinity maturation.

A. Initialization Phase

- Design Space Parameterization: Encode antibody variant sequences (e.g., CDR-H3 region) into a numerical feature vector (e.g., one-hot encoding, physicochemical descriptors, or latent space from a pre-trained language model).

- Construct Initial Dataset (D₀): Assay a small, space-filling set (e.g., 20-50 variants) via a high-throughput screening method (e.g., yeast display with FACS or NGS-coupled binding selection).

- Define Objective Function (y): Normalize and process raw readouts (e.g., KD, enrichment ratio) into a single maximization objective.
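As a minimal sketch of the parameterization and objective steps above, the snippet below one-hot encodes a sequence and converts a dissociation constant into a maximization objective. The choice of −log₁₀(KD) as the objective, the function names, and the example sequence are assumptions for illustration; physicochemical descriptors or language-model embeddings would replace `one_hot_encode` in practice.

```python
import math
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"          # the 20 standard residues
AA_INDEX = {aa: i for i, aa in enumerate(AMINO_ACIDS)}

def one_hot_encode(seq):
    """Flattened one-hot vector of length 20 * len(seq)."""
    x = np.zeros((len(seq), len(AMINO_ACIDS)))
    for pos, aa in enumerate(seq):
        x[pos, AA_INDEX[aa]] = 1.0
    return x.ravel()

def kd_to_objective(kd_molar):
    """Map KD (in mol/L) to -log10(KD), so that tighter binding gives a larger value."""
    return -math.log10(kd_molar)

features = one_hot_encode("ARDYW")            # hypothetical CDR fragment
objective = kd_to_objective(1e-9)             # 1 nM corresponds to an objective of about 9
```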

B. Iterative Bayesian Optimization Loop

For each iteration i (until the budget is exhausted):

- Model Training: Train the GP surrogate model on the current dataset D_i. Optimize kernel hyperparameters (length scales, noise) via marginal likelihood maximization.

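- Candidate Selection: Maximize the acquisition function α(x) over the encoded design space. For continuous encodings, use multi-start gradient-based optimization (e.g., L-BFGS-B from many random restarts, since a single run only finds a local optimum); for a discrete candidate library, score every candidate or use an evolutionary search.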

- Sequence Synthesis & Expression: The top 5-10 selected variant sequences are synthesized (gene fragments) and expressed (e.g., mammalian transient transfection for IgG).

- Experimental Evaluation: Purified antibodies are characterized via a gold-standard, low-throughput method (e.g., SPR/Biacore) to obtain accurate binding kinetics (ka, kd, KD).

- Data Integration: The new (sequence, KD) pairs are added to D_i to form D_{i+1}.
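The loop above can be sketched end to end on a toy problem. Everything in this example is a stand-in: the four-letter alphabet, the hidden `TARGET` sequence, and `toy_assay` replace the real design space and wet-lab measurement, the GP uses fixed hyperparameters instead of marginal-likelihood fitting, and one candidate is selected per iteration by UCB over an exhaustively scored library.

```python
import itertools
import numpy as np

ALPHABET = "ADKY"     # reduced alphabet keeps the toy library at 4^3 = 64 variants
TARGET = "KYD"        # hypothetical hidden optimum of the toy objective

def encode(seq):
    """One-hot encoding over the reduced alphabet."""
    return np.array([float(aa == a) for aa in seq for a in ALPHABET])

def toy_assay(seq):
    """Stand-in for the experiment: fraction of positions matching TARGET."""
    return sum(a == b for a, b in zip(seq, TARGET)) / len(TARGET)

def gp_predict(X, y, X_query, length_scale=1.0, noise_var=1e-4):
    """GP posterior mean and standard deviation with an RBF kernel (fixed hyperparameters)."""
    def k(A, B):
        d2 = (A**2).sum(1)[:, None] + (B**2).sum(1)[None, :] - 2.0 * A @ B.T
        return np.exp(-0.5 * np.maximum(d2, 0.0) / length_scale**2)
    y_mean = y.mean()
    K = k(X, X) + noise_var * np.eye(len(X))
    Ks = k(X, X_query)
    mu = y_mean + Ks.T @ np.linalg.solve(K, y - y_mean)
    var = 1.0 - np.sum(Ks * np.linalg.solve(K, Ks), axis=0)
    return mu, np.sqrt(np.maximum(var, 0.0))

def run_bo(n_init=5, n_iter=15, kappa=2.0, seed=0):
    rng = np.random.default_rng(seed)
    library = ["".join(p) for p in itertools.product(ALPHABET, repeat=len(TARGET))]
    X_all = np.array([encode(s) for s in library])
    tested = [int(i) for i in rng.choice(len(library), size=n_init, replace=False)]
    y = [toy_assay(library[i]) for i in tested]            # initial dataset D_0
    for _ in range(n_iter):
        mu, sigma = gp_predict(X_all[tested], np.array(y), X_all)   # model training
        ucb = mu + kappa * sigma                                      # acquisition
        ucb[tested] = -np.inf          # never re-propose an assayed variant
        nxt = int(np.argmax(ucb))                                     # candidate selection
        tested.append(nxt)
        y.append(toy_assay(library[nxt]))                             # evaluation + integration
    best = int(np.argmax(y))
    return library[tested[best]], y[best], len(tested)

best_seq, best_value, n_evaluated = run_bo()
```

In a real campaign each pass through the loop would propose a batch (the protocol mentions 5-10 variants) and the assay call would be replaced by synthesis, expression, and SPR characterization.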

Diagram Title: BO in Antibody Affinity Maturation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for a BO-Driven Antibody Campaign

| Item | Function & Relevance to BO |

|---|---|

| NGS-Compatible Display Library (Yeast, Phage) | Enables high-throughput generation of the initial dataset (D₀) and potential intermediate pooled screens to query more points per cycle. |

| SPR/Biacore Instrumentation | Provides the gold-standard, quantitative binding kinetic data (KD) that serves as the primary objective function (y) for the BO model. Low noise is critical. |

| GP Regression Software (GPyTorch, GPflow, scikit-learn) | Libraries for building and training the probabilistic surrogate model. Must handle custom kernels and noisy observations. |

| Global Optimization Library (DIRECT, CMA-ES, SciPy) | Required to efficiently solve the inner loop problem of maximizing the acquisition function over complex, encoded sequence spaces. |

| Automated Cloning & Expression System (e.g., High-throughput Gibson assembly & transient transfection) | Reduces turnaround time for the experimental evaluation step, accelerating the BO iteration cycle. |

| Pre-trained Protein Language Model (ESM, AntiBERTy) | Provides semantically meaningful sequence representations (embeddings) as input features (x) for the GP, which can improve model performance over one-hot encodings. |

Advanced Considerations & Recent Developments

Modern BO in antibody design addresses several challenges:

- High-Dimensionality: Using latent spaces from protein language models as the input domain reduces effective dimensionality.

- Multi-Objective Optimization: Extending BO to Pareto fronts for balancing affinity, specificity, and developability.

- Contextual & Meta-Learning: Leveraging data from past antibody campaigns to warm-start the prior, accelerating new projects.

- Batch Parallelization: Using acquisition functions like qEI to select a batch of diverse candidates for parallel experimental testing, fitting real-world workflow.

The core philosophy of Bayesian Optimization—a probabilistic framework for guided search—provides a rigorous and efficient paradigm for antibody engineering. By explicitly modeling uncertainty and information gain, it transforms the discovery process from one of brute-force screening to one of intelligent, iterative learning, promising to significantly accelerate the development of next-generation biologics.

The engineering of therapeutic antibodies is a high-dimensional, resource-intensive challenge. Bayesian Optimization (BO) provides a principled framework for navigating complex biological design spaces with minimal experimentation. It iteratively proposes candidate antibodies by balancing exploration (sampling uncertain regions) and exploitation (refining promising candidates). This guide details its two core components: the surrogate model, which probabilistically models the relationship between antibody sequence/structure and a desired property (e.g., affinity, stability), and the acquisition function, which decides the next experiment.

Surrogate Models: Gaussian Processes and Random Forests

Gaussian Processes (GPs)

A GP is a non-parametric probabilistic model defining a distribution over functions. It is fully specified by a mean function \( m(\mathbf{x}) \) and a covariance (kernel) function \( k(\mathbf{x}, \mathbf{x}') \), where \( \mathbf{x} \) represents an antibody descriptor (e.g., sequence features, structural parameters).

Methodology: Given observed data \( \mathcal{D}_{1:t} = \{(\mathbf{x}_i, y_i)\}_{i=1}^t \), the GP assumes a multivariate Gaussian distribution over the observations. The posterior predictive distribution for a new candidate \( \mathbf{x}_{t+1} \) is Gaussian with mean \( \mu(\mathbf{x}_{t+1}) \) and variance \( \sigma^2(\mathbf{x}_{t+1}) \):

\[ \mu(\mathbf{x}_{t+1}) = \mathbf{k}^\top \mathbf{K}^{-1} \mathbf{y} \]

\[ \sigma^2(\mathbf{x}_{t+1}) = k(\mathbf{x}_{t+1}, \mathbf{x}_{t+1}) - \mathbf{k}^\top \mathbf{K}^{-1} \mathbf{k} \]

where \( \mathbf{K} \) is the kernel matrix and \( \mathbf{k} \) is the vector of covariances between \( \mathbf{x}_{t+1} \) and the observed data.

Experimental Protocol for GP Application in Antibody Design:

- Feature Encoding: Convert antibody variable region sequences into numerical features (e.g., physicochemical property vectors, one-hot encodings, or learned embeddings).

- Kernel Selection & Training: Choose a kernel (e.g., Matérn, RBF) capturing expected smoothness. Optimize kernel hyperparameters (length scales, variance) by maximizing the marginal log-likelihood of the training data \( \mathcal{D}_{1:t} \).

- Posterior Inference: Compute the predictive mean (estimated property) and variance (uncertainty) for all candidates in the pre-defined library.

Diagram 1: Gaussian Process Modeling Workflow

Random Forests (RFs)

An RF is an ensemble of decorrelated decision trees used for regression. It provides a point prediction as the mean of individual tree predictions and can estimate uncertainty via the variance of these predictions.

Methodology:

- Bootstrap Aggregating (Bagging): Train \( B \) decision trees on bootstrapped samples of \( \mathcal{D}_{1:t} \).

- Random Feature Subsetting: At each split in a tree, a random subset of the antibody features is considered.

- Prediction & Uncertainty: For input \( \mathbf{x}_{t+1} \), the RF prediction is \( \hat{y} = \frac{1}{B}\sum_{b=1}^B T_b(\mathbf{x}_{t+1}) \). The predictive variance is estimated as \( \frac{1}{B-1}\sum_{b=1}^B (T_b(\mathbf{x}_{t+1}) - \hat{y})^2 \).
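The ensemble mean and variance described above can be read off a fitted scikit-learn forest by querying each tree individually. This is a sketch on synthetic data: the helper name `rf_mean_and_variance`, the random features, and the toy response are assumptions, and the per-tree spread is an empirical dispersion estimate rather than a calibrated posterior.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

def rf_mean_and_variance(forest, X):
    """Mean and sample variance (ddof=1) of the individual tree predictions."""
    per_tree = np.stack([tree.predict(X) for tree in forest.estimators_])  # shape (B, n)
    return per_tree.mean(axis=0), per_tree.var(axis=0, ddof=1)

# Synthetic stand-in for encoded antibody features and a measured property
rng = np.random.default_rng(0)
X_train = rng.normal(size=(80, 5))
y_train = X_train[:, 0] - 0.5 * X_train[:, 1] ** 2 + 0.1 * rng.normal(size=80)

forest = RandomForestRegressor(n_estimators=200, random_state=0).fit(X_train, y_train)
mean, var = rf_mean_and_variance(forest, X_train[:10])
```

The resulting `mean` and `np.sqrt(var)` can be passed to an acquisition function in place of the GP's μ and σ.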

Experimental Protocol for RF Application in Antibody Design:

- Data Preparation: Encode antibody sequences into features. Ensure the dataset is balanced for the target property range.

- Forest Training: Set the number of trees (e.g., 100-500), tree depth, and feature subset size. Train each tree on its bootstrapped sample.

- Inference: Pass library candidates through each tree. Aggregate predictions to obtain mean and variance estimates.

Quantitative Comparison of Surrogate Models

Table 1: Comparison of Gaussian Process and Random Forest Surrogate Models

| Feature | Gaussian Process (GP) | Random Forest (RF) |

|---|---|---|

| Model Type | Probabilistic, non-parametric | Ensemble, non-parametric |

| Primary Output | Full posterior distribution (mean & variance) | Point prediction + variance estimate |

| Uncertainty Quantification | Inherent, mathematically rigorous | Empirical, based on ensemble dispersion |

| Handling of High-Dimensional Data | Challenging; kernel choice critical | Generally robust |

| Interpretability | Low; kernel effects are complex | Moderate; feature importance available |

| Computational Cost (Training) | \( O(n^3) \) for \( n \) data points | \( O(B \cdot n_{\text{features}} \cdot n \log n) \) |

| Best Suited For | Smaller datasets (<10k), smooth objective functions | Larger datasets, noisy or discontinuous functions |

The Acquisition Function

The acquisition function \( \alpha(\mathbf{x}) \) uses the surrogate's posterior to score the utility of evaluating a candidate. It automatically balances exploration and exploitation.

Common Acquisition Functions

- Expected Improvement (EI): Measures the expected improvement over the current best observation \( f(\mathbf{x}^+) \): \[ \text{EI}(\mathbf{x}) = \mathbb{E}[\max(f(\mathbf{x}) - f(\mathbf{x}^+), 0)] \]

- Upper Confidence Bound (UCB): An optimistic policy defined as \( \text{UCB}(\mathbf{x}) = \mu(\mathbf{x}) + \kappa \sigma(\mathbf{x}) \), where \( \kappa \) controls exploration.

- Probability of Improvement (PI): Measures the probability that a candidate will improve upon \( f(\mathbf{x}^+) \).

Protocol for Acquisition Function Optimization

- Compute Surrogate Outputs: Obtain \( \mu(\mathbf{x}) \) and \( \sigma(\mathbf{x}) \) for all candidates in the library from the trained GP or RF.

- Calculate Acquisition Scores: Apply the chosen acquisition function (e.g., EI) to all candidates using the predictive statistics.

- Select Next Experiment: Identify the candidate \( \mathbf{x}_{t+1} = \arg\max_{\mathbf{x}} \alpha(\mathbf{x}) \). This antibody is synthesized and assayed.

- Iterate: Update the dataset \( \mathcal{D}_{1:t+1} = \mathcal{D}_{1:t} \cup \{(\mathbf{x}_{t+1}, y_{t+1})\} \) and repeat from the model training step.

Diagram 2: Bayesian Optimization Iterative Loop

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Bayesian Optimization-Driven Antibody Design

| Item | Function in the BO Workflow |

|---|---|

| Phage Display / Yeast Display Library | Provides the initial diverse sequence space from which to sample and build the initial dataset. |

| Next-Generation Sequencing (NGS) Platform | Enables high-throughput sequencing of selection outputs, providing rich sequence-activity data for model training. |

| Automated Liquid Handling System | Crucial for high-throughput, reproducible synthesis and assay of BO-suggested antibody candidates. |

| Biolayer Interferometry (BLI) or SPR Instrument | Provides quantitative binding kinetics (KD, kon, koff) as the primary objective function for optimization (e.g., affinity). |

| Differential Scanning Fluorimetry (DSF) | Measures thermal stability (Tm) as a key developability property, often used as a secondary objective or constraint. |

| Cloud/High-Performance Computing (HPC) Cluster | Necessary for training models (especially GPs) and optimizing acquisition functions over large sequence libraries. |

| Specialized Software (e.g., Pyro, BoTorch, Scikit-learn) | Libraries implementing GPs, RFs, and acquisition functions for building custom BO pipelines. |

The synergy between a well-chosen surrogate model (GP for data-efficient uncertainty, RF for scale) and a balanced acquisition function forms the intelligent core of Bayesian Optimization. In antibody design, this translates to a systematic, learning-driven approach that significantly accelerates the campaign to identify high-affinity, stable therapeutic candidates, directly addressing the core challenges of modern drug development.

1. Introduction

The design of therapeutic antibodies is a high-dimensional optimization problem constrained by multiple, often competing, objectives. A modern Bayesian optimization (BO) framework for antibody design requires a precise definition of the design space—the universe of all possible antibody candidates parameterized by their sequences, structures, and functions. This guide delineates this space into three interconnected landscapes: sequence, structure, and multi-objective fitness. Understanding this tripartite definition is foundational for constructing efficient BO algorithms that can navigate this complex terrain to discover viable drug candidates.

2. The Tripartite Antibody Design Space

2.1 Sequence Space

The sequence space encompasses all possible linear arrangements of amino acids across the antibody variable regions. Its dimensionality is vast: for a typical Complementarity-Determining Region (CDR) H3 of 15 residues, the theoretical space is 20¹⁵ (~3.3 × 10¹⁹) sequences. Practically, the space is constrained by natural repertoire patterns, structural feasibility, and manufacturability.

Table 1: Quantitative Dimensions of Antibody Sequence Space

| Region | Typical Length (residues) | Theoretical Sequence Diversity | Observed Natural Diversity (Approx.) |

|---|---|---|---|

| CDR H1 | 5-7 | 20⁵ to 20⁷ (3.2x10⁶ to 1.3x10⁹) | 10² - 10³ |

| CDR H2 | 16-19 | ~20¹⁷ (1.3x10²²) | 10³ - 10⁴ |

| CDR H3 | 4-25 | ~20¹⁵ (3.3x10¹⁹) | 10⁷ - 10¹² (in humans) |

| Framework | ~85 | ~20⁸⁵ | Highly conserved (10¹ - 10² variants) |
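The arithmetic behind these numbers is easy to reproduce. The sketch below compares the theoretical diversity 20ⁿ with a screening budget. The budget of 10⁸ variants is an assumed figure of the order of a single FACS experiment, and the function names are illustrative.

```python
def coverage_fraction(n_positions, screened, alphabet_size=20):
    """Fraction of the full combinatorial space reachable with `screened` variants."""
    space = alphabet_size ** n_positions
    return min(1.0, screened / space)

def max_fully_covered_positions(screened, alphabet_size=20):
    """Largest number of positions whose full combinatorial library fits in the budget."""
    n = 0
    while alphabet_size ** (n + 1) <= screened:
        n += 1
    return n

budget = 10**8                                  # assumed screening budget
print(20**15)                                   # theoretical CDR H3 space for 15 residues
print(coverage_fraction(15, budget))            # fraction of that space a screen can sample
print(max_fully_covered_positions(budget))      # positions that can be saturated exhaustively
```

Under this assumed budget only six fully randomized positions can be covered exhaustively (20⁶ = 6.4 × 10⁷), which is why model-guided selection becomes necessary for longer regions.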

2.2 Structure Space

The structure space refers to the set of all possible three-dimensional conformations of the antibody, particularly the antigen-binding paratope. Key parameters include CDR loop geometries, relative VH-VL orientation, and surface topology. Canonical forms for CDR L1-3 and H1-2 reduce complexity, but CDR H3 exhibits high conformational diversity.

Table 2: Key Structural Parameters Defining the Paratope

| Parameter | Typical Range/Description | Measurement Technique |

|---|---|---|

| CDR Loop Dihedral Angles | Φ, Ψ angles per residue | X-ray crystallography, MD simulations |

| VH-VL Interface Angle | 110° - 180° | Computational structural alignment |

| Paratope Surface Area | 600 - 1000 Ų | Buried surface area calculated from antibody-antigen complex structures (PDB) |

| Solvent Accessible Surface | Variable | Computational chemistry (e.g., DSSP) |

| CDR H3 Loop Cluster (Chothia) | Kinked, Extended, Stacked | Loop structure classification |

2.3 Multi-Objective Fitness Landscape

This landscape maps sequences and structures to a vector of functional properties. Optimization requires balancing multiple, often antagonistic, objectives.

Table 3: Core Objectives in Antibody Design Optimization

| Objective | Typical Target | Common Assay | Antagonistic Relationship With |

|---|---|---|---|

| Affinity (KD) | pM - nM range | Surface plasmon resonance (SPR) | Stability, Developability |

| Specificity/Selectivity | >1000-fold vs. homologs | Cross-reactivity panels, SPR | Broad neutralization |

| Thermal Stability (Tm) | >65°C | Differential scanning fluorimetry | High affinity mutations |

| Solubility/Aggregation | Low aggregation (<5%) | Size-exclusion chromatography, SE-HPLC | Hydrophobic paratopes |

| Expression Yield | >1 g/L in CHO cells | Transient expression, titer assay | Complex stability profiles |

| Immunogenicity Risk | Low predicted T-cell epitopes | In silico tools (e.g., TCED) | Human homology |
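Because these objectives trade off against each other, candidates are usually compared by Pareto dominance rather than by a single score. The sketch below filters a small set of candidates down to the non-dominated ones. The candidate names and their (−log₁₀ KD, Tm) values are invented for illustration, and both objectives are assumed to be maximized.

```python
def dominates(a, b):
    """True if score vector a is at least as good as b everywhere and better somewhere."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(candidates):
    """Names of candidates that no other candidate dominates (all objectives maximized)."""
    return [
        name
        for name, scores in candidates.items()
        if not any(dominates(other, scores)
                   for other_name, other in candidates.items() if other_name != name)
    ]

# Hypothetical candidates: (affinity as -log10 KD, thermal stability Tm in degrees C)
candidates = {
    "mAb-A": (9.5, 62.0),   # best affinity, lowest stability
    "mAb-B": (8.7, 71.0),   # best stability
    "mAb-C": (8.5, 68.0),   # worse than mAb-B on both objectives
    "mAb-D": (9.0, 70.0),   # balanced trade-off
}
front = pareto_front(candidates)
```

Here `mAb-C` drops out because `mAb-B` is better on both axes, while the other three represent different trade-offs.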

3. Experimental Protocols for Landscape Characterization

3.1 Protocol: Deep Mutational Scanning (DMS) for Sequence-Stability-Function Mapping

Objective: Empirically map the local sequence landscape around a lead antibody.

Materials: Antibody gene library, yeast surface display or phage display system, next-generation sequencing (NGS) reagents, fluorescence-activated cell sorting (FACS), antigen.

Procedure:

- Library Construction: Use site-saturation mutagenesis or oligonucleotide-directed mutagenesis to create a library of single-point mutants in the CDRs.

- Display & Selection: Clone library into a display vector (e.g., pYD1 for yeast). Induce expression and display on yeast surface.

- Staining & Sorting: Label yeast with fluorescent conjugates: anti-c-Myc (FITC) for expression and biotinylated antigen + streptavidin-PE for binding. Perform FACS to collect bins of cells with high/low expression and binding.

- NGS & Enrichment Analysis: Isolate plasmid DNA from each sorted population. Prepare NGS libraries and sequence. Calculate enrichment scores (ε) for each variant: ε = log₂(freq_selected / freq_input).

- Data Integration: Plot ε(binding) vs. ε(expression) to identify variants that maintain both properties.
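The enrichment calculation in the NGS step can be sketched from raw read counts. The pseudocount that guards against zero counts, the function name, and the variant labels and counts are assumptions of this example, not part of the protocol above.

```python
import math

def enrichment_scores(input_counts, selected_counts, pseudocount=0.5):
    """log2(freq_selected / freq_input) per variant, from read-count dictionaries.

    With pseudocount=0 every variant must have non-zero counts in both populations.
    """
    variants = sorted(set(input_counts) | set(selected_counts))
    n_in = sum(input_counts.get(v, 0) + pseudocount for v in variants)
    n_sel = sum(selected_counts.get(v, 0) + pseudocount for v in variants)
    scores = {}
    for v in variants:
        freq_in = (input_counts.get(v, 0) + pseudocount) / n_in
        freq_sel = (selected_counts.get(v, 0) + pseudocount) / n_sel
        scores[v] = math.log2(freq_sel / freq_in)
    return scores

# Hypothetical read counts before and after sorting for binding
input_reads = {"WT": 1000, "Y33F": 1000, "D101A": 1000}
selected_reads = {"WT": 900, "Y33F": 2000, "D101A": 100}
scores = enrichment_scores(input_reads, selected_reads)
```

Positive scores mark variants enriched by the sort and negative scores mark depleted ones. Running the same function on the expression-sorted population gives the second axis for the binding versus expression plot.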

3.2 Protocol: Structural Characterization via HDX-MS

Objective: Probe conformational dynamics and epitope mapping.

Materials: Purified antibody-antigen complex, deuterium oxide (D₂O), quench buffer (low pH, low temperature), liquid chromatography-mass spectrometry (LC-MS) system with HDX capability.

Procedure:

- Deuterium Labeling: Dilute antibody:antigen complex into D₂O buffer. Incubate for multiple time points (e.g., 10s, 1min, 10min, 1h) at controlled temperature.

- Quenching: Transfer aliquot to pre-chilled quench buffer (e.g., 0.1% formic acid, 0°C) to reduce pH to ~2.5 and halt exchange.

- Digestion & Analysis: Inject quenched sample into a cooled LC system with an immobilized pepsin column for rapid digestion. Separate peptides via UPLC and analyze by high-resolution MS.

- Data Processing: Calculate deuterium uptake per peptide over time. Identify regions with reduced uptake in the complex versus antibody alone, indicating epitope or conformational stabilization.

4. Visualizing the Design Space & Bayesian Optimization Workflow

Diagram Title: Bayesian Optimization Loop for Antibody Design

Diagram Title: Interplay of Antibody Design Spaces

5. The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Reagents & Materials for Antibody Design Space Analysis

| Item | Function/Application | Example/Supplier |

|---|---|---|

| Yeast Display Vector | Surface display of antibody fragments for coupling genotype to phenotype. | pYD1 (Thermo Fisher) |

| Phage Display Library | Diverse library of scFv or Fab fragments for panning against antigens. | Human synthetic Fab library (Dyax) |

| Anti-c-Myc Tag, FITC | Detection of displayed antibody expression level on yeast surface. | Clone 9E10 (Abcam) |

| Streptavidin-PE | Fluorescent detection of biotinylated antigen binding in display systems. | ProZyme |

| Biotinylation Kit | Amine-reactive (NHS ester) biotin labeling of antigen for binding assays; labels lysines and the N-terminus rather than a single defined site. | EZ-Link NHS-PEG4-Biotin (Thermo) |

| SPR Chip (CM5) | Gold sensor chip for real-time, label-free kinetic affinity measurements. | Series S Chip CM5 (Cytiva) |

| HDX-MS Buffer Kit | Standardized buffers for reproducible hydrogen-deuterium exchange experiments. | Waters HDX-MS Kit |

| NGS Library Prep Kit | Preparation of sequencing libraries from display library populations. | Illumina Nextera XT |

| CHO Transient Expression | High-yield mammalian expression system for antibody production. | ExpiCHO System (Thermo Fisher) |

| Stability Dye (SYPRO) | Dye for measuring thermal melt (Tm) by differential scanning fluorimetry. | SYPRO Orange (Thermo Fisher) |

Bayesian optimization (BO) has emerged as a transformative tool in computational antibody design, a core component of modern biologics discovery. Within the broader thesis of advancing Bayesian optimization for antibody design, it is critical for researchers to understand the specific project stages and problem types where BO offers maximal advantage over alternative optimization strategies. This guide details these scenarios with current data and methodologies.

Project Stages for Bayesian Optimization Deployment

BO is not universally applicable across all stages of antibody development. Its value is concentrated in specific, resource-intensive early phases.

Table 1: Applicability of BO Across Antibody Discovery Stages

| Project Stage | Primary Goal | BO Suitability (High/Med/Low) | Key Rationale |

|---|---|---|---|

| Target Antigen Characterization | Identify epitopes & paratopes | Low | Problem space is poorly defined; limited quantitative feedback. |

| Library Design & Panning | Generate diverse candidate sequences | Medium | BO can guide library bias, but traditional display methods dominate. |

| Lead Candidate Optimization | Improve affinity, specificity, stability | High | Expensive assays (e.g., SPR, BLI); goal is to find global optimum with few iterations. |

| Developability Engineering | Optimize solubility, viscosity, aggregation | High | Multivariate problem with costly experimental readouts (e.g., SEC, stability assays). |

| Clinical Candidate Selection | Final validation & risk assessment | Low | Decisions based on comprehensive data; optimization is complete. |

Problem Types Best Suited for Bayesian Optimization

BO excels in specific problem archetypes common in antibody engineering.

Table 2: Problem Characteristics Favoring BO

| Problem Characteristic | Description | Why BO Fits |

|---|---|---|

| Black-Box, Expensive-to-Evaluate Functions | No analytical form; each evaluation (experiment) costs significant time/money. | BO's sample efficiency minimizes total evaluations. |

| Moderate Dimensionality | Typically 5-20 tunable parameters (e.g., CDR residues, fusion partners). | Avoids curse of dimensionality; GP surrogate models remain effective. |

| Continuous, Ordinal, or Categorical Parameters | Mix of continuous (pH, temp) and categorical (amino acid choices) variables. | Modern kernels (e.g., Matérn, Hamming) handle mixed spaces. |

| Noise-Prone Observations | Experimental noise in measurements (e.g., binding affinity KD). | GP models can explicitly account for observational noise. |

| Multi-Objective Optimization | Simultaneously optimize affinity, immunogenicity, expression yield. | BO extensions like ParEGO or qNEHVI efficiently navigate trade-offs. |
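Table 2 mentions Hamming-type kernels for categorical amino acid choices. The following is a minimal sketch of one common form, an exponentiated normalized Hamming distance, and not a prescribed implementation. The function name `hamming_kernel`, the `gamma` parameter, and the example sequences are illustrative assumptions.

```python
import numpy as np

def hamming_kernel(seqs_a, seqs_b, gamma=1.0):
    """k(s, s') = exp(-gamma * d_H(s, s') / L) for equal-length sequences."""
    a = np.array([list(s) for s in seqs_a])
    b = np.array([list(s) for s in seqs_b])
    # Fraction of mismatched positions for every pair (rows of a vs rows of b).
    mismatch = (a[:, None, :] != b[None, :, :]).mean(axis=2)
    return np.exp(-gamma * mismatch)

# Hypothetical 5-residue CDR fragments, used only for illustration.
cdr_variants = ["ARDYW", "ARDFW", "GKEYW"]
K = hamming_kernel(cdr_variants, cdr_variants)
```

Identical sequences get a kernel value of 1, and similarity decays with the fraction of mismatched positions. A larger `gamma` makes the surrogate treat single mutations as less correlated.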

Experimental Protocols for Key BO-Integrated Experiments

The following methodology is representative of a BO-driven affinity maturation campaign.

Protocol: High-Throughput Sequence-Activity Mapping for BO

Objective: Generate initial dataset to train BO surrogate model for predicting antibody binding affinity. Workflow:

- Design-of-Experiments (DoE): Generate a diverse set of 50-200 antibody variant sequences using Sobol sequence sampling across targeted CDR regions.

- Parallel Gene Synthesis: Synthesize variant genes via high-throughput oligo assembly (e.g., Twist Bioscience).

- Expression & Purification: Use mammalian transient expression (HEK293F) in 96-deep well format, followed by automated protein A affinity chromatography.

- Binding Affinity Assay: Determine kinetic parameters (KD, kon, koff) via parallelized biolayer interferometry (BLI) on an Octet HTX system.

- BO Loop Initiation: Use affinity data (log(KD)) as training labels. A Gaussian Process (GP) model with a composite kernel maps sequence space to affinity.

- Acquisition Function: Apply Expected Improvement (EI) to propose the next batch (e.g., 10-20) of variant sequences predicted to most improve affinity.

- Iterative Cycles: Repeat steps 2-6 for 5-10 cycles, or until affinity plateau is reached.
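The Design-of-Experiments step above can be sketched with SciPy's quasi-Monte Carlo module. This is an illustrative sketch under stated assumptions: the parent sequence, the mutated positions, and the helper name `sobol_variants` are hypothetical. Mapping each Sobol coordinate to one of 20 amino acids is one simple choice among several.

```python
import numpy as np
from scipy.stats import qmc

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def sobol_variants(parent, positions, m=6, seed=0):
    """Return up to 2**m unique variants of `parent` mutated at `positions`."""
    sampler = qmc.Sobol(d=len(positions), scramble=True, seed=seed)
    points = sampler.random_base2(m)  # shape (2**m, len(positions)), values in [0, 1)
    # Map each coordinate to an amino acid index 0..19.
    idx = np.minimum((points * 20).astype(int), 19)
    variants = []
    for row in idx:
        seq = list(parent)
        for pos, aa_i in zip(positions, row):
            seq[pos] = AMINO_ACIDS[aa_i]
        variants.append("".join(seq))
    # Remove duplicates while preserving the sampling order.
    return list(dict.fromkeys(variants))

# Hypothetical CDR-H3 parent and four targeted positions (0-based).
initial_library = sobol_variants("ARDYYGSSYFDY", positions=[2, 3, 4, 5], m=6)
```

With `m=6` the sampler draws 64 points. `m=7` gives 128, which stays inside the 50-200 variant range quoted in the protocol.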

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for BO-Integrated Antibody Experiments

| Reagent/Resource | Function in BO Workflow | Example Vendor/Platform |

|---|---|---|

| High-Fidelity DNA Synthesis | Rapid, accurate generation of variant libraries for BO proposals. | Twist Bioscience, IDT |

| Automated Mammalian Expression System | Consistent, parallel production of antibody variants for activity evaluation. | Expi293F System (Thermo Fisher), Freedom CHO-S |

| Parallel Protein Purification | High-throughput isolation of antibodies from micro-expressions. | Protein A MagBeads (Cube Biotech), KingFisher Systems |

| Label-Free Biosensor | Provides quantitative binding kinetics (KD) as primary feedback for BO. | Octet HTX (Sartorius), MASS-2 (Nicoya) |

| Aggregation & Stability Assays | Multi-objective feedback for developability optimization. | Uncle (Unchained Labs), Prometheus (NanoTemper) |

| BO Software Framework | Implements GP, acquisition functions, and manages the optimization loop. | BoTorch, Ax (Meta), Sherpa, Custom Python (GPyTorch/Emukit) |

Implementing Bayesian Optimization: A Step-by-Step Workflow for Antibody Engineering

The systematic design of therapeutic antibodies is a high-dimensional optimization challenge. A Bayesian optimization framework for antibody design begins with a critical step: defining a quantitative, multi-parameter representation of an antibody variant. This section details that first step: parameterizing the antibody structure, primarily through its Complementarity-Determining Region (CDR) loops, into a feature set that can be linked to downstream developability scores. This parameterization forms the input space for the Bayesian models, which iteratively predict and optimize the desired biophysical and functional properties.

Core Parameterization: CDR Loop Feature Extraction

The CDR loops (H1, H2, H3, L1, L2, L3) are the primary determinants of antigen binding. Their parameterization moves beyond sequence alone to structural and physicochemical descriptors.

Feature Categories for Machine Learning-Ready Input

Table 1: Core Feature Categories for CDR Loop Parameterization

| Feature Category | Specific Descriptors | Predicted Impact on Developability |

|---|---|---|

| Sequential | Amino acid sequence, Length, Kappa/Lambda chain type | Stability, Immunogenicity risk |

| Physicochemical | Net charge, Hydrophobicity index, Isoelectric point (pI), Dipole moment | Solubility, Self-interaction, Viscosity |

| Structural | Canonical class, Predicted secondary structure, Solvent-accessible surface area (SASA), CDR loop dihedral angles | Aggregation propensity, Conformational stability |

| Energetic | Predicted binding affinity (ΔG), Intramolecular interaction energy | Expression yield, Thermal stability |

| Dynamic | Predicted root-mean-square fluctuation (RMSF), Loop flexibility metrics | Chemical degradation, Shelf-life |
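As a concrete illustration of the sequential and physicochemical rows in the table above, the sketch below derives three simple descriptors from a CDR sequence. It makes two assumptions. Net charge is approximated by counting K/R as +1 and D/E as -1, ignoring histidine and termini. Hydrophobicity is the mean Kyte-Doolittle hydropathy, used here as a stand-in for whichever hydrophobicity index a given project adopts. The function name `cdr_features` is hypothetical.

```python
# Kyte-Doolittle hydropathy values per residue.
KD_SCALE = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5,
    "Q": -3.5, "E": -3.5, "G": -0.4, "H": -3.2, "I": 4.5,
    "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8, "P": -1.6,
    "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}

def cdr_features(seq):
    """Return length, approximate net charge, and mean hydropathy of a CDR."""
    length = len(seq)
    # Crude charge estimate near neutral pH: basic minus acidic residues.
    net_charge = sum(seq.count(a) for a in "KR") - sum(seq.count(a) for a in "DE")
    mean_hydropathy = sum(KD_SCALE[a] for a in seq) / length
    return {"length": length, "net_charge": net_charge,
            "mean_hydropathy": mean_hydropathy}

features = cdr_features("ARDYYGSSYFDY")  # hypothetical CDR-H3 sequence
```

Structural, energetic, and dynamic descriptors require a 3D model and are not covered by this sequence-only sketch.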

Quantitative Data from Recent Studies

Table 2: Correlation of CDR-H3 Parameters with Key Developability Scores

| CDR-H3 Parameter | Typical Range (Therapeutic mAbs) | Correlation with Aggregation Score (r-value) | Correlation with Polyspecificity Score (r-value) | Primary Assay |

|---|---|---|---|---|

| Hydrophobicity (H-index) | 0.1 - 0.5 | +0.72 | +0.65 | Hydrophobic Interaction Chromatography (HIC) |

| Net Charge at pH 7.4 | -3 to +3 | +0.15 (\|charge\| > 5) | +0.58 (extreme +/-) | Imaged Capillary Isoelectric Focusing (icIEF) |

| Length (Residues) | 8 - 18 | +0.41 (if >18) | +0.33 (if >15) | Next-Generation Sequencing (NGS) Analysis |

| SASA (Ų) | 400 - 800 | +0.68 (if >900) | +0.25 | Molecular Dynamics (MD) Simulation |

Experimental Protocols for Feature Validation

Protocol: High-Throughput Hydrophobicity Profiling via HIC-HPLC

Purpose: Quantify relative surface hydrophobicity of antibody variants. Materials: Agilent 1260 Infinity II HPLC system, MAbPac HIC-10 column, Sodium phosphate buffer with ammonium sulfate gradient. Method:

- Sample Prep: Dialyze purified mAb variants into 1.5 M ammonium sulfate, 25 mM sodium phosphate, pH 7.0.

- Column Equilibration: Equilibrate HIC column with 1.0 M ammonium sulfate, 25 mM sodium phosphate, pH 7.0 for 30 min at 0.5 mL/min.

- Gradient Elution: Inject 20 µg of sample. Run a 30-minute linear gradient from 1.0 M to 0 M ammonium sulfate.

- Data Analysis: Record retention time. Normalize the retention time of each variant to an internal control mAb to calculate the Hydrophobicity Index (HIC-HI). Higher HIC-HI correlates with higher aggregation risk.

Protocol: Polyspecificity Assessment Using Surface Plasmon Resonance (SPR)

Purpose: Measure non-specific binding to a panel of immobilized polyanionic/polycationic ligands. Materials: Biacore 8K, CM5 Sensor Chip, Human Cell Lysate, Heparin, Laminin, DNA. Method:

- Ligand Immobilization: Amine-couple human cell lysate proteins, heparin, and laminin to separate flow cells on a CM5 chip (~5000 RU each).

- Running Buffer: Use HBS-EP+ (10 mM HEPES, 150 mM NaCl, 3 mM EDTA, 0.05% v/v Surfactant P20, pH 7.4).

- Kinetic Injection: Inject antibody variants at a single concentration (200 nM) over all flow cells for 180s at 30 µL/min, followed by 300s dissociation.

- Data Processing: Subtract signal from a blank reference flow cell. Report the average response unit (RU) across all non-target ligands at the end of the injection cycle as the "Polyspecificity Score."
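The data-processing step above reduces to a reference subtraction followed by an average. A minimal sketch, assuming one end-of-injection response per ligand surface and a single blank reference value; the function name and the numbers are hypothetical.

```python
import numpy as np

def polyspecificity_score(end_of_injection_ru, reference_ru):
    """Mean reference-subtracted response (RU) across non-target ligand surfaces."""
    corrected = np.asarray(end_of_injection_ru, dtype=float) - float(reference_ru)
    return float(corrected.mean())

# Hypothetical responses for the lysate, heparin, and laminin flow cells.
score = polyspecificity_score([42.0, 18.0, 30.0], reference_ru=6.0)
```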

Protocol: In-Silico Structural Parameter Extraction from Homology Models

Purpose: Generate structural features (SASA, dihedrals) from antibody sequence. Materials: ROSIE or SAbPred web server, MODELLER, BioPython, MD simulation software (e.g., GROMACS). Method:

- Template Selection & Modeling: Input VH and VL sequences into SAbPred. Use selected templates (e.g., from AbY database) for automated modeling with MODELLER. Generate 10 models.

- Energy Minimization: Solvate the top-ranked model in a water box, neutralize with ions, and perform steepest-descent minimization.

- Feature Calculation: Use the MDTraj Python package to calculate: a. Total SASA for each CDR loop using the Shrake-Rupley algorithm. b. Main chain dihedral angles (Phi, Psi) for all CDR residues. c. Radius of gyration for the Fv region.

- Aggregation Propensity Prediction: Input structural features into aggregation predictors such as Aggrescan3D or Spatial Aggregation Propensity (SAP) tools.
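To make the geometric features concrete, the sketch below computes a backbone dihedral angle and an unweighted radius of gyration directly from coordinates with NumPy. It is a stand-in for illustration and does not replace the MDTraj calls named in the protocol. The function names and the toy coordinates are assumptions. For residue i, phi uses the atoms C(i-1), N(i), CA(i), C(i), and psi uses N(i), CA(i), C(i), N(i+1).

```python
import numpy as np

def dihedral(p0, p1, p2, p3):
    """Signed dihedral angle in degrees defined by four points."""
    b0 = p0 - p1
    b1 = p2 - p1
    b2 = p3 - p2
    b1 = b1 / np.linalg.norm(b1)
    # Project b0 and b2 onto the plane perpendicular to the central bond.
    v = b0 - np.dot(b0, b1) * b1
    w = b2 - np.dot(b2, b1) * b1
    x = np.dot(v, w)
    y = np.dot(np.cross(b1, v), w)
    return float(np.degrees(np.arctan2(y, x)))

def radius_of_gyration(coords):
    """Unweighted radius of gyration of an (n_atoms, 3) coordinate array."""
    coords = np.asarray(coords, dtype=float)
    centered = coords - coords.mean(axis=0)
    return float(np.sqrt((centered ** 2).sum(axis=1).mean()))

# Toy coordinates (arbitrary units) forming a 90-degree dihedral.
pts = [np.array(p, dtype=float) for p in [(1, 0, 0), (0, 0, 0), (0, 0, 1), (0, 1, 1)]]
angle = dihedral(*pts)
rg = radius_of_gyration(np.vstack(pts))
```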

Visualizing the Parameterization-to-Optimization Workflow

Title: Antibody Parameterization Workflow for Bayesian Optimization

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents & Tools for Parameterization Studies

| Item | Supplier Examples | Function in Parameterization |

|---|---|---|

| HEK293/CHO Transient Expression Kit | Thermo Fisher (Expi293/ExpiCHO), Mirus (TransIT) | High-yield production of antibody variants for experimental profiling. |

| Protein A/G Purification Plates | Pierce (Thermo Fisher), Cytiva (MabSelect) | Rapid, parallel purification of IgGs from culture supernatants. |

| Hydrophobic Interaction Chromatography (HIC) Column | Thermo Fisher (MAbPac HIC-10), Tosoh Bioscience | Quantifying relative surface hydrophobicity of antibody variants. |

| Biacore CM5 Sensor Chip & Immobilization Kits | Cytiva | Surface functionalization for SPR-based polyspecificity and affinity assays. |

| Multi-Antigen Polyspecificity Reagent (MAP) Kit | Solid Biosciences, The Native Antigen Company | Standardized panel of biotinylated antigens for off-target binding screens. |

| Differential Scanning Calorimetry (DSC) Plate Kit | Malvern Panalytical (MicroCal) | High-throughput measurement of thermal melting (Tm) for stability ranking. |

| Next-Generation Sequencing (NGS) Library Prep Kit for Antibodies | Twist Bioscience, Illumina (MiSeq) | Deep sequence analysis of antibody variant libraries post-selection. |

| In-Silico Modeling & Analysis Software (Cloud) | Schrödinger (BioLuminate), AWS/Azure (RosettaCloud) | Generating homology models and extracting structural parameters at scale. |

Within the Bayesian optimization (BO) pipeline for computational antibody design, surrogate model construction is the step that transforms sparse, high-dimensional biological data into a predictive function mapping antibody sequence or structure space to a fitness score (e.g., binding affinity, specificity, developability). The surrogate model, often a probabilistic machine learning model, learns from an initial dataset, typically generated via phage display, yeast surface display, or deep mutational scanning, to predict the fitness of unseen variants and quantify the uncertainty of those predictions. Its selection and training directly determine how efficiently the subsequent acquisition function guides the search toward optimal designs.

Surrogate Model Selection: A Comparative Analysis

The choice of surrogate model balances expressivity, data efficiency, uncertainty quantification (UQ), and computational cost. Below is a quantitative comparison of leading models applicable to antibody design.

Table 1: Quantitative Comparison of Surrogate Models for Antibody Fitness Prediction

| Model Type | Key Algorithm/ Variant | Data Efficiency | Uncertainty Quantification | Computational Scalability (to ~10⁴-10⁵ variants) | Interpretability | Best Suited For |

|---|---|---|---|---|---|---|

| Gaussian Process (GP) | Standard RBF Kernel | High (for ≤10³ data points) | Native (probabilistic) | Poor (O(n³) inversion) | Medium (via kernels) | Small, high-value initial datasets (e.g., focused libraries). |

| Sparse Gaussian Process | SVGP, FITC | Medium-High | Approximated, good | Good (with inducing points) | Medium | Scaling GP to larger display screening data. |

| Bayesian Neural Network (BNN) | Monte Carlo Dropout, Deep Ensembles | Medium (requires more data) | Approximated, ensemble-based | Medium (training cost high, inference fast) | Low | Complex, non-linear fitness landscapes from deep sequencing. |

| Random Forest (Probabilistic) | Quantile Regression Forest | Medium | Approximated (via ensemble variance) | Excellent | High (feature importance) | Medium-sized datasets with many sequence features. |

| Gradient Boosting (XGBoost/LGBM) | With quantile regression | High | Approximated (conformal prediction) | Excellent | Medium-High | Large-scale mutagenesis data for initial screening. |

Experimental Protocol for Initial Data Generation

The quality of the surrogate model is contingent on the initial dataset. A standard protocol for generating such data via yeast surface display is detailed below.

Protocol: Generation of Initial Training Data via Yeast Surface Display and Flow Cytometry

Objective: To produce a quantitative fitness label (binding signal) for a diverse library of antibody single-chain variable fragments (scFvs).

Materials: See "The Scientist's Toolkit" below. Procedure:

- Library Construction: Clone the diversified scFv library into a yeast display vector (e.g., pYD1) via homologous recombination or Gibson assembly.

- Transformation & Induction: Electroporate the library into Saccharomyces cerevisiae strain EBY100. Induce scFv expression by transferring cells to SG-CAA medium (20°C, 24-48 hrs).

- Labeling: Harvest induced cells. For each variant pool, stain with:

- A primary antigen (e.g., biotinylated target protein) at a concentration series (e.g., 100 nM, 10 nM, 1 nM).

- Secondary reagents: Fluorescently labeled anti-c-Myc antibody (for expression detection) and streptavidin-conjugated fluorophore (e.g., SA-PE, for binding detection).

- Flow Cytometry & Sorting: Analyze cells using a high-throughput flow cytometer. Gate for cells expressing scFv (Myc-positive). The median fluorescence intensity (MFI) of the binding channel for the expressing population serves as the fitness label. Cells can be sorted into bins based on binding MFI to create a stratified training set.

- Sequencing: Isolate plasmid DNA from sorted populations or the pre-sorted library. Perform next-generation sequencing (NGS) on the scFv variable regions.

- Data Curation: Align NGS reads to reference. Encode each variant using a numerical scheme (e.g., one-hot, AAindex, physicochemical embeddings). Pair each variant sequence with its corresponding binding MFI (or a normalized apparent K_D, K_D,app, derived from titration). This forms the dataset {X_sequence, y_fitness} for model training.
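The encoding part of the data-curation step can be sketched as follows, using one-hot encoding over the 20 standard amino acids and a log-transformed MFI as the label. The sequences and MFI values are hypothetical, and the helper name `one_hot_encode` is an assumption.

```python
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
AA_INDEX = {aa: i for i, aa in enumerate(AMINO_ACIDS)}

def one_hot_encode(sequences):
    """Encode equal-length sequences as a (n_variants, length * 20) matrix."""
    n, length = len(sequences), len(sequences[0])
    X = np.zeros((n, length * 20), dtype=np.float32)
    for row, seq in enumerate(sequences):
        if len(seq) != length:
            raise ValueError("all sequences must share the same length")
        for pos, aa in enumerate(seq):
            X[row, pos * 20 + AA_INDEX[aa]] = 1.0
    return X

# Hypothetical aligned CDR fragments paired with binding MFI values.
variants = ["ARDYW", "ARDFW"]
mfi = np.array([1520.0, 310.0])

X_sequence = one_hot_encode(variants)
y_fitness = np.log10(mfi)  # log-transform compresses the dynamic range of MFI
```

Variable-length CDRs would need alignment or padding before this encoding applies. Learned embeddings are an alternative when sequence lengths vary widely.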

Training Methodology for a Gaussian Process Surrogate

Given its native UQ, a GP is a canonical choice for BO. The training protocol for a GP surrogate on antibody sequence data is as follows.

Protocol: Training a Sparse Variational Gaussian Process (SVGP) on Sequence-Fitness Data

Input: Initial dataset D = {X_i, y_i} for i=1...N, where X_i is a feature vector of the antibody variant (e.g., one-hot encoded CDR sequences, ESM-2 embeddings) and y_i is a normalized fitness score (e.g., log-transformed binding MFI).

Preprocessing: Standardize y to zero mean and unit variance. Use dimensionality reduction (PCA) on X if using high-dimensional embeddings.

Model Specification:

- Mean Function: Constant mean (μ).

- Kernel Function: Combination of a Matérn 5/2 kernel (to model smooth but non-linear trends) and a white noise kernel (to capture experimental noise): k(x, x') = σ² * Matern52(x, x') + σ_noise² * δ(x, x').

- Inducing Points: Initialize M inducing points (M << N) via k-means clustering on X.

Training (Optimization):

- Maximize the Evidence Lower Bound (ELBO) using stochastic gradient descent (e.g., Adam optimizer).

- Use mini-batches of data (e.g., 256 points per batch) for scalability.

- Monitor convergence via the stabilization of the ELBO loss.

Output: A trained SVGP model capable of predicting a posterior distribution p(y* | x*, D) = N(μ(x*), σ²(x*)) for any new sequence x*.
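To show how the kernel above turns data into a posterior mean and variance, here is a small exact GP in NumPy. It is deliberately not the sparse variational model from the protocol: there are no inducing points and no ELBO, and the hyperparameters (`lengthscale`, `variance`, `noise`) are fixed by hand rather than learned. The sketch only illustrates the Matérn 5/2 plus white-noise kernel and the resulting Gaussian posterior; a production workflow would use a library such as GPyTorch.

```python
import numpy as np

def matern52(A, B, lengthscale=1.0, variance=1.0):
    """Matern 5/2 kernel matrix between rows of A and rows of B."""
    sq = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    s = np.sqrt(5.0) * np.sqrt(np.maximum(sq, 0.0)) / lengthscale
    return variance * (1.0 + s + s ** 2 / 3.0) * np.exp(-s)

def gp_posterior(X, y, X_star, lengthscale=1.0, variance=1.0, noise=1e-2):
    """Posterior mean and latent variance at X_star for a zero-mean exact GP."""
    # The white-noise term adds sigma_noise^2 on the diagonal of the training kernel.
    K = matern52(X, X, lengthscale, variance) + noise * np.eye(len(X))
    K_s = matern52(X, X_star, lengthscale, variance)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    mu = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = variance - (v ** 2).sum(axis=0)
    return mu, np.maximum(var, 0.0)

# Toy 1-D features with standardized fitness values (illustrative only).
X_train = np.array([[0.0], [1.0], [2.0]])
y_train = np.array([-1.0, 1.0, 0.0])
mu_star, var_star = gp_posterior(X_train, y_train, np.array([[0.5], [3.0]]))
```

The returned variance is that of the latent function. Adding `noise` gives the predictive variance of a new noisy measurement y*.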

Visualizations

Title: Initial Data Generation & Model Training Workflow

Title: SVGP Model Architecture for Sequence Fitness

The Scientist's Toolkit

Table 2: Essential Research Reagents & Materials for Initial Data Generation

| Item | Function in Protocol | Example Product/Catalog |

|---|---|---|

| Yeast Display Vector | Plasmid for surface expression of scFv, contains Aga2p fusion and epitope tags. | pYD1 (Thermo Fisher V83501) |

| S. cerevisiae EBY100 | Engineered yeast strain for inducible display; genotype: GAL1-AGA1::URA3. | ATCC MYA-4941 |

| Induction Media (SG-CAA) | Galactose-containing medium for induction of scFv expression under GAL1 promoter. | Prepared in-lab (20 g/L galactose, 6.7 g/L YNB, etc.) |

| Biotinylated Antigen | Target protein for binding assays, enables sensitive detection via streptavidin. | Project-specific, biotinylated via EZ-Link NHS-PEG4-Biotin. |

| Anti-c-Myc Antibody, Fluorescent | Detects expression level of displayed scFv (via c-Myc tag). | Anti-c-Myc-FITC (Miltenyi Biotec 130-116-485) |

| Streptavidin-Conjugated Fluorophore | Detects binding of biotinylated antigen. | Streptavidin-PE (BioLegend 405204) |

| High-Throughput Flow Cytometer | Analyzes and sorts yeast cells based on expression and binding fluorescence. | Sony SH800S, BD FACSymphony |

| NGS Library Prep Kit | Prepares variable region amplicons for deep sequencing. | Illumina MiSeq Nano Kit (300-cycles) |

| GP Training Software | Library for scalable, flexible GP model training. | GPyTorch (Python) |

In the high-stakes field of computational antibody design, Bayesian Optimization (BO) has emerged as a powerful framework for navigating complex, high-dimensional, and expensive-to-evaluate fitness landscapes. The core challenge is to optimally select the sequence or structure to test in the next wet-lab experiment. This decision is governed by the acquisition function, which quantifies the utility of evaluating a candidate point. For researchers aiming to optimize antibody properties like affinity, specificity, or stability, the choice between Expected Improvement (EI), Upper Confidence Bound (UCB), and Probability of Improvement (PI) is critical. This guide provides a technical deep dive into these functions, tailored for the antibody design pipeline.

Mathematical Foundations & Comparative Analysis

Each acquisition function balances exploration (probing uncertain regions) and exploitation (refining known good regions) differently. Their performance is intrinsically linked to the Gaussian Process (GP) surrogate model, which provides a predictive mean \(\mu(x)\) and standard deviation \(\sigma(x)\) for any candidate antibody variant \(x\).

The table below summarizes the core quantitative characteristics of the three primary acquisition functions.

Table 1: Comparison of Key Acquisition Functions for Bayesian Optimization

| Function | Mathematical Formulation | Exploration-Exploitation Balance | Key Assumptions & Sensitivities | Typical Use Case in Antibody Design |

|---|---|---|---|---|

| Probability of Improvement (PI) | \(\alpha_{PI}(x) = \Phi\left(\frac{\mu(x) - f(x^+) - \xi}{\sigma(x)}\right)\) | High exploitation bias. Tunes balance via \(\xi\) (trade-off parameter). | Sensitive to the choice of \(\xi\). Can get stuck in shallow local maxima if \(\xi\) is too small. | Initial screens where any improvement over a baseline is valuable. |

| Expected Improvement (EI) | \(\alpha_{EI}(x) = (\mu(x) - f(x^+) - \xi)\Phi(Z) + \sigma(x)\phi(Z)\) where \(Z = \frac{\mu(x) - f(x^+) - \xi}{\sigma(x)}\) | Balanced. Automatically weights mean and uncertainty. The de facto standard. | Requires an incumbent \(f(x^+)\). Robust to moderate model mismatch. | General-purpose affinity maturation or stability optimization campaigns. |

| Upper Confidence Bound (UCB) | \(\alpha_{UCB}(x) = \mu(x) + \kappa \sigma(x)\) | Explicit, tunable balance via \(\kappa\). Higher \(\kappa\) promotes exploration. | Theoretical regret bounds exist. Performance depends on schedule for \(\kappa\). | Optimizing under strict evaluation budgets or when prioritizing discovery of diverse leads. |

Legend: \(\Phi\) is the CDF of the standard normal distribution; \(\phi\) is its PDF. \(f(x^+)\) is the best observed objective value. \(\xi\) and \(\kappa\) are tunable parameters.
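The three formulas in the table translate directly into code. The sketch below uses only the Python standard library and assumes a maximization convention, so a property like K_D would first be transformed, for example to -log K_D. The function names and default values of `xi` and `kappa` are illustrative assumptions; the zero-uncertainty branches are a practical guard that the table does not cover.

```python
from math import erf, exp, pi, sqrt

def norm_cdf(z):
    """Standard normal CDF."""
    return 0.5 * (1.0 + erf(z / sqrt(2.0)))

def norm_pdf(z):
    """Standard normal PDF."""
    return exp(-0.5 * z * z) / sqrt(2.0 * pi)

def probability_of_improvement(mu, sigma, best, xi=0.01):
    if sigma <= 0.0:
        # No uncertainty: improvement is either certain or impossible.
        return float(mu - best - xi > 0.0)
    return norm_cdf((mu - best - xi) / sigma)

def expected_improvement(mu, sigma, best, xi=0.01):
    if sigma <= 0.0:
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * norm_cdf(z) + sigma * norm_pdf(z)

def upper_confidence_bound(mu, sigma, kappa=2.0):
    return mu + kappa * sigma

# Two hypothetical candidates: a confident modest gain vs. an uncertain one.
best_so_far = 1.0
confident = expected_improvement(mu=1.1, sigma=0.05, best=best_so_far)
uncertain = expected_improvement(mu=0.9, sigma=0.60, best=best_so_far)
```

Comparing `confident` and `uncertain` shows how EI can prefer a candidate with a lower predicted mean when its uncertainty is large enough.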

Experimental Protocols in Computational Antibody Design

The efficacy of an acquisition function is validated through in silico benchmarks before guiding real-world experiments.

Protocol 1: Benchmarking Acquisition Functions on In Silico Landscapes

- Landscape Definition: Select a simulated antibody fitness landscape (e.g., a random GP prior, a public dataset like SAbDab with a learned surrogate, or an in silico scoring function like FoldX or ABACUS).

- BO Loop Initialization: Randomly sample a small set of initial antibody sequences/structures (5-10) to form the initial training set for the GP model.

- Iterative Optimization: For a fixed budget of iterations (e.g., 50-100): a. Train the GP model on all observed data. b. Optimize the chosen acquisition function (EI, UCB, PI) to propose the next candidate. c. Query the in silico oracle (the simulated landscape) to obtain the objective value (e.g., binding energy). d. Append the new observation to the training set.

- Metric Tracking: Record the best objective value found and cumulative regret at each iteration.

- Statistical Analysis: Repeat the entire procedure (steps 2-4) with multiple random seeds. Compare the convergence rates and final performance of EI, UCB, and PI using statistical tests.
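The metric-tracking step in this protocol can be sketched as below. The helper assumes a maximization problem and a known optimum `f_opt` of the simulated landscape, which is only available in silico. The trace values are hypothetical.

```python
import numpy as np

def regret_curves(observed, f_opt):
    """Best-so-far value, simple regret, and cumulative regret per iteration."""
    observed = np.asarray(observed, dtype=float)
    best_so_far = np.maximum.accumulate(observed)
    simple_regret = f_opt - best_so_far
    cumulative_regret = np.cumsum(f_opt - observed)
    return best_so_far, simple_regret, cumulative_regret

# Hypothetical objective values queried by one BO run on a landscape with optimum 1.0.
best, simple, cumulative = regret_curves([0.2, 0.5, 0.4, 0.9], f_opt=1.0)
```

Repeating this over several random seeds and averaging the curves per acquisition function gives the convergence comparison described in the final step.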

Protocol 2: Wet-Lab Validation Cycle for Affinity Maturation

- Computational Proposal: After an initial round of phage/yeast display sequencing, fit a GP model to sequence-fitness data. Use EI to propose 50-200 candidate mutant sequences expected to improve affinity.

- Library Synthesis: Synthesize the proposed variants via oligo library synthesis and construct the mutant library for the next display round.

- Biological Selection: Perform 1-3 rounds of selection under increasing stringency (e.g., lower antigen concentration, shorter binding time).

- Next-Generation Sequencing (NGS): Sequence output pools. Enrichment scores from NGS counts provide fitness proxies for the next BO cycle.

- Validation: Express top BO-proposed hits as soluble antibodies for validation via Surface Plasmon Resonance (SPR) to measure binding kinetics (\(K_D\)).
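The NGS step above turns read counts into fitness proxies. One common choice, assumed here rather than taken from the protocol, is a log2 ratio of post-selection to pre-selection frequencies with a pseudocount to handle unobserved variants. The function name, the pseudocount value, and the counts are hypothetical.

```python
import math

def log2_enrichment(pre_counts, post_counts, pseudocount=1.0):
    """log2(post frequency / pre frequency) per variant, with a pseudocount."""
    variants = set(pre_counts) | set(post_counts)
    pre_total = sum(pre_counts.values()) + pseudocount * len(variants)
    post_total = sum(post_counts.values()) + pseudocount * len(variants)
    scores = {}
    for v in variants:
        pre_f = (pre_counts.get(v, 0) + pseudocount) / pre_total
        post_f = (post_counts.get(v, 0) + pseudocount) / post_total
        scores[v] = math.log2(post_f / pre_f)
    return scores

# Hypothetical read counts before and after one selection round.
pre = {"ARDYW": 99, "ARDFW": 99}
post = {"ARDYW": 199, "ARDFW": 49}
enrichment = log2_enrichment(pre, post)
```

Variants with few pre-selection reads give noisy scores, so a minimum read-count filter is often applied before the scores are passed to the GP.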

Visualizing the Bayesian Optimization Workflow in Antibody Design

Title: Bayesian Optimization Loop for Antibody Variant Design

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Platforms for BO-Driven Antibody Development

| Item / Solution | Function in the BO Pipeline | Example Vendor/Platform |

|---|---|---|

| Oligo Pool Synthesis | Enables synthesis of the computationally proposed variant library for the next experimental cycle. | Twist Bioscience, IDT, Agilent |

| Phage or Yeast Display System | Provides the physical platform for displaying antibody variants and selecting for binding. | New England Biolabs (Phage), Thermo Fisher (Yeast) |

| Next-Generation Sequencer | Generates high-throughput sequence data from selection rounds to feed back into the GP model. | Illumina (MiSeq), PacBio |

| SPR/Biolayer Interferometry (BLI) Instrument | Provides gold-standard, quantitative validation of binding kinetics for top BO-predicted hits. | Cytiva (Biacore), Sartorius (Octet) |

| GP/BO Software Library | Implements the surrogate modeling and acquisition function optimization algorithms. | BoTorch, GPyOpt, scikit-optimize |

| High-Performance Computing (HPC) Cluster | Runs computationally intensive GP training and acquisition function maximization across sequence space. | In-house, AWS, Google Cloud |

Within a modern Bayesian optimization (BO) framework for therapeutic antibody design, the Design-Test-Learn (DTL) cycle constitutes the core operational engine. This iterative process tightly couples in silico surrogate modeling with in vitro or in vivo wet-lab experimentation to navigate the astronomically large sequence-structure-function landscape efficiently. This guide details the technical execution of this cycle for researchers.

The DTL Cycle: Core Conceptual Workflow

The cycle formalizes the iterative hypothesis generation and testing required for rational protein engineering.

Phase 1: Design – Probabilistic Model and Acquisition Function

The Design phase uses a probabilistic surrogate model, typically a Gaussian Process (GP), trained on all existing data to predict antibody properties (e.g., affinity, stability) and quantify uncertainty for any sequence.

- Surrogate Model: A GP is defined by a mean function m(x) and a kernel (covariance) function k(x, x'). For a sequence represented by features x, the predicted property f(x) follows a normal distribution: f(x) ~ N(μ(x), σ²(x)).

- Acquisition Function: This guides the search. Expected Improvement (EI) is common: EI(x) = E[max(f(x) - f(x⁺), 0)], where f(x⁺) is the best-observed value. The next batch of candidates is selected by maximizing EI, balancing exploration (high uncertainty) and exploitation (high predicted mean).
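The expectation in the EI definition has a closed form under the GP posterior. The short derivation below assumes \(\sigma(x) > 0\) and writes \(f(x) = \mu(x) + \sigma(x)\,\varepsilon\) with \(\varepsilon \sim \mathcal{N}(0, 1)\); \(\Phi\) and \(\phi\) denote the standard normal CDF and PDF.

```latex
\begin{aligned}
Z &= \frac{\mu(x) - f(x^+)}{\sigma(x)}, \\
\mathrm{EI}(x)
  &= \mathbb{E}\big[\max(f(x) - f(x^+),\, 0)\big]
   = \int_{-Z}^{\infty} \big(\mu(x) - f(x^+) + \sigma(x)\,\varepsilon\big)\,\phi(\varepsilon)\, d\varepsilon \\
  &= \big(\mu(x) - f(x^+)\big)\big[1 - \Phi(-Z)\big]
     + \sigma(x) \int_{-Z}^{\infty} \varepsilon\,\phi(\varepsilon)\, d\varepsilon \\
  &= \big(\mu(x) - f(x^+)\big)\,\Phi(Z) + \sigma(x)\,\phi(Z).
\end{aligned}
```

The last step uses \(\int_{a}^{\infty} \varepsilon\,\phi(\varepsilon)\,d\varepsilon = \phi(a)\) and the symmetry \(\phi(-Z) = \phi(Z)\). The first term rewards a high predicted mean (exploitation) and the second rewards high uncertainty (exploration).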

Phase 2: Test – Wet-Lab Experimental Protocols

The Test phase validates in silico predictions through controlled experiments. Key quantitative outputs feed back into the model.

Protocol 2.1: High-Throughput Affinity Screening via Biolayer Interferometry (BLI)

Objective: Quantify binding kinetics (association rate kₐ, dissociation rate k_d, and equilibrium constant K_D) for dozens of antibody variants in parallel. Methodology:

- Biosensor Loading: Hydrate anti-human Fc capture (AHC) biosensors. Dilute purified antibodies to 5 µg/mL in kinetics buffer. Load antibodies onto biosensors for 300s to achieve ~1 nm shift.

- Baseline: Place sensors in kinetics buffer for 60s.

- Association: Transfer sensors to wells containing serially diluted antigen (e.g., 0, 6.25, 12.5, 25, 50 nM) for 300s.

- Dissociation: Transfer sensors back to kinetics buffer for 600s.

- Data Analysis: Reference-subtracted data is fit globally to a 1:1 binding model using the BLI instrument software (e.g., FortéBio Data Analysis HT) to extract kₐ, k_d, and K_D.
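The 1:1 binding model used in the analysis step has a simple closed form, sketched below for intuition. Instrument software performs the actual global fit. The rate constants, `rmax`, and the function names are illustrative assumptions; association rates are in 1/(M·s), dissociation rates in 1/s, and concentrations in M.

```python
import numpy as np

def equilibrium_kd(ka, kd):
    """Equilibrium dissociation constant K_D = k_d / k_a (in M)."""
    return kd / ka

def association_response(t, conc, ka, kd, rmax):
    """Response during association for a 1:1 interaction."""
    k_obs = ka * conc + kd                  # observed rate constant
    r_eq = rmax * conc / (conc + kd / ka)   # plateau response at this concentration
    return r_eq * (1.0 - np.exp(-k_obs * t))

def dissociation_response(t, r0, kd):
    """Exponential decay from response r0 once antigen is removed."""
    return r0 * np.exp(-kd * t)

# Hypothetical variant: k_a = 1e5 1/(M*s), k_d = 1e-3 1/s, giving K_D = 10 nM.
ka, kd = 1e5, 1e-3
t_assoc = np.linspace(0.0, 300.0, 301)
curve_25nM = association_response(t_assoc, conc=25e-9, ka=ka, kd=kd, rmax=1.0)
decay = dissociation_response(np.linspace(0.0, 600.0, 601), r0=curve_25nM[-1], kd=kd)
```

Because k_obs depends on concentration while the dissociation phase depends only on k_d, fitting several antigen concentrations together constrains both rate constants.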

Protocol 2.2: Thermal Stability Assessment by Differential Scanning Fluorimetry (DSF)

Objective: Determine melting temperature (T_m) as a proxy for structural stability. Methodology:

- Sample Preparation: Mix purified antibody (0.2 mg/mL final) with a fluorescent dye (e.g., Sypro Orange) in a 96-well PCR plate. Final volume: 25 µL.

- Thermal Ramp: Run plate in a real-time PCR instrument. Protocol: equilibrate at 25°C for 2 min, then ramp from 25°C to 95°C at a rate of 0.5°C/min with continuous fluorescence measurement.

- Analysis: Plot the first derivative of fluorescence versus temperature. T_m is the temperature of steepest fluorescence increase: the maximum of dF/dT, which appears as a minimum when the instrument plots -dF/dT.
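The derivative analysis can be sketched in a few lines, taking T_m as the temperature of steepest fluorescence increase, i.e. the maximum of dF/dT (instruments that plot -dF/dT show the same point as a minimum). The melt curve below is a synthetic sigmoid with an assumed T_m of 68.5 °C, not measured data, and the function name is hypothetical.

```python
import numpy as np

def melting_temperature(temps, fluorescence):
    """Temperature at which dF/dT is largest."""
    temps = np.asarray(temps, dtype=float)
    fluorescence = np.asarray(fluorescence, dtype=float)
    dF_dT = np.gradient(fluorescence, temps)
    return float(temps[np.argmax(dF_dT)])

# Synthetic single-transition melt curve sampled every 0.5 degrees C.
temps = np.arange(25.0, 95.5, 0.5)
assumed_tm = 68.5
fluor = 1.0 / (1.0 + np.exp(-(temps - assumed_tm) / 1.5))
tm = melting_temperature(temps, fluor)
```

Real DSF traces often show a fluorescence drop after the transition and may contain several unfolding events, so the search is usually restricted to the transition window and the curve smoothed first.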

Table 1: Example Wet-Lab Output Data for BO Update

| Variant ID | Predicted K_D (nM) | Measured K_D (nM) | Measured T_m (°C) | Expression Yield (mg/L) |

|---|---|---|---|---|

| AB001 | 5.2 | 4.8 ± 0.7 | 68.5 ± 0.3 | 120 |

| AB002 | 12.1 | 25.3 ± 3.5 | 62.1 ± 0.5 | 85 |

| AB003 | 8.7 | 9.1 ± 1.2 | 71.3 ± 0.2 | 105 |

Phase 3: Learn – Model Updating and Multi-Objective Optimization

The Learn phase integrates new data to refine the surrogate model. For multiple properties (e.g., high affinity and high stability), a multi-objective BO (MOBO) approach is used, often employing the ParEGO or EHVI acquisition function to trace a Pareto front.
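As a small worked example of the Pareto front concept, the sketch below applies it to the measured values in Table 1, treating lower K_D and higher T_m as better. It only identifies non-dominated variants and does not implement ParEGO or EHVI. The function name is hypothetical.

```python
def pareto_front(variants):
    """Names of non-dominated variants; K_D is minimized, T_m is maximized."""
    front = []
    for name, (kd, tm) in variants.items():
        dominated = any(
            (kd2 <= kd and tm2 >= tm) and (kd2 < kd or tm2 > tm)
            for other, (kd2, tm2) in variants.items()
            if other != name
        )
        if not dominated:
            front.append(name)
    return front

# Measured K_D (nM) and T_m (degrees C) from Table 1.
measured = {
    "AB001": (4.8, 68.5),
    "AB002": (25.3, 62.1),
    "AB003": (9.1, 71.3),
}
print(pareto_front(measured))  # ['AB001', 'AB003']
```

AB002 is dominated because AB001 is better on both objectives, while AB001 and AB003 trade affinity against stability and both remain on the front.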

Table 2: The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in DTL Cycle | Example/Specifications |

|---|---|---|

| Anti-Human Fc Capture (AHC) Biosensors | Enable label-free, high-throughput kinetic screening of IgG antibodies via BLI. | FortéBio Octet AHC tips. |

| Sypro Orange Protein Gel Stain | Fluorescent dye used in DSF to monitor protein unfolding as a function of temperature. | 5000X concentrate in DMSO. |

| HEK293 or CHO Transient Expression System | Rapid production of µg to mg quantities of antibody variants for characterization. | Expi293F or ExpiCHO-S cells. |

| Protein A/G Purification Resin | Robust capture and purification of IgG from complex cell culture supernatants. | Agarose or magnetic bead formats. |

| Kinetics Buffer (for BLI) | Provides consistent pH and ionic strength to ensure specific binding interactions during screening. | 1X PBS, pH 7.4, 0.01% BSA, 0.002% Tween-20. |

The rigorous integration of these phases, supported by robust experimental data and adaptive probabilistic modeling, enables the efficient discovery of antibody candidates that simultaneously optimize multiple, often competing, development criteria.

This section applies Bayesian Optimization (BO) to the computational design of antibodies with enhanced properties. It presents case studies demonstrating the optimization of three critical parameters: binding affinity, specificity, and thermostability. BO provides a sample-efficient framework for navigating the vast combinatorial sequence space, enabling the rapid identification of lead candidates with the desired biophysical characteristics.

Theoretical Framework: Bayesian Optimization for Protein Engineering

Bayesian Optimization is a sequential design strategy for global optimization of black-box functions that are expensive to evaluate. In antibody design, the "function" is an experimental assay measuring affinity, specificity, or stability, and each "evaluation" involves costly and time-consuming wet-lab experimentation. BO consists of two key components:

- A probabilistic surrogate model (typically a Gaussian Process) that approximates the unknown function from observed data.

- An acquisition function (e.g., Expected Improvement, Upper Confidence Bound) that uses the surrogate model's predictions to decide which sequence to test next by balancing exploration and exploitation.

This iterative loop of prediction and experimental validation accelerates the design cycle.
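The loop described above can be sketched end to end on a toy problem. Everything here is an assumption made for illustration: `toy_assay` stands in for a wet-lab measurement, candidates are points on a 1-D grid rather than sequences, the surrogate is a small exact GP with a fixed RBF kernel, and the acquisition function is UCB with a fixed weight of 2.

```python
import numpy as np

def rbf(a, b, lengthscale=0.15):
    """RBF kernel matrix between two 1-D arrays of inputs."""
    return np.exp(-0.5 * (a[:, None] - b[None, :]) ** 2 / lengthscale ** 2)

def gp_predict(x_obs, y_obs, x_cand, noise=1e-4):
    """Posterior mean and standard deviation of a constant-mean GP."""
    K = rbf(x_obs, x_obs) + noise * np.eye(len(x_obs))
    K_s = rbf(x_obs, x_cand)
    mean = y_obs.mean()
    mu = K_s.T @ np.linalg.solve(K, y_obs - mean) + mean
    var = 1.0 - np.einsum("ij,ij->j", K_s, np.linalg.solve(K, K_s))
    return mu, np.sqrt(np.maximum(var, 0.0))

def toy_assay(x):
    """Hypothetical stand-in for an experimental readout (higher is better)."""
    return np.exp(-((x - 0.7) ** 2) / 0.02) + 0.5 * np.exp(-((x - 0.2) ** 2) / 0.01)

candidates = np.linspace(0.0, 1.0, 201)
rng = np.random.default_rng(0)
tested = [int(i) for i in rng.choice(len(candidates), size=4, replace=False)]
initial_best = float(toy_assay(candidates[tested]).max())

for _ in range(12):
    x_obs = candidates[tested]
    y_obs = toy_assay(x_obs)                  # "run the experiment"
    mu, sigma = gp_predict(x_obs, y_obs, candidates)
    ucb = mu + 2.0 * sigma                    # acquisition: explore vs. exploit
    ucb[tested] = -np.inf                     # never re-propose a tested candidate
    tested.append(int(np.argmax(ucb)))

final_best = float(toy_assay(candidates[tested]).max())
```

In a real campaign the inner call to `toy_assay` is replaced by synthesis, expression, and measurement of the proposed variant, and several candidates are usually proposed per round.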

Diagram Title: Bayesian Optimization Iterative Cycle for Antibody Design

Case Studies & Data Analysis

Case Study 1: Optimizing Binding Affinity

- Objective: Improve the binding affinity (KD) of an anti-IL-6 antibody.

- BO Setup: Sequence space focused on 6 residues in the CDR-H3 loop. Gaussian Process with Matérn kernel; Expected Improvement acquisition function.

- Result: Achieved a 50-fold affinity improvement over the parent antibody in 4 iterative rounds of design (20 total variants tested).

Case Study 2: Enhancing Specificity

- Objective: Increase specificity for target antigen (EGFR) over closely related homolog (HER2).

- BO Setup: Multi-objective BO optimizing both target binding and homolog discrimination ratio. Used a weighted sum of objectives in the acquisition function.

- Result: Generated variants with >100-fold improved specificity index in 5 rounds, minimizing off-target binding.

Case Study 3: Improving Thermostability

- Objective: Increase melting temperature (Tm) of a single-chain variable fragment (scFv) prone to aggregation.

- BO Setup: Input features included sequence metrics and in silico stability predictions. Output was experimentally measured Tm via differential scanning fluorimetry (DSF).

- Result: Increased Tm by 12.5°C over 6 design iterations, significantly improving developability.

Table 1: Summary of Quantitative Results from Case Studies

| Optimization Target | Parent Value | Optimized Value | Improvement | BO Rounds | Variants Tested |

|---|---|---|---|---|---|

| Affinity (KD to IL-6) | 10 nM | 0.2 nM | 50x | 4 | 20 |

| Specificity Ratio (EGFR:HER2) | 5:1 | >500:1 | >100x | 5 | 25 |

| Thermostability (Tm) | 62.5 °C | 75.0 °C | +12.5 °C | 6 | 30 |

Detailed Experimental Protocols

Protocol 4.1: Affinity Measurement via Biolayer Interferometry (BLI)

Purpose: To determine kinetic binding parameters (kon, koff) and the equilibrium dissociation constant (KD). Workflow:

- Loading: Immobilize biotinylated antigen onto streptavidin biosensors.

- Baseline: Establish a baseline in kinetics buffer.

- Association: Dip sensors into wells containing varying concentrations of purified antibody; monitor binding over time.

- Dissociation: Transfer sensors to buffer-only wells; monitor dissociation.

- Analysis: Fit the resulting sensorgrams to a 1:1 binding model using the instrument's software to extract kon, koff, and KD.
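For readers unfamiliar with the 1:1 binding model used in the analysis step, the sketch below writes out its association and dissociation equations and the relation KD = koff / kon. The rate constants, concentration, and `rmax` are hypothetical, and curve fitting itself is left to the instrument software as described in the protocol.

```python
import math

def association(t, conc_m, kon, koff, rmax):
    """Response at time t (s) during association for a 1:1 interaction."""
    kobs = kon * conc_m + koff                    # observed rate constant, 1/s
    r_eq = rmax * conc_m / (conc_m + koff / kon)  # plateau at this concentration
    return r_eq * (1.0 - math.exp(-kobs * t))

def dissociation(t, r0, koff):
    """Response decay t seconds after transfer to buffer, starting from r0."""
    return r0 * math.exp(-koff * t)

kon, koff = 1.0e5, 1.0e-3   # hypothetical rate constants: 1/(M*s) and 1/s
kd = koff / kon             # equilibrium dissociation constant, M
r_end = association(300.0, conc_m=10e-9, kon=kon, koff=koff, rmax=1.0)
r_after = dissociation(600.0, r_end, koff)
print(kd, r_end, r_after)
```

When the analyte concentration equals KD, the association curve plateaus at half of `rmax`, which is a quick sanity check on any fitted parameter set.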

Protocol 4.2: High-Throughput Thermostability Assay (Differential Scanning Fluorimetry)

Purpose: To determine melting temperature (Tm) of antibody variants in a 96- or 384-well format. Workflow:

- Sample Prep: Mix purified antibody (0.2 mg/mL) with a fluorescent dye (e.g., Sypro Orange) that binds hydrophobic patches exposed upon unfolding.

- Run: Using a real-time PCR instrument, ramp temperature from 25°C to 95°C at a steady rate (e.g., 0.5°C/min) while monitoring fluorescence.

- Analysis: Calculate Tm as the inflection point of the fluorescence vs. temperature curve using first-derivative analysis.
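The first-derivative analysis in the last step can be sketched in a few lines. The example below uses a synthetic, noise-free two-state unfolding curve with an assumed midpoint of 62.5 °C and a 0.5 °C sampling step; real DSF traces are noisy and usually need smoothing before differentiation, which is not shown.

```python
import math

def tm_from_first_derivative(temps, fluorescence):
    """Return the temperature at which dF/dT is largest (central differences)."""
    best_t, best_slope = None, float("-inf")
    for i in range(1, len(temps) - 1):
        slope = (fluorescence[i + 1] - fluorescence[i - 1]) / (temps[i + 1] - temps[i - 1])
        if slope > best_slope:
            best_t, best_slope = temps[i], slope
    return best_t

# Synthetic Boltzmann sigmoid: 25 °C to 95 °C in 0.5 °C steps, midpoint 62.5 °C (assumed)
temps = [25.0 + 0.5 * i for i in range(141)]
curve = [1000.0 + 9000.0 / (1.0 + math.exp((62.5 - t) / 1.5)) for t in temps]
tm = tm_from_first_derivative(temps, curve)
print(tm)
```

The resolution of the estimate is limited by the temperature step, so interpolating around the derivative peak is a common refinement when sub-degree differences between variants matter.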

Diagram Title: High-Throughput Wet-Lab Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for BO-Driven Antibody Optimization

| Item | Function in Workflow | Example/Notes |

|---|---|---|

| Gene Fragments (Arrayed) | Supply the BO-proposed variant DNA sequences, synthesized individually, for cloning. | Twist Bioscience gene fragments, IDT oligo pools. |

| Cloning Vector | Backbone for recombinant antibody expression. | pTT5, pcDNA3.4 for mammalian expression. |

| Expression Host | Produces full-length, folded antibody protein. | Expi293F or ExpiCHO cells for transient transfection. |

| Protein A Resin (HT) | High-throughput purification of IgG from culture supernatant. | MabSelect PrismA in 96-well filter plates. |

| BLI Instrument & Biosensors | Measures binding kinetics and affinity without flow cells. | Sartorius Octet systems with streptavidin (SA) biosensors for biotinylated antigen (Protocol 4.1) or Anti-Human IgG Fc Capture (AHC) biosensors. |

| DSF Dye | Fluorescent reporter for protein thermal unfolding. | Sypro Orange protein gel stain. |

| Real-Time PCR (qPCR) Instrument | Platform for high-throughput DSF runs. | Applied Biosystems QuantStudio 7 Flex. |

| BO Software Platform | Implements surrogate modeling, acquisition, and data management. | Orion, Pyro, or custom Python scripts (BoTorch/GPyTorch). |

Overcoming Practical Challenges: Noise, Constraints, and Model Failure in BO

Handling Noisy and High-Variance Biological Assay Data

The development of therapeutic antibodies is a high-dimensional optimization problem, where the goal is to navigate a vast sequence space to identify candidates with optimal affinity, specificity, and developability profiles. A central thesis in modern computational antibody design posits that Bayesian Optimization (BO) provides a robust framework for this search, efficiently balancing exploration and exploitation. However, the efficacy of any BO-driven campaign is fundamentally constrained by the quality of the input data. This guide addresses the critical, often underestimated challenge of handling noisy and high-variance biological assay data, which, if unmitigated, can misdirect the optimization process, leading to suboptimal candidates and wasted resources.

Biological assays used in antibody screening are inherently variable. Noise arises from technical sources (instrument, reagent, and handling effects) and from biological sources (intrinsic variability of the molecules and cells being measured). The table below summarizes the primary contributors to noise in common assays.

Table 1: Sources of Variance in Common Antibody Development Assays

| Assay Type | Primary Measurement | Major Noise Sources (Technical) | Major Noise Sources (Biological) | Typical Coefficient of Variation (CV) Range* |

|---|---|---|---|---|

| ELISA / MSD | Binding Signal (OD, RLU) | Plate edge effects, pipetting inaccuracy, reagent lot variability, reader calibration. | Non-specific binding, protein aggregation, epitope masking. | 15% - 25% |

| Surface Plasmon Resonance (SPR) | Kinetics (ka, kd, KD) | Sensor chip degradation, reference surface subtraction errors, flow rate fluctuations. | Conformational heterogeneity, avidity effects for multivalent analytes. | 5% - 15% (for KD) |

| Bio-Layer Interferometry (BLI) | Kinetics & Affinity | Tip alignment variability, baseline drift, nonspecific binding to tips. | Similar to SPR, with additional buffer artifact sensitivity. | 10% - 20% |

| Flow Cytometry (FACS) | Cell-Surface Binding (MFI) | Laser power drift, PMT voltage calibration, gating subjectivity. | Cell viability, receptor density heterogeneity, internalization. | 20% - 35% |

| Neutralization / Functional Assay | IC50 / EC50 | Cell passage number, assay incubation time/temp variability, reporter signal stability. | Biological responsiveness of cell lines, pathway stochasticity. | 25% - 50%+ |

*CV ranges are approximate and represent inter-experimental variability under standard conditions. Intra-assay CVs are typically lower.
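The coefficient of variation quoted in the table is the sample standard deviation divided by the mean. A minimal sketch, using invented EC50 values for a reference antibody measured in four separate experiments:

```python
import statistics

def percent_cv(values):
    """Coefficient of variation: sample standard deviation over mean, in percent."""
    mean = statistics.mean(values)
    if mean == 0:
        raise ValueError("CV is undefined for a zero mean")
    return 100.0 * statistics.stdev(values) / mean

# Hypothetical EC50 estimates (nM) for one reference antibody across four experiments
inter_experiment = [1.8, 2.4, 2.1, 2.9]
cv = percent_cv(inter_experiment)
print(round(cv, 1))
```

Tracking this number for the reference standard over time gives a direct, assay-specific estimate of the noise level that the surrogate model will have to absorb.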

Experimental Protocols for Noise Mitigation

Implementing rigorous, standardized protocols is the first line of defense against excessive variance.

Protocol for Robust High-Throughput Binding ELISA

Objective: To quantitatively measure antibody-antigen binding with minimized technical variance. Key Reagents: See Table 3. Procedure:

- Plate Coating: Dilute antigen to 2 µg/mL in carbonate-bicarbonate coating buffer (pH 9.6). Dispense 50 µL/well using a calibrated multichannel or automated liquid handler. Include blank wells (coating buffer only) and negative control wells (irrelevant protein).

- Blocking: After overnight incubation at 4°C, aspirate and block with 200 µL/well of blocking buffer (e.g., 3% BSA in PBST) for 2 hours at room temperature (RT) on a plate shaker.

- Sample Addition:

  - Prepare antibody dilutions in blocking buffer as a serial dilution series (e.g., 1:3 dilutions across 8 points). Include a known reference standard antibody on every plate for inter-plate normalization.

  - Dispense 50 µL/well in technical duplicates or triplicates, positioned non-adjacently across the plate to control for spatial effects.

- Detection: Incubate the samples for 1-2 hours at RT. Wash the plate 5x with PBST using an automated plate washer. Add 50 µL/well of diluted HRP-conjugated secondary antibody. Incubate for 1 hour at RT, protected from light. Wash 5x.

- Signal Development & Quantification: Add 50 µL/well of TMB substrate. Incubate for a fixed, optimized time (e.g., 10 minutes). Stop reaction with 50 µL/well 1M H₂SO₄. Read absorbance at 450 nm immediately.

- Data Processing: Subtract blank well average from all values. Fit the reference standard curve using a 4-parameter logistic (4PL) model. Normalize sample responses to the plate-specific standard curve to generate Normalized Response Units before calculating EC₅₀.
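The data-processing step relies on the 4-parameter logistic model. The sketch below defines the 4PL function, its inverse (used to read a sample signal back onto the reference standard curve), and blank subtraction. The curve parameters and well readings are hypothetical, and the fitting of the standard curve itself (typically by nonlinear least squares) is not shown.

```python
def four_pl(x, bottom, top, ec50, hill):
    """4-parameter logistic: signal as a function of concentration x (> 0)."""
    return bottom + (top - bottom) / (1.0 + (ec50 / x) ** hill)

def inverse_four_pl(y, bottom, top, ec50, hill):
    """Concentration on the standard curve that would produce signal y."""
    if not (min(bottom, top) < y < max(bottom, top)):
        raise ValueError("signal outside the range of the standard curve")
    return ec50 / (((top - bottom) / (y - bottom)) - 1.0) ** (1.0 / hill)

def blank_subtract(wells, blanks):
    """Subtract the mean of the blank wells from every reading."""
    blank_mean = sum(blanks) / len(blanks)
    return [w - blank_mean for w in wells]

# Hypothetical fitted parameters for the plate's reference standard (blank-subtracted OD)
std = dict(bottom=0.02, top=2.0, ec50=10.0, hill=1.2)

signals = blank_subtract([1.10, 0.62], blanks=[0.05, 0.07])
# Reference-equivalent concentrations, one way to express a plate-normalized response
equivalents = [inverse_four_pl(s, **std) for s in signals]
print(signals, equivalents)
```

Expressing each sample in reference-equivalent units ties every plate to the same standard, so values from different plates can be pooled before EC₅₀ is calculated.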

Protocol for Reliable SPR Affinity Determination

Objective: To obtain accurate kinetic parameters (ka, kd) and equilibrium affinity (KD). Key Reagents: See Table 3. Procedure:

- Surface Preparation: Dock a new series S sensor chip (e.g., CM5). Condition the surface with two 1-minute injections of 10 mM glycine pH 1.5 and 2.0.

- Immobilization: Dilute the target antigen in 10 mM sodium acetate buffer at a pH below its isoelectric point (typically identified by pH scouting), so that the protein is positively charged and preconcentrates on the carboxymethylated surface. Using amine coupling chemistry, activate the surface with a 7-minute injection of a 1:1 mixture of 0.4 M EDC and 0.1 M NHS. Inject the antigen solution to achieve a target immobilization level of 50-100 Response Units (RU) for kinetic analysis. Deactivate with a 7-minute injection of 1 M ethanolamine-HCl pH 8.5.

- Kinetic Run:

  - Prepare a twofold serial dilution series of the antibody analyte (typically 5-8 concentrations, spanning values above and below the expected KD).

  - Use HBS-EP+ (0.01 M HEPES, 0.15 M NaCl, 3 mM EDTA, 0.05% v/v Surfactant P20, pH 7.4) as running buffer.

  - Program a method with a 60-second association phase and a 300-600 second dissociation phase. Use a flow rate of 30 µL/min. Include buffer blank injections (0 nM analyte) in duplicate for double-referencing.

- Regeneration: Identify a mild, consistent regeneration condition (e.g., 10 mM glycine pH 2.0, 30-second injection) that removes analyte without damaging the immobilized ligand.

- Data Analysis: Process sensorgrams using double referencing (subtract both the reference flow cell signal and the buffer blank). Fit the data globally to a 1:1 binding model. Report the mean and standard deviation of KD from at least two independent experiments with freshly prepared analyte dilutions.
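The double-referencing and reporting steps can be illustrated with a short sketch. All response values and fitted rate constants below are invented, and the traces are assumed to share the same time grid; the global fit itself is done in the instrument's evaluation software.

```python
import statistics

def double_reference(active, reference, blank_active, blank_reference):
    """Subtract the reference flow cell, then the reference-subtracted buffer blank."""
    return [
        (a - r) - (ba - br)
        for a, r, ba, br in zip(active, reference, blank_active, blank_reference)
    ]

# Hypothetical responses (RU) at four shared time points
active = [0.0, 12.5, 20.1, 24.0]           # analyte over the antigen surface
reference = [0.0, 1.5, 2.0, 2.2]           # analyte over the reference surface
blank_active = [0.0, 0.6, 0.9, 1.0]        # buffer over the antigen surface
blank_reference = [0.0, 0.4, 0.5, 0.5]     # buffer over the reference surface
corrected = double_reference(active, reference, blank_active, blank_reference)

# KD = kd / ka for each independent experiment, then mean and sample SD
fits = [(2.1e5, 4.0e-4), (1.9e5, 4.2e-4), (2.0e5, 3.8e-4)]  # (ka in 1/(M*s), kd in 1/s)
kd_values_nm = [1e9 * kd / ka for ka, kd in fits]
kd_mean = statistics.mean(kd_values_nm)
kd_sd = statistics.stdev(kd_values_nm)
print(corrected, round(kd_mean, 2), round(kd_sd, 2))
```

The standard deviation reported here is the quantity that can later be passed to the surrogate model as a per-variant noise estimate.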

Statistical and Computational Methods for Data Integration into Bayesian Optimization

Raw assay data must be processed and modeled to provide reliable objective functions for BO.

Table 2: Data Processing Techniques for Noise Reduction

| Technique | Application | Methodology | Benefit for BO |

|---|---|---|---|

| Plate-Based Normalization | HTS (ELISA, FACS) | Use Z-score normalization to correct for plate-to-plate drift and B-score normalization to correct for row/column effects; track the Z'-factor per plate as an assay-quality check rather than as a normalization. | Removes spatial bias, so that comparisons between sequences are fair. |

| Reference Standard Scaling | All quantitative assays | Run a validated reference control in each experiment. Scale all sample responses to the reference's fixed value. | Enables data integration across multiple experimental batches over time. |

| Replicates & Aggregation | All assays | Perform technical & biological replicates. Use median or trimmed mean instead of mean for aggregation. | Robust central estimates reduce the influence of outlier data points. |

| Error-Aware Modeling | Fitting dose-response curves | Use hierarchical Bayesian models (e.g., in Stan/PyMC) to fit EC₅₀/IC₅₀, sharing information across curves and estimating uncertainty. | Provides the posterior distribution of the activity metric, which can be directly used in BO acquisition functions. |

| Heteroscedastic Regression | Modeling assay noise | Model the measurement variance as a function of the mean signal (e.g., using a log-normal model). | Allows BO to down-weight high-variance measurements automatically. |
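Two of the techniques in the table, reference standard scaling and robust replicate aggregation, combine naturally into a small preprocessing step. The sketch below is one possible arrangement under assumed data: the batch layout, signal values, and the choice of sample variance as the noise estimate are all illustrative.

```python
import statistics

def scale_to_reference(sample_signal, reference_signal, reference_nominal=1.0):
    """Express a sample response relative to the batch's reference standard."""
    return reference_nominal * sample_signal / reference_signal

def aggregate_replicates(values):
    """Median as a robust centre; sample variance as a per-variant noise estimate."""
    center = statistics.median(values)
    variance = statistics.variance(values) if len(values) > 1 else float("nan")
    return center, variance

# Hypothetical raw signals: batch -> (reference signal, {variant: [replicates]})
batches = {
    "batch_1": (2.00, {"v1": [1.00, 1.10], "v2": [0.50, 0.54]}),
    "batch_2": (1.00, {"v1": [0.52, 0.50, 1.40]}),  # 1.40 mimics an outlier well
}

scaled = {}
for ref_signal, variants in batches.values():
    for name, reps in variants.items():
        scaled.setdefault(name, []).extend(scale_to_reference(r, ref_signal) for r in reps)

bo_inputs = {name: aggregate_replicates(vals) for name, vals in scaled.items()}
print(bo_inputs)
```

The median keeps the outlier well from shifting the central estimate for `v1`, while the inflated variance still signals to the model that this variant was measured less reliably. A robust spread measure such as the median absolute deviation would be a reasonable alternative.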

Integration with Bayesian Optimization: The processed data, represented as a distribution (mean and variance) for each antibody variant, directly informs the Gaussian Process (GP) surrogate model in BO. The GP's kernel function models the correlation between sequences, while the likelihood function incorporates the observed noise, for example by using each variant's estimated variance as its observation noise. A noise-aware acquisition function is then used to propose the next most informative sequence to test: either Expected Improvement (EI) with a plug-in incumbent (the best posterior mean rather than the best single noisy measurement) or Noisy EI. Both explicitly balance predicted performance against measurement uncertainty.
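How per-variant noise enters the GP can be shown with a small NumPy sketch. It assumes a zero-mean GP on centred responses, a Hamming-distance kernel chosen purely for illustration (not necessarily the kernel used in the case studies), and invented sequences, responses, and noise variances. The noise variances are simply added to the diagonal of the kernel matrix.

```python
import numpy as np

def hamming_kernel(seqs_a, seqs_b, gamma=0.5, signal_var=1.0):
    """k(s, s') = signal_var * exp(-gamma * HammingDistance(s, s'))."""
    K = np.empty((len(seqs_a), len(seqs_b)))
    for i, a in enumerate(seqs_a):
        for j, b in enumerate(seqs_b):
            dist = sum(x != y for x, y in zip(a, b))
            K[i, j] = signal_var * np.exp(-gamma * dist)
    return K

def gp_posterior(train_seqs, y, noise_var, test_seqs):
    """Zero-mean GP posterior with a separate noise variance per observation."""
    y = np.asarray(y, dtype=float)
    K = hamming_kernel(train_seqs, train_seqs) + np.diag(noise_var)
    K_s = hamming_kernel(test_seqs, train_seqs)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    mean = K_s @ alpha
    v = np.linalg.solve(L, K_s.T)
    var = np.diag(hamming_kernel(test_seqs, test_seqs)) - np.sum(v * v, axis=0)
    return mean, var

# Hypothetical data: identical centred responses, very different assay noise
train = ["ARDY", "GWSF"]
y_obs = [1.0, 1.0]
noise = [0.01, 1.0]                  # variances estimated from replicates (assumed)
candidates = train + ["ARDF"]        # two measured variants plus one untested neighbour
mean, var = gp_posterior(train, y_obs, noise, candidates)
print(mean.round(3), var.round(3))
```

Although both variants were observed at the same value, the posterior stays close to the precise measurement and shrinks the noisy one toward the prior mean, with a correspondingly larger posterior variance. Those posterior means and variances are what the acquisition function consumes.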

Visualization of Workflows and Relationships

Diagram Title: Bayesian Optimization Cycle with Noisy Data Integration

Diagram Title: Data Processing Pipeline from Noise to BO Input

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for High-Quality Assays

| Item | Function & Importance | Key Considerations for Noise Reduction |

|---|---|---|

| Bovine Serum Albumin (BSA), IgG-Free | Standard blocking agent to minimize non-specific binding in immunoassays. | Use high-quality, protease-free grade. Prepare fresh solutions or use commercially prepared, filter-sterilized stocks for consistency. |

| PBS/Tween-20 (PBST) Wash Buffer | Used for washing steps to remove unbound reagents. | Use a calibrated automated plate washer. Ensure consistent wash volume, soak time, and aspiration. Freshly prepare buffer to prevent microbial growth. |

| Reference Standard Antibody | A well-characterized antibody control run in every experiment. | Critical. Enables inter-experiment normalization. Must be aliquoted and stored at -80°C to prevent freeze-thaw degradation. |

| Low-Binding Microplates & Tips | Reduce surface adsorption of proteins, especially at low concentrations. | Essential for accurate dilution series. Use the same brand/type throughout a project. |

| Kinetic Assay Running Buffer (e.g., HBS-EP+) | Buffer for label-free biosensors (SPR, BLI). Provides a stable baseline. | Always degas and filter (0.22 µm) before use. Include a surfactant (P20) to reduce non-specific binding. Use the same lot for a kinetic series. |