Beyond Static Models: Navigating the Limitations of AI in Predicting Antibody Conformational Dynamics

This article critically examines the current capabilities and significant limitations of artificial intelligence (AI) in predicting conformational changes in antibodies, a crucial challenge in computational biology and rational drug design.


Abstract

This article critically examines the current capabilities and significant limitations of artificial intelligence (AI) in predicting conformational changes in antibodies, a crucial challenge in computational biology and rational drug design. We explore the fundamental biophysical principles that challenge static AI models, review the latest methodological advances attempting to capture antibody flexibility, and provide a troubleshooting guide for researchers encountering inaccuracies. A comparative analysis of leading AI tools against experimental benchmarks highlights persistent gaps. The synthesis offers researchers and drug developers a realistic framework for integrating AI predictions with complementary techniques, outlining future directions to improve the reliability of computational antibody engineering.

Why AI Struggles with Antibody Flexibility: Core Biophysical and Data Challenges

The Critical Role of Conformational Dynamics in Antibody Function and Affinity Maturation

Technical Support Center: Troubleshooting AI-Predicted Conformational States in Antibody Engineering

This support center addresses common experimental challenges when validating or utilizing AI/ML predictions for antibody conformational dynamics and affinity maturation. The content is framed within the thesis that while AI predictions accelerate hypothesis generation, their limitations—particularly in capturing rare, transient, or solvent-sensitive states—require rigorous experimental verification.

FAQs & Troubleshooting Guides

Q1: Our AI-predicted high-affinity antibody variant shows poor antigen binding in SPR/BLI assays. What could be wrong? A: This is a common disconnect between in silico and in vitro results. The AI model may have predicted a stable conformation that is not populated under physiological conditions or may have overlooked colloidal instability.

- Troubleshooting Steps:

- Check Stability: Use differential scanning calorimetry (DSC) or a thermal shift assay. A low Tm (e.g., <65°C) points to a stability problem rather than an intrinsic binding defect.

- Assess Aggregation: Perform size-exclusion chromatography (SEC) immediately after purification. Aggregates indicate non-native conformations.

- Probe Conformation: Use hydrogen-deuterium exchange mass spectrometry (HDX-MS) to compare the conformational landscape of your variant with a positive control. Differences in Fab dynamics, particularly in the CDRs, can explain the lost function.

- Root Cause in AI Limitation: Most AI models are trained on static crystal structures or simulations with simplified solvent models, missing aggregation-prone patches or the role of solvation entropy.

Q2: How do we experimentally validate a predicted rare conformational state involved in antigen recognition? A: AI can propose rare states, but capturing them requires specialized biophysics.

- Recommended Protocol: Double Electron-Electron Resonance (DEER) Spectroscopy

- Objective: Measure distance distributions between spin labels to probe conformational ensembles.

- Methodology:

- Introduce cysteine residues at specific sites in the Fv region (e.g., on adjacent CDR loops) using site-directed mutagenesis.

- Label with a methanethiosulfonate spin label (e.g., MTSSL).

- Purify and confirm labeling via mass spec.

- Acquire DEER data on the labeled antibody (with and without antigen) at cryogenic temperatures.

- Analyze distance distributions. A broad or multi-modal distribution indicates conformational heterogeneity, potentially confirming the predicted rare state's presence in the ensemble.
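
Once P(r) has been extracted from the dipolar evolution trace (for example by Tikhonov regularization in a package such as DeerLab), the population of each mode can be estimated by splitting the distribution at its local minima. The sketch below assumes P(r) is supplied on a uniform distance grid. The helper name `summarize_modes` and the 5% noise floor are illustrative choices, not a standard method.

```python
from typing import List, Tuple


def summarize_modes(r: List[float], p: List[float], min_frac: float = 0.05) -> List[Tuple[float, float]]:
    """Return (peak distance, population fraction) for each mode of P(r).

    Modes are split at interior local minima of P(r). Modes holding less
    than `min_frac` of the total area are dropped as likely noise
    (an illustrative threshold, not a standard).
    """
    if len(r) != len(p) or len(p) < 3:
        raise ValueError("r and p must have equal length >= 3")
    total = sum(p)
    cuts = [0]
    for i in range(1, len(p) - 1):
        if p[i] < p[i - 1] and p[i] <= p[i + 1]:
            cuts.append(i)  # a local minimum separates two modes
    cuts.append(len(p))
    modes = []
    for start, end in zip(cuts[:-1], cuts[1:]):
        segment = p[start:end]
        frac = sum(segment) / total
        if frac >= min_frac:
            peak = start + max(range(len(segment)), key=segment.__getitem__)
            modes.append((r[peak], frac))
    return modes
```

Two or more modes with substantial fractions are what a "multi-modal distribution" means in practice. Whether the minor mode matches the AI-predicted state still depends on how spin-label rotamers are modeled on the predicted structure.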

Q3: During affinity maturation, our library based on AI-flexibility predictions shows no improvement. What's the issue? A: The AI may have correctly identified flexible regions, but the library diversity may be confined to unfavorable chemical space, or the introduced mutations may disrupt the conformational sampling needed for binding.

- Troubleshooting:

- Analyze Library Sequences: Use next-generation sequencing (NGS) of the phage/yeast display library input. Check if the designed codon scheme (e.g., NNK) was correctly implemented and if diversity is sufficient.

- Test Conformational Rigidity: Run a limited-proteolysis assay. Incubate parental and selected clones with a low concentration of a broad-specificity protease (e.g., subtilisin). Increased cleavage suggests that the AI-suggested mutations inadvertently increased flexibility, destabilizing the binding-competent state.

- Focus on Interface Paratope Dynamics: The AI might have prioritized overall Fab dynamics. Use MD simulations (explicit solvent) on top candidate sequences to specifically analyze paratope conformational entropy before experimental testing.
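
For the codon-scheme check, the NGS reads at each randomized position can be summarized as below. This is a minimal sketch assuming reads are already aligned and the codon at one designed NNK position has been extracted. An ideal NNK position contains all 32 NNK codons at equal frequency, i.e., log2(32) = 5 bits of codon entropy.

```python
import math
from collections import Counter
from typing import Dict, List

NUCS = set("ACGT")


def nnk_report(codons: List[str]) -> Dict[str, float]:
    """Summarize NNK compliance and diversity for one randomized position."""
    codons = [c.upper() for c in codons if len(c) == 3 and set(c.upper()) <= NUCS]
    if not codons:
        raise ValueError("no valid codons")
    n = len(codons)
    # NNK: any base at positions 1-2, G or T at position 3
    compliant = sum(1 for c in codons if c[2] in "GT")
    counts = Counter(codons)
    entropy = -sum((k / n) * math.log2(k / n) for k in counts.values())
    return {
        "n_reads": float(n),
        "nnk_fraction": compliant / n,
        "unique_codons": float(len(counts)),
        "codon_entropy_bits": entropy,
        # 1.0 means all 32 NNK codons are equally represented
        "entropy_vs_ideal": entropy / math.log2(32),
    }
```

A low `nnk_fraction` points to a synthesis or cloning problem, while a compliant but low-entropy position points to bottlenecking during library construction.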

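Q4: AI suggests a conformational selection mechanism, but our ITC data show enthalpy-driven binding. How do we resolve this? A: These observations are not mutually exclusive. Conformational selection often incurs an entropic penalty, but a large favorable (negative) ΔH can dominate ΔG and offset an unfavorable (negative) ΔS, so enthalpy-driven binding does not rule out conformational selection. Direct measurement of dynamics is needed.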
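
The ITC values can be decomposed directly to check whether an entropic penalty is present even though binding is enthalpy-driven. This sketch uses the standard relations ΔG = RT ln KD (1 M standard state) and ΔG = ΔH − TΔS. The input values are illustrative, not measured data.

```python
import math

R_KCAL = 1.987204e-3  # gas constant, kcal/(mol*K)


def itc_decomposition(kd_molar: float, dh_kcal: float, temp_k: float = 298.15) -> dict:
    """Return dG, dH and -TdS (kcal/mol) from KD and the ITC enthalpy."""
    if kd_molar <= 0:
        raise ValueError("KD must be positive")
    dg = R_KCAL * temp_k * math.log(kd_molar)  # negative for KD < 1 M
    minus_t_ds = dg - dh_kcal                   # positive = unfavorable entropy
    return {"dG": dg, "dH": dh_kcal, "-TdS": minus_t_ds}


# Illustrative: a 1 nM binder with a strongly favorable enthalpy
print(itc_decomposition(1e-9, -20.0))
```

In this example ΔG is about −12.3 kcal/mol while −TΔS is about +7.7 kcal/mol. The binding is enthalpy-driven and still pays a sizeable entropic cost, which is compatible with selecting a rigidified state.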

- Validation Protocol: NMR Relaxation Dispersion

- Objective: Detect microsecond-to-millisecond timescale dynamics (typical for conformational selection) at atomic resolution.

- Methodology:

- Produce ¹⁵N-labeled antibody Fv fragment.

- Acquire ¹⁵N CPMG relaxation dispersion experiments at multiple magnetic fields.

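- Fit the data to chemical exchange models. Non-zero exchange contributions (Rex) in the apo antibody indicate a sparsely populated, exchanging state. If the fitted chemical shift differences (Δω) match the shift changes observed on antigen binding, this supports a conformational selection model in which the antigen selects a pre-existing, minor state from the antibody's ensemble. Changes in Rex upon antigen addition show that binding modulates dynamics, but they do not by themselves distinguish the two mechanisms.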
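
In the fast-exchange limit, CPMG dispersion curves are commonly described by the Luz-Meiboom expression, R2,eff = R2,0 + (pA·pB·Δω²/kex)·[1 − (4νCPMG/kex)·tanh(kex/(4νCPMG))]. The sketch below evaluates this model, together with a Pearson correlation for comparing fitted |Δω| values with apo-to-bound chemical shift changes (in the same units), a common test for a pre-existing bound-like state. It is not a fitting package: real analyses fit data from several fields jointly, and CPMG alone yields only the magnitude of Δω.

```python
import math
from typing import Sequence


def r2_eff_fast(nu_cpmg: float, r20: float, pa: float, dw: float, kex: float) -> float:
    """Luz-Meiboom fast-exchange R2,eff (1/s) at CPMG frequency nu_cpmg (Hz).

    pa: major-state population; dw: shift difference (rad/s); kex: exchange rate (1/s).
    """
    pb = 1.0 - pa
    rex = pa * pb * dw ** 2 / kex  # full exchange contribution as nu_cpmg -> 0
    x = kex / (4.0 * nu_cpmg)
    return r20 + rex * (1.0 - math.tanh(x) / x)


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation, e.g. fitted |dw| vs. |apo -> bound shift change|."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(xs, ys))
    vx = sum((a - mx) ** 2 for a in xs)
    vy = sum((b - my) ** 2 for b in ys)
    return cov / math.sqrt(vx * vy)
```

At low νCPMG the curve approaches R2,0 + Rex, and at high νCPMG it approaches R2,0. A flat dispersion profile therefore means no detectable µs-ms exchange for that residue.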

Table 1: Biophysical Techniques for Validating AI Predictions on Antibody Dynamics

| Technique | Measured Parameter | Timescale Resolution | Throughput | Key Insight for AI Validation |
|---|---|---|---|---|
| HDX-MS | Solvent Accessibility & Dynamics | Seconds to Hours | Medium | Maps regions where AI-predicted & experimental flexibility differ. |
| DEER/EPR | Distance Distributions | Ensemble snapshot (frozen sample) | Low | Quantifies populations of predicted conformations in ensemble. |
| NMR Relaxation | Bond Vector Dynamics | Picoseconds to Seconds | Very Low | Provides atomic-level, timescale-specific data to benchmark MD/AI predictions. |
| MD Simulations | Atomic Trajectories | Femtoseconds to Milliseconds | Computational | Direct comparison to AI-predicted ensembles; use explicit solvent for validation. |
| SR-FTIR | Secondary Structure Kinetics | Milliseconds to Seconds | Medium | Tracks real-time folding/conformational changes post-AI mutation. |

Table 2: Troubleshooting Correlation: AI Prediction Errors vs. Experimental Outcomes

| AI Prediction Error Type | Likely Experimental Outcome | Confirmatory Experiment |
|---|---|---|
| Over-stabilized CDR loop conformation | Loss of antigen binding (increased KD) | HDX-MS (shows reduced flexibility in CDRs) |
| Overestimation of Fab stability | Low expression yield, aggregation | SEC-MALS, Thermal Shift Assay |
| Mis-predicted rare state energy | No binding improvement in maturation | DEER Spectroscopy, NMR |
| Neglect of solvation effects | Discrepancy in ΔG (predicted vs. ITC) | SAXS/SANS compared against explicit-solvent profile calculations |

Experimental Protocols

Protocol 1: HDX-MS to Probe AI-Predicted Conformational Changes

- Objective: Validate regions of predicted flexibility or rigidity changes upon antigen binding or after affinity maturation.

- Materials: Purified antibody or antibody fragment (isotopic labeling is not required for HDX-MS), D₂O-based labeling buffer, quench buffer (pH 2.3, 0°C), immobilized pepsin column, UPLC, high-resolution mass spectrometer.

- Steps:

- Dilution & Labeling: Dilute antibody (with/without antigen) into D₂O buffer. Incubate at multiple timepoints (e.g., 10s, 1min, 10min, 1hr).

- Quench: Transfer an aliquot to ice-cold, low-pH quench buffer to slow exchange and minimize back-exchange.

- Digestion & Separation: Rapidly pass over immobilized pepsin column, elute peptides onto a UPLC trap column.

- MS Analysis: Elute peptides into high-resolution MS. Identify peptides via tandem MS/MS.

- Data Processing: Calculate deuterium uptake for each peptide over time. Compare uptake curves between conditions to identify regions with altered dynamics.
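
The data-processing step reduces to simple arithmetic on peptide centroid masses. This is a minimal sketch that assumes centroid masses have already been extracted for an undeuterated control, each labeling time point, and a maximally deuterated control used to correct for back-exchange (the last control is an assumption, not listed in the protocol above). Peptide identifiers are hypothetical.

```python
from typing import Dict


def relative_uptake(mt: float, m0: float) -> float:
    """Absolute deuterium uptake in Da (no back-exchange correction)."""
    return mt - m0


def fractional_uptake(mt: float, m0: float, mmax: float) -> float:
    """Back-exchange-corrected fraction of exchangeable amides labeled (0-1)."""
    if mmax <= m0:
        raise ValueError("maximally deuterated control must be heavier than m0")
    return (mt - m0) / (mmax - m0)


def differential_uptake(apo: Dict[str, float], bound: Dict[str, float]) -> Dict[str, float]:
    """Bound minus apo uptake per peptide; negative values = protection on binding."""
    return {pid: bound[pid] - apo[pid] for pid in apo if pid in bound}
```

Comparing these values across time points gives the uptake curves. Peptides with consistently negative differences are candidate binding or stabilization sites to compare with the AI-predicted rigidity changes.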

Protocol 2: Computational-Experimental Hybrid Workflow for Affinity Maturation

- Objective: Integrate AI predictions with experimental screening to overcome AI's sampling limitations.

- Materials: Parental antibody structure, Rosetta and/or MD simulation software, yeast display library, FACS sorter, NGS platform.

- Steps:

- AI-Driven Design: Use a method like RFdiffusion or AlphaFold2 with conditioning to generate a diverse set of predicted stable CDR-H3 conformations.

- Library Construction: Design oligos encoding the top 100-1000 variant sequences for synthesis and cloning into a yeast display vector.

- Conformation-Aware Sorting: Use conformation-sensitive dyes (e.g., ANS) or competitive binding with a conformational probe antibody during FACS to select for both stability and antigen binding.

- Deep Mutational Scanning: Sequence output pools via NGS to identify enriched mutations. Cross-reference with AI-generated positional entropy scores.
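
Enrichment from the NGS counts, and its cross-referencing with AI positional entropy, can be sketched as a log2 ratio of variant frequencies between output and input pools, followed by a Spearman rank correlation against per-position scores. The pseudocount of 0.5 and the function names are assumptions for illustration.

```python
import math
from typing import Dict, List, Sequence


def log2_enrichment(counts_in: Dict[str, int], counts_out: Dict[str, int],
                    pseudo: float = 0.5) -> Dict[str, float]:
    """log2(freq_out / freq_in) per variant, with a pseudocount for dropouts."""
    tot_in = sum(counts_in.values())
    tot_out = sum(counts_out.values())
    out = {}
    for var, c_in in counts_in.items():
        f_in = (c_in + pseudo) / tot_in
        f_out = (counts_out.get(var, 0) + pseudo) / tot_out
        out[var] = math.log2(f_out / f_in)
    return out


def _ranks(xs: Sequence[float]) -> List[float]:
    """1-based ranks, averaging over ties."""
    order = sorted(range(len(xs)), key=lambda i: xs[i])
    ranks = [0.0] * len(xs)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and xs[order[j + 1]] == xs[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2.0 + 1.0
        i = j + 1
    return ranks


def spearman(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Spearman correlation, e.g. mean enrichment per position vs. AI entropy."""
    rx, ry = _ranks(xs), _ranks(ys)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    return cov / math.sqrt(vx * vy)
```

A rank correlation is used because enrichment and entropy scores are on unrelated scales, and only their ordering is expected to agree.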

Visualizations

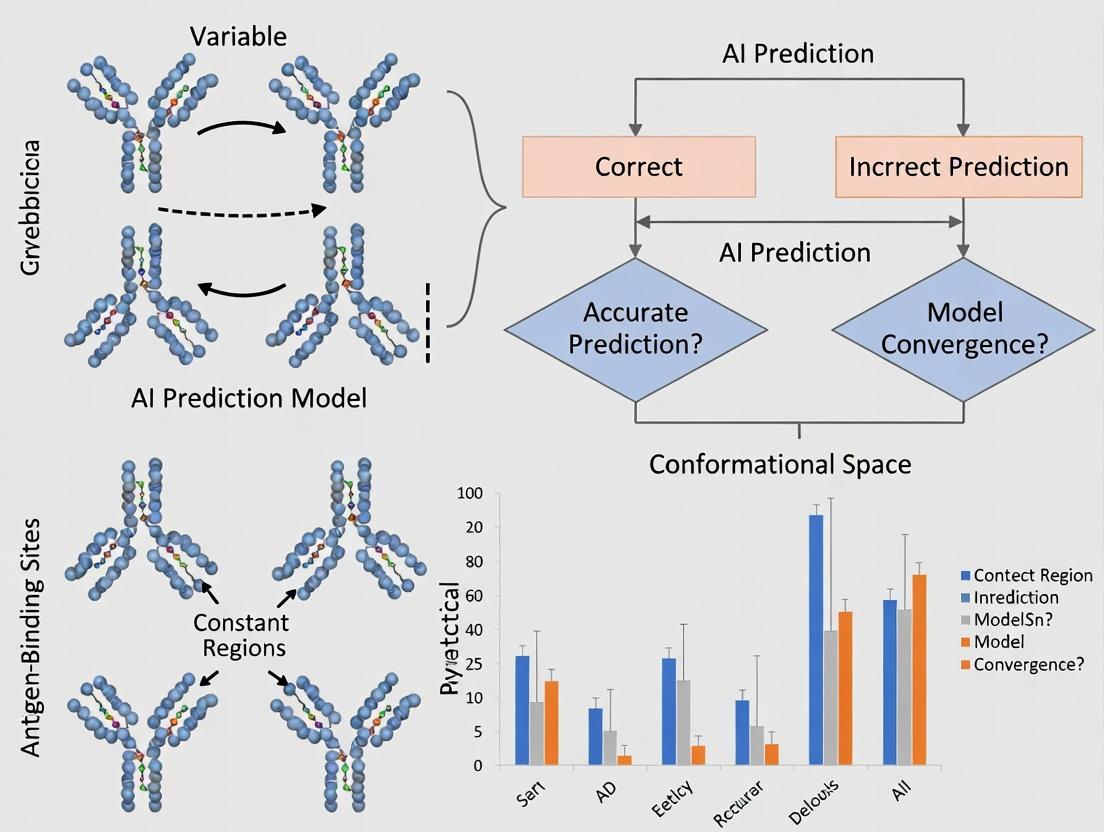

Title: AI Prediction Validation and Refinement Workflow

Title: Conformational Selection Binding Mechanism

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Conformational Dynamics Studies

| Item | Function & Rationale |
|---|---|
| Site-Directed Mutagenesis Kit | To introduce cysteine residues for spin/fluorescence labeling or to test AI-proposed point mutations. |
| Methanethiosulfonate (MTSSL) Spin Label | The standard, minimally perturbing label for DEER/EPR spectroscopy to measure distances. |
| Deuterium Oxide (D₂O) - 99.9% | Essential labeling reagent for HDX-MS experiments to measure backbone amide exchange rates. |
| Immobilized Pepsin Column | Enables rapid, reproducible digestion for HDX-MS under quench conditions (low pH, 0°C). |
| Conformation-Sensitive Dyes (e.g., ANS, Sypro Orange) | Used in thermal shift assays or FACS to detect aggregation or stability changes in antibody variants. |
| ¹⁵N/¹³C Labeling Growth Media | For production of isotopically labeled antibody fragments required for detailed NMR dynamics studies. |
| Biotinylated Antigen | Critical for efficient pulldown during selection in display technologies and for BLI/SPR kinetics. |
| Phosphatase/Protease Inhibitor Cocktail | Added during purification to maintain antibody integrity and native conformation for accurate assays. |

Troubleshooting Guide & FAQs

Q1: Our molecular docking simulation fails to predict the correct binding pose for a flexible antibody CDR loop. The rigid-body docking algorithm places the ligand incorrectly. What is the likely cause and solution?

A: This is a classic symptom of an induced fit mechanism, where the antibody's complementarity-determining region (CDR) loop undergoes a significant conformational change upon ligand binding. Rigid-body docking assumes a pre-formed, static binding site (lock-and-key), which fails here.

Solution Protocol:

- Switch to Flexible Docking or Ensemble Docking:

- Flexible Docking: Use software like Rosetta FlexPepDock (for peptides) or Schrödinger's Induced Fit Docking module. These allow specific CDR loops to be flexible during the simulation.

- Ensemble Docking: Generate an ensemble of antibody conformations from molecular dynamics (MD) simulations or using a conformational sampling tool. Dock the ligand against this ensemble of receptor structures.

- Experimental Workflow for Validation:

- Express and purify the antibody Fab fragment.

- Perform X-ray crystallography or cryo-EM on both the apo (unbound) and holo (bound) structures.

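- Compare the root-mean-square deviation (RMSD) of the CDR loops after superimposing the framework regions. An RMSD > 2 Å indicates a substantial binding-associated rearrangement consistent with induced fit; distinguishing this from selection of a pre-existing minor state requires ensemble data (see Q3).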
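
Because the loop RMSD is meant to capture movement relative to the rest of the Fab, the apo and holo structures should first be superimposed on framework atoms, and the loop RMSD then computed without re-fitting. The sketch below assumes that superposition has already been done (e.g., in PyMOL or ChimeraX) and that loop atoms (e.g., Cα) are matched one-to-one.

```python
import math
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]


def rmsd_no_fit(a: Sequence[Coord], b: Sequence[Coord]) -> float:
    """RMSD between matched atoms of pre-superimposed structures (no re-fitting)."""
    if len(a) != len(b) or not a:
        raise ValueError("coordinate lists must be non-empty and of equal length")
    sq = sum((x1 - x2) ** 2 + (y1 - y2) ** 2 + (z1 - z2) ** 2
             for (x1, y1, z1), (x2, y2, z2) in zip(a, b))
    return math.sqrt(sq / len(a))
```

Re-fitting on the loop itself would hide rigid-body loop displacements, which is why the superposition is done on the framework only.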

Q2: Our AI/ML model, trained on existing antibody-antigen structures, performs poorly when predicting the conformation of a novel antibody with a long CDR H3 loop. Why?

A: AI models for structure prediction are often trained on databases of solved structures, which are biased toward stable, low-energy states and may underrepresent the rare conformational states sampled by highly flexible loops. The model is likely predicting an average, low-energy state but missing the intrinsic motion dynamics of the loop prior to binding.

Solution Protocol:

- Augment Training Data with Dynamics:

- Run long-timescale Molecular Dynamics (MD) simulations (µs-scale) on the apo antibody to sample its intrinsic conformational landscape.

- Cluster the MD trajectories to identify representative conformational states.

- Use these states as additional input structures for model training or refinement.

- Integrate Experimental Ensemble Data:

- Use Small-Angle X-Ray Scattering (SAXS) to obtain solution-phase scattering data of the apo antibody.

- Compute SAXS profiles from your MD-derived ensemble and refine the ensemble weights to match the experimental profile. This yields an experimentally constrained conformational ensemble.
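
A minimal version of the ensemble-weight refinement is a brute-force search over population weights, with the scale factor between calculated and experimental intensities solved analytically for each candidate. This sketch assumes a handful of cluster representatives whose SAXS profiles were computed on the experimental q grid. Practical tools use maximum-entropy or Bayesian reweighting rather than a grid.

```python
from typing import Iterator, List, Sequence, Tuple


def _simplex(k: int, steps: int) -> Iterator[Tuple[int, ...]]:
    """All k-tuples of non-negative integers summing to `steps`."""
    if k == 1:
        yield (steps,)
        return
    for i in range(steps + 1):
        for rest in _simplex(k - 1, steps - i):
            yield (i,) + rest


def chi2_scaled(i_exp: Sequence[float], sigma: Sequence[float], i_calc: Sequence[float]) -> float:
    """Reduced chi^2 after applying the least-squares optimal scale factor."""
    num = sum(e * c / s ** 2 for e, c, s in zip(i_exp, i_calc, sigma))
    den = sum(c * c / s ** 2 for c, s in zip(i_calc, sigma))
    scale = num / den
    return sum(((e - scale * c) / s) ** 2 for e, c, s in zip(i_exp, i_calc, sigma)) / len(i_exp)


def fit_weights(i_exp: Sequence[float], sigma: Sequence[float],
                profiles: List[Sequence[float]], steps: int = 20) -> Tuple[List[float], float]:
    """Grid search over weights (resolution 1/steps) minimizing chi^2."""
    best_chi, best_w = float("inf"), []
    for combo in _simplex(len(profiles), steps):
        w = [n / steps for n in combo]
        mix = [sum(wk * prof[q] for wk, prof in zip(w, profiles)) for q in range(len(i_exp))]
        chi = chi2_scaled(i_exp, sigma, mix)
        if chi < best_chi:
            best_chi, best_w = chi, w
    return best_w, best_chi
```

The number of grid points grows combinatorially with the number of states, so this approach is only practical for a few representatives.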

Q3: How can we quantitatively distinguish between intrinsic fit and induced fit mechanisms in our study?

A: The distinction lies in comparing the conformational populations before and after binding. Use the following experimental data table to guide analysis.

Table 1: Quantitative Distinction Between Intrinsic and Induced Fit Mechanisms

| Metric | Intrinsic Fit (Conformational Selection) | Induced Fit | Key Experimental Method |
|---|---|---|---|
| Apo State Conformational Diversity | High. The bound-like conformation is a minor but pre-existing population. | Low. The bound conformation is not significantly populated in the apo state. | MD Simulation, NMR Relaxation Dispersion |
| ΔRMSD (Bound vs. Apo) | Typically low to moderate (< 2.5 Å). The antibody selects a pre-existing state. | Can be very high (> 3 Å), especially in CDR loops. Ligand induces a new state. | X-ray Crystallography, Cryo-EM |
| Binding Kinetics (k_on) | Often slower, limited by the population of the competent state. | Can be faster, not limited by a rare pre-existing state. | Surface Plasmon Resonance (SPR) |
| NMR Chemical Shift Perturbation | Shifts occur primarily for residues in the pre-organized binding site. | Widespread, allosteric shifts observed as the structure rearranges. | NMR Spectroscopy |

Experimental Protocol for NMR-Based Distinction:

- Isotopically label ([¹⁵N, ¹³C]) the antibody Fab fragment.

- Collect 2D [¹⁵N,¹H]-HSQC NMR spectra of the apo Fab and the Fab-ligand complex.

- Calculate chemical shift perturbations (CSPs) for each backbone amide.

- Analyze the pattern: Intrinsic Fit shows CSPs localized to the binding interface. Induced Fit shows widespread CSPs across multiple secondary structure elements, indicating global rearrangement.
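
The CSP calculation and pattern analysis can be sketched as follows. The combined perturbation Δδ = sqrt(ΔδH² + (α·ΔδN)²) with α = 0.14 is a commonly used weighting, and the "mean + 1 SD" significance cutoff is a convention; both are assumptions to adapt to your data.

```python
import math
import statistics
from typing import Dict, Set, Tuple


def combined_csp(apo: Dict[int, Tuple[float, float]], bound: Dict[int, Tuple[float, float]],
                 alpha: float = 0.14) -> Dict[int, float]:
    """apo/bound map residue number -> (1H shift, 15N shift) in ppm."""
    out = {}
    for res in apo.keys() & bound.keys():
        dh = bound[res][0] - apo[res][0]
        dn = bound[res][1] - apo[res][1]
        out[res] = math.sqrt(dh ** 2 + (alpha * dn) ** 2)
    return out


def fraction_outside_interface(csp: Dict[int, float], interface: Set[int]) -> float:
    """Fraction of significantly perturbed residues lying outside the interface.

    Near 0: perturbations localized to the interface (intrinsic-fit-like).
    Large: widespread perturbations (induced-fit-like rearrangement).
    """
    vals = list(csp.values())
    cutoff = statistics.mean(vals) + statistics.pstdev(vals)
    hits = [r for r, v in csp.items() if v > cutoff]
    if not hits:
        return 0.0
    return sum(1 for r in hits if r not in interface) / len(hits)
```

The interface residue set would typically come from the complex structure or from the AI-predicted paratope.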

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Material | Function in Studying Conformational Change |
|---|---|
| Fab Fragment Expression System (e.g., mammalian HEK293 or insect cell) | Produces the antigen-binding fragment of the antibody without the Fc region, ideal for crystallography, cryo-EM, and biophysical assays. |
| Size-Exclusion Chromatography (SEC) Column (e.g., Superdex 200 Increase) | Purifies the antibody/Fab and separates monomers from aggregates, ensuring sample homogeneity for structural studies. |
| Crystallization Screen Kits (e.g., JCSG+, Morpheus) | Contains diverse chemical conditions to empirically identify conditions for growing protein crystals of apo and bound states. |
| Biacore Series S Sensor Chip CM5 | Gold-standard surface for immobilizing antibodies/Fabs for Surface Plasmon Resonance (SPR) to measure binding kinetics and affinity. |
| Deuterated Media & Isotopically Labeled Nutrients | Essential for producing [¹⁵N, ¹³C]-labeled proteins for multi-dimensional NMR spectroscopy studies of dynamics. |

Visualizations

Diagram 1: AI Prediction Workflow & Limitations for Antibody Motions

Diagram 2: Experimental Flow to Determine Fit Mechanism

Troubleshooting Guides & FAQs

Q1: Our AI model, trained on static PDB structures, fails to predict the conformational change of an antibody Fab upon antigen binding. The predicted binding energy is highly inaccurate. What went wrong? A: This is a classic symptom of the static data bottleneck. Your training set likely lacks examples of the intermediate or induced-fit states. The model has learned features specific to the unbound state or to a narrow subset of conformations. To troubleshoot:

- Validate: Perform molecular dynamics (MD) simulations (see Protocol A) starting from your predicted complex and the known unbound structure. Compare the RMSD trajectories.

- Check Data: Audit your training set for conformational diversity. Calculate the RMSD distribution within clusters of similar antibodies.

- Solution: Integrate data from accelerated MD (aMD) or conformational sampling experiments into your training pipeline.

Q2: When fine-tuning a pre-trained protein language model for antibody affinity prediction, performance plateaus. We suspect limited dynamic information is the cause. How can we confirm and address this? A: The plateau likely arises from the model's inability to encode allosteric effects or flexibility. Confirm by:

- Ablation Test: Train two models: one on static structures only, and one augmented with ensemble data (e.g., from NMR or multi-temperature crystallography). Compare performance on a hold-out set containing known flexible binders.

- Analyze Attention: Examine the model's attention maps. Are they focused solely on the paratope, or do they highlight distal regions known to be allosteric from biophysical studies?

- Solution: Use fine-tuning data enriched with metrics of dynamics (see Table 1).

Q3: Our ensemble docking using static PDB snapshots yields inconsistent poses, and the top-ranked pose is biologically implausible. How should we refine the protocol? A: Inconsistent poses indicate your ensemble may not represent functionally relevant states. Refine using:

- Cluster Analysis: Cluster your docking results by binding-site ligand RMSD. If the clusters are roughly equally populated with no clear consensus, your input ensemble may be too diverse or may lack the relevant states.

- Experimental Priors: Use hydrogen-deuterium exchange mass spectrometry (HDX-MS) data (see Protocol B) to constrain the regions allowed to move during docking.

- Protocol Refinement: Follow the updated ensemble generation protocol (Protocol C) that prioritizes energy landscape sampling over random dispersion.
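
The consensus check in the cluster analysis can be quantified once poses carry cluster labels. The sketch reports the fraction of poses in the largest cluster and a Shannon entropy normalized to lie between 0 (a single cluster) and 1 (evenly populated clusters). Any cutoff for "no clear consensus" is left to the user.

```python
import math
from collections import Counter
from typing import Hashable, List, Tuple


def cluster_consensus(labels: List[Hashable]) -> Tuple[float, float]:
    """Return (fraction in largest cluster, normalized Shannon entropy)."""
    if not labels:
        raise ValueError("no poses")
    counts = Counter(labels)
    n = len(labels)
    top_frac = max(counts.values()) / n
    if len(counts) == 1:
        return top_frac, 0.0
    h = -sum((c / n) * math.log(c / n) for c in counts.values())
    return top_frac, h / math.log(len(counts))
```

A normalized entropy close to 1 corresponds to the "equally populated, no consensus" situation described above.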

Experimental Protocols

Protocol A: Short Molecular Dynamics Simulation for Model Validation

- Objective: Generate a basic trajectory to assess the stability of a predicted antibody-antigen complex.

- System Preparation: Use the `pdbfixer` tool to add missing residues/hydrogens to your PDB file. Solvate in an explicit water box (e.g., TIP3P) with 10 Å padding. Add ions to neutralize.

- Energy Minimization: Using AMBER or CHARMM force fields, perform 5000 steps of steepest descent minimization to remove steric clashes.

- Equilibration: Heat the system to 300 K over 100 ps under NVT conditions, then equilibrate density for 100 ps under NPT (1 atm).

- Production Run: Run a 50-100 ns simulation under NPT conditions, saving frames every 10 ps.

- Analysis: Calculate Cα root-mean-square deviation (RMSD) and fluctuation (RMSF) using `MDTraj` or `cpptraj`.
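
For reference, RMSF is the root-mean-square deviation of each atom from its time-averaged position. The pure-Python sketch below assumes the frames are already aligned to a reference (for example on framework Cα atoms). On real trajectories, the built-in routines in MDTraj or cpptraj are the practical choice.

```python
import math
from typing import List, Sequence, Tuple

Coord = Tuple[float, float, float]


def rmsf(frames: Sequence[Sequence[Coord]]) -> List[float]:
    """Per-atom RMSF from pre-aligned frames (frames x atoms x xyz)."""
    n_frames = len(frames)
    if n_frames == 0:
        raise ValueError("no frames")
    out = []
    for a in range(len(frames[0])):
        mean = [sum(f[a][d] for f in frames) / n_frames for d in range(3)]
        msd = sum(sum((f[a][d] - mean[d]) ** 2 for d in range(3)) for f in frames) / n_frames
        out.append(math.sqrt(msd))
    return out
```

High-RMSF stretches in the CDRs of the predicted complex are the regions to compare against AI confidence scores and HDX-MS uptake.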

Protocol B: Hydrogen-Deuterium Exchange Mass Spectrometry (HDX-MS) for Conformational Insight

- Objective: Identify regions of increased flexibility or conformational change upon antigen binding.

- Labeling: Dilute antibody (alone and in complex) into D₂O-based buffer. Incubate for five time points (e.g., 10s, 1min, 10min, 1h, 4h) at 25°C.

- Quench & Digestion: Quench by lowering pH to 2.5 and temperature to 0°C. Pass over an immobilized pepsin column for online digestion.

- LC-MS/MS Analysis: Separate peptides via reverse-phase UPLC (at 0°C) and analyze with a high-resolution mass spectrometer.

- Data Processing: Calculate deuterium uptake for each peptide at each time point. Significant differences in uptake between apo and complex states identify dynamic regions involved in binding.
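
Deciding which uptake differences are "significant" requires an explicit criterion. The sketch below applies a simple two-part rule to replicate measurements: the absolute mean difference must exceed a fixed threshold and also a multiple of the pooled replicate standard deviation. The 0.5 Da threshold and the factor of 3 are illustrative assumptions. Published HDX-MS studies use a variety of statistical criteria, so adapt this to your lab's standard.

```python
import statistics
from typing import Dict, List


def significant_peptides(apo: Dict[str, List[float]], bound: Dict[str, List[float]],
                         min_da: float = 0.5, k: float = 3.0) -> Dict[str, float]:
    """Peptides whose bound-minus-apo uptake (Da) passes both criteria.

    Each list holds replicate uptake values (at least two) for one time point.
    """
    hits = {}
    for pid in apo.keys() & bound.keys():
        a, b = apo[pid], bound[pid]
        diff = statistics.mean(b) - statistics.mean(a)
        pooled_sd = ((statistics.variance(a) + statistics.variance(b)) / 2) ** 0.5
        if abs(diff) > min_da and abs(diff) > k * pooled_sd:
            hits[pid] = diff
    return hits
```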

Protocol C: Generating a Relevance-Weighted Conformational Ensemble

- Objective: Create an ensemble for docking that is biased towards pharmacologically relevant states.

- Seed Collection: Gather all PDB structures for the antibody (or homology models), and structures of related antibodies.

- Normal Mode Analysis (NMA): Use `ProDy` to compute low-frequency, collective modes of motion from a representative structure.

- Sampling: Displace along the first three low-frequency modes (both directions) to generate a coarse ensemble.

- Filtration & Clustering: Relax each displaced structure with brief MD (see Protocol A, steps 1-3). Cluster based on CDR loop dihedrals. Weight clusters by their relative free energy estimated from the MD ensemble or by their overlap with experimental HDX data.
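
Clustering on CDR loop dihedrals needs an angular distance that wraps at ±180°. A minimal sketch follows. It uses a greedy leader algorithm and converts cluster populations to relative free energies with ΔG = −kT ln(p/p_max). The 30° cutoff, the leader algorithm, and kT ≈ 0.596 kcal/mol (about 300 K) are assumptions. The free energy estimate is meaningful only for unbiased, converged sampling.

```python
import math
from typing import List, Sequence, Tuple

Dihedrals = Sequence[Tuple[float, float]]  # (phi, psi) in degrees per loop residue


def angle_diff(a: float, b: float) -> float:
    """Smallest signed difference a - b in degrees, in [-180, 180)."""
    return (a - b + 180.0) % 360.0 - 180.0


def dihedral_distance(x: Dihedrals, y: Dihedrals) -> float:
    """RMS of wrapped phi/psi differences over the loop."""
    sq = [angle_diff(p1, p2) ** 2 + angle_diff(s1, s2) ** 2
          for (p1, s1), (p2, s2) in zip(x, y)]
    return math.sqrt(sum(sq) / (2 * len(sq)))


def leader_cluster(confs: List[Dihedrals], cutoff: float = 30.0) -> List[List[int]]:
    """Assign each conformation to the first cluster leader within `cutoff`."""
    leaders: List[int] = []
    clusters: List[List[int]] = []
    for i, c in enumerate(confs):
        for leader_idx, members in zip(leaders, clusters):
            if dihedral_distance(c, confs[leader_idx]) <= cutoff:
                members.append(i)
                break
        else:
            leaders.append(i)
            clusters.append([i])
    return clusters


def cluster_free_energies(clusters: List[List[int]], kt: float = 0.596) -> List[float]:
    """Relative free energy (kcal/mol) of each cluster from its population."""
    n_max = max(len(c) for c in clusters)
    return [-kt * math.log(len(c) / n_max) for c in clusters]
```

The wrap-around matters for loop residues near ±180°, where a naive difference would place nearly identical conformations far apart.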

Data Presentation

Table 1: Comparative Performance of AI Models Trained on Static vs. Dynamic-Enhanced Data

| Model Architecture | Training Data Source | Affinity Prediction MAE (kcal/mol) | Conformational Change Accuracy (Recall) | Training Data Volume (Structures) |
|---|---|---|---|---|
| 3D CNN | PDB Static Structures Only | 2.1 ± 0.3 | 0.22 | ~10,000 |
| GNN | PDB + MD Simulation Snapshots | 1.5 ± 0.2 | 0.58 | ~1,000 + 100 MD Trajs |
| Transformer | PDB + NMR Ensemble Data | 1.3 ± 0.2 | 0.71 | ~5,000 + 50 NMR Ensembles |
| Equivariant GNN | PDB + aMD Frames & HDX-MS metrics | 0.9 ± 0.1 | 0.85 | ~2,000 + 20 aMD Trajs + HDX |

Table 2: Resource Requirements for Key Conformational Sampling Methods

| Method | Typical Temporal Resolution | Typical Spatial Resolution | Computational/Experimental Cost | Key Output for AI Training |
|---|---|---|---|---|
| X-ray Crystallography | Static Snapshot | Atomic (1-2 Å) | High (Experimental) | Single, low-energy conformation |
| NMR Spectroscopy | Picoseconds to milliseconds | Atomic (Backbone) | Very High (Experimental) | Ensemble of solution-state conformations |
| Molecular Dynamics (MD) | Femtoseconds to microseconds | Atomic | Extreme (Computational) | High-resolution trajectory of motion |
| Hydrogen-Deuterium Exchange (HDX) | Milliseconds to hours | Peptide Level (4-20 residues) | Medium (Experimental) | Solvent accessibility/flexibility kinetics |

Visualizations

Diagram 1: Static vs Dynamic Data AI Training Pipeline

Diagram 2: Conformational Ensemble Generation Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Relevance to Dynamic Predictions |
|---|---|
| RosettaAntibody | Software suite for antibody homology modeling and design. Its SnugDock protocol docks antibody-antigen pairs while sampling limited CDR loop flexibility. |
| AMBER/CHARMM Force Fields | Parameter sets for MD simulations. Critical for generating physically accurate conformational ensembles from static starting points. |
| ProDy Python API | Tool for protein dynamics analysis, including NMA and ensemble comparison. Used to generate initial conformational samples. |
| HDX-MS Kit (Commercial) | Standardized buffers and columns for reproducible hydrogen-deuterium exchange experiments, providing experimental constraints on dynamics. |
| AlphaFold2 (Multimer) + MD | Use AF2 for initial structure prediction, then feed output as a seed for extensive MD simulation to explore the conformational landscape. |
| Conda/Mamba Environment | For reproducible management of often-incompatible computational chemistry and machine learning software packages. |
| GPU Cluster Access | Essential for running high-throughput MD simulations or training large, dynamics-aware AI models within a practical timeframe. |
| PyMOL/ChimeraX (with trajectory support) | Visualization software capable of loading and analyzing trajectories, essential for interpreting simulation and ensemble data. |

Technical Support Center: Troubleshooting AI-Driven Conformational Sampling

FAQ 1: The AI model consistently predicts the same dominant conformation and fails to sample rare states. How can I improve sampling diversity? Answer: This is a classic sign of an over-regularized or insufficiently trained model. Implement the following protocol:

- Protocol: Enhanced Sampling via Adversarial Training.

- Train your generative model (e.g., a Variational Autoencoder or Normalizing Flow) with an adversarial component that discriminates between generated and reference rare conformations (e.g., from sparse cryo-EM data or long-timescale MD snippets).

- Loss function: `L_total = L_reconstruction + λ * L_adversarial`, where λ is a weighting factor.

- Explicitly include metadynamics or adaptive sampling data from MD simulations in your training set to bias the model towards higher-energy regions.

- Check: Verify your training data includes heterogeneous structural data (X-ray, cryo-EM, NMR ensembles) and is not biased towards high-resolution, static crystal structures.
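
Writing the combined objective out with plain numbers makes the role of λ concrete. This sketch assumes a mean-squared-error reconstruction term and the common non-saturating generator loss, −mean(log D(x_gen)), where D(x_gen) are the discriminator's probabilities that generated conformations resemble reference rare states. In a real model these quantities would be tensors in a framework such as PyTorch or JAX.

```python
import math
from typing import Sequence


def reconstruction_mse(pred: Sequence[float], target: Sequence[float]) -> float:
    """Mean squared error over (flattened) coordinates."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


def generator_adversarial(d_gen: Sequence[float], eps: float = 1e-7) -> float:
    """Non-saturating generator loss; probabilities are clamped to avoid log(0)."""
    return -sum(math.log(min(max(d, eps), 1.0)) for d in d_gen) / len(d_gen)


def total_loss(pred: Sequence[float], target: Sequence[float],
               d_gen: Sequence[float], lam: float = 0.1) -> float:
    """L_total = L_reconstruction + lambda * L_adversarial."""
    return reconstruction_mse(pred, target) + lam * generator_adversarial(d_gen)
```

A larger λ pushes the generator harder toward the reference rare states at the cost of reconstruction fidelity. In practice λ is tuned by monitoring both terms.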

FAQ 2: How do I validate that a predicted rare conformation is biophysically plausible and not an artifact of the AI model? Answer: AI predictions are hypotheses and require orthogonal experimental validation.

- Protocol: Computational Cross-Validation Pipeline.

- Step 1: Use the AI-predicted conformation as a starting point for short, all-atom Molecular Dynamics (MD) simulations in explicit solvent (≥ 100 ns). Stability assessment is key.

- Step 2: Perform in-silico mutagenesis or alanine scanning on the predicted conformation. If the predicted interface or allosteric network is functionally critical, mutating its key residues in silico should measurably weaken the predicted binding affinity.

- Step 3: Design a Disulfide Trapping or FRET experiment based on the predicted conformation (see Toolkit).

- Check: The predicted conformation should have no steric clashes, reasonable bond geometries, and a free energy score (from MD or a scoring function) within a plausible range of the native state.
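
The steric-clash part of this check can be sketched as a pairwise distance scan. The sketch assumes heavy atoms only and a 2.5 Å cutoff. It skips pairs in the same or sequence-adjacent residues, and disulfide-linked residue pairs must be passed in explicitly. Validation tools such as MolProbity use more detailed van der Waals overlap criteria.

```python
import math
from itertools import combinations
from typing import Collection, FrozenSet, List, Tuple

Residue = Tuple[str, int]                                # (chain ID, residue number)
Atom = Tuple[str, int, str, Tuple[float, float, float]]  # (chain, resnum, atom name, xyz)


def find_clashes(atoms: List[Atom], cutoff: float = 2.5,
                 bonded_pairs: Collection[FrozenSet[Residue]] = frozenset()) -> List[Tuple[str, str, float]]:
    """Heavy-atom pairs closer than `cutoff` (Å), excluding covalently linked neighbors."""
    clashes = []
    for (ci, ri, ni, xi), (cj, rj, nj, xj) in combinations(atoms, 2):
        if ci == cj and abs(ri - rj) <= 1:
            continue  # same or sequence-adjacent residue in the same chain
        if frozenset(((ci, ri), (cj, rj))) in bonded_pairs:
            continue  # e.g., disulfide-linked cysteines
        d = math.dist(xi, xj)
        if d < cutoff:
            clashes.append((f"{ci}{ri}:{ni}", f"{cj}{rj}:{nj}", round(d, 2)))
    return clashes
```

Chain IDs are part of the residue key because heavy and light chains reuse residue numbers. The all-pairs scan is quadratic, which is acceptable for a Fv or a local region but slow for a full complex.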

FAQ 3: When integrating AI predictions with Molecular Dynamics (MD), the system fails to relax or quickly collapses back to the dominant state. What went wrong? Answer: The AI-predicted conformation may be in a high-energy local minimum, or the force field may not be adequately parameterized.

- Protocol: Targeted Meta-Dynamics for Conformational Refinement.

- Use collective variables (CVs) suggested by the AI model (e.g., coordinates from its latent space, or a distance between specific CDR loops) as biasing coordinates in a well-tempered metadynamics simulation.

- The accumulating bias discourages the simulation from revisiting already sampled regions of CV space, including the dominant state, and helps explore the free energy basin around the AI prediction.

- Run simulations in replicate (n≥3) to assess consistency.

- Check: Ensure your solvent and ion force field parameters are current. For antibodies, pay special attention to disulfide bond and glycosylation parameterization if present.
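
To make the well-tempered scheme concrete, the toy below deposits Gaussian hills on a one-dimensional CV. Each hill's height is scaled by exp(−V(s)/(kB·ΔT)), where ΔT = T(γ − 1) and γ is the bias factor. It is for intuition only; production runs would use an engine plugin such as PLUMED. The class name and default values are hypothetical.

```python
import math
from typing import List


class WellTemperedBias:
    """Toy 1D well-tempered metadynamics bias (energies in kcal/mol)."""

    def __init__(self, w0: float, sigma: float, bias_factor: float, temp_k: float = 300.0):
        self.w0 = w0                      # initial hill height
        self.sigma = sigma                # hill width in CV units
        self.kb_dt = 0.0019872 * temp_k * (bias_factor - 1.0)  # kB * DeltaT
        self.centers: List[float] = []
        self.heights: List[float] = []

    def value(self, s: float) -> float:
        """Current bias potential V(s): sum of deposited Gaussians."""
        return sum(h * math.exp(-((s - c) ** 2) / (2 * self.sigma ** 2))
                   for c, h in zip(self.centers, self.heights))

    def deposit(self, s: float) -> float:
        """Add a hill at s with height scaled down where bias has built up."""
        h = self.w0 * math.exp(-self.value(s) / self.kb_dt)
        self.centers.append(s)
        self.heights.append(h)
        return h
```

Repeated deposits at the same CV value shrink, which is what lets well-tempered runs converge instead of overfilling the basin around the dominant state.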

FAQ 4: My experimental data (e.g., HDX-MS, FRET) suggests a conformational state, but the AI model assigns it an extremely low probability. Who is likely wrong? Answer: This discrepancy is a critical research opportunity. The model's energy landscape may be inaccurate.

- Protocol: Experimental Data Integration for Model Retraining.

- Frame the experimental data as constraints. For HDX-MS, convert deuterium uptake into soft distance or solvent accessibility constraints.

- Use Bayesian inference or a loss term that penalizes model predictions deviating from these experimental constraints.

- Retrain the AI model with this hybrid experimental/computational loss function. This directly addresses the thesis limitation of AI models lacking experimental landscape information.

- Check: Scrutinize the experimental data quality and its interpretation. Also, review the model's training set for the absence of similar conformational motifs.
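
One way to frame experimental data as soft constraints is a flat-bottom penalty. Predictions inside an experimentally supported interval cost nothing, and those outside pay a quadratic penalty that can be added to the training loss. How HDX-MS uptake is converted into such intervals is system-specific and is assumed here, not derived. The function and key names are illustrative.

```python
from typing import Dict, Tuple


def flat_bottom_penalty(pred: Dict[str, float], bounds: Dict[str, Tuple[float, float]],
                        k: float = 1.0) -> float:
    """Sum of quadratic penalties for predictions outside [lower, upper] bounds."""
    total = 0.0
    for key, (lo, hi) in bounds.items():
        if key not in pred:
            continue
        x = pred[key]
        if x < lo:
            total += k * (lo - x) ** 2
        elif x > hi:
            total += k * (x - hi) ** 2
    return total
```

During retraining this term would be added to the model's usual loss with its own weight, so experimental constraints shape the landscape without overriding well-determined structural data.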

Table 1: Comparison of Conformational Sampling Methods

| Method | Typical Timescale | Spatial Resolution | Ability to Capture Rare States | Key Limitation |
|---|---|---|---|---|
| X-ray Crystallography | Static | Atomic (~1 Å) | Very Low (often one state) | Crystal packing forces, static snapshot. |
| Cryo-EM | Static to milliseconds (time-resolved) | Near-atomic (2-3 Å) | Moderate (can visualize some heterogeneity) | Requires particle classification; rare states are often resolved at lower resolution. |
| Long-Timescale MD | Microseconds to milliseconds | Atomic | High (but computationally expensive) | Extreme computational cost; force field inaccuracies. |
| AI/ML Generative Models | Inference: seconds | Atomic | Very High (in principle) | Dependent on training data quality; validation challenge. |
| Enhanced Sampling MD | Nanoseconds to microseconds (biased) | Atomic | Medium-High | Requires pre-defined Collective Variables (CVs). |

Table 2: AI Model Performance Metrics for Conformational Prediction

| Model Type | Test Set RMSD (Å), Dominant State | Test Set RMSD (Å), Rare States | Latent Space Dimension | Training Data Required |
|---|---|---|---|---|
| Variational Autoencoder (VAE) | 1.2 - 2.5 | 3.5 - 6.0 | 10-50 | ~10^4 - 10^5 structures |
| Equivariant Diffusion Model | 1.0 - 2.0 | 2.5 - 4.5 | N/A | ~10^5 - 10^6 structures |
| Normalizing Flow | 1.5 - 3.0 | 3.0 - 5.5 | 20-100 | ~10^4 - 10^5 structures |
| Geometry-Transformer | 1.3 - 2.8 | 3.2 - 5.0 | N/A | ~10^5 - 10^6 structures |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Conformational Analysis

| Item | Function in Conformational Research |
|---|---|
| Disulfide Trapping Mutagenesis Kits | Introduce cysteine pairs to "trap" a predicted transient conformation via disulfide bond formation, enabling detection by SDS-PAGE shift or mass spec. |
| Site-Specific Fluorophore Labeling Kits (e.g., for Cysteine, Lysine) | Label engineered antibody sites for FRET or smFRET experiments to measure distances related to conformational changes in solution. |
| HDX-MS (Hydrogen-Deuterium Exchange Mass Spectrometry) Platform | Probes solvent accessibility and dynamics, providing experimental constraints on flexible regions and potential rare state populations. |
| SEC-MALS (Size Exclusion - Multi-Angle Light Scattering) Standards | Validates antibody monodispersity and detects large-scale aggregation or conformational shifts that alter hydrodynamic radius. |
| Membrane Nanoparticles (e.g., Nanodiscs) | Provides a native-like membrane environment for studying conformations of membrane-protein targeting antibodies. |
| Metadynamics-Ready MD Software (e.g., PLUMED) | Enables enhanced sampling simulations to explore free energy landscapes and test AI-predicted rare state stability. |

Visualizations

Diagram 1: Hybrid AI-Experimental Workflow for Conformational Discovery

Diagram 2: Energy Landscape of Antibody Conformations

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My AlphaFold2 or RoseTTAFold model predicts a static, low-energy conformation. How do I investigate biologically relevant, higher-energy states for my antibody?

- A: The model outputs represent a static ground state. To probe dynamics:

- Use Ensemble Generation: Run AlphaFold2 with multiple random seeds (e.g., `--num-seeds=10` in `colabfold_batch`). Compare the models; regions that are confidently predicted (high pLDDT) but adopt different backbone angles across seeds suggest conformational plasticity.

- Apply Perturbation: Run `colabfold_batch` with a high `--num-recycle` value (e.g., 20-30), optionally with inference-time dropout (`--use-dropout`). This can sometimes push the model into alternate states.

- Downstream MD Simulation: Use the AI-predicted structure as a starting point for Molecular Dynamics (MD) simulation. This is currently the most reliable method to sample dynamics. See Protocol A.

Q2: I observe poor accuracy (low pLDDT/ipTM) specifically in the CDR H3 loop and elbow hinge regions of my AI-predicted antibody model. What steps should I take?

- A: This is a known limitation due to sparse homologous templates and inherent flexibility.

- Template Detachment: Re-run the prediction without templates (in ColabFold, templates are used only when `--templates` is passed). The model then relies on the MSA and its learned sequence-structure patterns rather than on template coordinates. This can sometimes improve loop modeling at the cost of overall scaffold accuracy.

- Focused Refinement: Use a loop modeling tool (e.g., Rosetta Kinematic Closure (KIC), MODELLER) specifically on the low-confidence regions, using the AI prediction as a starting constraint.

- Experimental Priors: If SAXS or NMR data are available (e.g., a radius of gyration or distance constraints), use them in MD simulations (see Protocol B) to bias the sampling towards experimentally plausible conformations.

Q3: How can I predict the conformational change of an antibody upon antigen binding using current AI structure predictors?

- A: A prediction of the antibody alone cannot reveal a binding-induced change. You must also predict the antibody-antigen complex and compare the two predictions.

- Complex Prediction: Input the full sequence of the antibody and the antigen into AlphaFold-Multimer or RoseTTAFold. The predicted bound state may differ from the unbound prediction.

- Comparison Analysis: Superimpose the unbound (Ab alone) and bound (Ab-Ag complex) predictions. Measure dihedral angle changes in the CDRs and the elbow hinge. Caution: neither prediction is guaranteed to reproduce the true unbound or bound state, so small differences may reflect model noise rather than induced fit.

- Dynamics Gap: The pathway and energy barrier between the two predicted states remain unknown. Follow-up with Transition Path Sampling or Steered MD is required to hypothesize a transition mechanism.

Q4: What quantitative metrics should I use to assess predicted conformational diversity versus noise?

- A: Rely on consolidated metrics from multiple runs. See Table 1.

Table 1: Metrics for Assessing AI-Predicted Conformational Diversity

| Metric | Source | Interpretation | Threshold for Significance |

|---|---|---|---|

| pLDDT Std. Dev. (per residue) | Multiple model runs (ensembles) | Low mean pLDDT with low spread indicates a consistently low-confidence region. High mean pLDDT with high spread indicates confident, multi-state plasticity. | Std. dev. > 5-10 pLDDT points |

| Backbone Dihedral Angle Std. Dev. | Multiple model runs (ensembles) | Direct measure of structural variance in φ/ψ angles (use circular statistics, because angles wrap at ±180°). High deviation in loops/hinges indicates conformational freedom. | Std. dev. > 30° |

| Predicted Aligned Error (PAE) Shift | Compare unbound vs. bound complex PAE matrices | Changes in inter-domain error (e.g., VH-VL) suggest a model-predicted rigid-body movement. | >2Å shift in inter-domain error |
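The first two rows of Table 1 can be computed with a short script. This is a minimal sketch with three assumptions: the models are PDB files from one AlphaFold2/ColabFold run, pLDDT is stored in the B-factor column, and all models share the same residue numbering. The function names are illustrative. Dihedral spread needs circular statistics, because a plain standard deviation treats -179° and +179° as 358° apart.

```python
import math
import statistics


def ca_bfactors(pdb_text):
    """Map (chain, residue number) -> B-factor for CA atoms of one model.

    AlphaFold2/ColabFold PDB files store per-residue pLDDT in this column.
    """
    values = {}
    for line in pdb_text.splitlines():
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            key = (line[21], int(line[22:26]))
            values[key] = float(line[60:66])
    return values


def plddt_spread(pdb_texts):
    """Per-residue (mean, sample std. dev.) of pLDDT across >= 2 models."""
    per_model = [ca_bfactors(text) for text in pdb_texts]
    shared = set.intersection(*(set(m) for m in per_model))
    return {
        key: (statistics.mean(m[key] for m in per_model),
              statistics.stdev(m[key] for m in per_model))
        for key in sorted(shared)
    }


def circular_std_deg(angles_deg):
    """Circular standard deviation (degrees) of a set of phi or psi angles.

    A linear std. dev. is wrong for angles: -179 and +179 degrees are
    2 degrees apart, not 358.
    """
    rad = [math.radians(a) for a in angles_deg]
    c = statistics.mean(math.cos(r) for r in rad)
    s = statistics.mean(math.sin(r) for r in rad)
    r_bar = min(1.0, math.hypot(c, s))
    if r_bar == 0.0:
        return math.inf  # angles spread evenly around the circle
    return math.degrees(math.sqrt(-2.0 * math.log(r_bar)))
```

Flag residues whose pLDDT spread or circular dihedral spread exceeds the Table 1 thresholds. Treat those thresholds as starting heuristics rather than calibrated cut-offs.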

Experimental Protocols

Protocol A: Molecular Dynamics as a Post-AI Refinement for Dynamics

Objective: Sample the conformational landscape of an AI-predicted antibody structure.

- System Preparation:

- Use the AI-predicted PDB file. Add missing hydrogen atoms (e.g., `pdb4amber --reduce`, or let `tleap` or CHARMM-GUI build them).

- Solvate the antibody in a cubic TIP3P water box with a 10-12 Å buffer.

- Add a physiological ion concentration (e.g., 150 mM NaCl) and neutralize the system charge.

- Simulation Parameters:

- Use AMBER ff19SB or CHARMM36m force field.

- Employ a GPU-accelerated engine (e.g., AMBER PMEMD, NAMD, OpenMM).

- Minimize, heat to 310 K, and equilibrate under NPT conditions (1 atm) with harmonic restraints gradually released.

- Production Run & Analysis:

- Run unrestrained production MD for ≥100 ns (µs-scale ideal).

- Analyze: per-residue Root Mean Square Fluctuation (RMSF), radius of gyration, inter-domain geometry (VH-VL orientation and, for Fab models, the elbow angle between variable and constant domains), and dihedral angle clustering (e.g., using `cpptraj`).
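The Protocol A analysis metrics reduce to a few array operations. This sketch assumes the trajectory is already a NumPy array of shape (frames, atoms, 3) and has been superposed on a reference (e.g., with cpptraj or MDTraj). The function names are illustrative. In practice, cpptraj's `atomicfluct` and `radgyr` commands, or MDTraj's `md.compute_rg`, compute the same quantities.

```python
import numpy as np


def rmsf(coords):
    """Per-atom RMSF for frames that are already superposed.

    coords: array (n_frames, n_atoms, 3), e.g. CA atoms after fitting every
    frame to the antibody framework. Units follow the input (Å or nm).
    """
    coords = np.asarray(coords, dtype=float)
    mean_structure = coords.mean(axis=0)
    sq_dev = ((coords - mean_structure) ** 2).sum(axis=2)  # (frames, atoms)
    return np.sqrt(sq_dev.mean(axis=0))


def radius_of_gyration(coords, masses=None):
    """Radius of gyration per frame; uniform weights unless masses given."""
    coords = np.asarray(coords, dtype=float)
    n_atoms = coords.shape[1]
    w = np.ones(n_atoms) if masses is None else np.asarray(masses, float)
    w = w / w.sum()
    com = (coords * w[None, :, None]).sum(axis=1, keepdims=True)
    return np.sqrt((((coords - com) ** 2).sum(axis=2) * w).sum(axis=1))
```

Large RMSF peaks in CDR loops or at the VH-VL interface are the regions to cluster by dihedral angles next.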

Protocol B: Integrating Sparse Experimental Data with AI/MD

Objective: Bias MD sampling with experimental data to explore experimentally consistent conformational states.

- Data as Restraints:

- SAXS: Compute theoretical scattering curves from MD snapshots using CRYSOL. Apply a Bayesian or Maximum Entropy restraint to minimize the χ² between computed and experimental curves.

- NMR NOEs/RDCs: Convert NOEs into flat-bottomed distance restraints and RDCs into orientational restraints, and add them to the simulation force field.

- Enhanced Sampling:

- Use the experimental restraints within an enhanced sampling method (e.g., Metadynamics, Accelerated MD) to drive transitions between states and overcome energy barriers that pure AI or classical MD cannot.
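A flat-bottomed restraint is zero while the distance lies inside the experimental bounds and grows harmonically outside them. This minimal sketch assumes a penalty of k·d² for a violation d. Some engines use ½k·d², so check your engine's convention; PLUMED's `UPPER_WALLS`/`LOWER_WALLS` implement comparable one-sided walls. The function names and the default k are illustrative.

```python
def flat_bottom_energy(r, lower, upper, k=10.0):
    """Restraint energy for one NOE-derived distance r.

    Zero inside [lower, upper]; k * violation**2 outside. Units follow the
    inputs (e.g. Å for distances and kcal/mol/Å^2 for k).
    """
    if r < lower:
        return k * (lower - r) ** 2
    if r > upper:
        return k * (r - upper) ** 2
    return 0.0


def total_restraint_energy(distances, bounds, k=10.0):
    """Sum of flat-bottomed penalties over paired distances and (lower, upper) bounds."""
    return sum(flat_bottom_energy(r, lo, hi, k) for r, (lo, hi) in zip(distances, bounds))


# Example: an NOE bound of 1.8-5.0 Å; a sampled distance of 6.0 Å violates it by 1.0 Å.
print(flat_bottom_energy(6.0, 1.8, 5.0, k=2.0))
```

The flat bottom matters because NOEs give only bounds. A plain harmonic restraint to a single target distance would over-constrain flexible CDR loops.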

Diagrams

Title: Workflow for AI-Guided Antibody Dynamics Study

Title: AI Prediction Pipeline & Its Dynamics Gap

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Toolkit for Post-AI Antibody Dynamics Research

| Item | Function & Relevance |

|---|---|

| GPU Computing Cluster | Essential for running both deep learning structure prediction (AlphaFold2, etc.) and subsequent microsecond-scale Molecular Dynamics simulations. |

| AMBER/CHARMM/OpenMM | Software suites providing force fields and simulation engines for classical and enhanced-sampling MD, used to model dynamics beyond AI. AMBER's pmemd and CHARMM require licenses; OpenMM is open source. |

| Enhanced Sampling Plugins (PLUMED) | Enables advanced sampling techniques (Metadynamics, Steered MD) to overcome high energy barriers and sample rare conformational events. |

| SAXS/NMR Data Collection | Source of sparse experimental data (scattering curves, distance restraints) used to validate and bias MD simulations towards experimentally relevant states. |

| Rosetta or MODELLER Suite | Provides specialized tools (e.g., loop modeling, docking) for focused refinement of AI-predicted low-confidence regions. |

| Analysis Suites (MDTraj, PyMOL, VMD) | For visualization, trajectory analysis (RMSF, clustering), and comparing AI predictions with MD ensembles and experimental data. |

Bridging the Flexibility Gap: Current AI/ML Approaches and Best-Practice Workflows

Technical Support Center

Troubleshooting Guides & FAQs

Q1: AlphaFold2 predicts a single, static structure, but my antibody-antigen research requires understanding conformational changes. How can AlphaFold-MD help bridge this gap? A: AlphaFold2 excels at single-state prediction but has known limitations in modeling conformational ensembles. An AlphaFold-MD workflow uses the AlphaFold2 structure as the starting point and prior for enhanced sampling Molecular Dynamics (MD) simulations. The predicted aligned error (PAE) and pLDDT scores show where to concentrate sampling. They can define the collective variables biased in Metadynamics, or focus the analysis of a CV-free method such as Gaussian Accelerated MD (GaMD). This lets you explore alternative conformations beyond the initial prediction, which is crucial for modeling CDR loop flexibility or induced-fit binding.

Q2: During setup, the AlphaFold-MD simulation becomes unstable and the protein unfolds immediately. What are the primary causes? A: This is often due to clashes or high local strain in the initial AlphaFold2 model when placed in an explicit solvent MD environment.

- Check 1: Protonation States. Ensure histidine protonation states (HID, HIE, HIP) are correct for your simulation pH, especially in the binding pocket.

- Check 2: Missing Atoms. AlphaFold2 models normally contain all heavy atoms of standard residues but may lack hydrogens, and edited or truncated constructs can have gaps. Use tools like `PDBFixer` or `MODELLER` to add missing heavy atoms and hydrogens.

- Check 3: Relaxation. Perform a steepest-descent energy minimization and a short (50-100 ps) restrained equilibration in explicit solvent before launching the enhanced sampling run. This allows the solvent to adapt and relieves minor clashes.

Q3: How do I quantitatively use AlphaFold2's output (pLDDT or PAE) to define the collective variables (CVs) for enhanced sampling in my antibody simulation? A: Low pLDDT/high PAE regions often indicate intrinsic flexibility. You can define CVs based on these metrics.

- For CDR Loops: Define a CV as the root-mean-square deviation (RMSD) of the low pLDDT (<70) CDR loop residues relative to the AlphaFold2 initial pose. Bias this CV using Metadynamics to encourage exploration.

- For Inter-domain Motions: If PAE is high between the VH and VL domains, define a CV as the distance between their centers of mass or their relative angle.

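- Protocol: Extract per-residue pLDDT scores from the prediction output. ColabFold writes them to its `*_scores_rank_*.json` files, and they are also stored in the B-factor column of each PDB. Map the residue indices onto your MD topology, checking for any renumbering. In your MD engine (e.g., PLUMED), define the biased CVs over the low-confidence regions so that the bias acts primarily on them.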
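The selection step in Q3 can be sketched as below. The sketch assumes the ColabFold scores JSON holds a per-residue `"plddt"` list. Runs shorter than `min_length` residues are ignored, an illustrative choice. The PLUMED text biases the RMSD of whatever atoms appear in a reference PDB you prepare; `cdr_ref.pdb` is a hypothetical filename. PLUMED reads alignment weights from that file's occupancy column and displacement weights from its beta column. The SIGMA, HEIGHT, PACE and BIASFACTOR values are placeholders to tune, not recommendations.

```python
import json


def load_colabfold_plddt(path):
    """Read the per-residue pLDDT list from a ColabFold scores JSON file."""
    with open(path) as handle:
        return json.load(handle)["plddt"]


def low_confidence_segments(plddt, cutoff=70.0, min_length=3):
    """Group consecutive residues with pLDDT < cutoff into (start, end) runs.

    Positions are 1-based in the predicted sequence; map them to your MD
    topology numbering before building CVs.
    """
    segments, start = [], None
    for i, value in enumerate(plddt, start=1):
        if value < cutoff and start is None:
            start = i
        elif value >= cutoff and start is not None:
            if i - start >= min_length:
                segments.append((start, i - 1))
            start = None
    if start is not None and len(plddt) - start + 1 >= min_length:
        segments.append((start, len(plddt)))
    return segments


def plumed_metad_snippet(reference_pdb, temperature=310.0):
    """Minimal PLUMED input biasing the RMSD of the atoms in reference_pdb."""
    return (
        f"rmsd: RMSD REFERENCE={reference_pdb} TYPE=OPTIMAL\n"
        "metad: METAD ARG=rmsd SIGMA=0.02 HEIGHT=1.0 PACE=500 "
        f"BIASFACTOR=10 TEMP={temperature} FILE=HILLS\n"
        "PRINT ARG=rmsd,metad.bias STRIDE=500 FILE=COLVAR\n"
    )
```

For example, `low_confidence_segments(load_colabfold_plddt("scores.json"))` (hypothetical path) lists candidate loops. Write their atoms into the reference PDB before running the PLUMED input.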

Q4: My AlphaFold-MD simulation sampled multiple states, but I am unsure how to validate them or identify the most biologically relevant conformation. A: Validation requires integration of experimental and computational data.

- Cross-validate with Experimental Data: Use SAXS (small-angle X-ray scattering) profiles to assess the ensemble's fit to solution data. Compute theoretical SAXS curves from your simulation clusters and calculate the χ² fit.

- Compute Binding Affinity: For each dominant conformation, perform docking or short MD simulations with the antigen and compute relative binding energies (MM-GBSA/PBSA). The conformation that yields a favorable and consistent binding energy is more plausible.

- Check Conserved Interactions: The relevant conformation should maintain conserved intramolecular salt bridges or hydrogen bonds observed in known antibody structures.
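The SAXS check in Q4 can be applied to the ensemble as a whole rather than cluster by cluster. This sketch assumes you already have a theoretical profile per cluster representative (e.g., from CRYSOL or FoXS) on the experimental q grid. It forms the population-weighted average profile, fits a single least-squares scale factor, and reports a reduced χ² normalized by N − 1, one common convention. Function and argument names are illustrative.

```python
import numpy as np


def ensemble_saxs_chi2(i_exp, sigma, cluster_profiles, populations):
    """Reduced chi^2 of a population-weighted ensemble against SAXS data.

    i_exp, sigma: experimental intensities and errors on a shared q grid.
    cluster_profiles: (n_clusters, n_q) computed intensities on that grid.
    populations: cluster populations (normalized here).
    Returns (chi2, scale).
    """
    i_exp = np.asarray(i_exp, float)
    sigma = np.asarray(sigma, float)
    w = np.asarray(populations, float)
    w = w / w.sum()
    i_calc = w @ np.asarray(cluster_profiles, float)
    # Scale c minimizing sum(((i_exp - c * i_calc) / sigma)^2), closed form.
    c = np.sum(i_exp * i_calc / sigma**2) / np.sum(i_calc**2 / sigma**2)
    resid = (i_exp - c * i_calc) / sigma
    return float(np.sum(resid**2) / (len(i_exp) - 1)), float(c)
```

Comparing χ² for the raw cluster populations against single-cluster weights (e.g., `[1, 0, 0]`) shows whether the data favor one state or a mixture.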

Q5: Are there specific CVs or enhanced sampling methods recommended for antibody-specific motions like VH-VL elbow angle variation? A: Yes. The elbow angle between the variable (VH-VL) and constant (CH1-CL) domains is a classic antibody degree of freedom.

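- CV Definition: Define four pseudo-atoms representing the centers of mass of VH, VL, CH1, and CL. A simple proxy CV is the angle between the vector connecting VH to VL and the vector connecting CH1 to CL. The classical elbow angle is defined between the pseudo-twofold axes of the VH-VL and CH1-CL pairs. The vector-angle proxy tracks motion about the elbow but is not numerically identical to that definition.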

- Recommended Method: Use Well-Tempered Metadynamics or Adaptive Sampling biased on this elbow angle CV, combined with RMSD CVs of CDR loops, to capture coupled motions.
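The proxy CV from Q5 can be checked offline with NumPy and then declared in PLUMED. This is a sketch. The atom ranges passed to `plumed_elbow_proxy` are hypothetical placeholders, and the function names are illustrative. PLUMED's `ANGLE` action accepts four atoms and returns the angle between two inter-atom vectors. Check its documentation for the vector direction convention, because reversing one vector turns θ into 180° − θ.

```python
import numpy as np


def com(coords, masses=None):
    """Center of mass (uniform weights unless masses are given)."""
    coords = np.asarray(coords, float)
    w = np.ones(len(coords)) if masses is None else np.asarray(masses, float)
    return (coords * w[:, None]).sum(axis=0) / w.sum()


def vector_angle_deg(a1, a2, b1, b2):
    """Angle (degrees) between vectors a1->a2 and b1->b2."""
    u = np.asarray(a2, float) - np.asarray(a1, float)
    v = np.asarray(b2, float) - np.asarray(b1, float)
    cos_t = np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v))
    return float(np.degrees(np.arccos(np.clip(cos_t, -1.0, 1.0))))


def plumed_elbow_proxy(vh, vl, ch1, cl):
    """PLUMED lines for four COM pseudo-atoms and a four-atom ANGLE CV.

    Each argument is a 1-based atom range string such as "1-880".
    """
    return (
        f"vh: COM ATOMS={vh}\n"
        f"vl: COM ATOMS={vl}\n"
        f"ch1: COM ATOMS={ch1}\n"
        f"cl: COM ATOMS={cl}\n"
        "elbow: ANGLE ATOMS=vh,vl,ch1,cl\n"
    )
```

Computing `vector_angle_deg(com(vh), com(vl), com(ch1), com(cl))` on the starting model and comparing it with PLUMED's first COLVAR value is a quick way to confirm that both use the same convention.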

Table 1: Common AlphaFold2 Output Metrics and Their Interpretation for MD

| Metric | Range | Interpretation for MD Setup |

|---|---|---|

| pLDDT | 90-100 | Very high confidence. Treat as a well-folded, stable region. |

| pLDDT | 70-90 | Confident. Standard MD parameters are suitable. |

| pLDDT | 50-70 | Low confidence. Region is often flexible or poorly determined. Prime candidate for CV-based enhanced sampling. |

| pLDDT | <50 | Very low confidence. Likely disordered. May require specialized force fields or truncated modeling. |

| Predicted Aligned Error (PAE) | <5 Å | Confident in relative positioning of residue pairs. |

| PAE | 5-15 Å | Moderate uncertainty. Can guide domain-level CV definition. |

| PAE | >15 Å | High uncertainty. Relative orientation is poorly predicted. Key region for conformational exploration. |

Table 2: Comparison of Enhanced Sampling Methods for AlphaFold-MD

| Method | Key Principle | Best Suited for Antibody Research Scenario | Computational Cost |

|---|---|---|---|

| Gaussian Accelerated MD (GaMD) | Adds a harmonic boost potential to smooth the energy landscape. | Initial broad exploration of CDR loop conformational space. | Medium |

| Well-Tempered Metadynamics | Deposits repulsive Gaussian biases in CV space to push system away from visited states. | Quantitatively mapping the free energy landscape of VH-VL elbow angles. | High |

| Adaptive Sampling | Uses short, independent simulations to seed new ones based on uncertainty. | Generating a diverse ensemble of Fab fragment conformations for ensemble docking. | Variable (can be high-throughput) |

| Replica Exchange MD | Runs parallel simulations at different temperatures, allowing exchanges. | Overcoming large energy barriers in domain rearrangements. | Very High |

Experimental Protocols

Protocol 1: From AlphaFold2 Prediction to Equilibrated System for MD

- Prediction: Run AlphaFold2 on your antibody sequence (ColabFold is recommended for speed). Download the ranked PDB files and the score files containing PAE/pLDDT data (`result_model_*.pkl` in the original AlphaFold2 pipeline; `*_scores_rank_*.json` in ColabFold).

- Model Preparation: Select the top-ranked model. Use `PDBFixer` (OpenMM suite) to:

- Add missing heavy atoms and side chains.

- Add missing hydrogens for pH 7.4.

- Save as a new PDB.

- Solvation & Ionization: Use GROMACS (`gmx pdb2gmx` to build the topology, then `gmx editconf`, `gmx solvate`, and `gmx genion`) or `tleap` (AMBER) to:

- Place the protein in a cubic or dodecahedral water box (e.g., TIP3P) with at least 1.2 nm buffer.

- Add ions (e.g., 0.15 M NaCl) to neutralize the system and mimic physiological concentration.

- Minimization & Equilibration:

- Energy Minimization: Perform 5000 steps of steepest descent minimization to remove clashes.

- NVT Equilibration: Heat the system to 310 K over 100 ps using a v-rescale thermostat, with strong position restraints on protein heavy atoms.

- NPT Equilibration: Equilibrate pressure at 1 bar over 100 ps using a Parrinello-Rahman barostat, with position restraints on the protein backbone.
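The Model Preparation step of Protocol 1 might look like the sketch below. It assumes the `pdbfixer` and `openmm` packages are installed; they are imported inside the function, so the module loads without them. `prepare_for_md` and the file names are illustrative. `addMissingHydrogens` assigns protonation from standard residue pKa values at the given pH, so paratope histidines may still need a manual or PROPKA-based check.

```python
def prepare_for_md(in_pdb, out_pdb, ph=7.4):
    """Add missing residues, heavy atoms and hydrogens to an AlphaFold2 model.

    Requires pdbfixer and openmm (imported lazily inside this function).
    """
    from openmm.app import PDBFile
    from pdbfixer import PDBFixer

    fixer = PDBFixer(filename=in_pdb)
    fixer.findMissingResidues()          # gaps detected from SEQRES, if present
    fixer.findNonstandardResidues()
    fixer.replaceNonstandardResidues()   # e.g. MSE -> MET
    fixer.findMissingAtoms()
    fixer.addMissingAtoms()              # heavy atoms, side chains, detected gaps
    fixer.addMissingHydrogens(ph)        # protonation from standard pKa values
    with open(out_pdb, "w") as handle:
        PDBFile.writeFile(fixer.topology, fixer.positions, handle)
    return out_pdb
```

Usage: `prepare_for_md("ranked_0.pdb", "ranked_0_fixed.pdb")`, then pass the output to the solvation step.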

Protocol 2: Implementing pLDDT-Guided Gaussian Accelerated MD (GaMD)

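- Region Selection: Parse the pLDDT scores. Define the Cα atoms of residues with pLDDT < 70 as your "flexible region". Standard GaMD is CV-free: its boost acts on the total and/or dihedral potential energy of the whole system, not on selected atoms. Use this selection to focus the analysis, or use a selective-boost GaMD variant if your engine supports one.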

- GaMD Setup (using AMBER):

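- Run a short (e.g., 2 ns) conventional MD stage to collect potential energy statistics.

- From these statistics, GaMD derives the average and standard deviation of the system's dihedral and total potential energy. In AMBER's `pmemd.cuda` this happens automatically during the preparatory stages set by `ntcmd` and `nteb`.

- Enable GaMD through the `&cntrl` namelist of the `pmemd.cuda` input. For example, use `igamd=3` for dual boost, with `sigma0P=6.0, sigma0D=6.0` as the upper limits on the standard deviations of the total and dihedral boost potentials.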

- Production Run: Execute the GaMD simulation for 100-500 ns. The boost flattens energy barriers across the whole system. Intrinsically flexible, low-pLDDT regions are expected to show the largest gain in conformational sampling.

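- Analysis: Cluster the trajectories (e.g., using `gmx cluster`) and compare the sampled RMSD of the CDR loops with the initial AlphaFold2 pose. Before interpreting populations or free energies, reweight the frames to remove the boost (e.g., by second-order cumulant expansion).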
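GaMD frames are sampled on a boosted landscape, so populations and free energies must be reweighted before interpretation. This sketch implements the GaMD expressions for the lower-bound threshold option (iE=1): E = Vmax, k0 = min(1, (σ0/σV)(Vmax − Vmin)/(Vmax − Vavg)), and k = k0/(Vmax − Vmin). It also computes the harmonic boost and a 1D PMF reweighted by second-order cumulant expansion. It assumes energies in kcal/mol and per-frame boost values taken from your GaMD log. In AMBER, the total and dihedral boosts are computed separately, so apply the parameter function to each term. The function names are illustrative.

```python
import numpy as np

KB = 0.0019872041  # Boltzmann constant, kcal/(mol*K)


def gamd_lower_bound_params(potential, sigma0=6.0):
    """Threshold E and force constant k for GaMD with E = Vmax (iE=1).

    potential: potential energies (kcal/mol) from the preparatory cMD stage.
    """
    v = np.asarray(potential, float)
    vmax, vmin, vavg, vstd = v.max(), v.min(), v.mean(), v.std()
    k0 = min(1.0, (sigma0 / vstd) * (vmax - vmin) / (vmax - vavg))
    return vmax, k0 / (vmax - vmin)


def gamd_boost(potential, e_threshold, k):
    """Harmonic boost dV = 0.5 * k * (E - V)^2, applied only where V < E."""
    v = np.asarray(potential, float)
    return np.where(v < e_threshold, 0.5 * k * (e_threshold - v) ** 2, 0.0)


def reweighted_pmf(cv, boost, bins=30, temperature=310.0):
    """1D PMF (kcal/mol) from GaMD frames via 2nd-order cumulant expansion."""
    cv = np.asarray(cv, float)
    boost = np.asarray(boost, float)
    beta = 1.0 / (KB * temperature)
    edges = np.histogram_bin_edges(cv, bins=bins)
    n_bins = len(edges) - 1
    idx = np.clip(np.digitize(cv, edges) - 1, 0, n_bins - 1)
    counts = np.bincount(idx, minlength=n_bins)
    pmf = np.full(n_bins, np.inf)  # empty bins stay at +inf
    for b in np.nonzero(counts)[0]:
        dv = boost[idx == b]
        # ln<exp(beta*dV)> ~ beta*<dV> + beta^2 * var(dV) / 2
        log_w = beta * dv.mean() + 0.5 * beta**2 * dv.var()
        pmf[b] = -(np.log(counts[b] / len(cv)) + log_w) / beta
    pmf -= pmf[np.isfinite(pmf)].min()
    centers = 0.5 * (edges[:-1] + edges[1:])
    return centers, pmf
```

The cumulant expansion is usually preferred over exponential averaging because exp(βΔV) is dominated by a few frames when the boost is large. Bins with few frames remain noisy either way.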


The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Materials for AlphaFold-MD Experiments

| Item | Function & Purpose | Example/Note |

|---|---|---|

| ColabFold | Cloud-based, accelerated AlphaFold2 server. Provides quick predictions with MMseqs2 for MSA. | Use for rapid initial structure prediction. Download PAE matrix. |

| GROMACS/AMBER | High-performance MD simulation engines. Required for running energy minimization, equilibration, and production MD. | GROMACS is free; AMBER requires license. Both support enhanced sampling via PLUMED. |

| PLUMED | Plugin for free-energy calculations and enhanced sampling. Essential for implementing Metadynamics, Umbrella Sampling, etc., based on your CVs. | Must be compiled with your MD engine. Use version >2.8. |

| PDBFixer | Tool to prepare protein structures from PDB files for simulation (add missing atoms, protonate). | Part of the OpenMM suite. Critical for fixing AlphaFold2 output. |

| VMD/ChimeraX | Molecular visualization software. Used to analyze trajectories, visualize conformational changes, and prepare figures. | VMD is powerful for analysis scripts; ChimeraX has excellent rendering. |

| PyMOL | Commercial molecular visualization and analysis tool. Widely used for creating publication-quality images of structures. | Useful for aligning and comparing the initial AF2 model with sampled MD frames. |

| PLUMED `sum_hills` utility | Rebuilds free-energy surfaces from Metadynamics HILLS files at intervals (`--stride`) so that sampling convergence can be checked. | Check whether your chosen CVs adequately explore the conformational space. |

| High-Performance Computing (HPC) Cluster | Essential computational resource. AlphaFold-MD simulations are resource-intensive, requiring multiple GPUs/CPUs for days to weeks. | Plan for adequate GPU (for AF2) and CPU/GPU (for MD) node allocation. |

Troubleshooting Guides & FAQs

Q1: My ensemble model generates highly similar conformations instead of a diverse set. What could be the cause and how can I fix it?

A: This is often due to mode collapse in generative models or insufficient sampling diversity.

- Cause 1: Inadequate latent space exploration. The model is stuck in a local minimum.

- Solution: Increase the temperature parameter (e.g., from 1.0 to 1.5) during the sampling phase to encourage broader exploration. Implement or enhance random seed variation across ensemble members.

- Cause 2: Overly restrictive training data. The training set lacks conformational variety.

- Solution: Augment training data with structures from different experimental conditions (pH, temperature) or employ data augmentation techniques like adding Gaussian noise to atomic coordinates during training.

- Protocol: To diagnose, calculate the Root Mean Square Deviation (RMSD) matrix between all generated conformations.

- If the average pairwise RMSD is < 2.0 Å for a flexible CDR loop, diversity is too low.

- Fix Protocol: Retrain with a modified loss function that adds a diversity term. For example, reward a larger average pairwise RMSD among the samples generated in a batch, which is equivalent to penalizing near-duplicates.
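The RMSD-matrix diagnostic in Q1 can be sketched as follows. Superposing loops on the whole structure hides loop motion, so this version fits each pair on framework atoms (Kabsch superposition) and measures RMSD over loop atoms only. It assumes all models share atom ordering and that `framework_idx` and `loop_idx` are index lists you define (e.g., Cα atoms outside and inside the CDR). The names are illustrative.

```python
import numpy as np


def kabsch(mobile, target):
    """Rotation r and translation t minimizing |mobile @ r.T + t - target|."""
    mc, tc = mobile.mean(axis=0), target.mean(axis=0)
    h = (mobile - mc).T @ (target - tc)
    u, _, vt = np.linalg.svd(h)
    d = np.sign(np.linalg.det(vt.T @ u.T))  # guard against reflections
    r = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    return r, tc - mc @ r.T


def loop_rmsd_matrix(models, framework_idx, loop_idx):
    """Pairwise loop RMSD after superposing each pair on framework atoms."""
    models = [np.asarray(m, float) for m in models]
    n = len(models)
    out = np.zeros((n, n))
    for i in range(n):
        for j in range(i + 1, n):
            r, t = kabsch(models[j][framework_idx], models[i][framework_idx])
            moved = models[j][loop_idx] @ r.T + t
            diff = moved - models[i][loop_idx]
            out[i, j] = out[j, i] = np.sqrt((diff**2).sum(axis=1).mean())
    return out


def mean_pairwise(matrix):
    """Average of the upper triangle (each pair counted once)."""
    iu = np.triu_indices(len(matrix), k=1)
    return float(matrix[iu].mean())
```

A `mean_pairwise` value below about 2.0 Å for a flexible CDR loop corresponds to the low-diversity signal described above.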

Q2: How do I validate which AI-generated conformation is biologically relevant when experimental structures are unavailable?

A: Employ a multi-pronged computational validation pipeline.

- Step 1: Energetic Filtration. Filter all generated conformations using a physics-based scoring function. Discard high-energy poses.

- Step 2: Consensus Ranking. Rank conformations with at least three independent metrics (e.g., those in Table 1) and combine the ranks.

- Step 3: Dynamic Assessment. For top-ranked conformations, run a short, implicit solvent molecular dynamics (MD) simulation (e.g., 50 ns) to check for stability.

Table 1: Computational Validation Metrics for Generated Antibody Conformations

| Metric | Recommended Threshold | Purpose | Tool Example |

|---|---|---|---|

| Rosetta Energy Units (REU) | < 0 (lower is better) | Assesses thermodynamic stability. | Rosetta refine protocol |

| MolProbity Clashscore | < 10 (lower is better) | Evaluates steric clashes and rotamer outliers. | MolProbity Server |

| pLDDT (from AlphaFold2) | > 70 (higher is better) | Measures local confidence per residue. | ColabFold |

| Normalized B-Factor (from MD) | < 1.0 for CDR loops | Assesses dynamic stability from simulation. | GROMACS gmx rmsf |
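Step 2's consensus ranking can be as simple as averaging per-metric ranks. In this sketch, each metric is declared higher-is-better or lower-is-better, ranks are summed, and ties are broken by input order. The scheme is an assumption, not a prescribed method. Filter out conformations that fail the Table 1 thresholds before ranking. Metric keys such as `"reu"` are illustrative.

```python
def consensus_rank(conformations, metrics):
    """Order conformations by mean rank across metrics (best first).

    conformations: dict name -> dict metric -> value
    metrics: dict metric -> True if higher is better, False if lower is better
    """
    names = list(conformations)
    totals = {name: 0.0 for name in names}
    for metric, higher_better in metrics.items():
        ordered = sorted(names, key=lambda n: conformations[n][metric],
                         reverse=higher_better)
        for rank, name in enumerate(ordered, start=1):
            totals[name] += rank
    return sorted(names, key=lambda n: totals[n] / len(metrics))


confs = {
    "model_a": {"reu": -300.0, "clash": 4.0, "plddt": 85.0},
    "model_b": {"reu": -250.0, "clash": 12.0, "plddt": 70.0},
}
print(consensus_rank(confs, {"reu": False, "clash": False, "plddt": True}))
```

Rank averaging avoids having to put Rosetta energies, clashscores and pLDDT on a common numerical scale. The cost is that it ignores how large the gaps between conformations are.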

Q3: The predicted conformations do not agree with my HDX-MS (Hydrogen-Deuterium Exchange Mass Spectrometry) data. How should I proceed?

A: This indicates a potential discrepancy between AI-predicted static structures and solution-phase dynamics.

- Troubleshooting Steps:

- Map HDX-MS data: Project the deuterium uptake rates onto your generated conformations. Identify regions with high experimental exchange but low predicted solvent accessibility.

- Check for missing ensembles: HDX-MS detects an average over all populated states. A single conformation may be insufficient.

- Protocol: Cluster your AI-generated ensemble into 3-5 representative clusters. Compute the weighted average solvent-accessible surface area (SASA) for each residue across clusters (weighted by cluster population). Compare this ensemble-averaged SASA to HDX-MS data.

- Refine model: Use the HDX-MS data as a soft constraint during a subsequent MD simulation or during the training of a next-generation model to bias sampling toward experimentally consistent states.
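The ensemble comparison in the protocol above can be sketched with NumPy. It assumes per-residue SASA for each cluster representative (e.g., from `md.shrake_rupley(traj, mode="residue")` in MDTraj) and HDX-MS peptides given as 1-based inclusive residue ranges. HDX-MS reports uptake per peptide, so residue SASA is averaged over each peptide before comparison. A rank correlation is used because SASA and deuterium uptake are not expected to be linearly related. Function names are illustrative.

```python
import numpy as np


def weighted_sasa(cluster_sasa, populations):
    """Population-weighted per-residue SASA.

    cluster_sasa: (n_clusters, n_residues); populations: sizes or fractions.
    """
    w = np.asarray(populations, float)
    return (w / w.sum()) @ np.asarray(cluster_sasa, float)


def peptide_means(per_residue, peptides):
    """Average a per-residue property over (start, end) peptides, 1-based inclusive."""
    values = np.asarray(per_residue, float)
    return np.array([values[s - 1:e].mean() for s, e in peptides])


def spearman(x, y):
    """Spearman rank correlation (no tie correction) of two 1D arrays."""
    rx = np.argsort(np.argsort(x)).astype(float)
    ry = np.argsort(np.argsort(y)).astype(float)
    rx -= rx.mean()
    ry -= ry.mean()
    return float((rx @ ry) / np.sqrt((rx @ rx) * (ry @ ry)))
```

Peptides that rank high in uptake but low in ensemble SASA point to motions the AI ensemble is missing. Those regions are the first candidates for HDX-restrained MD.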

Q4: When integrating ensemble predictions with MD, my simulations become unstable or crash. What are common pitfalls?

A: This is frequently due to steric clashes or poor geometry in the initial AI-generated model.

- Pre-MD Repair Protocol:

- Run the PDB file through PDBFixer (OpenMM suite) to add missing atoms (especially hydrogens) and residues.

- Perform energy minimization using an implicit solvent model (e.g., Generalized Born) for 5,000 steps to relieve severe clashes. A steep drop in potential energy (> 10⁴ kJ/mol) indicates that clashes were present and have been resolved.

- Check chirality (e.g., with MolProbity) and assign protonation states of key residues (e.g., HIS, ASP, GLU) for your intended simulation pH, using PROPKA pKa predictions.

- Critical Step: Use LEaP (AmberTools) or pdb2gmx (GROMACS) to properly parameterize the structure for your chosen force field (e.g., CHARMM36, AMBER ff19SB).

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for AI-Driven Conformational Ensemble Studies

| Item / Reagent | Function / Purpose | Example Product / Software |

|---|---|---|

| High-Quality Structural Datasets | Training and benchmarking AI models. Requires diverse, high-resolution antibody-antigen complexes. | SAbDab (The Structural Antibody Database), PDB |

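| Ensemble Generation Software | Core platform for generating conformational ensembles. | RFdiffusion (generative AI); Rosetta KIC/Backrub (physics-based sampling). Omega (OpenEye) and DiffDock address small-molecule conformers and ligand docking rather than antibody ensembles. |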

| Molecular Dynamics Suite | For validation, refinement, and assessing dynamics of generated conformations. | GROMACS, AMBER, NAMD, OpenMM |

| Force Field Parameters | Defines atomic interactions for physics-based simulation and scoring. | CHARMM36m, AMBER ff19SB, DES-Amber |

| Solvent Model | Critical for accurate simulation of aqueous environments and binding interfaces. | TIP3P, TIP4P water models; Generalized Born (GB) implicit solvent |

| Analysis & Visualization Suite | Processing, comparing, and visualizing ensembles and simulation trajectories. | PyMOL, VMD, MDTraj, Bio3D (R) |

| Validation Server | Independent assessment of structural quality and steric soundness. | MolProbity, PDB Validation Server |

Experimental Protocols

Protocol 1: Generating an Ensemble with a Conditional Variational Autoencoder (cVAE)

- Data Preparation: Curate a dataset of antibody Fv region structures from SAbDab. Align all structures to a common framework reference. Convert coordinates into internal representations (e.g., torsion angles).

- Model Training: Train a cVAE where the encoder maps a structure to a latent vector z, and the decoder reconstructs it. Condition the model on antibody sequence features (CDR loop lengths, amino acid profiles).

- Ensemble Generation: For a target sequence, sample multiple latent vectors z_i from a standard Gaussian distribution N(0, I). Decode each z_i using the conditioned decoder to produce a unique backbone conformation.

- Side-Chain Packing: Use a fast rotamer library (e.g., SCWRL4) to add side chains to each generated backbone.

- Initial Filtering: Discard conformations with severe steric clashes (clashscore > 20) or improbable backbone dihedrals (outside preferred Ramachandran regions).
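The internal-coordinate and sampling steps of this protocol can be illustrated with a few helpers. This is a sketch, not a cVAE. It computes a dihedral from four backbone atoms and encodes angles as (sin, cos) pairs, so the periodic wrap at ±180° does not create an artificial discontinuity for the encoder or decoder. It also draws latent vectors with an optional temperature scale, in line with the advice in Q1 to broaden exploration. The function names and the temperature parameterization are assumptions.

```python
import numpy as np


def dihedral_deg(p0, p1, p2, p3):
    """Dihedral angle (degrees, in [-180, 180]) defined by four points."""
    p0, p1, p2, p3 = (np.asarray(p, float) for p in (p0, p1, p2, p3))
    b1, b2, b3 = p1 - p0, p2 - p1, p3 - p2
    y = np.linalg.norm(b2) * np.dot(b1, np.cross(b2, b3))
    x = np.dot(np.cross(b1, b2), np.cross(b2, b3))
    return float(np.degrees(np.arctan2(y, x)))


def encode_angles(angles_deg):
    """Map angles to (sin, cos) pairs so -179 and 179 degrees are neighbors."""
    rad = np.radians(np.asarray(angles_deg, float))
    return np.stack([np.sin(rad), np.cos(rad)], axis=-1)


def decode_angles(encoded):
    """Inverse of encode_angles; tolerates non-unit (decoder) outputs."""
    encoded = np.asarray(encoded, float)
    return np.degrees(np.arctan2(encoded[..., 0], encoded[..., 1]))


def sample_latents(n_samples, latent_dim, temperature=1.0, seed=None):
    """Draw z_i ~ N(0, temperature^2 I); temperature > 1 widens exploration."""
    rng = np.random.default_rng(seed)
    return temperature * rng.standard_normal((n_samples, latent_dim))
```

Decoding with `arctan2` means the decoder only needs to predict a direction in the (sin, cos) plane. Its raw outputs therefore do not have to lie exactly on the unit circle.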

Protocol 2: Integrating Ensemble Predictions with Molecular Dynamics for Stability Assessment

- Input: Select top 5 conformations from AI ensemble based on composite score (Table 1).

- System Preparation: Solvate each conformation in a cubic water box (10 Å padding). Add ions to neutralize charge and reach 150 mM NaCl concentration.

- Equilibration: Perform stepwise equilibration in NVT and NPT ensembles (100 ps each) with heavy atom positional restraints gradually released.

- Production MD: Run unrestrained MD simulation for 100 ns per conformation. Use a 2-fs timestep with bonds to hydrogen constrained (e.g., SHAKE or LINCS). Save coordinates every 10 ps.

- Analysis: Calculate per-residue Root Mean Square Fluctuation (RMSF). Cluster frames from the last 50 ns to identify the dominant stable pose. Compare to the initial AI-generated structure via RMSD.

Workflow & Relationship Diagrams

AI-Driven Conformational Ensemble Prediction & Validation Workflow

Thesis Context: From Single-State Limits to Ensemble Solution

Technical Support Center: Troubleshooting & FAQs

Q1: During a molecular dynamics (MD) simulation of a CDR-H3 loop using AMBER, my simulation "blows up" (becomes unstable) after a few nanoseconds. What are the primary causes and solutions?

A: This is often due to bad contacts or incorrect parameters. Follow this protocol:

- Minimization & Heating Protocol:

- Perform 5,000 steps of steepest descent minimization on only the solvent and ions, restraining the antibody complex (force constant of 10.0 kcal/mol·Å²).

- Minimize the entire system for 10,000 steps (5,000 steepest descent, 5,000 conjugate gradient).

- Heat the system from 0 K to 300 K over 50 ps in the NVT ensemble, using a weak restraint (1.0 kcal/mol·Å²) on the antibody.

- Equilibrate for 100 ps in the NPT ensemble at 300 K and 1 bar before the production run.

- Check for Missing Parameters: For non-standard residues or covalent linkages, use the `antechamber` and `parmchk2` modules to generate GAFF2 parameters. Ensure disulfide bonds are defined correctly in your topology (cysteines renamed to CYX and linked with `bond` commands in `tleap`).

- Troubleshooting Table:

| Issue | Likely Cause | Diagnostic Step | Solution |

|---|---|---|---|

| Rapid energy increase | Bad steric clash | Visualize the last stable frame (e.g., VMD, PyMOL). | Return to the minimized structure, apply stronger positional restraints during initial heating (5.0 kcal/mol·Å²). |

| Sudden coordinate NaN | Unphysical bond/angle | Check the simulation output for NaN values or overflow markers (e.g., `****`) in energies or coordinates. | Shorten the initial timestep to 0.5 fs during heating. If you use a 4-fs timestep, confirm that hydrogen mass repartitioning was applied (e.g., with ParmEd's `HMassRepartition`). |
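For the disulfide check in Q1, a short script can list SG-SG pairs and emit the matching `tleap` `bond` commands. This sketch assumes three things. You have already extracted cysteine SG coordinates with the residue numbering `tleap` will use (sequential after `pdb4amber`). Those residues are named CYX. The unit is loaded under the placeholder name `mol`. The 2.5 Å cutoff is an illustrative margin around the typical SG-SG bond length of about 2.05 Å.

```python
import math


def find_disulfides(sg_atoms, cutoff=2.5):
    """Pairs of cysteine residues whose SG atoms are closer than cutoff (Å).

    sg_atoms: list of (residue_number, (x, y, z)) for every CYS SG.
    """
    pairs = []
    for i, (res_i, xyz_i) in enumerate(sg_atoms):
        for res_j, xyz_j in sg_atoms[i + 1:]:
            if math.dist(xyz_i, xyz_j) < cutoff:
                pairs.append((res_i, res_j))
    return pairs


def tleap_bond_lines(pairs, unit_name="mol"):
    """tleap commands linking each SG pair; residues must be named CYX."""
    return [f"bond {unit_name}.{a}.SG {unit_name}.{b}.SG" for a, b in pairs]


sgs = [(22, (0.0, 0.0, 0.0)), (96, (2.04, 0.0, 0.0)), (140, (10.0, 0.0, 0.0))]
print("\n".join(tleap_bond_lines(find_disulfides(sgs))))
```

Compare the detected pairs with the expected antibody disulfides (e.g., the intradomain Cys22-Cys92 bridge in Chothia numbering of VH). A missing pair usually means a distorted model rather than a true free cysteine.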

Q2: When using Rosetta FlexPepDock or CDR loop modeling protocols, my models show unrealistic backbone dihedral angles (Ramachandran outliers) specifically in the grafted loop regions. How can I fix this?

A: This indicates a failure in the loop conformation sampling or refinement step.

- Protocol Enhancement:

- Increase the number of cyclic coordinate descent (CCD) closure attempts from the default (e.g., from 1000 to 5000) using the flag `-loops:max_ccd_cycles 5000`.

- Apply the `-loops:refine_only` flag combined with `-relax:thorough` to the problematic models, focusing refinement on a 9 Å region around the CDR loop.

- Incorporate backbone dihedral constraints from homologous structures (if available) using the `-constraints:cst_file` flag.

- Option names differ between Rosetta releases; confirm them with the application's `-help` output.

- Use the `FloppyTail` application for extreme flexibility prior to docking.

- Key Metrics Table for Model Validation:

| Metric | Acceptable Range | Tool for Assessment | Corrective Action if Out of Range |

|---|---|---|---|

| Ramachandran favored (%) | >98% for grafted loop | MolProbity, PHENIX | Apply Rosetta's FastRelax with a rama_2b weight map. |

| Omega angle outliers | <0.1% | MolProbity | Re-model or relax the loop with a protocol that moves the backbone (e.g., FastRelax); fixed-backbone protocols such as fixbb cannot correct omega angles. |

| Clashscore (all atom) | <5 | MolProbity | Run RosettaDock high-resolution refinement with `-docking_local_refine`. |

Q3: How do I effectively use AlphaFold2 or AlphaFold3 for predicting the conformation of a CDR loop in the context of a known antibody Fv framework, and what are the limitations?

A: Leverage AlphaFold's strength in template-based modeling while mitigating its stochasticity for hypervariable loops.

- Experimental Protocol (AlphaFold2, shown with ColabFold options; adapt them for other front ends such as AF2Complex):

- Input Preparation: Supply the full-length heavy and light chain sequences in a FASTA file. For context, include the target antigen sequence, separated by a colon (the ColabFold convention for complexes).

- Template Guidance: Provide the PDB file of your known Fv framework as a custom template (in ColabFold, `--templates --custom-template-path <dir>`) to bias the framework regions.

- Multi-Seed Sampling: Run 5-10 independent predictions with different random seeds (e.g., ColabFold `--num-seeds`). The model with the highest pLDDT in the CDR region is not always the most accurate, so cluster all predictions by CDR RMSD.

- Analysis: Extract the per-residue pLDDT and predicted aligned error (PAE), focusing on the CDR loops.

- Limitations & Data Summary Table:

| Model Feature | Strength for CDRs | Known Limitation | Quantitative Benchmark (Approx.) |

|---|---|---|---|

| pLDDT Score | High confidence (>90) correlates with accuracy. | Poor discriminator among intermediate-confidence (70-85) loop conformations. | CDR-H3 RMSD can vary by >4 Å for models with similar pLDDT. |

| Predicted Aligned Error (PAE) | Identifies flexible/disordered regions. | Underestimates error for conformational rearrangements upon binding. | N/A |

| Sequence Dependency | Excellent for canonical loops. | Struggles with rare lengths (>22 residues) or multiple disulfides in CDR. | Success rate (RMSD <2Å) drops from ~70% to <30% for non-canonical H3 loops. |

Visualization of Protocols

AlphaFold CDR Modeling & Validation Workflow

Stable MD Simulation Protocol for CDR Loops

The Scientist's Toolkit: Research Reagent Solutions

| Item Name | Vendor (Example) | Function in CDR Loop Modeling |

|---|---|---|

| AMBER (ff19SB/GAFF2) | Open Source / UCSF | Force field providing parameters for MD simulations of antibodies, including backbone and side chain energetics. |

| Rosetta Software Suite | University of Washington | Comprehensive suite for de novo loop remodeling, docking, and full-atom refinement, specialized for proteins. |

| ChimeraX / PyMOL | UCSF / Schrödinger | Visualization and analysis tools for model validation, clash detection, and measuring distances/angles. |

| MolProbity Server | Duke University | Critical validation service for checking steric clashes, rotamer outliers, and backbone dihedral angles. |

| AlphaFold2/3 ColabFold | DeepMind / GitHub | Cloud-based implementation for rapid, GPU-accelerated prediction of antibody-antigen complex structures. |

| GROMACS (2023+) | Open Source | High-performance MD engine suitable for large-scale sampling of loop conformational states on HPC clusters. |

| PDB Fixer | OpenMM | Prepares PDB files for simulation by adding missing atoms, loops (crudely), and protonation states. |

| PEP-FOLD3 | Université Paris Cité | De novo peptide folding tool useful for initial modeling of long, independent CDR-H3 loop conformations. |

Incorporating Co-factors and Solvent Effects in AI-Driven Docking Simulations

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our AI-docking simulation fails when a catalytic metal ion (co-factor) is present in the binding pocket. The predicted binding pose is physically impossible, with the ligand overlapping the ion. What could be the cause and solution?

A: This is a common issue where the AI scoring function lacks explicit parameters for metal-coordination chemistry. The model treats the ion as a generic charged sphere.

Troubleshooting Steps:

- Pre-process the co-factor: Ensure the metal ion has the correct formal charge and is parameterized with a suitable force field (e.g., AMBER, CHARMM) before input into the AI system.

- Use a hybrid approach: Run a classical molecular dynamics (MD) simulation with explicit solvent and the correct ion parameters to generate an ensemble of stable protein-co-factor conformations. Use this ensemble as input structures for the AI docking.

- Post-docking refinement: Subject the top AI-generated poses to a brief MD simulation or energy minimization with explicit ions and solvent to validate and refine the geometry.
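A quick geometric screen can flag the failure described in Q1, where pose atoms overlap the ion, before any MD refinement. This sketch assumes the pose is a list of (name, element, coordinates) tuples. Both distance thresholds are illustrative assumptions and should be tuned for your metal. For reference, Zn²⁺ typically coordinates N, O or S donors at roughly 2.0-2.3 Å.

```python
import math

# Illustrative thresholds (Å); tune for your metal and coordination chemistry.
MIN_CONTACT = 1.7   # any atom closer than this is treated as overlapping the ion
MAX_COORD = 2.6     # donor atoms within this distance count as coordinating


def metal_contacts(metal_xyz, pose_atoms, donors=("N", "O", "S")):
    """Classify pose atoms around a metal ion.

    pose_atoms: iterable of (atom_name, element, (x, y, z)).
    Returns (overlaps, coordinating) as lists of atom names.
    """
    overlaps, coordinating = [], []
    for name, element, xyz in pose_atoms:
        d = math.dist(metal_xyz, xyz)
        if d < MIN_CONTACT:
            overlaps.append(name)
        elif element in donors and d <= MAX_COORD:
            coordinating.append(name)
    return overlaps, coordinating
```

Poses with any overlaps can be discarded outright. Poses that keep the expected coordinating donors are the better candidates for the MD refinement step.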

Q2: How do we accurately account for explicit water molecules mediating a ligand-protein interaction in an AI docking protocol that uses an implicit solvation model?

A: Key mediating waters are often overlooked by implicit models. They must be treated as part of the receptor.

Experimental Protocol: "Explicit Bridge Water Retention"

- Obtain a high-resolution crystal structure (≤2.0 Å) of the target.

- Run a short (50-100 ns) explicit solvent MD simulation of the apo-protein to identify conserved water molecules within the binding site (occupancy > 0.8).

- Manually inspect the binding site for water molecules forming hydrogen-bond networks between protein and known ligands.

- Incorporate these conserved/structural water molecules as fixed, non-flexible residues in the receptor PDBQT file used for AI docking. The AI model will then treat them as part of the target geometry.
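The occupancy criterion in step 2 can be computed from a trajectory superposed on the binding-site residues. This sketch assumes candidate site positions (e.g., crystallographic water positions) and water-oxygen coordinates as a NumPy array. A site counts as occupied in a frame if any oxygen lies within `radius` of it; the 1.0 Å default is an illustrative choice. Function names are illustrative.

```python
import numpy as np


def site_occupancy(water_oxygens, site, radius=1.0):
    """Fraction of frames with at least one water oxygen within radius of site.

    water_oxygens: (n_frames, n_waters, 3) oxygen coordinates in Å.
    """
    o = np.asarray(water_oxygens, float)
    d = np.linalg.norm(o - np.asarray(site, float), axis=2)  # (frames, waters)
    return float((d.min(axis=1) < radius).mean())


def conserved_sites(water_oxygens, candidate_sites, radius=1.0, min_occupancy=0.8):
    """Indices of candidate sites whose occupancy exceeds min_occupancy."""
    return [i for i, site in enumerate(candidate_sites)
            if site_occupancy(water_oxygens, site, radius) > min_occupancy]
```

Only sites that pass this filter, and that also bridge protein and ligand on manual inspection, would be written into the receptor file.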

Q3: The AI-predocked conformation of our antibody-antigen complex shows strong complementarity, but subsequent MD shows rapid dissociation in explicit solvent. Why does the AI score not capture this instability?

A: The discrepancy likely arises from the lack of explicit solvation and entropic effects in the AI training data. AI models trained on static crystal structures may favor overly tight, "dry" interfaces that are not solvated correctly.

Diagnosis and Solution:

- Analyze the interface: Use a tool such as PISA (Protein Interfaces, Surfaces and Assemblies) to estimate the solvation free energy gain upon interface formation (ΔiG). Compare the ΔiG of the AI-predicted pose with values for known stable complexes.

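- Implement a solvation checkpoint: Before accepting the top AI pose, run a short explicit-solvent MD simulation of the complex and perform an MM/PBSA (Molecular Mechanics/Poisson-Boltzmann Surface Area) calculation on its snapshots. MM/PBSA strips the explicit waters and scores each snapshot with a continuum solvation model, so it is not fully explicit, but it adds the solvation and desolvation terms that a static interface score may omit.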

- Test with SMD: Use steered molecular dynamics (SMD) in explicit water to probe the mechanical stability of the docked pose. Unrealistic interactions missed by static scoring may rupture early under pulling.
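The core of a single-trajectory MM/PBSA estimate is an average over snapshots of the per-frame difference G_complex − G_receptor − G_ligand. The sketch below assumes the per-frame free energies (E_MM + G_PB + G_SA, in kcal/mol) have already been computed by a tool such as MMPBSA.py; it performs only the averaging, omits the entropy term, and its standard error treats frames as independent, which overstates precision for correlated snapshots. The function name is hypothetical.

```python
import numpy as np


def mmpbsa_single_trajectory(g_complex, g_receptor, g_ligand):
    """Mean and naive standard error of per-frame ΔG_bind (kcal/mol).

    Each input holds per-snapshot free energies G = E_MM + G_PB + G_SA, with
    receptor and ligand taken from the same complex snapshot (single-trajectory
    approach). The conformational-entropy term is not included.
    """
    gc, gr, gl = (np.asarray(x, dtype=float) for x in (g_complex, g_receptor, g_ligand))
    dg = gc - gr - gl                        # per-frame binding free energy
    sem = dg.std(ddof=1) / np.sqrt(dg.size)  # assumes independent frames
    return float(dg.mean()), float(sem)
```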

Table 1: Performance Comparison of Docking Methods with Co-factors

| Method | Co-factor Handling | Success Rate (RMSD < 2.0 Å) | ΔG Prediction Error (kcal/mol) | Computational Cost (GPU h) |
|---|---|---|---|---|
| AI Docking (Baseline) | Implicit / Generic Charge | 42% | 3.8 ± 1.2 | 0.5 |
| AI Docking + Pre-Param. Ion | Explicit Parameters | 65% | 2.1 ± 0.9 | 1.0 |
| Hybrid MD/AI Ensemble | Explicit, Dynamic | 78% | 1.5 ± 0.7 | 24.0 |
| Classical Docking (Ref.) | Explicit Parameters | 58% | 2.5 ± 1.0 | 5.0 |

Table 2: Impact of Explicit Solvent Bridges on Binding Affinity Prediction

| System | No. of Bridging Waters | AI Score (pKd) | MM/PBSA Score (pKd) | Experimental (pKd) |
|---|---|---|---|---|
| Antibody A / Antigen X | 0 (Dry) | 8.9 | 6.2 | 7.1 |
| Antibody A / Antigen X | 2 (Conserved) | 7.5 | 7.0 | 7.1 |
| Protease / Inhibitor Y | 1 (Catalytic) | 9.2 | 8.8 | 8.9 |
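Table 1 reports errors in kcal/mol while Table 2 reports pKd values. The standard relation ΔG = RT ln Kd = −RT ln(10)·pKd converts between them; at 298.15 K, one pKd unit corresponds to about 1.36 kcal/mol. A minimal sketch, with temperature as an assumed parameter and hypothetical function names:

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol·K)


def pkd_to_dg(pkd, temp_k=298.15):
    """ΔG = RT ln Kd = -RT ln(10) * pKd, in kcal/mol."""
    return -R_KCAL * temp_k * math.log(10.0) * pkd


def pkd_error_to_kcal(delta_pkd, temp_k=298.15):
    """Magnitude of a pKd discrepancy expressed in kcal/mol."""
    return abs(R_KCAL * temp_k * math.log(10.0) * delta_pkd)
```

For example, the 1.8-unit overprediction for the dry Antibody A / Antigen X pose (8.9 vs. 7.1) corresponds to roughly 2.5 kcal/mol.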

Experimental Protocols

Protocol: Hybrid MD/AI Docking for Antibody Conformational Changes with Solvent

This protocol addresses a limitation central to this article: predicting antibody paratope flexibility.

- System Preparation:

- Start with the Fv fragment of the antibody (PDB ID).

- Assign protonation states at pH 7.4 using PDB2PQR.

- Parameterize any co-factors (e.g., a catalytic Zn²⁺) with MCPB.py (for AMBER).

- Conformational Sampling (MD):

- Solvate the system in a TIP3P water box with 150 mM NaCl.

- Minimize, heat to 310 K, and equilibrate under NPT conditions.

- Run a production MD simulation for 200 ns. Save frames every 100 ps.

- Cluster Analysis:

- Cluster the paratope (CDR-H3/L3) residues from the MD trajectory using RMSD-based clustering (e.g., `gmx cluster` in GROMACS).

- Select the centroid structures of the five most populated clusters as representative conformers.
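The clustering step can be sketched with NumPy as a Kabsch-superposition RMSD plus the greedy GROMOS-style algorithm (one of the methods offered by `gmx cluster` via `-method gromos`). This sketch refits each pair of frames on the same paratope atoms used for the RMSD, which is an assumption (one could instead superimpose on the framework), and the cutoff is user-chosen. The pure-Python pairwise loop scales as O(n²) in frames, so for the 2000 frames of a 200 ns run, MDTraj or `gmx cluster` is the practical route. Function names are illustrative.

```python
import numpy as np


def kabsch_rmsd(P, Q):
    """RMSD (Å) between two (n_atoms, 3) coordinate sets after optimal superposition."""
    P = np.asarray(P, dtype=float)
    Q = np.asarray(Q, dtype=float)
    P = P - P.mean(axis=0)
    Q = Q - Q.mean(axis=0)
    U, _, Vt = np.linalg.svd(P.T @ Q)
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0  # avoid an improper rotation
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    diff = P @ R.T - Q
    return float(np.sqrt((diff ** 2).sum() / len(P)))


def pairwise_rmsd(frames):
    """Symmetric RMSD matrix for a list of (n_atoms, 3) paratope coordinate frames."""
    n = len(frames)
    m = np.zeros((n, n))
    for i in range(n):
        for j in range(i + 1, n):
            m[i, j] = m[j, i] = kabsch_rmsd(frames[i], frames[j])
    return m


def gromos_cluster(rmsd, cutoff):
    """Greedy GROMOS-style clustering; returns [(centre, members)], largest first."""
    rmsd = np.asarray(rmsd, dtype=float)
    neighbours = rmsd <= cutoff  # diagonal is 0, so each frame neighbours itself
    remaining = np.ones(len(rmsd), dtype=bool)
    clusters = []
    while remaining.any():
        counts = (neighbours & remaining).sum(axis=1)
        counts[~remaining] = -1  # already-assigned frames cannot become centres
        centre = int(np.argmax(counts))
        members = np.flatnonzero(neighbours[centre] & remaining)
        clusters.append((centre, members.tolist()))
        remaining[members] = False
    return clusters
```

With `clusters = gromos_cluster(pairwise_rmsd(frames), cutoff)`, the centres of `clusters[:5]` are the five representative conformers.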

- AI-Driven Ensemble Docking:

- Prepare each antibody centroid conformer and the antigen as input for the AI docking software (e.g., with the ADFR Suite `prepare_receptor` and `prepare_ligand` scripts when the tool expects PDBQT input).

- Run docking against each conformer. Aggregate and rank all results by the AI's confidence score.
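Pooling poses across conformers amounts to a flatten-and-sort. The sketch below assumes each docking run yields (pose ID, confidence) pairs, with higher confidence being better; the data layout and function name are hypothetical.

```python
def aggregate_ensemble(results, top_n=20):
    """Pool docking poses from several receptor conformers and rank them.

    results: mapping conformer_id -> list of (pose_id, confidence) pairs,
             where a higher confidence is assumed to be better.
    Returns the top_n (conformer_id, pose_id, confidence) tuples.
    """
    pooled = [
        (conf_id, pose_id, score)
        for conf_id, poses in results.items()
        for pose_id, score in poses
    ]
    pooled.sort(key=lambda item: item[2], reverse=True)
    return pooled[:top_n]
```

Confidence scores from different receptor conformers are not guaranteed to be on a comparable scale; if they are not, per-conformer normalization (e.g., z-scores) before pooling is one option.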

- Solvation & Scoring Validation:

- For the top 20 poses, run a 50 ns explicit solvent MD.

- Calculate the MM/PBSA binding free energy over the last 20 ns.

- Select the pose with the most favorable MM/PBSA score and a stable RMSD over the analysis window.
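The final selection rule combines an energy criterion with a stability criterion. The sketch below assumes that mean MM/PBSA ΔG values and an RMSD time series covering the analysis window (e.g., the last 20 ns) are available for each pose. The stability thresholds (RMSD standard deviation ≤ 0.5 Å, drift ≤ 1.0 Å between the first and last quarters of the window) are illustrative assumptions, not values from the protocol.

```python
import numpy as np


def select_pose(poses, max_rmsd_sd=0.5, max_drift=1.0):
    """Pick the pose with the most negative mean ΔG among RMSD-stable poses.

    poses: mapping pose_id -> (mean_dg_kcal, rmsd_series_angstrom).
    Returns None if no pose passes the stability filter.
    """
    stable = {}
    for pose_id, (dg, rmsd) in poses.items():
        r = np.asarray(rmsd, dtype=float)
        q = max(1, len(r) // 4)
        drift = abs(r[-q:].mean() - r[:q].mean())  # last vs. first quarter
        if r.std() <= max_rmsd_sd and drift <= max_drift:
            stable[pose_id] = dg
    if not stable:
        return None
    return min(stable, key=stable.get)  # most favorable (lowest) ΔG
```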

Visualizations

Title: Hybrid MD-AI Workflow for Antibody Docking

Title: Solvent Effect Troubleshooting Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment | Example/Detail |
|---|---|---|
| Force Field Parameters for Ions | Provides accurate bonded/non-bonded terms for metal co-factors (e.g., Zn²⁺, Mg²⁺) in MD simulations. | MCPB.py (for AMBER); CHARMM GUI Metal Center Builder. |
| Explicit Solvent Box | Creates a realistic aqueous environment for MD simulations to model solvent effects. | TIP3P, TIP4P water models; 150 mM NaCl for physiological ionic strength. |
| Trajectory Analysis Suite | Processes MD data to cluster conformations, calculate RMSD, and identify conserved waters. | `gmx cluster`, `gmx rms` (GROMACS); VMD; MDTraj (Python). |
| AI Docking / Structure Prediction Software | Performs rapid, deep-learning-based pose prediction and scoring. | AlphaFold 3 (complex structure prediction); DiffDock, EquiBind (designed for small-molecule ligands). |
| MM/PBSA Calculation Tool | Computes solvation-inclusive binding free energies from MD trajectories. | g_mmpbsa (GROMACS); MMPBSA.py (AmberTools). |
| High-Resolution Structure | Essential starting point to identify structural waters and correct binding-site geometry. | RCSB PDB entry with resolution ≤ 2.0 Å. |

Technical Support Center

Troubleshooting Guide & FAQs

Q1: Our AI-predicted antibody conformational changes show unrealistic backbone torsions or clashes in the CDR loops. What are the primary checks and corrections? A: This is a common limitation of AI models trained on static structures. First, run a steric clash check with a tool such as MolProbity or UCSF Chimera. If clashes are present, relax the structure with restrained energy minimization followed by a short restrained molecular dynamics (MD) run in explicit solvent (e.g., using AMBER or GROMACS). For torsions, compare the predicted backbone φ/ψ angles with Ramachandran distributions from the PDB and with the canonical conformations of the relevant CDR loop class. Use a physics-based force-field refinement to correct energetically unfavorable states before proceeding to experimental validation.
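For the torsion check, backbone φ/ψ angles can be computed directly from N, CA, and C coordinates and then compared with Ramachandran or CDR canonical-class distributions. A minimal NumPy sketch, assuming one ordered, unbroken chain with no missing backbone atoms; function names are illustrative.

```python
import numpy as np


def dihedral(p0, p1, p2, p3):
    """Dihedral angle in degrees, in [-180, 180], defined by four points."""
    p0, p1, p2, p3 = (np.asarray(p, dtype=float) for p in (p0, p1, p2, p3))
    b0 = p0 - p1
    b1 = p2 - p1
    b2 = p3 - p2
    b1 = b1 / np.linalg.norm(b1)
    v = b0 - np.dot(b0, b1) * b1  # component of b0 perpendicular to the axis
    w = b2 - np.dot(b2, b1) * b1  # component of b2 perpendicular to the axis
    x = np.dot(v, w)
    y = np.dot(np.cross(b1, v), w)
    return float(np.degrees(np.arctan2(y, x)))


def backbone_phi_psi(n_xyz, ca_xyz, c_xyz):
    """phi/psi per residue from (n_res, 3) N, CA, C arrays; NaN where undefined."""
    n_res = len(ca_xyz)
    phi = np.full(n_res, np.nan)
    psi = np.full(n_res, np.nan)
    for i in range(n_res):
        if i > 0:  # phi needs the previous residue's carbonyl C
            phi[i] = dihedral(c_xyz[i - 1], n_xyz[i], ca_xyz[i], c_xyz[i])
        if i < n_res - 1:  # psi needs the next residue's N
            psi[i] = dihedral(n_xyz[i], ca_xyz[i], c_xyz[i], n_xyz[i + 1])
    return phi, psi
```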

Q2: After generating AI-predicted frames, which biophysical technique is most suitable for initial, rapid validation of a putative conformational change? A: For initial screening, Surface Plasmon Resonance (SPR) or Bio-Layer Interferometry (BLI) is recommended to detect changes in binding kinetics. A significant change in the dissociation rate constant (k_off, also written kd) between the antibody and its antigen across conditions (e.g., a pH shift) is consistent with, though not proof of, a predicted conformational switch. Match the experimental buffer conditions (pH, ionic strength) to the environment assumed in the in silico prediction. Negative results here may suggest that the AI-predicted state is not populated under the tested conditions.
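If SPR or BLI dissociation curves are available, k_off can be estimated from a 1:1 exponential decay, R(t) = R0·exp(−k_off·t). The sketch below uses a log-linear least-squares fit for transparency and assumes baseline-corrected data from a single-exponential dissociation phase. Instrument software normally uses nonlinear (often global) fits that weight noise more appropriately, so treat this as a rough cross-check. The function name is hypothetical.

```python
import numpy as np


def fit_koff(t_s, response, baseline=0.0):
    """Estimate (k_off in 1/s, R0) from a 1:1 dissociation phase R(t) = R0 exp(-k_off t).

    t_s is time since the start of dissociation; `baseline` is subtracted first.
    The log-linear fit uses only points with positive signal.
    """
    t = np.asarray(t_s, dtype=float)
    r = np.asarray(response, dtype=float) - baseline
    keep = r > 0
    slope, intercept = np.polyfit(t[keep], np.log(r[keep]), 1)
    return float(-slope), float(np.exp(intercept))
```

Comparing k_off (or the complex half-life, ln 2 / k_off) between conditions then gives the quantity discussed above.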

Q3: During Hydrogen-Deuterium Exchange Mass Spectrometry (HDX-MS) validation, we see low deuterium uptake changes compared to our AI-predicted dramatic conformational shift. What does this imply? A: Low HDX-MS signal change can indicate: 1) The predicted conformational state is not significantly populated in solution. 2) The conformational change is highly dynamic and averaged out in the measurement timeframe. 3) The structural change is localized and does not alter backbone solvent accessibility. Revisit the AI model's confidence scores for that region. Consider complementary techniques like time-resolved FRET or MD simulations to probe for transient or subtle changes.

Q4: How do we reconcile a high-confidence AI prediction with negative experimental validation from X-ray crystallography? A: Crystallography typically captures a single dominant state, which may be selected or stabilized by crystal packing rather than being the preferred state in solution. A negative result may mean that: 1) the predicted state is transient or poorly populated under the crystallization conditions, or 2) crystal packing forces prevent the transition. To address this, try co-crystallization under the condition predicted to induce the change (e.g., with antigen or at a different pH). If unsuccessful, use solution techniques such as Small-Angle X-ray Scattering (SAXS) to detect populations of alternative conformations.
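For SAXS, a first comparison between a predicted conformer and solution data is often the radius of gyration. The sketch below fits the Guinier approximation, ln I(q) = ln I(0) − (Rg²/3)q², and computes an unweighted or mass-weighted Rg from model coordinates. It assumes q in Å⁻¹ and a q range already chosen so that q·Rg ≲ 1.3 (the third return value lets you check this). Model Rg omits the hydration shell, so a tool such as CRYSOL is needed for a quantitative comparison. Function names are illustrative.

```python
import numpy as np


def guinier_fit(q, intensity):
    """Fit ln I = ln I0 - (Rg^2 / 3) q^2; returns (Rg, I0, q_max * Rg)."""
    q = np.asarray(q, dtype=float)
    i = np.asarray(intensity, dtype=float)
    slope, intercept = np.polyfit(q ** 2, np.log(i), 1)
    if slope >= 0:
        raise ValueError("non-negative Guinier slope; check q range and sample quality")
    rg = np.sqrt(-3.0 * slope)
    return float(rg), float(np.exp(intercept)), float(q.max() * rg)


def model_rg(coords, masses=None):
    """Radius of gyration of model coordinates (unweighted unless masses are given)."""
    x = np.asarray(coords, dtype=float)
    w = np.ones(len(x)) if masses is None else np.asarray(masses, dtype=float)
    com = (w[:, None] * x).sum(axis=0) / w.sum()
    sq = ((x - com) ** 2).sum(axis=1)
    return float(np.sqrt((w * sq).sum() / w.sum()))
```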

Q5: Our MD simulation, initiated from an AI-predicted frame, rapidly collapses back to the known ground state. Is the prediction invalid? A: Not necessarily. This could indicate that the AI-predicted state is a metastable intermediate or requires a specific trigger (e.g., antigen binding, post-translational modification) for stabilization. Examine the simulation trajectory for early-stage structural features that match the prediction before collapse—these may be genuine characteristics of an unstable intermediate. Consider running metadynamics or umbrella sampling simulations to compute the free energy landscape between the ground and predicted states.
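In metadynamics, the accumulated bias approximates the negative free energy along the chosen collective variable (here, for example, an RMSD to the predicted state, which is an assumption). The sketch below reconstructs a 1D profile from deposited Gaussians: F(s) ≈ −V(s) for standard metadynamics and F(s) ≈ −γ/(γ−1)·V(s) for well-tempered metadynamics with bias factor γ. It assumes the heights passed in are those actually added to the bias; check your metadynamics software's convention for stored hill heights before reusing its output. The function name is hypothetical.

```python
import numpy as np


def fes_from_hills(s_grid, centres, heights, sigma, bias_factor=None):
    """Estimate F(s) on `s_grid` from 1D Gaussian hills; shifted so min(F) = 0."""
    s = np.asarray(s_grid, dtype=float)[:, None]
    c = np.asarray(centres, dtype=float)[None, :]
    h = np.asarray(heights, dtype=float)[None, :]
    # Accumulated bias V(s) = sum_i h_i exp(-(s - s_i)^2 / (2 sigma^2))
    bias = (h * np.exp(-((s - c) ** 2) / (2.0 * sigma ** 2))).sum(axis=1)
    # Standard metadynamics: F = -V; well-tempered: F = -gamma / (gamma - 1) * V
    scale = 1.0 if bias_factor is None else bias_factor / (bias_factor - 1.0)
    free_energy = -scale * bias
    return free_energy - free_energy.min()
```

A barrier between the ground-state and predicted-state basins in the resulting profile would support the metastable-intermediate interpretation.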

Key Experimental Protocols

Protocol 1: Constrained MD Refinement of AI-Predicted Structures

- Preparation: Solvate the AI-predicted PDB file in a TIP3P water box with 10 Å padding using `tleap` (AMBER) or `gmx solvate` (GROMACS). Add ions to neutralize the system.

- Minimization: Perform 5000 steps of steepest descent minimization, restraining protein heavy atoms with a force constant of 10 kcal/mol·Å².

- Heating & Equilibration: Heat the system from 0 to 300 K over 100 ps in the NVT ensemble, followed by 1 ns equilibration in the NPT ensemble (1 atm), maintaining restraints.

- Production: Run a short (5-10 ns) MD simulation in the NPT ensemble with reduced backbone restraints (1-2 kcal/mol·Å²) or no restraints, using a 2-fs timestep with bonds to hydrogen constrained (e.g., SHAKE or LINCS).

- Analysis: Cluster the trajectory and take the centroid of the largest cluster as the refined structure for experimental testing.
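The force constants above are given in kcal/mol·Å², whereas GROMACS uses kJ/(mol·nm²). Since 1 kcal = 4.184 kJ and 1 Å² = 0.01 nm², 1 kcal/mol·Å² = 418.4 kJ/(mol·nm²). The sketch below performs that conversion and writes a production-stage `.mdp` as text. The specific settings (PME, 1.0 nm cutoffs, V-rescale and Parrinello-Rahman coupling, 1.01325 bar for 1 atm) are common choices assumed here rather than values from the protocol, and the restraint strength itself must be set in the position-restraint `.itp` file (e.g., one generated with `gmx genrestr -fc`). Function names are hypothetical.

```python
# 1 kcal = 4.184 kJ and 1 Å^2 = 0.01 nm^2, so 1 kcal/(mol·Å^2) = 418.4 kJ/(mol·nm^2).
KCAL_A2_TO_KJ_NM2 = 4.184 / 0.01


def restraint_kj_nm2(k_kcal_per_mol_a2):
    """Convert a restraint force constant from kcal/(mol·Å²) to kJ/(mol·nm²)."""
    return k_kcal_per_mol_a2 * KCAL_A2_TO_KJ_NM2


def production_mdp(length_ns=10.0, dt_ps=0.002, temp_k=300.0, restrained=True):
    """Return GROMACS .mdp text for an NPT production run (assumed typical settings)."""
    opts = {
        "integrator": "md",
        "dt": dt_ps,
        "nsteps": int(round(length_ns * 1000.0 / dt_ps)),
        "cutoff-scheme": "Verlet",
        "coulombtype": "PME",
        "rcoulomb": 1.0,
        "rvdw": 1.0,
        "constraints": "h-bonds",  # constrain bonds to H so a 2 fs step is stable
        "constraint-algorithm": "lincs",
        "tcoupl": "V-rescale",
        "tc-grps": "System",
        "tau-t": 0.1,
        "ref-t": temp_k,
        "pcoupl": "Parrinello-Rahman",
        "tau-p": 2.0,
        "ref-p": 1.01325,  # 1 atm expressed in bar
        "compressibility": 4.5e-5,
    }
    if restrained:
        # Activates #ifdef POSRES blocks; force constants live in the posre .itp file.
        opts["define"] = "-DPOSRES"
    return "\n".join(f"{key:<22}= {value}" for key, value in opts.items())
```

For example, the 2 kcal/mol·Å² upper bound on production restraints corresponds to about 836.8 kJ/(mol·nm²).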

Protocol 2: HDX-MS for Conformational Change Validation

- Labeling: Dilute the antibody (10 µM) into deuterated buffer (pD 7.4, 25 °C) and label for seven time points (10 s to 4 h). Quench with cold, low-pH buffer (final pH 2.5).

- Digestion & Analysis: Pass quenched sample over an immobilized pepsin column at 0°C. Trap peptides on a C18 cartridge, separate via UPLC, and analyze with a high-resolution mass spectrometer.

- Data Processing: Use software (e.g., HDExaminer) to identify peptides and calculate deuterium uptake for each time point. Compare uptake between the antibody alone and in complex with antigen or under perturbing conditions.

- Mapping: Map significantly altered peptides (|ΔD| > 0.5 Da and p < 0.01) onto the AI-predicted model to assess regional agreement.
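The uptake calculation and the significance rule in the mapping step can be sketched as follows. The sketch assumes replicate uptake values (Da) per peptide at a given time point for each state and uses Welch's t-test from SciPy for the p-value. It counts exchangeable amides, the denominator for fractional uptake, as peptide length minus the N-terminal residue minus non-N-terminal prolines. Some workflows apply stricter or hybrid significance tests, so the thresholds here simply mirror those stated above; function names are illustrative.

```python
import numpy as np
from scipy import stats


def max_exchangeable_amides(sequence):
    """Amides able to retain deuterium: length minus the N-terminal residue minus
    prolines after position 1 (some workflows also drop residue 2)."""
    seq = sequence.upper()
    return len(seq) - 1 - seq[1:].count("P")


def uptake_da(deuterated_mz, undeuterated_mz, charge):
    """Deuterium uptake in Da from isotope-envelope centroid m/z values at one charge state."""
    return (deuterated_mz - undeuterated_mz) * charge


def significant_peptides(state_a, state_b, delta_cut=0.5, alpha=0.01):
    """Return {peptide: (mean difference, p-value)} for peptides passing both criteria.

    state_a, state_b: mapping peptide -> replicate uptake values (Da) at one time point.
    """
    hits = {}
    for pep in state_a.keys() & state_b.keys():
        a = np.asarray(state_a[pep], dtype=float)
        b = np.asarray(state_b[pep], dtype=float)
        diff = b.mean() - a.mean()
        p = stats.ttest_ind(a, b, equal_var=False).pvalue  # Welch's t-test
        if abs(diff) > delta_cut and p < alpha:
            hits[pep] = (float(diff), float(p))
    return hits
```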

Data Presentation

Table 1: Comparison of Experimental Techniques for Validating AI-Predicted Conformations

| Technique | Resolution | Timescale | Sample Consumption | Key Metric for Validation | Suitability for Transient States |
|---|---|---|---|---|---|
| X-ray Crystallography | Atomic (~1-2 Å) | Static | Moderate (~mg for crystallization screening) | Electron density fit | Poor (captures dominant state) |
| Cryo-EM | Near-atomic (~3-4 Å) | Static | Moderate (~µg-mg) | 3D reconstruction map | Moderate (can resolve multiple states) |
| HDX-MS | Peptide level (5-20 residues) | Seconds to hours | Low (~pmol) | Deuterium uptake (Da) | Excellent (probes dynamics) |
| SAXS | Global shape (~10 Å) | Ensemble average (ms in time-resolved mode) | Moderate (~mg) | Pair-distance distribution | Good (detects ensemble changes) |
| FRET | Distance (20-80 Å) | Nanoseconds to seconds | Very low | Efficiency (E) | Excellent for kinetics |
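For FRET, the Förster relation E = 1/(1 + (r/R0)⁶) links transfer efficiency to donor-acceptor distance, so distances measured on an AI-predicted model can be converted into expected efficiencies and vice versa. The sketch assumes a single fixed distance, with κ² = 2/3 already built into R0. The R0 = 60 Å in the usage note is an assumed placeholder; use the published value for your dye pair. Function names are illustrative.

```python
def fret_efficiency(r, r0):
    """Förster efficiency E = 1 / (1 + (r / R0)^6) for a fixed donor-acceptor distance."""
    return 1.0 / (1.0 + (r / r0) ** 6)


def fret_distance(e, r0):
    """Invert the Förster relation: r = R0 * (1/E - 1)^(1/6), valid for 0 < E < 1."""
    if not 0.0 < e < 1.0:
        raise ValueError("efficiency must lie strictly between 0 and 1")
    return r0 * (1.0 / e - 1.0) ** (1.0 / 6.0)
```

For example, `fret_distance(0.5, 60.0)` returns 60.0. E is most sensitive to distance near R0, so label sites are best chosen so that the predicted distances fall roughly within 0.5-1.5 R0.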

Table 2: Example Reagent Table for HDX-MS Validation Experiment

| Item | Function/Description | Example Product (Supplier) |
|---|---|---|
| Deuterium Oxide (D₂O) | Solvent for the deuterated labeling buffer. | 99.9% D₂O (Sigma-Aldrich) |
| Immobilized Pepsin Column | Rapid, cold digestion of labeled protein into peptides. | Poroszyme Immobilized Pepsin (Thermo Fisher) |
| UPLC System | Low-temperature, fast chromatographic separation to minimize back-exchange. | Vanquish Horizon (Thermo Fisher) |
| High-Resolution Mass Spectrometer | Accurate mass detection for deuterated peptides. | Q Exactive HF (Thermo Fisher) |
| HDX Data Analysis Software | Automated processing, analysis, and visualization of HDX-MS data. | HDExaminer (Sierra Analytics) |

Visualizations

Title: Hybrid AI-Experimental Validation Pipeline Workflow

Title: HDX-MS Experimental Workflow Steps

The Scientist's Toolkit: Research Reagent Solutions

| Item | Category | Function in Conformational Analysis |
|---|---|---|
| Size Exclusion Chromatography (SEC) Column | Protein Purification | Ensures monodispersity of the antibody sample before biophysical assays by removing aggregates that skew data. |
| Anti-His Tag Biosensor (for BLI) | Binding Assay | Captures His-tagged proteins for kinetic measurements of antigen binding, to test predicted affinity changes. |
| SPR Chip (CM5 Series) | Binding Assay | Widely used carboxymethylated-dextran surface for immobilizing antigen or antibody to measure real-time binding kinetics and affinity. |
| SEC-SAXS Buffer Kit | Structural Biology | Provides pre-matched, ultra-pure buffers for SAXS to minimize background scattering and aggregation. |
| Cryo-EM Grids (Quantifoil R1.2/1.3) | Structural Biology | Holey carbon films for vitrifying samples to capture single-particle images for 3D reconstruction. |
| Spin Label for DEER (MTSSL) | Spectroscopy | Site-directed spin labeling of engineered cysteines for pulsed EPR (DEER) measurements to test long-range distance predictions. |
| Fluorophore Pair for FRET (e.g., Alexa 488/594) | Spectroscopy | Conjugated to engineered cysteines to measure distances and dynamics between specific sites in solution. |

Troubleshooting Inaccurate AI Predictions: A Researcher's Diagnostic Guide

Troubleshooting Guides & FAQs