Harmony Batch Correction: The Complete Guide for Single-Cell RNA-Seq Data Integration (2024 Workflow)

This comprehensive guide provides researchers, scientists, and drug development professionals with a detailed, practical workflow for using Harmony to correct batch effects in single-cell RNA sequencing data.

Harmony Batch Correction: The Complete Guide for Single-Cell RNA-Seq Data Integration (2024 Workflow)

Abstract

This comprehensive guide provides researchers, scientists, and drug development professionals with a detailed, practical workflow for using Harmony to correct batch effects in single-cell RNA sequencing data. The article covers foundational concepts, step-by-step implementation, common troubleshooting strategies, and comparative validation against other methods, delivering a complete resource for robust and reproducible data integration.

What is Harmony Batch Correction? Understanding the Core Challenge of scRNA-Seq Integration

1. Introduction & Definitions Batch effects are non-biological sources of variation introduced during the technical execution of experiments. In single-cell genomics, they confound true biological signals, complicating data integration and interpretation. Distinguishing them from biological variation is critical. This Application Note, framed within a thesis on the Harmony batch correction workflow, details protocols for identifying and addressing these effects.

2. Quantitative Comparison of Variation Sources The table below summarizes common sources of variation, their characteristics, and distinguishing features.

Table 1: Characteristics of Technical Batch Effects vs. Biological Variation

| Aspect | Technical Batch Effects | Biological Variation |

|---|---|---|

| Primary Source | Library prep date, reagent lot, sequencer, operator. | Cell type, developmental stage, disease state, treatment response. |

| Expression Pattern | Often affects genes uniformly across cell types (e.g., mitochondrial gene percentage shift). | Cell-type-specific gene expression programs. |

| Impact on Clustering | Causes "batch-clustered" cells, splitting same cell type across clusters. | Drives meaningful separation into distinct cell types/states. |

| Detectable via Controls | Revealed by technical replicates or multiplexed reference samples (e.g., cell hashing). | Revealed by biological replicates from distinct donors/conditions. |

| Correction Goal | Remove/attenuate to integrate data across batches. | Preserve and analyze. |

3. Protocol: Experimental Design to Isolate Batch Effects Objective: To empirically distinguish batch effects from biological variation using a replicated study design. Materials: Fresh or cryopreserved cell samples from at least 2 distinct biological conditions (e.g., healthy vs. disease) and 2 donors per condition.

Procedure:

- Sample Multiplexing: Label cells from each donor/condition with a unique lipid-tagged antibody (Cell Multiplexing Kit, e.g., BioLegend TotalSeq-B). Pool all labeled samples into a single tube.

- Library Preparation Split: Divide the pooled cell suspension into two equal aliquots.

- Batch Introduction: Process each aliquot as a separate batch:

- Batch A: Process on Day 1, using Kit Lot #XYZ, by Operator 1.

- Batch B: Process on Day 2, using Kit Lot #ABC, by Operator 2.

- All other protocols (sequencing platform, depth) are kept identical.

- Sequencing & Demultiplexing: Sequence libraries and demultiplex based on both the cell multiplexing oligo tags (biological origin) and the batch index (technical origin).

- Analysis: Generate a combined UMAP. Initially, cells will cluster strongly by batch. After applying a batch correction tool (e.g., Harmony), successful correction will lead to clustering primarily by donor and biological condition.

4. Protocol: Computational Assessment with Harmony Objective: Apply and evaluate Harmony for batch effect correction in a single-cell RNA-seq dataset. Software: R (v4.3+), Seurat (v5.0+), harmony (v1.2+).

Procedure:

- Data Input: Load your merged, quality-controlled Seurat object (

seurat_obj). Ensure it contains a metadata column (e.g.,"Batch") specifying the source batch for each cell.

Run Harmony Integration: Use the

RunHarmonyfunction to integrate cells across batches.Downstream Clustering & UMAP: Use Harmony's corrected embeddings for clustering and visualization.

Evaluation Metrics: Quantify integration success.

- Local Structure: Calculate Local Inverse Simpson's Index (LISI) for batch labels (higher post-correction indicates better mixing).

- Biological Conservation: Calculate LISI for cell type labels (should remain stable or increase slightly).

- Use

scibmetrics package for standardized benchmarking.

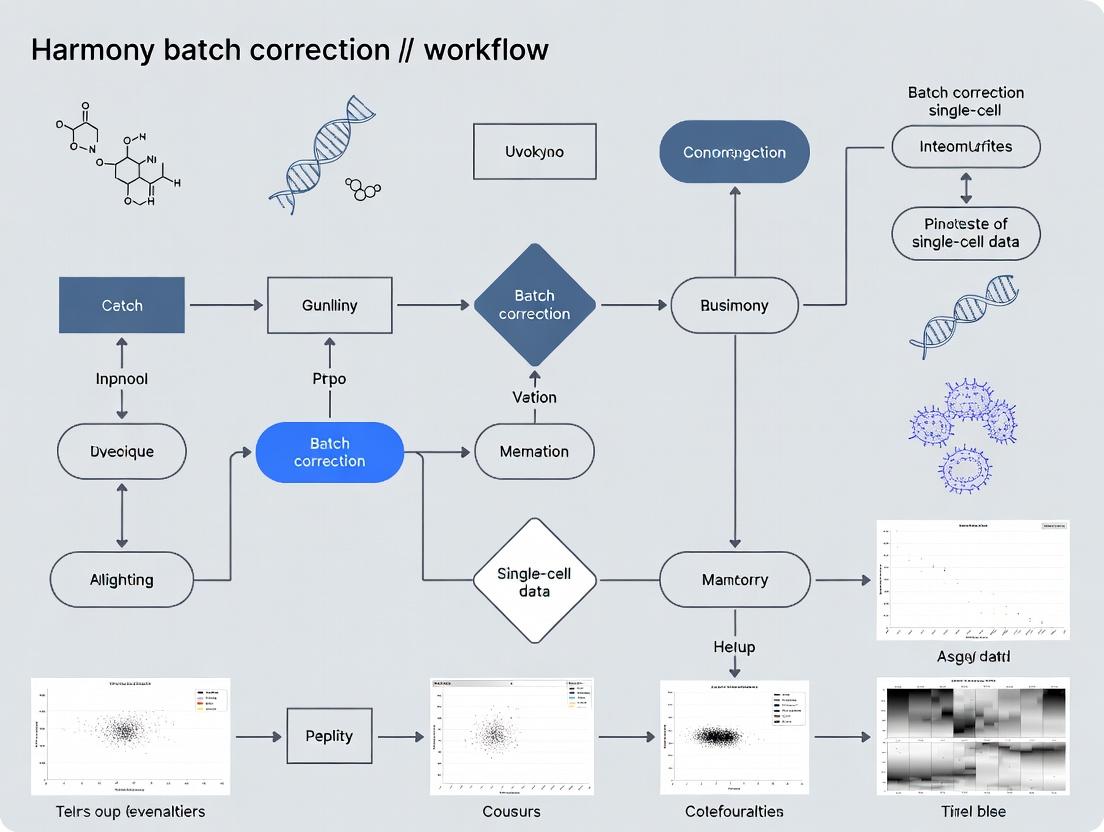

5. Visualizing the Workflow and Correction Logic

Diagram 1: Harmony Batch Correction Workflow Overview

Diagram 2: Harmony's Correction Principle

6. The Scientist's Toolkit: Key Research Reagents & Solutions Table 2: Essential Materials for Batch Effect Investigation

| Item | Function & Role in Batch Control |

|---|---|

| Single-Cell 3' or 5' Kit (v3.1) | Standardized chemistry for cDNA synthesis & library prep. Using same kit lot minimizes batch variation. |

| Cell Multiplexing Oligo-Tagged Antibodies (e.g., TotalSeq-B) | Allows pooling of multiple biological samples prior to processing, making them subject to identical technical noise. |

| Viability Dye (e.g., DRAQ7) | Ensures consistent selection of live cells across batches, reducing technical bias from dead cell RNA. |

| Fixed RNA Profiling Kit | Stabilizes transcriptome at point of fixation, allowing samples collected at different times to be processed together. |

| Commercial Reference RNA (e.g., ERCC/SIRV Spikes) | Added in known quantities to monitor technical sensitivity and accuracy across batches. |

| Pooled PBMCs (Cryopreserved) | Used as a process control across multiple experiment batches to track technical performance drift. |

Harmony is a widely used algorithm for integrating single-cell genomics datasets to correct for batch effects. It operates within a broader workflow that begins with standard preprocessing (quality control, normalization, feature selection) and culminates in integrated, batch-corrected embeddings suitable for downstream analysis like clustering and trajectory inference.

The core of Harmony's integration power derives from its novel combination of two statistical concepts:

- Probabilistic Principal Component Analysis (PPCA): Used to generate a low-dimensional, shared embedding of all cells from all batches.

- Mixture of Experts (MoE) / Mutual Nearest Neighbors (MNN) Inspired Integration: A clustering and correction process that iteratively removes batch-specific effects.

The algorithm's objective is to maximize the diversity of dataset-of-origin within each cluster of cells in the integrated embedding. It assumes that batch effects are the primary source of variation that confounds biological signal.

Core Algorithmic Components and Data Flow

Diagram 1: Harmony Algorithm Workflow (100 chars)

Probabilistic PCA (PPCA) as the Initial Embedding

Harmony uses PPCA instead of classical PCA to project cells from all batches into a common low-dimensional space. PPCA provides a probabilistic framework that models the covariance structure of the data and can be more robust. This initial embedding captures the major axes of variation but is still dominated by technical batch effects.

Protocol: Generating the Initial PPCA Embedding

- Input: A merged gene expression matrix (cells x genes) from multiple batches, after log-normalization and scaling.

- Feature Selection: Use highly variable genes (HVGs) as input features.

- Dimensionality Reduction: Apply PPCA using the

scranorscaterBioConductor packages, or theharmonypackage's internal PCA step.- Center the data per gene.

- Compute the covariance matrix.

- Perform singular value decomposition (SVD) on the covariance matrix.

- Retain the top

dprincipal components (PCs). (Typicald= 20 to 50).

- Output: A matrix

Zof sizeN x d, whereNis the total number of cells. This is the starting point for Harmony iteration.

Iterative Clustering and Correction: The Harmony Loop

The algorithm then enters an iterative loop to correct the embeddings (Z).

Step 1: Soft Clustering (Mixture of Experts Model). Cells are probabilistically assigned to K clusters using a soft k-means or Gaussian Mixture Model (GMM). This allows a cell's membership to be distributed across multiple clusters, reflecting continuous phenotypes.

Step 2: Batch Correction via Linear Interpolation. For each cluster k and each batch b, Harmony calculates a correction vector. The core idea is inspired by MNNs: it identifies cells that are similar across batches within a cluster and estimates the needed shift to align them.

- The correction factor for batch

bin clusterkis proportional to the proportion of cells frombink. An over-represented batch will be corrected more strongly. - A diversity penalty (

θ) prevents over-correction. A higherθenforces stronger integration.

Step 3: Convergence Check. The algorithm checks if the assignments have stabilized or the maximum number of iterations is reached. If not, it repeats Step 1 and 2 using the newly corrected embeddings.

Quantitative Performance Metrics

Harmony's performance is typically evaluated against other methods (e.g., Seurat CCA, Scanorama, ComBat) using the following metrics on benchmark datasets:

Table 1: Key Metrics for Evaluating Batch Correction Algorithms

| Metric | Description | Ideal Outcome | Typical Harmony Performance* |

|---|---|---|---|

| Local Inverse Simpson's Index (LISI) | Measures cell-type mixing (cLISI) and batch separation (iLISI). | cLISI ~1 (perfect mixing of cell types). iLISI >1 (separation of batches). | cLISI: ~1.1, iLISI: >2 |

| k-Batch Neighbors (kBET) | Tests if local neighborhoods match the global batch composition. | High acceptance rate (p-value > 0.05). | Acceptance rate >85% |

| ASW (Average Silhouette Width) | Cell-type (bio. ASW) and batch (batch ASW) cohesion/separation. | Bio. ASW → 1, Batch ASW → 0. | Bio. ASW: ~0.6-0.8, Batch ASW: ~0.1 |

| Graph Connectivity | Assesses if cells of the same label connect in the neighbor graph. | Score → 1. | Score >0.95 |

| PC Regression (PCR) | Variance in PCs explained by batch. | R² → 0. | R² < 0.1 |

*Values are illustrative based on recent literature (e.g., PBMC 8k/4k, Pancreas datasets) and depend on dataset complexity.

Detailed Application Protocol for Single-Cell RNA-seq

Protocol: Harmony Integration for scRNA-seq Data (R)

Diagram 2: scRNA-seq Harmony Protocol Stages (99 chars)

Materials & Reagents:

- Raw Data: Cell Ranger (10x Genomics) output files (

filtered_feature_bc_matrix.h5). - Software Environment: R (v4.2+) or Python (v3.8+).

- Key R Packages:

Seurat(v4.3+),harmony(v1.0+),ggplot2. - Computational Resources: Minimum 16GB RAM for ~10k cells.

Step-by-Step Method:

- Create Seurat Objects: For each batch, create a Seurat object, perform QC (mitochondrial %, feature counts), normalize (

NormalizeData), and find variable features (FindVariableFeatures). - Merge and Scale: Merge all batch objects into one. Scale the data (

ScaleData) regressing out sources of variation like mitochondrial percentage. - Run PCA: Perform linear dimensional reduction (

RunPCA) on the scaled variable features. Determine significant PCs (e.g., using elbow plot). - Run Harmony: Apply Harmony to the PCA embedding.

- Use Harmonized Embeddings: All downstream analyses (UMAP, clustering) are performed on the Harmony corrected embeddings (

Harmonyreduction in Seurat).

Table 2: Key Research Reagent Solutions for Harmony Workflow

| Item | Function in Workflow | Example/Note |

|---|---|---|

| Single-Cell Kit | Generate initial gene expression matrix. | 10x Genomics Chromium Single Cell 3' v3.1. |

| Cell Viability Stain | Ensure high viability of input cells, critical for quality data. | LIVE/DEAD Fixable Viability Dyes (Thermo Fisher). |

| Nuclei Isolation Kit | For frozen or difficult tissues. Prep for snRNA-seq. | 10x Genomics Nuclei Isolation Kit. |

| Doublet Removal Solution | Label and remove doublets pre-analysis to improve clustering. | BioLegend TotalSeq-C Antibodies (for hashing). |

| Normalization & Scaling Tool | Standardize library size and adjust variance. | LogNormalize (Seurat) or scran deconvolution. |

| High-Performance Computing | Run memory-intensive matrix operations and iterations. | Cloud (AWS, GCP) or local cluster with 32+ GB RAM. |

| Benchmark Dataset | Validate pipeline performance and compare methods. | PBMC datasets (e.g., 8k vs 4k from 10x). |

| Visualization Package | Create UMAP/t-SNE plots from corrected embeddings. | Seurat::DimPlot, ggplot2. |

Advanced Parameters and Troubleshooting

Key Parameters:

theta(Theta): Controls the penalty for batch diversity. Default: 2. Increase (e.g., to 5-10) for integrating datasets with very strong batch effects. Decrease for delicate integrations where batches are very similar.lambda(Lambda): Ridge regression penalty for soft k-means. Default: 1. Increase if integration is unstable across iterations.sigma(Sigma): Width of soft k-means clustering. Usually inferred automatically.nclust(Number of Clusters): Can be set manually, but Harmony typically infers this effectively.

Common Issues & Solutions:

- Poor Mixing: Increase

theta. Ensure HVGs are appropriate and not dominated by batch-specific genes. - Over-correction (Biological Signal Lost): Decrease

theta. Verify biological groups are consistent across batches prior to integration. - Slow Convergence: Increase

max.iter.harmony(default 10). Check for extreme outliers.

Introduction Within the broader thesis on Harmony batch correction single-cell workflow research, this application note delineates the specific experimental scenarios where Harmony is not merely useful but essential. The algorithm excels at integrating single-cell RNA sequencing (scRNA-seq) datasets where biological signal of interest is confounded by technical variation arising from multiple samples, experimental conditions, or data generation protocols. The core utility lies in its ability to remove these technical artifacts while preserving nuanced biological heterogeneity.

Key Use Cases & Quantitative Benchmarks

The following table summarizes primary use cases with performance metrics derived from published benchmarks. Harmony's performance is typically measured by metrics such as iLISI (integration Local Inverse Simpson's Index) for batch mixing and cLISI (cell-type LISI) for biological conservation. Higher iLISI and lower cLISI scores are better.

Table 1: Quantitative Performance of Harmony Across Primary Use Cases

| Use Case Category | Typical Experimental Design | Key Challenge | Harmony's Performance Metric (Typical Range) | Biological Signal Preserved |

|---|---|---|---|---|

| Multi-Sample | 20+ patient samples; single tissue (e.g., PBMCs from healthy/donors). | Donor-specific batch effects obscuring shared cell states. | iLISI: 0.7-0.9; cLISI: 1.1-1.3. | Cross-sample common cell types (T cells, monocytes). |

| Multi-Condition | Same protocol, different perturbations (e.g., treated vs. control, time-series). | Condition effect entangled with processing batch. | iLISI > 0.8; Condition-specific clusters remain distinct (cLISI ~1.0 for condition markers). | Differential expression responses, rare condition-specific populations. |

| Multi-Protocol | Dataset integration from 10X Chromium, Smart-seq2, and inDrops. | Profound technical differences in gene detection, sensitivity, and scale. | iLISI: 0.6-0.8; cLISI: 1.2-1.5. | Major cell lineages and coarse-grained subtypes. |

| Multi-Atlas | Integrating large-scale public atlases (e.g., Human Cell Atlas + disease cohort). | Complex, non-linear batch effects across labs and platforms. | Scalable to 1M+ cells; iLISI improves by 30-50% over no correction. | Conserved marker genes across tissues and studies. |

Detailed Experimental Protocols

Protocol 1: Standard Harmony Workflow for Multi-Sample Integration Objective: Integrate scRNA-seq data from multiple donor samples to identify shared and sample-specific cell states.

- Preprocessing: Generate a gene expression count matrix per sample using Cell Ranger or similar. Perform individual sample quality control (remove high mitochondrial, low feature cells).

- Merge & Normalize: Merge all samples into a single Seurat (R) or AnnData (Python) object. Normalize using SCTransform (recommended) or LogNormalize.

- Feature Selection & PCA: Identify 2000-3000 highly variable genes. Scale data and run Principal Component Analysis (PCA), typically retaining the top 50 PCs.

- Run Harmony: Apply Harmony to the PCA embeddings, specifying the batch covariate (e.g.,

Sample_ID). Use default parameters initially (theta=2,lambda=1).- R (Seurat):

seurat_obj <- RunHarmony(seurat_obj, group.by.vars = "Sample_ID") - Python (scanpy):

sc.external.pp.harmony_integrate(adata, 'Sample_ID')

- R (Seurat):

- Downstream Analysis: Use Harmony-corrected embeddings for UMAP/tSNE generation, graph-based clustering, and differential expression. Always compare clusters with and without Harmony.

Protocol 2: Multi-Condition Integration with Covariate Adjustment Objective: Study treatment-specific effects while removing concomitant processing batch effects.

- Stratified Setup: Ensure each condition (Control, Treated) is represented across multiple processing batches to disentangle effects.

- Run Harmony with Multiple Covariates: Pass both the processing

Batchand the biologicalConditionto Harmony. This instructs the algorithm to remove variance associated withBatchwhile preservingCondition.seurat_obj <- RunHarmony(seurat_obj, group.by.vars = c("Batch", "Condition"), theta = c(2, 0.1))- Note: A lower

thetavalue (e.g., 0.1) forConditionreduces its correction strength, preserving the biological signal of interest.

- Differential Analysis: Perform differential expression between conditions within harmonized cell clusters to identify treatment responses.

Visualizations

Diagram 1: Harmony Multi-Sample Workflow

Diagram 2: Harmony's Correction Logic

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents & Computational Tools for Harmony Workflows

| Item Name / Tool | Category | Function in Workflow |

|---|---|---|

| 10X Chromium Single Cell 3' Reagent Kits | Wet-lab Reagent | Standardized protocol for generating barcoded scRNA-seq libraries from thousands of cells, creating the primary input data for Harmony. |

| DMSO or Cryostor | Cell Preservation | For cryopreserving single-cell suspensions from multiple samples/conditions, enabling batched downstream processing and introducing a technical batch variable. |

| Cell Ranger (10X Genomics) | Analysis Software | Primary pipeline for demultiplexing, barcode processing, alignment, and initial count matrix generation per sample. |

| Seurat (R) / Scanpy (Python) | Computational Framework | Primary environment for data manipulation, normalization, running Harmony, and subsequent integrated analysis (clustering, DE). |

| Harmony (R/Python Package) | Batch Correction Algorithm | The core integration tool that maps cells to a shared embedding by iteratively clustering and correcting via linear regression. |

| SingleR / SCINA | Cell Annotation Tool | Reference-based or signature-based automated cell type labeling, critical for validating biological conservation post-Harmony integration. |

| AUCell / SCENIC | Gene Regulatory Analysis | Tool for analyzing regulatory network activity in integrated data, assessing if biological programs are preserved post-correction. |

1. Introduction in Thesis Context This document forms the foundational chapter of a comprehensive thesis investigating optimized workflows for Harmony batch effect correction in single-cell RNA sequencing (scRNA-seq) analysis. Successful application of Harmony, or any batch correction tool, is contingent upon proper data structuring and rigorous initial quality control (QC). Neglecting these prerequisites can lead to erroneous correction, biological misinterpretation, and compromised downstream conclusions in research and drug development pipelines.

2. Input Data Format Specifications Harmony is integrated within popular scRNA-seq analysis ecosystems. The primary input is a cells-by-genes matrix of normalized expression data embedded within dimensionality reduction coordinates.

Table 1: Input Data Format for Harmony Integration

| Framework | Object Type | Required Slot/Attribute | Description |

|---|---|---|---|

| Seurat (v4+) | SeuratObject |

[["pca"]]@cell.embeddings |

Principal Component Analysis (PCA) embedding matrix. Must be created from normalized data (e.g., SCTransform or LogNormalize). |

| Scanpy | anndata.AnnData |

.obsm["X_pca"] |

PCA embedding matrix stored in the obsm attribute. Must be derived from normalized and scaled data (sc.pp.scale). |

| Generic | Matrix | PCA_matrix |

A numeric matrix where rows are cells and columns are PC dimensions. Requires a parallel vector of batch labels. |

3. Essential Quality Control (QC) Protocols QC must be performed before running Harmony to ensure correction acts on high-quality biological signal, not technical artifacts.

Protocol 3.1: Cell-Level QC Filtering

- Objective: Remove low-quality cells and potential doublets.

- Materials:

- Raw count matrix (cells x genes).

- Metadata containing batch origin for each cell.

- Method:

- Calculate QC metrics:

- nCountRNA / totalcounts: Total number of UMIs/counts per cell.

- nFeatureRNA / ngenesbycounts: Number of unique genes detected per cell.

- Percent mitochondrial (percent.mt / pctcountsmt): Percentage of reads mapping to the mitochondrial genome.

- Apply filters based on empirical distribution per batch:

- Filter cells with extreme library size (too low = empty droplet; too high = potential doublet).

- Filter cells with an anomalously low number of detected genes.

- Filter cells with high mitochondrial read percentage (indicative of stressed/dying cells).

- Calculate QC metrics:

- Visualization: Generate violin or scatter plots of QC metrics grouped by batch to set appropriate thresholds.

Protocol 3.2: Gene-Level Filtering & Normalization

- Objective: Remove uninformative genes and normalize for sequencing depth.

- Method:

- Filter out genes detected in fewer than a minimum number of cells (e.g., <10 cells).

- Apply a normalization method:

- Seurat: Use

LogNormalize(CPM-like) orSCTransform(variance-stabilizing transformation). - Scanpy: Use

sc.pp.normalize_totalfollowed bysc.pp.log1p.

- Seurat: Use

- Select highly variable genes (HVGs) for downstream PCA.

- Scale the data (optional for Harmony input, but required for PCA in Scanpy).

- Note: Harmony works on PCA embeddings; thus, the choice of normalization and HVG selection critically influences these embeddings.

Protocol 3.3: Pre-Correction PCA and Visualization

- Objective: Assess batch-specific clustering prior to correction.

- Method:

- Perform PCA on the normalized and scaled HVG matrix.

- Visualize the first 2-30 PCs using UMAP or t-SNE, colored by batch label.

- Visually inspect for strong clustering of cells by batch rather than biological condition.

- Expected Outcome: Clear batch-driven separation in the low-dimensional embedding, confirming the necessity for batch correction.

Table 2: Recommended QC Thresholds (Example)

| QC Metric | Typical Lower Bound | Typical Upper Bound | Rationale |

|---|---|---|---|

| UMI Counts per Cell | 500 - 1,000 | 50,000 - 100,000 | Removes empties and doublets. |

| Genes per Cell | 200 - 500 | 5,000 - 10,000 | Removes empties and doublets. |

| % Mitochondrial Reads | N/A | 10% - 25% | Removes stressed/dying cells. |

| % Ribosomal Reads | N/A | Highly variable | Monitor, but filter with caution. |

4. The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Toolkit for Pre-Harmony Workflow

| Item | Function | Example (Package/Function) |

|---|---|---|

| QC Metric Calculator | Computes key cell-level statistics (UMIs, genes, %MT). | Seurat: PercentageFeatureSet(); Scanpy: sc.pp.calculate_qc_metrics |

| Normalization Algorithm | Corrects for library size and transforms count data. | Seurat: SCTransform(); Scanpy: sc.pp.normalize_total |

| Highly Variable Gene Selector | Identifies genes driving biological heterogeneity. | Seurat: FindVariableFeatures(); Scanpy: sc.pp.highly_variable_genes |

| Linear Dimensionality Reducer | Creates the input embedding for Harmony. | Seurat: RunPCA(); Scanpy: sc.tl.pca |

| Non-Linear Visualizer | Projects data to 2D for batch effect assessment. | Seurat: RunUMAP(); Scanpy: sc.tl.umap |

| Batch-Aware Plotting Suite | Visualizes data colored by batch & condition. | ggplot2, seurat::DimPlot(), scanpy.pl.umap |

5. Visual Workflows

Title: Essential Pre-Harmony QC & Analysis Workflow

Title: Harmony Input/Output Data Pipeline

Step-by-Step Harmony Workflow: From Raw Counts to Integrated Analysis

Within a thesis on the Harmony batch correction single-cell RNA sequencing (scRNA-seq) workflow, rigorous data preprocessing is paramount. Harmony integrates data by removing technical batch effects while preserving biological variance. The effectiveness of this integration is critically dependent on the quality and preparation of the input data. These Application Notes detail the essential preprocessing steps—normalization, scaling, and high-variance gene selection—that must precede Harmony batch correction to ensure robust and biologically meaningful integration.

Core Preprocessing Principles

The goal of preprocessing is to transform raw UMI count matrices into a form where biological differences are accentuated and technical noise is minimized. This involves:

- Normalization: Correcting for library size differences.

- Scaling: Standardizing feature variance for dimensionality reduction.

- Feature Selection: Identifying informative genes to reduce noise and computational load.

Quantitative Comparison of Normalization & Scaling Methods

Table 1: Key Preprocessing Methods for scRNA-seq Prior to Harmony

| Method | Primary Function | Key Parameter(s) | Output Impact on Harmony | Typical Tool/Function |

|---|---|---|---|---|

| Library Size Normalization | Corrects total counts per cell (library size) | Scale factor (e.g., 10,000) | Pre-requisite. Removes cell-specific technical bias. | scanpy.pp.normalize_total |

| Log1p Transformation | Stabilizes variance for downstream analysis. | log(1 + x) |

Required for scaling. Makes data approximately homoscedastic. | scanpy.pp.log1p |

| z-score Scaling (Standardization) | Scales each gene to unit variance & zero mean. | Clip value (e.g., 10) | Critical. Ensures PCA is driven by biology, not highly expressed genes. | scanpy.pp.scale |

| High-Variance Gene Selection | Identifies most biologically variable genes. | n_top_genes (e.g., 2000-5000) |

Mandatory. Reduces noise; Harmony operates on this subset. | scanpy.pp.highly_variable_genes |

Detailed Protocols

Protocol 2.1: Full Preprocessing Workflow for Harmony

This protocol assumes a raw UMI count matrix (adata_raw) is loaded as an AnnData object.

Materials & Reagents:

- Software Environment: Python (v3.8+) with Scanpy (v1.9.0+), Harmony-pytorch (v0.1.7+).

- Input Data:

adata_rawcontaining raw counts in.X.

Procedure:

- Quality Control & Filtering: (Preceding normalization) Filter cells with low counts and genes expressed in few cells.

Total-Count Normalization: Normalize each cell's total expression count to a standard value (e.g., 10,000).

Logarithmic Transformation: Apply the natural log transformation

log(1 + x)to the normalized data.High-Variance Gene Selection: Identify the most variable genes across cells.

Scaling to Unit Variance: Scale expression of each gene to zero mean and unit variance. Clipping extreme values is recommended.

Principal Component Analysis (PCA): Perform PCA on the scaled, high-variance gene matrix. This PCA output is the direct input for Harmony.

Harmony Integration: Apply Harmony to the PCA coordinates (

adata_raw.obsm['X_pca']) using the batch covariate key (e.g.,'batch').

Protocol 2.2: Empirical Selection of High-Variance Genes

This protocol details the evaluation process to determine the optimal number of high-variance genes (n_top_genes).

Procedure:

- After log transformation, calculate gene-wise dispersion (variance/mean) using the

seuratflavor. - Plot the variance vs. mean relationship (

sc.pl.highly_variable_genes(adata_raw)). - Test a range of

n_top_genesvalues (e.g., 1000, 2000, 3000, 4000). - For each subset, run PCA and compute the sum of eigenvalues for the first 50 PCs.

- Create a scree plot (PCA variance ratio) for each selection.

- Selection Criterion: Choose the smallest

n_top_genesvalue where the total captured variance and the scree plot shape begin to plateau. This balances information content against noise inclusion. - Document the selected value and justification for thesis reproducibility.

Visualizations

Pre-Harmony scRNA-seq Data Preprocessing Workflow

Toolkit for Preprocessing in Harmony Workflow

Within the broader thesis on advancing single-cell RNA sequencing (scRNA-seq) batch correction workflows, the Harmony algorithm represents a critical tool for integrating datasets across experimental conditions, donors, and technologies. Its efficacy and biological fidelity are governed by three core hyperparameters: theta (cluster diversity penalty), lambda (ridge regression penalty), and sigma (soft cluster width). This application note details their function, provides protocols for optimal tuning, and quantifies their impact on downstream biological interpretation.

Core Parameter Definitions & Biological Implications

| Parameter | Default Value | Function | Biological Impact of Mis-tuning |

|---|---|---|---|

| theta (θ) | 2.0 | Controls the strength of batch correction per cluster. Higher values force more diverse clusters to integrate more aggressively. | Too High: Over-correction; loss of genuine biological variation (e.g., distinct cell subtypes merged). Too Low: Under-correction; batch effects persist, confounding analysis. |

| lambda (λ) | 1.0 | Regularization parameter for ridge regression during correction. Prevents overfitting to technical noise. | Too High: Over-regularization; correction is too weak. Too Low: Overfitting; algorithm may learn and remove subtle biological signals. |

| sigma (σ) | 0.1 | Width of the soft clustering kernel. Determines how many cells influence a cluster's centroid. | Too High: Over-smoothing; blurring of distinct cell states. Too Low: Unstable, sparse clustering; integration becomes noisy. |

Quantitative Impact Assessment

The following table summarizes results from a benchmark study integrating PBMC data from 8 different batches.

| Parameter Tested | Value Range | Batch Mixing Score (kBET) ↑ | Biological Conservation (ASW_celltype) ↑ | Key Finding |

|---|---|---|---|---|

| theta (λ=1, σ=0.1) | 0.5 | 0.72 | 0.89 | High biological fidelity, suboptimal mixing. |

| 2.0 | 0.91 | 0.85 | Recommended default balance. | |

| 5.0 | 0.95 | 0.71 | Over-mixing, biological loss. | |

| lambda (θ=2, σ=0.1) | 0.1 | 0.92 | 0.81 | Slight overfitting to batch noise. |

| 1.0 | 0.91 | 0.85 | Stable, reliable correction. | |

| 5.0 | 0.75 | 0.86 | Under-correction due to high penalty. | |

| sigma (θ=2, λ=1) | 0.05 | 0.88 | 0.82 | Sparse associations, less stable. |

| 0.1 | 0.91 | 0.85 | Robust soft clustering. | |

| 0.3 | 0.90 | 0.80 | Mild over-smoothing of states. |

Experimental Protocol: Systematic Parameter Optimization

Objective: To empirically determine optimal Harmony parameters (theta, lambda, sigma) for a specific multi-batch scRNA-seq integration task.

Materials & Input:

- Processed scRNA-seq data (e.g., PCA embeddings from 8+ batches).

- Batch metadata vector.

- (Optional) Preliminary biological label vector (e.g., major cell type).

Procedure:

- Baseline Run: Execute Harmony with default parameters (

theta=2,lambda=1,sigma=0.1). Generate corrected embeddings. - Grid Search Setup: Define search ranges (e.g.,

theta = [0.5, 1, 2, 4, 6];lambda = [0.1, 0.5, 1, 2, 5];sigma = [0.05, 0.1, 0.2, 0.3]). Use a fractional factorial design to limit total runs. - Iterative Integration: For each parameter combination, run Harmony and compute two metrics:

- Batch Mixing: Calculate k-nearest neighbour batch effect test (kBET) acceptance rate on corrected embeddings.

- Biological Conservation: Calculate average silhouette width (ASW) with respect to known biological labels (e.g., cell type) on corrected embeddings.

- Pareto Optimality Analysis: Plot kBET (maximize) vs. ASW (maximize) for all runs. Identify parameter sets on the Pareto front, representing the best trade-off between batch removal and biological preservation.

- Downstream Validation: For top candidate parameter sets, perform downstream analysis (clustering, differential expression, trajectory inference). Manually inspect known biology (e.g., presence of rare populations, marker gene expression) to select the final optimal parameters.

Visualization: Harmony Parameter Logic and Workflow

Diagram 1: Harmony workflow with parameter impact logic.

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Harmony Parameter Optimization |

|---|---|

| Pre-processed PCA Embeddings | The direct input for Harmony. Quality and variance stabilization during prior preprocessing (normalization, HVG selection) critically affects integration success. |

| Comprehensive Metadata Table | Essential. Must contain accurate batch labels for correction and, if available, provisional cell_type or condition labels for biological conservation metrics. |

| Metric Calculation Scripts (kBET, ASW) | Custom scripts or packages (e.g., scib-metrics package) to quantitatively evaluate integration performance for both batch removal and biological preservation. |

| Visualization Suite (UMAP/t-SNE, Ridge Plots) | Tools to visually assess cluster mixing by batch and separation by cell type. Critical for final validation of parameter choices. |

| High-Performance Computing (HPC) Allocation | Parameter grid searches require multiple iterative runs of Harmony. Sufficient CPU/RAM resources are necessary for timely completion. |

Within a broader thesis investigating the Harmony algorithm for single-cell RNA sequencing batch correction, this application note details the critical post-correction steps of dimensionality reduction and visualization. Successful batch integration via Harmony produces a corrected principal component analysis (PCA) embedding. This note provides standardized protocols for leveraging this embedding to generate uniform manifold approximation and projection (UMAP) and t-distributed stochastic neighbor embedding (t-SNE) visualizations that faithfully represent biological variance without batch artifacts.

Harmony is a widely adopted algorithm for integrating single-cell datasets across experimental batches. It operates by iteratively clustering cells and correcting principal components to maximize batch mixing while preserving biological signal. The direct output is a corrected PCA embedding. However, the final step for biological interpretation often requires non-linear dimensionality reduction (UMAP/t-SNE) for visualization. This protocol outlines the correct methodology for propagating the Harmony-corrected low-dimensional space into these visualizations, ensuring that the batch-corrected structure is accurately presented.

Table 1: Comparison of Dimensionality Reduction Techniques Post-Harmony

| Metric | PCA (Harmony Corrected) | UMAP (on Corrected PCA) | t-SNE (on Corrected PCA) |

|---|---|---|---|

| Primary Purpose | Linear correction & denoising | Non-linear visualization | Non-linear visualization |

| Retains Global Structure | High | Medium | Low |

| Local Neighborhood Preservation | Medium | High | Very High |

| Typical Input Dimensions | 2,000-5,000 HVGs | 20-50 Harmony PCs | 20-50 Harmony PCs |

| Key Parameter | Number of PCs (max.iter.harmony) |

n_neighbors, min_dist |

perplexity, iterations |

| Computational Speed | Moderate (for Harmony) | Fast | Slow (for high N) |

| Batch Mixing Score (LISI) | Optimized by Harmony | Dependent on PCA input | Dependent on PCA input |

Table 2: Impact of Key UMAP Parameters Post-Harmony

| n_neighbors | Effect on Visualization | Recommended Setting |

|---|---|---|

| Low (2-15) | Focuses on local structure; may increase cluster fragmentation. | 15-30 |

| Medium (15-50) | Balances local/global structure; typically used with Harmony. | 30 |

| High (>50) | Emphasizes global structure; may obscure fine-grained cell states. | Not recommended |

| min_dist | Effect on Cluster Compactness | Recommended Setting |

| Low (0.0-0.1) | Tightly packed clusters; may look overcrowded. | 0.1-0.25 |

| Medium (0.2-0.5) | Clear separation between distinct clusters; recommended. | 0.3 |

| High (0.8-1.0) | Very dispersed cells; can lose cluster definition. | Not recommended |

Experimental Protocols

Protocol 1: Generating the Harmony-Corrected PCA Embedding

This prerequisite protocol is central to the thesis workflow.

- Input: A merged Seurat (v4+) or Scanpy (v1.9+) object containing log-normalized counts from multiple batches.

- Feature Selection: Identify 2,000-5,000 highly variable genes (HVGs).

- Scaling & PCA: Scale the data (regressing out mitochondrial percentage if necessary) and perform PCA on the HVGs, retaining the top 50-100 principal components.

- Harmony Integration:

- Run Harmony using the batch covariate (e.g.,

group = object$batch). - Key parameters:

theta(diversity clustering) = 2,lambda(ridge regression) = 0.1,max.iter.harmony= 20. - Output: A corrected PCA matrix (

harmony_embeddings).

- Run Harmony using the batch covariate (e.g.,

- Validation: Calculate the Local Inverse Simpson's Index (LISI) score on the corrected embeddings to confirm batch mixing.

Protocol 2: UMAP Visualization from Harmony Embeddings

Primary protocol for this application note.

- Input: The Harmony-corrected PCA matrix (typically first 20-50 PCs) from Protocol 1.

- Software: Seurat (

RunUMAP) or Scanpy (sc.pp.neighborsfollowed bysc.tl.umap). - Method:

- Seurat:

object <- RunUMAP(object, reduction = "harmony", dims = 1:30, n.neighbors = 30, min.dist = 0.3) - Scanpy:

sc.pp.neighbors(adata, use_rep='X_pca_harmony', n_neighbors=30)thensc.tl.umap(adata, min_dist=0.3)

- Seurat:

- Critical Step: Ensure the

reduction/use_repparameter is explicitly set to the Harmony reduction. Never run UMAP on the original, uncorrected PCA. - Output: UMAP coordinates stored in the object for 2D/3D plotting, colored by cell type and batch to assess integration.

Protocol 3: t-SNE Visualization from Harmony Embeddings

Alternative protocol for emphasis on local clusters.

- Input: The Harmony-corrected PCA matrix (first 20-30 PCs recommended for t-SNE).

- Software: Seurat (

RunTSNE) or Scanpy (sc.tl.tsne). - Method:

- Seurat:

object <- RunTSNE(object, reduction = "harmony", dims = 1:30, perplexity = 30) - Scanpy:

sc.tl.tsne(adata, use_rep='X_pca_harmony', perplexity=30)

- Seurat:

- Parameter Optimization: The

perplexityshould be adjusted relative to dataset size (typical range 20-50). Use a lower value for smaller datasets. - Output: t-SNE coordinates for plotting. Note: t-SNE is stochastic; set a random seed for reproducibility.

Visualizations

Post-Harmony Visualization Workflow

From Corrected PCs to 2D Plots

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Post-Harmony Analysis

| Item / Software Package | Function in Protocol | Key Feature for Integration |

|---|---|---|

| Harmony (R/Python) | Performs iterative batch correction on PCA embeddings. | Directly outputs corrected PCA matrix for downstream steps. |

| Seurat (v4+) | Comprehensive R toolkit for single-cell analysis. Provides RunHarmony, RunUMAP, RunTSNE. |

Unified object structure keeps original and corrected embeddings. |

| Scanpy (v1.9+) | Comprehensive Python toolkit for single-cell analysis. Provides harmony_integrate, sc.tl.umap. |

use_rep parameter seamlessly directs visualizations to corrected space. |

| UMAP (uwot package) | Non-linear dimensionality reduction algorithm. | n_neighbors parameter balances local/global structure post-correction. |

| FIt-SNE | Optimized, fast implementation of t-SNE. | Allows efficient computation on large, integrated datasets. |

| LISI Metric | Calculates Local Inverse Simpson's Index to quantify batch mixing and cell type separation. | Validates that Harmony correction is preserved in UMAP/t-SNE. |

| High-Performance Computing (HPC) Cluster | Provides necessary CPU/RAM for large-scale integration (100k+ cells). | Essential for running Harmony and t-SNE on massive datasets. |

Within the broader thesis investigating optimized single-cell RNA sequencing (scRNA-seq) workflows, this document details the critical downstream analytical steps performed on data integrated via Harmony batch correction. Following successful integration, which aligns cells across batches based on biological state, downstream analysis extracts meaningful biological insights, including cell type identification, marker gene discovery, and understanding dynamic processes.

Application Notes & Core Analytical Workflow

The downstream pipeline for integrated scRNA-seq data typically follows a sequential, iterative process.

Diagram Title: Downstream Analysis Workflow Post-Harmony Integration

Key Considerations:

- Input: The starting point is the corrected low-dimensional embedding (e.g., PCA, LSI) produced by Harmony, not the raw count matrix.

- Iteration: Clustering and annotation inform trajectory analysis, which may prompt re-clustering of relevant subsets.

- Validation: Results should be validated against known biology and orthogonal datasets.

Experimental Protocols

Protocol 3.1: Graph-Based Clustering on Integrated Embeddings

Objective: To identify distinct cell populations from the Harmony-corrected neighborhood graph.

Materials: Harmony-corrected PCA matrix; computing environment (R/Python).

Procedure:

- Construct k-Nearest Neighbor (k-NN) Graph: Using the Harmony PCA coordinates (

harmony_embeddings), compute the Euclidean distance between cells. Create a k-NN graph (typically k=20-50). - Refine to Shared Nearest Neighbor (SNN) Graph: Convert the k-NN graph to an SNN graph to improve connectivity based on shared neighbors.

- Apply Community Detection Algorithm: Use the Leiden or Louvain algorithm on the SNN graph to partition cells into clusters.

- Optimize Resolution Parameter: Iterate clustering over a range of resolution parameters (e.g., 0.2 to 1.2). Use heuristic metrics (e.g., silhouette score, cluster stability) and biological plausibility to select the optimal resolution.

- Visualize: Project clusters onto a UMAP or t-SNE generated from the Harmony embeddings.

Protocol 3.2: Differential Expression (DE) Analysis for Marker Gene Identification

Objective: To find genes significantly enriched in each cluster compared to all others, enabling biological annotation.

Materials: Normalized (but not batch-corrected) count matrix; cluster assignments from Protocol 3.1.

Procedure:

- Select DE Test: For each cluster, perform a statistical test comparing gene expression in cells within the cluster vs. cells outside the cluster. Commonly used tests include the Wilcoxon rank-sum test or models like MAST that account for the cellular detection rate.

- Account for Batch Covariate: Include the original batch variable as a latent variable in the DE model (e.g., in MAST) to ensure identified markers are robust to residual batch effects not removed by Harmony.

- Calculate Metrics: For each gene and cluster, compute log2 fold change (log2FC), adjusted p-value (e.g., Benjamini-Hochberg), and percentage of cells expressing the gene.

- Filter & Rank: Filter genes by minimum log2FC (e.g., >0.25) and adjusted p-value (e.g., <0.05). Rank genes by log2FC or a combined score.

- Annotate Clusters: Use top marker genes (typically 5-10) with known cell-type-specific expression from literature (e.g., PanglaoDB) to assign biological identities to clusters.

Table 1: Example Output of Differential Expression Analysis for Cluster 7

| Gene | Avg_log2FC | Pct.Exp_Cluster | Pct.Exp_Other | pvaladj | Putative Function |

|---|---|---|---|---|---|

| CD3D | 2.85 | 98% | 5% | 4.2e-128 | T-cell receptor complex |

| IL7R | 1.92 | 82% | 8% | 1.8e-75 | T-cell survival/proliferation |

| CCR7 | 1.45 | 65% | 3% | 5.6e-50 | Naïve T-cell homing |

| LEF1 | 1.21 | 58% | 6% | 2.3e-41 | T-cell differentiation |

Protocol 3.3: Trajectory Inference using Monocle3 or PAGA

Objective: To infer developmental or transitional relationships between clusters.

Materials: Harmony-corrected embeddings; normalized counts; cluster assignments.

Procedure for Monocle3:

- Data Preparation: Create a CellDataSet object with the Harmony embeddings as the reduced dimension space.

- Learn Trajectory Graph: Within this corrected space, use

learn_graph()to construct a principal graph that captures the major data backbone. - Order Cells by Pseudotime: Select a root node (e.g., a progenitor cluster) and use

order_cells()to calculate pseudotime, a quantitative measure of progress along the trajectory. - Identify Branch-Dependent Genes: Use

graph_test()to find genes differentially expressed across branches (branched expression analysis modeling). - Visualize: Plot cells in reduced dimension colored by pseudotime, trajectory graph, or expression of key dynamic genes.

Diagram Title: Trajectory Inference Reveals Two Distinct Lineages

The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key Tools for Downstream Analysis of Integrated scRNA-seq Data

| Tool/Reagent | Function & Role in Analysis |

|---|---|

| Harmony (R/Python) | Provides the integrated, batch-corrected low-dimensional embedding that serves as the foundation for all downstream steps. |

| Scanpy (Python) / Seurat (R) | Comprehensive toolkits for graph-based clustering, UMAP/t-SNE visualization, and standard differential expression tests. |

| SingleCellExperiment (R) / AnnData (Python) | Standardized S4/bioconductor and Python objects for storing and manipulating single-cell data, metadata, and dimensionality reductions. |

| MAST (R) | GLM-based differential expression test that can account for technical covariates like batch or cellular detection rate. |

| Monocle3 (R) / PAGA (Scanpy) | Algorithms for trajectory inference and pseudotime ordering on top of pre-calculated embeddings. |

| PanglaoDB / CellMarker | Curated databases of cell-type-specific marker genes used to annotate clusters derived from DE analysis. |

| NVIDIA GPUs / High-Memory Compute Nodes | Essential hardware for computationally intensive steps like nearest-neighbor calculations on large datasets (>100k cells). |

Solving Common Harmony Issues: Parameter Tuning, Diagnostics, and Best Practices

Within the broader thesis on Harmony batch correction single-cell workflow research, a critical challenge is assessing the fidelity of batch effect removal. Successful integration must preserve meaningful biological variation while removing technical artifacts. This document outlines key diagnostic metrics, visualization checks, and protocols to identify over-correction (erroneous removal of biological signal) and under-correction (residual batch effects).

Key Diagnostic Metrics

The following quantitative metrics should be calculated post-Harmony integration to diagnose correction quality.

Table 1: Core Metrics for Diagnosing Batch Correction Fidelity

| Metric Name | Calculation/Description | Interpretation in Diagnosis |

|---|---|---|

| Batch ASW (Average Silhouette Width) | Silhouette width computed using batch labels as the cluster identity. Range: [-1, 1]. | High value (>0.5): Strong batch separation, indicating Under-Correction. Low value (~0): Good batch mixing. Negative value: Possible over-mixing. |

| Cell Type ASW | Silhouette width computed using cell type labels. | High value: Good preservation of biological clusters. Low/Degraded value (vs. pre-correction): Suggests Over-Correction, where biological signal is lost. |

| iLISI (Local Inverse Simpson’s Index) - Batch | Measures per-cell local batch diversity. Scale: 1 to N_batches. | Low score (~1): Cells are surrounded by same-batch neighbors → Under-Correction. High score (approaching N_batches): Good batch mixing. |

| cLISI (Local Inverse Simpson’s Index) - Cell Type | Measures per-cell local cell type purity. Scale: 1 to N_celltypes. | High score (>1.5 for pure types): Cells are surrounded by mixed cell types → potential Over-Correction of biological distinction. Low score (~1): Clear biological separation. |

| kBET (k-nearest neighbor Batch Effect Test) | Per-cell rejection rate of a chi-squared test on batch label distribution in its neighborhood. | High rejection rate (>0.5): Neighborhoods are batch-specific → Under-Correction. Low rate (<0.2): Batch labels are well-mixed. |

| PC Regression Variance | R² from regressing each PC against batch covariate, post-Harmony. | High R² in early PCs: Significant batch variance remains → Under-Correction. Uniform low R²: Successful correction. |

Visualization Checks

Numerical metrics must be complemented with visual inspection.

- UMAP/t-SNE Colored by Batch: Check for persistent, distinct clusters or gradients aligned with batch labels.

- UMAP/t-SNE Colored by Cell Type: Assess if biologically distinct clusters remain cohesive and well-separated post-correction. Smearing or merging of known distinct populations indicates over-correction.

- Before-and-After Boxplots: Plot key metrics (e.g., Batch ASW, Cell Type ASW) for the dataset before and after Harmony correction.

- Confusion Matrix Plot: Visualize the mixing of cells from different batches within each cell type cluster.

Experimental Protocols

Protocol 3.1: Systematic Calculation of Diagnostic Metrics

- Input: A Seurat or AnnData object containing raw counts, batch labels, and annotated cell type labels (ground truth or from pre-correction clustering).

- Run Harmony: Integrate the data using the

RunHarmony()function (or equivalent), specifying the batch covariate. - Embedding Extraction: Extract the Harmony-corrected PCA embeddings (

harmony_embeddings). - Dimensionality Reduction: Generate UMAP/t-SNE coordinates from the Harmony embeddings.

- Metric Computation: Use the

scib-metricsPython package or custom R scripts.- For ASW: Compute on the Harmony embeddings using

batch_labelandcell_type_label. - For LISI: Compute using the

lisipackage on the UMAP coordinates. - For kBET: Implement using the

kBETR package on the Harmony embeddings. - For PC Regression: Fit a linear model for each of the first 20 PCs:

PC ~ batch.

- For ASW: Compute on the Harmony embeddings using

- Tabulate Results: Compile all results into a structured table (as in Table 1).

Protocol 3.2: Visual Diagnostic Workflow

- Generate UMAP plots for three states: Uncorrected PCA, Harmony-corrected embeddings, and (optionally) Corrected but over-clustered.

- Color these UMAPs sequentially by: a) Batch label, b) Cell type label, c) A key biological gradient (e.g., cell cycle score, pseudotime).

- Create a diagnostic panel arranging these plots side-by-side for direct comparison.

- Generate boxplots comparing the distribution of per-cell metrics (e.g., silhouette width) across batches pre- and post-correction.

Diagram: Batch Correction Diagnostic Workflow

Diagram: Diagnostic Workflow for Batch Correction Quality

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Single-Cell Batch Correction Diagnostics

| Item | Function in Diagnosis |

|---|---|

| Harmony (R/Python) | Primary batch integration algorithm. Outputs corrected embeddings for downstream metric calculation. |

| scib-metrics Python Package | Standardized suite for computing integration metrics (ASW, LISI, etc.) on single-cell data. |

| scikit-learn (Python) | Provides functions for silhouette score calculation and PCA, fundamental for metric computation. |

| Seurat (R) | Comprehensive toolkit for single-cell analysis; used for data handling, visualization, and running Harmony. |

| Scanpy (Python) | Python-based single-cell analysis toolkit; used for data handling, visualization, and metric integration. |

| ggplot2 / matplotlib | Plotting libraries for creating diagnostic visualizations (UMAPs, boxplots). |

| Cell Type Annotations (Ground Truth) | High-confidence biological labels (e.g., from marker genes) crucial for assessing biological conservation. |

| High-Performance Computing (HPC) Resources | Computation of metrics (like kBET, LISI) on large datasets requires significant memory and CPU. |

This Application Note details the methodology for parameter optimization within the Harmony algorithm, a cornerstone tool for single-cell RNA sequencing (scRNA-seq) integration. Within the broader thesis on the Harmony batch correction workflow, a central challenge is the precise calibration of its hyperparameters to achieve an optimal equilibrium. The theta parameter controls the degree of batch correction (higher values = more aggressive removal of batch-specific clusters), while the lambda parameter governs the strength of diagonal component regularization in Harmony's clustering, influencing the balance between dataset integration and preservation of distinct biological states. Incorrect settings can lead to either insufficient integration (theta too low) or over-correction and loss of meaningful biological variation (theta too high, lambda inappropriate). This protocol provides a systematic, quantitative framework for researchers to determine experiment-specific optimal values, ensuring robust downstream analysis in drug development and translational research.

Table 1: Harmony Hyperparameters and Their Quantitative Effects

| Parameter | Default Value | Typical Test Range | Primary Function | Effect of Increasing Value | Risk of Overuse |

|---|---|---|---|---|---|

| Theta (θ) | 2.0 | 1.0 to 6.0 | Diversity clustering penalty. Strength of batch correction. | Increases mixing of cells across batches; more aggressive batch removal. | Over-correction: Biological signal (e.g., cell subtype distinctions) may be erased. |

| Lambda (λ) | 1.0 | 0.1 to 3.0 | Regularization parameter for diagonal components of PCA. | Increases reliance on shared, global structure; stabilizes integration. | Excessive regularization may force alignment where none exists, distorting biology. |

Table 2: Metrics for Evaluating Harmony Output

| Metric Category | Specific Metric | Ideal Outcome | Interpretation in Context of θ/λ |

|---|---|---|---|

| Batch Mixing | Local Inverse Simpson's Index (LISI) for batch labels. | High score (approaching number of batches). | Higher θ increases batch LISI. Monitor for plateau. |

| Biological Signal Preservation | LISI for cell-type labels; Cluster-specific DEG count. | Cell-type LISI remains stable/low; DEG count remains high. | High θ/λ can increase cell-type LISI (bad) and reduce DEGs (bad). |

| Visual Assessment | UMAP cohesion & separation. | Batches mixed within biological clusters; clusters distinct. | Optimize for clear biological clusters without batch-specific sub-structuring. |

Experimental Protocol: Systematic Optimization of θ and λ

A. Prerequisite Data Preparation

- Input Data: A pre-processed Seurat, SingleCellExperiment, or AnnData object containing scRNA-seq counts from multiple batches.

- Normalization & HVG: Apply standard library size normalization, log transformation, and identify 2000-5000 highly variable genes (HVGs).

- PCA: Perform dimensionality reduction (e.g., 50 principal components) on the scaled HVG matrix.

- Reference Annotations: Prepare ground truth metadata columns for

batchand provisionalcell_type(even if imperfect).

B. Grid Search Experiment Design

- Define parameter grids:

- θ (theta):

c(1.0, 2.0, 3.0, 4.0, 5.0, 6.0) - λ (lambda):

c(0.1, 0.5, 1.0, 2.0, 3.0)

- θ (theta):

- Run Harmony Integration: For each unique (θ, λ) combination, run the Harmony integration function on the PCA coordinates.

- Example (R pseudocode):

- Example (R pseudocode):

- Generate Embeddings: For each integrated embedding, generate a 2D UMAP.

C. Quantitative Evaluation Workflow

- Calculate LISI Metrics:

- Compute two LISI scores for each integrated dataset:

- batch LISI (iLISI): Using batch labels. Target: Higher value.

- cell-type LISI (cLISI): Using cell-type labels. Target: Lower value.

- Use the

lisiR package orscib.metrics.lisiin Python.

- Compute two LISI scores for each integrated dataset:

- Assess Biological Fidelity:

- Cluster cells (e.g., Leiden clustering) on the integrated Harmony embedding.

- Perform differential expression (DE) analysis between clusters.

- Count the number of significant DE genes (adj. p-value < 0.05, logFC > 0.25) per cluster as a proxy for transcriptional distinctness.

- Compile Results Table:

Table 3: Example Grid Search Results (Synthetic Data)

| θ | λ | Batch iLISI (↑) | Cell-type cLISI (↓) | Avg. DEGs per Cluster (↑) | Qualitative UMAP Assessment |

|---|---|---|---|---|---|

| 1.0 | 1.0 | 1.5 | 1.2 | 450 | Poor batch mixing. |

| 3.0 | 1.0 | 3.8 | 1.3 | 430 | Good mixing, clear biology. |

| 5.0 | 1.0 | 4.1 | 1.7 | 380 | Slight over-mixing, some clusters merging. |

| 3.0 | 0.1 | 3.5 | 1.8 | 350 | Less stable integration. |

| 3.0 | 2.0 | 3.9 | 1.4 | 425 | Stable, robust integration. |

D. Optimal Parameter Selection Plot metrics (iLISI, cLISI, DEG count) against θ for each λ. The optimal parameter set is typically at the "elbow" of the batch iLISI curve, before cLISI rises sharply and DEG count drops significantly, indicating the point of diminishing returns.

Visualization of Workflow and Decision Logic

Diagram 1: Harmony Parameter Optimization Workflow

Diagram 2: Parameter Effect Logic on Signal Preservation

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Harmony Parameter Optimization

| Item / Solution | Function in Protocol | Example / Note |

|---|---|---|

| Harmony Software | Core integration algorithm. | R package (harmony) or Python port (harmonypy). |

| scRNA-seq Analysis Suite | Data container, normalization, PCA, clustering. | R: Seurat, SingleCellExperiment. Python: scanpy, anndata. |

| LISI Calculator | Quantifies batch mixing and biological signal separation. | R: lisi package. Python: scib.metrics.lisi. |

| Differential Expression Tool | Assesses preservation of transcriptional signatures. | R: FindMarkers (Seurat), limma. Python: scanpy.tl.rank_genes_groups. |

| Visualization Package | Generates UMAP/ t-SNE plots for qualitative assessment. | R: ggplot2. Python: matplotlib, scanpy.pl.umap. |

| High-Performance Computing (HPC) Environment | Enables parallelized grid search over parameter space. | Slurm cluster or cloud computing instance (AWS, GCP). |

| Annotated Reference Atlas | (Optional) Provides high-confidence cell-type labels for cLISI validation. | e.g., Mouse Brain from Allen Institute, Human PBMC from Hao et al. 2021. |

Within the broader thesis on Harmony batch correction single-cell RNA sequencing (scRNA-seq) workflow research, handling the scale and complexity of modern datasets is paramount. As single-cell technologies mature, datasets routinely contain millions of cells, presenting significant computational challenges in memory management, processing speed, and analytical reproducibility. This document provides application notes and protocols for scaling Harmony-integrated workflows efficiently.

Core Computational Challenges in Large-Scale scRNA-seq Analysis

The primary bottlenecks in scaling single-cell workflows involve data I/O, memory footprint during dimensionality reduction and integration, and the iterative optimization algorithms used by batch correction tools like Harmony.

Table 1: Quantitative Benchmarks for scRNA-seq Dataset Scaling

| Dataset Scale (Cells) | Approx. Raw Data Size | Typical Memory Need (Pre-processing) | Harmony Runtime (CPU, 10k iterations) | Recommended Compute Configuration |

|---|---|---|---|---|

| 10,000 | 2-3 GB | 8-16 GB RAM | 2-5 minutes | 8-core, 32 GB RAM |

| 100,000 | 20-30 GB | 64-128 GB RAM | 15-30 minutes | 16-core, 256 GB RAM |

| 1,000,000+ | 200-300 GB | 500+ GB RAM | 2-4 hours | High-memory node or cloud cluster |

Detailed Protocol: Efficient Large-Scale Harmony Integration

Pre-processing and Dimensionality Reduction for Scale

Objective: Reduce the computational load prior to Harmony integration by generating a robust principal component analysis (PCA) embedding.

Materials: Processed count matrix (e.g., from CellRanger, STARsolo), high-performance computing (HPC) environment or cloud instance.

Procedure:

- Sparse Matrix Management: Load the feature-count matrix using sparse matrix representations (e.g.,

dgCMatrixin R,scipy.sparse.csr_matrixin Python). Avoid dense conversions. - Highly Variable Gene (HVG) Selection: Select 3000-5000 HVGs using variance-stabilizing transformation (Seurat) or

sc.pp.highly_variable_genes(Scanpy). This reduces the feature space for PCA. - Scalable PCA:

- For datasets >100k cells, use iterative randomized PCA algorithms (e.g.,

irlbain R,sklearn.decomposition.TruncatedSVDin Python). - Command (R Example):

pca_result <- irlba(A = scaled_matrix, nv = 50). Retain first 50 principal components (PCs). - Center the data but scale only if memory permits; scaling can be memory-intensive on sparse data.

- For datasets >100k cells, use iterative randomized PCA algorithms (e.g.,

- Input for Harmony: The cell-by-PC matrix (e.g., 500k cells x 50 PCs) is the direct input to Harmony, drastically reducing the dataset's dimensionality.

Optimized Harmony Batch Correction Run

Objective: Execute Harmony with parameters tuned for convergence and speed on large embeddings.

Procedure:

- Parameter Tuning for Large N:

max.iter.harmony: Increase to 20-30 for very large datasets to ensure convergence. Monitor theobjectiveplot for plateau.epsilon.cluster&epsilon.harmony: Set to 1e-5 (more stringent) for stable results.lambda: Regularization parameter. Default (~1) works well; increase if over-correction is suspected.

- Block Processing: For extremely large datasets that cannot fit into memory, implement a block-wise strategy: run Harmony on stratified random subsets, then merge results using a reference-based approach.

- Parallelization: Harmony inherently uses OpenMP for parallel computing. Set environment variable

OMP_NUM_THREADSto the available cores (e.g.,export OMP_NUM_THREADS=16). - Execution:

Post-Integration Downstream Analysis at Scale

Objective: Perform UMAP, clustering, and differential expression on the integrated dataset efficiently.

Procedure:

- Dimensionality Reduction for Visualization:

- Use the Harmony-corrected embeddings (e.g.,

harmony_embeddings) as input for UMAP/t-SNE. - For UMAP, set

n.neighborsto a value appropriate for scale (e.g., 50 for 100k cells, 100 for 1M cells) and useapprox_pca = FALSEsince input is already low-dim.

- Use the Harmony-corrected embeddings (e.g.,

- Graph-Based Clustering:

- Construct shared nearest neighbor (SNN) graphs on Harmony embeddings using

FindNeighbors(Seurat) orsc.pp.neighbors(Scanpy). - Use the Leiden algorithm for scalability. Resolution may need lowering for very large datasets.

- Construct shared nearest neighbor (SNN) graphs on Harmony embeddings using

- Differential Expression (DE):

- For marker gene identification, use methods optimized for large data, such as presto (R) or rank-based tests.

- Employ a two-step DE: first filter candidate genes by high fold-change, then apply statistical testing.

Visualization of Workflows and Relationships

Scalable Harmony Integration Workflow

Harmony Algorithm Iterative Cycle

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Scaling Harmony Workflows

| Tool / Resource | Primary Function | Role in Large-Scale Analysis |

|---|---|---|

| Scanpy (Python) / Seurat (R) | Single-cell analysis toolkit | Primary environment for data manipulation, preprocessing, and downstream analysis. Enable sparse matrix operations. |

| Harmony (R/Python) | Batch integration | Core algorithm for correcting technical variation across batches using iterative clustering and linear regression. |

| IRLBA / TruncatedSVD | Dimensionality reduction | Computes PCA on large, sparse matrices efficiently without full decomposition, saving memory and time. |

| UCSC Cell Browser / SCope | Visualization | Interactive visualization platforms for exploring millions of cells post-integration. |

| Snakemake / Nextflow | Workflow management | Orchestrates scalable, reproducible pipelines from raw data to integrated analysis on HPC/cloud. |

| Anndata / Loom | File format | Efficient, on-disk storage formats for large single-cell datasets with metadata. |

| High-Memory Compute Nodes (Cloud/HPC) | Infrastructure | Provides the necessary RAM (500GB-1TB+) to hold large matrices in memory during integration. |

| Conda / Docker | Environment management | Ensures version control of all packages (Harmony, Seurat, etc.) for reproducible results across runs. |

Application Notes on Harmony Integration and Reproducibility

Harmony is a widely adopted algorithm for integrating multiple single-cell RNA-sequencing (scRNA-seq) datasets, correcting for technical batch effects while preserving biological variance. Its effectiveness is contingent upon its integration into a robust, reproducible computational pipeline. This document details protocols and strategies for embedding Harmony within version-controlled, snapshot-stable workflows essential for rigorous single-cell research and drug development.

Core Challenge: The iterative nature of computational biology, coupled with evolving software dependencies, creates a high risk of result decay. A pipeline that produces integrated cell embeddings today may fail or yield divergent results in six months.

Solution Framework: A dual-strategy approach combining version control for code/logic and environmental snapshotting for software/ dependencies ensures long-term reproducibility and auditability.

Key Reproducibility Concepts & Quantitative Benchmarks

Table 1: Comparative Analysis of Reproducibility Strategies for Harmony Workflows

| Strategy | Core Mechanism | Advantages for Harmony Workflow | Quantitative Impact on Runtime/Result Stability | Key Tools |

|---|---|---|---|---|

| Code Version Control | Tracks changes to pipeline scripts (R/Python). | Enables rollback, collaboration, and provenance tracking for integration parameters. | Zero runtime overhead. Essential for tracking parameter sweeps (e.g., theta, lambda). |

Git, Git LFS (for large metadata). |

| Containerization | OS-level virtualization bundling code, runtime, and libraries. | Guarantees identical versions of Harmony, its dependencies (e.g., Rcpp), and base R/Python. | Container startup adds ~1-2 min. Eliminates "dependency hell," ensuring identical UMAP/t-SNE coordinates across runs. | Docker, Singularity/Apptainer. |

| Package Management | Precise versioning of language-specific packages. | Pins Harmony version and all downstream analysis packages (e.g., Seurat, scanpy). | Package installation time once per environment. Prevents silent errors from API changes in dependent packages. | renv (R), Conda, pip with requirements.txt (Python). |

| Workflow Management | Defines and executes multi-step pipelines (QC -> Integration -> Clustering). | Automates sequencing of steps, manages compute resources, and enforces data flow. | Up to ~30% reduction in manual processing errors. Improves resource utilization for large batches. | Nextflow, Snakemake, CWL. |

| Data Versioning | Tracks changes to input datasets and key outputs. | Links final integrated embeddings and batch-corrected counts to exact input matrix versions. | Storage overhead proportional to data change delta. Critical for re-analysis upon new sample addition. | DVC, Git-annex, institutional data lakes. |

Data synthesized from recent literature on computational reproducibility (2023-2024). Runtime impacts are estimated based on typical high-performance computing (HPC) environments.

Experimental Protocols

Protocol 3.1: Building a Snapshot-Reproducible Harmony Environment using renv and Docker

Objective: Create a frozen computational environment for executing a Harmony-based integration pipeline.

Materials:

- Base R (>=4.1.0) installation.

- Docker Community Edition.

- Project directory with Harmony integration script (

integration.R).

Methodology:

- Initialize renv (R Environment):

Author Dockerfile: Create a file named

Dockerfilewith the following content:Build and Test Container:

Protocol 3.2: Executing a Version-Controlled Harmony Pipeline with Nextflow

Objective: Implement a scalable, reproducible workflow that encapsulates data QC, Harmony integration, and downstream clustering.

Materials:

- Nextflow runtime (>=22.10+).

- Git-managed pipeline repository.

- Sample manifest file (

samples.csv) and raw count matrices.

Methodology:

- Define Pipeline Logic (

main.nf): The core Nextflow script outlines processes and their data channels.

Create Process for Harmony Integration (

modules/harmony_integration.nf):Execute Pipeline with Explicit Versioning:

Visualizations

Diagram 1: Reproducible Harmony Workflow Architecture

Diagram 2: Harmony's Core Algorithmic Steps in Batch Correction

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Computational Reagents for a Reproducible Harmony Workflow

| Item | Category | Function in Workflow | Example/Version (Snapshot) |

|---|---|---|---|

| Harmony R Package | Core Algorithm | Performs batch effect correction on PCA or other embeddings using a iterative integration procedure. | harmony v1.2.0 (GitHub commit: a3b7c5d) |

| Single-Cell Analysis Suite | Analysis Framework | Provides object structures and functions for QC, normalization, and post-integration analysis. | Seurat v5.0.1 or SingleCellExperiment v1.24.0 |

| R/Python Base | Language Interpreter | The underlying computational engine for executing the analysis code. | R v4.3.2 or Python v3.10.12 |

| renv / Conda | Package Manager | Creates project-specific, version-stable libraries of all R/Python dependencies. | renv v1.0.3, conda v23.11.0 |

| Docker / Apptainer | Containerization Tool | Encapsulates the entire analysis environment (OS, libraries, code) into a portable, executable image. | Docker v24.0, Apptainer v1.2.0 |

| Nextflow / Snakemake | Workflow Manager | Orchestrates multi-step pipeline execution, handles job scheduling, and ensures process completion. | Nextflow v23.10.0, Snakemake v7.32.0 |

| Git | Version Control System | Tracks all changes to analysis scripts, configuration files, and documentation. | Git v2.40.0 |

| High-Performance Compute (HPC) | Infrastructure | Provides the necessary computational power and memory for large-scale single-cell data integration. | Slurm/SGE cluster with >=64GB RAM per node |

Harmony vs. Other Methods: Benchmarking Performance and Choosing the Right Tool

Within the broader thesis on Harmony single-cell RNA-seq integration workflow research, establishing an objective, multi-metric framework for evaluating batch correction success is paramount. This document provides detailed Application Notes and Protocols for comparative assessment, enabling researchers and drug development professionals to benchmark Harmony against other methods using quantitative, biologically-grounded criteria.

Batch effects are non-biological variations arising from technical differences across experimental runs. The Harmony algorithm integrates cells across datasets by iteratively removing dataset-specific centroids from a PCA embedding. Success is not merely computational but must be validated through metrics that assess both technical mixing and biological fidelity.

Core Evaluation Metrics: A Quantitative Framework

The success of batch correction must be measured across three complementary dimensions: batch mixing, biological conservation, and downstream utility.

Table 1: Core Metrics for Objective Evaluation

| Metric Category | Specific Metric | Ideal Outcome | Interpretation |

|---|---|---|---|

| Batch Mixing | Local Inverse Simpson’s Index (LISI) | Higher scores (closer to total # batches). | Measures diversity of batches in local neighborhoods. |

| k-Nearest Neighbor Batch Effect Test (kBET) | High p-value (> 0.05). | Tests if local cell label distribution matches global. | |

| Principal Component Regression (PCR) | Low R² value. | Quantifies variance in PCs explained by batch. | |

| Biological Conservation | Adjusted Rand Index (ARI) / Normalized Mutual Information (NMI) | High score (closer to 1). | Measures concordance of cell-type labels before/after integration. |

| Cell-type Specific Silhouette Width | High score (closer to 1). | Assesses preservation of biological clusters. | |

| Differential Expression (DE) Conservation | High proportion of retained marker genes. | Evaluates retention of true biological signals. | |

| Downstream Utility | Trajectory Inference Consistency | Stable inferred pseudotime/paths. | Assesses if developmental structures are preserved. |

| Differential Abundance Test Power | Reduced false positives from batch. | Tests utility for case-control studies. |

Protocols for Comparative Evaluation

Protocol 1: Calculating Batch Mixing Scores

Objective: Quantify the degree of technical integration.

- Input: A low-dimensional embedding (e.g., Harmony-corrected PCA).

- LISI Calculation:

- For each cell i, compute distances to all other cells in the embedding.

- Define a perplexity-based neighborhood (e.g., using

harmonyorlisiR package). - Calculate the inverse Simpson’s index over batch labels within that neighborhood.

- Aggregate median LISI scores across all cells. Higher median = better mixing.

- kBET Calculation:

- Randomly sample k cells (default k=50) across the dataset.

- For each sample, perform a chi-square test comparing the batch label distribution in its neighborhood to the expected (global) distribution.

- Report the rejection rate across tests. Lower rate (< 0.05-0.1) indicates successful mixing.

Protocol 2: Assessing Biological Conservation

Objective: Ensure integration does not distort genuine biological variation.

- Cell-type Cluster Concordance:

- Cluster cells using the integrated embedding (e.g., Leiden clustering).

- Compare clusters to reference cell-type labels using ARI or NMI.

- ARI > 0.7 indicates strong biological preservation.

- Cell-type Silhouette Analysis:

- For each cell-type class, compute the average silhouette width based on distances in the integrated space.

- A high positive score indicates cells of the same type are close together and separate from other types.

- Marker Gene Conservation Test:

- Identify top n marker genes (e.g., via Wilcoxon rank-sum test) for each cell type in a un-corrected but batch-aware reference.

- In the integrated data, re-rank genes by their discriminatory power for that cell type.

- Calculate the fraction of original top n markers remaining in the new top n list. A high fraction (>80%) indicates conservation.

Protocol 3: Benchmarking Workflow

Objective: Execute a standardized comparison of Harmony against other methods (e.g., Seurat CCA, Scanorama, BBKNN).

- Data Preparation: Use a publicly available benchmark dataset with known batch and biological labels (e.g., PBMC from multiple donors, pancreatic islet datasets).

- Run Integrations: Apply each correction method to the log-normalized count matrix, following author specifications.

- Metric Computation: For each output, compute the full suite of metrics from Table 1.